Compiler Design Lecture Notes on Compiler Design

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Parser Tables for Non-LR(1) Grammars with Conflict Resolution Joel E

Science of Computer Programming 75 (2010) 943–979. Journal homepage: www.elsevier.com/locate/scico

The IELR(1) algorithm for generating minimal LR(1) parser tables for non-LR(1) grammars with conflict resolution

Joel E. Denny, Brian A. Malloy
School of Computing, Clemson University, Clemson, SC 29634, USA

Article history: Received 17 July 2008. Received in revised form 31 March 2009. Accepted 12 August 2009. Available online 10 September 2009.

Keywords: Grammarware, Canonical LR, LALR, Minimal LR, Yacc, Bison

Abstract: There has been a recent effort in the literature to reconsider grammar-dependent software development from an engineering point of view. As part of that effort, we examine a deficiency in the state of the art of practical LR parser table generation. Specifically, LALR sometimes generates parser tables that do not accept the full language that the grammar developer expects, but canonical LR is too inefficient to be practical particularly during grammar development. In response, many researchers have attempted to develop minimal LR parser table generation algorithms. In this paper, we demonstrate that a well known algorithm described by David Pager and implemented in Menhir, the most robust minimal LR(1) implementation we have discovered, does not always achieve the full power of canonical LR(1) when the given grammar is non-LR(1) coupled with a specification for resolving conflicts. We also detail an original minimal LR(1) algorithm, IELR(1) (Inadequacy Elimination LR(1)), which we have implemented as an extension of GNU Bison and which does not exhibit this deficiency.

Chapter 4 Syntax Analysis

Chapter 4 Syntax Analysis
By Varun Arora

Outline
• Role of parser
• Context free grammars
• Top down parsing
• Bottom up parsing
• Parser generators

The role of parser
[Figure: Source program, Lexical Analyzer, Parser, Rest of Front End, Intermediate representation; labels: token, getNextToken, Parse tree, Symbol table]

Uses of grammars
E -> E + T | T
T -> T * F | F
F -> (E) | id

E -> TE’
E’ -> +TE’ | Ɛ
T -> FT’
T’ -> *FT’ | Ɛ
F -> (E) | id

Error handling
Common programming errors
• Lexical errors
• Syntactic errors
• Semantic errors
• Logical errors
Error handler goals
• Report the presence of errors clearly and accurately
• Recover from each error quickly enough to detect subsequent errors
• Add minimal overhead to the processing of correct programs

Error-recovery strategies
• Panic mode recovery: discard input symbols one at a time until one of a designated set of synchronization tokens is found
• Phrase level recovery: replace a prefix of the remaining input by some string that allows the parser to continue
• Error productions: augment the grammar with productions that generate the erroneous constructs
• Global correction: choose a minimal sequence of changes to obtain a globally least-cost correction

Context free grammars
• Terminals
• Nonterminals
• Start symbol
• Productions
expression -> expression + term
expression -> expression - term
expression -> term
term -> term * factor
term -> term / factor
term -> factor
factor -> (expression)
factor -> id

Derivations
Productions are treated as ...
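The second grammar in the slides (E -> TE’, E’ -> +TE’ | Ɛ, and so on) is the left-recursion-free form of the first, which makes it suitable for top-down parsing with one token of lookahead. A minimal sketch of a predictive recursive-descent recognizer for it might look like the following. The function name `parse_expression`, the token spelling (`"id"`, `"+"`, `"*"`, `"("`, `")"`) and the `"$"` end marker are assumptions made for this illustration; they do not come from the slides.

```python
def parse_expression(tokens):
    """Return True if tokens form a sentence of the grammar
       E -> T E'    E' -> + T E' | epsilon
       T -> F T'    T' -> * F T' | epsilon
       F -> ( E ) | id
    Tokens are plain strings; "$" is a hypothetical end-of-input marker."""
    pos = 0

    def peek():
        return tokens[pos] if pos < len(tokens) else "$"

    def eat(expected):
        nonlocal pos
        if peek() != expected:
            raise SyntaxError(f"expected {expected!r}, found {peek()!r}")
        pos += 1

    def E():
        T()
        E_prime()

    def E_prime():
        if peek() == "+":      # E' -> + T E'
            eat("+")
            T()
            E_prime()
        # otherwise E' -> epsilon: consume nothing

    def T():
        F()
        T_prime()

    def T_prime():
        if peek() == "*":      # T' -> * F T'
            eat("*")
            F()
            T_prime()
        # otherwise T' -> epsilon

    def F():
        if peek() == "(":      # F -> ( E )
            eat("(")
            E()
            eat(")")
        else:                  # F -> id
            eat("id")

    try:
        E()
        return peek() == "$"   # all input must be consumed
    except SyntaxError:
        return False
```

Each nonterminal becomes one procedure, and every choice between alternatives is decided by looking only at the next token, so no backtracking is needed. For example, `parse_expression(["id", "+", "id", "*", "id"])` accepts, while `parse_expression(["id", "+"])` rejects.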

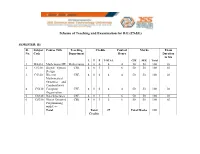

Scheme of Teaching and Examination for BE

Scheme of Teaching and Examination for B.E (CS&E) SEMESTER: III

| Sl. No. | Subject Code | Course Title | Teaching Department | Credits L | Credits T | Credits P | Credits Total | Contact Hours | Marks CIE | Marks SEE | Marks Total | Exam Duration (hrs) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | MA310 | Mathematics III | Mathematics | 4 | 0 | 0 | 4 | 4 | 50 | 50 | 100 | 03 |
| 2 | CS310 | Digital System Design | CSE | 4 | 0 | 1 | 5 | 6 | 50 | 50 | 100 | 03 |
| 3 | CS320 | Discrete Mathematical Structures and Combinatorics | CSE | 4 | 0 | 0 | 4 | 4 | 50 | 50 | 100 | 03 |
| 4 | CS330 | Computer Organization | CSE | 4 | 0 | 0 | 4 | 4 | 50 | 50 | 100 | 03 |
| 5 | CS340 | Data Structures | CSE | 4 | 0 | 1 | 5 | 6 | 50 | 50 | 100 | 03 |
| 6 | CS350 | Object Oriented Programming with C++ | CSE | 4 | 0 | 1 | 5 | 6 | 50 | 50 | 100 | 03 |

Total Credits: 27. Total Marks: 600.

Scheme of Teaching and Examination for B.E (CS&E) SEMESTER: IV

| Sl. No. | Subject Code | Course Title | Teaching Department | Credits L | Credits T | Credits P | Credits Total | Contact Hours | Marks CIE | Marks SEE | Marks Total | Exam Duration (hrs) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | MA410 | Probability, Statistics and Queuing | Mathematics | 4 | 0 | 0 | 4 | 4 | 50 | 50 | 100 | 03 |
| 2 | CS410 | Operating Systems | CSE | 4 | 0 | 1 | 5 | 6 | 50 | 50 | 100 | 03 |
| 3 | CS420 | Design and Analysis of Algorithms | CSE | 4 | 0 | 1 | 5 | 6 | 50 | 50 | 100 | 03 |
| 4 | CS430 | Theory of Computation | CSE | 4 | 0 | 0 | 4 | 4 | 50 | 50 | 100 | 03 |
| 5 | CS440 | Microprocessors | CSE | 4 | 0 | 1 | 5 | 6 | 50 | 50 | 100 | 03 |
| 6 | CS450 | Data Communication | CSE | 4 | 0 | 0 | 4 | 4 | 50 | 50 | 100 | 03 |

Total Credits: 27. Total Marks: 600.

Scheme of Teaching and Examination for B.E (CS&E) SEMESTER: V Sl. ...

Validating LR(1) Parsers

Validating LR(1) Parsers

Jacques-Henri Jourdan (1, 2), François Pottier (2), and Xavier Leroy (2)
(1) École Normale Supérieure, (2) INRIA Paris-Rocquencourt

Abstract. An LR(1) parser is a finite-state automaton, equipped with a stack, which uses a combination of its current state and one lookahead symbol in order to determine which action to perform next. We present a validator which, when applied to a context-free grammar G and an automaton A, checks that A and G agree. Validating the parser provides the correctness guarantees required by verified compilers and other high-assurance software that involves parsing. The validation process is independent of which technique was used to construct A. The validator is implemented and proved correct using the Coq proof assistant. As an application, we build a formally-verified parser for the C99 language.

1 Introduction

Parsing remains an essential component of compilers and other programs that input textual representations of structured data. Its theoretical foundations are well understood today, and mature technology, ranging from parser combinator libraries to sophisticated parser generators, is readily available to help implementing parsers. The issue we focus on in this paper is that of parser correctness: how to obtain formal evidence that a parser is correct with respect to its specification? Here, following established practice, we choose to specify parsers via context-free grammars enriched with semantic actions.

One application area where the parser correctness issue naturally arises is formally-verified compilers such as the CompCert verified C compiler [14]. Indeed, in the current state of CompCert, the passes that have been formally verified start at abstract syntax trees (AST) for the CompCert C subset of C and extend to ASTs for three assembly languages.
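The abstract's description of an LR(1) parser (a finite-state automaton with a stack that picks its next action from the current state and one lookahead symbol) can be made concrete with a small table-driven driver. The sketch below is only an illustration: the toy grammar S -> ( S ) | x, the hand-built `ACTION` and `GOTO` tables, and the name `lr_parse` are all assumptions introduced here, not material from the paper, and the code performs no validation of the kind the paper describes.

```python
# Hypothetical toy grammar (not from the paper):
#   1: S -> ( S )
#   2: S -> x
# ACTION maps (state, lookahead) to shift/reduce/accept; GOTO maps
# (state, nonterminal) to the next state. Both tables were built by hand.
ACTION = {
    (0, "("): ("shift", 2), (0, "x"): ("shift", 3),
    (1, "$"): ("accept",),
    (2, "("): ("shift", 2), (2, "x"): ("shift", 3),
    (3, ")"): ("reduce", 2), (3, "$"): ("reduce", 2),
    (4, ")"): ("shift", 5),
    (5, ")"): ("reduce", 1), (5, "$"): ("reduce", 1),
}
GOTO = {(0, "S"): 1, (2, "S"): 4}
# Production number -> (left-hand side, length of right-hand side)
PRODUCTIONS = {1: ("S", 3), 2: ("S", 1)}


def lr_parse(text):
    """Return True if text is accepted by the automaton above."""
    tokens = list(text) + ["$"]   # "$" marks end of input
    stack = [0]                   # stack of automaton states
    pos = 0
    while True:
        action = ACTION.get((stack[-1], tokens[pos]))
        if action is None:        # no table entry: syntax error
            return False
        if action[0] == "shift":
            stack.append(action[1])
            pos += 1
        elif action[0] == "reduce":
            lhs, length = PRODUCTIONS[action[1]]
            del stack[-length:]   # pop one state per right-hand-side symbol
            stack.append(GOTO[(stack[-1], lhs)])
        else:                     # accept
            return True
```

The driver itself never looks at the grammar, only at the tables, which is why a separate check that the tables and the grammar agree is meaningful. With these tables, `lr_parse("((x))")` accepts and `lr_parse("(x")` rejects.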

Backtrack Parsing Context-Free Grammar Context-Free Grammar

Context-free Grammar and Backtrack Parsing
Martin Kay
Stanford University and University of the Saarland

Problems with Regular Language
Is English a regular language? Bad question! We do not even know what English is!
• Two eggs and bacon make(s) a big breakfast
• Can you slide me the salt?
• He didn't ought to do that
But: No!
• I put the wine you brought in the fridge
• I put the wine you brought for Sandy in the fridge
• Should we bring the wine you put in the fridge out now?
• You said you thought nobody had the right to claim that they were above the law

Problems with Regular Language
You said you thought nobody had the right to claim that they were above the law

Problems with Regular Language
[You said you thought [nobody had the right [to claim that [they were above the law]]]]

Problems with Regular Language
Is English morphology a regular language? Bad question! We do not even know what English morphology is!
• They sell collectables of all sorts
• This concerns unredecontaminatability
• This really is an untiable knot.
But: Probably! (Not sure about Swahili, though)

Context-free Grammar
• Nonterminal symbols ~ grammatical categories
• Terminal symbols ~ words
• Productions ~ (unordered) (rewriting) rules
• Distinguished symbol
Not all that important:
• Terminals and nonterminals are disjoint
• Distinguished symbol

Context-free Grammar
Context-free ...

Adaptive LL(*) Parsing: the Power of Dynamic Analysis

Adaptive LL(*) Parsing: The Power of Dynamic Analysis

Terence Parr (University of San Francisco), Sam Harwell (University of Texas at Austin), Kathleen Fisher (Tufts University)

Abstract

Despite the advances made by modern parsing strategies such as PEG, LL(*), GLR, and GLL, parsing is not a solved problem. Existing approaches suffer from a number of weaknesses, including difficulties supporting side-effecting embedded actions, slow and/or unpredictable performance, and counter-intuitive matching strategies. This paper introduces the ALL(*) parsing strategy that combines the simplicity, efficiency, and predictability of conventional top-down LL(k) parsers with the power of a GLR-like mechanism to make parsing decisions. The critical innovation is to move grammar analysis to parse-time, which lets ALL(*) handle any non-left-recursive context-free grammar. ALL(*) is O(n^4) in theory but consistently performs linearly on grammars used in practice, outperforming ...

[From the introduction]

PEGs are unambiguous by definition but have a quirk where rule A → a | ab (meaning "A matches either a or ab") can never match ab since PEGs choose the first alternative that matches a prefix of the remaining input. Nested backtracking makes debugging PEGs difficult.

Second, side-effecting programmer-supplied actions (mutators) like print statements should be avoided in any strategy that continuously speculates (PEG) or supports multiple interpretations of the input (GLL and GLR) because such actions may never really take place [17]. (Though DParser [24] supports "final" actions when the programmer is certain a reduction is part of an unambiguous final parse.) Without side effects, actions must buffer data for all interpretations in immutable data structures or provide undo actions.
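The PEG quirk mentioned in the excerpt, where a rule with the alternatives a and ab can never match ab, follows directly from ordered choice. A minimal sketch of PEG-style ordered choice shows the effect; the helper names `literal`, `ordered_choice` and `matches_fully` are hypothetical and written for this illustration only, not taken from the paper or from any PEG library.

```python
def literal(s):
    """Parser that matches the exact string s at position pos.
    Returns the end position on success, None on failure."""
    def parse(text, pos):
        return pos + len(s) if text.startswith(s, pos) else None
    return parse


def ordered_choice(*alternatives):
    """PEG-style choice: try alternatives in order and commit to the
    first one that succeeds; later alternatives are never revisited."""
    def parse(text, pos):
        for alternative in alternatives:
            end = alternative(text, pos)
            if end is not None:
                return end
        return None
    return parse


def matches_fully(rule, text):
    """True if rule consumes the whole of text."""
    return rule(text, 0) == len(text)


# A <- a / ab : the first alternative always wins on input "ab",
# consuming only "a", so the whole input is never matched.
A = ordered_choice(literal("a"), literal("ab"))

# Reordering the alternatives (longest first) avoids the problem.
A_reordered = ordered_choice(literal("ab"), literal("a"))
```

Here `matches_fully(A, "ab")` is false because the choice commits to the first alternative after consuming `a`, whereas `matches_fully(A_reordered, "ab")` is true. A strategy that explores both alternatives, as GLR-like mechanisms do, would not depend on the order in which they are written.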

CWI Scanprofile/PDF/300

Centrum voor Wiskunde en Informatica
Centre for Mathematics and Computer Science

J. Heering, P. Klint, J.G. Rekers
Incremental generation of parsers
Computer Science/Department of Software Technology, Report CS-R8822, May

The Centre for Mathematics and Computer Science is a research institute of the Stichting Mathematisch Centrum, which was founded on February 11, 1946, as a nonprofit institution aiming at the promotion of mathematics, computer science, and their applications. It is sponsored by the Dutch Government through the Netherlands Organization for the Advancement of Pure Research (Z.W.O.).

Copyright © Stichting Mathematisch Centrum, Amsterdam

Incremental Generation of Parsers

J. Heering
Department of Software Technology, Centre for Mathematics and Computer Science
P.O. Box 4079, 1009 AB Amsterdam, The Netherlands

P. Klint
Department of Software Technology, Centre for Mathematics and Computer Science
P.O. Box 4079, 1009 AB Amsterdam, The Netherlands
and Programming Research Group, University of Amsterdam
P.O. Box 41882, 1009 DB Amsterdam, The Netherlands

J. Rekers
Department of Software Technology, Centre for Mathematics and Computer Science
P.O. Box 4079, 1009 AB Amsterdam, The Netherlands

A parser checks whether a text is a sentence in a language. Therefore, the parser is provided with the grammar of the language, and it usually generates a structure (parse tree) that represents the text according to that grammar. Most present-day parsers are not directly driven by the grammar but by a 'parse table', which is generated by a parse table generator. A table based parser works more efficiently than a grammar based parser does, and provided that the parser is used often enough, the cost of generating the parse table is outweighed by the gain in parsing efficiency.

Sequence Alignment/Map Format Specification

Sequence Alignment/Map Format Specification

The SAM/BAM Format Specification Working Group
3 Jun 2021

The master version of this document can be found at https://github.com/samtools/hts-specs. This printing is version 53752fa from that repository, last modified on the date shown above.

1 The SAM Format Specification

SAM stands for Sequence Alignment/Map format. It is a TAB-delimited text format consisting of a header section, which is optional, and an alignment section. If present, the header must be prior to the alignments. Header lines start with '@', while alignment lines do not. Each alignment line has 11 mandatory fields for essential alignment information such as mapping position, and a variable number of optional fields for flexible or aligner specific information.

This specification is for version 1.6 of the SAM and BAM formats. Each SAM and BAM file may optionally specify the version being used via the @HD VN tag. For full version history see Appendix B.

Unless explicitly specified elsewhere, all fields are encoded using 7-bit US-ASCII [1] using the POSIX / C locale. Regular expressions listed use the POSIX / IEEE Std 1003.1 extended syntax.

1.1 An example

Suppose we have the following alignment with bases in lowercase clipped from the alignment. Read r001/1 and r001/2 constitute a read pair; r003 is a chimeric read; r004 represents a split alignment.

    Coor     12345678901234  5678901234567890123456789012345
    ref      AGCATGTTAGATAA**GATAGCTGTGCTAGTAGGCAGTCAGCGCCAT

    +r001/1        TTAGATAAAGGATA*CTG
    +r002         aaaAGATAA*GGATA
    +r003       gcctaAGCTAA
    +r004                     ATAGCT..............TCAGC
    -r003                            ttagctTAGGC
    -r001/2                                        CAGCGGCAT

The corresponding SAM format is:

[1] Charset ANSI X3.4-1968 as defined in RFC1345.
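The layout rules stated in the excerpt (TAB-delimited text, header lines starting with '@', and 11 mandatory fields per alignment line followed by optional ones) are enough to sketch a small reader. The example below is an illustration under assumptions, not a reference implementation: the names `Alignment`, `parse_sam` and `SAMPLE` are hypothetical, the field names QNAME through QUAL are the ones the full specification assigns to the 11 mandatory columns (they are not listed in this excerpt), and the sample record is modelled on read r001/1 above purely for demonstration.

```python
from dataclasses import dataclass, field


@dataclass
class Alignment:
    # The 11 mandatory columns, in file order (names per the SAM spec).
    qname: str
    flag: int
    rname: str
    pos: int
    mapq: int
    cigar: str
    rnext: str
    pnext: int
    tlen: int
    seq: str
    qual: str
    # Any remaining TAG:TYPE:VALUE columns, kept as raw strings.
    optional: list = field(default_factory=list)


def parse_sam(text):
    """Split SAM text into (header_lines, alignments)."""
    headers, alignments = [], []
    for line in text.splitlines():
        if not line:
            continue
        if line.startswith("@"):          # header section
            headers.append(line)
            continue
        cols = line.split("\t")           # alignment section
        if len(cols) < 11:
            raise ValueError(f"expected at least 11 fields, got {len(cols)}")
        alignments.append(Alignment(
            cols[0], int(cols[1]), cols[2], int(cols[3]), int(cols[4]),
            cols[5], cols[6], int(cols[7]), int(cols[8]), cols[9], cols[10],
            cols[11:],
        ))
    return headers, alignments


# Illustrative input only; values are assumptions modelled on read r001/1.
SAMPLE = (
    "@HD\tVN:1.6\tSO:coordinate\n"
    "@SQ\tSN:ref\tLN:45\n"
    "r001\t99\tref\t7\t30\t8M2I4M1D3M\t=\t37\t39\tTTAGATAAAGGATACTG\t*\n"
)
```

Calling `parse_sam(SAMPLE)` yields two header lines and one `Alignment` whose `pos` is 7. The sketch does not validate field contents against the regular expressions in the specification and does not decode optional fields.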

Lexing and Parsing with ANTLR4

Lab 2
Lexing and Parsing with ANTLR4

Objective
• Understand the software architecture of ANTLR4.
• Be able to write simple grammars and correct grammar issues in ANTLR4.

EXERCISE #1: Lab preparation
In the cap-labs directory, git pull will provide you with all the necessary files for this lab in TP02. You also have to install ANTLR4.

2.1 User install for ANTLR4 and ANTLR4 Python runtime

User installation steps:

    mkdir ~/lib
    cd ~/lib
    wget http://www.antlr.org/download/antlr-4.7-complete.jar
    pip3 install antlr4-python3-runtime --user

Then in your .bashrc:

    export CLASSPATH=".:$HOME/lib/antlr-4.7-complete.jar:$CLASSPATH"
    export ANTLR4="java -jar $HOME/lib/antlr-4.7-complete.jar"
    alias antlr4="java -jar $HOME/lib/antlr-4.7-complete.jar"
    alias grun='java org.antlr.v4.gui.TestRig'

Then source your .bashrc:

    source ~/.bashrc

2.2 Structure of a .g4 file and compilation

Links to a bit of ANTLR4 syntax:
• Lexical rules (extended regular expressions): https://github.com/antlr/antlr4/blob/4.7/doc/lexer-rules.md
• Parser rules (grammars): https://github.com/antlr/antlr4/blob/4.7/doc/parser-rules.md

The compilation of a given .g4 (for the Python back-end) is done by the following command line:

    java -jar ~/lib/antlr-4.7-complete.jar -Dlanguage=Python3 filename.g4

or, if you modified your .bashrc properly:

    antlr4 -Dlanguage=Python3 filename.g4

2.3 Simple examples with ANTLR4

EXERCISE #2: Demo files
Work your way through the five examples in the directory demo_files:

Aurore Alcolei, Laure Gonnord, Valentin Lorentz. ENS de Lyon, Département Informatique, M1 CAP Lab #2 – Autumn 2017

ex1 with ANTLR4 + Java: A very simple lexical analysis for simple arithmetic expressions of the form x+3.

B.E Computer Science and Engineering

CURRICULUM
B.E. – Computer Science and Engineering
Regulations 2019

VISION
"To become a center of excellence in Computer Science and Engineering and Research to create global leaders with holistic growth and ethical values for the industry and academics."

MISSION
• To produce technocrats in the industry and academia by educating computer concepts and techniques.
• To facilitate the students to trigger more creativity by applying modern tools and technologies in the field of computer science and engineering.
• To inculcate the spirit of ethical values contributing to the welfare of the society.

Department of Computer Science and Engineering
Department of CSE, Francis Xavier Engineering College | Regulation 2019

B.E.-COMPUTER SCIENCE AND ENGINEERING (REGULATIONS 2019)
CHOICE BASED CREDIT SYSTEM
SUMMARY OF CREDIT DISTRIBUTION

Columns: S. No; Category; Credits per semester (I to VIII, only non-empty entries shown); Total credits; Credit in %; Range of total credits (Min, Max)

1 HSS 3 2 3 8 4.5% 9 11
2 BS 12 4 4 4 24 14.5% 21 21
3 ES 8 11 3 22 13.9% 23 26
4 PC 13 17 10 11 8 59 35.75% 59 59
5 PE 6 6 6 6 24 14.5% 24 27
6 OE 3 3 3 3 12 7.3% 12 15
7 EEC 2 2 1 10 15 9.1% 12 15
TOTAL 23 18 20 23 22 22 21 16 165 100% - -

BS - Basic Sciences
ES - Engineering Sciences
HSS - Humanities and Social Sciences
PC - Professional Core
PE - Professional Elective
OE - Open Elective
EEC - Employability Enhancement Course

B.E.- COMPUTER SCIENCE AND ENGINEERING (REGULATIONS 2019)
CHOICE BASED CREDIT SYSTEM
I – VIII SEMESTERS CURRICULUM AND SYLLABI
FIRST SEMESTER
Code No. ...

Compiler Design

COMPILER DESIGN - PARSER
http://www.tutorialspoint.com/compiler_design/compiler_design_parser.htm
Copyright © tutorialspoint.com

In the previous chapter, we understood the basic concepts involved in parsing. In this chapter, we will learn the various types of parser construction methods available. Parsing can be defined as top-down or bottom-up based on how the parse tree is constructed.

Top-Down Parsing

We have learnt in the last chapter that the top-down parsing technique parses the input and starts constructing a parse tree from the root node, gradually moving down to the leaf nodes. The types of top-down parsing are depicted below:

Recursive Descent Parsing

Recursive descent is a top-down parsing technique that constructs the parse tree from the top while the input is read from left to right. It uses procedures for every terminal and non-terminal entity. This parsing technique recursively parses the input to make a parse tree, which may or may not require back-tracking. But the grammar associated with it (if not left factored) cannot avoid back-tracking. A form of recursive-descent parsing that does not require any back-tracking is known as predictive parsing.

This parsing technique is regarded as recursive because it uses a context-free grammar, which is recursive in nature.

Back-tracking

Top-down parsers start from the root node (start symbol) and match the input string against the production rules to replace them (if matched). To understand this, take the following example of CFG:

S → rXd | rZd
X → oa | ea
Z → ai

For an input string: read, a top-down parser will behave like this: It will start with S from the production rules and will match its yield to the left-most letter of the input, i.e. ...
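The back-tracking behaviour described for the grammar S → rXd | rZd, X → oa | ea, Z → ai can be sketched as a small recognizer that tries each alternative in turn and falls back to the next one when a match fails. This is a minimal illustration, not the tutorial's own code; the names `GRAMMAR`, `derive` and `accepts` are assumptions introduced here, and the approach only terminates because the grammar has no left recursion.

```python
# The example grammar from the text; each nonterminal maps to its
# alternatives, and every other symbol is a one-letter terminal.
GRAMMAR = {
    "S": [["r", "X", "d"], ["r", "Z", "d"]],
    "X": [["o", "a"], ["e", "a"]],
    "Z": [["a", "i"]],
}


def derive(symbols, text, pos):
    """Yield every input position reachable by matching the sequence
    `symbols` against `text` starting at `pos`. Trying the next
    alternative after a failed one is the back-tracking step."""
    if not symbols:
        yield pos
        return
    head, rest = symbols[0], symbols[1:]
    if head in GRAMMAR:                       # nonterminal: try each alternative
        for alternative in GRAMMAR[head]:
            for middle in derive(alternative, text, pos):
                yield from derive(rest, text, middle)
    elif text.startswith(head, pos):          # terminal: must match the input
        yield from derive(rest, text, pos + len(head))


def accepts(text):
    """True if the whole of text can be derived from the start symbol S."""
    return any(end == len(text) for end in derive(["S"], text, 0))
```

For the input `read`, the recognizer matches `r`, expands X, fails on the alternative `oa` because the next letter is `e`, back-tracks to the alternative `ea`, and finally matches `d`, so `accepts("read")` is true. The string `raid` is accepted through the second alternative of S, and `red` is rejected.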

CLR(1) and LALR(1) Parsers

CLR(1) and LALR(1) Parsers

C. Naga Raju, B.Tech(CSE), M.Tech(CSE), PhD(CSE), MIEEE, MCSI, MISTE
Professor, Department of CSE, YSR Engineering College of YVU, Proddatur
6/21/2020 Prof.C.NagaRaju YSREC of YVU 9949218570

Contents
• Limitations of SLR(1) Parser
• Introduction to CLR(1) Parser
• Animated example
• Limitation of CLR(1)
• LALR(1) Parser
• Animated example
• GATE Problems and Solutions

Drawbacks of SLR(1)
❖ The SLR parser discussed in the earlier class has certain flaws.
❖ 1. On a single input, a state may include both a final item and a non-final item. This may result in a shift-reduce conflict.
❖ 2. A state may include two different final items. This may result in a reduce-reduce conflict.
❖ 3. The SLR(1) parser reduces only when the next token is in the FOLLOW set of the left-hand side of the production.
❖ 4. SLR(1) can reduce shift-reduce conflicts but not reduce-reduce conflicts.
❖ These two conflicts are reduced by the CLR(1) parser, which keeps track of lookahead information in the states of the parser.
❖ This is also called LR(1).

CLR(1) Parser
❖ The LR(1) parser greatly increases the strength of the parser, but also the size of its parse tables.
❖ The LR(1) technique does not rely on FOLLOW sets; instead it keeps a specific lookahead with each item.

CLR(1) Parser
❖ LR(1) parsing configurations have the general form: A –> X1...Xi • Xi+1...Xj , a
❖ The Look Ahead
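The idea that an LR(1) item carries its own lookahead, rather than relying on FOLLOW sets, can be illustrated with a sketch of the closure computation on LR(1) items. Everything concrete below is an assumption for illustration: the grammar S -> C C, C -> c C | d is a common textbook example rather than one taken from these slides, the item encoding `(lhs, body, dot, lookahead)` and the names `first` and `closure` are hypothetical, and the code assumes a grammar without ε-productions so that FIRST of a string is FIRST of its first symbol.

```python
# Hypothetical example grammar, augmented with S' -> S.
GRAMMAR = {
    "S'": [("S",)],
    "S": [("C", "C")],
    "C": [("c", "C"), ("d",)],
}


def first(symbol, seen=frozenset()):
    """FIRST set of a single symbol, assuming no epsilon-productions."""
    if symbol not in GRAMMAR:            # terminal (or the end marker "$")
        return {symbol}
    if symbol in seen:                   # guard against left-recursive loops
        return set()
    result = set()
    for body in GRAMMAR[symbol]:
        result |= first(body[0], seen | {symbol})
    return result


def closure(items):
    """Closure of a set of LR(1) items (lhs, body, dot, lookahead).
    For an item [A -> alpha . B beta, a], add [B -> . gamma, b] for every
    production B -> gamma and every b in FIRST(beta a)."""
    items = set(items)
    work = list(items)
    while work:
        lhs, body, dot, lookahead = work.pop()
        if dot < len(body) and body[dot] in GRAMMAR:
            beta = body[dot + 1:]
            # Without epsilon-productions, FIRST(beta a) is FIRST(beta[0])
            # when beta is non-empty, and {a} otherwise.
            lookaheads = first(beta[0]) if beta else {lookahead}
            for production in GRAMMAR[body[dot]]:
                for b in lookaheads:
                    item = (body[dot], production, 0, b)
                    if item not in items:
                        items.add(item)
                        work.append(item)
    return items
```

Starting from the single item `("S'", ("S",), 0, "$")`, the closure contains six items: the start item, `S -> . C C` with lookahead `$`, and the two C-productions each paired with the lookaheads `c` and `d`. Those per-item lookaheads are what later restrict reductions more tightly than a FOLLOW set would.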