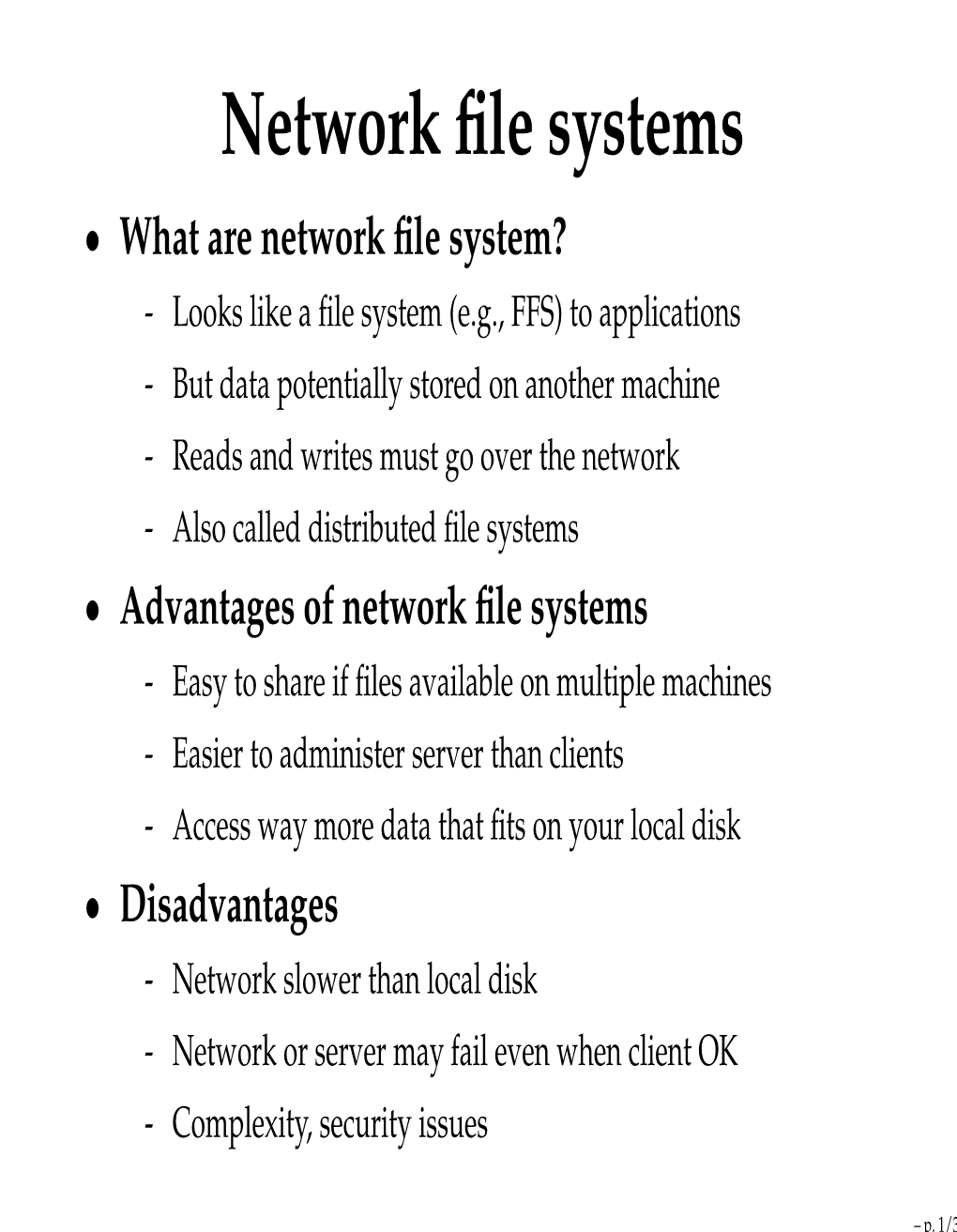

Network File Systems

Total Pages: 16

File Type: PDF, Size: 1020 KB

Recommended publications

Oracle® ZFS Storage Appliance Security Guide, Release OS8.6.x

Oracle® ZFS Storage Appliance Security Guide, Release OS8.6.x. Part No: E76480-01, September 2016. Copyright © 2014, 2016, Oracle and/or its affiliates. All rights reserved. This software and related documentation are provided under a license agreement containing restrictions on use and disclosure and are protected by intellectual property laws. Except as expressly permitted in your license agreement or allowed by law, you may not use, copy, reproduce, translate, broadcast, modify, license, transmit, distribute, exhibit, perform, publish, or display any part, in any form, or by any means. Reverse engineering, disassembly, or decompilation of this software, unless required by law for interoperability, is prohibited. The information contained herein is subject to change without notice and is not warranted to be error-free. If you find any errors, please report them to us in writing. If this is software or related documentation that is delivered to the U.S. Government or anyone licensing it on behalf of the U.S. Government, then the following notice is applicable: U.S. GOVERNMENT END USERS. Oracle programs, including any operating system, integrated software, any programs installed on the hardware, and/or documentation, delivered to U.S. Government end users are "commercial computer software" pursuant to the applicable Federal Acquisition Regulation and agency-specific supplemental regulations. As such, use, duplication, disclosure, modification, and adaptation of the programs, including any operating system, integrated software, any programs installed on the hardware, and/or documentation, shall be subject to license terms and license restrictions applicable to the programs.

VirtFS—A Virtualization Aware File System Pass-Through

VirtFS—A virtualization aware File System pass-through. Venkateswararao Jujjuri (IBM Linux Technology Center), Eric Van Hensbergen (IBM Research Austin), Anthony Liguori (IBM Linux Technology Center), Badari Pulavarty (IBM Linux Technology Center).

Abstract: This paper describes the design and implementation of a paravirtualized file system interface for Linux in the KVM environment. Today’s solution of sharing host files on the guest through generic network file systems like NFS and CIFS suffers from major performance and feature deficiencies as these protocols are not designed or optimized for virtualization. To address the needs of the virtualization paradigm, in this paper we are introducing a new paravirtualized file system called VirtFS. This new file system is currently under development and is being built using QEMU, KVM, VirtIO technologies and 9P2000.L protocol.

… operations into block device operations and then again into host file system operations. In addition to performance improvements over a traditional virtual block device, exposing guest file system activity to the hypervisor provides greater insight to the hypervisor about the workload the guest is running. This allows the hypervisor to make more intelligent decisions with respect to I/O caching and creates new opportunities for hypervisor-based services like de-duplication.

In Section 2 of this paper, we explore more details about the motivating factors for paravirtualizing the file system layer. In Section 3, we introduce the VirtFS design including an overview of the 9P protocol, which VirtFS is based on, along with a set of extensions introduced for greater Linux guest compatibility.

Managing Network File Systems in Oracle® Solaris 11.4

Managing Network File Systems in Oracle® Solaris 11.4. Part No: E61004, August 2021. Copyright © 2002, 2021, Oracle and/or its affiliates. This software and related documentation are provided under a license agreement containing restrictions on use and disclosure and are protected by intellectual property laws. Except as expressly permitted in your license agreement or allowed by law, you may not use, copy, reproduce, translate, broadcast, modify, license, transmit, distribute, exhibit, perform, publish, or display any part, in any form, or by any means. Reverse engineering, disassembly, or decompilation of this software, unless required by law for interoperability, is prohibited. The information contained herein is subject to change without notice and is not warranted to be error-free. If you find any errors, please report them to us in writing. If this is software or related documentation that is delivered to the U.S. Government or anyone licensing it on behalf of the U.S. Government, then the following notice is applicable: U.S. GOVERNMENT END USERS: Oracle programs (including any operating system, integrated software, any programs embedded, installed or activated on delivered hardware, and modifications of such programs) and Oracle computer documentation or other Oracle data delivered to or accessed by U.S. Government end users are "commercial computer software" or "commercial computer software documentation" pursuant to the applicable Federal Acquisition Regulation and agency-specific supplemental regulations. As such, the use, reproduction, duplication, release, display, disclosure, modification, preparation of derivative works, and/or adaptation of i) Oracle programs (including any operating system, integrated software, any programs embedded, installed or activated on delivered hardware, and modifications of such programs), ii) Oracle computer documentation and/or iii) other Oracle data, is subject to the rights and limitations specified in the license contained in the applicable contract.

Chapter 15: File System Internals

Chapter 15: File System Internals. Operating System Concepts, 10th Edition. Silberschatz, Galvin and Gagne ©2018.

Outline: File Systems; File-System Mounting; Partitions and Mounting; File Sharing; Virtual File Systems; Remote File Systems; Consistency Semantics; NFS.

Objectives: Delve into the details of file systems and their implementation. Explore booting and file sharing. Describe remote file systems, using NFS as an example.

File System: General-purpose computers can have multiple storage devices. Devices can be sliced into partitions, which hold volumes. Volumes can span multiple partitions. Each volume is usually formatted into a file system. The number of file systems varies; typically dozens are available to choose from. [Figure: typical storage device organization]

[Figure: Example Mount Points and File Systems - Solaris]

Partitions and Mounting: A partition can be a volume containing a file system ("cooked") or raw, just a sequence of blocks with no file system. The boot block can point to a boot volume or to a boot loader, a set of blocks that contain enough code to know how to load the kernel from the file system, or to a boot management program for multi-OS booting. The root partition contains the OS; other partitions can hold other …

File-System Implementation

Chapter 11: File-System Implementation

As we saw in Chapter 10, the file system provides the mechanism for on-line storage and access to file contents, including data and programs. The file system resides permanently on secondary storage, which is designed to hold a large amount of data permanently. This chapter is primarily concerned with issues surrounding file storage and access on the most common secondary-storage medium, the disk. We explore ways to structure file use, to allocate disk space, to recover freed space, to track the locations of data, and to interface other parts of the operating system to secondary storage. Performance issues are considered throughout the chapter.

Bibliographical Notes

The MS-DOS FAT system is explained in [Norton and Wilton (1988)]. The internals of the BSD UNIX system are covered in full in [McKusick and Neville-Neil (2005)]. Details concerning file systems for Linux can be found in [Love (2010)]. The Google file system is described in [Ghemawat et al. (2003)]. FUSE can be found at http://fuse.sourceforge.net. Log-structured file organizations for enhancing both performance and consistency are discussed in [Rosenblum and Ousterhout (1991)], [Seltzer et al. (1993)], and [Seltzer et al. (1995)]. Algorithms such as balanced trees (and much more) are covered by [Knuth (1998)] and [Cormen et al. (2009)]. [Silvers (2000)] discusses implementing the page cache in the NetBSD operating system. The ZFS source code for space maps can be found at http://src.opensolaris.org/source/xref/onnv/onnv-gate/usr/src/uts/common/fs/zfs/space_map.c. The network file system (NFS) is discussed in [Callaghan (2000)].

Comparative Analysis of Distributed and Parallel File Systems' Internal Techniques

Comparative Analysis of Distributed and Parallel File Systems’ Internal Techniques
Viacheslav Dubeyko

Content
1 TERMINOLOGY AND ABBREVIATIONS
2 INTRODUCTION
3 COMPARATIVE ANALYSIS METHODOLOGY
4 FILE SYSTEM FEATURES CLASSIFICATION
4.1 Distributed File Systems
4.1.1 HDFS
4.1.2 GFS (Google File System)
4.1.3 InterMezzo
4.1.4 Coda
4.1.5 Ceph
4.1.6 DDFS

zFS - A Scalable Distributed File System Using Object Disks

zFS - A Scalable Distributed File System Using Object Disks. Ohad Rodeh, Avi Teperman. IBM Labs, Haifa University, Mount Carmel, Haifa 31905, Israel.

Abstract: zFS is a research project aimed at building a decentralized file system that distributes all aspects of file and storage management over a set of cooperating machines interconnected by a high-speed network. zFS is designed to be a file system that scales from a few networked computers to several thousand machines and to be built from commodity off-the-shelf components.

The two most prominent features of zFS are its cooperative cache and distributed transactions. zFS integrates the memory of all participating machines into one coherent cache. Thus, instead of going to the disk for a block of data already in one of the machine memories, zFS retrieves the data block from the remote machine. zFS also uses distributed transactions and leases, instead of group-communication and clustering software. We intend to test and show the effectiveness of these two features in our prototype.

zFS has six components: a Front End (FE), a Cooperative Cache (Cache), a File Manager (FMGR), a Lease Manager (LMGR), a Transaction Server (TSVR), and an Object Store (OSD). These components work together to provide applications/users with a distributed file system.

The design of zFS addresses, and is influenced by, issues of fault tolerance, security and backup/mirroring. However, in this article, we focus on the zFS high-level architecture and briefly describe zFS’s fault tolerance character…

Sun StorEdge™ QFS and Sun StorEdge SAM-FS Software Installation and Configuration Guide

Sun StorEdge™ QFS and Sun StorEdge SAM-FS Software Installation and Configuration Guide, Release 4.2. Sun Microsystems, Inc. www.sun.com. Part No. 817-7722-10, September 2004, Revision A. Submit comments about this document at: http://www.sun.com/hwdocs/feedback. Copyright 2004 Sun Microsystems, Inc., 4150 Network Circle, Santa Clara, California 95054, U.S.A. All rights reserved. Sun Microsystems, Inc. has intellectual property rights relating to technology that is described in this document. In particular, and without limitation, these intellectual property rights may include one or more of the U.S. patents listed at http://www.sun.com/patents and one or more additional patents or pending patent applications in the U.S. and in other countries. This document and the product to which it pertains are distributed under licenses restricting their use, copying, distribution, and decompilation. No part of the product or of this document may be reproduced in any form by any means without prior written authorization of Sun and its licensors, if any. Third-party software, including font technology, is copyrighted and licensed from Sun suppliers. Parts of the product may be derived from Berkeley BSD systems, licensed from the University of California. UNIX is a registered trademark in the U.S. and in other countries, exclusively licensed through X/Open Company, Ltd. Sun, Sun Microsystems, the Sun logo, AnswerBook2, docs.sun.com, Solaris, SunOS, SunSolve, Java, JavaScript, Solstice DiskSuite, and StorEdge are trademarks or registered trademarks of Sun Microsystems, Inc. in the U.S. and in other countries. All SPARC trademarks are used under license and are trademarks or registered trademarks of SPARC International, Inc.

Distributed Storage

Distributed and Federated Storage: How to store things… in… many places... (maybe)
CS2510. Presented by: wilkie, University of Pittsburgh

Recommended Reading (or Skimming)
• NFS: http://citeseerx.ist.psu.edu/viewdoc/summary?doi=10.1.1.14.473
• WAFL: https://dl.acm.org/citation.cfm?id=1267093
• Hierarchical File Systems are Dead (Margo Seltzer, 2009): https://www.eecs.harvard.edu/margo/papers/hotos09/paper.pdf
• Chord (Ion Stoica, Robert Morris, David Karger, M. Frans Kaashoek, Hari Balakrishnan, 2001): https://pdos.csail.mit.edu/papers/chord:sigcomm01/chord_sigcomm.pdf
• Kademlia (Petar Maymounkov, David Mazières, 2002): https://pdos.csail.mit.edu/~petar/papers/maymounkov-kademlia-lncs.pdf
• BitTorrent Overview: http://web.cs.ucla.edu/classes/cs217/05BitTorrent.pdf
• IPFS (Juan Benet, 2014): https://ipfs.io/ipfs/QmR7GSQM93Cx5eAg6a6yRzNde1FQv7uL6X1o4k7zrJa3LX/ipfs.draft3.pdf (served via IPFS, neat)

Network File System
NFS: A Traditional and Classic Distributed File System

Problem
• Storage is cheap.
• YES. This is a problem in a classical sense.
• People are storing more stuff and want very strong storage guarantees.
• Networked (web) applications are global and people want strong availability and stable speed/performance (wherever in the world they are.) Yikes!
• More data == Greater probability of failure
• We want consistency (correct, up-to-date data)
• We want availability (when we need it)
• We want partition tolerance (even in the presence of downtime)
• Oh. Hmm. Well, heck.
• That’s hard (technically impossible) so what can we do?

Lightning Round: Distributed Storage
• Network File System (NFS)
• We will gloss over details, here, but the papers are definitely worth a read.
• It invented the Virtual File System (VFS)
• Basically, though, it is an early attempt to investigate the trade-offs for client/server file consistency

NFS System Model
• Each client connects directly to the server.

Ops Center Protector File System Support Matrix

Hitachi Ops Center Protector v7.0 Support Matrix: File Systems

Introduction: Agent-Based and Agentless Protection

Protector can provide consistent protection either with or without the use of a host agent.
• Agent-based protection performs the application interaction required to provide application-aware consistency.
• Agentless protection ensures the application data is protected on disk and therefore provides crash consistency. Pre-execution and post-execution scripts can be called as part of a policy to enable application-aware consistency.

This support matrix describes the environments supported by either method of protection.

Agent-Based Protection

Agent-based protection provides application-consistent protection support with the following operating systems and configurations.

Supported protection types (table columns): Host Based (Gen 1 Repository; Gen 2 Repository, Gen 2 HCP, Amazon S3) and Storage Based (Hitachi Block).

Operating System(1):
• Microsoft Windows: Windows Server 2012 (64-bit), Windows Server 2012 R2 (64-bit), Windows Server 2016 (64-bit) (2), Windows Server 2019(2). File systems listed: NTFS, ReFS.
• Microsoft Windows (Desktop): Windows 8 and 8.1, Windows 10. File systems listed: NTFS.
• Linux (3)(4): RHEL 6 x64 (6.3 and newer), RHEL 7 x64 (7.0 and newer), RHEL 8 x64 (8.0 and 8.1 only), OEL 6 x64 (6.3 and newer), OEL 7 x64 (7.0 and newer), OEL 8 x64 (8.0 and 8.1 only), SUSE 11 x64 (11.3 and newer), SUSE 12 x64 (12.0 and newer), SUSE 15 x64 (15.0 SP1 only). File systems listed: EXT3, EXT4, LVM, NFS, XFS, ASM in two columns; EXT3, EXT4, LVM, ASM in the third.

NFS = network file system, …

COS 318: Operating Systems Journaling, NFS and WAFL

COS 318: Operating Systems. Journaling, NFS and WAFL
Jaswinder Pal Singh, Computer Science Department, Princeton University (http://www.cs.princeton.edu/courses/cos318/)

Topics
◆ Journaling and LFS
◆ Network File System
◆ NetApp File System

Log-structured File System (LFS)
◆ Structure the entire file system as a log with segments
  - A segment has i-nodes, indirect blocks, and data blocks
  - An i-node map to map i-node number to i-node locations
  - All writes are sequential
◆ Issues
  - There will be holes when deleting files
  - Need garbage collection to get rid of holes
  - Read performance?
◆ Goal is to improve write performance
  - Not to be confused with the log for transactions/journaling
  - Also useful for write and wear-leveling with NAND Flash
[Figure: log-structured layout, with inode map and unused space]

Revisit Implementation of Transactions
◆ BeginTransaction
  - Start using a “write-ahead” log on disk
  - Log all updates
◆ Commit
  - Write “commit” at the end of the log
  - Then “write-behind” to disk by writing updates to disk
  - Clear the log
◆ Rollback
  - Clear the log
◆ Crash recovery
  - If there is no “commit” in the log, do nothing
  - If there is “commit,” replay the log and clear the log
◆ Issues
  - All updates on the log must be idempotent
  - Each transaction has an Id or TID
  - Must have a way to confirm that a disk write completes

Journaling File System
◆ Example: Append a data block to a file on disk
  - Allocate disk blocks for data and i-node (update bitmap)
  - Update i-node and data blocks
◆ Journaling all updates
  - Execute the following transaction: …

Chapter 11: File System Implementation

Chapter 11: File System Implementation

Outline: File-System Structure; File-System Implementation; Directory Implementation; Allocation Methods; Free-Space Management; Efficiency and Performance; Recovery; Log-Structured File Systems; NFS; Example: WAFL File System.

Objectives: To describe the details of implementing local file systems and directory structures. To describe the implementation of remote file systems. To discuss block allocation and free-block algorithms and trade-offs.

File-System Structure: File structure: logical storage unit; collection of related information. File system resides on secondary storage (disks). File system organized into layers. File control block: storage structure consisting of information about a file.

[Figures: Layered File System; A Typical File Control Block]

In-Memory File System Structures: The following figure illustrates the necessary file system structures provided by the operating systems. Figure 12-3(a) refers to opening a file. Figure 12-3(b) refers to reading a file.

[Figure: In-Memory File System Structures]

Virtual File Systems: Virtual File Systems (VFS) provide an object-oriented way of implementing file systems. VFS allows the same system call interface (the API) to be used for different types of file systems. The API is to the VFS interface, rather than any specific type of file system.

[Figure: Schematic View of Virtual File System]

Directory Implementation: Linear list of file names with pointer to the data blocks: simple to program, time-consuming to execute. Hash table: linear list with hash data structure; decreases …