Memory Hierarchy and Caches

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

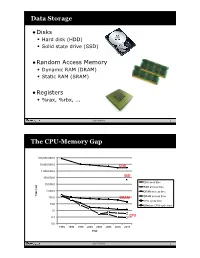

Data Storage the CPU-Memory

Data Storage •Disks • Hard disk (HDD) • Solid state drive (SSD) •Random Access Memory • Dynamic RAM (DRAM) • Static RAM (SRAM) •Registers • %rax, %rbx, ... Sean Barker 1 The CPU-Memory Gap 100,000,000.0 10,000,000.0 Disk 1,000,000.0 100,000.0 SSD Disk seek time 10,000.0 SSD access time 1,000.0 DRAM access time Time (ns) Time 100.0 DRAM SRAM access time CPU cycle time 10.0 Effective CPU cycle time 1.0 0.1 CPU 0.0 1985 1990 1995 2000 2003 2005 2010 2015 Year Sean Barker 2 Caching Smaller, faster, more expensive Cache 8 4 9 10 14 3 memory caches a subset of the blocks Data is copied in block-sized 10 4 transfer units Larger, slower, cheaper memory Memory 0 1 2 3 viewed as par@@oned into “blocks” 4 5 6 7 8 9 10 11 12 13 14 15 Sean Barker 3 Cache Hit Request: 14 Cache 8 9 14 3 Memory 0 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 Sean Barker 4 Cache Miss Request: 12 Cache 8 12 9 14 3 12 Request: 12 Memory 0 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 Sean Barker 5 Locality ¢ Temporal locality: ¢ Spa0al locality: Sean Barker 6 Locality Example (1) sum = 0; for (i = 0; i < n; i++) sum += a[i]; return sum; Sean Barker 7 Locality Example (2) int sum_array_rows(int a[M][N]) { int i, j, sum = 0; for (i = 0; i < M; i++) for (j = 0; j < N; j++) sum += a[i][j]; return sum; } Sean Barker 8 Locality Example (3) int sum_array_cols(int a[M][N]) { int i, j, sum = 0; for (j = 0; j < N; j++) for (i = 0; i < M; i++) sum += a[i][j]; return sum; } Sean Barker 9 The Memory Hierarchy The Memory Hierarchy Smaller On 1 cycle to access CPU Chip Registers Faster Storage Costlier instrs can L1, L2 per byte directly Cache(s) ~10’s of cycles to access access (SRAM) Main memory ~100 cycles to access (DRAM) Larger Slower Flash SSD / Local network ~100 M cycles to access Cheaper Local secondary storage (disk) per byte slower Remote secondary storage than local (tapes, Web servers / Internet) disk to access Sean Barker 10. -

Managing the Memory Hierarchy

Managing the Memory Hierarchy Jeffrey S. Vetter Sparsh Mittal, Joel Denny, Seyong Lee Presented to SOS20 Asheville 24 Mar 2016 ORNL is managed by UT-Battelle for the US Department of Energy http://ft.ornl.gov [email protected] Exascale architecture targets circa 2009 2009 Exascale Challenges Workshop in San Diego Attendees envisioned two possible architectural swim lanes: 1. Homogeneous many-core thin-node system 2. Heterogeneous (accelerator + CPU) fat-node system System attributes 2009 “Pre-Exascale” “Exascale” System peak 2 PF 100-200 PF/s 1 Exaflop/s Power 6 MW 15 MW 20 MW System memory 0.3 PB 5 PB 32–64 PB Storage 15 PB 150 PB 500 PB Node performance 125 GF 0.5 TF 7 TF 1 TF 10 TF Node memory BW 25 GB/s 0.1 TB/s 1 TB/s 0.4 TB/s 4 TB/s Node concurrency 12 O(100) O(1,000) O(1,000) O(10,000) System size (nodes) 18,700 500,000 50,000 1,000,000 100,000 Node interconnect BW 1.5 GB/s 150 GB/s 1 TB/s 250 GB/s 2 TB/s IO Bandwidth 0.2 TB/s 10 TB/s 30-60 TB/s MTTI day O(1 day) O(0.1 day) 2 Memory Systems • Multimode memories – Fused, shared memory – Scratchpads – Write through, write back, etc – Virtual v. Physical, paging strategies – Consistency and coherence protocols • 2.5D, 3D Stacking • HMC, HBM/2/3, LPDDR4, GDDR5, WIDEIO2, etc https://www.micron.com/~/media/track-2-images/content-images/content_image_hmc.jpg?la=en • New devices (ReRAM, PCRAM, Xpoint) J.S. -

The Design of a Drum Memory System

Scholars' Mine Masters Theses Student Theses and Dissertations 1967 The design of a drum memory system Frederick W. Lynch Follow this and additional works at: https://scholarsmine.mst.edu/masters_theses Part of the Electrical and Computer Engineering Commons Department: Recommended Citation Lynch, Frederick W., "The design of a drum memory system" (1967). Masters Theses. 5163. https://scholarsmine.mst.edu/masters_theses/5163 This thesis is brought to you by Scholars' Mine, a service of the Missouri S&T Library and Learning Resources. This work is protected by U. S. Copyright Law. Unauthorized use including reproduction for redistribution requires the permission of the copyright holder. For more information, please contact [email protected]. THE DESIGN OF A DRUM MEMORY SYSTEM BY FREDERICK W. LYNCH A l'HESIS 129533 submitted to the faculty of the UNIVERSITY OF MISSOURI AT ROLLA in partial fulfillment of the requirements for the Degree of MASTER OF SCIENCE IN ELECTRICAL ENGINEERING Rolla, Missouri I"",,--;;'d I ~ 1967 rfJ·.dfl c, I ii ABSTRACT Three methods for obtaining an auxiliary memory for an SeC-6S0 (Scientific Control Corporation) digital computer are presented. A logic design is then developed on the basis of using the drum and write circuits from an available IBM-6S0 digital computer and con structing the remaining logic functions. The results of the logic design are the transfer equations necessary to implement the memory system. iii ACKNOWLEDGMENT The author wishes to express his sincere appreciation to his major professor, Dr. James Tracey, for guidance and support in the preparation of this thesis. iv TABLE OF CONTENTS Page ABSTRACT ii ACKNm>JLEDGlVIENT iii LIST OF FIGURES v LIST OF TABLES vi I. -

Caching Basics

Introduction Why memory subsystem design is important • CPU speeds increase 25%-30% per year • DRAM speeds increase 2%-11% per year Autumn 2006 CSE P548 - Memory Hierarchy 1 Memory Hierarchy Levels of memory with different sizes & speeds • close to the CPU: small, fast access • close to memory: large, slow access Memory hierarchies improve performance • caches: demand-driven storage • principal of locality of reference temporal: a referenced word will be referenced again soon spatial: words near a reference word will be referenced soon • speed/size trade-off in technology ⇒ fast access for most references First Cache: IBM 360/85 in the late ‘60s Autumn 2006 CSE P548 - Memory Hierarchy 2 1 Cache Organization Block: • # bytes associated with 1 tag • usually the # bytes transferred on a memory request Set: the blocks that can be accessed with the same index bits Associativity: the number of blocks in a set • direct mapped • set associative • fully associative Size: # bytes of data How do you calculate this? Autumn 2006 CSE P548 - Memory Hierarchy 3 Logical Diagram of a Cache Autumn 2006 CSE P548 - Memory Hierarchy 4 2 Logical Diagram of a Set-associative Cache Autumn 2006 CSE P548 - Memory Hierarchy 5 Accessing a Cache General formulas • number of index bits = log2(cache size / block size) (for a direct mapped cache) • number of index bits = log2(cache size /( block size * associativity)) (for a set-associative cache) Autumn 2006 CSE P548 - Memory Hierarchy 6 3 Design Tradeoffs Cache size the bigger the cache, + the higher the hit ratio -

DRAM EMAIL GIM Teams!!!/Teaming Issues •Memories in Verilog •Memories on the FPGA

Memories & More •Overview of Memories •External Memories •SRAM (async, sync) •Flash •DRAM EMAIL GIM teams!!!/teaming Issues •Memories in Verilog •Memories on the FPGA 10/18/18 6.111 Fall 2018 1 Memories: a practical primer • The good news: huge selection of technologies • Small & faster vs. large & slower • Every year capacities go up and prices go down • Almost cost competitive with hard disks: high density, fast flash memories • Non-volatile, read/write, no moving parts! (robust, efficient) • The bad news: perennial system bottleneck • Latencies (access time) haven’t kept pace with cycle times • Separate technology from logic, so must communicate between silicon, so physical limitations (# of pins, R’s and C’s and L’s) limit bandwidths • New hopes: capacitive interconnect, 3D IC’s • Likely the limiting factor in cost & performance of many digital systems: designers spend a lot of time figuring out how to keep memories running at peak bandwidth • “It’s the memory - just add more faster memory” 10/18/18 6.111 Fall 2018 2 How do we Electrically Remember Things? • We can convey/transfer information with voltages that change over time • How can we store information in an electrically accessible manner? • Store in either: • Electric Field • Magnetic Field 10/18/18 6.111 Fall 2018 3 Mostly focus on rewritable • Punched Cards have existed as electromechanical program storage since ~1800s • We’re mostly concerned with rewritable storage mechanisms today (cards were true Computer program in punched card format ROMs) https://en.wiKipedia.org/wiKi/Computer_programming_in_the_ -

45-Year CPU Evolution: One Law and Two Equations

45-year CPU evolution: one law and two equations Daniel Etiemble LRI-CNRS University Paris Sud Orsay, France [email protected] Abstract— Moore’s law and two equations allow to explain the a) IC is the instruction count. main trends of CPU evolution since MOS technologies have been b) CPI is the clock cycles per instruction and IPC = 1/CPI is the used to implement microprocessors. Instruction count per clock cycle. c) Tc is the clock cycle time and F=1/Tc is the clock frequency. Keywords—Moore’s law, execution time, CM0S power dissipation. The Power dissipation of CMOS circuits is the second I. INTRODUCTION equation (2). CMOS power dissipation is decomposed into static and dynamic powers. For dynamic power, Vdd is the power A new era started when MOS technologies were used to supply, F is the clock frequency, ΣCi is the sum of gate and build microprocessors. After pMOS (Intel 4004 in 1971) and interconnection capacitances and α is the average percentage of nMOS (Intel 8080 in 1974), CMOS became quickly the leading switching capacitances: α is the activity factor of the overall technology, used by Intel since 1985 with 80386 CPU. circuit MOS technologies obey an empirical law, stated in 1965 and 2 Pd = Pdstatic + α x ΣCi x Vdd x F (2) known as Moore’s law: the number of transistors integrated on a chip doubles every N months. Fig. 1 presents the evolution for II. CONSEQUENCES OF MOORE LAW DRAM memories, processors (MPU) and three types of read- only memories [1]. The growth rate decreases with years, from A. -

Hierarchical Roofline Analysis for Gpus: Accelerating Performance

Hierarchical Roofline Analysis for GPUs: Accelerating Performance Optimization for the NERSC-9 Perlmutter System Charlene Yang, Thorsten Kurth Samuel Williams National Energy Research Scientific Computing Center Computational Research Division Lawrence Berkeley National Laboratory Lawrence Berkeley National Laboratory Berkeley, CA 94720, USA Berkeley, CA 94720, USA fcjyang, [email protected] [email protected] Abstract—The Roofline performance model provides an Performance (GFLOP/s) is bound by: intuitive and insightful approach to identifying performance bottlenecks and guiding performance optimization. In prepa- Peak GFLOP/s GFLOP/s ≤ min (1) ration for the next-generation supercomputer Perlmutter at Peak GB/s × Arithmetic Intensity NERSC, this paper presents a methodology to construct a hi- erarchical Roofline on NVIDIA GPUs and extend it to support which produces the traditional Roofline formulation when reduced precision and Tensor Cores. The hierarchical Roofline incorporates L1, L2, device memory and system memory plotted on a log-log plot. bandwidths into one single figure, and it offers more profound Previously, the Roofline model was expanded to support insights into performance analysis than the traditional DRAM- the full memory hierarchy [2], [3] by adding additional band- only Roofline. We use our Roofline methodology to analyze width “ceilings”. Similarly, additional ceilings beneath the three proxy applications: GPP from BerkeleyGW, HPGMG Roofline can be added to represent performance bottlenecks from AMReX, and conv2d from TensorFlow. In so doing, we demonstrate the ability of our methodology to readily arising from lack of vectorization or the failure to exploit understand various aspects of performance and performance fused multiply-add (FMA) instructions. bottlenecks on NVIDIA GPUs and motivate code optimizations. -

Embedded DRAM

Embedded DRAM Raviprasad Kuloor Semiconductor Research and Development Centre, Bangalore IBM Systems and Technology Group DRAM Topics Introduction to memory DRAM basics and bitcell array eDRAM operational details (case study) Noise concerns Wordline driver (WLDRV) and level translators (LT) Challenges in eDRAM Understanding Timing diagram – An example References Slide 1 Acknowledgement • John Barth, IBM SRDC for most of the slides content • Madabusi Govindarajan • Subramanian S. Iyer • Many Others Slide 2 Topics Introduction to memory DRAM basics and bitcell array eDRAM operational details (case study) Noise concerns Wordline driver (WLDRV) and level translators (LT) Challenges in eDRAM Understanding Timing diagram – An example Slide 3 Memory Classification revisited Slide 4 Motivation for a memory hierarchy – infinite memory Memory store Processor Infinitely fast Infinitely large Cycles per Instruction Number of processor clock cycles (CPI) = required per instruction CPI[ ∞ cache] Finite memory speed Memory store Processor Finite speed Infinite size CPI = CPI[∞ cache] + FCP Finite cache penalty Locality of reference – spatial and temporal Temporal If you access something now you’ll need it again soon e.g: Loops Spatial If you accessed something you’ll also need its neighbor e.g: Arrays Exploit this to divide memory into hierarchy Hit L2 L1 (Slow) Processor Miss (Fast) Hit Register Cache size impacts cycles-per-instruction Access rate reduces Slower memory is sufficient Cache size impacts cycles-per-instruction For a 5GHz -

An Algorithmic Theory of Caches by Sridhar Ramachandran

An algorithmic theory of caches by Sridhar Ramachandran Submitted to the Department of Electrical Engineering and Computer Science in partial fulfillment of the requirements for the degree of Master of Science at the MASSACHUSETTS INSTITUTE OF TECHNOLOGY. December 1999 Massachusetts Institute of Technology 1999. All rights reserved. Author Department of Electrical Engineering and Computer Science Jan 31, 1999 Certified by / -f Charles E. Leiserson Professor of Computer Science and Engineering Thesis Supervisor Accepted by Arthur C. Smith Chairman, Departmental Committee on Graduate Students MSSACHUSVTS INSTITUT OF TECHNOLOGY MAR 0 4 2000 LIBRARIES 2 An algorithmic theory of caches by Sridhar Ramachandran Submitted to the Department of Electrical Engineeringand Computer Science on Jan 31, 1999 in partialfulfillment of the requirementsfor the degree of Master of Science. Abstract The ideal-cache model, an extension of the RAM model, evaluates the referential locality exhibited by algorithms. The ideal-cache model is characterized by two parameters-the cache size Z, and line length L. As suggested by its name, the ideal-cache model practices automatic, optimal, omniscient replacement algorithm. The performance of an algorithm on the ideal-cache model consists of two measures-the RAM running time, called work complexity, and the number of misses on the ideal cache, called cache complexity. This thesis proposes the ideal-cache model as a "bridging" model for caches in the sense proposed by Valiant [49]. A bridging model for caches serves two purposes. It can be viewed as a hardware "ideal" that influences cache design. On the other hand, it can be used as a powerful tool to design cache-efficient algorithms. -

Generalized Methods for Application Specific Hardware Specialization

Generalized methods for application specific hardware specialization by Snehasish Kumar M. Sc., Simon Fraser University, 2013 B. Tech., Biju Patnaik University of Technology, 2010 Dissertation Submitted in Partial Fulfillment of the Requirements for the Degree of Doctor of Philosophy in the School of Computing Science Faculty of Applied Sciences c Snehasish Kumar 2017 SIMON FRASER UNIVERSITY Spring 2017 All rights reserved. However, in accordance with the Copyright Act of Canada, this work may be reproduced without authorization under the conditions for “Fair Dealing.” Therefore, limited reproduction of this work for the purposes of private study, research, education, satire, parody, criticism, review and news reporting is likely to be in accordance with the law, particularly if cited appropriately. Approval Name: Snehasish Kumar Degree: Doctor of Philosophy (Computing Science) Title: Generalized methods for application specific hardware specialization Examining Committee: Chair: Binay Bhattacharyya Professor Arrvindh Shriraman Senior Supervisor Associate Professor Simon Fraser University William Sumner Supervisor Assistant Professor Simon Fraser University Vijayalakshmi Srinivasan Supervisor Research Staff Member, IBM Research Alexandra Fedorova Supervisor Associate Professor University of British Columbia Richard Vaughan Internal Examiner Associate Professor Simon Fraser University Andreas Moshovos External Examiner Professor University of Toronto Date Defended: November 21, 2016 ii Abstract Since the invention of the microprocessor in 1971, the computational capacity of the microprocessor has scaled over 1000× with Moore and Dennard scaling. Dennard scaling ended with a rapid increase in leakage power 30 years after it was proposed. This ushered in the era of multiprocessing where additional transistors afforded by Moore’s scaling were put to use. With the scaling of computational capacity no longer guaranteed every generation, application specific hardware specialization is an attractive alternative to sustain scaling trends. -

14. Caching and Cache-Efficient Algorithms

MITOCW | 14. Caching and Cache-Efficient Algorithms The following content is provided under a Creative Commons license. Your support will help MIT OpenCourseWare continue to offer high quality educational resources for free. To make a donation or to view additional materials from hundreds of MIT courses, visit MIT OpenCourseWare at ocw.mit.edu. JULIAN SHUN: All right. So we've talked a little bit about caching before, but today we're going to talk in much more detail about caching and how to design cache-efficient algorithms. So first, let's look at the caching hardware on modern machines today. So here's what the cache hierarchy looks like for a multicore chip. We have a whole bunch of processors. They all have their own private L1 caches for both the data, as well as the instruction. They also have a private L2 cache. And then they share a last level cache, or L3 cache, which is also called LLC. They're all connected to a memory controller that can access DRAM. And then, oftentimes, you'll have multiple chips on the same server, and these chips would be connected through a network. So here we have a bunch of multicore chips that are connected together. So we can see that there are different levels of memory here. And the sizes of each one of these levels of memory is different. So the sizes tend to go up as you move up the memory hierarchy. The L1 caches tend to be about 32 kilobytes. In fact, these are the specifications for the machines that you're using in this class. -

Magnetic Storage- Magnetic-Core Memory, Magnetic Tape,RAM

Magnetic storage- From magnetic tape to HDD Juhász Levente 2016.02.24 Table of contents 1. Introduction 2. Magnetic tape 3. Magnetic-core memory 4. Bubble memory 5. Hard disk drive 6. Applications, future prospects 7. References 1. Magnetic storage - introduction Magnetic storage: Recording & storage of data on a magnetised medium A form of „non-volatile” memory Data accessed using read/write heads Widely used for computer data storage, audio and video applications, magnetic stripe cards etc. 1. Magnetic storage - introduction 2. Magnetic tape 1928 Germany: Magnetic tape for audio recording by Fritz Pfleumer • Fe2O3 coating on paper stripes, further developed by AEG & BASF 1951: UNIVAC- first use of magnetic tape for data storage • 12,7 mm Ni-plated brass-phosphorus alloy tape • 128 characters /inch data density • 7000 ch. /s writing speed 2. Magnetic tape 2. Magnetic tape 1950s: IBM : patented magnetic tape technology • 12,7 mm wide magnetic tape on a 26,7 cm reel • 370-730 m long tapes 1980: 1100 m PET –based tape • 18 cm reel for developers • 7, 9 stripe tapes (8 bit + parity) • Capacity up to 140 MB DEC –tapes for personal use 2. Magnetic tape 2014: Sony & IBM recorded 148 Gbit /squareinch tape capacity 185 TB! 2. Magnetic tape Remanent structural change in a magnetic medium Analog or digital recording (binary storage) Longitudinal or perpendicular recording Ni-Fe –alloy core in tape head 2. Magnetic tape Hysteresis in magnetic recording 40-150 kHz bias signal applied to the tape to remove its „magnetic history” and „stir” the magnetization Each recorded signal will encounter the same magnetic condition Current in tape head proportional to the signal to be recorded 2.