Chapter 10 Eigenvectors and Eigenvalues

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

-

Enhancing Self-Reflection and Mathematics Achievement of At-Risk Urban Technical College Students

Psychological Test and Assessment Modeling, Volume 53, 2011 (1), 108-127. Enhancing self-reflection and mathematics achievement of at-risk urban technical college students. Barry J. Zimmerman¹, Adam Moylan², John Hudesman³, Niesha White³, & Bert Flugman³. Abstract: A classroom-based intervention study sought to help struggling learners respond to their academic grades in math as sources of self-regulated learning (SRL) rather than as indices of personal limitation. Technical college students (N = 496) in developmental (remedial) math or introductory college-level math courses were randomly assigned to receive SRL instruction or conventional instruction (control) in their respective courses. SRL instruction was hypothesized to improve students’ math achievement by showing them how to self-reflect (i.e., self-assess and adapt to academic quiz outcomes) more effectively. The results indicated that students receiving self-reflection training outperformed students in the control group on instructor-developed examinations and were better calibrated in their task-specific self-efficacy beliefs before solving problems and in their self-evaluative judgments after solving problems. Self-reflection training also increased students’ pass-rate on a national gateway examination in mathematics by 25% in comparison to that of control students.
Key words: self-regulation, self-reflection, math instruction. ¹ Correspondence concerning this article should be addressed to: Barry Zimmerman, PhD, Graduate Center of the City University of New York and Center for Advanced Study in Education, 365 Fifth Avenue, New York, NY 10016, USA; email: [email protected] ² Now affiliated with the University of California, San Francisco, School of Medicine. ³ Graduate Center of the City University of New York and Center for Advanced Study in Education. Across America, faculty and policy makers at two-year and technical colleges have been deeply troubled by the low academic achievement and high attrition rate of at-risk students. -

Reflection Invariant and Symmetry Detection

Reflection Invariant and Symmetry Detection. Erbo Li and Hua Li. Abstract—Symmetry detection and discrimination are of fundamental meaning in science, technology, and engineering. This paper introduces reflection invariants and defines the directional moments (DMs) to detect symmetry for shape analysis and object recognition. It demonstrates that detection of reflection symmetry can be done in a simple way by solving a trigonometric system derived from the DMs, and that discrimination of reflection symmetry can be achieved by application of the reflection invariants in 2D and 3D. Rotation symmetry can also be determined based on that. Also, if none of the reflection invariants is equal to zero, then there is no symmetry. The experiments in 2D and 3D show that all the reflection lines or planes can be deterministically found using DMs up to order six. This result can be used to simplify the efforts of symmetry detection in research areas such as protein structure, model retrieval, reverse engineering, and machine vision. Index Terms—symmetry detection, shape analysis, object recognition, directional moment, moment invariant, isometry, congruent, reflection, chirality, rotation. 1 INTRODUCTION. The essence of geometric symmetry is self-evident, which can be found everywhere in nature and social lives, as shown in Figure 1. It is true that we are living in a symmetric world. Pursuing the explanation of symmetry will provide better understanding to the surrounding world […] Kazhdan et al. [1] developed a continuous measure and discussed the properties of the reflective symmetry descriptor, which was expanded to 3D by [2] and was augmented in spatial distribution of the objects asymmetry by [3]. For symmetry discrimination [4] defined a symmetry distance of shapes. -

Isometries and the Plane

Chapter 1 Isometries of the Plane. “For geometry, you know, is the gate of science, and the gate is so low and small that one can only enter it as a little child.” (W. K. Clifford) The focus of this first chapter is the 2-dimensional real plane $\mathbb{R}^2$, in which a point $P$ can be described by its coordinates: $P \in \mathbb{R}^2$, $P = (x, y)$, $x \in \mathbb{R}$, $y \in \mathbb{R}$. Alternatively, we can describe $P$ as a complex number by writing $P = (x, y) = x + iy \in \mathbb{C}$. The plane $\mathbb{R}^2$ comes with a usual distance. If $P_1 = (x_1, y_1)$, $P_2 = (x_2, y_2) \in \mathbb{R}^2$ are two points in the plane, then $d(P_1, P_2) = \sqrt{(x_2 - x_1)^2 + (y_2 - y_1)^2}$. Note that this is consistent with the complex notation. For $P = x + iy \in \mathbb{C}$, recall that $|P| = \sqrt{x^2 + y^2} = \sqrt{P\overline{P}}$, thus for two complex points $P_1 = x_1 + iy_1$, $P_2 = x_2 + iy_2 \in \mathbb{C}$, we have $d(P_1, P_2) = |P_2 - P_1| = \sqrt{(P_2 - P_1)\overline{(P_2 - P_1)}} = |(x_2 - x_1) + i(y_2 - y_1)| = \sqrt{(x_2 - x_1)^2 + (y_2 - y_1)^2}$, where $\overline{(\,\cdot\,)}$ denotes the complex conjugation, i.e. $\overline{x + iy} = x - iy$. We are now interested in planar transformations (that is, maps from $\mathbb{R}^2$ to $\mathbb{R}^2$) that preserve distances. Points in the Plane • A point $P$ in the plane is a pair of real numbers $P = (x, y)$, with $d(0, P)^2 = x^2 + y^2$. • A point $P = (x, y)$ in the plane can be seen as a complex number $x + iy$. -
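The agreement between the coordinate distance and the complex modulus described in this extract can be checked numerically. The following is a minimal sketch using only the Python standard library; the function names `dist_coords` and `dist_complex` are illustrative and do not come from the source.

```python
import math

def dist_coords(p1, p2):
    """Euclidean distance between two points given as (x, y) pairs."""
    return math.sqrt((p2[0] - p1[0]) ** 2 + (p2[1] - p1[1]) ** 2)

def dist_complex(p1, p2):
    """Same distance, computed as the modulus |P2 - P1| of complex numbers."""
    z1 = complex(p1[0], p1[1])
    z2 = complex(p2[0], p2[1])
    return abs(z2 - z1)

# A 3-4-5 right triangle: both formulas should agree.
print(dist_coords((1, 2), (4, 6)))
print(dist_complex((1, 2), (4, 6)))
```

Both calls describe the same quantity, so any difference between the printed values would be floating-point rounding only.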

Lesson 4: Definition of Reflection and Basic Properties

NYS COMMON CORE MATHEMATICS CURRICULUM Lesson 4 8•2. Lesson 4: Definition of Reflection and Basic Properties. Student Outcomes: Students know the definition of reflection and perform reflections across a line using a transparency. Students show that reflections share some of the same fundamental properties with translations (e.g., lines map to lines, angle- and distance-preserving motion). Students know that reflections map parallel lines to parallel lines. Students know that for the reflection across a line L and for every point P not on L, L is the bisector of the segment joining P to its reflected image P′. Classwork, Example 1 (5 minutes): The reflection across a line L is defined by using the following example (MP.6). Let L be a vertical line, and let P and A be two points not on L, as shown below. Also, let Q be a point on L. (The black rectangle indicates the border of the paper.) The following is a description of how the reflection moves the points P, Q, and A by making use of the transparency. Trace the line L and three points onto the transparency exactly, using red. (Be sure to use a transparency that is the same size as the paper.) Keeping the paper fixed, flip the transparency across the vertical line (interchanging left and right) while keeping the vertical line and point Q on top of their black images. The position of the red figures on the transparency now represents the reflection of the original figure. Reflection(P) is the point represented by the red dot to the left of L, Reflection(A) is the red dot to the right of L, and point Reflection(Q) is point Q itself. Scaffolding: There are manipulatives, such as MIRA and Georeflector, -

2-D Drawing Geometry Homogeneous Coordinates

2-D Drawing Geometry Homogeneous Coordinates The rotation of a point, straight line or an entire image on the screen, about a point other than origin, is achieved by first moving the image until the point of rotation occupies the origin, then performing rotation, then finally moving the image to its original position. The moving of an image from one place to another in a straight line is called a translation. A translation may be done by adding or subtracting to each point, the amount, by which picture is required to be shifted. Translation of point by the change of coordinate cannot be combined with other transformation by using simple matrix application. Such a combination is essential if we wish to rotate an image about a point other than origin by translation, rotation again translation. To combine these three transformations into a single transformation, homogeneous coordinates are used. In homogeneous coordinate system, two-dimensional coordinate positions (x, y) are represented by triple- coordinates. Homogeneous coordinates are generally used in design and construction applications. Here we perform translations, rotations, scaling to fit the picture into proper position 2D Transformation in Computer Graphics- In Computer graphics, Transformation is a process of modifying and re- positioning the existing graphics. • 2D Transformations take place in a two dimensional plane. • Transformations are helpful in changing the position, size, orientation, shape etc of the object. Transformation Techniques- In computer graphics, various transformation techniques are- 1. Translation 2. Rotation 3. Scaling 4. Reflection 2D Translation in Computer Graphics- In Computer graphics, 2D Translation is a process of moving an object from one position to another in a two dimensional plane. -
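The translate, rotate, translate-back recipe described in this extract can be sketched with 3 × 3 homogeneous matrices. This is a minimal illustration in plain Python, assuming points are written as column vectors (x, y, 1) and angles are in radians; the helper names `matmul`, `translation`, `rotation`, `rotation_about`, and `apply` are hypothetical and not taken from the source.

```python
import math

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

def translation(tx, ty):
    """Homogeneous matrix that shifts a point by (tx, ty)."""
    return [[1.0, 0.0, tx], [0.0, 1.0, ty], [0.0, 0.0, 1.0]]

def rotation(theta):
    """Homogeneous matrix for a counterclockwise rotation about the origin."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def rotation_about(px, py, theta):
    """Single matrix for rotating about the pivot (px, py).

    The rightmost factor acts first: move the pivot to the origin,
    rotate, then move the pivot back.
    """
    return matmul(translation(px, py),
                  matmul(rotation(theta), translation(-px, -py)))

def apply(m, x, y):
    """Apply a homogeneous matrix to the point (x, y)."""
    col = matmul(m, [[x], [y], [1.0]])
    return col[0][0], col[1][0]

# Rotate the point (2, 1) by 90 degrees about the pivot (1, 1).
m = rotation_about(1.0, 1.0, math.pi / 2)
print(apply(m, 2.0, 1.0))
```

Because the three steps are folded into one matrix `m`, the same matrix can be reused for every vertex of an image, which is the practical reason the extract gives for using homogeneous coordinates.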

In This Handout, We Discuss Orthogonal Maps and Their Significance from A

In this handout, we discuss orthogonal maps and their significance from a geometric standpoint. Preliminary results on the transpose. The definition of an orthogonal matrix involves transpose, so we prove some facts about it first. Proposition 1. (a) If $A$ is an $\ell \times m$ matrix and $B$ is an $m \times n$ matrix, then $(AB)^\top = B^\top A^\top$. (b) If $A$ is an invertible $n \times n$ matrix, then $A^\top$ is invertible and $(A^\top)^{-1} = (A^{-1})^\top$. Proof. (a) We compute the $(i, j)$-entries of both sides for $1 \le i \le n$ and $1 \le j \le \ell$: $[(AB)^\top]_{ij} = [AB]_{ji} = \sum_{k=1}^{m} A_{jk} B_{ki} = \sum_{k=1}^{m} [A^\top]_{kj} [B^\top]_{ik} = \sum_{k=1}^{m} [B^\top]_{ik} [A^\top]_{kj} = [B^\top A^\top]_{ij}$. (b) It suffices to show that $A^\top (A^{-1})^\top = I$. By (a), $A^\top (A^{-1})^\top = (A^{-1} A)^\top = I^\top = I$. Orthogonal matrices. Definition (Orthogonal matrix). An $n \times n$ matrix $A$ is orthogonal if $A^{-1} = A^\top$. We will first show that “being orthogonal” is preserved by various matrix operations. Proposition 2. (a) If $A$ is orthogonal, then so is $A^{-1} = A^\top$. (b) If $A$ and $B$ are orthogonal $n \times n$ matrices, then so is $AB$. Proof. (a) We have $(A^{-1})^\top = (A^\top)^\top = A = (A^{-1})^{-1}$, so $A^{-1}$ is orthogonal. (b) We have $(AB)^{-1} = B^{-1} A^{-1} = B^\top A^\top = (AB)^\top$, so $AB$ is orthogonal. The collection $O(n)$ of $n \times n$ orthogonal matrices is the orthogonal group in dimension $n$. The above definition is often not how we identify orthogonal matrices, as it requires us to compute an $n \times n$ inverse. -

Some Linear Transformations on R2 Math 130 Linear Algebra D Joyce, Fall 2013

Some linear transformations on $\mathbb{R}^2$. Math 130 Linear Algebra, D Joyce, Fall 2013. Let's look at some linear transformations on the plane $\mathbb{R}^2$. We'll look at several kinds of operators on $\mathbb{R}^2$ including reflections, rotations, scalings, and others. We'll illustrate these transformations by applying them to the leaf shown in figure 1. In each of the figures the x-axis is the red line and the y-axis is the blue line. [Figure 1: Basic leaf. Figure 2: Reflected across x-axis.] Example 1 (A reflection). Consider the $2 \times 2$ matrix $A = \begin{bmatrix} 1 & 0 \\ 0 & -1 \end{bmatrix}$. Take a generic point $\mathbf{x} = (x, y)$ in the plane, and write it as the column vector $\mathbf{x} = \begin{bmatrix} x \\ y \end{bmatrix}$. Then the matrix product $A\mathbf{x}$ is $A\mathbf{x} = \begin{bmatrix} 1 & 0 \\ 0 & -1 \end{bmatrix} \begin{bmatrix} x \\ y \end{bmatrix} = \begin{bmatrix} x \\ -y \end{bmatrix}$. Thus, the matrix $A$ transforms the point $(x, y)$ to the point $T(x, y) = (x, -y)$. You'll recognize this right away as a reflection across the x-axis. The x-axis is special to this operator as it's the set of fixed points. In other words, it's the 1-eigenspace. The y-axis is also special as every point $(0, y)$ is sent to its negation $-(0, y)$. That means the y-axis is an eigenspace with eigenvalue $-1$, that is, it's the $-1$-eigenspace. There aren't any other eigenspaces. A reflection always has these two eigenspaces: a 1-eigenspace and a $-1$-eigenspace. Every $2 \times 2$ matrix describes some kind of geometric transformation of the plane. But since the origin $(0, 0)$ is always sent to itself, not every geometric transformation can be described by a matrix in this way. -
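The eigenvalue claim in this extract can be verified directly from the characteristic polynomial of a 2 × 2 matrix, λ² − (trace)λ + (determinant) = 0. The sketch below is illustrative only; `eigenvalues_2x2` and `apply` are hypothetical helper names, and the code assumes the matrix has real eigenvalues.

```python
import math

def eigenvalues_2x2(m):
    """Real eigenvalues of a 2x2 matrix via its characteristic polynomial."""
    (a, b), (c, d) = m
    trace = a + d
    det = a * d - b * c
    disc = trace * trace - 4 * det
    if disc < 0:
        raise ValueError("matrix has no real eigenvalues")
    root = math.sqrt(disc)
    return ((trace + root) / 2, (trace - root) / 2)

def apply(m, v):
    """Multiply a 2x2 matrix by a vector (x, y)."""
    return (m[0][0] * v[0] + m[0][1] * v[1],
            m[1][0] * v[0] + m[1][1] * v[1])

# Reflection across the x-axis, as in Example 1.
A = [[1, 0], [0, -1]]

print(eigenvalues_2x2(A))   # the two eigenvalues of the reflection
print(apply(A, (5, 0)))     # a point on the x-axis is fixed
print(apply(A, (0, 3)))     # a point on the y-axis is negated
```

For this matrix the trace is 0 and the determinant is −1, so the polynomial is λ² − 1, whose roots are the eigenvalues 1 and −1 named in the text.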

Math 21B Orthogonal Matrices

Math 21b Orthogonal Matrices. Transpose. The transpose of an $n \times m$ matrix $A$ with entries $a_{ij}$ is the $m \times n$ matrix $A^T$ with entries $a_{ji}$. The transpose $A^T$ and $A$ are related by swapping rows with columns. Properties. Let $A$, $B$ be matrices and $\vec{v}$, $\vec{w}$ be vectors. 1. $(AB)^T = B^T A^T$. 2. $\vec{v}^T \vec{w} = \vec{v} \cdot \vec{w}$. 3. $(A\vec{v}) \cdot \vec{w} = \vec{v} \cdot (A^T \vec{w})$. 4. If $A$ is invertible, so is $A^T$ and $(A^T)^{-1} = (A^{-1})^T$. 5. $\ker(A^T) = (\operatorname{im} A)^\perp$ and $\operatorname{im}(A^T) = (\ker A)^\perp$. Orthogonal Matrices. An invertible matrix $A$ is called orthogonal if $A^{-1} = A^T$ or equivalently, $A^T A = I$. The corresponding linear transformation $T(\vec{x}) = A\vec{x}$ is called an orthogonal transformation. Caution! Orthogonal projections are almost never orthogonal transformations! Examples. Determine which of the following types of matrices are orthogonal. For those which aren't, under what conditions would they be? 1. (Rotation) $A = \begin{bmatrix} \cos\alpha & -\sin\alpha \\ \sin\alpha & \cos\alpha \end{bmatrix}$. Solution. Check if $AA^T = I$: $AA^T = \begin{bmatrix} \cos\alpha & -\sin\alpha \\ \sin\alpha & \cos\alpha \end{bmatrix} \begin{bmatrix} \cos\alpha & \sin\alpha \\ -\sin\alpha & \cos\alpha \end{bmatrix} = \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix}$, so yes, this matrix is orthogonal. This shows that any rotation is an orthogonal transformation. 2. (Reflection Dilation) $B = \begin{bmatrix} a & b \\ b & -a \end{bmatrix}$. Solution. Check if $BB^T = I$: $BB^T = \begin{bmatrix} a & b \\ b & -a \end{bmatrix} \begin{bmatrix} a & b \\ b & -a \end{bmatrix} = \begin{bmatrix} a^2 + b^2 & 0 \\ 0 & a^2 + b^2 \end{bmatrix}$. This will equal $I$ only if $a^2 + b^2 = 1$, i.e., $B$ is a reflection (no dilation). This shows that any reflection is an orthogonal transformation. 3. -
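The two worked examples in this extract can be checked numerically by forming $A^T A$ and comparing it with the identity. This is a minimal sketch in plain Python; the names `transpose`, `matmul`, and `is_orthogonal`, and the tolerance `tol`, are assumptions made for illustration and are not part of the source.

```python
import math

def transpose(m):
    """Swap rows with columns."""
    return [list(row) for row in zip(*m)]

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

def is_orthogonal(m, tol=1e-9):
    """Return True if m^T m equals the identity up to the tolerance tol."""
    n = len(m)
    product = matmul(transpose(m), m)
    return all(abs(product[i][j] - (1.0 if i == j else 0.0)) <= tol
               for i in range(n) for j in range(n))

alpha = 0.7  # arbitrary angle in radians
rotation = [[math.cos(alpha), -math.sin(alpha)],
            [math.sin(alpha), math.cos(alpha)]]

unit_reflection = [[0.6, 0.8], [0.8, -0.6]]      # a^2 + b^2 = 1
reflection_dilation = [[3.0, 4.0], [4.0, -3.0]]  # a^2 + b^2 = 25

print(is_orthogonal(rotation))
print(is_orthogonal(unit_reflection))
print(is_orthogonal(reflection_dilation))
```

A tolerance is used instead of exact equality because the products are computed in floating point; the third matrix fails the check since its diagonal entries come out as $a^2 + b^2 = 25$ rather than 1.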

Geometry Topics

GEOMETRY TOPICS Transformations Rotation Turn! Reflection Flip! Translation Slide! After any of these transformations (turn, flip or slide), the shape still has the same size so the shapes are congruent. Rotations Rotation means turning around a center. The distance from the center to any point on the shape stays the same. The rotation has the same size as the original shape. Here a triangle is rotated around the point marked with a "+" Translations In Geometry, "Translation" simply means Moving. The translation has the same size of the original shape. To Translate a shape: Every point of the shape must move: the same distance in the same direction. Reflections A reflection is a flip over a line. In a Reflection every point is the same distance from a central line. The reflection has the same size as the original image. The central line is called the Mirror Line ... Mirror Lines can be in any direction. Reflection Symmetry Reflection Symmetry (sometimes called Line Symmetry or Mirror Symmetry) is easy to see, because one half is the reflection of the other half. Here my dog "Flame" has her face made perfectly symmetrical with a bit of photo magic. The white line down the center is the Line of Symmetry (also called the "Mirror Line") The reflection in this lake also has symmetry, but in this case: -The Line of Symmetry runs left-to-right (horizontally) -It is not perfect symmetry, because of the lake surface. Line of Symmetry The Line of Symmetry (also called the Mirror Line) can be in any direction. But there are four common directions, and they are named for the line they make on the standard XY graph. -

Euclidean Plane Isometries ( Congruences )

Euclidean plane Isometries (Congruences). A file of the Geometrikon gallery by Paris Pamfilos. “To properly know the truth is to be in the truth; it is to have the truth for one’s life. This always costs a struggle. Any other kind of knowledge is a falsification. In short, the truth, if it is really there, is a being, a life.” Kierkegaard, Truth is the way. Contents: 1 Transformations of the plane; 2 Isometries, general properties; 3 Reflections or mirrorings; 4 Translations; 5 Rotations; 6 Congruence; 7 Some compositions of isometries. 1 Transformations of the plane. “Transformation” of the plane is called a process $f$, which assigns to every point $X$ of the plane, with a possible exception of some special points, another point $Y$ of the plane which we denote by $f(X)$. We write $Y = f(X)$ and we call $X$ a “preimage” of the transformation and $Y$ the “image” of $X$ through the transformation. We often say that the transformation $f$ “maps” $X$ to $Y$. For the process $f$ we accept that it satisfies the requirement $X \neq X' \Rightarrow f(X) \neq f(X')$. In other words, different points also have different images. Equivalently, this means that, if for two points $X$, $X'$ holds $f(X) = f(X')$, then it will also hold $X = X'$. The set of points $X$, on which the transformation $f$ is defined, is called “domain” of the transformation $f$, while the set consisting of all $Y$, such that $Y = f(X)$, when $X$ varies in the domain of $f$, is called “range” of $f$. -

39 Symmetry of Plane Figures

39 Symmetry of Plane Figures. In this section, we are interested in the symmetric properties of plane figures. By a symmetry of a plane figure we mean a motion of the plane that moves the figure so that it falls back on itself. The two types of symmetry that we discuss are reflection symmetry and rotational symmetry. Reflection Symmetry: A plane figure is symmetric about a line if it is its own image when flipped across the line. We call the reflection line the line of symmetry. In other words, a figure has a line symmetry if it can be folded along the line so that one half of the figure matches the other half. Reflection symmetry is also known as mirror symmetry, since the line of symmetry acts like a double-sided mirror. Points on each side are reflected to the opposite side. Many plane figures have several line symmetries. Figure 39.1 shows some of the plane figures with their line symmetries shown dashed. [Figure 39.1] Remark 39.1: Using reflection symmetry we can establish properties for some plane figures. For example, since an isosceles triangle has one line of symmetry, the base angles, i.e. the angles opposite the congruent sides, are congruent. A similar property holds for isosceles trapezoids. Rotational Symmetry: A plane figure has rotational symmetry if and only if it can be rotated more than 0° and less than or equal to 360° about a fixed point called the center of rotation so that its image coincides with its original position. Figure 39.2 shows the four different rotations of a square. -

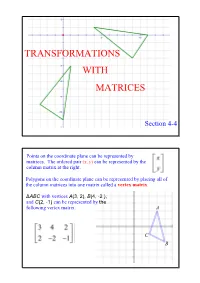

Transformations with Matrices

TRANSFORMATIONS WITH MATRICES Section 44. Points on the coordinate plane can be represented by matrices. The ordered pair $(x, y)$ can be represented by the column matrix $\begin{bmatrix} x \\ y \end{bmatrix}$. Polygons on the coordinate plane can be represented by placing all of the column matrices into one matrix called a vertex matrix. ΔABC with vertices A(3, 2), B(4, 2), and C(2, 1) can be represented by the following vertex matrix. You can use matrices to perform transformations (translations, reflections, and rotations). Remember that the original figure is called the preimage and the figure after the transformation is the image. If the two figures are congruent then the transformation is an isometry. Example 1 (Translation). a. Find the coordinates of the vertices of the image of quadrilateral QUAD with Q(2, 3), U(5, 2), A(4, −2), and D(1, −1), if it is moved 4 units to the left and 2 units up. Write the vertex matrix for quadrilateral QUAD. Write the transformation matrix. Vertex matrix of QUAD + translation matrix = vertex matrix of Q'U'A'D': $\begin{bmatrix} 2 & 5 & 4 & 1 \\ 3 & 2 & -2 & -1 \end{bmatrix} + \begin{bmatrix} -4 & -4 & -4 & -4 \\ 2 & 2 & 2 & 2 \end{bmatrix} = \begin{bmatrix} -2 & 1 & 0 & -3 \\ 5 & 4 & 0 & 1 \end{bmatrix}$. The coordinates of Q'U'A'D' are: Q'(−2, 5), U'(1, 4), A'(0, 0) and D'(−3, 1). b. Graph the preimage and the image. Example 2. Rectangle A'B'C'D' is the result of a translation of rectangle ABCD. A table of the vertices of each rectangle is shown. Find the coordinates of A and D'. Rectangle ABCD: A, B(1, 5), C(1, 2), D(4, 2). Rectangle A'B'C'D': A'(1, 1), B'(4, 1), C'(4, 6), D'. Dilations. When a figure is reduced or enlarged it is called a dilation.
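Translating a polygon by adding a translation matrix to its vertex matrix can be sketched as follows. The triangle used here is hypothetical and chosen only for illustration; the shift of 4 units left and 2 units up follows Example 1. The function name `translate` is an assumption, not something defined in the source.

```python
def translate(vertex_matrix, dx, dy):
    """Add the shift (dx, dy) to every column of a 2-row vertex matrix.

    The first row holds the x-coordinates and the second row the
    y-coordinates, one column per vertex.
    """
    xs, ys = vertex_matrix
    return [[x + dx for x in xs], [y + dy for y in ys]]

# Hypothetical triangle with vertices (0, 0), (3, 1), (1, 4).
triangle = [[0, 3, 1],
            [0, 1, 4]]

# Move 4 units to the left and 2 units up.
image = translate(triangle, -4, 2)
print(image)
```

Adding the same column (dx, dy) to every vertex is equivalent to adding a translation matrix whose columns all repeat that shift, which is the form used in the worked example.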