The Design of Terra: Harnessing the Best Features of High-Level and Low-Level Languages

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Chapter 12 Calc Macros Automating Repetitive Tasks Copyright

Calc Guide Chapter 12 Calc Macros Automating repetitive tasks Copyright This document is Copyright © 2019 by the LibreOffice Documentation Team. Contributors are listed below. You may distribute it and/or modify it under the terms of either the GNU General Public License (http://www.gnu.org/licenses/gpl.html), version 3 or later, or the Creative Commons Attribution License (http://creativecommons.org/licenses/by/4.0/), version 4.0 or later. All trademarks within this guide belong to their legitimate owners. Contributors This book is adapted and updated from the LibreOffice 4.1 Calc Guide. To this edition Steve Fanning Jean Hollis Weber To previous editions Andrew Pitonyak Barbara Duprey Jean Hollis Weber Simon Brydon Feedback Please direct any comments or suggestions about this document to the Documentation Team’s mailing list: [email protected]. Note Everything you send to a mailing list, including your email address and any other personal information that is written in the message, is publicly archived and cannot be deleted. Publication date and software version Published December 2019. Based on LibreOffice 6.2. Using LibreOffice on macOS Some keystrokes and menu items are different on macOS from those used in Windows and Linux. The table below gives some common substitutions for the instructions in this chapter. For a more detailed list, see the application Help. Windows or Linux macOS equivalent Effect Tools > Options menu LibreOffice > Preferences Access setup options Right-click Control + click or right-click -

Rexx Programmer's Reference

01_579967 ffirs.qxd 2/3/05 9:00 PM Page i Rexx Programmer’s Reference Howard Fosdick 01_579967 ffirs.qxd 2/3/05 9:00 PM Page iv 01_579967 ffirs.qxd 2/3/05 9:00 PM Page i Rexx Programmer’s Reference Howard Fosdick 01_579967 ffirs.qxd 2/3/05 9:00 PM Page ii Rexx Programmer’s Reference Published by Wiley Publishing, Inc. 10475 Crosspoint Boulevard Indianapolis, IN 46256 www.wiley.com Copyright © 2005 by Wiley Publishing, Inc., Indianapolis, Indiana Published simultaneously in Canada ISBN: 0-7645-7996-7 Manufactured in the United States of America 10 9 8 7 6 5 4 3 2 1 1MA/ST/QS/QV/IN No part of this publication may be reproduced, stored in a retrieval system or transmitted in any form or by any means, electronic, mechanical, photocopying, recording, scanning or otherwise, except as permitted under Sections 107 or 108 of the 1976 United States Copyright Act, without either the prior written permission of the Publisher, or authorization through payment of the appropriate per-copy fee to the Copyright Clearance Center, 222 Rosewood Drive, Danvers, MA 01923, (978) 750-8400, fax (978) 646-8600. Requests to the Publisher for permission should be addressed to the Legal Department, Wiley Publishing, Inc., 10475 Crosspoint Blvd., Indianapolis, IN 46256, (317) 572-3447, fax (317) 572-4355, e-mail: [email protected]. LIMIT OF LIABILITY/DISCLAIMER OF WARRANTY: THE PUBLISHER AND THE AUTHOR MAKE NO REPRESENTATIONS OR WARRANTIES WITH RESPECT TO THE ACCURACY OR COM- PLETENESS OF THE CONTENTS OF THIS WORK AND SPECIFICALLY DISCLAIM ALL WAR- RANTIES, INCLUDING WITHOUT LIMITATION WARRANTIES OF FITNESS FOR A PARTICULAR PURPOSE. -

The LLVM Instruction Set and Compilation Strategy

The LLVM Instruction Set and Compilation Strategy Chris Lattner Vikram Adve University of Illinois at Urbana-Champaign lattner,vadve ¡ @cs.uiuc.edu Abstract This document introduces the LLVM compiler infrastructure and instruction set, a simple approach that enables sophisticated code transformations at link time, runtime, and in the field. It is a pragmatic approach to compilation, interfering with programmers and tools as little as possible, while still retaining extensive high-level information from source-level compilers for later stages of an application’s lifetime. We describe the LLVM instruction set, the design of the LLVM system, and some of its key components. 1 Introduction Modern programming languages and software practices aim to support more reliable, flexible, and powerful software applications, increase programmer productivity, and provide higher level semantic information to the compiler. Un- fortunately, traditional approaches to compilation either fail to extract sufficient performance from the program (by not using interprocedural analysis or profile information) or interfere with the build process substantially (by requiring build scripts to be modified for either profiling or interprocedural optimization). Furthermore, they do not support optimization either at runtime or after an application has been installed at an end-user’s site, when the most relevant information about actual usage patterns would be available. The LLVM Compilation Strategy is designed to enable effective multi-stage optimization (at compile-time, link-time, runtime, and offline) and more effective profile-driven optimization, and to do so without changes to the traditional build process or programmer intervention. LLVM (Low Level Virtual Machine) is a compilation strategy that uses a low-level virtual instruction set with rich type information as a common code representation for all phases of compilation. -

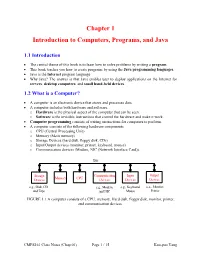

Chapter 1 Introduction to Computers, Programs, and Java

Chapter 1 Introduction to Computers, Programs, and Java 1.1 Introduction • The central theme of this book is to learn how to solve problems by writing a program . • This book teaches you how to create programs by using the Java programming languages . • Java is the Internet program language • Why Java? The answer is that Java enables user to deploy applications on the Internet for servers , desktop computers , and small hand-held devices . 1.2 What is a Computer? • A computer is an electronic device that stores and processes data. • A computer includes both hardware and software. o Hardware is the physical aspect of the computer that can be seen. o Software is the invisible instructions that control the hardware and make it work. • Computer programming consists of writing instructions for computers to perform. • A computer consists of the following hardware components o CPU (Central Processing Unit) o Memory (Main memory) o Storage Devices (hard disk, floppy disk, CDs) o Input/Output devices (monitor, printer, keyboard, mouse) o Communication devices (Modem, NIC (Network Interface Card)). Bus Storage Communication Input Output Memory CPU Devices Devices Devices Devices e.g., Disk, CD, e.g., Modem, e.g., Keyboard, e.g., Monitor, and Tape and NIC Mouse Printer FIGURE 1.1 A computer consists of a CPU, memory, Hard disk, floppy disk, monitor, printer, and communication devices. CMPS161 Class Notes (Chap 01) Page 1 / 15 Kuo-pao Yang 1.2.1 Central Processing Unit (CPU) • The central processing unit (CPU) is the brain of a computer. • It retrieves instructions from memory and executes them. -

Scripting: Higher- Level Programming for the 21St Century

. John K. Ousterhout Sun Microsystems Laboratories Scripting: Higher- Cybersquare Level Programming for the 21st Century Increases in computer speed and changes in the application mix are making scripting languages more and more important for the applications of the future. Scripting languages differ from system programming languages in that they are designed for “gluing” applications together. They use typeless approaches to achieve a higher level of programming and more rapid application development than system programming languages. or the past 15 years, a fundamental change has been ated with system programming languages and glued Foccurring in the way people write computer programs. together with scripting languages. However, several The change is a transition from system programming recent trends, such as faster machines, better script- languages such as C or C++ to scripting languages such ing languages, the increasing importance of graphical as Perl or Tcl. Although many people are participat- user interfaces (GUIs) and component architectures, ing in the change, few realize that the change is occur- and the growth of the Internet, have greatly expanded ring and even fewer know why it is happening. This the applicability of scripting languages. These trends article explains why scripting languages will handle will continue over the next decade, with more and many of the programming tasks in the next century more new applications written entirely in scripting better than system programming languages. languages and system programming -

What Is LLVM? and a Status Update

What is LLVM? And a Status Update. Approved for public release Hal Finkel Leadership Computing Facility Argonne National Laboratory Clang, LLVM, etc. ✔ LLVM is a liberally-licensed(*) infrastructure for creating compilers, other toolchain components, and JIT compilation engines. ✔ Clang is a modern C++ frontend for LLVM ✔ LLVM and Clang will play significant roles in exascale computing systems! (*) Now under the Apache 2 license with the LLVM Exception LLVM/Clang is both a research platform and a production-quality compiler. 2 A role in exascale? Current/Future HPC vendors are already involved (plus many others)... Apple + Google Intel (Many millions invested annually) + many others (Qualcomm, Sony, Microsoft, Facebook, Ericcson, etc.) ARM LLVM IBM Cray NVIDIA (and PGI) Academia, Labs, etc. AMD 3 What is LLVM: LLVM is a multi-architecture infrastructure for constructing compilers and other toolchain components. LLVM is not a “low-level virtual machine”! LLVM IR Architecture-independent simplification Architecture-aware optimization (e.g. vectorization) Assembly printing, binary generation, or JIT execution Backends (Type legalization, instruction selection, register allocation, etc.) 4 What is Clang: LLVM IR Clang is a C++ frontend for LLVM... Code generation Parsing and C++ Source semantic analysis (C++14, C11, etc.) Static analysis ● For basic compilation, Clang works just like gcc – using clang instead of gcc, or clang++ instead of g++, in your makefile will likely “just work.” ● Clang has a scalable LTO, check out: https://clang.llvm.org/docs/ThinLTO.html 5 The core LLVM compiler-infrastructure components are one of the subprojects in the LLVM project. These components are also referred to as “LLVM.” 6 What About Flang? ● Started as a collaboration between DOE and NVIDIA/PGI. -

Compile-Time Safety and Runtime Performance in Programming Frameworks for Distributed Systems

Compile-time Safety and Runtime Performance in Programming Frameworks for Distributed Systems lars kroll Doctoral Thesis in Information and Communication Technology School of Electrical Engineering and Computer Science KTH Royal Institute of Technology Stockholm, Sweden 2020 School of Electrical Engineering and Computer Science KTH Royal Institute of Technology TRITA-EECS-AVL-2020:13 SE-164 40 Kista ISBN: 978-91-7873-445-0 SWEDEN Akademisk avhandling som med tillstånd av Kungliga Tekniska Högskolan fram- lägges till offentlig granskning för avläggande av teknologie doktorsexamen i informations- och kommunikationsteknik på fredagen den 6 mars 2020 kl. 13:00 i Sal C, Electrum, Kungliga Tekniska Högskolan, Kistagången 16, Kista. © Lars Kroll, February 2020 Printed by Universitetsservice US-AB IV Abstract Distributed Systems, that is systems that must tolerate partial failures while exploiting parallelism, are a fundamental part of the software landscape today. Yet, their development and design still pose many challenges to developers when it comes to reliability and performance, and these challenges often have a negative impact on developer productivity. Distributed programming frameworks and languages attempt to provide solutions to common challenges, so that application developers can focus on business logic. However, the choice of programming model as provided by a such a framework or language will have significant impact both on the runtime performance of applications, as well as their reliability. In this thesis, we argue for programming models that are statically typed, both for reliability and performance reasons, and that provide powerful abstractions, giving developers the tools to implement fast algorithms without being constrained by the choice of the programming model. -

10 Programming Languages You Should Learn Right Now by Deborah Rothberg September 15, 2006 8 Comments Posted Add Your Opinion

10 Programming Languages You Should Learn Right Now By Deborah Rothberg September 15, 2006 8 comments posted Add your opinion Knowing a handful of programming languages is seen by many as a harbor in a job market storm, solid skills that will be marketable as long as the languages are. Yet, there is beauty in numbers. While there may be developers who have had riches heaped on them by knowing the right programming language at the right time in the right place, most longtime coders will tell you that periodically learning a new language is an essential part of being a good and successful Web developer. "One of my mentors once told me that a programming language is just a programming language. It doesn't matter if you're a good programmer, it's the syntax that matters," Tim Huckaby, CEO of San Diego-based software engineering company CEO Interknowlogy.com, told eWEEK. However, Huckaby said that while his company is "swimmi ng" in work, he's having a nearly impossible time finding recruits, even on the entry level, that know specific programming languages. "We're hiring like crazy, but we're not having an easy time. We're just looking for attitude and aptitude, kids right out of school that know .Net, or even Java, because with that we can train them on .Net," said Huckaby. "Don't get fixated on one or two languages. When I started in 1969, FORTRAN, COBOL and S/360 Assembler were the big tickets. Today, Java, C and Visual Basic are. In 10 years time, some new set of languages will be the 'in thing.' …At last count, I knew/have learned over 24 different languages in over 30 years," Wayne Duqaine, director of Software Development at Grandview Systems, of Sebastopol, Calif., told eWEEK. -

CUDA Flux: a Lightweight Instruction Profiler for CUDA Applications

CUDA Flux: A Lightweight Instruction Profiler for CUDA Applications Lorenz Braun Holger Froning¨ Institute of Computer Engineering Institute of Computer Engineering Heidelberg University Heidelberg University Heidelberg, Germany Heidelberg, Germany [email protected] [email protected] Abstract—GPUs are powerful, massively parallel processors, hardware, it lacks verbosity when it comes to analyzing the which require a vast amount of thread parallelism to keep their different types of instructions executed. This problem is aggra- thousands of execution units busy, and to tolerate latency when vated by the recently frequent introduction of new instructions, accessing its high-throughput memory system. Understanding the behavior of massively threaded GPU programs can be for instance floating point operations with reduced precision difficult, even though recent GPUs provide an abundance of or special function operations including Tensor Cores for ma- hardware performance counters, which collect statistics about chine learning. Similarly, information on the use of vectorized certain events. Profiling tools that assist the user in such analysis operations is often lost. Characterizing workloads on PTX for their GPUs, like NVIDIA’s nvprof and cupti, are state-of- level is desirable as PTX allows us to determine the exact types the-art. However, instrumentation based on reading hardware performance counters can be slow, in particular when the number of the instructions a kernel executes. On the contrary, nvprof of metrics is large. Furthermore, the results can be inaccurate as metrics are based on a lower-level representation called SASS. instructions are grouped to match the available set of hardware Still, even if there was an exact mapping, some information on counters. -

Implementing Continuation Based Language in LLVM and Clang

Implementing Continuation based language in LLVM and Clang Kaito TOKUMORI Shinji KONO University of the Ryukyus University of the Ryukyus Email: [email protected] Email: [email protected] Abstract—The programming paradigm which use data seg- 1 __code f(Allocate allocate){ 2 allocate.size = 0; ments and code segments are proposed. This paradigm uses 3 goto g(allocate); Continuation based C (CbC), which a slight modified C language. 4 } 5 Code segments are units of calculation and Data segments are 6 // data segment definition sets of typed data. We use these segments as units of computation 7 // (generated automatically) 8 union Data { and meta computation. In this paper we show the implementation 9 struct Allocate { of CbC on LLVM and Clang 3.7. 10 long size; 11 } allocate; 12 }; I. A PRACTICAL CONTINUATION BASED LANGUAGE TABLE I The proposed units of programming are named code seg- CBCEXAMPLE ments and data segments. Code segments are units of calcu- lation which have no state. Data segments are sets of typed data. Code segments are connected to data segments by a meta data segment called a context. After the execution of a code have input data segments and output data segments. Data segment and its context, the next code segment (continuation) segments have two kind of dependency with code segments. is executed. First, Code segments access the contents of data segments Continuation based C (CbC) [1], hereafter referred to as using field names. So data segments should have the named CbC, is a slight modified C which supports code segments. -

Comparative Studies of Programming Languages; Course Lecture Notes

Comparative Studies of Programming Languages, COMP6411 Lecture Notes, Revision 1.9 Joey Paquet Serguei A. Mokhov (Eds.) August 5, 2010 arXiv:1007.2123v6 [cs.PL] 4 Aug 2010 2 Preface Lecture notes for the Comparative Studies of Programming Languages course, COMP6411, taught at the Department of Computer Science and Software Engineering, Faculty of Engineering and Computer Science, Concordia University, Montreal, QC, Canada. These notes include a compiled book of primarily related articles from the Wikipedia, the Free Encyclopedia [24], as well as Comparative Programming Languages book [7] and other resources, including our own. The original notes were compiled by Dr. Paquet [14] 3 4 Contents 1 Brief History and Genealogy of Programming Languages 7 1.1 Introduction . 7 1.1.1 Subreferences . 7 1.2 History . 7 1.2.1 Pre-computer era . 7 1.2.2 Subreferences . 8 1.2.3 Early computer era . 8 1.2.4 Subreferences . 8 1.2.5 Modern/Structured programming languages . 9 1.3 References . 19 2 Programming Paradigms 21 2.1 Introduction . 21 2.2 History . 21 2.2.1 Low-level: binary, assembly . 21 2.2.2 Procedural programming . 22 2.2.3 Object-oriented programming . 23 2.2.4 Declarative programming . 27 3 Program Evaluation 33 3.1 Program analysis and translation phases . 33 3.1.1 Front end . 33 3.1.2 Back end . 34 3.2 Compilation vs. interpretation . 34 3.2.1 Compilation . 34 3.2.2 Interpretation . 36 3.2.3 Subreferences . 37 3.3 Type System . 38 3.3.1 Type checking . 38 3.4 Memory management . -

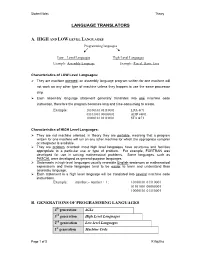

Language Translators

Student Notes Theory LANGUAGE TRANSLATORS A. HIGH AND LOW LEVEL LANGUAGES Programming languages Low – Level Languages High-Level Languages Example: Assembly Language Example: Pascal, Basic, Java Characteristics of LOW Level Languages: They are machine oriented : an assembly language program written for one machine will not work on any other type of machine unless they happen to use the same processor chip. Each assembly language statement generally translates into one machine code instruction, therefore the program becomes long and time-consuming to create. Example: 10100101 01110001 LDA &71 01101001 00000001 ADD #&01 10000101 01110001 STA &71 Characteristics of HIGH Level Languages: They are not machine oriented: in theory they are portable , meaning that a program written for one machine will run on any other machine for which the appropriate compiler or interpreter is available. They are problem oriented: most high level languages have structures and facilities appropriate to a particular use or type of problem. For example, FORTRAN was developed for use in solving mathematical problems. Some languages, such as PASCAL were developed as general-purpose languages. Statements in high-level languages usually resemble English sentences or mathematical expressions and these languages tend to be easier to learn and understand than assembly language. Each statement in a high level language will be translated into several machine code instructions. Example: number:= number + 1; 10100101 01110001 01101001 00000001 10000101 01110001 B. GENERATIONS OF PROGRAMMING LANGUAGES 4th generation 4GLs 3rd generation High Level Languages 2nd generation Low-level Languages 1st generation Machine Code Page 1 of 5 K Aquilina Student Notes Theory 1. MACHINE LANGUAGE – 1ST GENERATION In the early days of computer programming all programs had to be written in machine code.