Functional Debugging

Total pages: 16

File type: PDF, Size: 1020 KB

Recommended publications

e2 studio Integrated Development Environment User's Manual: Getting Started Guide

User’s Manual e2 studio Integrated Development Environment User’s Manual: Getting Started Guide Target Device RX, RL78, RH850 and RZ Family Rev.4.00 May 2016 www.renesas.com Notice 1. Descriptions of circuits, software and other related information in this document are provided only to illustrate the operation of semiconductor products and application examples. You are fully responsible for the incorporation of these circuits, software, and information in the design of your equipment. Renesas Electronics assumes no responsibility for any losses incurred by you or third parties arising from the use of these circuits, software, or information. 2. Renesas Electronics has used reasonable care in preparing the information included in this document, but Renesas Electronics does not warrant that such information is error free. Renesas Electronics assumes no liability whatsoever for any damages incurred by you resulting from errors in or omissions from the information included herein. 3. Renesas Electronics does not assume any liability for infringement of patents, copyrights, or other intellectual property rights of third parties by or arising from the use of Renesas Electronics products or technical information described in this document. No license, express, implied or otherwise, is granted hereby under any patents, copyrights or other intellectual property rights of Renesas Electronics or others. 4. You should not alter, modify, copy, or otherwise misappropriate any Renesas Electronics product, whether in whole or in part. Renesas Electronics assumes no responsibility for any losses incurred by you or third parties arising from such alteration, modification, copy or otherwise misappropriation of Renesas Electronics product. 5. Renesas Electronics products are classified according to the following two quality grades: “Standard” and “High Quality”. -

Release Notes (100 001420)

VERITAS File System™ 3.3.3 Release Notes Solaris January 2000 100-001420 Disclaimer The information contained in this publication is subject to change without notice. VERITAS Software Corporation makes no warranty of any kind with regard to this manual, including, but not limited to, the implied warranties of merchantability and fitness for a particular purpose. VERITAS Software Corporation shall not be liable for errors contained herein or for incidental or consequential damages in connection with the furnishing, performance, or use of this manual. Copyright Copyright © 2000 VERITAS Software Corporation. All rights reserved. VERITAS is a registered trademark of VERITAS Software Corporation in the US and other countries. The VERITAS logo and VERITAS File System are trademarks of VERITAS Software Corporation. All other trademarks or registered trademarks are the property of their respective owners. Printed in the USA, January 2000. VERITAS Software Corporation 1600 Plymouth St. Mountain View, CA 94043 Phone 650–335–8000 Fax 650–335–8050 www.veritas.com VERITAS File System Release Notes This guide provides information on VERITAS File System™ (VxFS) Release 3.3.3 for Solaris 2.5.1, Solaris 2.6, Solaris 7 (32-bit and 64-bit), and Solaris 8 (32-bit and 64-bit) operating systems. References in this document to VxFS 3.3 regarding new features, end of product support, compatibility, and software limitations apply to VxFS 3.3.3. Review this entire document before installing VxFS. The VERITAS File System packages include VxFS software, documentation, -

SAS® Debugging 101 Kirk Paul Lafler, Software Intelligence Corporation, Spring Valley, California

PharmaSUG 2017 - Paper TT03 SAS® Debugging 101 Kirk Paul Lafler, Software Intelligence Corporation, Spring Valley, California Abstract SAS® users are almost always surprised to discover their programs contain bugs. In fact, when asked users will emphatically stand by their programs and logic by saying they are bug free. But, the vast number of experiences along with the realities of writing code says otherwise. Bugs in software can appear anywhere; whether accidentally built into the software by developers, or introduced by programmers when writing code. No matter where the origins of bugs occur, the one thing that all SAS users know is that debugging SAS program errors and warnings can be a daunting, and humbling, task. This presentation explores the world of SAS bugs, providing essential information about the types of bugs, how bugs are created, the symptoms of bugs, and how to locate bugs. Attendees learn how to apply effective techniques to better understand, identify, and repair bugs and enable program code to work as intended. Introduction From the very beginning of computing history, program code, or the instructions used to tell a computer how and when to do something, has been plagued with errors, or bugs. Even if your own program code is determined to be error-free, there is a high probability that the operating system and/or software being used, such as the SAS software, have embedded program errors in them. As a result, program code should be written to assume the unexpected and, as much as possible, be able to handle unforeseen events using acceptable strategies and programming methodologies. -

Functional Specification for Integrated Document and Records Management Solutions

FUNCTIONAL SPECIFICATION FOR INTEGRATED DOCUMENT AND RECORDS MANAGEMENT SOLUTIONS, APRIL 2004. National Archives and Records Service of South Africa, Department of Arts and Culture. Draft. National Archives and Records Service of South Africa, Private Bag X236, PRETORIA 0001. Version 1, April 2004.
The information contained in this publication was, with the kind permission of the UK National Archives, for the most part derived from their Functional Requirements for Electronic Records Management Systems {http://www.pro.gov.uk/recordsmanagement/erecords/2002reqs/}. The information contained in this publication may be re-used provided that proper acknowledgement is given to the specific publication and to the National Archives and Records Service of South Africa.
CONTENT
1. INTRODUCTION
2. KEY TERMINOLOGY
3. FUNCTIONAL REQUIREMENTS
A CORE REQUIREMENTS
A1: DOCUMENT MANAGEMENT
A2: RECORDS MANAGEMENT
A3: RECORD CAPTURE, DECLARATION AND MANAGEMENT

Agile Playbook v2.1—What’s New?

AGILE PLAYBOOK
TABLE OF CONTENTS
INTRODUCTION
  Who should use this playbook?
  How should you use this playbook?
  Agile Playbook v2.1—What’s new?
  How and where can you contribute to this playbook?
MEET YOUR GUIDES
AN AGILE DELIVERY MODEL
GETTING STARTED
THE PLAYS
  Delivery
    Play: Start with Scrum
    Play: Seeing success but need more flexibility? Move on to Scrumban
    Play: If you are ready to kick off the training wheels, try Kanban
  Value

Opportunities and Open Problems for Static and Dynamic Program Analysis Mark Harman∗, Peter O’Hearn∗ ∗Facebook London and University College London, UK

From Start-ups to Scale-ups: Opportunities and Open Problems for Static and Dynamic Program Analysis
Mark Harman∗, Peter O’Hearn∗
∗Facebook London and University College London, UK
Abstract: This paper describes some of the challenges and opportunities when deploying static and dynamic analysis at scale, drawing on the authors’ experience with the Infer and Sapienz Technologies at Facebook, each of which started life as a research-led start-up that was subsequently deployed at scale, impacting billions of people worldwide. The paper identifies open problems that have yet to receive significant attention from the scientific community, yet which have potential for profound real world impact, formulating these as research questions that, we believe, are ripe for exploration and that would make excellent topics for research projects.
I. INTRODUCTION
How do we transition research on static and dynamic analysis techniques from the testing and verification research communities to industrial practice? Many have asked this question, and others related to it.
research questions that target the most productive intersection we have yet witnessed: that between exciting, intellectually challenging science, and real-world deployment impact. Many industrialists have perhaps tended to regard it unlikely that much academic work will prove relevant to their most pressing industrial concerns. On the other hand, it is not uncommon for academic and scientific researchers to believe that most of the problems faced by industrialists are either boring, tedious or scientifically uninteresting. This sociological phenomenon has led to a great deal of miscommunication between the academic and industrial sectors. We hope that we can make a small contribution by focusing on the intersection of challenging and interesting scientific problems with pressing industrial deployment needs. Our aim is to move the debate beyond relatively unhelpful observations we have typically encountered in, for example, conference

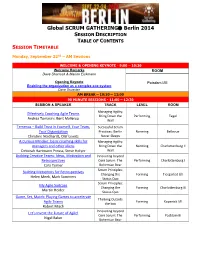

Global SCRUM GATHERING® Berlin 2014

Global SCRUM GATHERING Berlin 2014: SESSION DESCRIPTION, TABLE OF CONTENTS, SESSION TIMETABLE

Monday, September 22nd – AM Sessions

WELCOME & OPENING KEYNOTE - 9:00 – 10:30 (ROOM: Potsdam I/III)
Welcome Remarks: Dave Sharrock & Marion Eickmann
Opening Keynote: Enabling the organization as a complex eco-system, Dave Snowden

AM BREAK – 10:30 – 11:00

90 MINUTE SESSIONS - 11:00 – 12:30

| SESSION & SPEAKER | TRACK | LEVEL | ROOM |
| --- | --- | --- | --- |
| Effectively Coaching Agile Teams (Andrea Tomasini, Bent Myllerup) | Managing Agility: Bring Down the Wall | Performing | Tegel |
| Temenos – Build Trust in Yourself, Your Team, Your Organization (Christine Neidhardt, Olaf Lewitz) | Successful Scrum Practices: Berlin Never Sleeps | Norming | Bellevue |
| A Curious Mindset: basic coaching skills for managers and other aliens (Deborah Hartmann Preuss, Steve Holyer) | Managing Agility: Bring Down the Wall | Norming | Charlottenburg II |
| Building Creative Teams: Ideas, Motivation and Retrospectives (Cara Turner) | Innovating beyond Core Scrum: The Bohemian Bear | Performing | Charlottenburg I |
| Building Metaphors for Retrospectives (Helen Meek, Mark Summers) | Scrum Principles: Changing the Status Quo | Forming | Tiergarten I/II |
| My Agile Suitcase (Martin Heider) | Scrum Principles: Changing the Status Quo | Forming | Charlottenburg III |
| Game, Set, Match: Playing Games to accelerate Agile Teams (Robert Misch) | Thinking Outside the box | Forming | Kopenick I/II |
| Let's Invent the Future of Agile! (Nigel Baker) | Innovating beyond Core Scrum: The Bohemian Bear | Performing | Potsdam III |

Monday, September 22nd – PM Sessions
LUNCH – 12:30 – 13:30
90 MINUTE SESSIONS - 13:30 -

Classification of Chemicals

Classification of Chemicals: Flame & Detonation Arrester Specifications, PROTECTOSEAL®
The Protectoseal Company recommends that the National Electrical Code (NEC) Article 500 rankings of various chemicals be used, whenever possible, to determine the suitability of a detonation arrester for use with a particular chemical. When no NEC rating of the particular chemical is available, the International Electrotechnical Commission (IEC) classification (Groups IIA, IIB and IIC) is recommended as a secondary source of information for determining the suitability of an arrester for its intended service. In general, the IEC Group IIA is equivalent to the NEC Group D; the IEC Group IIB is equivalent to the NEC Group C; and the IEC Group IIC includes chemicals in the NEC Groups A and B. In the event of a discrepancy between the NEC and the IEC ratings, Protectoseal recommends that the NEC groups be referenced.
Butadiene would qualify as a Group D material. In each of these cases, the chemicals were primarily listed in a higher category (Group B), because of relatively high pressure readings noted in one phase of the standard test procedure conducted by Underwriters Laboratories. These pressures were of concern when categorizing the chemicals because these NEC groupings are also used as standard indicators for the design strength requirements of electrical boxes, apparatus, etc. that must withstand the pressures generated by an ignition within the container. It should be noted that, in each of these cases, the test pressures recorded were significantly lower than those commonly encountered when testing a detonation arrester for its ability to withstand stable and over-

Enabling DevOps on Premise or Cloud with Jenkins

Enabling DevOps on Premise or Cloud with Jenkins
Sam Rostam, Cloud & Enterprise Integration Consultant/Trainer, Certified SOA & Cloud Architect, Certified Big Data Professional, MSc @SFU & PhD Studies – Partial @UBC
Topics
• The Context - Digital Transformation
• An Agile IT Framework
• What DevOps bring to Teams? - Disrupting Software Development - Improved Quality, shorten cycles - highly responsive for the business needs
• What is CI/CD?
• Simple Scenario with Jenkins
• Advanced Jenkins: Plug-ins, APIs & Pipelines
• Toolchain concept
• Q/A
Digital Transformation – Modernization
As stated by a As established enterprises in all industries begin to evolve themselves into the successful Digital Organizations of the future they need to begin with the realization that the road to becoming a Digital Business goes through their IT functions. However, many of these incumbents are saddled with IT that has organizational structures, management models, operational processes, workforces and systems that were built to solve “turn of the century” problems of the past. Many analysts and industry experts have recognized the need for a new model to manage IT in their Businesses and have proposed approaches to understand and manage a hybrid IT environment that includes slower legacy applications and infrastructure in combination with today’s rapidly evolving Digital-first, mobile-first and analytics-enabled applications. http://www.ntti3.com/wp-content/uploads/Agile-IT-v1.3.pdf
Digital Transformation requires building an ecosystem
• Digital transformation is a strategic approach to IT that treats IT infrastructure and data as a potential product for customers.
• Digital transformation requires shifting perspectives by looking at new ways to use data and data sources and looking at new ways to engage with customers.

Integrating the GNU Debugger with Cycle Accurate Models: A Case Study Using a Verilator SystemC Model of the OpenRISC 1000

Integrating the GNU Debugger with Cycle Accurate Models: A Case Study using a Verilator SystemC Model of the OpenRISC 1000
Jeremy Bennett, Embecosm. Application Note 7. Issue 1. Published March 2009.
Legal Notice
This work is licensed under the Creative Commons Attribution 2.0 UK: England & Wales License. To view a copy of this license, visit http://creativecommons.org/licenses/by/2.0/uk/ or send a letter to Creative Commons, 171 Second Street, Suite 300, San Francisco, California, 94105, USA. This license means you are free:
• to copy, distribute, display, and perform the work
• to make derivative works
under the following conditions:
• Attribution. You must give the original author, Jeremy Bennett of Embecosm (www.embecosm.com), credit;
• For any reuse or distribution, you must make clear to others the license terms of this work;
• Any of these conditions can be waived if you get permission from the copyright holder, Embecosm; and
• Nothing in this license impairs or restricts the author's moral rights.
The software for the SystemC cycle accurate model written by Embecosm and used in this document is licensed under the GNU General Public License (GNU General Public License). For detailed licensing information see the file COPYING in the source code. Embecosm is the business name of Embecosm Limited, a private limited company registered in England and Wales. Registration number 6577021. Copyright © 2009 Embecosm Limited
Table of Contents
1. Introduction
1.1. Why Use Cycle Accurate Modeling
1.2. Target Audience
1.3. Open Source
1.4. Further Sources of Information
1.4.1.

PEATS Functional Specification

ApTest PowerPC EABI TEST SUITE (PEATS) Functional Specification, Version 1.0, April 8, 1996. Applied Testing and Technology, Inc.
Release 1.0 (April 8, 1996): Initial release.
Copyright 1996 Applied Testing and Technology Inc. All rights reserved. No part of this publication may be reproduced, stored in a retrieval system or transmitted, in any form or by any means, electronic, mechanical, photocopying, recording, or otherwise, without prior permission of the copyright holder. Applied Testing and Technology, Inc., 59 North Santa Cruz Avenue, Suite U, Los Gatos, CA 95030 USA. Voice: 408-399-1930. Fax: 408-399-1931.
PowerPC is a trademark of IBM. UNIX is a registered trademark in the United States and other countries, licensed exclusively through X/Open Company Limited. Windows is a trademark of Microsoft Corporation. X/Open is a trademark of X/Open Company Limited.
PEATS Programmer’s Guide, Version 1.0, Applied Testing and Technology, Inc.
CONTENTS
1 Overview
  Document objective
  Document scope
  Associated references
2 Architecture
  Overview of PEATS
  TCL test logic
  Analysis programs
  C test programs
  Installation and configuration tools and methods
  General framework for static testing of EABI files
  DejaGNU framework
  Organization of directories
  Use of trusted objects
  Supplemental testing using run-time validation
  Extensibility of PEATS
  Adding test programs
  Adding test variables
  Testing other tools
3 Testing Logic
  Framework
  Testing steps
  Testing choices
  Exception handling
4 Coverage
  What is tested
  What is not tested
  Methods used to obtain broad coverage
  Checks on coverage
5 User Interface
  Installation and configuration
  Building Test Suite components
  Editing configuration files
  Runtest command line
  Basic syntax

UNIX (Solaris/Linux) Quick Reference Card

UNIX (Solaris/Linux) QUICK REFERENCE CARD

Logging In
At the Login: prompt, enter your username. At the Password: prompt, enter your system password. Linux is case-sensitive, so enter upper and lower case letters as required for your username, password and commands.

Exiting or Logging Out
Enter logout and press <Enter> or type <Ctrl>-D.

Changing your Password
Type passwd at the command prompt. Type in your old password, then your new password, then re-enter your new password for verification. If the new password is verified, your password will be changed. Many systems age passwords; this forces users to change their passwords at predetermined intervals.

Changing your MS Network Password
Some servers maintain a second password exclusively for use with Microsoft Windows networking, allowing you to mount your home directory as a Network Drive. Type smbpasswd at the command prompt. Type in your old SMB password, then your new password, then re-enter your new password for verification.

Directory Commands

| Command | Description |
| --- | --- |
| ls | Lists files in current directory |
| ls -l | Long listing of files |
| ls -a | List all files, including hidden files |
| ls -lat | Long listing of all files sorted by last modification time |
| ls wcp | List all files matching the wildcard pattern |
| ls dn | List files in the directory dn |
| tree | List files in tree format |
| cd dn | Change current directory to dn |
| cd pub | Changes to subdirectory “pub” |
| cd .. | Changes to next higher level directory (previous directory) |
| cd / | Changes to the root directory |
| cd | Changes to the user's home directory |
| cd /usr/xx | Changes to the subdirectory “xx” in the directory “usr” |
| mkdir dn | Makes a new directory named dn |
| rmdir dn | Removes the directory dn (the |