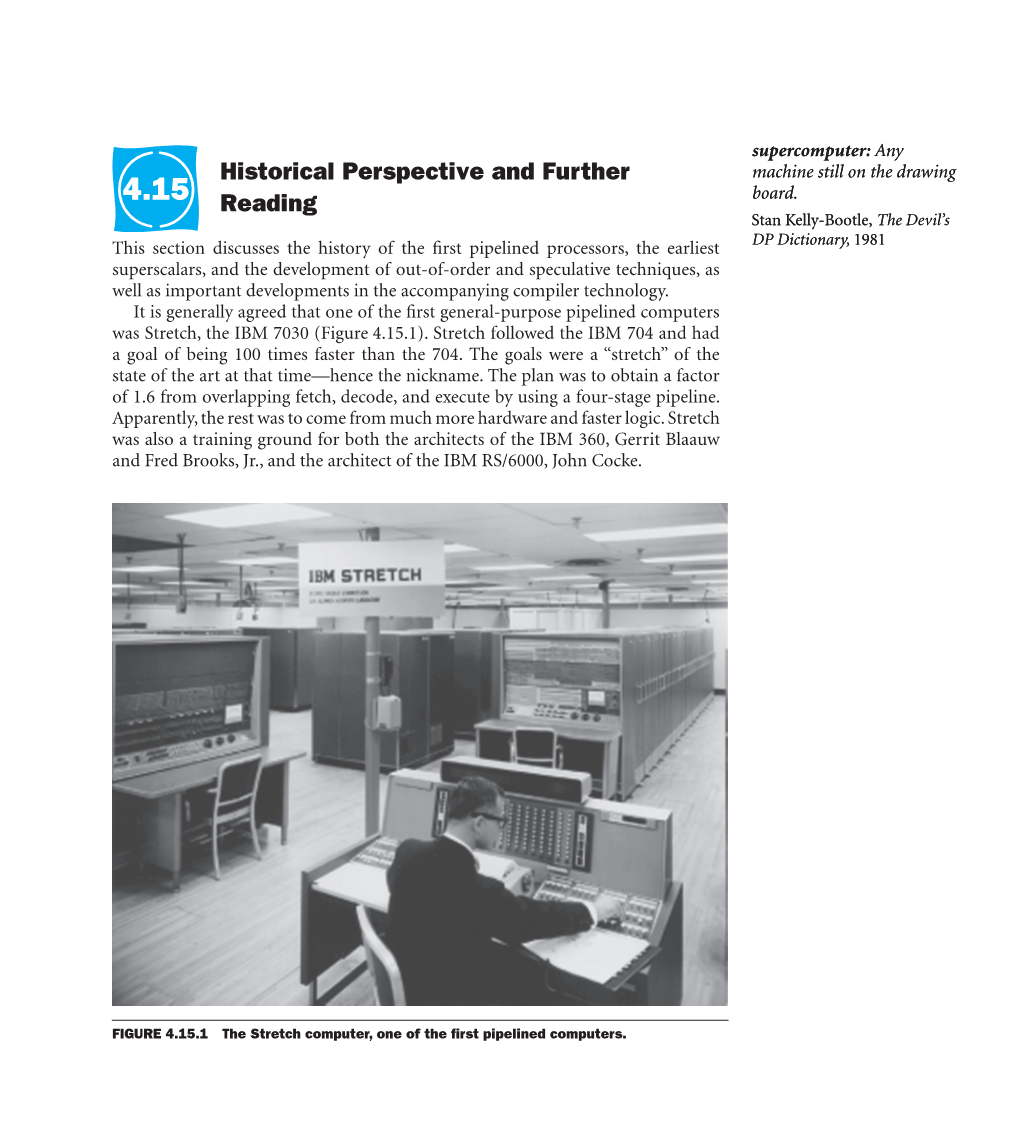

4.15 Historical Perspective and Further Reading

Recommended publications

Los Alamos Scientific Laboratory: VAX/VMS Benchmarking

LA-8902-MS, UC-32. Issued: July 1981.

VAX/VMS Benchmarking, by Larry Creel

Los Alamos Scientific Laboratory, Post Office Box 1663, Los Alamos, New Mexico 87545. An Affirmative Action/Equal Opportunity Employer. United States Department of Energy, Contract W-7405-ENG. 36.

DISCLAIMER: This report was prepared as an account of work sponsored by an agency of the United States Government. Neither the United States Government nor any agency thereof, nor any of their employees, makes any warranty, express or implied, or assumes any legal liability or responsibility for the accuracy, completeness, or usefulness of any information, apparatus, product, or process disclosed, or represents that its use would not infringe privately owned rights. Reference herein to any specific commercial product, process, or service by trade name, trademark, manufacturer, or otherwise, does not necessarily constitute or imply its endorsement, recommendation, or favoring by the United States Government or any agency thereof. The views and opinions of authors expressed herein do not necessarily state or reflect those of the United States Government or any agency thereof.

ABSTRACT: Primary emphasis in this report is on the performance of three Digital Equipment Corporation VAX-11/780 computers at the Los Alamos National Laboratory. Programs used in the study are part of the Laboratory's set of benchmark programs.

Final Report and Recommendation on New

CERN LIBRARIES, GENEVA. CERN/FC/661. Original: English. 5 December, 1963. CM-P00085196

ORGANISATION EUROPÉENNE POUR LA RECHERCHE NUCLÉAIRE, CERN, EUROPEAN ORGANIZATION FOR NUCLEAR RESEARCH

FINANCE COMMITTEE, Fifty-sixth Meeting, Geneva - 16 December, 1963

FINAL REPORT AND RECOMMENDATION ON NEW COMPUTERS FOR CERN

This paper contains the results of the enquiries and studies unfinished at the time of the meeting of the Finance Committee, on 13 November, together with the Director-General's conclusions and request to the Finance Committee to authorize CERN to purchase a CDC 6600 computer for delivery early in 1965.

1. Introduction

The Finance Committee, at its meeting on 13 November, discussed an interim report on CERN's computing needs (CERN/FC/653), which accompanied the Report of the European Committee on the Future Computing Needs of CERN, CERN/516 (hereinafter referred to as "the Report"). Since then, the final offers from manufacturers have been received and evaluated, and the outstanding technical studies referred to in the conclusion of the Interim Report (section 5) have been carried out. This paper contains:

- a short report on the results of these technical studies,
- the evaluation of the final offers, technically and financially,
- a proposal for financing the purchase of the new computer,
- the implications on the CERN budget and programme of a new computer,
- the conclusion, with a request that the Finance Committee authorize CERN to purchase a CDC 6600 computer and to raise a loan for the necessary amount,
- an annex containing prices and other material from the offers.

Lecture 14: Data Level Parallelism (2), EEC 171 Parallel Architectures, John Owens, UC Davis

Lecture 14: Data Level Parallelism (2). EEC 171 Parallel Architectures. John Owens, UC Davis.

Credits
• © John Owens / UC Davis 2007–9.
• Thanks to many sources for slide material: Computer Organization and Design (Patterson & Hennessy) © 2005, Computer Architecture (Hennessy & Patterson) © 2007, Inside the Machine (Jon Stokes) © 2007, © Dan Connors / University of Colorado 2007, © Kathy Yelick / UCB 2007, © Wen-Mei Hwu/David Kirk, University of Illinois 2007, © David Patterson / UCB 2003–7, © John Lazzaro / UCB 2006, © Mary Jane Irwin / Penn State 2005, © John Kubiatowicz / UCB 2002, © Krste Asanovic/Arvind / MIT 2002, © Morgan Kaufmann Publishers 1998.

Outline
• Vector machines (Cray 1)
• Vector complexities
• Massively parallel machines (Thinking Machines CM-2)
• Parallel algorithms

Vector Processing
• Appendix F & slides by Krste Asanovic, MIT

Supercomputers
• Definition of a supercomputer:
  • Fastest machine in world at given task
  • A device to turn a compute-bound problem into an I/O bound problem
  • Any machine costing $30M+
  • Any machine designed by Seymour Cray
• CDC 6600 (Cray, 1964) regarded as first supercomputer

Seymour Cray
• "Anyone can build a fast CPU. The trick is to build a fast system."
• When asked what kind of CAD tools he used for the Cray-1, Cray said that he liked "#3 pencils with quadrille pads". Cray recommended using the backs of the pages so that the lines were not so dominant.
• When he was told that Apple Computer had just bought a Cray to help design the next Apple Macintosh, Cray commented that he had just bought

CHAPTER 1 Introduction

CHAPTER 1 Introduction

1.1 Overview
1.2 The Main Components of a Computer
1.3 An Example System: Wading through the Jargon
1.4 Standards Organizations
1.5 Historical Development
1.5.1 Generation Zero: Mechanical Calculating Machines (1642–1945)
1.5.2 The First Generation: Vacuum Tube Computers (1945–1953)
1.5.3 The Second Generation: Transistorized Computers (1954–1965)
1.5.4 The Third Generation: Integrated Circuit Computers (1965–1980)
1.5.5 The Fourth Generation: VLSI Computers (1980–????)
1.5.6 Moore's Law
1.6 The Computer Level Hierarchy
1.7 The von Neumann Model
1.8 Non-von Neumann Models
Chapter Summary

CMPS375 Class Notes (Chap01), by Kuo-pao Yang

1.1 Overview

• Computer Organization
  o We must become familiar with how various circuits and components fit together to create a working computer system.
  o How does a computer work?
• Computer Architecture
  o It focuses on the structure and behavior of the computer and refers to the logical aspects of system implementation as seen by the programmer.
  o Computer architecture includes many elements such as instruction sets and formats, operation codes, data types, the number and types of registers, addressing modes, main memory access methods, and various I/O mechanisms.
  o How do I design a computer?
• The computer architecture for a given machine is the combination of its hardware components plus its instruction set architecture (ISA).
• The ISA is the agreed-upon interface between all the software that runs on the machine and the hardware that executes it.

A Look at Some Compilers: Materials from the Dragon Book and Wikipedia, Michael Wollowski

A Look at Some Compilers. Materials from the Dragon Book and Wikipedia. Michael Wollowski. 2/11/20.

EQN
o Takes inputs like "E sub 1" and produces commands for the text formatter TROFF to produce "E1".
o Treating EQN as a language and applying compiler technologies has several benefits:
  o Ease of implementation.
  o Language evolution, in response to user needs.

Pascal
Developed by Niklaus Wirth. Generated machine code for the CDC 6000 series machines. To increase portability, the Pascal-P compiler generates P-code for an abstract stack machine. One-pass recursive-descent compiler. Storage is organized into 4 areas:
◦ Code for procedures
◦ Constants
◦ Stack for activation records
◦ Heap for data allocated by the new operator.
Procedures may be nested; hence, the activation record for a procedure contains both access and control links.

CDC 6000 series
The first member of the CDC 6000 series was the supercomputer CDC 6600, designed by Seymour Cray and James E. Thornton. Introduced in September 1964, it performed up to three million instructions per second, three times faster than the IBM Stretch, the speed champion for the previous couple of years. It remained the fastest machine for five years until the CDC 7600 was launched. The machine was cooled with Freon refrigerant. Control Data manufactured about 100 machines of this type, selling for $6 to $10 million each.

CDC 6000 series (image): By Steve Jurvetson from Menlo Park, USA - Flickr, CC BY 2.0, https://commons.Wikimedia.org/w/index.php?curid=1114605

CDC 205 (images): CDC 205, DKRZ; CDC 205 wiring, davdata.nl

Pascal
One of the compiler writers states about the use of a one-pass compiler:
◦ Easy to implement
◦ Imposes severe restrictions on the quality of the generated code and suffers from relatively high storage requirements.

NASA's Supercomputing Experience

NASA Technical Memorandum 102890

NASA's Supercomputing Experience

F. Ron Bailey, Ames Research Center, Moffett Field, California. December 1990. National Aeronautics and Space Administration, Ames Research Center, Moffett Field, California 94035-1000.

NASA'S SUPERCOMPUTING EXPERIENCE. F. Ron Bailey, NASA Ames Research Center, Moffett Field, CA 94035, USA.

ABSTRACT

A brief overview of NASA's recent experience in supercomputing is presented from two perspectives: early systems development and advanced supercomputing applications. NASA's role in supercomputing systems development is illustrated by discussion of activities carried out by the Numerical Aerodynamic Simulation Program. Current capabilities in advanced technology applications are illustrated with examples in turbulence physics, aerodynamics, aerothermodynamics, chemistry, and structural mechanics. Capabilities in science applications are illustrated by examples in astrophysics and atmospheric modeling. The paper concludes with a brief comment on the future directions and NASA's new High Performance Computing Program.

1. Introduction

The National Aeronautics and Space Administration (NASA) has for many years been one of the pioneers in the development of supercomputing systems and in the application of supercomputers to solve challenging problems in science and engineering. Today supercomputing is an essential tool within the Agency. There are supercomputer installations at every NASA research and development center and their use has enabled NASA to develop new aerospace technologies and to make new scientific discoveries that could not have been accomplished without them.

Los Alamos Scientific Laboratory: Computer Benchmark Performance 1979

LA-8689-MS, UC-32. Issued: February 1981.

Los Alamos Scientific Laboratory Computer Benchmark Performance 1979, by Ann H. Hayes and Ingrid Y. Bucher

Permanent retention required by contract.

Los Alamos Scientific Laboratory, Post Office Box 1663, Los Alamos, New Mexico 87545. An Affirmative Action/Equal Opportunity Employer. Edited by Margery McCormick, Group C-2. United States Department of Energy, Contract W-7405-ENG. 36.

DISCLAIMER: This report was prepared as an account of work sponsored by an agency of the United States Government. Neither the United States Government nor any agency thereof, nor any of their employees, makes any warranty, express or implied, or assumes any legal liability or responsibility for the accuracy, completeness, or usefulness of any information, apparatus, product, or process disclosed, or represents that its use would not infringe privately owned rights. Reference herein to any specific commercial product, process, or service by trade name, trademark, manufacturer, or otherwise, does not necessarily constitute or imply its endorsement, recommendation, or favoring by the United States Government or any agency thereof. The views and opinions of authors expressed herein do not necessarily state or reflect those of the United States Government or any agency thereof.

ABSTRACT: Benchmarking of computers for performance evaluation and procurement purposes is an ongoing activity of the Research and Applications Group (Group C-3) at the Los Alamos Scientific Laboratory (LASL).

Software Development

Software Development, by D. Ball

As computers have become larger, faster and more complex, so have the programming systems necessary to exploit their capabilities. The development of these systems ('software') is estimated to cost the manufacturer as much as the construction of the machine ('hardware'). This increase in complexity and magnitude of the software for the latest machines has arisen because of:

a) the increase in the speed of the central processor (the time to add two floating point numbers on CERN's first [...]

Although, in general, the manufacturer supplies software, it will rarely fit the needs of a large specialized organization such as CERN exactly. Thus it is necessary to adapt and modify the systems locally. With the CDC 6600, the situation was aggravated because the machine acquired for CERN was one of the first to be produced, and it was delivered without its software. This made it necessary to accept an incomplete system and develop, in a crash programme, together with a local CDC support group, the present operating system which is called SIPROS.

[...] SCOPE. Since this would be used by other computers of the CDC 6000 series and since all future compilers, etc. would be written to work with SCOPE, the decision was taken to change over as soon as a suitable local version was available. The work on this conversion from one operating system to another has been carried out mainly on the CDC 6400 in recent months and it is planned to phase out SIPROS by the end of the year.

It is also the responsibility of the systems section to advise on trends in [...]

Supercomputers: the Amazing Race Gordon Bell November 2014

Supercomputers: The Amazing Race. Gordon Bell, November 2014. Technical Report MSR-TR-2015-2.

Gordon Bell, Researcher Emeritus, Microsoft Research, Microsoft Corporation, 555 California, 94104 San Francisco, CA. Version 1.0, January 2015. Submitted to STARS IEEE Global History Network.

Supercomputers: The Amazing Race Timeline (The top 20 significant events. Constrained for Draft IEEE STARS Article)

1. 1957 Fortran introduced for scientific and technical computing
2. 1960 Univac LARC, IBM Stretch, and Manchester Atlas finish 1956 race to build largest "conceivable" computers
3. 1964 Beginning of Cray Era with CDC 6600 (.48 MFlops) functional parallel units. "No more small computers" - S R Cray. "First super" - G. A. Michael
4. 1964 IBM System/360 announcement. One architecture for commercial & technical use.
5. 1965 Amdahl's Law defines the difficulty of increasing parallel processing performance based on the fraction of a program that has to be run sequentially.
6. 1976 Cray 1 Vector Processor (26 MF). Vector data. Sid Karin: "1st Super was the Cray 1"
7. 1982 Caltech Cosmic Cube (4 node, 64 node in 1983). Cray 1 cost performance x 50.
8. 1983-93 Billion dollar SCI--Strategic Computing Initiative of DARPA IPTO response to Japanese Fifth Gen. 1990 redirected to supercomputing after failure to achieve AI goals
9. 1982 Cray XMP (1 GF) Cray shared memory vector multiprocessor
10. 1984 NSF establishes Office of Scientific Computing in response to scientists' demand and to counteract the use of VAXen as personal supercomputers
11. 1987 nCUBE (1K computers) achieves 400-600 speedup, Sandia winning first Bell Prize, stimulated Gustafson's Law of Scalable Speed-Up, Amdahl's Law Corollary

CDC 6600: Design of a Computer

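CENTRAL PROCESSOR INSTRUCTION EXECUTION TIMES (Times Listed in Minor Cycles)

| Unit | Opcode | Instruction | Time | Note |
|---|---|---|---|---|
| Branch | 00 | STOP | - | |
| Branch | 01 | RETURN JUMP to K | 14 | |
| Branch | 02 | GO TO K + Bi | 14 | Note 1 |
| Branch | 030 | GO TO K if Xj = zero | 9* | Note 2 |
| Branch | 031 | GO TO K if Xj ≠ zero | 9* | Note 2 |
| Branch | 032 | GO TO K if Xj = positive | 9* | Note 2 |
| Branch | 033 | GO TO K if Xj = negative | 9* | Note 2 |
| Branch | 034 | GO TO K if Xj is in range | 9* | Note 2 |
| Branch | 035 | GO TO K if Xj is out of range | 9* | Note 2 |
| Branch | 036 | GO TO K if Xj is definite | 9* | Note 2 |
| Branch | 037 | GO TO K if Xj is indefinite | 9* | Note 2 |
| Branch | 04 | GO TO K if Bi = Bj | 8* | Note 1 |
| Branch | 05 | GO TO K if Bi ≠ Bj | 8* | Note 1 |
| Branch | 06 | GO TO K if Bi ≥ Bj | 8* | Note 1 |
| Branch | 07 | GO TO K if Bi < Bj | 8* | Note 1 |
| | 36 | INTEGER SUM of Xj and Xk to Xi | 3 | |
| | 37 | INTEGER DIFFERENCE of Xj and Xk to Xi | 3 | |
| | 40 | FLOATING PRODUCT of Xj and Xk to Xi | 10 | |
| | 41 | ROUND FLOATING PRODUCT of Xj and Xk to Xi | 10 | |
| | 42 | FLOATING DP PRODUCT of Xj and Xk to Xi | 10 | |
| | 44 | FLOATING DIVIDE Xj by Xk to Xi | 29 | |
| | 45 | ROUND FLOATING DIVIDE Xj by Xk to Xi | 29 | |
| | 46 | PASS | - | |
| | 47 | SUM of 1's in Xk to Xi | 8 | |
| | 10 | TRANSMIT Xj to Xi | 3 | |
| | 11 | LOGICAL PRODUCT of Xj and Xk to Xi | 3 | |
| | 12 | LOGICAL SUM of Xj and Xk to Xi | 3 | |
| | 13 | LOGICAL DIFFERENCE of Xj and Xk to Xi | 3 | |
| | 14 | TRANSMIT Xk COMP to Xi | 3 | |
| | 15 | LOGICAL PRODUCT of Xj and Xk COMP to Xi | 3 | |
| | 16 | LOGICAL SUM of Xj and Xk COMP to Xi | 3 | |
| | 17 | LOGICAL DIFFERENCE of Xj and Xk COMP to Xi | 3 | |
| Increment | 50 | SUM of Aj and K to Ai | 3 | |
| Increment | 51 | SUM of Bj and K to Ai | 3 | |
| Increment | 52 | SUM of Xj and K to Ai | 3 | |
| Increment | 53 | SUM of Xj and Bk to Ai | 3 | |
| Increment | 54 | SUM of Aj and Bk to Ai | 3 | |
| Increment | 55 | DIFFERENCE of Aj and Bk to Ai | 3 | |
| Increment | 56 | SUM of Bj and Bk to Ai | 3 | |
| Increment | 57 | DIFFERENCE of Bj and Bk to Ai | 3 | |
| Increment | 60 | SUM of Aj and K to Bi | 3 | |
| Increment | 61 | SUM of Bj and K to Bi | 3 | |
| Increment | 62 | SUM of Xj and K to Bi | 3 | |
| Increment | 63 | SUM of Xj and Bk to Bi | 3 | |
| Increment | 64 | SUM of Aj and Bk to Bi | 3 | |
| Increment | 65 | DIFFERENCE [...] | | |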

Carolyn Connor, Jeff Johnson, Gary Grider, Fredie Marshall

Nicholas Lewis, University of Minnesota. Mentors: Carolyn Connor, Jeff Johnson, Gary Grider, Fredie Marshall

Abstract

It is widely known that the very first Cray-1 underwent evaluation at Los Alamos in 1976, but few realize that Seymour Cray's iconic supercomputer almost went elsewhere. If not for Livermore's involvement with developing and failing to detect a fatal flaw with a less-well-known supercomputer from the Control Data Corporation (CDC), the Cray-1 might have been installed for evaluation at Livermore instead.

A Tale of Two Companies

In the early 1970s, CDC had two supercomputer projects underway, the STAR-100, and the 8600, Seymour Cray's follow-up to the 7600. After the 8600 encountered severe setbacks, CDC canceled the project. In response, Cray left to form his own company, Cray Research, Inc. (CRI), in 1972.

The Fatal Flaw

In late 1973, Los Alamos ran tests on the nearly completed STAR, revealing that its architecture was heavily biased toward long vectors, making the computer slow on anything but large vector datasets. Livermore's example codes, used to design the STAR's architecture, were not representative of either lab's workload, and were too easy a test to reveal the STAR's fatal flaw.

The Scalar Bottleneck

Supercomputer performance was still increasing rapidly in the mid-1960s, exemplified by the CDC 6600, but experts like Sidney Fernbach, leader of Livermore's Computational Division, worried that a slowing of component improvements in conventional scalar architectures would form a performance bottleneck in the future. As a result, Livermore and the Atomic Energy Commission (AEC) contracted with CDC to produce the [...]

Figure captions: The CDC STAR-100 was the first commercially available "vector" supercomputer. The Cray-1 at Los Alamos, [...]

COMPUTERS on NASTRAN James L. Rogers, Jr. NASA Langley

THE IMPACT OF "FOURTH GENERATION" COMPUTERS ON NASTRAN

James L. Rogers, Jr., NASA Langley Research Center

INTRODUCTION

The NASTRAN computer program (ref. 1) is currently capable of executing on three different "third generation" computers, the CDC 6000 series, the IBM 360/370 series, and the UNIVAC 1100 series. In the past, NASTRAN has proved to be adaptable to the new hardware and software developments for these computers. The NASTRAN Systems Management Office (NSMO), as part of NASA's research effort to identify desirable formats for future large general-purpose programs, funded studies on the impact of the STAR-100 (ref. 2) and ILLIAC IV (ref. 3) computers on NASTRAN. The STAR-100 and ILLIAC IV are referred to as "fourth generation" or "4G" computers in this paper. "Fourth generation" is in quotes because the differences between generations of computers are not easily definable.

Many new improvements have been made to NASTRAN as it has evolved through the years. With each new release, there have been improved capabilities, efficiency improvements, and error corrections. The purpose of this paper is to shed light on the desired characteristics of future large programs, like NASTRAN, if designed for execution on "4G" machines. Concentration will be placed on the following two areas:

1. Conversion to these new machines
2. Maintenance on these machines

The advantages of operating NASTRAN on a "4G" computer are also discussed.

BACKGROUND

Figure 1 shows an example of the system changes NSMO has dealt with in the past and of some changes presently being contended with. Minor changes had to be made to Level 15 of NASTRAN when IBM released their 3330 disk packs.