"So the Colors Cover the Wires": Interface, Aesthetics, and Usability 523 Matthew G


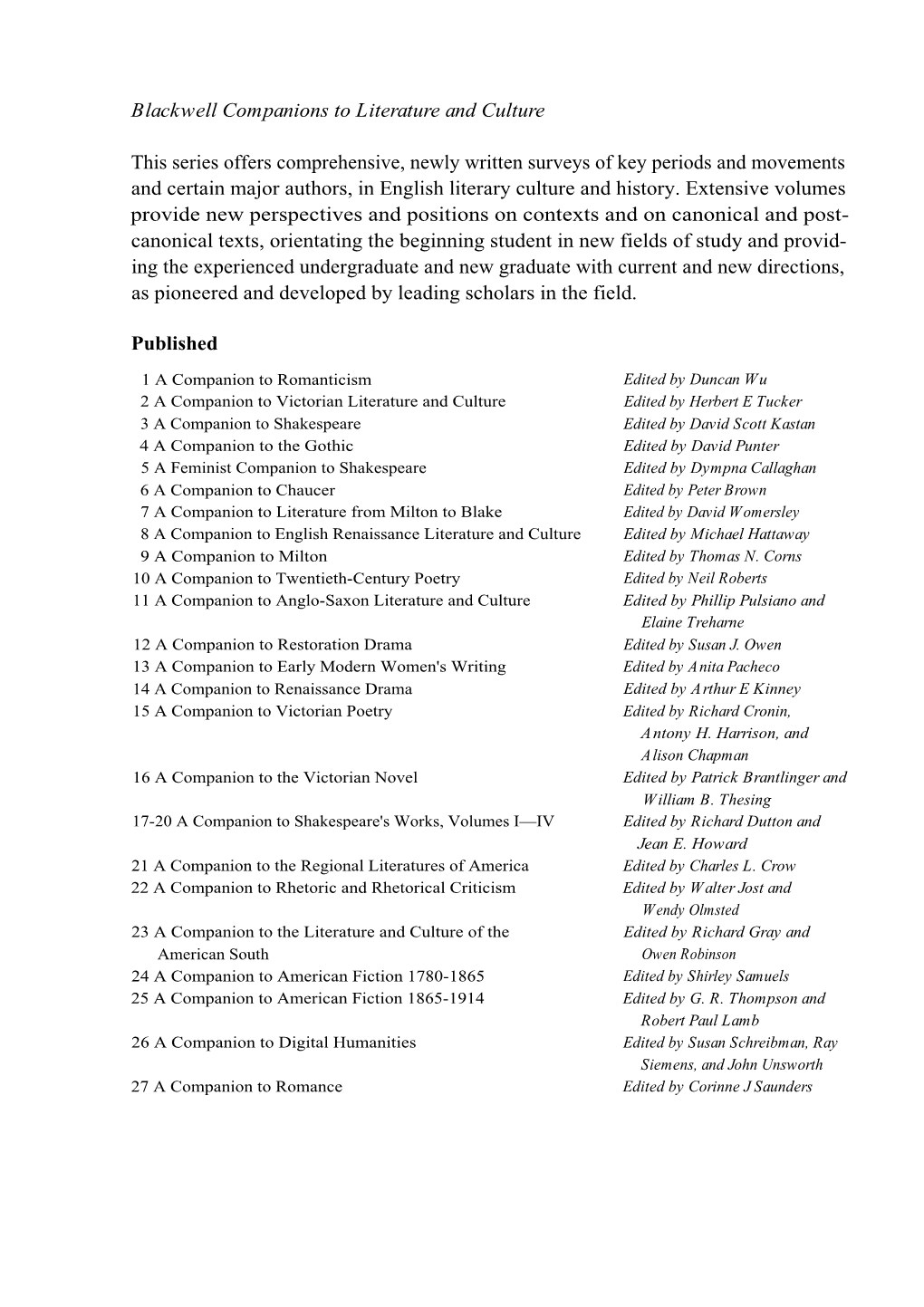

Recommended publications

Extensible Markup Language (XML) 1.0 (Fifth Edition) W3C Recommendation 26 November 2008

W3C Recommendation, 26 November 2008.

This version: http://www.w3.org/TR/2008/REC-xml-20081126/ Latest version: http://www.w3.org/TR/xml/ Previous versions: http://www.w3.org/TR/2008/PER-xml-20080205/ and http://www.w3.org/TR/2006/REC-xml-20060816/

Editors: Tim Bray, Textuality and Netscape; Jean Paoli, Microsoft; C. M. Sperberg-McQueen, W3C; Eve Maler, Sun Microsystems, Inc.; François Yergeau.

Please refer to the errata for this document, which may include some normative corrections. The previous errata for this document are also available. See also translations. This document is also available in these non-normative formats: XML and XHTML with color-coded revision indicators. Copyright © 2008 W3C® (MIT, ERCIM, Keio), All Rights Reserved. W3C liability, trademark and document use rules apply.

Abstract: The Extensible Markup Language (XML) is a subset of SGML that is completely described in this document. Its goal is to enable generic SGML to be served, received, and processed on the Web in the way that is now possible with HTML. XML has been designed for ease of implementation and for interoperability with both SGML and HTML.

Status of this Document: This section describes the status of this document at the time of its publication. Other documents may supersede this document. A list of current W3C publications and the latest revision of this technical report can be found in the W3C technical reports index at http://www.w3.org/TR/.

The Geography of Uzbekistan by Zaripova Zarina

Contents: Introduction; Chapter I. Review of the literature on the problems of Distance Education and Programmed Teaching: 1.1 Distance education and technologies used in delivery; 1.2 E-book in Education Process; 1.3 Principles of compiling an E-book on the topic “The Geography of Uzbekistan”; Conclusion; Chapter II. Materials for compiling E-book on the topic “Geography of Uzbekistan”; Bibliography.

Introduction

“Today there is no need to prove that the 21st century is commonly acknowledged to be the century of globalization and vanishing borders, the century of information and communication technologies and the Internet, the century of ever growing competition worldwide and in the global market. In these conditions a state can consider itself viable if it always has among its main priorities the growth of investments and inputs into human capital, upbringing of an educated and intellectually advanced generation which in the modern world is the most important value and decisive power in achieving the goals of democratic development, modernization and renewal.” I.A.Karimov

With the development of computers and advanced technology, human social activities are changing fundamentally. Education, and especially the education reforms under way in different countries, has benefited greatly from computers and advanced technology. Generally speaking, education is a field that needs more information, while computers, advanced technology and the Internet are good information providers. Also, with the aid of computers and advanced technology, people can make education an effective combination.

Julianne Nyhan Andrew Flinn Towards an Oral History of Digital

Springer Series on Cultural Computing. Julianne Nyhan, Andrew Flinn: Computation and the Humanities: Towards an Oral History of Digital Humanities.

Editor-in-Chief: Ernest Edmonds, University of Technology, Sydney, Australia. Editorial Board: Frieder Nake, University of Bremen, Bremen, Germany; Nick Bryan-Kinns, Queen Mary University of London, London, UK; Linda Candy, University of Technology, Sydney, Australia; David England, Liverpool John Moores University, Liverpool, UK; Andrew Hugill, De Montfort University, Leicester, UK; Shigeki Amitani, Adobe Systems Inc., Tokyo, Japan; Doug Riecken, Columbia University, New York, USA; Jonas Lowgren, Linköping University, Norrköping, Sweden. More information about this series at http://www.springer.com/series/10481

Julianne Nyhan and Andrew Flinn, Department of Information Studies, University College London (UCL), London, UK.

ISSN 2195-9056, ISSN 2195-9064 (electronic). ISBN 978-3-319-20169-6, ISBN 978-3-319-20170-2 (eBook). DOI 10.1007/978-3-319-20170-2. Library of Congress Control Number: 2016942045. © The Editor(s) (if applicable) and the Author(s) 2016. This book is published open access.

Open Access: This book is distributed under the terms of the Creative Commons Attribution-Noncommercial 2.5 License (http://creativecommons.org/licenses/by-nc/2.5/) which permits any noncommercial use, distribution, and reproduction in any medium, provided the original author(s) and source are credited. The images or other third party material in this book are included in the work’s Creative Commons license, unless indicated otherwise in the credit line; if such material is not included in the work’s Creative Commons license and the respective action is not permitted by statutory regulation, users will need to obtain permission from the license holder to duplicate, adapt or reproduce the material.

Seybold Reports.com | IP Vol 3 Index

The following is an index of The Seybold Report on Internet Publishing Volume 3; all of the content is available online. To get the story you want, try the following:

- Browse the more human-readable back issue list for this volume.
- Use our search engine with options that let you sort by relevance and publication date.
- Look over the main Internet Publishing page to find out what IP is all about and what is in the current issue.

Note: Some articles on our site require some type of subscription access. However, we do offer low-cost options such as a Monthly Pass.

Seybold Report on Internet Publishing, Index to Volume 3, September 1998 - August 1999

Notes About This Index: This is a complete index of Volume 3 of The Seybold Report on Internet Publishing, including all 12 issues of the journal. The index is alphabetized letter by letter and includes proper names as well as subjects or topics. Numbers are alphabetized as if they were spelled out, except in the case of vendors that have a whole series of numbered products, where the numbers are sorted numerically.

The Birth of XML

http://java.sun.com/xml/birth_of_xml.html, Sep 16, 2007. Article by Jon Bosak, Solaris Global Engineering and Information Services.

XML arose from the recognition that key components of the original web infrastructure -- HTML tagging, simple hypertext linking, and hardcoded presentation -- would not scale up to meet the future needs of the web. This awareness started with people like me who were involved in industrial-strength electronic publishing before the web came into existence. I learned the shape of the future by supervising the transition of Novell's NetWare documentation from print to online delivery. This transition, which took from 1990 through 1994 to implement and perfect, was based on SGML. The decision to use SGML paid off in 1995 when I was able single-handedly to put 150,000 pages of Novell technical manuals on the web. This is the kind of thing that an SGML-based system will let you do. A more advanced and heavily customized version of the same system, built on technology from Inso Corporation, is used today for Solaris documentation under the name AnswerBook2. You can see it running at http://docs.sun.com, which looks like an HTML web site but in fact contains no HTML; all of the HTML is generated the moment it's needed from an SGML database. (You can get XML from this site if you know how -- but that's another story.) Like many of my colleagues in industry, I had learned the hard way that nothing substantially less powerful than SGML was going to work over the long run.

Parsing and Querying XML Documents in SML

Dissertation submitted for the academic degree of Doctor of Natural Sciences at Department IV (Fachbereich IV) of the Universität Trier by Diplom-Informatiker Andreas Neumann, December 1999.

"The forest walks are wide and spacious; And many unfrequented plots there are." The Moor Aaron, in The Tragedy of Titus Andronicus by William Shakespeare. (Footnote: Found with fxgrep using the pattern ==SPEECH[ (==”forest”) ] in titus.xml [Bos99].)

Acknowledgements

At this point I would like to thank everyone who supported me, directly or indirectly, in writing this thesis. First and foremost, my thanks go to my advisor, Helmut Seidl. In numerous discussions he always had the time and patience to engage with my ideas about tree automata. His extensive background knowledge and his constructive criticism contributed substantially to the success of this work. Special thanks go to Steven Bashford and Craig Smith for their painstaking and attentive proofreading of the thesis. They uncovered many an inconsistency and found quite a few all too “German” formulations in my English. I would also like to commend all members of the Chair of Programming Languages and Compiler Construction at the Universität Trier for the good working atmosphere, which is certainly not a given at every chair. Last but not least, I thank my girlfriend Dagmar, who in recent months showed great patience and understanding for my frequent absence, and not only the physical kind. Her support and encouragement, as well as her ever-open ear, were invaluable.

Abstract

The Extensible Markup Language (XML) is a language for storing and exchanging structured data in a sequential format.

“Electronic Books and the Open eBook Publication Structure”; Allen H

“Electronic Books and the Open eBook Publication Structure”; Allen H. Renear and Dorothea Salo. Chapter 11 in The Columbia Guide to Digital Publishing, William Kasdorf (ed.), Columbia University Press, 2003. Final MS.

Allen Renear, University of Illinois, Urbana-Champaign; Dorothea Salo, University of Wisconsin, Madison.

Electronic books, or e-books, will soon be a major part of electronic publishing. This chapter introduces the notion of electronic books, reviewing their history, the advantages they promise, and the difficulties in predicting the pace and nature of e-book development and adoption. It then analyzes some of the critical problems facing both individual publishers and the industry as a whole, drawing on our current understanding of fundamental principles and best practice in information processing and publishing. In the context of this analysis the Open eBook Forum Publication Structure, a widely used XML-based content format, is presented as a foundation for high-performance electronic publishing.

Introduction

Reading is becoming increasingly electronic, and electronic books, or e-books, are a big part of this trend. The recent skepticism of trade and popular journalists notwithstanding, the evidence shows that electronic books are making steady inroads with the reading public, that the technological and commercial obstacles to adoption are rapidly diminishing, and that the much-touted advantages of electronic books are in fact every bit as significant as claimed. While opinions certainly vary widely on the exact schedule, most publishers believe that it is only a matter of time before e-books are a commercially important part of the publishing industry.

Contents Introduction

Contents: Introduction; Chapter I: Theoretical basis of compiling E-books using the material on “Stay in touch”: 1.1. An electronic book is a book-length publication in digital form; 1.2. Timeline of the E-books; 1.3. Advantages and disadvantages of the E-books; 1.4. E-books make publishing more accessible; 1.5. Conclusion; Chapter II: Presentation material for the E-manual on the topic “Theatres, Museums, and Art Galleries in Uzbekistan”; The list of the books and E-resources used.

Introduction

The development of science as a whole, and of linguistic science in particular, is connected not only to the solution of topical scientific problems but also to the features of the state's internal and foreign policy and to the content of the state educational standards, which act as generators of progress for a socio-economic society. This shapes a society able to adapt quickly to the modern world. It is now clearly seen in the economic, socio-political and cultural life of the Republic of Uzbekistan today, when we are celebrating the 21st anniversary of the National Independence of our Fatherland, Uzbekistan. Under the conditions of reform of the entire education system, the question of assisting in every way the improvement of the quality of the scientific-theoretical aspect of the educational process is especially topical. President I.A.Karimov declared in the programme speech "Harmonic development of generation a basis progress of Uzbekistan": "... all of us realize that: achievement of the great purposes put today before us, noble aspiration necessary for updating a society".

Extensible Markup Language (XML) 1.0 (Second Edition)

W3C Working Draft, 14 August 2000.

This version: http://www.w3.org/TR/2000/WD-xml-2e-20000814 (HTML, XML, review version with color-coded revision indicators) Latest version: http://www.w3.org/TR/REC-xml Previous versions: http://www.w3.org/TR/1998/REC-xml-19980210

Editors: Tim Bray, Textuality and Netscape; Jean Paoli, Microsoft; C. M. Sperberg-McQueen, University of Illinois at Chicago and Text Encoding Initiative; Eve Maler, Sun Microsystems, Inc. (Second Edition).

Copyright © 2000 W3C® (MIT, INRIA, Keio), All Rights Reserved. W3C liability, trademark, document use, and software licensing rules apply.

Abstract: The Extensible Markup Language (XML) is a subset of SGML that is completely described in this document. Its goal is to enable generic SGML to be served, received, and processed on the Web in the way that is now possible with HTML. XML has been designed for ease of implementation and for interoperability with both SGML and HTML.

Status of this Document: This document is a version of the XML 1.0 specification incorporating the errata changes as of Aug 9 2000. It is released by the XML Core Working Group as a W3C Working Draft to gather public feedback before its final release as the XML 1.0 second edition W3C Recommendation. This document should not be used as reference material or cited as a normative reference from another document. The review period for this Working Draft is 4 weeks ending Sep 11 2000.

Using XML to Build the Annotated XML Specification

XML.COM - Building the Annotated XML Specification. Using XML to Build the Annotated XML Specification, by Tim Bray, Sept. 12, 1998.

The design of XML 1.0 stretched over 20 months ending in February 1998, with input from a couple of hundred of the world's best experts in the area of markup, publishing, and Web design. The result of that work, the XML 1.0 Specification, is a highly condensed document that contains little or no information about how it came to read the way it does. Even before the release of XML 1.0, it became obvious that some parts of the spec were self-explanatory, while others were causing headaches for its users.

The Annotated XML Specification addresses both of these problems. It supplements the basic specification, first with historical background and explanation of how things came to be the way they are, and second with detailed explanations of the portions of the spec that have proved difficult. Commercially, it has been a success; in its first month on the Web, it had over 100,000 page views from over 26,000 unique Internet addresses. It remains, by a substantial margin, the most popular item available at the XML.com site.

The Evolution, Current Status, and Future Direction of XML

Rochester Institute of Technology, RIT Scholar Works, Theses, 2004. The Evolution, current status, and future direction of XML, Christina Ann Oak. Follow this and additional works at: https://scholarworks.rit.edu/theses

Recommended Citation: Oak, Christina Ann, "The Evolution, current status, and future direction of XML" (2004). Thesis. Rochester Institute of Technology. Accessed from

This Thesis is brought to you for free and open access by RIT Scholar Works. It has been accepted for inclusion in Theses by an authorized administrator of RIT Scholar Works.

The Evolution, Current Status, and Future Direction of XML. By Christina Ann Oak. Thesis submitted in partial fulfillment of the requirements for the degree of Master of Science in Information Technology. Rochester Institute of Technology, B. Thomas Golisano College of Computing and Information Sciences, June 21, 2004.

Thesis Approval Form. Student Name: Christina Ann Oak. Project Title: The Evolution, Current Status, and Future Direction of XML. Thesis Committee (Name, Signature, Date): William J. Stratton, Chair; Name Illegible, Committee Member; Tona Henderson lona Henderson, Committee Member.

Thesis Reproduction Permission Form. I, Christina Ann Oak, hereby grant permission to the Wallace Library of the Rochester Institute of Technology to reproduce my thesis in whole or in part.

The Semantic Web: May 2001

Scientific American: Feature Article: The Semantic Web: May 2001 (http://www.scientificamerican.com/2001/0501issue/0501berners-lee.html)

The Semantic Web: A new form of Web content that is meaningful to computers will unleash a revolution of new possibilities, by TIM BERNERS-LEE, JAMES HENDLER and ORA LASSILA

The entertainment system was belting out the Beatles' "We Can Work It Out" when the phone rang. When Pete answered, his phone turned the sound down by sending a message to all the other local devices that had a volume control. His sister, Lucy, was on the line from the doctor's office: "Mom needs to see a specialist and then has to have a series of physical therapy sessions. Biweekly or something. I'm going to have my agent set up the appointments." Pete immediately agreed to share the chauffeuring.

At the doctor's office, Lucy instructed her Semantic Web agent through her handheld Web browser. The agent promptly retrieved information about Mom's prescribed treatment from the doctor's agent, looked up several lists of providers, and checked for the ones in-plan for Mom's insurance within a 20-mile radius of her home and with a rating of excellent or very good on trusted rating services. It then began trying to find a match between available appointment times (supplied by the agents of individual providers through their Web sites) and Pete's and Lucy's busy schedules. (The emphasized keywords indicate terms whose semantics, or meaning, were defined for the agent through the Semantic Web.)

In a few minutes the agent presented them with a plan.