Hadoop Trainer Nosql Fan Boy

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


An Enterprise Knowledge Network

Fogbeam Labs: Cut Through The Information Fog. http://www.fogbeam.com

An Enterprise Knowledge Network. Knowledge exists in many forms inside your organization – ranging from tacit knowledge which exists only in the minds of the users who possess it, to codified knowledge stored in databases and document repositories. Unfortunately, while knowledge exists throughout the organization, it is often not easy (if even possible) to locate, use, share, and reuse existing knowledge. This results in a situation often described as “the left hand doesn't know what the right hand is doing” and damages morale as employees spend their days frustrated and complaining that “nobody knows what is going on around here”. The obstacles that hinder access to existing knowledge can be cultural, geographical, social, and/or technological. And while no technological solution can guarantee perfect knowledge-sharing, tools drawn from big data, data mining / machine learning, deep learning, and artificial intelligence techniques can improve an organization's power to generate, capture, use, share and reuse knowledge. Using technologies developed as part of the semantic web initiative, and applying the principles of linked data within the enterprise, the Fogbeam Labs Enterprise Knowledge Network approach can help your firm integrate and aggregate knowledge which is spread across your existing enterprise applications, content repositories and Intranet. An Enterprise Knowledge Network strengthens your firm's ability to: • engage in high levels of knowledge transfer and -

Horn: a System for Parallel Training and Regularizing of Large-Scale Neural Networks

Horn: A System for Parallel Training and Regularizing of Large-Scale Neural Networks. Edward J. Yoon [email protected]

I Am
- Edward J. Yoon
- Member and Vice President of Apache Software Foundation
- Committer, PMC, Mentor of Apache Hama, Apache Bigtop, Apache Rya, Apache Horn, Apache MRQL
- Keywords: big data, cloud, machine learning, database

What is Apache Software Foundation? The Apache Software Foundation is a non-profit foundation that is dedicated to open source software development. 1) What Apache Software Foundation is, 2) Which projects are being developed, 3) What’s HORN? 4) and How to contribute to them.

Apache HTTP Server (NCSA HTTPd) powers more than 500 million websites (there are 644 million websites on the Internet). And now: 161 Top Level Projects, 108 SubProjects, 39 Incubating Podlings, 4700+ Committers, 550 ASF Members, and an unknown number of developers and users. Domain Diversity. Programming Language Diversity. Which projects are being developed?

What’s HORN?
- Oct 2015, accepted as Apache Incubator Project
- Was born from Apache Hama
- A System for Deep Neural Networks: a neuron-level abstraction framework; written in Java :/ ; works on distributed environments

Apache Hama
1. K-means clustering: Hama is 1,000x faster than Apache Mahout (UT Arlington & Oracle, 2013)
2. PageRank on 10 Billion edges Graph: Hama is 3x faster than Facebook’s Giraph (Samsung Electronics, Yoon & Kim, 2015)
3. Top-k Set Similarity Joins on Flickr: Hama is clearly faster than Apache Spark (IEEE 2015, University of Melbourne)

Why we do this?
1. How to parallelize the training of large models?
2. How to avoid overfitting due to large size of the network, even with large datasets?

JonathanNet. Distributed Training (figure: parameter servers with parameter swapping; tasks 2 to 5 arranged in groups; "Each group performs ..") -

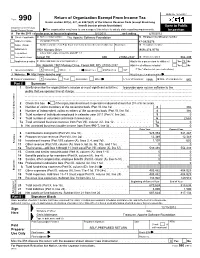

Return of Organization Exempt from Income

Form 990, Return of Organization Exempt From Income Tax. OMB No. 1545-0047. Under section 501(c), 527, or 4947(a)(1) of the Internal Revenue Code (except black lung benefit trust or private foundation). Open to Public Inspection. Department of the Treasury, Internal Revenue Service. The organization may have to use a copy of this return to satisfy state reporting requirements.

- A For the 2011 calendar year, or tax year beginning 5/1/2011, and ending 4/30/2012
- B Check if applicable: Address change, Name change, Initial return, Terminated, Amended return, Application pending
- C Name of organization: The Apache Software Foundation. Doing Business As: (blank). Number and street (or P.O. box if mail is not delivered to street address): 1901 Munsey Drive. City or town, state or country, and ZIP + 4: Forest Hill MD 21050-2747
- D Employer identification number: 47-0825376
- E Telephone number: (909) 374-9776
- F Name and address of principal officer: Jim Jagielski, 1901 Munsey Drive, Forest Hill, MD 21050-2747
- G Gross receipts: $554,439
- H(a) Is this a group return for affiliates? Yes / X No
- H(b) Are all affiliates included? Yes / No. If "No," attach a list. (see instructions)
- H(c) Group exemption number: (blank)
- I Tax-exempt status: X 501(c)(3); 501(c) ( ) (insert no.); 4947(a)(1) or 527
- J Website: http://www.apache.org/
- K Form of organization: X Corporation; Trust; Association; Other
- L Year of formation: 1999
- M State of legal domicile: MD

Part I Summary. 1 Briefly describe the organization's mission or most significant activities: to provide open source software to the public that we sponsor free of charge. 2 Check this box if the organization discontinued its operations or disposed of more than 25% of its net assets. -

Projects – Other Than Hadoop! Created By:-Samarjit Mahapatra [email protected]

Projects – other than Hadoop! Created By:-Samarjit Mahapatra [email protected]

Mostly compatible with Hadoop/HDFS:

- Apache Drill - provides low latency ad-hoc queries to many different data sources, including nested data. Inspired by Google's Dremel, Drill is designed to scale to 10,000 servers and query petabytes of data in seconds.
- Apache Hama - is a pure BSP (Bulk Synchronous Parallel) computing framework on top of HDFS for massive scientific computations such as matrix, graph and network algorithms.
- Akka - a toolkit and runtime for building highly concurrent, distributed, and fault tolerant event-driven applications on the JVM.
- ML-Hadoop - Hadoop implementation of machine learning algorithms.
- Shark - is a large-scale data warehouse system for Spark designed to be compatible with Apache Hive. It can execute Hive QL queries up to 100 times faster than Hive without any modification to the existing data or queries. Shark supports Hive's query language, metastore, serialization formats, and user-defined functions, providing seamless integration with existing Hive deployments and a familiar, more powerful option for new ones.
- Apache Crunch - a Java library that provides a framework for writing, testing, and running MapReduce pipelines. Its goal is to make pipelines that are composed of many user-defined functions simple to write, easy to test, and efficient to run.
- Azkaban - batch workflow job scheduler created at LinkedIn to run their Hadoop jobs.
- Apache Mesos - is a cluster manager that provides efficient resource isolation and sharing across distributed applications, or frameworks. It can run Hadoop, MPI, Hypertable, Spark, and other applications on a dynamically shared pool of nodes. -

Optimizing Resource Utilization in Distributed Computing Systems For

DOCTORAL THESIS OF THE UNIVERSITÉ BOURGOGNE FRANCHE-COMTÉ INSTITUTION, PREPARED AT UNIVERSITÉ DE FRANCHE-COMTÉ. Doctoral school n°37, Engineering Sciences and Microtechnologies (Sciences Pour l’Ingénieur et Microtechniques). Computer Science Doctorate by ANTHONY NASSAR. Optimizing Resource Utilization in Distributed Computing Systems for Automotive Applications (Optimisation de l’utilisation des ressources dans les systèmes informatiques distribués pour les applications automobiles). Thesis presented and publicly defended in Belfort, on 04-02-2021. Composition of the Jury: MR CERIN CHRISTOPHE, Professor at Université Sorbonne Paris Nord, President; MR CHBEIR RICHARD, Professor at Université de Pau et des Pays de l’Adour, Reviewer (Rapporteur); MME BENBERNOU SALIMA, Professor at Université Paris-Descartes, Reviewer (Rapporteur); MR MOSTEFAOUI AHMED, Maître de conférences at Université de Franche-Comté, Thesis supervisor; MR DESSABLES FRANÇOIS, Engineer at Groupe PSA, Thesis co-supervisor -

Graft: a Debugging Tool for Apache Giraph

Graft: A Debugging Tool For Apache Giraph. Semih Salihoglu, Jaeho Shin, Vikesh Khanna, Ba Quan Truong, Jennifer Widom. Stanford University. {semih, jaeho.shin, vikesh, bqtruong, widom}@cs.stanford.edu

ABSTRACT We address the problem of debugging programs written for Pregel-like systems. After interviewing Giraph and GPS users, we developed Graft. Graft supports the debugging cycle that users typically go through: (1) Users describe programmatically the set of vertices they are interested in inspecting. During execution, Graft captures the context information of these vertices across supersteps. (2) Using Graft’s GUI, users visualize how the values and messages of the captured vertices change from superstep to superstep, narrowing in suspicious vertices and supersteps. (3) Users replay the exact lines of the vertex.compute() function that executed for the suspicious vertices and supersteps, by copying code that Graft generates into their development environments’ line-by-line debuggers.

optional master.compute() function is executed by the Master task between supersteps. We have tackled the challenge of debugging programs written for Pregel-like systems. Despite being a core component of programmers’ development cycles, very little work has been done on debugging in these systems. We interviewed several Giraph and GPS programmers (hereafter referred to as “users”) and studied how they currently debug their vertex.compute() functions. We found that the following three steps were common across users: (1) Users add print statements to their code to capture information about a select set of potentially “buggy” vertices, e.g., vertices that are assigned incorrect values, send incorrect messages, or throw -
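
The Pregel-style model this excerpt refers to (a per-vertex compute function run in supersteps, with messages delivered between supersteps) can be illustrated with a small single-process sketch. This is only an illustration in Python, not Giraph's Java API and not Graft itself: the names `run_supersteps`, `max_value_compute`, `watch`, and `trace` are hypothetical, and the trace merely mimics the idea of capturing the values of selected vertices at each superstep.

```python
from collections import defaultdict


def max_value_compute(value, messages, superstep):
    """Hypothetical per-vertex function: adopt the largest value seen so far.

    Returns (new_value, should_send).
    """
    new_value = max([value, *messages])
    # Announce the value in superstep 0, afterwards only when it changed.
    should_send = superstep == 0 or new_value != value
    return new_value, should_send


def run_supersteps(edges, values, compute, watch=(), max_supersteps=50):
    """Run a vertex-centric computation until no messages are in flight.

    edges:  dict mapping a vertex to the list of its neighbours
    values: dict mapping a vertex to its initial value
    watch:  vertices whose value is recorded every time they execute
    """
    values = dict(values)
    inbox = defaultdict(list)
    trace = []
    for superstep in range(max_supersteps):
        # Every vertex runs in superstep 0; later only vertices with messages.
        if superstep == 0:
            active = list(values)
        else:
            active = [v for v in values if inbox[v]]
        if not active:
            break
        outbox = defaultdict(list)
        for v in active:
            new_value, should_send = compute(values[v], inbox[v], superstep)
            values[v] = new_value
            if should_send:
                for neighbour in edges.get(v, ()):
                    outbox[neighbour].append(new_value)
            if v in watch:
                trace.append((superstep, v, new_value))
        # Messages sent in this superstep are delivered in the next one.
        inbox = outbox
    return values, trace


# A three-vertex chain a - b - c; watch vertex "c".
edges = {"a": ["b"], "b": ["a", "c"], "c": ["b"]}
final_values, trace = run_supersteps(
    edges, {"a": 3, "b": 6, "c": 2}, max_value_compute, watch={"c"}
)
print(final_values)
print(trace)
```

Recording `(superstep, vertex, value)` tuples only for watched vertices keeps the captured data small, which is the same motivation the excerpt gives for inspecting a selected set of vertices rather than the whole graph.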

A Review on Big Data Analytics in the Field of Agriculture

International Journal of Latest Transactions in Engineering and Science. A Review on Big Data Analytics in the field of Agriculture. Harish Kumar M, Department of Computer Science and Engineering, Adhiyamaan College of Engineering, Hosur, Tamilnadu, India. Dr. T Menakadevi, Dept. of Electronics and Communication Engineering, Adhiyamaan College of Engineering, Hosur, Tamilnadu, India.

Abstract- Big Data Analytics is a data-driven technology that can generate significant productivity improvements in various industries by collecting, storing, managing, processing and analyzing various kinds of structured and unstructured data. Big data in agriculture offers an opportunity to increase the economic gain of farmers through a digital revolution, which we examine through the precision agriculture schemes in place in many countries. This paper reviews the applications of big data to support agriculture. In addition, it attempts to identify the tools that support the implementation of big data applications for agriculture services. The review reveals that several opportunities are available for utilizing big data in agriculture; however, there are still many issues and challenges to be addressed to achieve better utilization of this technology. Keywords—Agriculture, Big data Analytics, Hadoop, HDFS, Farmers

I. INTRODUCTION The technologies employed are exciting, involve analysis of mind-numbing amounts of data and require fundamental rethinking as to what constitutes data. Big data is a collection of raw data that passes through phases such as classification, processing and organizing into meaningful information. Raw information cannot be consumed directly for any form of analysis. Big data analytics is the process of examining data to uncover patterns, find unknown correlations and extract useful information that supports decision making. -

Full-Graph-Limited-Mvn-Deps.Pdf

org.jboss.cl.jboss-cl-2.0.9.GA org.jboss.cl.jboss-cl-parent-2.2.1.GA org.jboss.cl.jboss-classloader-N/A org.jboss.cl.jboss-classloading-vfs-N/A org.jboss.cl.jboss-classloading-N/A org.primefaces.extensions.master-pom-1.0.0 org.sonatype.mercury.mercury-mp3-1.0-alpha-1 org.primefaces.themes.overcast-${primefaces.theme.version} org.primefaces.themes.dark-hive-${primefaces.theme.version} org.primefaces.themes.humanity-${primefaces.theme.version} org.primefaces.themes.le-frog-${primefaces.theme.version} org.primefaces.themes.south-street-${primefaces.theme.version} org.primefaces.themes.sunny-${primefaces.theme.version} org.primefaces.themes.hot-sneaks-${primefaces.theme.version} org.primefaces.themes.cupertino-${primefaces.theme.version} org.primefaces.themes.trontastic-${primefaces.theme.version} org.primefaces.themes.excite-bike-${primefaces.theme.version} org.apache.maven.mercury.mercury-external-N/A org.primefaces.themes.redmond-${primefaces.theme.version} org.primefaces.themes.afterwork-${primefaces.theme.version} org.primefaces.themes.glass-x-${primefaces.theme.version} org.primefaces.themes.home-${primefaces.theme.version} org.primefaces.themes.black-tie-${primefaces.theme.version} org.primefaces.themes.eggplant-${primefaces.theme.version} org.apache.maven.mercury.mercury-repo-remote-m2-N/A org.apache.maven.mercury.mercury-md-sat-N/A org.primefaces.themes.ui-lightness-${primefaces.theme.version} org.primefaces.themes.midnight-${primefaces.theme.version} org.primefaces.themes.mint-choc-${primefaces.theme.version} org.primefaces.themes.afternoon-${primefaces.theme.version} org.primefaces.themes.dot-luv-${primefaces.theme.version} org.primefaces.themes.smoothness-${primefaces.theme.version} org.primefaces.themes.swanky-purse-${primefaces.theme.version} -

Apache Giraph 3

Other Distributed Frameworks. Shannon Quinn

Distinction
1. General Compute Engines – Hadoop
2. User-facing APIs – Cascading – Scalding

Alternative Frameworks
1. Apache Mahout
2. Apache Giraph
3. GraphLab
4. Apache Storm
5. Apache Tez
6. Apache Flink

Apache Mahout
- A Tale of Two Frameworks: 1. Distributed machine learning on Hadoop – 0.1 to 0.9; 2. “Samsara” – New in 0.10+

Machine learning on Hadoop
- Born out of the Apache Lucene project
- Built on Hadoop (all in Java)
- Pragmatic machine learning at scale

1: Recommendation 2: Classification 3: Clustering

Other MapReduce algorithms
- Dimensionality reduction – Lanczos – SSVD – LDA
- Regression – Logistic – Linear – Random Forest
- Evolutionary algorithms

Mahout-Samsara
- Programming “environment” for distributed machine learning
- R-like syntax
- Interactive shell (like Spark)
- Under-the-hood algebraic optimizer
- Engine-agnostic – Spark – H2O – Flink – ?

Mahout
- 3 main components: engine-agnostic environment for building scalable ML algorithms (Samsara); engine-specific algorithms (Spark, H2O); legacy MapReduce algorithms

Mahout
- v0.10.0 released April 11 (as in, 5 days ago!)
- 0.10.1 – More base linear algebra functionality
- 0.11.0 – Compatible with Spark 1.3
- 1.0 – ?

Mahout features by engine
- No engine-agnostic clustering algorithms yet – Still the domain of legacy MapReduce
- H2O and especially Flink -

Chapter 2 Introduction to Big Data Technology

Chapter 2 Introduction to Big data Technology. Bilal Abu-Salih1, Pornpit Wongthongtham2, Dengya Zhu3, Kit Yan Chan3, Amit Rudra3. 1 The University of Jordan, 2 The University of Western Australia, 3 Curtin University.

Abstract: Big data is no longer “all just hype” but is widely applied in nearly all aspects of our business, governments, and organizations with the technology stack of AI. Its influence goes far beyond a simple technical innovation and reaches all areas of the world. This chapter first gives a historical review of big data, followed by a discussion of the characteristics of big data, i.e. from the 3V’s up to the 10V’s of big data. The chapter then introduces technology stacks for an organization to build a big data application, from infrastructure/platform/ecosystem to constructional units and components. Finally, we provide some big data online resources for reference. Keywords: Big data, 3V of Big data, Cloud Computing, Data Lake, Enterprise Data Centre, PaaS, IaaS, SaaS, Hadoop, Spark, HBase, Information retrieval, Solr

2.1 Introduction. The ability to exploit the ever-growing amounts of business-related data will allow us to comprehend what is emerging in the world. In this context, Big Data is one of the current major buzzwords [1]. Big Data (BD) is the technical term used in reference to the vast quantity of heterogeneous datasets which are created and spread rapidly, and for which the conventional techniques used to process, analyse, retrieve, store and visualise such massive sets of data are now unsuitable and inadequate. This can be seen in many areas such as sensor-generated data, social media, uploading and downloading of digital media. -

Study Materials for Big Data Processing Tools

MASARYK UNIVERSITY, FACULTY OF INFORMATICS. Study materials for Big Data processing tools. BACHELOR'S THESIS. Martin Durkáč. Brno, Spring 2021.

This is where a copy of the official signed thesis assignment and a copy of the Statement of an Author is located in the printed version of the document.

Declaration. Hereby I declare that this paper is my original authorial work, which I have worked out on my own. All sources, references, and literature used or excerpted during elaboration of this work are properly cited and listed in complete reference to the due source. Martin Durkáč. Advisor: RNDr. Martin Macák

Acknowledgements. I would like to thank my supervisor RNDr. Martin Macák for all the support and guidance. His constant feedback helped me to improve and finish it. I would also like to express my gratitude towards my colleagues at Greycortex for letting me use their resources to develop the practical part of the thesis.

Abstract. This thesis focuses on providing study materials for the Big Data seminar. The thesis is divided into six chapters plus a conclusion, where the first chapter introduces Big Data in general, four chapters contain information about Big Data processing tools and the sixth chapter describes study materials provided in this thesis. For each Big Data processing tool, the thesis contains a practical demonstration and an assignment for the seminar in the attachments. All the assignments are provided both with and without solutions.

Classifying, Evaluating and Advancing Big Data Benchmarks

Classifying, Evaluating and Advancing Big Data Benchmarks. Dissertation for the attainment of the doctoral degree in natural sciences, submitted to Department 12 (Computer Science) of the Johann Wolfgang Goethe-Universität in Frankfurt am Main by Todor Ivanov from Stara Zagora. Frankfurt am Main 2019 (D 30). Accepted as a dissertation by Department 12 (Computer Science) of the Johann Wolfgang Goethe-Universität. Dean: Prof. Dr. Andreas Bernig. Reviewers: Prof. Dott.-Ing. Roberto V. Zicari, Prof. Dr. Carsten Binnig. Date of the defense: 23.07.2019.

Abstract. The main contribution of the thesis is in helping to understand which software system parameters most affect the performance of Big Data Platforms under realistic workloads. In detail, the main research contributions of the thesis are: 1. Definition of the new concept of heterogeneity for Big Data Architectures (Chapter 2); 2. Investigation of the performance of Big Data systems (e.g. Hadoop) in virtualized environments (Section 3.1); 3. Investigation of the performance of NoSQL databases versus Hadoop distributions (Section 3.2); 4. Execution and evaluation of the TPCx-HS benchmark (Section 3.3); 5. Evaluation and comparison of Hive and Spark SQL engines using benchmark queries (Section 3.4); 6. Evaluation of the impact of compression techniques on SQL-on-Hadoop engine performance (Section 3.5); 7. Extensions of the standardized Big Data benchmark BigBench (TPCx-BB) (Section 4.1 and 4.3); 8. Definition of a new benchmark, called ABench (Big Data Architecture Stack Benchmark), that takes into account the heterogeneity of Big Data architectures (Section 4.5). The thesis is an attempt to re-define system benchmarking taking into account the new requirements posed by the Big Data applications.