Chapter 2 Introduction to Big Data Technology

Recommended publications

-

Netapp Solutions for Hadoop Reference Architecture: Cloudera Faiz Abidi (Netapp) and Udai Potluri (Cloudera) June 2018 | WP-7217

White Paper NetApp Solutions for Hadoop Reference Architecture: Cloudera Faiz Abidi (NetApp) and Udai Potluri (Cloudera) June 2018 | WP-7217 In partnership with Abstract There has been an exponential growth in data over the past decade and analyzing huge amounts of data in a reasonable time can be a challenge. Apache Hadoop is an open- source tool that can help your organization quickly mine big data and extract meaningful patterns from it. However, enterprises face several technical challenges when deploying Hadoop, specifically in the areas of cluster availability, operations, and scaling. NetApp® has developed a reference architecture with Cloudera to deliver a solution that overcomes some of these challenges so that businesses can ingest, store, and manage big data with greater reliability and scalability and with less time spent on operations and maintenance. This white paper discusses a flexible, validated, enterprise-class Hadoop architecture that is based on NetApp E-Series storage using Cloudera’s Hadoop distribution. TABLE OF CONTENTS 1 Introduction ........................................................................................................................................... 4 1.1 Big Data ..........................................................................................................................................................4 1.2 Hadoop Overview ...........................................................................................................................................4 2 NetApp E-Series -

Security Log Analysis Using Hadoop Harikrishna Annangi Harikrishna Annangi, [email protected]

View metadata, citation and similar papers at core.ac.uk brought to you by CORE provided by St. Cloud State University St. Cloud State University theRepository at St. Cloud State Culminating Projects in Information Assurance Department of Information Systems 3-2017 Security Log Analysis Using Hadoop Harikrishna Annangi Harikrishna Annangi, [email protected] Follow this and additional works at: https://repository.stcloudstate.edu/msia_etds Recommended Citation Annangi, Harikrishna, "Security Log Analysis Using Hadoop" (2017). Culminating Projects in Information Assurance. 19. https://repository.stcloudstate.edu/msia_etds/19 This Starred Paper is brought to you for free and open access by the Department of Information Systems at theRepository at St. Cloud State. It has been accepted for inclusion in Culminating Projects in Information Assurance by an authorized administrator of theRepository at St. Cloud State. For more information, please contact [email protected]. Security Log Analysis Using Hadoop by Harikrishna Annangi A Starred Paper Submitted to the Graduate Faculty of St. Cloud State University in Partial Fulfillment of the Requirements for the Degree of Master of Science in Information Assurance April, 2016 Starred Paper Committee: Dr. Dennis Guster, Chairperson Dr. Susantha Herath Dr. Sneh Kalia 2 Abstract Hadoop is used as a general-purpose storage and analysis platform for big data by industries. Commercial Hadoop support is available from large enterprises, like EMC, IBM, Microsoft and Oracle and Hadoop companies like Cloudera, Hortonworks, and Map Reduce. Hadoop is a scheme written in Java that allows distributed processes of large data sets across clusters of computers using programming models. A Hadoop frame work application works in an environment that provides storage and computation across clusters of computers. -

Building a Scalable Index and a Web Search Engine for Music on the Internet Using Open Source Software

Department of Information Science and Technology Building a Scalable Index and a Web Search Engine for Music on the Internet using Open Source software André Parreira Ricardo Thesis submitted in partial fulfillment of the requirements for the degree of Master in Computer Science and Business Management Advisor: Professor Carlos Serrão, Assistant Professor, ISCTE-IUL September, 2010 Acknowledgments I should say that I feel grateful for doing a thesis linked to music, an art which I love and esteem so much. Therefore, I would like to take a moment to thank all the persons who made my accomplishment possible and hence this is also part of their deed too. To my family, first for having instigated in me the curiosity to read, to know, to think and go further. And secondly for allowing me to continue my studies, providing the environment and the financial means to make it possible. To my classmate André Guerreiro, I would like to thank the invaluable brainstorming, the patience and the help through our college years. To my friend Isabel Silva, who gave me a precious help in the final revision of this document. Everyone in ADETTI-IUL for the time and the attention they gave me. Especially the people over Caixa Mágica, because I truly value the expertise transmitted, which was useful to my thesis and I am sure will also help me during my professional course. To my teacher and MSc. advisor, Professor Carlos Serrão, for embracing my will to master in this area and for being always available to help me when I needed some advice. -

An Enterprise Knowledge Network

Fogbeam Labs Cut Through The Information Fog http://www.fogbeam.com An Enterprise Knowledge Network Knowledge exists in many forms inside your organization – ranging from tacit knowledge which exists only in the minds of the users who possess it, to codified knowledge stored in databases and document repositories. Unfortunately while knowledge exists throughout the organization, it is often not easy (if even possible) to locate, use, share, and reuse existing knowledge. This results in a situation often described as “the left hand doesn't know what the right hand is doing” and damages morale as employees spend their days frustrated and complaining that “nobody knows what is going on around here”. The obstacles that hinder access to existing knowledge can be cultural, geographical, social, and/or technological. And while no technological solution can guarantee perfect knowledge-sharing, tools drawn from big data, data mining / machine learning, deep learning, and artificial intelligence techniques can improve an organization's power to generate, capture, use, share and reuse knowledge. Using technologies developed as part of the semantic web initiative, and applying the principles of linked data within the enterprise, the Fogbeam Labs Enterprise Knowledge Network approach can help your firm integrate and aggregate knowledge which is spread across your existing enterprise applications, content repositories and Intranet. An Enterprise Knowledge Network enables your firm's capabilities to: • engage in high levels of knowledge transfer and -

Natural Language Processing Technique for Information Extraction and Analysis

International Journal of Research Studies in Computer Science and Engineering (IJRSCSE) Volume 2, Issue 8, August 2015, PP 32-40 ISSN 2349-4840 (Print) & ISSN 2349-4859 (Online) www.arcjournals.org Natural Language Processing Technique for Information Extraction and Analysis T. Sri Sravya1, T. Sudha2, M. Soumya Harika3 1 M.Tech (C.S.E) Sri Padmavati Mahila Visvavidyalayam (Women’s University), School of Engineering and Technology, Tirupati. [email protected] 2 Head (I/C) of C.S.E & IT Sri Padmavati Mahila Visvavidyalayam (Women’s University), School of Engineering and Technology, Tirupati. [email protected] 3 M. Tech C.S.E, Assistant Professor, Sri Padmavati Mahila Visvavidyalayam (Women’s University), School of Engineering and Technology, Tirupati. [email protected] Abstract: In the current internet era, there are a large number of systems and sensors which generate data continuously and inform users about their status and the status of devices and surroundings they monitor. Examples include web cameras at traffic intersections, key government installations etc., seismic activity measurement sensors, tsunami early warning systems and many others. A Natural Language Processing based activity, the current project is aimed at extracting entities from data collected from various sources such as social media, internet news articles and other websites and integrating this data into contextual information, providing visualization of this data on a map and further performing co-reference analysis to establish linkage amongst the entities. Keywords: Apache Nutch, Solr, crawling, indexing 1. INTRODUCTION In today’s harsh global business arena, the pace of events has increased rapidly, with technological innovations occurring at ever-increasing speed and considerably shorter life cycles. -

Apache Cassandra on AWS Whitepaper

Apache Cassandra on AWS Guidelines and Best Practices January 2016 Amazon Web Services – Apache Cassandra on AWS January 2016 © 2016, Amazon Web Services, Inc. or its affiliates. All rights reserved. Notices This document is provided for informational purposes only. It represents AWS’s current product offerings and practices as of the date of issue of this document, which are subject to change without notice. Customers are responsible for making their own independent assessment of the information in this document and any use of AWS’s products or services, each of which is provided “as is” without warranty of any kind, whether express or implied. This document does not create any warranties, representations, contractual commitments, conditions or assurances from AWS, its affiliates, suppliers or licensors. The responsibilities and liabilities of AWS to its customers are controlled by AWS agreements, and this document is not part of, nor does it modify, any agreement between AWS and its customers. Page 2 of 52 Amazon Web Services – Apache Cassandra on AWS January 2016 Notices 2 Abstract 4 Introduction 4 NoSQL on AWS 5 Cassandra: A Brief Introduction 6 Cassandra: Key Terms and Concepts 6 Write Request Flow 8 Compaction 11 Read Request Flow 11 Cassandra: Resource Requirements 14 Storage and IO Requirements 14 Network Requirements 15 Memory Requirements 15 CPU Requirements 15 Planning Cassandra Clusters on AWS 16 Planning Regions and Availability Zones 16 Planning an Amazon Virtual Private Cloud 18 Planning Elastic Network Interfaces 19 Planning -

Apache Cassandra and Apache Spark Integration a Detailed Implementation

Apache Cassandra and Apache Spark Integration A detailed implementation Integrated Media Systems Center USC Spring 2015 Supervisor Dr. Cyrus Shahabi Student Name Stripelis Dimitrios 1 Contents 1. Introduction 2. Apache Cassandra Overview 3. Apache Cassandra Production Development 4. Apache Cassandra Running Requirements 5. Apache Cassandra Read/Write Requests using the Python API 6. Types of Cassandra Queries 7. Apache Spark Overview 8. Building the Spark Project 9. Spark Nodes Configuration 10. Building the Spark Cassandra Integration 11. Running the Spark-Cassandra Shell 12. Summary 2 1. Introduction This paper can be used as a reference guide for a detailed technical implementation of Apache Spark v. 1.2.1 and Apache Cassandra v. 2.0.13. The integration of both systems was deployed on Google Cloud servers using the RHEL operating system. The same guidelines can be easily applied to other operating systems (Linux based) as well with insignificant changes. Cluster Requirements: Software Java 1.7+ installed Python 2.7+ installed Ports A number of at least 7 ports in each node of the cluster must be constantly opened. For Apache Cassandra the following ports are the default ones and must be opened securely: 9042 - Cassandra native transport for clients 9160 - Cassandra Port for listening for clients 7000 - Cassandra TCP port for commands and data 7199 - JMX Port Cassandra For Apache Spark any 4 random ports should be also opened and secured, excluding ports 8080 and 4040 which are used by default from apache Spark for creating the Web UI of each application. It is highly advisable that one of the four random ports should be the port 7077, because it is the default port used by the Spark Master listening service. -

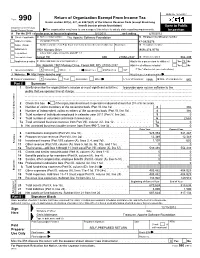

Return of Organization Exempt from Income

OMB No. 1545-0047 Return of Organization Exempt From Income Tax Form 990 Under section 501(c), 527, or 4947(a)(1) of the Internal Revenue Code (except black lung benefit trust or private foundation) Open to Public Department of the Treasury Internal Revenue Service The organization may have to use a copy of this return to satisfy state reporting requirements. Inspection A For the 2011 calendar year, or tax year beginning 5/1/2011 , and ending 4/30/2012 B Check if applicable: C Name of organization The Apache Software Foundation D Employer identification number Address change Doing Business As 47-0825376 Name change Number and street (or P.O. box if mail is not delivered to street address) Room/suite E Telephone number Initial return 1901 Munsey Drive (909) 374-9776 Terminated City or town, state or country, and ZIP + 4 Amended return Forest Hill MD 21050-2747 G Gross receipts $ 554,439 Application pending F Name and address of principal officer: H(a) Is this a group return for affiliates? Yes X No Jim Jagielski 1901 Munsey Drive, Forest Hill, MD 21050-2747 H(b) Are all affiliates included? Yes No I Tax-exempt status: X 501(c)(3) 501(c) ( ) (insert no.) 4947(a)(1) or 527 If "No," attach a list. (see instructions) J Website: http://www.apache.org/ H(c) Group exemption number K Form of organization: X Corporation Trust Association Other L Year of formation: 1999 M State of legal domicile: MD Part I Summary 1 Briefly describe the organization's mission or most significant activities: to provide open source software to the public that we sponsor free of charge 2 Check this box if the organization discontinued its operations or disposed of more than 25% of its net assets. -

Groups and Activities Report 2017

Groups and Activities Report 2017 ISBN 978-92-9083-491-5 This report is released under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License. 2 | Page CERN IT Department Groups and Activities Report 2017 CONTENTS GROUPS REPORTS 2017 Collaborations, Devices & Applications (CDA) Group ............................................................................. 6 Communication Systems (CS) Group .................................................................................................... 11 Compute & Monitoring (CM) Group ..................................................................................................... 16 Computing Facilities (CF) Group ........................................................................................................... 20 Databases (DB) Group ........................................................................................................................... 23 Departmental Infrastructure (DI) Group ............................................................................................... 27 Storage (ST) Group ................................................................................................................................ 28 ACTIVITIES AND PROJECTS REPORTS 2017 CERN openlab ........................................................................................................................................ 34 CERN School of Computing (CSC) ......................................................................................................... -

Building a Search Engine for the Cuban Web

Building a Search Engine for the Cuban Web Jorge Luis Betancourt Search/Crawl Engineer NOVEMBER 16-18, 2016 • SEVILLE, SPAIN Who am I 01 Jorge Luis Betancourt González Search/Crawl Engineer Apache Nutch Committer & PMC Apache Solr/ES enthusiast 2 Agenda • Introduction & motivation • Technologies used • Customizations • Conclusions and future work 3 Introduction / Motivation Cuba Internet Intranet Global search engines can’t access documents hosted the Cuban Intranet 4 Writing your own web search engine from scratch? or … 5 Common search engine features 1 Web search: HTML & documents (PDF, DOC) • highlighting • suggestions • filters (facets) • autocorrection 2 Image search (size, format, color, objects) • thumbnails • show metadata • filters (facets) • match text with images 3 News search (alerting, notifications) • near real time • email, push, SMS 6 How to fulfill these requirements? store query At the core a search engine: stores some information a retrieve this information when a question is received 7 Open Source to the rescue … crawler 1 Index Server 2 web interface 3 8 Apache Nutch Nutch is a well matured, production ready “ Web crawler. Enables fine grained configuration, relying on Apache Hadoop™ data structures, which are great for batch processing. 9 Apache Nutch • Highly scalable • Highly extensible • Pluggable parsing protocols, storage, indexing, scoring, • Active community • Apache License 10 Apache Solr TOTAL DOWNLOADS 8M+ MONTHLY DOWNLOADS 250,000+ • Apache License • Great community • Highly modular • Stability / Scalability -

CDP DATA CENTER 7.1 Laurent Edel : Solution Engineer Jacques Marchand : Solution Engineer Mael Ropars : Principal Solution Engineer

CDP DATA CENTER 7.1 Laurent Edel : Solution Engineer Jacques Marchand : Solution Engineer Mael Ropars : Principal Solution Engineer 30 Juin 2020 SPEAKERS • © 2019 Cloudera, Inc. All rights reserved. AGENDA • CDP DATA CENTER OVERVIEW • DETAILS ABOUT MAJOR COMPONENTS • PATH TO CDP DC && SMART MIGRATION • Q/A © 2019 Cloudera, Inc. All rights reserved. CLOUDERA DATA PLATFORM © 2020 Cloudera, Inc. All rights reserved. 4 ARCHITECTURE CIBLE : ENTERPRISE DATA CLOUD CDP Cloud Public CDP On-Prem (platform-as-a-service) (installable software) © 2020 Cloudera, Inc. All rights reserved. 5 CDP DATA CENTER OVERVIEW CDP Data Center (installable software) NEW CDP Data Center features include: Cloudera Manager • High-performance SQL analytics • Real-time stream processing, analytics, and management • Fine-grained security, enterprise metadata, and scalable data lineage • Support for object storage (tech preview) • Single pane of glass for management - multi-cluster support Enterprise analytics and data management platform, built for hybrid cloud, optimized for bare metal and ready for private cloud Cloudera Runtime © 2020 Cloudera, Inc. All rights reserved. 6 A NEW OPEN SOURCE DISTRIBUTION FOR BETTER CAPABILITY Cloudera Runtime - created from the best of CDH and HDP Deprecate competitive Merge overlapping Keep complementary Upgrade shared technologies technologies technologies technologies © 2019 Cloudera, Inc. All rights reserved. 7 COMPONENT LIST CDP Data Center 7.1(May) 2020 • Cloudera Manager 7.1 • HBase 2.2 • Key HSM 7.1 • Kafka Schema Registry 0.8 -

Graft: a Debugging Tool for Apache Giraph

Graft: A Debugging Tool For Apache Giraph Semih Salihoglu, Jaeho Shin, Vikesh Khanna, Ba Quan Truong, Jennifer Widom Stanford University {semih, jaeho.shin, vikesh, bqtruong, widom}@cs.stanford.edu ABSTRACT optional master.compute() function is executed by the Master We address the problem of debugging programs written for Pregel- task between supersteps. like systems. After interviewing Giraph and GPS users, we devel- We have tackled the challenge of debugging programs written oped Graft. Graft supports the debugging cycle that users typically for Pregel-like systems. Despite being a core component of pro- go through: (1) Users describe programmatically the set of vertices grammers’ development cycles, very little work has been done on they are interested in inspecting. During execution, Graft captures debugging in these systems. We interviewed several Giraph and the context information of these vertices across supersteps. (2) Us- GPS programmers (hereafter referred to as “users”) and studied vertex.compute() ing Graft’s GUI, users visualize how the values and messages of the how they currently debug their functions. captured vertices change from superstep to superstep,narrowing in We found that the following three steps were common across users: suspicious vertices and supersteps. (3) Users replay the exact lines (1) Users add print statements to their code to capture information of the vertex.compute() function that executed for the sus- about a select set of potentially “buggy” vertices, e.g., vertices that picious vertices and supersteps, by copying code that Graft gener- are assigned incorrect values, send incorrect messages, or throw ates into their development environments’ line-by-line debuggers.