Power9 CTE User's Guide

Recommended publications

Investigations on Hardware Compression of IBM Power9 Processors

Investigations on hardware compression of IBM Power9 processors
Jérome Kieffer, Pierre Paleo, Antoine Roux, Benoît Rousselle
HDF5 on Power9, 18/09/2019

Outline
● The bandwidth issue at synchrotron sources
● Presentation of the evaluated systems:
– Intel Xeon vs IBM Power9
– Benchmarks on bandwidth
● The need for compression of scientific data
– Compression as part of HDF5
– The hardware compression engine NX-gzip within Power9
– Gzip performance benchmark
– Bitshuffle-LZ4 benchmark
– Filter optimizations
– Benchmark of parallel filtered gzip
● Conclusions
– on the hardware
– on the compression pipeline in HDF5

Bandwidth issue at synchrotron sources

Figure: Data analysis computer with the main interconnections and their associated bandwidth. Annotations: Data reduction → Azimuthal integration; Upgrade to 100 Gbit/s; Data compression! Figures from former generation of servers. Kieffer et al. Volume 25 | Part 2 | March 2018 | Pages 612–617 | 10.1107/S1600577518000607

Topologies of Intel Xeon servers in 2019

Source: intel.com

Architecture of the AC922 server from IBM featuring Power9

Credit: Thibaud Besson, IBM France

Bandwidth measurement: Xeon vs Power9

| Computer | Dell R840 | IBM AC922 |
|---|---|---|
| Processor | 4 Intel Xeon (12 cores), 2.6 GHz | 2 IBM Power9 (16 cores), 2.7 GHz |
| Cache (L3) | 19 MB | 8x 10 MB |
| Memory channels | 4x 6 DDR4 | 2x 8 DDR4 |
| Memory capacity | → 3 TB | → 2 TB |
| Memory speed (theory) | 512 GB/s | 340 GB/s |
| Measured memory speed | 160 GB/s | 270 GB/s |
| Interconnects | PCIe v3 | PCIe v4, NVlink2 & CAPI2 |
| GP-GPU co-processor | 2 Tesla V100 PCIe v3 | 2 Tesla V100 NVlink2 |
| Interconnect speed CPU ↔ GPU | 12 GB/s | 48 GB/s |

Strengths and weaknesses of the OpenPower architecture

While amd64 is today’s de facto standard in HPC, it has a few competitors: arm64, ppc64le and, to a lesser extent, riscv and mips64.

POWER® Processor-Based Systems

IBM® Power® Systems RAS

Introduction to IBM® Power® Reliability, Availability, and Serviceability for POWER9® processor-based systems using IBM PowerVM™

With Updates covering the latest 4+ Socket Power10 processor-based systems

IBM Systems Group
Daniel Henderson, Irving Baysah

Trademarks, Copyrights, Notices and Acknowledgements

Trademarks

IBM, the IBM logo, and ibm.com are trademarks or registered trademarks of International Business Machines Corporation in the United States, other countries, or both. These and other IBM trademarked terms are marked on their first occurrence in this information with the appropriate symbol (® or ™), indicating US registered or common law trademarks owned by IBM at the time this information was published. Such trademarks may also be registered or common law trademarks in other countries. A current list of IBM trademarks is available on the Web at http://www.ibm.com/legal/copytrade.shtml

The following terms are trademarks of the International Business Machines Corporation in the United States, other countries, or both: Active Memory™, AIX®, POWER®, POWER Hypervisor™, Power Systems™, Power Systems Software™, Power®, POWER6®, POWER7®, POWER7+™, POWER8™, PowerHA®, PowerLinux™, PowerVM®, PowerVC™, Power Architecture™, System x®, System z®.

Additional Trademarks may be identified in the body of this document. Other company, product, or service names may be trademarks or service marks of others.

Notices

The last page of this document contains copyright information, important notices, and other information.

Acknowledgements

While this whitepaper has two principal authors/editors it is the culmination of the work of a number of different subject matter experts within IBM who contributed ideas, detailed technical information, and the occasional photograph and section of description.

IBM Power Systems Performance Report Apr 13, 2021

IBM Power Performance Report
Power7 to Power10
September 8, 2021

Table of Contents

- Introduction to Performance of IBM UNIX, IBM i, and Linux Operating System Servers
- Section 1 – SPEC® CPU Benchmark Performance
  - Section 1a – Linux Multi-user SPEC® CPU2017 Performance (Power10)
  - Section 1b – Linux Multi-user SPEC® CPU2017 Performance (Power9)
  - Section 1c – AIX Multi-user SPEC® CPU2006 Performance (Power7, Power7+, Power8)
  - Section 1d – Linux Multi-user SPEC® CPU2006 Performance (Power7, Power7+, Power8)
- Section 2 – AIX Multi-user Performance (rPerf)
  - Section 2a – AIX Multi-user Performance (Power8, Power9 and Power10)
  - Section 2b – AIX Multi-user Performance (Power9) in Non-default Processor Power Mode Setting
  - Section 2c – AIX Multi-user Performance (Power7 and Power7+)
  - Section 2d – AIX Capacity Upgrade on Demand Relative Performance Guidelines (Power8)
  - Section 2e – AIX Capacity Upgrade on Demand Relative Performance Guidelines (Power7 and Power7+)
- Section 3 – CPW Benchmark Performance
  - Section 3a – CPW Benchmark Performance (Power8, Power9 and Power10)
  - Section 3b – CPW Benchmark Performance (Power7 and Power7+)
- Section 4 – SPECjbb®2015 Benchmark Performance
  - Section 4a – SPECjbb®2015 Benchmark Performance (Power9)
  - Section 4b – SPECjbb®2015 Benchmark Performance (Power8)
- Section 5 – AIX SAP® Standard Application Benchmark Performance
  - Section 5a – SAP® Sales and Distribution (SD) 2-Tier – AIX (Power7 to Power8)
  - Section 5b – SAP® Sales and Distribution (SD) 2-Tier – Linux on Power (Power7 to Power7+)

Towards a Portable Hierarchical View of Distributed Shared Memory Systems: Challenges and Solutions

Towards A Portable Hierarchical View of Distributed Shared Memory Systems: Challenges and Solutions

Millad Ghane, Department of Computer Science, University of Houston, TX, USA. [email protected],[email protected]
Sunita Chandrasekaran, Department of Computer and Information Sciences, University of Delaware, DE, USA. [email protected]
Margaret S. Cheung, Physics Department, University of Houston; Center for Theoretical Biological Physics, Rice University, TX, USA. [email protected]

Abstract

An ever-growing diversity in the architecture of modern supercomputers has led to challenges in developing scientific software. Utilizing heterogeneous and disruptive architectures (e.g., off-chip and, in the near future, on-chip accelerators) has increased the software complexity and worsened its maintainability. To that end, we need a productive software ecosystem that improves the usability and portability of applications for such systems while allowing every parallelism opportunity to be exploited.

In this paper, we outline several challenges that we encountered in the implementation of Gecko, a hierarchical model for distributed shared memory architectures, using a directive-based program-

1 Introduction

Heterogeneity has become increasingly prevalent in the recent years given its promising role in tackling energy and power consumption crisis of high-performance computing (HPC) systems [15, 20]. Dennard scaling [14] has instigated the adaptation of heterogeneous architectures in the design of supercomputers and clusters by the HPC community. The July 2019 TOP500 [36] report shows how 126 systems in the list are heterogeneous systems configured with one or many GPUs. This is the prevailing trend in current generation of supercomputers. As an example, Summit [31], the fastest supercomputer according to the Top500 list (June 2019) [36], has two IBM POWER9 processors and six NVIDIA Volta V100 GPUs.

Upgrade to POWER9 Planning Checklist

Achieve your full business potential with IBM POWER9

Future-forward infrastructure designed to crush data-intensive workloads

Upgrade to POWER9 Planning Checklist

Using this checklist will help to ensure that your infrastructure strategy is aligned to your needs, avoiding potential cost overruns or capability shortfalls.

1. Determine current and future capacity requirements. Bring your team together, assess your current application workload requirements and three- to five-year outlook. You’ll then have a good picture of when and where application growth will take place, enabling you to secure capacity at the appropriate time on an as-needed basis.

2. Assess operational efficiencies and identify opportunities to improve service levels while decreasing exposure to security and compliancy issues/problems. With new technologies that allow you to easily adjust capacity, you will be in a much better position to lower costs, improve service levels, and increase efficiency.

3. Create a detailed inventory of servers across your entire IT infrastructure.

5. Identify all dependencies for major database platforms, including Oracle, DB2, SAP HANA, and open-source databases like EnterpriseDB, MongoDB, neo4j, and Redis. You’re likely running major databases on the Power Systems platform; co-locating your current servers may be a way to reduce expenditure and increase flexibility.

6. Understand current and future data center environmental requirements. You may be unnecessarily overspending on power, cooling and space. Savings here will help your organization avoid costs associated with data center expansion.

7. Identify the requirements of your strategy for on- and off-premises cloud infrastructure. As you move to the cloud, ensure you have a strong strategy to determine which

AC922 Data Movement for CORAL

AC922 Data Movement for CORAL

Roberts, Steve (IBM Cognitive Systems, Austin, TX) [email protected]
Ramanna, Pradeep (IBM Cognitive Systems, Austin, TX) [email protected]
Walthour, John (IBM Cognitive Systems, Austin, TX) [email protected]

Abstract—Recent publications have considered the challenge of movement in and out of the high bandwidth memory in attempt to maximize GPU utilization and minimize overall application wall time. This paper builds on previous contributions, [5] [17], which simulate software models, advocated optimization, and suggest design considerations. This contribution characterizes the data movement innovations of the AC922 nodes IBM delivered to Oak Ridge National Labs and Lawrence Livermore National Labs as part of the 2014 Collaboration of Oak Ridge, Argonne, and Livermore (CORAL) joint procurement activity. With a single HPC system able to perform up to 200PF of processing with access to 2.5PB of memory, this architecture motivates a careful look at data movement. The AC922 POWER9 system with NVIDIA V100 GPUs have cache line granularity, more than double the bandwidth of PCIe Gen3, low latency interfaces and are interconnected by dual-rail Mellanox CAPI/EDR HCAs. As such, the bandwidth and latency assumptions from previous

and GPU processing elements associated with their respective DRAM or high bandwidth memory (HBM2). Each processor element creates a NUMA domain which in total encompasses over > 2PB worth of total memory (see Table I for total capacity).

TABLE I: CORAL SYSTEMS MEMORY SUMMARY

| Lab | Nodes | Sockets | DRAM (TB) | GPUs | HBM2 (TB) |
|---|---|---|---|---|---|
| ORNL | 4607 | 9216 | 2,304 | 27648 | 432 |
| LLNL | 4320 | 8640 | 2,160 | 17280 | 270 |

Efficient programming models call for accessing system memory with as little data replication as possible and with low instruction overhead.

POWER10 Processor Chip

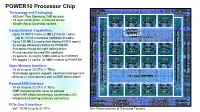

POWER10 Processor Chip

Technology and Packaging:
- 602mm2 7nm Samsung (18B devices)
- 18 layer metal stack, enhanced device
- Single-chip or Dual-chip sockets

Computational Capabilities:
- Up to 15 SMT8 Cores (2 MB L2 Cache / core) (Up to 120 simultaneous hardware threads)
- Up to 120 MB L3 cache (low latency NUCA mgmt)
- 3x energy efficiency relative to POWER9
- Enterprise thread strength optimizations
- AI and security focused ISA additions
- 2x general, 4x matrix SIMD relative to POWER9
- EA-tagged L1 cache, 4x MMU relative to POWER9

Open Memory Interface:
- 16 x8 at up to 32 GT/s (1 TB/s)
- Technology agnostic support: near/main/storage tiers
- Minimal (< 10ns latency) add vs DDR direct attach

PowerAXON Interface:
- 16 x8 at up to 32 GT/s (1 TB/s)
- SMP interconnect for up to 16 sockets
- OpenCAPI attach for memory, accelerators, I/O
- Integrated clustering (memory semantics)

PCIe Gen 5 Interface:

Figure: chip floorplan. Labels: PowerAXON; SMT8 Core with 2MB L2; Local 8MB L3 region; 64 MB L3 Hemisphere; Memory Signaling (8x8 OMI); SMP, Memory, Accel, Cluster, PCI Interconnect; PCIe Gen 5 Signaling (x16).

A Bibliography of Publications in IEEE Micro

A Bibliography of Publications in IEEE Micro Nelson H. F. Beebe University of Utah Department of Mathematics, 110 LCB 155 S 1400 E RM 233 Salt Lake City, UT 84112-0090 USA Tel: +1 801 581 5254 FAX: +1 801 581 4148 E-mail: [email protected], [email protected], [email protected] (Internet) WWW URL: http://www.math.utah.edu/~beebe/ 16 September 2021 Version 2.108 Title word cross-reference -Core [MAT+18]. -Cubes [YW94]. -D [ASX19, BWMS19, DDG+19, Joh19c, PZB+19, ZSS+19]. -nm [ABG+16, KBN16, TKI+14]. #1 [Kah93i]. 0.18-Micron [HBd+99]. 0.9-micron + [Ano02d]. 000-fps [KII09]. 000-Processor $1 [Ano17-58, Ano17-59]. 12 [MAT 18]. 16 + + [ABG+16]. 2 [DTH+95]. 21=2 [Ste00a]. 28 [BSP 17]. 024-Core [JJK 11]. [KBN16]. 3 [ASX19, Alt14e, Ano96o, + AOYS95, BWMS19, CMAS11, DDG+19, 1 [Ano98s, BH15, Bre10, PFC 02a, Ste02a, + + Ste14a]. 1-GHz [Ano98s]. 1-terabits DFG 13, Joh19c, LXB07, LX10, MKT 13, + MAS+07, PMM15, PZB+19, SYW+14, [MIM 97]. 10 [Loc03]. 10-Gigabit SCSR93, VPV12, WLF+08, ZSS+19]. 60 [Gad07, HcF04]. 100 [TKI+14]. < [BMM15]. > [BMM15]. 2 [Kir84a, Pat84, PSW91, YSMH91, ZACM14]. [WHCK18]. 3 [KBW95]. II [BAH+05]. ∆ 100-Mops [PSW91]. 1000 [ES84]. 11- + [Lyl04]. 11/780 [Abr83]. 115 [JBF94]. [MKG 20]. k [Eng00j]. µ + [AT93, Dia95c, TS95]. N [YW94]. x 11FO4 [ASD 05]. 12 [And82a]. [DTB01, Dur96, SS05]. 12-DSP [Dur96]. 1284 [Dia94b]. 1284-1994 [Dia94b]. 13 * [CCD+82]. [KW02]. 1394 [SB00]. 1394-1955 [Dia96d]. 1 2 14 [WD03]. 15 [FD04]. 15-Billion-Dollar [KR19a]. -

IBM's Next Generation POWER Processor

IBM’s Next Generation POWER Processor

Hot Chips, August 18-20, 2019
Jeff Stuecheli, Scott Willenborg, William Starke

Proposed POWER Processor Technology and I/O Roadmap (focus of 2018 talk)

| | POWER7 | POWER7+ | POWER8 | POWER8 w/ NVLink | P9 SO | P9 SU | P9 AIO | P10 |
|---|---|---|---|---|---|---|---|---|
| Architecture | POWER7 | POWER7 | POWER8 | POWER8 | POWER9 | POWER9 | POWER9 | POWER10 |
| Year | 2010 | 2012 | 2014 | 2016 | 2017 | 2018 | 2020 | 2021 |
| Cores | 8 | 8 | 12 | 12 | 12/24 | 12/24 | 12/24 | TBA |
| Process | 45nm | 32nm | 22nm | 22nm | 14nm | 14nm | 14nm | |
| Sustained Memory Bandwidth (up to) | 65 GB/s | 65 GB/s | 210 GB/s | 210 GB/s | 150 GB/s | 210 GB/s | 650 GB/s | 800 GB/s |
| Standard I/O Interconnect | PCIe Gen2 | PCIe Gen2 | PCIe Gen3 | PCIe Gen3 | PCIe Gen4 x48 | PCIe Gen4 x48 | PCIe Gen4 x48 | PCIe Gen5 |
| Advanced I/O Signaling | N/A | N/A | N/A | 20 GT/s, 160GB/s | 25 GT/s, 300GB/s | 25 GT/s, 300GB/s | 25 GT/s, 300GB/s | 32 & 50 GT/s |
| Advanced I/O Architecture | N/A | N/A | CAPI 1.0 | CAPI 1.0, NVLink | CAPI 2.0, OpenCAPI3.0, NVLink | CAPI 2.0, OpenCAPI3.0, NVLink | CAPI 2.0, OpenCAPI4.0, NVLink | TBA |

Each column is also labeled "New Micro-Architecture" or "Enhanced Micro-Architecture" and, where applicable, "New Process Technology". Further labels on the slide: "With NVLink", "Direct attach memory", "Buffered Memory", "New Memory Subsystem".

© 2019 IBM Corporation. Statement of Direction, Subject to Change.

Proposed POWER Processor Technology and I/O Roadmap (focus of today’s talk)

POWER7 Architecture POWER8

Ilore: Discovering a Lineage of Microprocessors

iLORE: Discovering a Lineage of Microprocessors

Samuel Lewis Furman

Thesis submitted to the Faculty of the Virginia Polytechnic Institute and State University in partial fulfillment of the requirements for the degree of Master of Science in Computer Science & Applications

Kirk Cameron, Chair
Godmar Back
Margaret Ellis

May 24, 2021
Blacksburg, Virginia

Keywords: Computer history, systems, computer architecture, microprocessors

Copyright 2021, Samuel Lewis Furman

(ABSTRACT)

Researchers, benchmarking organizations, and hardware manufacturers maintain repositories of computer component and performance information. However, this data is split across many isolated sources and is stored in a form that is not conducive to analysis. A centralized repository of said data would arm stakeholders across industry and academia with a tool to more quantitatively understand the history of computing. We propose iLORE, a data model designed to represent intricate relationships between computer system benchmarks and computer components. We detail the methods we used to implement and populate the iLORE data model using data harvested from publicly available sources. Finally, we demonstrate the validity and utility of our iLORE implementation through an analysis of the characteristics and lineage of commercial microprocessors. We encourage the research community to interact with our data and visualizations at csgenome.org.

(GENERAL AUDIENCE ABSTRACT)

Researchers, benchmarking organizations, and hardware manufacturers maintain repositories of computer component and performance information. However, this data is split across many isolated sources and is stored in a form that is not conducive to analysis. A centralized repository of said data would arm stakeholders across industry and academia with a tool to more quantitatively understand the history of computing.

Introduction to the CINECA Marconi100 HPC System

Introduction to the CINECA Marconi100 HPC system

May 29, 2020
Isabella Baccarelli, [email protected]
SuperComputing Applications and Innovations (SCAI) – High Performance Computing Dept

Outline
● CINECA infrastructure and Marconi100
● System architecture (CPUs, GPUs, Memory, Interconnections)
● Software environment
● Programming environment
● Production environment (SLURM)
● Considerations and tips on the use of Marconi100
● Final remarks

CINECA Infrastructure

M100 Infrastructure: how to access

The access to M100 is granted to users with approved projects for this platform (Eurofusion, Prace, Iscra B and C, European HPC programs,…). Eurofusion community has 80 dedicated nodes of M100.

● New Users:
  ● Register on the User Portal UserDB userdb.hpc.cineca.it
  ● Get associated to an active project on M100:
    ● Principal Investigators (PIs): automatically associated if registered on UserDB (otherwise inform [email protected] once done)
    ● Collaborators: ask your PI to associate you to the project
  ● Fill the “HPC Access” section on UserDB
  ● Submit your request for the HPC Access (from UserDB)
  ● You will receive your credentials by e-mail in the next few working hours

(Note: the way to get access to the machine will change in the near future)

M100 Infrastructure: how to access

$ ssh -X [email protected]

Login banner (excerpt): Welcome to MARCONI100 Cluster / IBM Power AC922 (Whiterspoon) / Red Hat Enterprise; Access by public keys (with the ssh keys generated on a local and

Craig B. Agricola

Craig B. Agricola

Home: 3 Sydney Drive, Essex, VT 05452, (802) 662-1124, [email protected]
Work: 1000 River St, Essex Junction, VT 05452, (802) 769-8236, [email protected]

Profile

Seasoned engineer with experience in positions requiring deep knowledge of software, hardware, development infrastructures, and tools. Seeking challenges that will allow me to learn new tools and solve new types of problems. Co-author of two patents and two academic papers (one winning a "best paper" award).

Technical Proficiencies

C, C++, Perl, Java, Shell (Bash, Bourne, C), SQL, PostScript, Git, Various assembly languages, Verilog, PLI, Linux, Solaris, AIX, Windows, Mac OS

Experience

IBM

Advisory Development Engineer, IBM, Jun 2008 to Jul 2011 and Jun 2013 to Present
Responsible for verification of multiple accelerator engines for POWER7+ and POWER9 processors, functions including symmetric encryption, cryptographic hashing, dynamic memory compression, and GZIP compression.

Advisory Development Engineer, IBM, Jul 2011 to Jun 2013
Responsible for command generator and driver for verification of CAPI (Coherent Accelerator Processor Interface) unit on POWER8 processor.

Netronome Systems, Inc

Senior Staff Engineer, Netronome Systems, Inc., Mar 2008 to Jun 2008
Developed verification components in System Verilog using the Open Verification Methodology to be used in the verification of a network processor based on Intel's IXP28xx line of processors.

Intel

Component Design Engineer, Intel, Jan 2005 to Mar 2008
Developed infrastructure to support pre-silicon platform verification of Intel's Common System Interface (CSI) link technology in the inaugural Itanium microprocessor to use CSI and the supporting I/O Hub (IOH) chipset. As the microprocessor and the IOH chip were written in different design languages, the environment involved two separate simulation environments connected by a software backplane.