Thermodynamics and Statistical Mechanics

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Lab: Specific Heat

Lab: Specific Heat Summary Students relate thermal energy to heat capacity by comparing the heat capacities of different materials and graphing the change in temperature over time for a specific material. Students explore the idea of insulators and conductors in an attempt to apply their knowledge in real world applications, such as those that engineers would. Engineering Connection Engineers must understand the heat capacity of insulators and conductors if they wish to stay competitive in “green” enterprises. Engineers who design passive solar heating systems take advantage of the natural heat capacity characteristics of materials. In areas of cooler climates engineers chose building materials with a low heat capacity such as slate roofs in an attempt to heat the home during the day. In areas of warmer climates tile roofs are popular for their ability to store the sun's heat energy for an extended time and release it slowly after the sun sets, to prevent rapid temperature fluctuations. Engineers also consider heat capacity and thermal energy when they design food containers and household appliances. Just think about your aluminum soda can versus glass bottles! Grade Level: 9-12 Group Size: 4 Time Required: 90 minutes Activity Dependency :None Expendable Cost Per Group : US$ 5 The cost is less if thermometers are available for each group. NYS Science Standards: STANDARD 1 Analysis, Inquiry, and Design STANDARD 4 Performance indicator 2.2 Keywords: conductor, energy, energy storage, heat, specific heat capacity, insulator, thermal energy, thermometer, thermocouple Learning Objectives After this activity, students should be able to: Define heat capacity. Explain how heat capacity determines a material's ability to store thermal energy. -

Equilibrium Thermodynamics

Equilibrium Thermodynamics Instructor: - Clare Yu (e-mail [email protected], phone: 824-6216) - office hours: Wed from 2:30 pm to 3:30 pm in Rowland Hall 210E Textbooks: - primary: Herbert Callen “Thermodynamics and an Introduction to Thermostatistics” - secondary: Frederick Reif “Statistical and Thermal Physics” - secondary: Kittel and Kroemer “Thermal Physics” - secondary: Enrico Fermi “Thermodynamics” Grading: - weekly homework: 25% - discussion problems: 10% - midterm exam: 30% - final exam: 35% Equilibrium Thermodynamics Material Covered: Equilibrium thermodynamics, phase transitions, critical phenomena (~ 10 first chapters of Callen’s textbook) Homework: - Homework assignments posted on course website Exams: - One midterm, 80 minutes, Tuesday, May 8 - Final, 2 hours, Tuesday, June 12, 10:30 am - 12:20 pm - All exams are in this room 210M RH Course website is at http://eiffel.ps.uci.edu/cyu/p115B/class.html The Subject of Thermodynamics Thermodynamics describes average properties of macroscopic matter in equilibrium. - Macroscopic matter: large objects that consist of many atoms and molecules. - Average properties: properties (such as volume, pressure, temperature etc) that do not depend on the detailed positions and velocities of atoms and molecules of macroscopic matter. Such quantities are called thermodynamic coordinates, variables or parameters. - Equilibrium: state of a macroscopic system in which all average properties do not change with time. (System is not driven by external driving force.) Why Study Thermodynamics ? - Thermodynamics predicts that the average macroscopic properties of a system in equilibrium are not independent from each other. Therefore, if we measure a subset of these properties, we can calculate the rest of them using thermodynamic relations. - Thermodynamics not only gives the exact description of the state of equilibrium but also provides an approximate description (to a very high degree of precision!) of relatively slow processes. -

Lecture 4: 09.16.05 Temperature, Heat, and Entropy

3.012 Fundamentals of Materials Science Fall 2005 Lecture 4: 09.16.05 Temperature, heat, and entropy Today: LAST TIME .........................................................................................................................................................................................2� State functions ..............................................................................................................................................................................2� Path dependent variables: heat and work..................................................................................................................................2� DEFINING TEMPERATURE ...................................................................................................................................................................4� The zeroth law of thermodynamics .............................................................................................................................................4� The absolute temperature scale ..................................................................................................................................................5� CONSEQUENCES OF THE RELATION BETWEEN TEMPERATURE, HEAT, AND ENTROPY: HEAT CAPACITY .......................................6� The difference between heat and temperature ...........................................................................................................................6� Defining heat capacity.................................................................................................................................................................6� -

Einstein Solid 1 / 36 the Results 2

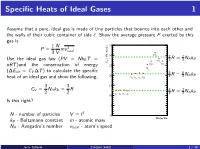

Specific Heats of Ideal Gases 1 Assume that a pure, ideal gas is made of tiny particles that bounce into each other and the walls of their cubic container of side `. Show the average pressure P exerted by this gas is 1 N 35 P = mv 2 3 V total SO 30 2 7 7 (J/K-mole) R = NAkB Use the ideal gas law (PV = NkB T = V 2 2 CO2 C H O CH nRT )and the conservation of energy 25 2 4 Cl2 (∆Eint = CV ∆T ) to calculate the specific 5 5 20 2 R = 2 NAkB heat of an ideal gas and show the following. H2 N2 O2 CO 3 3 15 CV = NAkB = R 3 3 R = NAkB 2 2 He Ar Ne Kr 2 2 10 Is this right? 5 3 N - number of particles V = ` 0 Molecule kB - Boltzmann constant m - atomic mass NA - Avogadro's number vtotal - atom's speed Jerry Gilfoyle Einstein Solid 1 / 36 The Results 2 1 N 2 2 N 7 P = mv = hEkini 2 NAkB 3 V total 3 V 35 30 SO2 3 (J/K-mole) V 5 CO2 C N k hEkini = NkB T H O CH 2 A B 2 25 2 4 Cl2 20 H N O CO 3 3 2 2 2 3 CV = NAkB = R 2 NAkB 2 2 15 He Ar Ne Kr 10 5 0 Molecule Jerry Gilfoyle Einstein Solid 2 / 36 Quantum mechanically 2 E qm = `(` + 1) ~ rot 2I where l is the angular momen- tum quantum number. -

Compression of an Ideal Gas with Temperature Dependent Specific

Session 3666 Compression of an Ideal Gas with Temperature-Dependent Specific Heat Capacities Donald W. Mueller, Jr., Hosni I. Abu-Mulaweh Engineering Department Indiana University–Purdue University Fort Wayne Fort Wayne, IN 46805-1499, USA Overview The compression of gas in a steady-state, steady-flow (SSSF) compressor is an important topic that is addressed in virtually all engineering thermodynamics courses. A typical situation encountered is when the gas inlet conditions, temperature and pressure, are known and the compressor dis- charge pressure is specified. The traditional instructional approach is to make certain assumptions about the compression process and about the gas itself. For example, a special case often consid- ered is that the compression process is reversible and adiabatic, i.e. isentropic. The gas is usually ÊÌ considered to be ideal, i.e. ÈÚ applies, with either constant or temperature-dependent spe- cific heat capacities. The constant specific heat capacity assumption allows for direct computation of the discharge temperature, while the temperature-dependent specific heat assumption does not. In this paper, a MATLAB computer model of the compression process is presented. The substance being compressed is air which is considered to be an ideal gas with temperature-dependent specific heat capacities. Two approaches are presented to describe the temperature-dependent properties of air. The first approach is to use the ideal gas table data from Ref. [1] with a look-up interpolation scheme. The second approach is to use a curve fit with the NASA Lewis coefficients [2,3]. The computer model developed makes use of a bracketing–bisection algorithm [4] to determine the temperature of the air after compression. -

![Arxiv:1509.06955V1 [Physics.Optics]](https://docslib.b-cdn.net/cover/4098/arxiv-1509-06955v1-physics-optics-274098.webp)

Arxiv:1509.06955V1 [Physics.Optics]

Statistical mechanics models for multimode lasers and random lasers F. Antenucci 1,2, A. Crisanti2,3, M. Ib´a˜nez Berganza2,4, A. Marruzzo1,2, L. Leuzzi1,2∗ 1 NANOTEC-CNR, Institute of Nanotechnology, Soft and Living Matter Lab, Rome, Piazzale A. Moro 2, I-00185, Roma, Italy 2 Dipartimento di Fisica, Universit`adi Roma “Sapienza,” Piazzale A. Moro 2, I-00185, Roma, Italy 3 ISC-CNR, UOS Sapienza, Piazzale A. Moro 2, I-00185, Roma, Italy 4 INFN, Gruppo Collegato di Parma, via G.P. Usberti, 7/A - 43124, Parma, Italy We review recent statistical mechanical approaches to multimode laser theory. The theory has proved very effective to describe standard lasers. We refer of the mean field theory for passive mode locking and developments based on Monte Carlo simulations and cavity method to study the role of the frequency matching condition. The status for a complete theory of multimode lasing in open and disordered cavities is discussed and the derivation of the general statistical models in this framework is presented. When light is propagating in a disordered medium, the system can be analyzed via the replica method. For high degrees of disorder and nonlinearity, a glassy behavior is expected at the lasing threshold, providing a suggestive link between glasses and photonics. We describe in details the results for the general Hamiltonian model in mean field approximation and mention an available test for replica symmetry breaking from intensity spectra measurements. Finally, we summary some perspectives still opened for such approaches. The idea that the lasing threshold can be understood as a proper thermodynamic transition goes back since the early development of laser theory and non-linear optics in the 1970s, in particular in connection with modulation instability (see, e.g.,1 and the review2). -

Statistics of a Free Single Quantum Particle at a Finite

STATISTICS OF A FREE SINGLE QUANTUM PARTICLE AT A FINITE TEMPERATURE JIAN-PING PENG Department of Physics, Shanghai Jiao Tong University, Shanghai 200240, China Abstract We present a model to study the statistics of a single structureless quantum particle freely moving in a space at a finite temperature. It is shown that the quantum particle feels the temperature and can exchange energy with its environment in the form of heat transfer. The underlying mechanism is diffraction at the edge of the wave front of its matter wave. Expressions of energy and entropy of the particle are obtained for the irreversible process. Keywords: Quantum particle at a finite temperature, Thermodynamics of a single quantum particle PACS: 05.30.-d, 02.70.Rr 1 Quantum mechanics is the theoretical framework that describes phenomena on the microscopic level and is exact at zero temperature. The fundamental statistical character in quantum mechanics, due to the Heisenberg uncertainty relation, is unrelated to temperature. On the other hand, temperature is generally believed to have no microscopic meaning and can only be conceived at the macroscopic level. For instance, one can define the energy of a single quantum particle, but one can not ascribe a temperature to it. However, it is physically meaningful to place a single quantum particle in a box or let it move in a space where temperature is well-defined. This raises the well-known question: How a single quantum particle feels the temperature and what is the consequence? The question is particular important and interesting, since experimental techniques in recent years have improved to such an extent that direct measurement of electron dynamics is possible.1,2,3 It should also closely related to the question on the applicability of the thermodynamics to small systems on the nanometer scale.4 We present here a model to study the behavior of a structureless quantum particle moving freely in a space at a nonzero temperature. -

![Arxiv:1910.10745V1 [Cond-Mat.Str-El] 23 Oct 2019 2.2 Symmetry-Protected Time Crystals](https://docslib.b-cdn.net/cover/4942/arxiv-1910-10745v1-cond-mat-str-el-23-oct-2019-2-2-symmetry-protected-time-crystals-304942.webp)

Arxiv:1910.10745V1 [Cond-Mat.Str-El] 23 Oct 2019 2.2 Symmetry-Protected Time Crystals

A Brief History of Time Crystals Vedika Khemania,b,∗, Roderich Moessnerc, S. L. Sondhid aDepartment of Physics, Harvard University, Cambridge, Massachusetts 02138, USA bDepartment of Physics, Stanford University, Stanford, California 94305, USA cMax-Planck-Institut f¨urPhysik komplexer Systeme, 01187 Dresden, Germany dDepartment of Physics, Princeton University, Princeton, New Jersey 08544, USA Abstract The idea of breaking time-translation symmetry has fascinated humanity at least since ancient proposals of the per- petuum mobile. Unlike the breaking of other symmetries, such as spatial translation in a crystal or spin rotation in a magnet, time translation symmetry breaking (TTSB) has been tantalisingly elusive. We review this history up to recent developments which have shown that discrete TTSB does takes place in periodically driven (Floquet) systems in the presence of many-body localization (MBL). Such Floquet time-crystals represent a new paradigm in quantum statistical mechanics — that of an intrinsically out-of-equilibrium many-body phase of matter with no equilibrium counterpart. We include a compendium of the necessary background on the statistical mechanics of phase structure in many- body systems, before specializing to a detailed discussion of the nature, and diagnostics, of TTSB. In particular, we provide precise definitions that formalize the notion of a time-crystal as a stable, macroscopic, conservative clock — explaining both the need for a many-body system in the infinite volume limit, and for a lack of net energy absorption or dissipation. Our discussion emphasizes that TTSB in a time-crystal is accompanied by the breaking of a spatial symmetry — so that time-crystals exhibit a novel form of spatiotemporal order. -

Conclusion: the Impact of Statistical Physics

Conclusion: The Impact of Statistical Physics Applications of Statistical Physics The different examples which we have treated in the present book have shown the role played by statistical mechanics in other branches of physics: as a theory of matter in its various forms it serves as a bridge between macroscopic physics, which is more descriptive and experimental, and microscopic physics, which aims at finding the principles on which our understanding of Nature is based. This bridge can be crossed fruitfully in both directions, to explain or predict macroscopic properties from our knowledge of microphysics, and inversely to consolidate the microscopic principles by exploring their more or less distant macroscopic consequences which may be checked experimentally. The use of statistical methods for deductive purposes becomes unavoidable as soon as the systems or the effects studied are no longer elementary. Statistical physics is a tool of common use in the other natural sciences. Astrophysics resorts to it for describing the various forms of matter existing in the Universe where densities are either too high or too low, and tempera tures too high, to enable us to carry out the appropriate laboratory studies. Chemical kinetics as well as chemical equilibria depend in an essential way on it. We have also seen that this makes it possible to calculate the mechan ical properties of fluids or of solids; these properties are postulated in the mechanics of continuous media. Even biophysics has recourse to it when it treats general properties or poorly organized systems. If we turn to the domain of practical applications it is clear that the technology of materials, a science aiming to develop substances which have definite mechanical (metallurgy, plastics, lubricants), chemical (corrosion), thermal (conduction or insulation), electrical (resistance, superconductivity), or optical (display by liquid crystals) properties, has a great interest in statis tical physics. -

Otto Sackur's Pioneering Exploits in the Quantum Theory Of

View metadata, citation and similar papers at core.ac.uk brought to you by CORE provided by Catalogo dei prodotti della ricerca Chapter 3 Putting the Quantum to Work: Otto Sackur’s Pioneering Exploits in the Quantum Theory of Gases Massimiliano Badino and Bretislav Friedrich After its appearance in the context of radiation theory, the quantum hypothesis rapidly diffused into other fields. By 1910, the crisis of classical traditions of physics and chemistry—while taking the quantum into account—became increas- ingly evident. The First Solvay Conference in 1911 pushed quantum theory to the fore, and many leading physicists responded by embracing the quantum hypoth- esis as a way to solve outstanding problems in the theory of matter. Until about 1910, quantum physics had drawn much of its inspiration from two sources. The first was the complex formal machinery connected with Max Planck’s theory of radiation and, above all, its close relationship with probabilis- tic arguments and statistical mechanics. The fledgling 1900–1901 version of this theory hinged on the application of Ludwig Boltzmann’s 1877 combinatorial pro- cedure to determine the state of maximum probability for a set of oscillators. In his 1906 book on heat radiation, Planck made the connection with gas theory even tighter. To illustrate the use of the procedure Boltzmann originally developed for an ideal gas, Planck showed how to extend the analysis of the phase space, com- monplace among practitioners of statistical mechanics, to electromagnetic oscil- lators (Planck 1906, 140–148). In doing so, Planck identified a crucial difference between the phase space of the gas molecules and that of oscillators used in quan- tum theory. -

Applied Physics

Physics 1. What is Physics 2. Nature Science 3. Science 4. Scientific Method 5. Branches of Science 6. Branches of Physics Einstein Newton 7. Branches of Applied Physics Bardeen Feynmann 2016-03-05 What is Physics • Meaning of Physics (from Ancient Greek: φυσική (phusikḗ )) is knowledge of nature (from φύσις phúsis "nature"). • Physics is the natural science that involves the study of matter and its motion through space and time, along with related concepts such as energy and force. More broadly, it is the general analysis of nature, conducted in order to understand how the universe behaves. • Physics is one of the oldest academic disciplines, perhaps the oldest through its inclusion of astronomy. Over the last two millennia, physics was a part of natural philosophy along with chemistry, certain branches of mathematics, and biology, but during the scientific revolution in the 17th century, the natural sciences emerged as unique research programs in their own right. Physics intersects with many interdisciplinary areas of research, such as biophysics and quantum chemistry, and the boundaries of physics are not rigidly defined. • New ideas in physics often explain the fundamental mechanisms of other sciences, while opening new avenues of research in areas such as mathematics and philosophy. 2016-03-05 What is Physics • Physics also makes significant contributions through advances in new technologies that arise from theoretical breakthroughs. • For example, advances in the understanding of electromagnetism or nuclear physics led directly to the development of new products that have dramatically transformed modern-day society, such as television, computers, domestic appliances, and nuclear weapons; advances in thermodynamics led to the development of industrialization, and advances in mechanics inspired the development of calculus. -

Physics 357 – Thermal Physics - Spring 2005

Physics 357 – Thermal Physics - Spring 2005 Contact Info MTTH 9-9:50. Science 255 Doug Juers, Science 244, 527-5229, [email protected] Office Hours: Mon 10-noon, Wed 9-10 and by appointment or whenever I’m around. Description Thermal physics involves the study of systems with lots (1023) of particles. Since only a two-body problem can be solved analytically, you can imagine we will look at these systems somewhat differently from simple systems in mechanics. Rather than try to predict individual trajectories of individual particles we will consider things on a larger scale. Probability and statistics will play an important role in thinking about what can happen. There are two general methods of dealing with such systems – a top down approach and a bottom up approach. In the top down approach (thermodynamics) one doesn’t even really consider that there are particles there, and instead thinks about things in terms of quantities like temperature heat, energy, work, entropy and enthalpy. In the bottom up approach (statistical mechanics) one considers in detail the interactions between individual particles. Building up from there yields impressive predictions of bulk properties of different materials. In this course we will cover both thermodynamics and statistical mechanics. The first part of the term will emphasize the former, while the second part of the term will emphasize the latter. Both topics have important applications in many fields, including solid-state physics, fluids, geology, chemistry, biology, astrophysics, cosmology. The great thing about thermal physics is that in large part it’s about trying to deal with reality.