Newsql Principles, Systems and Current Trends

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Smart Methods for Retrieving Physiological Data in Physiqual

University of Groningen Bachelor thesis Smart methods for retrieving physiological data in Physiqual Marc Babtist & Sebastian Wehkamp Supervisors: Frank Blaauw & Marco Aiello July, 2016 Contents 1 Introduction 4 1.1 Physiqual . 4 1.2 Problem description . 4 1.3 Research questions . 5 1.4 Document structure . 5 2 Related work 5 2.1 IJkdijk project . 5 2.2 MUMPS . 6 3 Background 7 3.1 Scalability . 7 3.2 CAP theorem . 7 3.3 Reliability models . 7 4 Requirements 8 4.1 Scalability . 8 4.2 Reliability . 9 4.3 Performance . 9 4.4 Open source . 9 5 General database options 9 5.1 Relational or Non-relational . 9 5.2 NoSQL categories . 10 5.2.1 Key-value Stores . 10 5.2.2 Document Stores . 11 5.2.3 Column Family Stores . 11 5.2.4 Graph Stores . 12 5.3 When to use which category . 12 5.4 Conclusion . 12 5.4.1 Key-Value . 13 5.4.2 Document stores . 13 5.4.3 Column family stores . 13 5.4.4 Graph Stores . 13 6 Specific database options 13 6.1 Key-value stores: Riak . 14 6.2 Document stores: MongoDB . 14 6.3 Column Family stores: Cassandra . 14 6.4 Performance comparison . 15 6.4.1 Sensor Data Storage Performance . 16 6.4.2 Conclusion . 16 7 Data model 17 7.1 Modeling rules . 17 7.2 Data model Design . 18 2 7.3 Summary . 18 8 Architecture 20 8.1 Basic layout . 20 8.2 Sidekiq . 21 9 Design decisions 21 9.1 Keyspaces . 21 9.2 Gap determination . -

Big Data Velocity in Plain English

Big Data Velocity in Plain English John Ryan Data Warehouse Solution Architect Table of Contents The Requirement . 1 What’s the Problem? . .. 2 Components Needed . 3 Data Capture . 3 Transformation . 3 Storage and Analytics . 4 The Traditional Solution . 6 The NewSQL Based Solution . 7 NewSQL Advantage . 9 Thank You. 10 About the Author . 10 ii The Requirement The assumed requirement is the ability to capture, transform and analyse data at potentially massive velocity in real time. This involves capturing data from millions of customers or electronic sensors, and transforming and storing the results for real time analysis on dashboards. The solution must minimise latency — the delay between a real world event and it’s impact upon a dashboard, to under a second. Typical applications include: • Monitoring Machine Sensors: Using embedded sensors in industrial machines or vehicles — typically referred to as The Internet of Things (IoT) . For example Progressive Insurance use real time speed and vehicle braking data to help classify accident risk and deliver appropriate discounts. Similar technology is used by logistics giant FedEx which uses SenseAware technology to provide near real-time parcel tracking. • Fraud Detection: To assess the risk of credit card fraud prior to authorising or declining the transaction. This can be based upon a simple report of a lost or stolen card, or more likely, an analysis of aggregate spending behaviour, aligned with machine learning techniques. • Clickstream Analysis: Producing real time analysis of user web site clicks to dynamically deliver pages, recommended products or services, or deliver individually targeted advertising. Big Data: Velocity in Plain English eBook 1 What’s the Problem? The primary challenge for real time systems architects is the potentially massive throughput required which could exceed a million transactions per second. -

Let's Talk About Storage & Recovery Methods for Non-Volatile Memory

Let’s Talk About Storage & Recovery Methods for Non-Volatile Memory Database Systems Joy Arulraj Andrew Pavlo Subramanya R. Dulloor [email protected] [email protected] [email protected] Carnegie Mellon University Carnegie Mellon University Intel Labs ABSTRACT of power, the DBMS must write that data to a non-volatile device, The advent of non-volatile memory (NVM) will fundamentally such as a SSD or HDD. Such devices only support slow, bulk data change the dichotomy between memory and durable storage in transfers as blocks. Contrast this with volatile DRAM, where a database management systems (DBMSs). These new NVM devices DBMS can quickly read and write a single byte from these devices, are almost as fast as DRAM, but all writes to it are potentially but all data is lost once power is lost. persistent even after power loss. Existing DBMSs are unable to take In addition, there are inherent physical limitations that prevent full advantage of this technology because their internal architectures DRAM from scaling to capacities beyond today’s levels [46]. Using are predicated on the assumption that memory is volatile. With a large amount of DRAM also consumes a lot of energy since it NVM, many of the components of legacy DBMSs are unnecessary requires periodic refreshing to preserve data even if it is not actively and will degrade the performance of data intensive applications. used. Studies have shown that DRAM consumes about 40% of the To better understand these issues, we implemented three engines overall power consumed by a server [42]. in a modular DBMS testbed that are based on different storage Although flash-based SSDs have better storage capacities and use management architectures: (1) in-place updates, (2) copy-on-write less energy than DRAM, they have other issues that make them less updates, and (3) log-structured updates. -

(DDL) Reference Manual

Data Definition Language (DDL) Reference Manual Abstract This publication describes the DDL language syntax and the DDL dictionary database. The audience includes application programmers and database administrators. Product Version DDL D40 DDL H01 Supported Release Version Updates (RVUs) This publication supports J06.03 and all subsequent J-series RVUs, H06.03 and all subsequent H-series RVUs, and G06.26 and all subsequent G-series RVUs, until otherwise indicated by its replacement publications. Part Number Published 529431-003 May 2010 Document History Part Number Product Version Published 529431-002 DDL D40, DDL H01 July 2005 529431-003 DDL D40, DDL H01 May 2010 Legal Notices Copyright 2010 Hewlett-Packard Development Company L.P. Confidential computer software. Valid license from HP required for possession, use or copying. Consistent with FAR 12.211 and 12.212, Commercial Computer Software, Computer Software Documentation, and Technical Data for Commercial Items are licensed to the U.S. Government under vendor's standard commercial license. The information contained herein is subject to change without notice. The only warranties for HP products and services are set forth in the express warranty statements accompanying such products and services. Nothing herein should be construed as constituting an additional warranty. HP shall not be liable for technical or editorial errors or omissions contained herein. Export of the information contained in this publication may require authorization from the U.S. Department of Commerce. Microsoft, Windows, and Windows NT are U.S. registered trademarks of Microsoft Corporation. Intel, Itanium, Pentium, and Celeron are trademarks or registered trademarks of Intel Corporation or its subsidiaries in the United States and other countries. -

Failures in DBMS

Chapter 11 Database Recovery 1 Failures in DBMS Two common kinds of failures StSystem filfailure (t)(e.g. power outage) ‒ affects all transactions currently in progress but does not physically damage the data (soft crash) Media failures (e.g. Head crash on the disk) ‒ damagg()e to the database (hard crash) ‒ need backup data Recoveryyp scheme responsible for handling failures and restoring database to consistent state 2 Recovery Recovering the database itself Recovery algorithm has two parts ‒ Actions taken during normal operation to ensure system can recover from failure (e.g., backup, log file) ‒ Actions taken after a failure to restore database to consistent state We will discuss (briefly) ‒ Transactions/Transaction recovery ‒ System Recovery 3 Transactions A database is updated by processing transactions that result in changes to one or more records. A user’s program may carry out many operations on the data retrieved from the database, but the DBMS is only concerned with data read/written from/to the database. The DBMS’s abstract view of a user program is a sequence of transactions (reads and writes). To understand database recovery, we must first understand the concept of transaction integrity. 4 Transactions A transaction is considered a logical unit of work ‒ START Statement: BEGIN TRANSACTION ‒ END Statement: COMMIT ‒ Execution errors: ROLLBACK Assume we want to transfer $100 from one bank (A) account to another (B): UPDATE Account_A SET Balance= Balance -100; UPDATE Account_B SET Balance= Balance +100; We want these two operations to appear as a single atomic action 5 Transactions We want these two operations to appear as a single atomic action ‒ To avoid inconsistent states of the database in-between the two updates ‒ And obviously we cannot allow the first UPDATE to be executed and the second not or vice versa. -

Oracle Vs. Nosql Vs. Newsql Comparing Database Technology

Oracle vs. NoSQL vs. NewSQL Comparing Database Technology John Ryan Senior Solution Architect, Snowflake Computing Table of Contents The World has Changed . 1 What’s Changed? . 2 What’s the Problem? . .. 3 Performance vs. Availability and Durability . 3 Consistecy vs. Availability . 4 Flexibility vs . Scalability . 5 ACID vs. Eventual Consistency . 6 The OLTP Database Reimagined . 7 Achieving the Impossible! . .. 8 NewSQL Database Technology . 9 VoltDB . 10 MemSQL . 11 Which Applications Need NewSQL Technology? . 12 Conclusion . 13 About the Author . 13 ii The World has Changed The world has changed massively in the past 20 years. Back in the year 2000, a few million users connected to the web using a 56k modem attached to a PC, and Amazon only sold books. Now billions of people are using to their smartphone or tablet 24x7 to buy just about everything, and they’re interacting with Facebook, Twitter and Instagram. The pace has been unstoppable . Expectations have also changed. If a web page doesn’t refresh within seconds we’re quickly frustrated, and go elsewhere. If a web site is down, we fear it’s the end of civilisation as we know it. If a major site is down, it makes global headlines. Instant gratification takes too long! — Ladawn Clare-Panton Aside: If you’re not a seasoned Database Architect, you may want to start with my previous articles on Scalability and Database Architecture. Oracle vs. NoSQL vs. NewSQL eBook 1 What’s Changed? The above leads to a few observations: • Scalability — With potentially explosive traffic growth, IT systems need to quickly grow to meet exponential numbers of transactions • High Availability — IT systems must run 24x7, and be resilient to failure. -

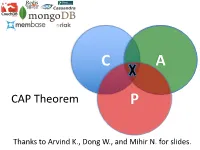

CAP Theorem P

C A CAP Theorem P Thanks to Arvind K., Dong W., and Mihir N. for slides. CAP Theorem • “It is impossible for a web service to provide these three guarantees at the same time (pick 2 of 3): • (Sequential) Consistency • Availability • Partition-tolerance” • Conjectured by Eric Brewer in ’00 • Proved by Gilbert and Lynch in ’02 • But with definitions that do not match what you’d assume (or Brewer meant) • Influenced the NoSQL mania • Highly controversial: “the CAP theorem encourages engineers to make awful decisions.” – Stonebraker • Many misinterpretations 2 CAP Theorem • Consistency: – Sequential consistency (a data item behaves as if there is one copy) • Availability: – Node failures do not prevent survivors from continuing to operate • Partition-tolerance: – The system continues to operate despite network partitions • CAP says that “A distributed system can satisfy any two of these guarantees at the same time but not all three” 3 C in CAP != C in ACID • They are different! • CAP’s C(onsistency) = sequential consistency – Similar to ACID’s A(tomicity) = Visibility to all future operations • ACID’s C(onsistency) = Does the data satisfy schema constraints 4 Sequential consistency • Makes it appear as if there is one copy of the object • Strict ordering on ops from same client • A single linear ordering across client ops – If client a executes operations {a1, a2, a3, ...}, client b executes operations {b1, b2, b3, ...} – Then, globally, clients observe some serialized version of the sequence • e.g., {a1, b1, b2, a2, ...} (or whatever) Notice -

What Is Nosql? the Only Thing That All Nosql Solutions Providers Generally Agree on Is That the Term “Nosql” Isn’T Perfect, but It Is Catchy

NoSQL GREG SYSADMINBURD Greg Burd is a Developer Choosing between databases used to boil down to examining the differences Advocate for Basho between the available commercial and open source relational databases . The term Technologies, makers of Riak. “database” had become synonymous with SQL, and for a while not much else came Before Basho, Greg spent close to being a viable solution for data storage . But recently there has been a shift nearly ten years as the product manager for in the database landscape . When considering options for data storage, there is a Berkeley DB at Sleepycat Software and then new game in town: NoSQL databases . In this article I’ll introduce this new cat- at Oracle. Previously, Greg worked for NeXT egory of databases, examine where they came from and what they are good for, and Computer, Sun Microsystems, and KnowNow. help you understand whether you, too, should be considering a NoSQL solution in Greg has long been an avid supporter of open place of, or in addition to, your RDBMS database . source software. [email protected] What Is NoSQL? The only thing that all NoSQL solutions providers generally agree on is that the term “NoSQL” isn’t perfect, but it is catchy . Most agree that the “no” stands for “not only”—an admission that the goal is not to reject SQL but, rather, to compensate for the technical limitations shared by the majority of relational database implemen- tations . In fact, NoSQL is more a rejection of a particular software and hardware architecture for databases than of any single technology, language, or product . -

Oracle Nosql Database

An Oracle White Paper November 2012 Oracle NoSQL Database Oracle NoSQL Database Table of Contents Introduction ........................................................................................ 2 Technical Overview ............................................................................ 4 Data Model ..................................................................................... 4 API ................................................................................................. 5 Create, Remove, Update, and Delete..................................................... 5 Iteration ................................................................................................... 6 Bulk Operation API ................................................................................. 7 Administration .................................................................................... 7 Architecture ........................................................................................ 8 Implementation ................................................................................... 9 Storage Nodes ............................................................................... 9 Client Driver ................................................................................. 10 Performance ..................................................................................... 11 Conclusion ....................................................................................... 12 1 Oracle NoSQL Database Introduction NoSQL databases -

PS Non-Standard Database Systems Overview

PS Non-Standard Database Systems Overview Daniel Kocher March 2019 is document gives a brief overview on three categories of non-standard database systems (DBS) in the context of this class. For each category, we provide you with a motivation, a discussion about the key properties, a list of commonly used implementations (proprietary and open-source/freely-available), and recommendation(s) for your project. For your convenience, we also recap some relevant terms (e.g., ACID, OLTP, ...). Terminology OLTP Online transaction processing. OLTP systems are highly optimized to process (1) short queries (operating only on a small portion of the database) and (2) small transac- tions (inserting/updating few tuples per relation). Typically, OLTP includes insertions, updates, and deletions. e main focus of OLTP systems is on low response time and high throughput (in terms of transactions per second). Databases in OLTP systems are highly normalized [5]. OLAP Online analytical processing. OLAP systems analyze complex data (usually from a data warehouse). Typically, queries in an OLAP system may run for a long time (as opposed to queries in OLTP systems) and the number of transactions is usually low. Fur- thermore, typical OLAP queries are read-only and touch a large portion of the database (e.g., scan or join large tables). e main focus of OLAP systems is on low response time. Databases in OLAP systems are oen de-normalized intentionally [5]. ACID Traditional relational database systems guarantee ACID properties: – Atomicity All or no operation(s) of a transaction are reected in the database. – Consistency Isolated execution of a transaction preserves consistency of the database. -

Nosql Databases: Yearning for Disambiguation

NOSQL DATABASES: YEARNING FOR DISAMBIGUATION Chaimae Asaad Alqualsadi, Rabat IT Center, ENSIAS, University Mohammed V in Rabat and TicLab, International University of Rabat, Morocco [email protected] Karim Baïna Alqualsadi, Rabat IT Center, ENSIAS, University Mohammed V in Rabat, Morocco [email protected] Mounir Ghogho TicLab, International University of Rabat Morocco [email protected] March 17, 2020 ABSTRACT The demanding requirements of the new Big Data intensive era raised the need for flexible storage systems capable of handling huge volumes of unstructured data and of tackling the challenges that arXiv:2003.04074v2 [cs.DB] 16 Mar 2020 traditional databases were facing. NoSQL Databases, in their heterogeneity, are a powerful and diverse set of databases tailored to specific industrial and business needs. However, the lack of the- oretical background creates a lack of consensus even among experts about many NoSQL concepts, leading to ambiguity and confusion. In this paper, we present a survey of NoSQL databases and their classification by data model type. We also conduct a benchmark in order to compare different NoSQL databases and distinguish their characteristics. Additionally, we present the major areas of ambiguity and confusion around NoSQL databases and their related concepts, and attempt to disambiguate them. Keywords NoSQL Databases · NoSQL data models · NoSQL characteristics · NoSQL Classification A PREPRINT -MARCH 17, 2020 1 Introduction The proliferation of data sources ranging from social media and Internet of Things (IoT) to industrially generated data (e.g. transactions) has led to a growing demand for data intensive cloud based applications and has created new challenges for big-data-era databases. -

IBM Cloudant: Database As a Service Fundamentals

Front cover IBM Cloudant: Database as a Service Fundamentals Understand the basics of NoSQL document data stores Access and work with the Cloudant API Work programmatically with Cloudant data Christopher Bienko Marina Greenstein Stephen E Holt Richard T Phillips ibm.com/redbooks Redpaper Contents Notices . 5 Trademarks . 6 Preface . 7 Authors. 7 Now you can become a published author, too! . 8 Comments welcome. 8 Stay connected to IBM Redbooks . 9 Chapter 1. Survey of the database landscape . 1 1.1 The fundamentals and evolution of relational databases . 2 1.1.1 The relational model . 2 1.1.2 The CAP Theorem . 4 1.2 The NoSQL paradigm . 5 1.2.1 ACID versus BASE systems . 7 1.2.2 What is NoSQL? . 8 1.2.3 NoSQL database landscape . 9 1.2.4 JSON and schema-less model . 11 Chapter 2. Build more, grow more, and sleep more with IBM Cloudant . 13 2.1 Business value . 15 2.2 Solution overview . 16 2.3 Solution architecture . 18 2.4 Usage scenarios . 20 2.5 Intuitively interact with data using Cloudant Dashboard . 21 2.5.1 Editing JSON documents using Cloudant Dashboard . 22 2.5.2 Configuring access permissions and replication jobs within Cloudant Dashboard. 24 2.6 A continuum of services working together on cloud . 25 2.6.1 Provisioning an analytics warehouse with IBM dashDB . 26 2.6.2 Data refinement services on-premises. 30 2.6.3 Data refinement services on the cloud. 30 2.6.4 Hybrid clouds: Data refinement across on/off–premises. 31 Chapter 3.