Recursive-Descent Parsing and Code Generation

Recommended publications

-

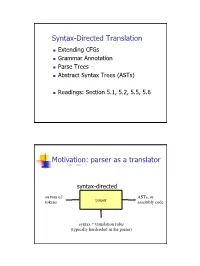

Syntax-Directed Translation, Parse Trees, Abstract Syntax Trees

Syntax-Directed Translation. Topics: extending CFGs, grammar annotation, parse trees, abstract syntax trees (ASTs). Readings: Sections 5.1, 5.2, 5.5, 5.6.

Motivation: the parser as a translator. [Diagram: stream of tokens → parser → ASTs, or assembly code; the parser is driven by syntax + translation rules (typically hardcoded in the parser).]

Mechanism of syntax-directed translation: syntax-directed translation is done by extending the CFG; a translation rule is defined for each production. Given X → d A B c, the translation of X is defined in terms of:
• the translations of nonterminals A, B
• the values of attributes of terminals d, c
• constants

To translate an input string: 1. Build the parse tree. 2. Working bottom-up, use the translation rules to compute the translation of each nonterminal in the tree. Result: the translation of the string is the translation of the parse tree's root nonterminal. Why bottom-up? A nonterminal's value may depend on the values of the symbols on the right-hand side, so translate a nonterminal node only after its children's translations are available.

Example 1: arithmetic expression to its value. CFG productions with their translation rules:
E → E + T      E1.trans = E2.trans + T.trans
E → T          E.trans = T.trans
T → T * F      T1.trans = T2.trans * F.trans
T → F          T.trans = F.trans
F → int        F.trans = int.value
F → ( E )      F.trans = E.trans

Example 1 (cont.): Input: 2 * (4 + 5). [Figure: annotated parse tree for the input, with root E (18).]

Example 2: compute the type of an expression.
E → E + E      if (E2.trans == INT) and (E3.trans == INT) then E1.trans = INT else E1.trans = ERROR
E → E and E    if (E2.trans == BOOL) and (E3.trans == BOOL) then E1.trans = BOOL else E1.trans = ERROR
E → E == E     if (E2.trans == E3.trans) and (E2.trans != ERROR) then E1.trans = BOOL else E1.trans = ERROR
E → true       E.trans = BOOL
E → false      E.trans = BOOL
E → int        E.trans = INT
E → ( E )      E1.trans = E2.trans

Example 2 (cont.): Input: (2 + 2) == 4 1.

Derivatives of Parsing Expression Grammars

Derivatives of Parsing Expression Grammars. Aaron Moss, Cheriton School of Computer Science, University of Waterloo, Waterloo, Ontario, Canada.

This paper introduces a new derivative parsing algorithm for recognition of parsing expression grammars. Derivative parsing is shown to have a polynomial worst-case time bound, an improvement on the exponential bound of the recursive descent algorithm. This work also introduces asymptotic analysis based on inputs with a constant bound on both grammar nesting depth and number of backtracking choices; derivative and recursive descent parsing are shown to run in linear time and constant space on this useful class of inputs, with both the theoretical bounds and the reasonability of the input class validated empirically. This common-case constant memory usage of derivative parsing is an improvement on the linear space required by the packrat algorithm.

1 Introduction. Parsing expression grammars (PEGs) are a parsing formalism introduced by Ford [6]. Any LR(k) language can be represented as a PEG [7], but there are some non-context-free languages that may also be represented as PEGs (e.g. a^n b^n c^n [7]). Unlike context-free grammars (CFGs), PEGs are unambiguous, admitting no more than one parse tree for any grammar and input. PEGs are a formalization of recursive descent parsers allowing limited backtracking and infinite lookahead; a string in the language of a PEG can be recognized in exponential time and linear space using a recursive descent algorithm, or linear time and space using the memoized packrat algorithm [6]. PEGs are formally defined and these algorithms outlined in Section 3.

A Typed, Algebraic Approach to Parsing

A Typed, Algebraic Approach to Parsing. Neelakantan R. Krishnaswami (University of Cambridge, United Kingdom) and Jeremy Yallop (University of Cambridge, United Kingdom).

Abstract: In this paper, we recall the definition of the context-free expressions (or µ-regular expressions), an algebraic presentation of the context-free languages. Then, we define a core type system for the context-free expressions which gives a compositional criterion for identifying those context-free expressions which can be parsed unambiguously by predictive algorithms in the style of recursive descent or LL(1). Next, we show how these typed grammar expressions can be used to derive a parser combinator library which both guarantees linear-time parsing with no backtracking and single-token lookahead, and which respects the natural denotational semantics of context-free expressions. Finally, we show how to exploit the type information to write a staged version of this library, which produces dramatic increases in performance, even outperforming code generated by the standard parser generator tool ocamlyacc.

[Introduction, excerpt:] … the subject have remained remarkably stable: context-free languages are specified in Backus-Naur form, and parsers for these specifications are implemented using algorithms derived from automata theory. This integration of theory and practice has yielded many benefits: we have linear-time algorithms for parsing unambiguous grammars efficiently [Knuth 1965; Lewis and Stearns 1968] and with excellent error reporting for bad input strings [Jeffery 2003]. However, in languages with good support for higher-order functions (such as ML, Haskell, and Scala) it is very popular to use parser combinators [Hutton 1992] instead. These libraries typically build backtracking recursive descent parsers by composing higher-order functions. This allows building up parsers with the ordinary abstraction features of the programming language (variables, data structures and functions), which is sufficiently beneficial that these libraries are …

Adaptive LL(*) Parsing: the Power of Dynamic Analysis

Adaptive LL(*) Parsing: The Power of Dynamic Analysis. Terence Parr (University of San Francisco), Sam Harwell (University of Texas at Austin), Kathleen Fisher (Tufts University).

Abstract: Despite the advances made by modern parsing strategies such as PEG, LL(*), GLR, and GLL, parsing is not a solved problem. Existing approaches suffer from a number of weaknesses, including difficulties supporting side-effecting embedded actions, slow and/or unpredictable performance, and counter-intuitive matching strategies. This paper introduces the ALL(*) parsing strategy that combines the simplicity, efficiency, and predictability of conventional top-down LL(k) parsers with the power of a GLR-like mechanism to make parsing decisions. The critical innovation is to move grammar analysis to parse-time, which lets ALL(*) handle any non-left-recursive context-free grammar. ALL(*) is O(n^4) in theory but consistently performs linearly on grammars used in practice, outperforming …

[Introduction, excerpt:] PEGs are unambiguous by definition but have a quirk where rule A → a | ab (meaning “A matches either a or ab”) can never match ab since PEGs choose the first alternative that matches a prefix of the remaining input. Nested backtracking makes debugging PEGs difficult. Second, side-effecting programmer-supplied actions (mutators) like print statements should be avoided in any strategy that continuously speculates (PEG) or supports multiple interpretations of the input (GLL and GLR) because such actions may never really take place [17]. (Though DParser [24] supports “final” actions when the programmer is certain a reduction is part of an unambiguous final parse.) Without side effects, actions must buffer data for all interpretations in immutable data structures or provide undo actions.
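The ordered-choice quirk this excerpt mentions, where a PEG rule with the alternatives a then ab never matches the input ab, can be reproduced with a minimal sketch. The combinator names `lit`, `choice`, and `matches` are hypothetical helpers written for this illustration; they are not taken from the paper or from any PEG library.

```python
def lit(s):
    """Parser for a literal string: returns the end position, or None on failure."""
    def parse(text, pos):
        return pos + len(s) if text.startswith(s, pos) else None
    return parse

def choice(*alternatives):
    """PEG-style ordered choice: commit to the first alternative that succeeds."""
    def parse(text, pos):
        for alt in alternatives:
            end = alt(text, pos)
            if end is not None:
                return end          # later alternatives are never tried
        return None
    return parse

def matches(parser, text):
    """True only if the parser consumes the whole input."""
    return parser(text, 0) == len(text)

a_then_ab = choice(lit("a"), lit("ab"))   # alternatives in the order a, ab
ab_then_a = choice(lit("ab"), lit("a"))   # longer alternative first

print(matches(a_then_ab, "ab"))  # False: "a" wins and leaves "b" unconsumed
print(matches(ab_then_a, "ab"))  # True
```

Reordering the alternatives so that the longer one comes first is the usual workaround, which is why the order of alternatives is part of a PEG's meaning.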

Ambiguity Detection Methods for Context-Free Grammars

Ambiguity Detection Methods for Context-Free Grammars. H.J.S. Basten. Master's thesis, August 17, 2007. One Year Master Software Engineering, Universiteit van Amsterdam. Thesis and Internship Supervisor: Prof. Dr. P. Klint. Institute: Centrum voor Wiskunde en Informatica. Availability: public domain.

Contents
Abstract
Preface
1 Introduction: 1.1 Motivation; 1.2 Scope; 1.3 Criteria for practical usability; 1.4 Existing ambiguity detection methods; 1.5 Research questions; 1.6 Thesis overview
2 Background and context: 2.1 Grammars and languages; 2.2 Ambiguity in context-free grammars; 2.3 Ambiguity detection for context-free grammars
3 Current ambiguity detection methods: 3.1 Gorn; 3.2 Cheung and Uzgalis; 3.3 AMBER; 3.4 Jampana; 3.5 LR(k) test; 3.6 Brabrand, Giegerich and Møller; 3.7 Schmitz; 3.8 Summary
4 Research method: 4.1 Measurements (4.1.1 Accuracy; 4.1.2 Performance; 4.1.3 Termination; 4.1.4 Usefulness of the output); 4.2 Analysis (4.2.1 Scalability; 4.2.2 Practical usability); 4.3 Investigated ADM implementations
5 Analysis of AMBER: 5.1 Accuracy; 5.2 Performance; 5.3 Termination; 5.4 Usefulness of the output; 5.5 Analysis
6 Analysis of MSTA: 6.1 Accuracy; 6.2 Performance; 6.3 Termination; 6.4 Usefulness of the output; 6.5 Analysis

Parsing 1. Grammars and Parsing 2. Top-Down and Bottom-Up Parsing 3

(Slides CS474–1 to CS474–4.)

Syntax. syntax: from the Greek syntaxis, meaning “setting out together or arrangement.” Refers to the way words are arranged together. Why worry about syntax?
• The boy ate the frog.
• The frog was eaten by the boy.
• The frog that the boy ate died.
• The boy whom the frog was eaten by died.

Parsing. 1. Grammars and parsing 2. Top-down and bottom-up parsing 3. Chart parsers 4. Bottom-up chart parsing 5. The Earley Algorithm

Grammars and Parsing. Need a grammar: a formal specification of the structures allowable in the language. Need a parser: algorithm for assigning syntactic structure to an input sentence. Sentence: Beavis ate the cat. Parse tree: [S [NP [NAME Beavis]] [VP [V ate] [NP [ART the] [N cat]]]]

Syntactic Analysis. Key ideas:
• constituency: groups of words may behave as a single unit or phrase
• grammatical relations: refer to the subject, object, indirect object, etc.
• subcategorization and dependencies: refer to certain kinds of relations between words and phrases, e.g. want can be followed by an infinitive, but find and work cannot.
All can be modeled by various kinds of grammars that are based on context-free grammars.

CFG's. CFG's are also called phrase-structure grammars. Equivalent to Backus-Naur Form (BNF). A context free grammar consists of: 1. a set of non-terminal symbols N 2. a set of terminal symbols Σ (disjoint from N) 3.

CFG example. 1. S → NP VP 2. VP → V NP 5. NAME → Beavis 6. V → ate

Abstract Syntax Trees & Top-Down Parsing

Abstract Syntax Trees & Top-Down Parsing. Compiler Design 1 (2011).

Review of Parsing
• Given a language L(G), a parser consumes a sequence of tokens s and produces a parse tree
• Issues:
– How do we recognize that s ∈ L(G)?
– A parse tree of s describes how s ∈ L(G)
– Ambiguity: more than one parse tree (possible interpretation) for some string s
– Error: no parse tree for some string s
– How do we construct the parse tree?

Abstract Syntax Trees
• So far, a parser traces the derivation of a sequence of tokens
• The rest of the compiler needs a structural representation of the program
• Abstract syntax trees
– Like parse trees but ignore some details
– Abbreviated as AST

Abstract Syntax Trees (Cont.)
• Consider the grammar E → int | ( E ) | E + E
• And the string 5 + (2 + 3)
• After lexical analysis (a list of tokens): int5 '+' '(' int2 '+' int3 ')'
• During parsing we build a parse tree …

Example of Parse Tree. [Figure: parse tree for 5 + (2 + 3).]
• Traces the operation of the parser
• Captures the nesting structure
• But too much info
– Parentheses
– Single-successor nodes

Example of Abstract Syntax Tree. [Figure: AST PLUS(5, PLUS(2, 3)).]
• Also captures the nesting structure
• But abstracts from the concrete syntax ⇒ more compact and easier to use
• An important data structure in a compiler

Semantic Actions
• This is what we'll use to construct ASTs
• Each grammar symbol may have attributes
– An attribute is a property of a programming language construct
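As a rough illustration of how a top-down parser can build the AST for 5 + (2 + 3), here is a small recursive-descent sketch. It rests on assumptions that are not in the slides: the ambiguous, left-recursive grammar E → int | ( E ) | E + E is rewritten as E → P ('+' P)* with P → int | ( E ), '+' is treated as left-associative, and AST nodes are plain tuples of the form ("PLUS", left, right).

```python
import re

def tokenize(src):
    """Split the input into integer and punctuation tokens (other characters are skipped)."""
    return re.findall(r"\d+|[+()]", src)

def parse(src):
    """Recursive-descent parser for E -> P ('+' P)*, P -> int | '(' E ')'."""
    tokens = tokenize(src)
    pos = 0

    def peek():
        return tokens[pos] if pos < len(tokens) else None

    def advance():
        nonlocal pos
        tok = peek()
        pos += 1
        return tok

    def primary():
        tok = advance()
        if tok == "(":
            node = expr()
            if advance() != ")":
                raise SyntaxError("expected ')'")
            return node                      # parentheses leave no AST node
        if tok is not None and tok.isdigit():
            return int(tok)
        raise SyntaxError(f"unexpected token {tok!r}")

    def expr():
        node = primary()
        while peek() == "+":                 # iteration replaces left recursion
            advance()
            node = ("PLUS", node, primary())
        return node

    tree = expr()
    if peek() is not None:
        raise SyntaxError(f"unexpected trailing token {peek()!r}")
    return tree

print(parse("5 + (2 + 3)"))  # ('PLUS', 5, ('PLUS', 2, 3))
```

The result keeps the nesting structure but drops the parentheses and single-successor nodes, which is the difference between the parse tree and the AST that the slides point out.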

Efficient Recursive Parsing

Efficient Recursive Parsing. Christoph M. Hoffmann, Computer Science Department, Purdue University, West Lafayette, Indiana 47907. CSD-TR 215, December 1976. Purdue e-Pubs, Department of Computer Science Technical Reports, Report Number: 77-215.

Citation: Hoffmann, Christoph M., "Efficient Recursive Parsing" (1976). Department of Computer Science Technical Reports. Paper 155. https://docs.lib.purdue.edu/cstech/155

Abstract: Algorithms are developed which construct from a given LL(1) grammar a recursive descent parser with as much recursion resolved by iteration as is possible without introducing auxiliary memory. Unlike other proposed methods in the literature designed to arrive at parsers of this kind, the algorithms do not require extensions of the notational formalism nor alter the grammar in any way. The algorithms constructing the parsers operate in O(k·s) steps, where s is the size of the grammar, i.e. the sum of the lengths of all productions, and k is a grammar-dependent constant. A speedup of the algorithm is possible which improves the bound to O(s) for all LL(1) grammars, and constructs smaller parsers with some auxiliary memory in form of parameters

Conflict Resolution in a Recursive Descent Compiler Generator

LL(1) Conflict Resolution in a Recursive Descent Compiler Generator. Albrecht Wöß, Markus Löberbauer, Hanspeter Mössenböck. Johannes Kepler University Linz, Institute of Practical Computer Science, Altenbergerstr. 69, 4040 Linz, Austria. {woess,loeberbauer,moessenboeck}@ssw.uni-linz.ac.at

Abstract. Recursive descent parsing is restricted to languages whose grammars are LL(1), i.e., which can be parsed top-down with a single lookahead symbol. Unfortunately, many languages such as Java, C++, or C# are not LL(1). Therefore recursive descent parsing cannot be used or the parser has to make its decisions based on semantic information or a multi-symbol lookahead. In this paper we suggest a systematic technique for resolving LL(1) conflicts in recursive descent parsing and show how to integrate it into a compiler generator (Coco/R). The idea is to evaluate user-defined boolean expressions, in order to allow the parser to make its parsing decisions where a one symbol lookahead does not suffice. Using our extended compiler generator we implemented a compiler front end for C# that can be used as a framework for implementing a variety of tools.

1 Introduction. Recursive descent parsing [16] is a popular top-down parsing technique that is simple, efficient, and convenient for integrating semantic processing. However, it requires the grammar of the parsed language to be LL(1), which means that the parser must always be able to select between alternatives with a single symbol lookahead. Unfortunately, many languages such as Java, C++ or C# are not LL(1) so that one either has to resort to bottom-up LALR(1) parsing [5, 1], which is more powerful but less convenient for semantic processing, or the parser has to resolve the LL(1) conflicts using semantic information or a multi-symbol lookahead.
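The idea of resolving an LL(1) conflict with a user-defined boolean expression can be sketched in a hand-written recursive-descent parser. Everything in this sketch is hypothetical and chosen only for illustration: the toy grammar Statement → ident "=" ident ";" | ident "(" ")" ";", the resolver name `is_assignment`, and the tuple results. It does not reproduce Coco/R's actual resolver syntax.

```python
def is_assignment(tokens, pos):
    """Hypothetical resolver: look one token past the identifier for '='."""
    return pos + 1 < len(tokens) and tokens[pos + 1] == "="

def parse_statement(tokens):
    """Parse Statement -> ident '=' ident ';' | ident '(' ')' ';'."""
    pos = 0

    def expect(predicate, what):
        nonlocal pos
        if pos < len(tokens) and predicate(tokens[pos]):
            tok = tokens[pos]
            pos += 1
            return tok
        raise SyntaxError(f"expected {what} at token index {pos}")

    def symbol(s):
        return lambda tok: tok == s

    # Both alternatives start with an identifier, so one lookahead symbol
    # cannot choose between them; the boolean resolver decides instead.
    if is_assignment(tokens, pos):
        target = expect(str.isidentifier, "identifier")
        expect(symbol("="), "'='")
        value = expect(str.isidentifier, "identifier")
        expect(symbol(";"), "';'")
        return ("assign", target, value)

    name = expect(str.isidentifier, "identifier")
    expect(symbol("("), "'('")
    expect(symbol(")"), "')'")
    expect(symbol(";"), "';'")
    return ("call", name)

print(parse_statement(["x", "=", "y", ";"]))   # ('assign', 'x', 'y')
print(parse_statement(["f", "(", ")", ";"]))   # ('call', 'f')
```

Here the resolver is a two-token lookahead; the same hook could just as well consult a symbol table, which corresponds to the "semantic information" option mentioned in the abstract.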

Compiler Construction

Compiler Construction. An 18-lecture course. Alan Mycroft, Computer Laboratory, Cambridge University (University of Cambridge). http://www.cl.cam.ac.uk/users/am/ Lent Term 2007.

Course Plan. Part A: intro/background. Part B: a simple compiler for a simple language. Part C: implementing harder things.

A compiler. A compiler is a program which translates the source form of a program into a semantically equivalent target form.
• Traditionally this was machine code or relocatable binary form, but nowadays the target form may be a virtual machine (e.g. JVM) or indeed another language such as C.
• Can appear a very hard program to write.
• How can one even start?
• It's just like juggling too many balls (picking instructions while determining whether this '+' is part of '++' or whether its right operand is just a variable or an expression ...).

How to even start? “When finding it hard to juggle 4 balls at once, juggle them each in turn instead ...”
[Diagram: character stream → (lex) → token stream → (syn) → parse tree → (trans) → intermediate code → (cg) → target code]
A multi-pass compiler does one 'simple' thing at once and passes its output to the next stage. These are pretty standard stages, and indeed language and (e.g. JVM) system design has co-evolved around them.

Compilers can be big and hard to understand. Compilers can be very large. In 2004 the Gnu Compiler Collection (GCC) was noted to “[consist] of about 2.1 million lines of code and has been in development for over 15 years”.

AST Indexing: a Near-Constant Time Solution to the Get-Descendants-By-Type Problem Samuel Livingston Kelly Dickinson College

AST Indexing: A Near-Constant Time Solution to the Get-Descendants-by-Type Problem. Samuel Livingston Kelly, Dickinson College. Dickinson Scholar, Student Honors Theses, 5-18-2014. Part of the Software Engineering Commons.

Recommended Citation: Kelly, Samuel Livingston, "AST Indexing: A Near-Constant Time Solution to the Get-Descendants-by-Type Problem" (2014). Dickinson College Honors Theses. Paper 147.

Submitted by Sam Kelly in partial fulfillment of Honors Requirements for the Computer Science Major, Dickinson College, 2014. Associate Professor Tim Wahls, Supervisor; Associate Professor John MacCormick, Reader; Part-Time Instructor Rebecca Wells, Reader. April 22, 2014.

The Department of Mathematics and Computer Science at Dickinson College hereby accepts this senior honors thesis by Sam Kelly, and awards departmental honors in Computer Science. Signatories: Tim Wahls (Advisor), John MacCormick (Committee Member), Rebecca Wells (Committee Member), Richard Forrester (Department Chair). Department of Mathematics and Computer Science, Dickinson College, April 2014.

Abstract: In this paper we present two novel abstract syntax tree (AST) indexing algorithms that solve the get-descendants-by-type problem in near constant time.

Context Free Languages and Pushdown Automata

Context Free Languages and Pushdown Automata. COMP2600: Formal Methods for Software Engineering. Ranald Clouston, Australian National University. Semester 2, 2013.

Parsing. The process of parsing a program is partly about confirming that a given program is well-formed (syntax). But it is also about representing the structure of the program so that it can be executed (semantics). For this purpose, the trail of sentential forms created en route to generating a given sentence is just as important as the question of whether the sentence can be generated or not.

The Semantics of Parses. Take the code
if e1 then if e2 then s1 else s2
where e1, e2 are boolean expressions and s1, s2 are subprograms. Does this mean
if e1 then (if e2 then s1 else s2)
or
if e1 then (if e2 then s1) else s2
We'd better have an unambiguous way to tell which is right, or we cannot know what the program will do at runtime!

Ambiguity. Recall that we can present CFG derivations as parse trees. Until now this was mere pretty presentation; now it will become important. A context-free grammar G is unambiguous iff every string can be derived by at most one parse tree. G is ambiguous iff there exists any word w ∈ L(G) derivable by more than one parse tree.

Example: If-Then and If-Then-Else. Consider the CFG
S → if bexp then S | if bexp then S else S | prog
where bexp and prog stand for boolean expressions and (if-statement free) programs respectively, defined elsewhere.
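The ambiguity of the if-then-else grammar can be checked mechanically by counting parse trees. The following is a sketch under stated assumptions: the terminals `b` and `p` are hypothetical single-token stand-ins for bexp and prog, and the function name `count_parses` is invented for this example.

```python
from functools import lru_cache

IF_HEAD = ("if", "b", "then")   # "b" stands in for bexp, "p" for prog

def count_parses(tokens):
    """Count parse trees for S -> if b then S | if b then S else S | p."""
    tokens = tuple(tokens)

    @lru_cache(maxsize=None)
    def count(i, j):
        # Number of ways tokens[i:j] can be derived from S.
        if j - i == 1:
            return 1 if tokens[i] == "p" else 0
        if j - i < 4 or tokens[i:i + 3] != IF_HEAD:
            return 0
        total = count(i + 3, j)                  # S -> if b then S
        for k in range(i + 4, j - 1):            # S -> if b then S else S
            if tokens[k] == "else":
                total += count(i + 3, k) * count(k + 1, j)
        return total

    return count(0, len(tokens))

print(count_parses("if b then if b then p else p".split()))  # 2
print(count_parses("if b then p else p".split()))            # 1
```

A count of 2 for the nested statement corresponds to the two readings shown in the slides: the else can attach to the inner if or to the outer one.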