Using History to Teach Computer Science and Related Disciplines

Recommended publications

Edsger Dijkstra: the Man Who Carried Computer Science on His Shoulders

INFERENCE / Vol. 5, No. 3 Edsger Dijkstra: The Man Who Carried Computer Science on His Shoulders. Krzysztof Apt. As it turned out, the train I had taken from Nijmegen to Eindhoven arrived late. To make matters worse, I was then unable to find the right office in the university building. When I eventually arrived for my appointment, I was more than half an hour behind schedule. The professor completely ignored my profuse apologies and proceeded to take a full hour for the meeting. It was the first time I met Edsger Wybe Dijkstra. At the time of our meeting in 1975, Dijkstra was 45 years old. The most prestigious award in computer science, the ACM Turing Award, had been conferred on him three years earlier. Almost twenty years his junior, I knew very little about the field; I had only learned what a flowchart was a couple of weeks earlier. Edsger Dijkstra was born in Rotterdam in 1930. He described his father, at one time the president of the Dutch Chemical Society, as “an excellent chemist,” and his mother as “a brilliant mathematician who had no job.”1 In 1948, Dijkstra achieved remarkable results when he completed secondary school at the famous Erasmiaans Gymnasium in Rotterdam. His school diploma shows that he earned the highest possible grade in no less than six out of thirteen subjects. He then enrolled at the University of Leiden to study physics. In September 1951, Dijkstra’s father suggested he attend a three-week course on programming in Cambridge. It turned out to be an idea with far-reaching consequences. -

Early Stored Program Computers

Stored Program Computers Thomas J. Bergin Computing History Museum American University 7/9/2012 Early Thoughts about Stored Programming • January 1944 Moore School team thinks of better ways to do things; leverages delay line memories from War research • September 1944 John von Neumann visits project – Goldstine’s meeting at Aberdeen Train Station • October 1944 Army extends the ENIAC contract research on EDVAC stored-program concept • Spring 1945 ENIAC working well • June 1945 First Draft of a Report on the EDVAC First Draft Report (June 1945) • John von Neumann prepares (?) a report on the EDVAC which identifies how the machine could be programmed (unfinished very rough draft) – academic: publish for the good of science – engineers: patents, patents, patents • von Neumann never repudiates the myth that he wrote it; most members of the ENIAC team contribute ideas; Goldstine note about “bashing” summer letters together • 1.0 Definitions – The considerations which follow deal with the structure of a very high speed automatic digital computing system, and in particular with its logical control…. – The instructions which govern this operation must be given to the device in absolutely exhaustive detail. They include all numerical information which is required to solve the problem…. – Once these instructions are given to the device, it must be able to carry them out completely and without any need for further intelligent human intervention…. • 2.0 Main Subdivision of the System – First: since the device is a computor, it will have to perform the elementary operations of arithmetics…. – Second: the logical control of the device is the proper sequencing of its operations (by…a control organ. -

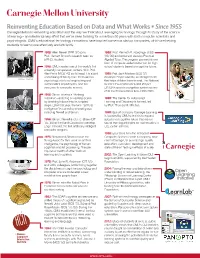

Reinventing Education Based on Data and What Works • Since 1955

Reinventing Education Based on Data and What Works • Since 1955 Carnegie Mellon is reinventing education and the way we think about leveraging technology through its study of the science of learning – an interdisciplinary effort that we’ve been tackling for more than 50 years with both computer scientists and psychologists. CMU's educational technology innovations have inspired numerous startup companies, which are helping students to learn more effectively and efficiently. 1955: Allen Newell (TPR ’57) joins Prof. Herbert Simon’s research team as a Ph.D. student. 1956: CMU creates one of the world’s first university computation centers. With Prof. Alan Perlis (MCS ’42) as its head, it is a joint undertaking of faculty from the business, psychology, electrical engineering and mathematics departments, and the precursor to computer science. 1956: Simon creates a “thinking machine,” enacting a mental process by breaking it down into its simplest steps. Later that year, the term “artificial intelligence” is coined by a small group including Newell and Simon. 1956: Simon, Newell and J. [...] 1995: Prof. Kenneth R. Koedinger (HSS ’88,’90) and Anderson develop Practical Algebra Tutor. The program pioneers a new form of computer-aided instruction for high school students based on cognitive tutors. 1995: Prof. Jack Mostow (SCS ’81) develops Project LISTEN, an intelligent tutor that helps children learn to read. The National Science Foundation included Project LISTEN’s speech recognition system as one of its top 50 innovations from 1950-2000. 1995: The Center for Automated Learning and Discovery is formed, led by Prof. Thomas M. Mitchell. 1998: Spinoff company Carnegie Learning is founded by CMU scientists to expand -

Graduation Speakers

Graduation speakers stress public service. By Andrew L. Fish. MIT President Paul E. Gray '54 told graduating students that their education is "more than a meal ticket" and should be used to serve "the public interest and the common good." His remarks were made at MIT's 122nd commencement on May 27. A total of 1733 students received 1899 degrees at the ceremony, which was held in Killian Court under sunny skies. The importance of public service was also emphasized by Susan P. Thomas, MIT's Lutheran chaplain, who delivered the invocation. "Grant that we may use the privilege of this MIT education and degree wisely - not as an entitlement to power or regard, but as a means to serve," Thomas said. "May the technology that we use and develop be humane, and the world we create with it one in which people can live more fully human lives rather than less, a world where clean air and water, adequate food and shelter, and freedom from fear and want are commonplace rather than exceptional." (Text of Gray's commencement address. Page 2.) In his commencement address, baseball's National League President A. Bartlett Giamatti urged graduates to "have the courage to connect" with people of all ideologies. Equality will come only [...] Prof. Murman named to Proj. Athena post. By Irene Kuo. Professor Earll Murman of the Department of Aeronautics and Astronautics was recently named the new director of Project Athena by Gerald L. -

The Advent of Recursion & Logic in Computer Science

The Advent of Recursion & Logic in Computer Science. MSc Thesis (Afstudeerscriptie) written by Karel Van Oudheusden, alias Edgar G. Daylight (born October 21st, 1977 in Antwerpen, Belgium), under the supervision of Dr Gerard Alberts, and submitted to the Board of Examiners in partial fulfillment of the requirements for the degree of MSc in Logic at the Universiteit van Amsterdam. Date of the public defense: November 17, 2009. Members of the Thesis Committee: Dr Gerard Alberts, Prof Dr Krzysztof Apt, Prof Dr Dick de Jongh, Prof Dr Benedikt Löwe, Dr Elizabeth de Mol, Dr Leen Torenvliet. “We are reaching the stage of development where each new generation of participants is unaware both of their overall technological ancestry and the history of the development of their speciality, and have no past to build upon.” J.A.N. Lee in 1996 [73, p.54] “To many of our colleagues, history is only the study of an irrelevant past, with no redeeming modern value – a subject without useful scholarship.” J.A.N. Lee [73, p.55] “[E]ven when we can't know the answers, it is important to see the questions. They too form part of our understanding. If you cannot answer them now, you can alert future historians to them.” M.S. Mahoney [76, p.832] “Only do what only you can do.” E.W. Dijkstra [103, p.9] Abstract: The history of computer science can be viewed from a number of disciplinary perspectives, ranging from electrical engineering to linguistics. As stressed by the historian Michael Mahoney, different ‘communities of computing’ had their own views towards what could be accomplished with a programmable computing machine. -

Trademarks As Entrepreneurial Change Agents for Legal Reform Deborah M

NORTH CAROLINA LAW REVIEW Volume 95 | Number 5 Article 8 6-1-2017 Trademarks as Entrepreneurial Change Agents for Legal Reform Deborah M. Gerhardt Follow this and additional works at: http://scholarship.law.unc.edu/nclr Part of the Law Commons Recommended Citation Deborah M. Gerhardt, Trademarks as Entrepreneurial Change Agents for Legal Reform, 95 N.C. L. Rev. 1519 (2017). Available at: http://scholarship.law.unc.edu/nclr/vol95/iss5/8 This Essay is brought to you for free and open access by Carolina Law Scholarship Repository. It has been accepted for inclusion in North Carolina Law Review by an authorized editor of Carolina Law Scholarship Repository. For more information, please contact [email protected]. 95 N.C. L. REV. 1519 (2017) TRADEMARKS AS ENTREPRENEURIAL CHANGE AGENTS FOR LEGAL REFORM* DEBORAH R. GERHARDT** INTRODUCTION 1519; I. BOTH GOVERNMENTS AND BRANDS CREATE COMMUNITIES AROUND CORE VALUES 1524; II. NAVIGATING THE CHOICE TO LINK A TRADEMARK WITH POLITICS 1531; A. Risks Inherent to Political Engagement 1532; B. Political Hijacking 1535; III. AFFIRMING BRAND VALUES THROUGH POLITICAL AND CULTURAL ENGAGEMENT 1541; A. Cultural Leadership -

Turning a Blind Eye: Why Washington Keeps Giving in to Wall Street

GW Law Faculty Publications & Other Works Faculty Scholarship 2013 Turning a Blind Eye: Why Washington Keeps Giving In to Wall Street Arthur E. Wilmarth Jr. George Washington University Law School, [email protected] Follow this and additional works at: https://scholarship.law.gwu.edu/faculty_publications Part of the Law Commons Recommended Citation Arthur E. Wilmarth, Jr., Turning a Blind Eye: Why Washington Keeps Giving In to Wall Street, 81 University of Cincinnati Law Review 1283-1446 (2013). This Article is brought to you for free and open access by the Faculty Scholarship at Scholarly Commons. It has been accepted for inclusion in GW Law Faculty Publications & Other Works by an authorized administrator of Scholarly Commons. For more information, please contact [email protected]. GW Law School Public Law and Legal Theory Paper No. 2013‐117 GW Legal Studies Research Paper No. 2013‐117 Turning a Blind Eye: Why Washington Keeps Giving In to Wall Street Arthur E. Wilmarth, Jr. 2013 81 U. CIN. L. REV. 1283-1446 This paper can be downloaded free of charge from the Social Science Research Network: http://ssrn.com/abstract=2327872 TURNING A BLIND EYE: WHY WASHINGTON KEEPS GIVING IN TO WALL STREET Arthur E. Wilmarth, Jr.* As the Dodd–Frank Act approaches its third anniversary in mid-2013, federal regulators have missed deadlines for more than 60% of the required implementing rules. The financial industry has undermined Dodd–Frank by lobbying regulators to delay or weaken rules, by suing to overturn completed rules, and by pushing for legislation to freeze agency budgets and repeal Dodd–Frank’s key mandates. -

Section 1: MIT Facts and History

1 MIT Facts and History. Contents: Economic Information; Technology Licensing Office; People; Students; Undergraduate Students; Graduate Students; Degrees; Alumni; Postdoctoral Appointments; Faculty and Staff; Awards and Honors of Current Faculty and Staff; Awards Highlights; Fields of Study; Research Laboratories, Centers, and Programs; Academic and Research Affiliations; Education Highlights; Research Highlights. MIT Facts and History: The Massachusetts Institute of Technology is one of the world’s preeminent research universities, dedicated to advancing knowledge and educating students in science, technology, and other areas of scholarship that will best serve the nation and the world. It is known for rigorous academic programs, cutting-edge research, a diverse campus community, and its long-standing commitment to working with the public and private sectors to bring new knowledge to bear on the world’s great challenges. William Barton Rogers, the Institute’s founding president, believed that education should be both broad and useful, enabling students to participate in “the [...] technologies for artificial limbs, and the magnetic core memory that enabled the development of digital computers. Exciting areas of research and education today include neuroscience and the study of the brain and mind, bioengineering, energy, the environment and sustainable development, information sciences and technology, new media, financial technology, and entrepreneurship. University research is one of the mainsprings of growth in an economy that is increasingly defined by technology. A study released in February 2009 by the Kauffman Foundation estimates that MIT graduates had founded 25,800 active companies. -

ACM Student Membership

Student membership application and order form. INSTRUCTIONS: Carefully complete this application and return with payment by mail or fax to ACM. You must be a full-time student to qualify for student rates. CONTACT ACM: phone: 1-800-342-6626 (US & Canada), +1-212-626-0500 (Global); hours: 8:30AM - 4:30PM (US EST); fax: +1-212-944-1318; email: [email protected]; mail: ACM, Member Services, General Post Office, P.O. Box 30777, New York, NY 10087-0777, USA. For immediate processing, FAX this application to +1-212-944-1318. FORM FIELDS (please print clearly): Name; Member Number; Mailing Address; City/State/Province; Postal Code/Zip; Country; [ ] Please do not release my postal address to third parties; Area Code & Daytime Phone; Email Address; [ ] Yes, please send me ACM Announcements via email; [ ] No, please do not send me ACM Announcements via email. MEMBERSHIP OPTIONS AND ADD-ONS (check the appropriate box(es)): [ ] ACM Student Membership: $19 USD; [ ] ACM Student Membership PLUS ACM Digital Library: $42 USD; [ ] ACM Student Membership PLUS Print CACM Magazine: $42 USD; [ ] ACM Student Membership with ACM Digital Library PLUS Print CACM Magazine: $62 USD. MEMBERSHIP ADD-ONS: [ ] ACM Books Subscription: $10 USD; [ ] Additional Print Publications and/or Special Interest Groups. PUBLICATIONS: Check the appropriate box and calculate amount due on reverse. PLEASE CHECK ONE. Columns: publication, issues per year, code, member rate, air rate*. • ACM Inroads, 4, 178, $41, $69 • Communications of the ACM, 12, 101, $50, $69 • Computing Reviews, 12, 104, $80, $46 • Computing Surveys, 4, 103, $61, $39. WHAT’S NEW: ACM Learning Webinars keep you at the cutting edge of the latest technical and technological developments. -

The Myth of the Innovator Hero - Business - the Atlantic

The Myth of the Innovator Hero - Business - The Atlantic http://www.theatlantic.com/business/print/2011/11/the-myth-of-the-inno... By Vaclav Smil. Every successful modern e-gadget is a combination of components made by many makers. The story of how the transistor became the building block of modern machines explains why. WIKIPEDIA: Steve Jobs, Thomas Edison, Benjamin Franklin. We like to think that invention comes as a flash of insight, the equivalent of that sudden Archimedean displacement of bath water that occasioned one of the most famous Greek interjections, εὕρηκα. Then the inventor gets to rapidly translating a stunning discovery into a new product. Its mass appeal soon transforms the world, proving once again the power of a single, simple idea. But this story is a myth. The popular heroic narrative has almost nothing to do with the way modern invention (conceptual creation of a new product or process, sometimes accompanied by a prototypical design) and innovation (large-scale diffusion of commercially viable inventions) work. A closer examination reveals that many award-winning inventions are re-inventions. Most scientific or engineering discoveries would never become successful products without contributions from other scientists or engineers. Every major invention is the child of far-flung parents who may never meet. These contributions may be just as important as the original insight, but they will not attract public adulation. -

Silicon Genesis: Oral History Interviews

http://oac.cdlib.org/findaid/ark:/13030/c8f76kbr No online items. Guide to the Silicon Genesis oral history interviews M0741. Department of Special Collections and University Archives, 2019. Green Library, 557 Escondido Mall, Stanford 94305-6064. [email protected] URL: http://library.stanford.edu/spc Language of Material: English. Contributing Institution: Department of Special Collections and University Archives. Title: Silicon Genesis: oral history interviews. Creator: Walker, Rob. Identifier/Call Number: M0741. Physical Description: 12 Linear Feet (26 boxes). Date (inclusive): 1995-2018. Abstract: "Silicon Genesis" is a series of interviews with individuals active in California's Silicon Valley technology sector beginning in 1995 and through at least 2018. Immediate Source of Acquisition: Gift of Rob Walker, 1995-2018. Scope and Contents: Interviews of key figures in the microelectronics industry conducted by Rob Walker (1935-2016) and later Rob Blair, who continues to carry out interviews for the project. Interviewees include John Derringer, Elliot Sopkin, Floyd Kvamme, Mike Markkula, Steve Zelencik, T. J. Rogers, Bill Davidow, Aart de Geus, and more. Interviews have been fully digitized and are available streaming from our catalog here: https://searchworks.stanford.edu/view/4084160 An exhibit for the collection is available here: https://silicongenesis.stanford.edu/ Written transcripts are available for the interviews with Marcian (Ted) Hoff, Federico Faggin, C. Lester Hogan, Regis McKenna, and Gordon E. Moore. Also included is an interview with Rob Walker himself, made by Susan Ayers-Walker, 1998. Conditions Governing Access: Original recordings are closed.
Digital copies of interviews are available online: https://exhibits.stanford.edu/silicongenesis Conditions Governing Use While Special Collections is the owner of the physical and digital items, permission to examine collection materials is not an authorization to publish. -

Filesystems HOWTO

Filesystems HOWTO. Martin Hinner < [email protected]>, http://martin.hinner.info. Table of Contents: 1. Introduction; 2. Volumes; 3. DOS FAT 12/16/32, VFAT; 4. High Performance FileSystem (HPFS); 5. New Technology FileSystem (NTFS); 6. Extended filesystems (Ext, Ext2, Ext3); 7. Macintosh Hierarchical Filesystem - HFS; 8. ISO 9660 - CD-ROM filesystem; 9. Other filesystems