Monte Carlo Method - Wikipedia

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Kalman and Particle Filtering

Abstract: The Kalman and Particle filters are algorithms that recursively update an estimate of the state and find the innovations driving a stochastic process given a sequence of observations. The Kalman filter accomplishes this goal by linear projections, while the Particle filter does so by a sequential Monte Carlo method. With the state estimates, we can forecast and smooth the stochastic process. With the innovations, we can estimate the parameters of the model. The article discusses how to set a dynamic model in a state-space form, derives the Kalman and Particle filters, and explains how to use them for estimation.

Since both filters start with a state-space representation of the stochastic processes of interest, section 1 presents the state-space form of a dynamic model. Then, section 2 introduces the Kalman filter and section 3 develops the Particle filter. For extended expositions of this material, see Doucet, de Freitas, and Gordon (2001), Durbin and Koopman (2001), and Ljungqvist and Sargent (2004).

1. The state-space representation of a dynamic model

A large class of dynamic models can be represented by a state-space form:

$X_{t+1} = \varphi(X_t, W_{t+1}; \gamma)$ (1)

$Y_t = g(X_t, V_t; \gamma)$. (2)

This representation handles a stochastic process by finding three objects: a vector that describes the position of the system (a state, $X_t \in X \subset \mathbb{R}^l$) and two functions, one mapping the state today into the state tomorrow (the transition equation, (1)) and one mapping the state into observables, $Y_t$ (the measurement equation, (2)).
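To make the state-space form concrete, the following is a minimal sketch that simulates a transition equation and a measurement equation of the kind written in (1) and (2). Everything specific here is an assumption made for illustration and does not come from the article: the function name `simulate_state_space`, the scalar linear choices of φ and g, the parameter values standing in for γ, and the standard normal shocks W and V.

```python
import random


def simulate_state_space(phi, g, x0, n_steps, rng):
    """Simulate X_{t+1} = phi(X_t, W_{t+1}) and Y_t = g(X_t, V_t).

    The shocks W and V are drawn as independent standard normals,
    which is an assumption of this sketch.
    """
    states, observations = [], []
    x = x0
    for _ in range(n_steps):
        v = rng.gauss(0.0, 1.0)          # measurement shock V_t
        states.append(x)
        observations.append(g(x, v))     # measurement equation (2)
        w = rng.gauss(0.0, 1.0)          # transition shock W_{t+1}
        x = phi(x, w)                    # transition equation (1)
    return states, observations


# Hypothetical parameter vector gamma = (RHO, SIGMA_W, SIGMA_V).
RHO, SIGMA_W, SIGMA_V = 0.9, 0.5, 1.0


def phi(x, w):
    return RHO * x + SIGMA_W * w         # linear transition


def g(x, v):
    return x + SIGMA_V * v               # state observed with noise


rng = random.Random(0)
states, observations = simulate_state_space(phi, g, 0.0, 200, rng)
```

A filter would take only `observations` as input and try to recover `states`; the simulated pair is useful as a test bed because the true states are known.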

A Fourier-Wavelet Monte Carlo Method for Fractal Random Fields

JOURNAL OF COMPUTATIONAL PHYSICS 132, 384–408 (1997), ARTICLE NO. CP965647

A Fourier–Wavelet Monte Carlo Method for Fractal Random Fields

Frank W. Elliott Jr., David J. Horntrop, and Andrew J. Majda

Courant Institute of Mathematical Sciences, 251 Mercer Street, New York, New York 10012

Received August 2, 1996; revised December 23, 1996

A new hierarchical method for the Monte Carlo simulation of random fields called the Fourier–wavelet method is developed and applied to isotropic Gaussian random fields with power law spectral density functions. This technique is based upon the orthogonal decomposition of the Fourier stochastic integral representation of the field using wavelets. The Meyer wavelet is used here because its rapid decay properties allow for a very compact representation of the field. The Fourier–wavelet method is shown to be straightforward to implement, given the nature of the necessary precomputations and the run-time calculations, and yields comparable results with scaling behavior over as many decades as the physical space multiwavelet methods developed recently by two of the authors.

$\langle [v(x) - v(y)]^2 \rangle = C_H |x - y|^{2H}$, (1.1)

where $0 < H < 1$ is the Hurst exponent and $\langle \cdot \rangle$ denotes the expected value.

Here we develop a new Monte Carlo method based upon a wavelet expansion of the Fourier space representation of the fractal random fields in (1.1). This method is capable of generating a velocity field with the Kolmogoroff spectrum ($H = 1/3$ in (1.1)) over many (10 to 15) decades of scaling behavior comparable to the physical space multi-

Fourier, Wavelet and Monte Carlo Methods in Computational Finance

Fourier, Wavelet and Monte Carlo Methods in Computational Finance

Kees Oosterlee (1, 2)
1: CWI, Amsterdam; 2: Delft University of Technology, the Netherlands
AANMPDE-9-16, 7/7/2016

Joint work with Fang Fang, Marjon Ruijter, Luis Ortiz, Shashi Jain, Alvaro Leitao, Fei Cong, Qian Feng

Agenda

- Derivatives pricing, Feynman-Kac Theorem
- Fourier methods
- Basics of COS method
- Basics of SWIFT method
- Options with early-exercise features
- COS method for Bermudan options
- Monte Carlo method
- BSDEs, BCOS method (very briefly)

Feynman-Kac Theorem

The linear partial differential equation:

$\frac{\partial v(t,x)}{\partial t} + \mathcal{L}v(t,x) + g(t,x) = 0, \quad v(T,x) = h(x),$

with operator

$\mathcal{L}v(t,x) = \mu(x)\,Dv(t,x) + \frac{1}{2}\sigma^2(x)\,D^2v(t,x).$

Feynman-Kac theorem:

$v(t,x) = E\left[\int_t^T g(s, X_s)\,ds + h(X_T)\right],$

where $X_s$ is the solution to the FSDE

$dX_s = \mu(X_s)\,ds + \sigma(X_s)\,d\omega_s, \quad X_t = x.$
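The Feynman-Kac representation suggests a direct Monte Carlo estimator: simulate many paths of $X_s$ and average the payoff. The sketch below does this under assumptions that are not taken from the slides: an Euler-Maruyama time discretization, a left-point rule for the integral of g, and the hypothetical function name `feynman_kac_mc`. It is checked on a case with a known answer, $\mu = 0$, $\sigma = 1$, $g = 0$, $h(x) = x^2$, for which $v(t,x) = x^2 + (T - t)$ solves the PDE.

```python
import math
import random


def feynman_kac_mc(mu, sigma, g, h, t, x, T, n_paths=20000, n_steps=20, seed=0):
    """Estimate v(t, x) = E[ int_t^T g(s, X_s) ds + h(X_T) ] by simulation.

    Paths follow dX = mu(X) ds + sigma(X) dW, discretized with
    Euler-Maruyama (an assumption of this sketch).
    """
    rng = random.Random(seed)
    dt = (T - t) / n_steps
    sqrt_dt = math.sqrt(dt)
    total = 0.0
    for _ in range(n_paths):
        xs, s, running = x, t, 0.0
        for _ in range(n_steps):
            running += g(s, xs) * dt                      # left-point rule for the g integral
            xs += mu(xs) * dt + sigma(xs) * sqrt_dt * rng.gauss(0.0, 1.0)
            s += dt
        total += running + h(xs)                          # add terminal payoff h(X_T)
    return total / n_paths


# Test case with a closed form: v(t, x) = x^2 + (T - t).
estimate = feynman_kac_mc(
    mu=lambda x: 0.0,
    sigma=lambda x: 1.0,
    g=lambda s, x: 0.0,
    h=lambda x: x * x,
    t=0.0, x=1.0, T=1.0,
)
exact = 1.0 ** 2 + (1.0 - 0.0)
```

With these settings the statistical error dominates, and the estimate should agree with `exact` to within a few hundredths.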

Efficient Monte Carlo Methods for Estimating Failure Probabilities

Reliability Engineering and System Safety 165 (2017) 376–394

Efficient Monte Carlo methods for estimating failure probabilities

Andres Alban (a), Hardik A. Darji (a, b), Atsuki Imamura (c), Marvin K. Nakayama (c, *)

a: Mathematical Sciences Dept., New Jersey Institute of Technology, Newark, NJ 07102, USA
b: Mechanical Engineering Dept., New Jersey Institute of Technology, Newark, NJ 07102, USA
c: Computer Science Dept., New Jersey Institute of Technology, Newark, NJ 07102, USA

Keywords: Probabilistic safety assessment; Risk analysis; Structural reliability; Uncertainty; Monte Carlo; Variance reduction; Confidence intervals; Nuclear regulation; Risk-informed safety-margin characterization

Abstract: We develop efficient Monte Carlo methods for estimating the failure probability of a system. An example of the problem comes from an approach for probabilistic safety assessment of nuclear power plants known as risk-informed safety-margin characterization, but it also arises in other contexts, e.g., structural reliability, catastrophe modeling, and finance. We estimate the failure probability using different combinations of simulation methodologies, including stratified sampling (SS), (replicated) Latin hypercube sampling (LHS), and conditional Monte Carlo (CMC). We prove theorems establishing that the combination SS+LHS (resp., SS+CMC+LHS) has smaller asymptotic variance than SS (resp., SS+LHS). We also devise asymptotically valid (as the overall sample size grows large) upper confidence bounds for the failure probability for the methods considered. The confidence bounds may be employed to perform an asymptotically valid probabilistic safety assessment. We present numerical results demonstrating that the combination SS+CMC+LHS can result in substantial variance reductions compared to stratified sampling alone.
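As a rough illustration of the simplest ingredient named in the abstract, the sketch below compares crude Monte Carlo with proportionally allocated stratified sampling (SS) on a toy failure event. It does not implement LHS, CMC, or the paper's confidence bounds, and the toy system is an assumption chosen only because its failure probability is known: failure occurs when $U_1 + U_2 > 1.8$ for independent uniforms, so $p = 0.2^2 / 2 = 0.02$. The function names are hypothetical.

```python
import random


def crude_mc(fails, n, rng):
    """Crude Monte Carlo: fraction of n independent draws that fail."""
    hits = sum(fails(rng.random(), rng.random()) for _ in range(n))
    return hits / n


def stratified_mc(fails, n, n_strata, rng):
    """Stratify U1 into equal-width strata with proportional allocation."""
    per_stratum = n // n_strata
    estimate = 0.0
    for k in range(n_strata):
        hits = 0
        for _ in range(per_stratum):
            u1 = (k + rng.random()) / n_strata   # uniform within stratum k
            hits += fails(u1, rng.random())
        # Each stratum has probability 1 / n_strata.
        estimate += (hits / per_stratum) / n_strata
    return estimate


def fails(u1, u2):
    """Toy failure event (an assumption of this sketch)."""
    return u1 + u2 > 1.8


rng = random.Random(1)
p_crude = crude_mc(fails, 20000, rng)
p_strat = stratified_mc(fails, 20000, 10, rng)
p_true = 0.2 ** 2 / 2
```

Both estimators are unbiased for `p_true`. Stratifying on `u1` removes the between-stratum part of the variance, which is the basic mechanism that the combinations studied in the paper build on.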

Basic Statistics and Monte-Carlo Method -2

Applied Statistical Mechanics Lecture Note - 10

Basic Statistics and Monte-Carlo Method -2

Korea University, Department of Chemical and Biological Engineering, Kang Jeong-won (강정원)

Table of Contents
1. General Monte Carlo Method
2. Variance Reduction Techniques
3. Metropolis Monte Carlo Simulation

1.1 Introduction

Monte Carlo Method
- Any method that uses random numbers
- Random sampling of the population
- Application: science and engineering; management and finance

For a given subject, various techniques and error analysis will be presented.

Subject: evaluation of a definite integral, $I = \int_a^b \rho(x)\,dx$

The Monte Carlo method can be used to compute integrals of any dimension d (d-fold integrals).

Error comparison of d-fold integrals:
- Simpson's rule, ...: $E \propto N^{-1/d}$
- Monte Carlo method: $E \propto N^{-1/2}$ (purely statistical, does not rely on the dimension!)

The Monte Carlo method WINS when d >> 3.

1.2 Hit-or-Miss Method

Evaluation of a definite integral $I = \int_a^b \rho(x)\,dx$, with $h \ge \rho(x)$ for any x.

Probability that a random point resides inside the area:

$r = \frac{I}{(b-a)h} \approx \frac{N'}{N}$

N: total number of points
N': points that reside inside the region

$I \approx (b-a)\,h\,\frac{N'}{N}$

Algorithm:
- Start
- Set N: large integer; N' = 0
- Loop N times:
  - Choose a point x in [a,b]: $x = (b-a)u_1 + a$
  - Choose a point y in [0,h]: $y = h u_2$
  - If (x,y) resides inside the region, then N' = N' + 1
- I = (b-a) h (N'/N)
- End

Error Analysis of the Hit-or-Miss Method

It is important to know how accurate the results of simulations are.

The rule of 3σ's

Identifying the random variable: $X = \frac{1}{N}\sum_{n=1}^{N} X_n$

From
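The hit-or-miss procedure described in section 1.2 of the lecture note can be sketched as follows. The function name `hit_or_miss`, the test integrand $\rho(x) = x^2$ on [0, 1], and the bound h = 1 are assumptions made for illustration; "inside the region" is taken to mean $y \le \rho(x)$.

```python
import random


def hit_or_miss(rho, a, b, h, n, rng):
    """Estimate I = int_a^b rho(x) dx, assuming 0 <= rho(x) <= h on [a, b]."""
    hits = 0                                # N' in the lecture note
    for _ in range(n):
        x = (b - a) * rng.random() + a      # x = (b - a) u1 + a
        y = h * rng.random()                # y = h u2
        if y <= rho(x):                     # point lies under the curve
            hits += 1
    return (b - a) * h * hits / n           # I ~ (b - a) h N'/N


rng = random.Random(42)
estimate = hit_or_miss(lambda x: x * x, 0.0, 1.0, 1.0, 100000, rng)
exact = 1.0 / 3.0
```

Because each point is a Bernoulli trial with success probability $r = I / ((b-a)h)$, the standard error of the estimate is $(b-a)h\sqrt{r(1-r)/N}$, about 0.0015 for this example.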

A Tutorial on Quantile Estimation Via Monte Carlo

A Tutorial on Quantile Estimation via Monte Carlo

Hui Dong and Marvin K. Nakayama

Abstract: Quantiles are frequently used to assess risk in a wide spectrum of application areas, such as finance, nuclear engineering, and service industries. This tutorial discusses Monte Carlo simulation methods for estimating a quantile, also known as a percentile or value-at-risk, where p of a distribution's mass lies below its p-quantile. We describe a general approach that is often followed to construct quantile estimators, and show how it applies when employing naive Monte Carlo or variance-reduction techniques. We review some large-sample properties of quantile estimators. We also describe procedures for building a confidence interval for a quantile, which provides a measure of the sampling error.

1 Introduction

Numerous application settings have adopted quantiles as a way of measuring risk. For a fixed constant 0 < p < 1, the p-quantile of a continuous random variable is a constant x such that p of the distribution's mass lies below x. For example, the median is the 0.5-quantile. In finance, a quantile is called a value-at-risk, and risk managers commonly employ p-quantiles for p ≈ 1 (e.g., p = 0.99 or p = 0.999) to help determine capital levels needed to be able to cover future large losses with high probability; e.g., see [33]. Nuclear engineers use 0.95-quantiles in probabilistic safety assessments (PSAs) of nuclear power plants. PSAs are often performed with Monte Carlo, and the U.S. Nuclear Regulatory Commission (NRC) further requires that a PSA accounts for the

Hui Dong, Amazon.com Corporate LLC*, Seattle, WA 98109, USA

* This work is not related to Amazon, regardless of the affiliation.
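One common way to build a naive Monte Carlo quantile estimator from the definition above is to invert the empirical distribution function, which amounts to picking an order statistic of the sample. The sketch below assumes that construction; the function name `quantile_estimate` and the exponential test distribution are illustrative choices, not taken from the tutorial.

```python
import math
import random


def quantile_estimate(samples, p):
    """Return the smallest sample value x whose empirical CDF is >= p.

    This equals the ceil(n * p)-th order statistic of the sample.
    """
    ordered = sorted(samples)
    k = math.ceil(len(ordered) * p)     # 1-based rank of the order statistic
    return ordered[max(k, 1) - 1]


# Example: 0.95-quantile of an Exponential(1) distribution.
rng = random.Random(3)
samples = [rng.expovariate(1.0) for _ in range(50000)]
estimate = quantile_estimate(samples, 0.95)
exact = -math.log(1.0 - 0.95)           # inverse CDF of Exponential(1)
```

The sampling error of such an estimator depends on the density at the quantile, so it tends to grow as p approaches 1 where the density is small; this is one motivation for the variance-reduction techniques and confidence intervals that the tutorial goes on to discuss.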

Two-Dimensional Monte Carlo Filter for a Non-Gaussian Environment

Electronics, Article

Two-Dimensional Monte Carlo Filter for a Non-Gaussian Environment

Xingzi Qiang (1), Rui Xue (1, *) and Yanbo Zhu (2)

1: School of Electrical and Information Engineering, Beihang University, Beijing 100191, China
2: Aviation Data Communication Corporation, Beijing 100191, China
*: Correspondence

Abstract: In a non-Gaussian environment, the accuracy of a Kalman filter might be reduced. In this paper, a two-dimensional Monte Carlo filter is proposed to overcome the challenge of the non-Gaussian environment for filtering. The two-dimensional Monte Carlo (TMC) method is first proposed to improve the efficacy of the sampling. Then, the TMC filter (TMCF) algorithm is proposed to solve the non-Gaussian filter problem based on the TMC. In the TMCF, particles are deployed in the confidence interval uniformly in terms of the sampling interval, and their weights are calculated based on Bayesian inference. Then, the posterior distribution is described more accurately with fewer particles and their weights. Different from the particle filter (PF), the TMCF completes the transfer of the distribution using a series of calculations of weights and uses particles to occupy the state space in the confidence interval. Numerical simulations demonstrated that the accuracy of the TMCF approximates that of the Kalman filter (KF) (the error is about $10^{-6}$) in a two-dimensional linear/Gaussian environment. In a two-dimensional linear/non-Gaussian system, the accuracy of the TMCF is improved by 0.01, and the computation time is reduced from 0.20 s to 0.067 s, compared with the particle filter.

The Monte Carlo Method

The Monte Carlo Method

Introduction

Let S denote a set of numbers or vectors, and let real-valued function g(x) be defined over S. There are essentially three fundamental computational problems associated with S and g.

- Integral Evaluation: Evaluate $\int_S g(x)\,dx$. Note that if S is discrete, then integration can be replaced by summation.
- Optimization: Find $x_0 \in S$ that minimizes g over S. In other words, find $x_0 \in S$ such that, for all $x \in S$, $g(x_0) \le g(x)$.
- Mixed Optimization and Integration: If g is defined over the space $S \times \Theta$, where $\Theta$ is a parameter space, then the problem is to determine $\theta \in \Theta$ for which $\int_S g(x; \theta)\,dx$ is a minimum.

The mathematical disciplines of real analysis (e.g. calculus), functional analysis, and combinatorial optimization offer deterministic algorithms for solving these problems (by solving we mean finding an exact solution or an approximate solution) for special instances of S and g. For example, if S is a closed interval of numbers [a, b] and g is a polynomial, then

$\int_S g(x)\,dx = \int_a^b g(x)\,dx = G(b) - G(a),$

where G is an antiderivative of g. As another example, if G = (V, E, w) is a connected weighted simple graph, S is the set of all spanning trees of G, and g(x) denotes the sum of all the weights on the edges of spanning tree x, then Prim's algorithm may be used to find a spanning tree of G that minimizes g.

Fundamentals of the Monte Carlo Method for Neutral and Charged Particle Transport

Fundamentals of the Monte Carlo method for neutral and charged particle transport

Alex F Bielajew
The University of Michigan, Department of Nuclear Engineering and Radiological Sciences
2927 Cooley Building (North Campus), 2355 Bonisteel Boulevard, Ann Arbor, Michigan 48109-2104, U. S. A.
Tel: 734 764 6364, Fax: 734 763 4540

© 1998–2020 Alex F Bielajew
© 1998–2020 The University of Michigan
May 31, 2020

Preface

This book arises out of a course I am teaching for a two-credit (26 hour) graduate-level course Monte Carlo Methods being taught at the Department of Nuclear Engineering and Radiological Sciences at the University of Michigan.

AFB, May 31, 2020

Contents

1 What is the Monte Carlo method?
  1.1 Why is Monte Carlo?
  1.2 Some history
2 Elementary probability theory
  2.1 Continuous random variables
    2.1.1 One-dimensional probability distributions
    2.1.2 Two-dimensional probability distributions
    2.1.3 Cumulative probability distributions
  2.2 Discrete random variables
3 Random Number Generation
  3.1 Linear congruential random number generators
  3.2 Long sequence random number generators
4 Sampling Theory
  4.1 Invertible cumulative distribution functions (direct method)
  4.2 Rejection method
  4.3 Mixed methods
  4.4 Examples of sampling techniques
    4.4.1 Circularly collimated parallel beam
    4.4.2 Point source collimated to a planar circle
    4.4.3 Mixed method example
    4.4.4 Multi-dimensional example
5 Error estimation
  5.1 Direct error estimation
  5.2 Batch statistics error estimation

1. Monte Carlo Integration

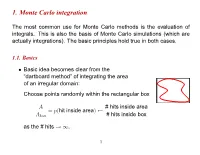

1. Monte Carlo integration

The most common use for Monte Carlo methods is the evaluation of integrals. This is also the basis of Monte Carlo simulations (which are actually integrations). The basic principles hold true in both cases.

1.1. Basics

• Basic idea becomes clear from the "dartboard method" of integrating the area of an irregular domain: choose points randomly within the rectangular box. Then

$\frac{A}{A_{\text{box}}} = p(\text{hit inside area}) \approx \frac{\#\,\text{hits inside area}}{\#\,\text{hits inside box}}$

with the ratio converging as the # hits → ∞.

• Buffon's (1707–1788) needle experiment to determine π:
  – Throw needles, length l, on a grid of lines distance d apart.
  – Probability that a needle falls on a line: $P = \frac{2l}{\pi d}$
  – Aside: Lazzarini 1901: Buffon's experiment with 34080 throws: $\pi \approx \frac{355}{113} = 3.14159292$. Way too good result! (See "π through the ages", http://www-groups.dcs.st-and.ac.uk/~history/HistTopics/Pi_through_the_ages.html)

1.2. Standard Monte Carlo integration

• Let us consider an integral in d dimensions:

$I = \int_V d^d x\, f(x)$

Let V be a d-dim hypercube, with $0 \le x_\mu \le 1$ (for simplicity).

• Monte Carlo integration:
  - Generate N random vectors $x_i$ from flat distribution ($0 \le (x_i)_\mu \le 1$).
  - As N → ∞, $\frac{V}{N}\sum_{i=1}^{N} f(x_i) \to I$.
  - Error: ∝ $1/\sqrt{N}$ independent of d! (Central limit)

• "Normal" numerical integration methods (Numerical Recipes):
  - Divide each axis in n evenly spaced intervals
  - Total # of points $N \equiv n^d$
  - Error: ∝ 1/n (Midpoint rule), ∝ $1/n^2$ (Trapezoidal rule), ∝ $1/n^4$ (Simpson)

• If d is small, Monte Carlo integration has much larger errors than standard methods.
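The standard Monte Carlo integration recipe of section 1.2 can be sketched in a few lines. The function name `mc_integrate_hypercube` and the test integrand $f(x) = \sum_\mu x_\mu^2$ in d = 5 dimensions (exact value d/3) are assumptions made for illustration. The sketch also returns the usual standard-error estimate, which shows the $1/\sqrt{N}$ behaviour without reference to d.

```python
import math
import random


def mc_integrate_hypercube(f, d, n, rng):
    """Estimate the integral of f over the unit hypercube [0, 1]^d.

    Returns (estimate, standard_error). The volume V is 1, so the
    estimate is simply the sample mean of f.
    """
    total = 0.0
    total_sq = 0.0
    for _ in range(n):
        x = [rng.random() for _ in range(d)]   # flat distribution on [0, 1]^d
        fx = f(x)
        total += fx
        total_sq += fx * fx
    mean = total / n
    variance = max(total_sq / n - mean * mean, 0.0)
    return mean, math.sqrt(variance / n)       # error shrinks like 1/sqrt(n)


rng = random.Random(7)
estimate, std_error = mc_integrate_hypercube(
    lambda x: sum(c * c for c in x), 5, 20000, rng
)
exact = 5.0 / 3.0
```

A grid-based rule with the same budget of 20000 points would have only about 7 points per axis in 5 dimensions, which is the practical content of the comparison in the notes.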

Lab 4: Monte Carlo Integration and Variance Reduction

Lab 4: Monte Carlo Integration and Variance Reduction

Lecturer: Zhao Jianhua, Department of Statistics, Yunnan University of Finance and Economics

Task

The objective in this lab is to learn the methods for Monte Carlo Integration and Variance Reduction, including Monte Carlo Integration, Antithetic Variables, Control Variates, Importance Sampling, Stratified Sampling, Stratified Importance Sampling.

1 Monte Carlo Integration

1.1 Simple Monte Carlo estimator

1.1.1 Example 5.1 (Simple Monte Carlo integration)

Compute a Monte Carlo (MC) estimate of $\theta = \int_0^1 e^{-x}\,dx$ and compare the estimate with the exact value.

    m <- 10000
    x <- runif(m)
    theta.hat <- mean(exp(-x))
    print(theta.hat)
    ## [1] 0.6324415
    print(1 - exp(-1))
    ## [1] 0.6321206

The estimate is $\hat{\theta} \approx 0.6355$ and $\theta = 1 - e^{-1} \approx 0.6321$.

1.1.2 Example 5.2 (Simple Monte Carlo integration, cont.)

Compute a MC estimate of $\theta = \int_2^4 e^{-x}\,dx$ and compare the estimate with the exact value of the integral.

    m <- 10000
    x <- runif(m, min = 2, max = 4)
    theta.hat <- mean(exp(-x)) * 2
    print(theta.hat)
    ## [1] 0.1168929
    print(exp(-2) - exp(-4))
    ## [1] 0.1170196

The estimate is $\hat{\theta} \approx 0.1172$ and $\theta = e^{-2} - e^{-4} \approx 0.1170$.

1.1.3 Example 5.3 (Monte Carlo integration, unbounded interval)

Use the MC approach to estimate the standard normal cdf

$\Phi(x) = \int_{-\infty}^{x} \frac{1}{\sqrt{2\pi}}\, e^{-t^2/2}\,dt.$

Since the integration covers an unbounded interval, we break this problem into two cases: x ≥ 0 and x < 0, and use the symmetry of the normal density to handle the second case.
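One way to carry out Example 5.3 is sketched below in Python rather than R. The excerpt stops before giving the details, so the specific approach is an assumption: for x ≥ 0 the sketch writes $\Phi(x) = \frac{1}{2} + \frac{1}{\sqrt{2\pi}}\int_0^x e^{-t^2/2}\,dt$, substitutes t = xu to get an integral over [0, 1], and estimates it with uniform draws; for x < 0 it uses $\Phi(x) = 1 - \Phi(-x)$. The function name `normal_cdf_mc` is hypothetical.

```python
import math
import random


def normal_cdf_mc(x, n, rng):
    """Monte Carlo estimate of the standard normal cdf at x."""
    if x < 0:
        # Symmetry of the normal density handles the negative case.
        return 1.0 - normal_cdf_mc(-x, n, rng)
    total = 0.0
    for _ in range(n):
        u = rng.random()
        # Integrand after the change of variables t = x * u on [0, 1].
        total += x * math.exp(-((x * u) ** 2) / 2.0)
    return 0.5 + (total / n) / math.sqrt(2.0 * math.pi)


def normal_cdf_exact(x):
    """Reference value via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


rng = random.Random(11)
estimates = {x: normal_cdf_mc(x, 20000, rng) for x in (-1.0, 0.0, 1.0, 2.0)}
```

Comparing `estimates[x]` with `normal_cdf_exact(x)` plays the same role as comparing `theta.hat` with the exact value in Examples 5.1 and 5.2.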

Bayesian Computation: MCMC and All That

Bayesian Computation: MCMC and All That

SCMA V Short-Course, Pennsylvania State University
Alan Heavens, Tom Loredo, and David A van Dyk
11 and 12 June 2011

11 June Morning Session, 10.00 - 13.00
- 10.00 - 11.15: The Basics: Bayes Theorem, Priors, and Posteriors (Heavens)
- 11.15 - 11.30: Coffee Break
- 11.30 - 13.00: Low Dimensional Computing Part I (Loredo)

11 June Afternoon Session, 14.15 - 17.30
- 14.15 - 15.15: Low Dimensional Computing Part II (Loredo)
- 15.15 - 16.00: MCMC Part I (van Dyk)
- 16.00 - 16.15: Coffee Break
- 16.15 - 17.00: MCMC Part II (van Dyk)
- 17.00 - 17.30: R Tutorial (van Dyk)

12 June Morning Session, 10.00 - 13.15
- 10.00 - 10.45: Data Augmentation and PyBLoCXS Demo (van Dyk)
- 10.45 - 11.45: MCMC Lab (van Dyk)
- 11.45 - 12.00: Coffee Break
- 12.00 - 13.15: Output Analysis* (Heavens and Loredo)

12 June Afternoon Session, 14.30 - 17.30
- 14.30 - 15.25: MCMC and Output Analysis Lab (Heavens)
- 15.25 - 16.05: Hamiltonian Monte Carlo (Heavens)
- 16.05 - 16.20: Coffee Break
- 16.20 - 17.00: Overview of Other Advanced Methods (Loredo)
- 17.00 - 17.30: Final Q&A (Heavens, Loredo, and van Dyk)

* Heavens (45 min) will cover basic convergence issues and methods, such as G&R's multiple chains. Loredo (30 min) will discuss Resampling

The Bayesics

Alan Heavens, University of Edinburgh, UK
Lectures given at SCMA V, Penn State, June 2011

Outline
- Types of problem
- Bayes' theorem
- Parameter Estimation: Marginalisation, Errors
- Error prediction and experimental design: Fisher Matrices
- Model Selection

LCDM fits the WMAP data well.