Basics of Video


Recommended publications

An Improved SPSIM Index for Image Quality Assessment

Symmetry, Article. An Improved SPSIM Index for Image Quality Assessment. Mariusz Frackiewicz *, Grzegorz Szolc and Henryk Palus. Department of Data Science and Engineering, Silesian University of Technology, Akademicka 16, 44-100 Gliwice, Poland; [email protected] (G.S.); [email protected] (H.P.). * Correspondence: [email protected]; Tel.: +48-32-2371066

Abstract: Objective image quality assessment (IQA) measures are playing an increasingly important role in the evaluation of digital image quality. New IQA indices are expected to be strongly correlated with subjective observer evaluations expressed by Mean Opinion Score (MOS) or Difference Mean Opinion Score (DMOS). One such recently proposed index is the SuperPixel-based SIMilarity (SPSIM) index, which uses superpixel patches instead of a rectangular pixel grid. The authors of this paper have proposed three modifications to the SPSIM index. For this purpose, the color space used by SPSIM was changed and the way SPSIM determines similarity maps was modified using methods derived from an algorithm for computing the Mean Deviation Similarity Index (MDSI). The third modification was a combination of the first two. These three new quality indices were used in the assessment process. The experimental results obtained for many color images from five image databases demonstrated the advantages of the proposed SPSIM modifications.

Keywords: image quality assessment; image databases; superpixels; color image; color space; image quality measures

Citation: Frackiewicz, M.; Szolc, G.; Palus, H. An Improved SPSIM Index for Image Quality Assessment. Symmetry 2021, 13, 518. https://doi.org/10.3390/sym13030518

1. Introduction. Quantitative domination of acquired color images over gray level images results in the development not only of color image processing methods but also of Image Quality Assessment (IQA) methods.

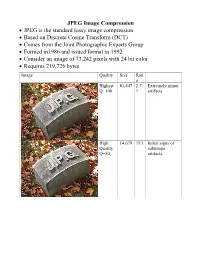

JPEG Image Compression

JPEG Image Compression. JPEG is the standard lossy image compression method. It is based on the Discrete Cosine Transform (DCT) and comes from the Joint Photographic Experts Group, which was formed in 1986 and issued the format in 1992.

Consider an image of 73,242 pixels with 24 bit color. Uncompressed, it requires 219,726 bytes.

| Quality | Size (bytes) | Ratio | Comment |
| --- | --- | --- | --- |
| Highest (Q=100) | 81,447 | 2.7:1 | Extremely minor artifacts |
| High (Q=50) | 14,679 | 15:1 | Initial signs of subimage artifacts |
| Medium | 9,407 | 23:1 | Stronger artifacts; loss of high frequency information |
| Low | 4,787 | 46:1 | Severe high frequency loss leads to obvious artifacts on subimage boundaries ("macroblocking") |
| Lowest | 1,523 | 144:1 | Extreme loss of color and detail; the leaves are nearly unrecognizable |

JPEG: how it works

Color translation. Begin with a color translation: RGB goes to Y′CBCR, a luma component and two chroma components. Y is brightness, CB is B-Y, and CR is R-Y.

Downsampling (chroma subsampling). The chroma data resolution is reduced by a factor of 2 or 3, because the eye is less sensitive to fine color details than to brightness.

Block splitting. Each channel is broken into 8x8 blocks (no subsampling), 16x8 blocks (most common at medium compression), or 16x16 blocks. The remaining areas of incomplete blocks must be filled in.

DCT centering. Center the data about 0. The range is now -128 to 127, and the middle is zero.

Discrete cosine transform. Apply the 2D DCT formula to each block, which creates a new matrix. The top left (largest) entry is the DC coefficient, the constant component, which gives the basic hue for the block. The remaining 63 entries are the AC coefficients. The DCT transforms an 8×8 block of input values to a linear combination of these 64 patterns.
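The centering and transform steps described above can be sketched in a few lines of Python. This is a minimal illustration rather than a JPEG encoder: the helper names (`dct_matrix`, `forward_dct_block`, `inverse_dct_block`) are hypothetical, NumPy is assumed to be available, and the orthonormal DCT-II scaling is assumed for the 8×8 transform. Quantization and entropy coding are left out.

```python
import numpy as np

def dct_matrix(n=8):
    """Orthonormal DCT-II basis matrix (assumed scaling for this sketch)."""
    k = np.arange(n).reshape(-1, 1)   # frequency index
    m = np.arange(n).reshape(1, -1)   # sample index
    mat = np.sqrt(2.0 / n) * np.cos((2 * m + 1) * k * np.pi / (2 * n))
    mat[0, :] = np.sqrt(1.0 / n)      # constant (DC) basis row
    return mat

def forward_dct_block(block):
    """Center an 8-bit block about zero, then apply the 2D DCT."""
    shifted = block.astype(np.float64) - 128.0   # range becomes -128..127
    c = dct_matrix(block.shape[0])
    return c @ shifted @ c.T

def inverse_dct_block(coeffs):
    """Undo the 2D DCT and the centering step."""
    c = dct_matrix(coeffs.shape[0])
    return c.T @ coeffs @ c + 128.0

# Uncompressed size of the example image: 73,242 pixels at 3 bytes each.
raw_bytes = 73_242 * 3

# A flat block has only a DC coefficient; all 63 AC coefficients vanish.
flat = np.full((8, 8), 138, dtype=np.uint8)
coeffs = forward_dct_block(flat)
restored = inverse_dct_block(coeffs)
```

For the flat block, all of the energy lands in the top-left coefficient, which matches the description of the DC coefficient as the constant component of the block.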

COLOR SPACE MODELS for VIDEO and CHROMA SUBSAMPLING

COLOR SPACE MODELS for VIDEO and CHROMA SUBSAMPLING

Color space. A color model is an abstract mathematical model describing the way colors can be represented as tuples of numbers, typically as three or four values or color components (e.g. RGB and CMYK are color models). However, a color model with no associated mapping function to an absolute color space is a more or less arbitrary color system with little connection to the requirements of any given application. Adding a certain mapping function between the color model and a certain reference color space results in a definite "footprint" within the reference color space. This "footprint" is known as a gamut, and, in combination with the color model, defines a new color space. For example, Adobe RGB and sRGB are two different absolute color spaces, both based on the RGB model. In the most generic sense of the definition above, color spaces can be defined without the use of a color model. These spaces, such as Pantone, are in effect a given set of names or numbers which are defined by the existence of a corresponding set of physical color swatches. This article focuses on the mathematical model concept.

Understanding the concept. Most people have heard that a wide range of colors can be created by the primary colors red, blue, and yellow, if working with paints. Those colors then define a color space. We can specify the amount of red color as the X axis, the amount of blue as the Y axis, and the amount of yellow as the Z axis, giving us a three-dimensional space, wherein every possible color has a unique position.

The Video Codecs Behind Modern Remote Display Protocols Rody Kossen

The video codecs behind modern remote display protocols. Rody Kossen, Consultant. [email protected] @r_kossen rody-kossen-186b4b40 www.rodykossen.com

Agenda: The basics • H264 – The magician • Other codecs • Hardware support (NVIDIA)

The basics. Let's start with some terms: YCbCr, RGB, Bit Depth, YUV.

Color Bit-Depth = the number of different colors. The term is used in 2 ways:
• The number of bits used to indicate the color of a single pixel, for example 24 bit
• The number of bits used for each color component of a single pixel (Red, Green, Blue), for example 8 bit

It can be combined with Alpha, which describes transparency. Most commonly used:
• High Color – 16 bit = 5 bits per color + 1 unused bit = 32,768 colors = 2^15
• True Color – 24 bit Color + 8 bit Alpha = 8 bits per color = 16,777,216 colors
• Deep Color – 30 bit Color + 10 bit Alpha = 10 bits per color = 1.073 billion colors

Color Gamut. Describes the subset of colors which can be displayed in relation to the human eye. Standardized gamuts:
• Rec.709 (Blu-ray)
• Rec.2020 (Ultra HD Blu-ray)
• Adobe RGB
• sRGB

Compression.
• Lossy: irreversible compression, used to reduce data size. Examples: MP3, JPEG, MPEG-4
• Lossless: reversible compression. Examples: ZIP, PNG, FLAC or Dolby TrueHD
• Visually lossless: irreversible compression, but the difference can't be seen by the human eye

YCbCr Encoding. RGB uses Red, Green and Blue values to describe a color. YCbCr is a different way of storing color:
• Y = Luma, or brightness of the color
• Cr = Chroma difference
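The color counts quoted for each bit depth follow directly from raising 2 to the number of color bits. A minimal Python check, where the helper name `color_count` is hypothetical and alpha bits are deliberately excluded because they describe transparency rather than color:

```python
def color_count(bits_per_channel: int, channels: int = 3) -> int:
    """Number of distinct colors for a given per-channel bit depth."""
    return 2 ** (bits_per_channel * channels)

high_color = color_count(5)    # 5 bits per color, 15 color bits in total
true_color = color_count(8)    # 8 bits per color, 24 color bits in total
deep_color = color_count(10)   # 10 bits per color, 30 color bits in total

print(high_color, true_color, deep_color)
```

The three values come out as 32,768, 16,777,216 and 1,073,741,824, which agrees with the figures on the slide.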

Creating 4K/UHD Content Poster

Creating 4K/UHD Content

Colorimetry. The television color specification is based on standards defined by the CIE (Commission Internationale de L'Éclairage) in 1931. The CIE specified an idealized set of primary XYZ tristimulus values. This set is a group of all-positive values converted from R'G'B' where Y is proportional to the luminance of the additive mix. This specification is used as the basis for color within 4K/UHDTV1 that supports both ITU-R BT.709 and BT.2020.

Figure A2. Using a 100% color bar signal to show conversion of RGB levels from 700 mV (100%) to 0 mV (0%) for each color component with a color bar split field BT.2020 and BT.709 test signal. The WFM8300 was configured for BT.709 colorimetry as shown in the video session display.

Image Format / SMPTE Standards. Square Division separates the image into quad links for distribution. UHDTV 1: 3840x2160 (4x1920x1080).

Table B1: SMPTE Standards

| Standard | Title |
| --- | --- |
| ST 125 | SDTV Component Video Signal Coding for 4:4:4 and 4:2:2 for 13.5 MHz and 18 MHz Systems |
| ST 240 | Television – 1125-Line High-Definition Production Systems – Signal Parameters |
| ST 259 | Television – SDTV Digital Signal/Data – Serial Digital Interface |
| ST 272 | Television – Formatting AES/EBU Audio and Auxiliary Data into Digital Video Ancillary Data Space |
| ST 274 | Television – 1920 x 1080 Image Sample Structure, Digital Representation and Digital Timing Reference Sequences for Multiple Picture Rates |
| ST 296 | 1280 x 720 Progressive Image 4:2:2 and 4:4:4 Sample Structure – Analog & Digital Representation & Analog Interface |
| ST 299-0/1/2 | 24-Bit Digital Audio Format for SMPTE Bit-Serial Interfaces at 1.5 Gb/s and 3 Gb/s – Document Suite |

Table A1: Illuminant (Ill.) Value

| Source | x | y |
| --- | --- | --- |
| Illuminant A: Tungsten Filament Lamp, 2854°K | 0.4476 | 0.4075 |

Measuring Perceived Color Difference Using YIQ Color Space

Programación Matemática y Software (2010) Vol. 2, No. 2. ISSN: 2007-3283. Received: August 17, 2010. Accepted: November 25, 2010. Published online: December 30, 2010.

Measuring perceived color difference using YIQ NTSC transmission color space in mobile applications. Yuriy Kotsarenko, Fernando Ramos. TECNOLÓGICO DE MONTERREY, CAMPUS CUERNAVACA.

Summary: This work introduces several formulas for computing the perceived difference between colors using the YIQ color space. The classical formulas and their derivatives, which use the CIELAB and CIELUV spaces, require many arithmetic transformations of input values that are commonly defined by red, green, and blue components, and are therefore very costly to implement on mobile devices. The alternative formulas proposed in this work, based on the YIQ color space, are simple and fast to compute, even in real time. The work includes a comparison between the classical and the proposed formulas using two different groups of experiments. The first group focuses on evaluating the perceived difference using different formulas, while the second group measures the speed of each formula when processing images. The experimental results indicate that the formulas proposed in this work are perceptually very close to those of CIELAB and CIELUV but significantly faster, which makes them good candidates for measuring color differences on mobile devices and in real-time applications.

Abstract: Alternative color difference formulas are presented for measuring the perceived difference between two color samples defined in YIQ color space.

AN9717: YCbCr to RGB Considerations (Multimedia)

YCbCr to RGB Considerations. Application Note AN9717, March 1997. Author: Keith Jack

Introduction. Many video ICs now generate 4:2:2 YCbCr video data. The YCbCr color space was developed as part of ITU-R BT.601 (formerly CCIR 601) during the development of a world-wide digital component video standard. Some of these video ICs also generate digital RGB video data, using lookup tables to assist with the YCbCr to RGB conversion. By understanding the YCbCr to RGB conversion process, the lookup tables can be eliminated, resulting in a substantial cost savings. This application note covers some of the considerations for converting the YCbCr data to RGB data without the use of lookup tables. The process basically consists of three steps:

Converting 4:2:2 to 4:4:4 YCbCr. Prior to converting YCbCr data to R´G´B´ data, the 4:2:2 YCbCr data must be converted to 4:4:4 YCbCr data. For the YCbCr to RGB conversion process, each Y sample must have a corresponding Cb and Cr sample.

Figure 1 illustrates the positioning of YCbCr samples for the 4:4:4 format. Each sample has a Y, a Cb, and a Cr value. Each sample is typically 8 bits (consumer applications) or 10 bits (professional editing applications) per component.

Figure 2 illustrates the positioning of YCbCr samples for the 4:2:2 format. For every two horizontal Y samples, there is one Cb and Cr sample. Each sample is typically 8 bits (consumer applications) or 10 bits (professional editing applications) per component.
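A rough Python sketch of the two ideas in this excerpt: giving every Y sample its own Cb and Cr, then converting to R´G´B´ arithmetically instead of with lookup tables. Several things here are assumptions rather than content of the excerpt: chroma is upsampled by simple sample replication (interpolation is another option), the coefficients are the commonly cited 8-bit BT.601 values with Y in 16..235 and Cb/Cr centered on 128, and the function names are hypothetical. NumPy is assumed to be available.

```python
import numpy as np

def upsample_422_to_444(cb, cr):
    """Repeat each chroma sample so every Y sample has a Cb and a Cr.

    Replication is the simplest choice and is an assumption of this sketch.
    """
    return np.repeat(cb, 2, axis=-1), np.repeat(cr, 2, axis=-1)

def ycbcr_to_rgb(y, cb, cr):
    """Convert 8-bit 4:4:4 YCbCr to 8-bit R'G'B' without lookup tables.

    Uses commonly cited BT.601 coefficients (assumed, not quoted from the note).
    """
    y = y.astype(np.float64) - 16.0
    cb = cb.astype(np.float64) - 128.0
    cr = cr.astype(np.float64) - 128.0
    r = 1.164 * y + 1.596 * cr
    g = 1.164 * y - 0.813 * cr - 0.392 * cb
    b = 1.164 * y + 2.017 * cb
    rgb = np.stack([r, g, b], axis=-1)
    # Round and saturate to the 8-bit range.
    return np.clip(np.rint(rgb), 0, 255).astype(np.uint8)

# One 4:2:2 line: four Y samples share two Cb and two Cr samples.
y_row = np.array([16, 235, 16, 235], dtype=np.uint8)
cb_row = np.array([128, 128], dtype=np.uint8)
cr_row = np.array([128, 128], dtype=np.uint8)

cb_full, cr_full = upsample_422_to_444(cb_row, cr_row)
rgb_row = ycbcr_to_rgb(y_row, cb_full, cr_full)
```

With neutral chroma (Cb = Cr = 128), Y = 16 maps to black and Y = 235 maps to white, which is a quick sanity check on the scaling and the clamping.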

Measuring Perceived Color Difference Using YIQ NTSC Transmission Color Space in Mobile Applications

Programación Matemática y Software (2010) Vol. 2, No. 2. Dirección de Reservas de Derecho: 04-2009-011611475800-102

Measuring perceived color difference using YIQ NTSC transmission color space in mobile applications. Yuriy Kotsarenko, Fernando Ramos. TECNOLÓGICO DE MONTERREY, CAMPUS CUERNAVACA.

Summary. This work introduces several formulas for computing the perceived difference between colors using the YIQ color space. The classical formulas and their derivatives, which use the CIELAB and CIELUV spaces, require many arithmetic transformations of input values that are commonly defined by red, green, and blue components, and are therefore very costly to implement on mobile devices. The alternative formulas proposed in this work, based on the YIQ color space, are simple and fast to compute, even in real time. The work includes a comparison between the classical and the proposed formulas using two different groups of experiments. The first group focuses on evaluating the perceived difference using different formulas, while the second group measures the speed of each formula when processing images. The experimental results indicate that the formulas proposed in this work are perceptually very close to those of CIELAB and CIELUV but significantly faster, which makes them good candidates for measuring color differences on mobile devices and in real-time applications.

Abstract. Alternative color difference formulas are presented for measuring the perceived difference between two color samples defined in YIQ color space.

Color Image Enhancement with Saturation Adjustment Method

Journal of Applied Science and Engineering, Vol. 17, No. 4, pp. 341-352 (2014). DOI: 10.6180/jase.2014.17.4.01

Color Image Enhancement with Saturation Adjustment Method. Jen-Shiun Chiang (1), Chih-Hsien Hsia (2)*, Hao-Wei Peng (1) and Chun-Hung Lien (3). (1) Department of Electrical Engineering, Tamkang University, Tamsui, Taiwan 251. (2) Department of Electrical Engineering, Chinese Culture University, Taipei, Taiwan 111. (3) Commercialization and Service Center, Industrial Technology Research Institute, Taipei, Taiwan 106.

Abstract: In the traditional color adjustment approach, people tried to adjust the luminance and saturation separately. This approach over-saturates colors very easily and makes the image look unnatural. In this study, we use the concept of exposure compensation to simulate brightness changes and to find the relationship among luminance, saturation, and hue. The simulation indicates that saturation changes with the change of luminance, and it also shows that there are certain relationships between the color variation model and the YCbCr color model. Based on these observations, and taking the characteristics of human vision into account, we propose a new saturation method to enhance the visual effect of an image. As a result, the proposed approach makes the image more vivid and improves its contrast. Most importantly, unlike the over-saturation caused by the conventional approach, our approach prevents over-saturation and makes the adjusted image look natural.

Key Words: Color Adjustment, Human Vision, Color Image Processing, YCbCr, Over-Saturation

1. Introduction. With the advanced development of technology, the ... over-saturated images. An over-saturated image looks unnatural due to the loss of color details in high saturation area of the image.

Color Images, Color Spaces and Color Image Processing

Color images, color spaces and color image processing. Ole-Johan Skrede, 08.03.2017. INF2310 - Digital Image Processing, Department of Informatics, The Faculty of Mathematics and Natural Sciences, University of Oslo. After original slides by Fritz Albregtsen.

Today's lecture
∙ Color, color vision and color detection
∙ Color spaces and color models
∙ Transitions between color spaces
∙ Color image display
∙ Look up tables for colors
∙ Color image printing
∙ Pseudocolors and fake colors
∙ Color image processing
∙ Sections in Gonzalez & Woods:
  ∙ 6.1 Color Fundamentals
  ∙ 6.2 Color Models
  ∙ 6.3 Pseudocolor Image Processing
  ∙ 6.4 Basics of Full-Color Image Processing
  ∙ 6.5.5 Histogram Processing
  ∙ 6.6 Smoothing and Sharpening
  ∙ 6.7 Image Segmentation Based on Color

Motivation
∙ We can differentiate between thousands of colors
∙ Colors make it easy to distinguish objects
  ∙ Visually
  ∙ And digitally
∙ We need to:
  ∙ Know what color space to use for different tasks
  ∙ Convert between color spaces
  ∙ Store color images rationally and compactly
  ∙ Know techniques for color image printing

The color of the light from the sun: spectral exitance. The light from the sun can be modeled with the spectral exitance of a black surface (the radiant exitance of a surface per unit wavelength)

$$M(\lambda) = \frac{2\pi h c^2}{\lambda^5}\,\frac{1}{\exp\left(\frac{hc}{\lambda k T}\right) - 1}$$

where
∙ h ≈ 6.626 070 04 × 10^−34 m^2 kg s^−1 is the Planck constant.
∙ c = 299 792 458 m s^−1 is the speed of light.
∙ λ [m] is the radiation wavelength.
∙ k ≈ 1.380 648 52 × 10^−23 m^2 kg s^−2 K^−1 is the Boltzmann constant.
∙ T [K] is the surface temperature of the radiating body.

Figure 1: Spectral exitance of a black body surface for different
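The spectral exitance formula can be evaluated numerically to see where the emission peaks. The sketch below uses the constants listed on the slide. The temperature of 5778 K (roughly the surface temperature of the sun), the wavelength grid, and the names `spectral_exitance` and `peak_wavelength` are assumptions made for illustration. NumPy is assumed to be available.

```python
import numpy as np

H = 6.62607004e-34   # Planck constant [m^2 kg s^-1]
C = 299792458.0      # speed of light [m s^-1]
K = 1.38064852e-23   # Boltzmann constant [m^2 kg s^-2 K^-1]

def spectral_exitance(wavelength_m, temperature_k):
    """Black body spectral exitance M(lambda) per unit wavelength."""
    prefactor = 2.0 * np.pi * H * C**2 / wavelength_m**5
    # expm1(x) computes exp(x) - 1 accurately, matching the denominator.
    return prefactor / np.expm1(H * C / (wavelength_m * K * temperature_k))

# Assumed, illustrative surface temperature close to that of the sun.
T_SUN = 5778.0

# Wavelength grid from 100 nm to 3000 nm in 0.1 nm steps.
wavelengths = np.linspace(100e-9, 3000e-9, 29001)
exitance = spectral_exitance(wavelengths, T_SUN)
peak_wavelength = wavelengths[np.argmax(exitance)]

print(f"peak near {peak_wavelength * 1e9:.1f} nm")
```

The peak falls at roughly 500 nm, inside the visible range, and agrees with Wien's displacement law (peak wavelength ≈ 2.898 × 10^−3 m K divided by T).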

Image Compression GIF (Graphics Interchange Format) LZW (Lempel

Image Compression
• GIF (Graphics Interchange Format)
• PNG (Portable Network Graphics)/MNG (Multiple-image Network Graphics)
• JPEG (Joint Photographic Experts Group)

GIF (Graphics Interchange Format)
• Introduced by Compuserve in 1987 (GIF87a); support for multiple images in one file and application-specific metadata added in 1989 (GIF89a)
• LZW (Lempel-Ziv-Welch) compression replaced earlier RLE (Run Length Encoding) B&W version
  – Patented by Compuserve/Unisys (has run out in US, will run out in June 2004 in Europe)
• Maximum of 256 colours (from a palette) including a "transparent" colour
• Optional interlacing feature
• http://www.w3.org/Graphics/GIF/spec-gif89a.txt

LZW (Lempel-Ziv-Welch)
• Most of this method was invented and published by Lempel and Ziv in 1978 (LZ78 algorithm)
• A few details and improvements were later given by Welch in 1984 (variable increasing index sizes, efficient dictionary data structure)
• Achieves approx. 50% compression on large English texts
• Superseded by DEFLATE and Burrows-Wheeler transform methods

LZW Algorithm
• Dictionary initially contains all possible one byte codes (256 entries)
• Input is taken one byte at a time to find the longest initial string present in the dictionary
• The code for that string is output, then the string is extended with one more input byte, b
• A new entry is added to the table mapping the extended string to the next unused code
• The process repeats, starting from byte b
• The number of bits in an output code, and hence the maximum number of entries in the table, is fixed
• once
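The algorithm steps listed above can be sketched in Python as follows. This is a simplified illustration, not the GIF variant: the function names are hypothetical, codes are returned as a plain list of integers rather than packed into a bit stream, and the table is allowed to grow without the fixed size limit mentioned in the last bullets.

```python
def lzw_compress(data: bytes) -> list[int]:
    """Encode bytes as a list of LZW codes (unbounded table, no bit packing)."""
    # Dictionary initially contains all possible one byte codes (256 entries).
    table = {bytes([i]): i for i in range(256)}
    next_code = 256
    current = b""
    codes = []
    for value in data:
        candidate = current + bytes([value])
        if candidate in table:
            # Keep extending to find the longest string already in the table.
            current = candidate
        else:
            codes.append(table[current])    # output code for the longest match
            table[candidate] = next_code    # map extended string to next unused code
            next_code += 1
            current = bytes([value])        # repeat, starting from byte b
    if current:
        codes.append(table[current])
    return codes

def lzw_decompress(codes: list[int]) -> bytes:
    """Rebuild the original bytes by reconstructing the same table."""
    if not codes:
        return b""
    table = {i: bytes([i]) for i in range(256)}
    next_code = 256
    previous = table[codes[0]]
    pieces = [previous]
    for code in codes[1:]:
        if code in table:
            entry = table[code]
        elif code == next_code:
            # Code refers to the entry that is about to be created.
            entry = previous + previous[:1]
        else:
            raise ValueError(f"invalid LZW code: {code}")
        pieces.append(entry)
        table[next_code] = previous + entry[:1]
        next_code += 1
        previous = entry
    return b"".join(pieces)

sample = b"TOBEORNOTTOBEORTOBEORNOT"
encoded = lzw_compress(sample)
decoded = lzw_decompress(encoded)
print(len(sample), "bytes ->", len(encoded), "codes")
```

The 24-byte sample encodes to 16 codes, because repeated substrings such as "TO" and "BE" are replaced by single table codes once they have been seen.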

ColorNet: Investigating the Importance of Color Spaces for Image Classification (arXiv:1902.00267v1 [cs.CV], 1 Feb 2019)

ColorNet: Investigating the importance of color spaces for image classification. Shreyank N Gowda (1) and Chun Yuan (2). (1) Computer Science Department, Tsinghua University, Beijing 10084, China, [email protected] (2) Graduate School at Shenzhen, Tsinghua University, Shenzhen 518055, China, [email protected]

Abstract. Image classification is a fundamental application in computer vision. Recently, deeper networks and highly connected networks have shown state of the art performance for image classification tasks. Most datasets these days consist of a finite number of color images. These color images are taken as input in the form of RGB images and classification is done without modifying them. We explore the importance of color spaces and show that color spaces (essentially transformations of original RGB images) can significantly affect classification accuracy. Further, we show that certain classes of images are better represented in particular color spaces and for a dataset with a highly varying number of classes such as CIFAR and Imagenet, using a model that considers multiple color spaces within the same model gives excellent levels of accuracy. Also, we show that such a model, where the input is preprocessed into multiple color spaces simultaneously, needs far fewer parameters to obtain high accuracy for classification. For example, our model with 1.75M parameters significantly outperforms DenseNet 100-12 that has 12M parameters and gives results comparable to Densenet-BC-190-40 that has 25.6M parameters for classification of four competitive image classification datasets namely: CIFAR-10, CIFAR-100, SVHN and Imagenet. Our model essentially takes an RGB image as input, simultaneously converts the image into 7 different color spaces and uses these as inputs to individual densenets.