An Altmetric Analysis of Top Journals in Library and Information Science

Recommended publications

Maximized Research Impact: Effective Strategies for Increasing Citations

CORE: Metadata, citation and similar papers at core.ac.uk. Provided by International SERIES on Information Systems and Management in Creative eMedia (CreMedia). Maximized Research Impact: Effective Strategies for Increasing Citations. Nader Ale Ebrahim*1, Hossein Gholizadeh2, Artur Lugmayr3. 1Research Support Unit, Centre for Research Services, Institute of Research Management and Monitoring (IPPP), University of Malaya, Malaysia, Email: [email protected], *Corresponding author. 2Department of Biomedical Engineering, Faculty of Engineering, University of Malaya, 50603 Kuala Lumpur, Malaysia. 3VisLab., School of Media, Culture and Creative Arts, Curtin University, Perth, Australia. Abstract: The highly competitive environment has forced higher education authorities to set strategies to improve university ranking. Citations of published papers are among the most widely used inputs to measure national and global university ranking (accounting for 20% of QS, 30% of THE, etc.). Therefore, on the one hand, improving the citation impact of research is one of the university manager’s strategies. On the other hand, researchers are also looking for helpful techniques to increase their citation record. This chapter, by reviewing the relevant articles, covers 48 different strategies for maximizing research impact and visibility. The results show that some features of an article can help predict the number of article views and citation counts. The findings presented in this chapter could be used by university authorities, authors, reviewers, and editors to maximize the impact of articles in the scientific community. Keywords: University ranking, Improve citation, Citation frequency, Research impact, Open access, h-index. Introduction: Research output is an essential part of an institution’s measure and evaluation of research quality.

Sci-Hub Provides Access to Nearly All Scholarly Literature

Sci-Hub provides access to nearly all scholarly literature. A DOI-citable version of this manuscript is available at https://doi.org/10.7287/peerj.preprints.3100. This manuscript was automatically generated from greenelab/scihub-manuscript@51678a7 on October 12, 2017. Submit feedback on the manuscript at git.io/v7feh or on the analyses at git.io/v7fvJ.
Authors
• Daniel S. Himmelstein · 0000-0002-3012-7446 · dhimmel · dhimmel · Department of Systems Pharmacology and Translational Therapeutics, University of Pennsylvania · Funded by GBMF4552
• Ariel Rodriguez Romero · 0000-0003-2290-4927 · arielsvn · arielswn · Bidwise, Inc
• Stephen Reid McLaughlin · 0000-0002-9888-3168 · stevemclaugh · SteveMcLaugh · School of Information, University of Texas at Austin
• Bastian Greshake Tzovaras · 0000-0002-9925-9623 · gedankenstuecke · gedankenstuecke · Department of Applied Bioinformatics, Institute of Cell Biology and Neuroscience, Goethe University Frankfurt
• Casey S. Greene · 0000-0001-8713-9213 · cgreene · GreeneScientist · Department of Systems Pharmacology and Translational Therapeutics, University of Pennsylvania · Funded by GBMF4552
PeerJ Preprints | https://doi.org/10.7287/peerj.preprints.3100v2 | CC BY 4.0 Open Access | rec: 12 Oct 2017, publ: 12 Oct 2017
Abstract: The website Sci-Hub provides access to scholarly literature via full text PDF downloads. The site enables users to access articles that would otherwise be paywalled. Since its creation in 2011, Sci-Hub has grown rapidly in popularity. However, until now, the extent of Sci-Hub’s coverage was unclear. As of March 2017, we find that Sci-Hub’s database contains 68.9% of all 81.6 million scholarly articles, which rises to 85.2% for those published in toll access journals.

The Journal Ranking System Undermining the Impact of Brazilian Science

bioRxiv preprint doi: https://doi.org/10.1101/2020.07.05.188425; this version posted July 6, 2020. The copyright holder for this preprint (which was not certified by peer review) is the author/funder, who has granted bioRxiv a license to display the preprint in perpetuity. It is made available under a CC-BY-NC-ND 4.0 International license. QUALIS: The journal ranking system undermining the impact of Brazilian science. Rodolfo Jaffé1. 1 Instituto Tecnológico Vale, Belém-PA, Brazil. Email: [email protected]. Abstract: A journal ranking system called QUALIS was implemented in Brazil in 2009, intended to rank graduate programs from different subject areas and promote selected national journals. Since this system uses a complicated suite of criteria (differing among subject areas) to group journals into discrete categories, it could potentially create incentives to publish in low-impact journals ranked highly by QUALIS. Here I assess the influence of the QUALIS journal ranking system on the global impact of Brazilian science. Results reveal a steeper decrease in the number of citations per document since the implementation of this QUALIS system, compared to the top Latin American countries publishing more scientific articles. All the subject areas making up the QUALIS system showed some degree of bias, with social sciences being usually more biased than natural sciences. Lastly, the decrease in the number of citations over time proved steeper in a more biased area, suggesting a faster shift towards low-impact journals ranked highly by QUALIS.

Conference Accreditation and Need of a Bibliometric Measure to Distinguish Predatory Conferences

Publications. Viewpoint. Conference Accreditation and Need of a Bibliometric Measure to Distinguish Predatory Conferences. Pooyan Makvandi 1,*, Anahita Nodehi 2 and Franklin R. Tay 3. 1 Centre for Materials Interfaces, Istituto Italiano di Tecnologia, Viale Rinaldo Piaggio 34, 56025 Pontedera, Italy. 2 Department of Statistics, Computer Science, Applications (DiSIA), Florence University, Viale Morgagni 59, 50134 Florence, Italy; Anahita.nodehi@unifi.it. 3 The Graduate School, Augusta University, Augusta, GA 30912, USA; [email protected]. * Correspondence: [email protected] or [email protected]. Abstract: Academic conferences offer scientists the opportunity to share their findings and knowledge with other researchers. However, the number of conferences is rapidly increasing globally and many unsolicited e-mails are received from conference organizers. These e-mails take time for researchers to read and ascertain their legitimacy. Because not every conference is of high quality, there is a need for young researchers and scholars to recognize the so-called “predatory conferences” which make a profit from unsuspecting researchers without the core purpose of advancing science or collaboration. Unlike journals that possess accreditation indices, there is no appropriate accreditation for international conferences. Here, a bibliometric measure is proposed that enables scholars to evaluate conference quality before attending. Keywords: conference indicator; conference impact factor; conference accreditation; bibliometric measure. Citation: Makvandi, P.; Nodehi, A.; Tay, F.R. Conference Accreditation and Need of a Bibliometric Measure to Distinguish Predatory Conferences. Publications 2021, 9, 16. https://doi.org/10.3390/publications9020016. 1. Introduction: Academic conferences offer scientists the opportunity to share their findings and knowledge with other researchers. Conferences are organized by institutions or societies, and in rare cases, by individuals [1].

Citation Analysis: Web of Science, Scopus

Citation analysis: Web of Science, Scopus. Masoud Mohammadi, Golestan University of Medical Sciences, Information Management and Research Network.
Citation Analysis
• Citation analysis is the study of the impact and assumed quality of an article, an author, or an institution based on the number of times works and/or authors have been cited by others.
• Citation analysis is the examination of the frequency, patterns, and graphs of citations in documents. It uses the pattern of citations, links from one document to another document, to reveal properties of the documents. A typical aim would be to identify the most important documents in a collection. A classic example is that of the citations between academic articles and books. The judgements produced by judges of law to support their decisions refer back to judgements made in earlier cases, so citation analysis in a legal context is important. Another example is provided by patents, which contain prior art, citing earlier patents relevant to the current claim.
Citation Databases
• Citation databases are databases that have been developed for evaluating publications. The citation databases enable you to count citations and check, for example, which articles or journals are the most cited ones.
• In a citation database you get information about who has cited an article and how many times an author has been cited. You can also list all articles citing the same source.
• The most important citation databases are:
• “Web of Science”
• “Scopus”
• “Google Scholar”
Web of Science
• Web of Science is owned and produced by Thomson Reuters. WoS is composed of three databases containing citations from international scientific journals:
• Arts & Humanities Citation Index - AHCI
• Social Sciences Citation Index - SSCI
• Science Citation Index - SCI
• Journal Coverage:
• Aims to include the best journals of all fields.
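
As a rough illustration of the citation counting described above, the following Python sketch tallies how often each document is cited in a small citation graph and picks the most cited one. The document labels and the `citation_counts` helper are hypothetical; they are not taken from Web of Science, Scopus, or any other database.

```python
from collections import Counter


def citation_counts(citations):
    """Count how many times each document is cited.

    `citations` is an iterable of (citing, cited) pairs, i.e. the
    links from one document to another.
    """
    return Counter(cited for _citing, cited in citations)


# Hypothetical citation links between five documents A..E.
links = [
    ("A", "C"), ("B", "C"),
    ("A", "D"), ("B", "D"), ("C", "D"),
    ("D", "E"),
]

counts = citation_counts(links)
most_cited, times_cited = counts.most_common(1)[0]
print(most_cited, times_cited)  # D 3
```

A raw count like this treats every citation equally; it says nothing about the quality of the citing document.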

Introduction to Bibliometrics

Introduction to Bibliometrics. Bibliometrics: A new service of the university library to support academic research. Carolin Ahnert and Martin Bauschmann. This work is licensed under a Creative Commons Attribution 4.0 International License. Chemnitz ∙ 16.05.17 ∙ Carolin Ahnert, Martin Bauschmann ∙ www.tu-chemnitz.de
What is bibliometrics? – short definition
„Bibliometrics is the statistical analysis of bibliographic data, commonly focusing on citation analysis of research outputs and publications, i.e. how many times research outputs and publications are being cited“ (University of Leeds, 2014). Quantitative analysis and visualisation of scientific research output based on publication and citation data.
What is bibliometrics? – a general classification
Descriptive bibliometrics
• Cognition interests: identification of relevant research topics or thematic trends; identification of key actors; exploration of cooperation patterns and communication structures
• Examined constructs: interdisciplinarity; internationality; topical cluster; research fronts/knowledge bases
• Methods/: social network analysis: co-author, co-citation, co-word-networks etc.
Evaluative bibliometrics
• Cognition interests: evaluation of research performance (groups of researchers, institutes, universities, countries); assessment of publication venues (especially journals)
• Examined constructs: productivity → visibility → impact → quality?
• Methods/: indicators: number of articles, citation rate, h-Index,

Understanding Research Metrics

Understanding research metrics. Introduction: Discover how to monitor your journal’s performance through a range of research metrics. This page will take you through the “basket of metrics”, from Impact Factor to usage, and help you identify the right research metrics for your journal. What are research metrics? Research metrics are the fundamental tools used across the publishing industry to measure performance, both at journal- and author-level. For a long time, the only tool for assessing journal performance was the Impact Factor – more on that in a moment. Now there is a range of different research metrics available. This “basket of metrics” is growing every day, from the traditional Impact Factor to Altmetrics, h-index, and beyond. But what do they all mean? How is each metric calculated? Which research metrics are the most relevant to your journal? And how can you use these tools to monitor your journal’s performance? For a quick overview, download our simple guide to research metrics. Or keep reading for a more in-depth look at what’s in the “basket of metrics” and how to interpret it. Citation-based metrics: Impact Factor. What is the Impact Factor? The Impact Factor is probably the most well-known metric for assessing journal performance. Designed to help librarians with collection management in the 1960s, it has since become a common proxy for journal quality. The Impact Factor is a simple research metric: it’s the average number of citations received by articles in a journal within a two-year window.
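
The two-year Impact Factor described above can be sketched as a simple ratio. This is a minimal illustration, assuming the standard formulation (citations received in a given year to items published in the previous two years, divided by the number of citable items published in those two years); the function name and the figures are hypothetical.

```python
def impact_factor(citations_to_prior_two_years: int,
                  citable_items_prior_two_years: int) -> float:
    """Average citations per citable item over a two-year window."""
    if citable_items_prior_two_years <= 0:
        raise ValueError("the journal needs at least one citable item")
    return citations_to_prior_two_years / citable_items_prior_two_years


# Hypothetical journal: 200 citable items published in 2021-2022,
# cited 500 times during 2023.
jif_2023 = impact_factor(500, 200)
print(jif_2023)  # 2.5
```

Because the result is an average, a handful of heavily cited articles can raise it considerably, which is one reason it is usually read alongside other metrics.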

Tracking the Popularity and Outcomes of All bioRxiv Preprints

Meta-Research: Tracking the popularity and outcomes of all bioRxiv preprints. Richard J. Abdill1 and Ran Blekhman1,2. 1 – Department of Genetics, Cell Biology, and Development, University of Minnesota, Minneapolis, MN. 2 – Department of Ecology, Evolution, and Behavior, University of Minnesota, St. Paul, MN. ORCID iDs: RJA: 0000-0001-9565-5832; RB: 0000-0003-3218-613X. Correspondence: Ran Blekhman, PhD, University of Minnesota, MCB 6-126, 420 Washington Avenue SE, Minneapolis, MN 55455. Email: [email protected]. Abstract: The growth of preprints in the life sciences has been reported widely and is driving policy changes for journals and funders, but little quantitative information has been published about preprint usage. Here, we report how we collected and analyzed data on all 37,648 preprints uploaded to bioRxiv.org, the largest biology-focused preprint server, in its first five years. The rate of preprint uploads to bioRxiv continues to grow (exceeding 2,100 in October 2018), as does the number of downloads (1.1 million in October 2018). We also find that two-thirds of preprints posted before 2017 were later published in peer-reviewed journals, and find a relationship between the number of downloads a preprint has received and the impact factor of the journal in which it is published. We also describe Rxivist.org, a web application that provides multiple ways to interact with preprint metadata. Introduction: In the 30 days of September 2018, four leading biology journals – The Journal of Biochemistry, PLOS Biology, Genetics and Cell – published 85 full-length research articles.

A Reference Bacterial Genome Dataset Generated on the MinION™ Portable Single-Molecule Nanopore Sequencer

Quick et al. GigaScience (2015) 4:6, DOI 10.1186/s13742-015-0043-z. ERRATUM, Open Access. Erratum: A reference bacterial genome dataset generated on the MinION™ portable single-molecule nanopore sequencer. Joshua Quick1,2, Aaron R Quinlan3 and Nicholas J Loman1*
Recently we noticed that we could not reproduce Figure three (Figure 1 here) while analysing this data as part of a new experiment [1]. This was due to a modification to the script used for extracting sequence alignment profiles from the dataset [2]. On further investigation we found that an error in this script had been reported and a fix supplied by an anonymous online contributor on October 27th 2014 via Github [3]. The error prevented mismatches from being properly counted in the calculation of read accuracy (insertions and deletions were). We therefore present updated versions of Figures three (Figure 1 here) and four (Figure 2 here) generated by the corrected script. We are grateful to the anonymous contributor who noticed this error.
Author details: 1Institute of Microbiology and Infection, University of Birmingham, Birmingham, B15 2TT, UK. 2NIHR Surgical Reconstruction and Microbiology Research Centre, University of Birmingham, B15 2TT, Birmingham, UK. 3Center for Public Health Genomics, University of Virginia, Virginia, Charlottesville, VA 22908, US.
Received: 31 December 2014. Accepted: 5 January 2015.
References
1. Quick J, Quinlan AR, Loman NJ. A reference bacterial genome dataset generated on the MinION™ portable single-molecule nanopore sequencer. GigaScience. 2014;3:22.
2. Quinlan AR. 2014. https://github.com/arq5x/nanopore-scripts/blob/master/count-errors.py. Github.
3. Anon. 2014. https://github.com/arq5x/nanopore-scripts/pull/1. Github.

Scientometric Indicators and Their Exploitation by Journal Publishers

Scientometric indicators and their exploitation by journal publishers. Name: Pranay Parsuram. Student number: 2240564. Course: Master’s Thesis (Book and Digital Media Studies). Supervisor: Prof. Fleur Praal. Second reader: Dr. Adriaan van der Weel. Date of completion: 12 July 2019. Word count: 18177 words.
Contents
1. Introduction
2. Scientometric Indicators
2.1. Journal Impact Factor
2.2. h-Index
2.3. Eigenfactor™
2.4. SCImago Journal Rank
2.5. Source Normalized Impact Per Paper
2.6. CiteScore
2.6. General Limitations of Citation Count
3. Conceptual Framework

Open Science and the Role of Publishers in Reproducible Research

Open science and the role of publishers in reproducible research. Authors: Iain Hrynaszkiewicz (Faculty of 1000), Peter Li (BGI), Scott Edmunds (BGI). Chapter summary: Reproducible computational research is and will be facilitated by the wide availability of scientific data, literature and code which is freely accessible and, furthermore, licensed such that it can be reused, integrated and built upon to drive new scientific discoveries without legal impediments. Scholarly publishers have an important role in encouraging and mandating the availability of data and code according to community norms and best practices, and developing innovative mechanisms and platforms for sharing and publishing products of research, beyond papers in journals. Open access publishers, in particular the first commercial open access publisher BioMed Central, have played a key role in the development of policies on open access and open data, and increasing the use by scientists of legal tools – licenses and waivers – which maximize reproducibility. Collaborations, between publishers and funders of scientific research, are vital for the successful implementation of reproducible research policies. The genomics and, latterly, other ‘omics communities historically have been leaders in the creation and wide adoption of policies on public availability of data. This has been through policies, such as Fort Lauderdale and the Bermuda Principles; infrastructure, such as the INSDC databases; and incentives, such as conditions of journal publication. We review some of these policies and practices, and how these events relate to the open access publishing movement. We describe the implementation and adoption of licenses and waivers prepared by Creative Commons, in science publishing, with a focus on licensing of research data published in scholarly journals and data repositories.

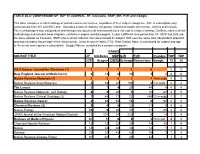

Table 46.4: Comparison of Top 10 Journal JIF, CiteScore, SNIP, SJR, FCR and Google

TABLE 46.4: COMPARISON OF TOP 10 JOURNAL JIF, CiteScore, SNIP, SJR, FCR and Google. This table compares current rankings of journals across six sources, regardless of their subject categories. JCR is subscription-only, uses journals from SCI and SSCI, and calculates a ratio of citations to citable documents (articles and reviews). The methodology is size-independent: having more documents and citations does not lead to a higher ranking. CiteScore uses a similar methodology and includes book chapters, conference papers and data papers. It uses a different time period than JIF. SNIP and SJR use the same dataset as CiteScore. SNIP uses a similar ratio but normalizes based on subject. SJR uses the same size-independent approach and also considers the prestige of the citing journal. It has its own H-Index. FCR, Field Citation Ratio, is normalized for subject and age of the article and requires a subscription. Google Metrics are compiled by a computer program.

| Source title | JIF (JCR) | CiteScore (Scopus) | SNIP (CWTS) | SJR (Scimago) | FCR (Dimensions) | h5-index (Google) | Ranks in top 10 | Ranks in top 20 |
|---|---|---|---|---|---|---|---|---|
| CA: A Cancer Journal for Clinicians (1) | 1 | 1 | 1 | 1 | 6 | | 5 | 5 |
| New England Journal of Medicine (2) | 2 | 13 | 8 | 19 | 1 | 2 | 4 | 6 |
| Nature Reviews Materials (1) | 3 | 3 | 5 | 3 | 4 | 16 in subc | 5 | 5 |
| Nature Reviews Drug Discovery | 4 | 53 | 22 | 156 | 5 | 1 in subc | 2 | 2 |
| The Lancet | 5 | 7 | 4 | 29 | 44 | 4 | 4 | 4 |
| Nature Reviews Molecular Cell Biology | 6 | 8 | 20 | 5 | 15 | 67 | 3 | 5 |
| Nature Reviews Clinical Oncology (3) | 7 | 44 | 71 | 72 | 249 | 13 in subc | 1 | 1 |
| Nature Reviews Cancer | 8 | 10 | 18 | 10 | 9 | 90 | 4 | 5 |
| Chemical Reviews (2) | 9 | 4 | 9 | 12 | 11 | 9 | 4 | 6 |
| Nature Energy | | | | | | | | |
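
The two "ranks" figures for each journal appear to count how many of the six sources place it in the top 10 and the top 20. The Python sketch below reproduces that tally under this assumption; the `count_top_ranks` helper is hypothetical, and ranks given only within a subcategory or missing are passed as `None` so they are not counted.

```python
def count_top_ranks(ranks, cutoffs=(10, 20)):
    """Count how many ranks fall at or above each cutoff.

    `ranks` holds one rank per source; use None where a source gives
    no overall rank for the journal.
    """
    return {
        cutoff: sum(1 for r in ranks if r is not None and r <= cutoff)
        for cutoff in cutoffs
    }


# Ranks in the order JIF, CiteScore, SNIP, SJR, FCR, h5-index.
nejm = [2, 13, 8, 19, 1, 2]
lancet = [5, 7, 4, 29, 44, 4]

print(count_top_ranks(nejm))    # {10: 4, 20: 6}
print(count_top_ranks(lancet))  # {10: 4, 20: 4}
```

Both results agree with the top-10 and top-20 counts listed for those journals in the table.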