Probability, Conditional Probability & Bayes Rule

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Bayes and the Law

Bayes and the Law. Norman Fenton, Martin Neil and Daniel Berger. January 2016.

This is a pre-publication version of the following article: Fenton N.E., Neil M., Berger D., “Bayes and the Law”, Annual Review of Statistics and Its Application, Volume 3, 2016, doi: 10.1146/annurev-statistics-041715-033428. Posted with permission from the Annual Review of Statistics and Its Application, Volume 3 (c) 2016 by Annual Reviews, http://www.annualreviews.org.

Abstract: Although the last forty years has seen considerable growth in the use of statistics in legal proceedings, it is primarily classical statistical methods rather than Bayesian methods that have been used. Yet the Bayesian approach avoids many of the problems of classical statistics and is also well suited to a broader range of problems. This paper reviews the potential and actual use of Bayes in the law and explains the main reasons for its lack of impact on legal practice. These include misconceptions by the legal community about Bayes’ theorem, over-reliance on the use of the likelihood ratio and the lack of adoption of modern computational methods. We argue that Bayesian Networks (BNs), which automatically produce the necessary Bayesian calculations, provide an opportunity to address most concerns about using Bayes in the law.

Keywords: Bayes, Bayesian networks, statistics in court, legal arguments

1 Introduction

The use of statistics in legal proceedings (both criminal and civil) has a long, but not terribly well distinguished, history that has been very well documented in (Finkelstein, 2009; Gastwirth, 2000; Kadane, 2008; Koehler, 1992; Vosk and Emery, 2014). -

Estimating the Accuracy of Jury Verdicts

Institute for Policy Research, Northwestern University. Working Paper Series WP-06-05.

Estimating the Accuracy of Jury Verdicts. Bruce D. Spencer, Faculty Fellow, Institute for Policy Research; Professor of Statistics, Northwestern University. Version date: April 17, 2006; rev. May 4, 2007. Forthcoming in Journal of Empirical Legal Studies.

2040 Sheridan Rd., Evanston, IL 60208-4100. Tel: 847-491-3395. Fax: 847-491-9916. www.northwestern.edu/ipr

Abstract: Average accuracy of jury verdicts for a set of cases can be studied empirically and systematically even when the correct verdict cannot be known. The key is to obtain a second rating of the verdict, for example the judge’s, as in the recent study of criminal cases in the U.S. by the National Center for State Courts (NCSC). That study, like the famous Kalven-Zeisel study, showed only modest judge-jury agreement. Simple estimates of jury accuracy can be developed from the judge-jury agreement rate; the judge’s verdict is not taken as the gold standard. Although the estimates of accuracy are subject to error, under plausible conditions they tend to overestimate the average accuracy of jury verdicts. The jury verdict was estimated to be accurate in no more than 87% of the NCSC cases (which, however, should not be regarded as a representative sample with respect to jury accuracy). More refined estimates, including false conviction and false acquittal rates, are developed with models using stronger assumptions. For example, the conditional probability that the jury incorrectly convicts given that the defendant truly was not guilty (a “type I error”) was estimated at 0.25, with an estimated standard error (s.e.) of 0.07, the conditional probability that a jury incorrectly acquits given that the defendant truly was guilty (“type II error”) was estimated at 0.14 (s.e. -

General Probability, II: Independence and Conditional Probability

Math 408, Actuarial Statistics I. A.J. Hildebrand

General Probability, II: Independence and conditional probability

Definitions and properties

1. Independence: A and B are called independent if they satisfy the product formula $P(A \cap B) = P(A)P(B)$.
2. Conditional probability: The conditional probability of A given B is denoted by $P(A|B)$ and defined by the formula $P(A|B) = \frac{P(A \cap B)}{P(B)}$, provided $P(B) > 0$. (If $P(B) = 0$, the conditional probability is not defined.)
3. Independence of complements: If A and B are independent, then so are A and B', A' and B, and A' and B'.
4. Connection between independence and conditional probability: If the conditional probability $P(A|B)$ is equal to the ordinary (“unconditional”) probability $P(A)$, then A and B are independent. Conversely, if A and B are independent, then $P(A|B) = P(A)$ (assuming $P(B) > 0$).
5. Complement rule for conditional probabilities: $P(A'|B) = 1 - P(A|B)$. That is, with respect to the first argument, A, the conditional probability $P(A|B)$ satisfies the ordinary complement rule.
6. Multiplication rule: $P(A \cap B) = P(A|B)P(B)$

Some special cases

• If $P(A) = 0$ or $P(B) = 0$ then A and B are independent. The same holds when $P(A) = 1$ or $P(B) = 1$.
• If B = A or B = A', A and B are not independent except in the above trivial case when $P(A)$ or $P(B)$ is 0 or 1. In other words, an event A which has probability strictly between 0 and 1 is not independent of itself or of its complement. -
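The definitions above can be checked by brute force on a small sample space. The following is a minimal sketch, assuming two fair six-sided dice (an example that is not taken from the notes); the events `A`, `B` and the helpers `prob` and `cond_prob` are hypothetical names introduced only for illustration.

```python
from fractions import Fraction
from itertools import product

# Sample space: ordered outcomes of two fair dice (assumed example).
omega = set(product(range(1, 7), repeat=2))

def prob(event):
    """Probability of an event (a subset of omega) under the uniform measure."""
    return Fraction(len(event), len(omega))

def cond_prob(a, b):
    """P(A|B) = P(A ∩ B) / P(B); not defined when P(B) = 0."""
    if prob(b) == 0:
        raise ValueError("P(B) = 0, conditional probability is not defined")
    return prob(a & b) / prob(b)

A = {w for w in omega if w[0] % 2 == 0}     # first die is even
B = {w for w in omega if w[0] + w[1] == 7}  # the sum is 7
A_c = omega - A                             # complement A'

# Product formula: these particular A and B are independent.
independent = prob(A & B) == prob(A) * prob(B)
# Complement rule for conditional probabilities.
complement_ok = cond_prob(A_c, B) == 1 - cond_prob(A, B)
# An event with probability strictly between 0 and 1 is not independent of itself.
self_independent = prob(A & A) == prob(A) * prob(A)
```

Exact rational arithmetic (`Fraction`) is used so that the equalities can be tested without floating-point tolerance.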

Propensities and Probabilities

Studies in History and Philosophy of Modern Physics 38 (2007) 593–625. www.elsevier.com/locate/shpsb

Propensities and probabilities

Nuel Belnap, 1028-A Cathedral of Learning, University of Pittsburgh, Pittsburgh, PA 15260, USA

Received 19 May 2006; accepted 6 September 2006

Abstract

Popper’s introduction of “propensity” was intended to provide a solid conceptual foundation for objective single-case probabilities. By considering the partly opposed contributions of Humphreys and Miller and Salmon, it is argued that when properly understood, propensities can in fact be understood as objective single-case causal probabilities of transitions between concrete events. The chief claim is that propensities are well-explicated by describing how they fit into the existing formal theory of branching space-times, which is simultaneously indeterministic and causal. Several problematic examples, some commonsense and some quantum-mechanical, are used to make clear the advantages of invoking branching space-times theory in coming to understand propensities. © 2007 Elsevier Ltd. All rights reserved.

Keywords: Propensities; Probabilities; Space-times; Originating causes; Indeterminism; Branching histories

1. Introduction

You are flipping a fair coin fairly. You ascribe a probability to a single case by asserting

The probability that heads will occur on this very next flip is about 50%. (1)

The rough idea of a single-case probability seems clear enough when one is told that the contrast is with either generalizations or frequencies attributed to populations asserted while you are flipping a fair coin fairly, such as

In the long run, the probability of heads occurring among flips is about 50%. (2)

1355-2198/$ - see front matter © 2007 Elsevier Ltd. -

Conditional Probability and Bayes Theorem A

Conditional probability and Bayes theorem

A. Zaikin

2.1 Conditional probability 1

Given events E and F, often we are interested in statements like: if event E has occurred, then the probability of F is ...

Some examples:
• Roll two dice: what is the probability that the sum of faces is 6 given that the first face is 4?
• Gene expressions: What is the probability that gene A is switched off (e.g. down-regulated) given that gene B is also switched off?

2.2 Conditional probability 2

This conditional probability can be derived following a similar construction:
• Repeat the experiment N times.
• Count the number of times event E occurs, $N(E)$, and the number of times both E and F occur jointly, $N(E \cap F)$. Hence $N(E) \le N$.
• The proportion of times that F occurs in this reduced space is $\frac{N(E \cap F)}{N(E)}$, since E occurs at each one of them.
• Now note that the ratio above can be re-written as the ratio between two (unconditional) probabilities: $\frac{N(E \cap F)}{N(E)} = \frac{N(E \cap F)/N}{N(E)/N}$
• Then the probability of F, given that E has occurred, should be defined as $\frac{P(E \cap F)}{P(E)}$.

2.3 Conditional probability: definition

The definition of conditional probability: the conditional probability of an event F, given that an event E has occurred, is defined as $P(F|E) = \frac{P(E \cap F)}{P(E)}$ and is defined only if $P(E) > 0$. Note that, if E has occurred, then
• F|E is a point in the set $E \cap F$
• E is the new sample space
It can be proved that the function $P(\cdot|\cdot)$ defining a conditional probability also satisfies the three probability axioms. -
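The dice question from the slides can be worked through both ways: exactly, by enumeration, and approximately, by the counting construction $N(E \cap F)/N(E)$. The sketch below is illustrative only; the seed, the number of repetitions `N` and all variable names are assumptions, not part of the slides.

```python
import random
from fractions import Fraction
from itertools import product

# Exact value by enumeration: E = "first face is 4", F = "sum of faces is 6".
outcomes = list(product(range(1, 7), repeat=2))
n_E = sum(1 for a, b in outcomes if a == 4)
n_EF = sum(1 for a, b in outcomes if a == 4 and a + b == 6)
exact = Fraction(n_EF, n_E)  # P(F|E) = P(E ∩ F) / P(E)

# Frequency construction: repeat the experiment N times and count.
rng = random.Random(0)  # fixed seed, chosen arbitrarily for reproducibility
N = 200_000
count_E = 0
count_EF = 0
for _ in range(N):
    a, b = rng.randint(1, 6), rng.randint(1, 6)
    if a == 4:
        count_E += 1
        if a + b == 6:
            count_EF += 1
estimate = count_EF / count_E  # N(E ∩ F) / N(E)
```

Here `exact` equals 1/6, because once the first face is 4 the only favourable second face is 2; `estimate` should be close to that value for large `N`.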

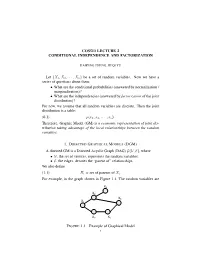

Cos513 Lecture 2 Conditional Independence and Factorization

COS513 LECTURE 2: CONDITIONAL INDEPENDENCE AND FACTORIZATION

HAIPENG ZHENG, JIEQI YU

Let $\{X_1, X_2, \dots, X_n\}$ be a set of random variables. Now we have a series of questions about them:
• What are the conditional probabilities (answered by normalization / marginalization)?
• What are the independencies (answered by factorization of the joint distribution)?

For now, we assume that all random variables are discrete. Then the joint distribution is a table:
(0.1) $p(x_1, x_2, \dots, x_n)$.
Therefore, a Graphical Model (GM) is an economical representation of the joint distribution, taking advantage of the local relationships between the random variables.

1. DIRECTED GRAPHICAL MODELS (DGM)

A directed GM is a Directed Acyclic Graph (DAG) $G(V, E)$, where
• $V$, the set of vertices, represents the random variables;
• $E$, the edges, denotes the “parent of” relationships.
We also define
(1.1) $\Pi_i$ = set of parents of $X_i$.
For example, in the graph shown in Figure 1.1, the random variables are $\{X_1, X_2, \dots, X_6\}$, and
(1.2) $\Pi_6 = \{X_2, X_5\}$.

[Figure 1.1. Example of Graphical Model]

This DAG represents the following joint distribution:
(1.3) $p(x_{1:6}) = p(x_1)\,p(x_2|x_1)\,p(x_3|x_1)\,p(x_4|x_2)\,p(x_5|x_3)\,p(x_6|x_2, x_5)$.
In general,
(1.4) $p(x_{1:n}) = \prod_{i=1}^{n} p(x_i \mid x_{\pi_i})$
specifies a particular joint distribution. Note here $\pi_i$ stands for the set of indices of the parents of i. -
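To make the factorization concrete, the sketch below builds the joint distribution of six binary variables from local conditional tables, with parent sets read off the factorization (1.3). The binary restriction, the random table values and the names `parents`, `cpt` and `joint` are assumptions made for illustration; they do not come from the lecture notes.

```python
import random
from itertools import product

# Parent sets read off the factorization (1.3); indices are 1-based.
parents = {1: (), 2: (1,), 3: (1,), 4: (2,), 5: (3,), 6: (2, 5)}

rng = random.Random(0)  # arbitrary seed; the table values are made up

# cpt[i][parent_values] = P(X_i = 1 | parents = parent_values), binary variables.
cpt = {
    i: {pv: rng.random() for pv in product((0, 1), repeat=len(pa))}
    for i, pa in parents.items()
}

def joint(x):
    """p(x_1, ..., x_6) as a product of local conditionals; x is a 6-tuple of 0/1."""
    p = 1.0
    for i, pa in parents.items():
        p_one = cpt[i][tuple(x[j - 1] for j in pa)]
        p *= p_one if x[i - 1] == 1 else 1.0 - p_one
    return p

# Each local conditional is normalized, so the product sums to 1
# over all 2^6 assignments.
total = sum(joint(x) for x in product((0, 1), repeat=6))
n_parameters = sum(len(table) for table in cpt.values())
```

In this binary setting the factored form needs 13 numbers (`n_parameters`), while a full joint table over six binary variables has 63 free entries, which illustrates the economy of the graphical representation.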

CONDITIONAL EXPECTATION Definition 1. Let (Ω,F,P)

CONDITIONAL EXPECTATION

1. CONDITIONAL EXPECTATION: $L^2$ THEORY

Definition 1. Let $(\Omega, \mathcal{F}, P)$ be a probability space and let $\mathcal{G}$ be a σ-algebra contained in $\mathcal{F}$. For any real random variable $X \in L^2(\Omega, \mathcal{F}, P)$, define $E(X \mid \mathcal{G})$ to be the orthogonal projection of X onto the closed subspace $L^2(\Omega, \mathcal{G}, P)$.

This definition may seem a bit strange at first, as it seems not to have any connection with the naive definition of conditional probability that you may have learned in elementary probability. However, there is a compelling rationale for Definition 1: the orthogonal projection $E(X \mid \mathcal{G})$ minimizes the expected squared difference $E(X - Y)^2$ among all random variables $Y \in L^2(\Omega, \mathcal{G}, P)$, so in a sense it is the best predictor of X based on the information in $\mathcal{G}$. It may be helpful to consider the special case where the σ-algebra $\mathcal{G}$ is generated by a single random variable Y, i.e., $\mathcal{G} = \sigma(Y)$. In this case, every $\mathcal{G}$-measurable random variable is a Borel function of Y (exercise!), so $E(X \mid \mathcal{G})$ is the unique Borel function $h(Y)$ (up to sets of probability zero) that minimizes $E(X - h(Y))^2$. The following exercise indicates that the special case where $\mathcal{G} = \sigma(Y)$ for some real-valued random variable Y is in fact very general.

Exercise 1. Show that if $\mathcal{G}$ is countably generated (that is, there is some countable collection of sets $B_j \in \mathcal{G}$ such that $\mathcal{G}$ is the smallest σ-algebra containing all of the sets $B_j$) then there is a $\mathcal{G}$-measurable real random variable Y such that $\mathcal{G} = \sigma(Y)$. -
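On a finite probability space the projection picture can be verified directly. The sketch below is an assumed toy example (two fair dice, X the sum, Y the first face) and is not taken from the notes; it computes h(y) as the average of X over the event {Y = y}, then checks orthogonality of the residual and compares the mean squared error with that of a shifted competitor. All names are hypothetical.

```python
from fractions import Fraction
from itertools import product

# Finite probability space (assumed example): two fair dice, uniform measure.
omega = list(product(range(1, 7), repeat=2))
p = Fraction(1, len(omega))

def X(w):
    """Random variable to predict: the sum of the two faces."""
    return w[0] + w[1]

def Y(w):
    """Generator of G = sigma(Y): the first face."""
    return w[0]

def expect(f):
    """E[f] under the uniform measure on omega."""
    return sum(f(w) * p for w in omega)

# h(y) = average of X over the event {Y = y}; E(X | G) is then h(Y).
h = {}
for y in range(1, 7):
    block = [w for w in omega if Y(w) == y]
    h[y] = Fraction(sum(X(w) for w in block), len(block))

# Orthogonality: the residual X - h(Y) is orthogonal to every indicator 1{Y = y};
# on this space the indicators span all G-measurable functions.
residual_inner = [
    expect(lambda w, y=y: (X(w) - h[Y(w)]) * (Y(w) == y)) for y in range(1, 7)
]

# Mean squared error of h(Y) versus a shifted competitor g(Y) = h(Y) + 1.
mse_h = expect(lambda w: (X(w) - h[Y(w)]) ** 2)
mse_g = expect(lambda w: (X(w) - (h[Y(w)] + 1)) ** 2)
```

In this example h(y) = y + 7/2, and shifting the predictor by 1 raises the mean squared error by exactly 1, as expected from the orthogonality of the residual.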

Data-Mining Probabilists Or Experimental Determinists?

13

Data-Mining Probabilists or Experimental Determinists? A Dialogue on the Principles Underlying Causal Learning in Children

Thomas Richardson, Laura Schulz, & Alison Gopnik

This dialogue is a distillation of a real series of conversations that took place at that most platonic of academies, the Center for Advanced Studies in the Behavioral Sciences, between the first and third authors. The second author had determinist leanings to begin with and acted as an intermediary.

Determinism, Faithfulness, and Causal Inference

Narrator: [Meno and Laplace stand in the corridor of a daycare, observing toddlers at play through a window.]

Meno: Do you ever wonder how it is that children manage to learn so many causal relations so successfully, so quickly? They make it all seem effortless.

Meno: Leaving aside the question of how children learn for a while, can we agree on some basic principles for causal inference by anyone: child, adult, or scientist?

Laplace: I think so. I think we can both generally agree that, subject to various caveats, two variables will not be dependent in probability unless there is some kind of causal connection present.

Meno: Yes, that’s what I’d call the weak causal Markov condition. I assume that the kinds of caveats you have in mind are to restrict this principle to situations in which a discussion of cause and effect might be reasonable in the first place?

Laplace: Absolutely. I don’t want to get involved in discussions of whether X causes 2X or whether the -

ST 371 (VIII): Theory of Joint Distributions

ST 371 (VIII): Theory of Joint Distributions

So far we have focused on probability distributions for single random variables. However, we are often interested in probability statements concerning two or more random variables. The following examples are illustrative:
• In ecological studies, counts, modeled as random variables, of several species are often made. One species is often the prey of another; clearly, the number of predators will be related to the number of prey.
• The joint probability distribution of the x, y and z components of wind velocity can be experimentally measured in studies of atmospheric turbulence.
• The joint distribution of the values of various physiological variables in a population of patients is often of interest in medical studies.
• A model for the joint distribution of age and length in a population of fish can be used to estimate the age distribution from the length distribution. The age distribution is relevant to the setting of reasonable harvesting policies.

1 Joint Distribution

The joint behavior of two random variables X and Y is determined by the joint cumulative distribution function (cdf):
(1.1) $F_{XY}(x, y) = P(X \le x, Y \le y)$,
where X and Y are continuous or discrete. For example, the probability that (X, Y) belongs to a given rectangle is
$P(x_1 < X \le x_2,\; y_1 < Y \le y_2) = F(x_2, y_2) - F(x_2, y_1) - F(x_1, y_2) + F(x_1, y_1)$.
In general, if $X_1, \dots, X_n$ are jointly distributed random variables, the joint cdf is
$F_{X_1, \dots, X_n}(x_1, \dots, x_n) = P(X_1 \le x_1, X_2 \le x_2, \dots, X_n \le x_n)$.
Two- and higher-dimensional versions of probability distribution functions and probability mass functions exist. -
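The rectangle formula can be checked numerically for the half-open rectangle $x_1 < X \le x_2$, $y_1 < Y \le y_2$. The sketch below assumes a simple discrete example (the faces of two fair dice), which is not from the notes; `pmf`, `cdf`, `rect_direct` and `rect_from_cdf` are hypothetical names.

```python
from fractions import Fraction
from itertools import product

# Assumed example: (X, Y) = faces of two fair dice, so the joint pmf is uniform.
pmf = {(x, y): Fraction(1, 36) for x, y in product(range(1, 7), repeat=2)}

def cdf(x, y):
    """Joint cdf F(x, y) = P(X <= x, Y <= y)."""
    return sum(p for (a, b), p in pmf.items() if a <= x and b <= y)

def rect_direct(x1, x2, y1, y2):
    """P(x1 < X <= x2, y1 < Y <= y2) by summing the pmf directly."""
    return sum(p for (a, b), p in pmf.items() if x1 < a <= x2 and y1 < b <= y2)

def rect_from_cdf(x1, x2, y1, y2):
    """The same probability from four evaluations of the joint cdf."""
    return cdf(x2, y2) - cdf(x2, y1) - cdf(x1, y2) + cdf(x1, y1)

direct = rect_direct(1, 4, 2, 5)
via_cdf = rect_from_cdf(1, 4, 2, 5)
```

For a discrete pair the strict lower inequalities matter: with $\le$ on both sides the probability mass on the lower edges of the rectangle would be counted by the direct sum but not by the four-term cdf expression.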

Bayesian Networks

Bayesian Networks

Read R&N Ch. 14.1-14.2. Next lecture: Read R&N 18.1-18.4.

You will be expected to know
• Basic concepts and vocabulary of Bayesian networks.
  – Nodes represent random variables.
  – Directed arcs represent (informally) direct influences.
  – Conditional probability tables, $P(X_i \mid \mathrm{Parents}(X_i))$.
• Given a Bayesian network:
  – Write down the full joint distribution it represents.
• Given a full joint distribution in factored form:
  – Draw the Bayesian network that represents it.
• Given a variable ordering and some background assertions of conditional independence among the variables:
  – Write down the factored form of the full joint distribution, as simplified by the conditional independence assertions.

Computing with Probabilities: Law of Total Probability

Law of Total Probability (aka “summing out” or marginalization):
$P(a) = \sum_b P(a, b) = \sum_b P(a \mid b)\,P(b)$, where B is any random variable.

Why is this useful? Given a joint distribution (e.g., $P(a, b, c, d)$) we can obtain any “marginal” probability (e.g., $P(b)$) by summing out the other variables, e.g.,
$P(b) = \sum_a \sum_c \sum_d P(a, b, c, d)$

Less obvious: we can also compute any conditional probability of interest given a joint distribution, e.g.,
$P(c \mid b) = \sum_a \sum_d P(a, c, d \mid b) = \frac{1}{P(b)} \sum_a \sum_d P(a, c, d, b)$,
where $1 / P(b)$ is just a normalization constant.

Thus, the joint distribution contains the information we need to compute any probability of interest.

Computing with Probabilities: The Chain Rule or Factoring

We can always write P(a, b, c, … z) = P(a | b, c, …. -
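Summing out and normalization can be illustrated on a small joint table. The sketch below assumes four binary variables a, b, c, d with made-up joint probabilities; the helper `marginal` and all other names are hypothetical and only meant as an illustration of the formulas above.

```python
import random
from itertools import product

rng = random.Random(1)  # arbitrary seed; the joint table below is made up
names = ("a", "b", "c", "d")

# Full joint distribution P(a, b, c, d) over binary variables, stored as a table.
weights = {vals: rng.random() for vals in product((0, 1), repeat=4)}
z = sum(weights.values())
joint = {vals: w / z for vals, w in weights.items()}

def marginal(**fixed):
    """Sum the joint over all variables not named in `fixed` (summing out)."""
    return sum(
        p for vals, p in joint.items()
        if all(vals[names.index(k)] == v for k, v in fixed.items())
    )

# P(b) = sum_a sum_c sum_d P(a, b, c, d)
p_b = marginal(b=1)
# P(c | b) = (1 / P(b)) sum_a sum_d P(a, c, d, b)
p_c_given_b = marginal(c=1, b=1) / p_b
# Law of total probability: P(c) = sum_b P(c | b) P(b)
p_c_total = sum(
    marginal(c=1, b=v) / marginal(b=v) * marginal(b=v) for v in (0, 1)
)
```

Storing the full table is only feasible for a handful of variables, since its size doubles with every added binary variable; that cost is the motivation for the factored representations discussed in the lecture.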

Recent Progress on Conditional Randomness (Probability Symposium)

RIMS Kôkyûroku (数理解析研究所講究録), Vol. 2030 (2017), pp. 39-46

Recent progress on conditional randomness

Hayato Takahashi, Random Data Lab.

Abstract

We review the recent progress on the definition of randomness with respect to conditional probabilities and a generalization of van Lambalgen's theorem (Takahashi 2006, 2008, 2009, 2011). In addition we show a new result on the random sequences when the conditional probabilities are mutually singular, which is a generalization of Kjos-Hanssen's theorem (2010). Finally we propose a definition of random sequences with respect to conditional probability and argue the validity of the definition from the Bayesian statistical point of view.

Keywords: Martin-Löf random sequences, Lambalgen theorem, conditional probability, Bayesian statistics

1 Introduction

The notion of conditional probability is one of the main ideas in probability theory. In order to define conditional probability rigorously, Kolmogorov introduced measure theory into probability theory [4]. The notion of randomness is another important subject in probability and statistics. In statistics, the set of random points is defined as the complement of given statistical tests. In practice, data is finite and a statistical test is a set of small probability, say 3%, with respect to the null hypothesis. In order to discuss rigorously whether a point is random or not, we study the randomness of sequences (infinite data) and null sets as statistical tests. The random set depends on the class of statistical tests. Kolmogorov brought an idea of recursion theory into statistics and proposed the random set as the complement of the effective null sets [5, 6, 8]. -

(Ω, F,P) Be a Probability Spac

Lecture 9: Independence, conditional independence, conditional distribution

Definition 1.7. Let $(\Omega, \mathcal{F}, P)$ be a probability space.
(i) Let $\mathcal{C}$ be a collection of subsets in $\mathcal{F}$. Events in $\mathcal{C}$ are said to be independent if and only if for any positive integer n and distinct events $A_1, \dots, A_n$ in $\mathcal{C}$, $P(A_1 \cap A_2 \cap \cdots \cap A_n) = P(A_1)P(A_2) \cdots P(A_n)$.
(ii) Collections $\mathcal{C}_i \subset \mathcal{F}$, $i \in I$ (an index set that can be uncountable), are said to be independent if and only if events in any collection of the form $\{A_i \in \mathcal{C}_i : i \in I\}$ are independent.
(iii) Random elements $X_i$, $i \in I$, are said to be independent if and only if $\sigma(X_i)$, $i \in I$, are independent.

A useful result for checking the independence of σ-fields:

Lemma 1.3. Let $\mathcal{C}_i$, $i \in I$, be independent collections of events. Suppose that each $\mathcal{C}_i$ has the property that if $A \in \mathcal{C}_i$ and $B \in \mathcal{C}_i$, then $A \cap B \in \mathcal{C}_i$. Then $\sigma(\mathcal{C}_i)$, $i \in I$, are independent.

Random variables $X_i$, $i = 1, \dots, k$, are independent according to Definition 1.7 if and only if
$F_{(X_1, \dots, X_k)}(x_1, \dots, x_k) = F_{X_1}(x_1) \cdots F_{X_k}(x_k)$, $(x_1, \dots, x_k) \in \mathbb{R}^k$.
Take $\mathcal{C}_i = \{(a, b] : a \in \mathbb{R}, b \in \mathbb{R}\}$, $i = 1, \dots, k$.

If X and Y are independent random vectors, then so are $g(X)$ and $h(Y)$ for Borel functions g and h. Two events A and B are independent if and only if $P(B|A) = P(B)$, which means that A provides no information about the probability of the occurrence of B.

Proposition 1.11.
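The cdf criterion for independence can be tried out on a finite example. The sketch below assumes two fair dice on a uniform sample space (not an example from the lecture) and checks the product formula on a grid covering the support of each variable; `prob`, `cdf_factorizes` and the variable functions are hypothetical names.

```python
from fractions import Fraction
from itertools import product

# Assumed example: two fair dice on the uniform space.
omega = list(product(range(1, 7), repeat=2))

def prob(pred):
    """Probability of the event {w : pred(w)} under the uniform measure."""
    return Fraction(sum(1 for w in omega if pred(w)), len(omega))

def cdf_factorizes(U, V, u_vals, v_vals):
    """Check F_(U,V)(u, v) = F_U(u) F_V(v) at every point of the given grid."""
    return all(
        prob(lambda w: U(w) <= u and V(w) <= v)
        == prob(lambda w: U(w) <= u) * prob(lambda w: V(w) <= v)
        for u in u_vals
        for v in v_vals
    )

def first(w):
    return w[0]

def second(w):
    return w[1]

def total(w):
    return w[0] + w[1]

# The two faces are independent.
dice_independent = cdf_factorizes(first, second, range(1, 7), range(1, 7))
# Functions of independent variables stay independent: g(X) = X^2, h(Y) = Y mod 2.
images_independent = cdf_factorizes(
    lambda w: w[0] ** 2, lambda w: w[1] % 2, [1, 4, 9, 16, 25, 36], [0, 1]
)
# The first face and the sum are dependent, so the product formula fails somewhere.
sum_independent = cdf_factorizes(first, total, range(1, 7), range(2, 13))
```

For the dependent pair the formula already fails at the smallest grid point: the joint cdf at (1, 2) is 1/36, whereas the product of the marginal cdfs is 1/6 times 1/36.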