21. Orthonormal Bases

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications

-

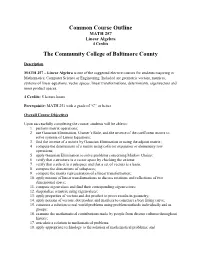

Common Course Outline MATH 257 Linear Algebra 4 Credits

The Community College of Baltimore County

Description: MATH 257 – Linear Algebra is one of the suggested elective courses for students majoring in Mathematics, Computer Science or Engineering. Included are geometric vectors, matrices, systems of linear equations, vector spaces, linear transformations, determinants, eigenvectors and inner product spaces.

4 Credits: 5 lecture hours. Prerequisite: MATH 251 with a grade of "C" or better.

Overall Course Objectives. Upon successfully completing the course, students will be able to:

1. perform matrix operations;
2. use Gaussian Elimination, Cramer's Rule, and the inverse of the coefficient matrix to solve systems of Linear Equations;
3. find the inverse of a matrix by Gaussian Elimination or using the adjoint matrix;
4. compute the determinant of a matrix using cofactor expansion or elementary row operations;
5. apply Gaussian Elimination to solve problems concerning Markov Chains;
6. verify that a structure is a vector space by checking the axioms;
7. verify that a subset is a subspace and that a set of vectors is a basis;
8. compute the dimensions of subspaces;
9. compute the matrix representation of a linear transformation;
10. apply notions of linear transformations to discuss rotations and reflections of two dimensional space;
11. compute eigenvalues and find their corresponding eigenvectors;
12. diagonalize a matrix using eigenvalues;
13. apply properties of vectors and dot product to prove results in geometry;
14. apply notions of vectors, dot product and matrices to construct a best fitting curve;
15. construct a solution to real world problems using problem methods individually and in groups;
16. -

Introduction to Linear Bialgebra

INTRODUCTION TO LINEAR BIALGEBRA. Florentin Smarandache (University of New Mexico), W.B. Vasantha Kandasamy, K. Ilanthenral. 2005. University of New Mexico, UNM Digital Repository, Mathematics and Statistics Faculty and Staff Publications: https://digitalrepository.unm.edu/math_fsp

Recommended Citation: Smarandache, Florentin; W.B. Vasantha Kandasamy; and K. Ilanthenral. "INTRODUCTION TO LINEAR BIALGEBRA." (2005). https://digitalrepository.unm.edu/math_fsp/232

Authors: W. B. Vasantha Kandasamy, Department of Mathematics, Indian Institute of Technology, Madras, Chennai – 600036, India (web: http://mat.iitm.ac.in/~wbv); Florentin Smarandache, Department of Mathematics, University of New Mexico, Gallup, NM 87301, USA; K. Ilanthenral, Editor, Maths Tiger, Quarterly Journal, Flat No.11, Mayura Park, 16, Kazhikundram Main Road, Tharamani, Chennai – 600 113, India.

HEXIS, Phoenix, Arizona, 2005. This book can be ordered in a paper bound reprint from: Books on Demand, ProQuest Information & Learning (University of Microfilm International), 300 N. -

Abstract Tensor Systems and Diagrammatic Representations

Abstract tensor systems and diagrammatic representations. Jānis Lazovskis. September 28, 2012.

Abstract: The diagrammatic tensor calculus used by Roger Penrose (most notably in [7]) is introduced without a solid mathematical grounding. We will attempt to derive the tools of such a system, but in a broader setting. We show that Penrose's work comes from the diagrammisation of the symmetric algebra. Lie algebra representations and their extensions to knot theory are also discussed.

Contents
1 Abstract tensors and derived structures
1.1 Abstract tensor notation
1.2 Some basic operations
1.3 Tensor diagrams
2 A diagrammised abstract tensor system
2.1 Generation
2.2 Tensor concepts
3 Representations of algebras
3.1 The symmetric algebra
3.2 Lie algebras
3.3 The tensor algebra T(g)
3.4 The symmetric Lie algebra S(g)
3.5 The universal enveloping algebra U(g)
3.6 The metrized Lie algebra
3.6.1 Diagrammisation with a bilinear form
3.6.2 Diagrammisation with a symmetric bilinear form
3.6.3 Diagrammisation with a symmetric bilinear form and an orthonormal basis
3.6.4 Diagrammisation under ad-invariance
3.7 The universal enveloping algebra U(g) for a metrized Lie algebra g
4 Ensuing connections
A Appendix

Note: This work relies heavily upon the text of Chapter 12 of a draft of "An Introduction to Quantum and Vassiliev Invariants of Knots," by David M.R. Jackson and Iain Moffatt, a yet-unpublished book at the time of writing. -

Multivector Differentiation and Linear Algebra: 17th Santaló Summer School 2016

Multivector differentiation and Linear Algebra. 17th Santaló Summer School 2016, Santander. Joan Lasenby, Signal Processing Group, Engineering Department, Cambridge, UK and Trinity College Cambridge. www-sigproc.eng.cam.ac.uk/ s jl. 23 August 2016.

Overview: The Multivector Derivative. Examples of differentiation wrt multivectors. Linear Algebra: matrices and tensors as linear functions mapping between elements of the algebra. Functional Differentiation: very briefly... Summary.

The Multivector Derivative. Recall our definition of the directional derivative in the $a$ direction:

$$a \cdot \nabla F(x) = \lim_{\epsilon \to 0} \frac{F(x + \epsilon a) - F(x)}{\epsilon}$$

We now want to generalise this idea to enable us to find the derivative of $F(X)$ in the $A$ 'direction', where $X$ is a general mixed grade multivector (so $F(X)$ is a general multivector valued function of $X$). Let us use $*$ to denote taking the scalar part, i.e. $P * Q \equiv \langle PQ \rangle$. Then, provided $A$ has the same grades as $X$, it makes sense to define:

$$A * \partial_X F(X) = \lim_{t \to 0} \frac{F(X + tA) - F(X)}{t}$$ -

Linear Algebra for Dummies

Linear Algebra for Dummies. Jorge A. Menendez. October 6, 2017.

Contents: 1 Matrices and Vectors; 2 Matrix Multiplication; 3 Matrix Inverse, Pseudo-inverse; 4 Outer products; 5 Inner Products; 6 Example: Linear Regression; 7 Eigenstuff; 8 Example: Covariance Matrices; 9 Example: PCA; 10 Useful resources.

1 Matrices and Vectors

An $m \times n$ matrix is simply an array of numbers:

$$A = \begin{bmatrix} a_{11} & a_{12} & \dots & a_{1n} \\ a_{21} & a_{22} & \dots & a_{2n} \\ \vdots & & & \vdots \\ a_{m1} & a_{m2} & \dots & a_{mn} \end{bmatrix}$$

where we define the indexing $A_{ij} = a_{ij}$ to designate the component in the $i$th row and $j$th column of $A$. The transpose of a matrix is obtained by flipping the rows with the columns:

$$A^T = \begin{bmatrix} a_{11} & a_{21} & \dots & a_{m1} \\ a_{12} & a_{22} & \dots & a_{m2} \\ \vdots & & & \vdots \\ a_{1n} & a_{2n} & \dots & a_{mn} \end{bmatrix}$$

which evidently is now an $n \times m$ matrix, with components $(A^T)_{ij} = A_{ji} = a_{ji}$. In other words, the transpose is obtained by simply flipping the row and column indices. One particularly important matrix is called the identity matrix, which is composed of 1's on the diagonal and 0's everywhere else:

$$I = \begin{bmatrix} 1 & 0 & \dots & 0 \\ 0 & 1 & \dots & 0 \\ \vdots & & \ddots & \vdots \\ 0 & 0 & \dots & 1 \end{bmatrix}$$

It is called the identity matrix because the product of any matrix with the identity matrix is identical to itself: $AI = A$. In other words, $I$ is the equivalent of the number 1 for matrices. For our purposes, a vector can simply be thought of as a matrix with one column, $a = (a_1, a_2, \dots)^T$. -
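The transpose and identity facts in the excerpt above can be checked on a small example. This is a minimal sketch in plain Python, assuming matrices are stored as lists of rows; the helper names `transpose`, `identity`, and `matmul` are illustrative and do not come from the excerpt.

```python
def transpose(a):
    """Return the transpose of a matrix stored as a list of rows."""
    return [list(col) for col in zip(*a)]


def identity(n):
    """Return the n x n identity matrix (1 on the diagonal, 0 elsewhere)."""
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]


def matmul(a, b):
    """Standard matrix product of an m x n matrix with an n x p matrix."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


A = [[1, 2, 3],
     [4, 5, 6]]           # a 2 x 3 matrix (hypothetical example values)
At = transpose(A)         # its transpose is 3 x 2
AI = matmul(A, identity(3))  # multiplying by the identity leaves A unchanged
```

Here `At[i][j]` equals `A[j][i]` for every valid index pair, and `AI` equals `A`.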

Exercise 1 Advanced SDPs Matrices and Vectors

Exercise 1 Advanced SDPs. Matrices and vectors: All these are very important facts that we will use repeatedly, and should be internalized.

• Inner product and outer products. Let $u = (u_1, \dots, u_n)$ and $v = (v_1, \dots, v_n)$ be vectors in $\mathbb{R}^n$. Then $u^T v = \langle u, v \rangle = \sum_{i=1}^n u_i v_i$ is the inner product of $u$ and $v$. The outer product $uv^T$ is an $n \times n$ rank 1 matrix $B$ with entries $B_{ij} = u_i v_j$. The matrix $B$ is a very useful operator. Suppose $v$ is a unit vector. Then $B$ sends $v$ to $u$, i.e. $Bv = uv^T v = u$, but $Bw = 0$ for all $w \in v^\perp$.

• Matrix Product. For any two matrices $A \in \mathbb{R}^{m \times n}$ and $B \in \mathbb{R}^{n \times p}$, the standard matrix product $C = AB$ is the $m \times p$ matrix with entries $c_{ij} = \sum_{k=1}^n a_{ik} b_{kj}$. Here are two very useful ways to view this.

Inner products: Let $r_i$ be the $i$-th row of $A$, or equivalently the $i$-th column of $A^T$, the transpose of $A$. Let $b_j$ denote the $j$-th column of $B$. Then $c_{ij} = r_i^T b_j$ is the dot product of the $i$-th column of $A^T$ and the $j$-th column of $B$.

Sums of outer products: $C$ can also be expressed as outer products of columns of $A$ and rows of $B$. Exercise: Show that $C = \sum_{k=1}^n a_k b_k^T$ where $a_k$ is the $k$-th column of $A$ and $b_k$ is the $k$-th row of $B$ (or equivalently the $k$-th column of $B^T$). -
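The two views of the matrix product in the excerpt above can be compared numerically. This is a sketch in plain Python with matrices as lists of rows; the function names and the sample matrices are assumptions made for illustration. It checks a single instance and does not replace the proof the exercise asks for.

```python
def inner(u, v):
    """Inner product <u, v> = sum_i u_i * v_i of two real vectors."""
    return sum(ui * vi for ui, vi in zip(u, v))


def outer(u, v):
    """Outer product u v^T: the matrix with entries u_i * v_j."""
    return [[ui * vj for vj in v] for ui in u]


def matmul_inner(a, b):
    """C = AB, with each entry computed as (row i of A) . (column j of B)."""
    cols = list(zip(*b))
    return [[inner(row, col) for col in cols] for row in a]


def matmul_outer(a, b):
    """C = AB, accumulated as a sum of outer products (column k of A)(row k of B)."""
    m, n, p = len(a), len(b), len(b[0])
    c = [[0] * p for _ in range(m)]
    for k in range(n):
        col_k = [a[i][k] for i in range(m)]
        term = outer(col_k, b[k])
        for i in range(m):
            for j in range(p):
                c[i][j] += term[i][j]
    return c


A = [[1, 2], [3, 4], [5, 6]]      # 3 x 2, hypothetical values
B = [[7, 8, 9], [10, 11, 12]]     # 2 x 3, hypothetical values
C_inner = matmul_inner(A, B)
C_outer = matmul_outer(A, B)

# Rank 1 operator from the first bullet: with v a unit vector, (u v^T) v = u.
u, v, w = [2, 3], [0, 1], [1, 0]  # w is orthogonal to v
P = outer(u, v)
Pv = [inner(row, v) for row in P]
Pw = [inner(row, w) for row in P]
```

Both products agree on this example, `Pv` reproduces `u`, and `Pw` is the zero vector.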

Orthonormal Bases in Hilbert Space APPM 5440 Fall 2017 Applied Analysis

Orthonormal Bases in Hilbert Space. APPM 5440 Fall 2017 Applied Analysis. Stephen Becker. November 18, 2017 (v4); v1 Nov 17 2014 and v2 Jan 21 2016.

Supplementary notes to our textbook (Hunter and Nachtergaele). These notes generally follow Kreyszig's book. The reason for these notes is that this is a simpler treatment that is easier to follow; the simplicity is because we generally do not consider uncountable nets, but rather only sequences (which are countable). I have tried to identify the theorems from both books; theorems/lemmas with numbers like 3.3-7 are from Kreyszig. Note: there are two versions of these notes, with and without proofs. The version without proofs will be distributed to the class initially, and the version with proofs will be distributed later (after any potential homework assignments, since some of the proofs may be assigned as homework). This version does not have proofs.

1 Basic Definitions

NOTE: Kreyszig defines inner products to be linear in the first term, and conjugate linear in the second term, so $\langle \alpha x, y \rangle = \alpha \langle x, y \rangle = \langle x, \bar{\alpha} y \rangle$. In contrast, Hunter and Nachtergaele define the inner product to be linear in the second term, and conjugate linear in the first term, so $\langle \bar{\alpha} x, y \rangle = \alpha \langle x, y \rangle = \langle x, \alpha y \rangle$. We will follow the convention of Hunter and Nachtergaele, so I have re-written the theorems from Kreyszig accordingly whenever the order is important. Of course when working with the real field, order is completely unimportant.

Let $X$ be an inner product space. Then we can define a norm on $X$ by $\|x\| = \sqrt{\langle x, x \rangle}$, $x \in X$. -
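The convention adopted in the excerpt above (conjugate linear in the first argument, linear in the second) can be illustrated on $\mathbb{C}^n$ with the standard inner product. This is a sketch under the assumption that the inner product is $\langle x, y \rangle = \sum_i \bar{x}_i y_i$; the function names and sample vectors are illustrative.

```python
import math


def inner(x, y):
    """Standard inner product on C^n, conjugate linear in x and linear in y."""
    return sum(xi.conjugate() * yi for xi, yi in zip(x, y))


def norm(x):
    """Induced norm ||x|| = sqrt(<x, x>); <x, x> is real and nonnegative."""
    return math.sqrt(inner(x, x).real)


x = [1 + 2j, 3 - 1j]      # hypothetical sample vectors
y = [2 - 1j, 1j]
alpha = 2 + 3j

lhs_second = inner(x, [alpha * yi for yi in y])              # <x, alpha y>
lhs_first = inner([alpha.conjugate() * xi for xi in x], y)   # <conj(alpha) x, y>
rhs = alpha * inner(x, y)                                    # alpha <x, y>
```

Both `lhs_second` and `lhs_first` agree with `rhs` up to rounding, which matches the identity stated for the Hunter and Nachtergaele convention.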

Multilinear Algebra and Applications July 15, 2014

Multilinear Algebra and Applications. July 15, 2014.

Contents
Chapter 1. Introduction
Chapter 2. Review of Linear Algebra
2.1. Vector Spaces and Subspaces
2.2. Bases
2.3. The Einstein convention
2.3.1. Change of bases, revisited
2.3.2. The Kronecker delta symbol
2.4. Linear Transformations
2.4.1. Similar matrices
2.5. Eigenbases
Chapter 3. Multilinear Forms
3.1. Linear Forms
3.1.1. Definition, Examples, Dual and Dual Basis
3.1.2. Transformation of Linear Forms under a Change of Basis
3.2. Bilinear Forms
3.2.1. Definition, Examples and Basis
3.2.2. Tensor product of two linear forms on V
3.2.3. Transformation of Bilinear Forms under a Change of Basis
3.3. Multilinear forms
3.4. Examples
3.4.1. A Bilinear Form
3.4.2. A Trilinear Form
3.5. Basic Operation on Multilinear Forms
Chapter 4. Inner Products
4.1. Definitions and First Properties
4.1.1. Correspondence Between Inner Products and Symmetric Positive Definite Matrices
4.1.1.1. From Inner Products to Symmetric Positive Definite Matrices
4.1.1.2. From Symmetric Positive Definite Matrices to Inner Products
4.1.2. Orthonormal Basis
4.2. Reciprocal Basis
4.2.1. Properties of Reciprocal Bases
4.2.2. Change of basis from a basis $\mathcal{B}$ to its reciprocal basis $\mathcal{B}^g$
4.2.3. -

Lecture 4: April 8, 2021 1 Orthogonality and Orthonormality

Mathematical Toolkit, Spring 2021. Lecture 4: April 8, 2021. Lecturer: Avrim Blum (notes based on notes from Madhur Tulsiani).

1 Orthogonality and orthonormality

Definition 1.1 Two vectors $u, v$ in an inner product space are said to be orthogonal if $\langle u, v \rangle = 0$. A set of vectors $S \subseteq V$ is said to consist of mutually orthogonal vectors if $\langle u, v \rangle = 0$ for all $u \neq v$, $u, v \in S$. A set $S \subseteq V$ is said to be orthonormal if $\langle u, v \rangle = 0$ for all $u \neq v$, $u, v \in S$ and $\|u\| = 1$ for all $u \in S$.

Proposition 1.2 A set $S \subseteq V \setminus \{0_V\}$ consisting of mutually orthogonal vectors is linearly independent.

Proposition 1.3 (Gram-Schmidt orthogonalization) Given a finite set $\{v_1, \dots, v_n\}$ of linearly independent vectors, there exists a set of orthonormal vectors $\{w_1, \dots, w_n\}$ such that $\mathrm{Span}(\{w_1, \dots, w_n\}) = \mathrm{Span}(\{v_1, \dots, v_n\})$.

Proof: By induction. The case with one vector is trivial. Given the statement for $k$ vectors and orthonormal $\{w_1, \dots, w_k\}$ such that $\mathrm{Span}(\{w_1, \dots, w_k\}) = \mathrm{Span}(\{v_1, \dots, v_k\})$, define

$$u_{k+1} = v_{k+1} - \sum_{i=1}^{k} \langle w_i, v_{k+1} \rangle \cdot w_i \quad \text{and} \quad w_{k+1} = \frac{u_{k+1}}{\|u_{k+1}\|}.$$

We can now check that the set $\{w_1, \dots, w_{k+1}\}$ satisfies the required conditions. Unit length is clear, so let's check orthogonality:

$$\langle u_{k+1}, w_j \rangle = \langle v_{k+1}, w_j \rangle - \left\langle \sum_{i=1}^{k} \langle w_i, v_{k+1} \rangle \cdot w_i,\ w_j \right\rangle = \langle v_{k+1}, w_j \rangle - \langle w_j, v_{k+1} \rangle = 0.$$

Corollary 1.4 Every finite dimensional inner product space has an orthonormal basis. -
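The inductive step of the proof above translates directly into a procedure. The following is a minimal sketch for real vectors with the dot product as the inner product (an assumption; the excerpt states the result for a general inner product space). The function names and the tolerance `1e-12` are illustrative choices.

```python
import math


def inner(u, v):
    """Dot product of two real vectors."""
    return sum(a * b for a, b in zip(u, v))


def gram_schmidt(vectors):
    """Return orthonormal w_1..w_n with the same span as the given independent vectors."""
    ws = []
    for v in vectors:
        # u_{k+1} = v_{k+1} - sum_i <w_i, v_{k+1}> w_i
        u = list(v)
        for w in ws:
            coeff = inner(w, v)
            u = [ui - coeff * wi for ui, wi in zip(u, w)]
        length = math.sqrt(inner(u, u))
        if length < 1e-12:
            raise ValueError("input vectors are not linearly independent")
        # w_{k+1} = u_{k+1} / ||u_{k+1}||
        ws.append([ui / length for ui in u])
    return ws


basis = gram_schmidt([[1.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 1.0]])
```

Each pair of distinct vectors in `basis` has inner product close to 0 and each vector has norm close to 1, up to floating point rounding. Subtracting the projections from the running `u` instead of from `v` (the modified variant) is often preferred numerically, but the version above mirrors the formula in the proof.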

Orthonormality and the Gram-Schmidt Process

Unit 4, Section 2: The Gram-Schmidt Process

Orthonormality and the Gram-Schmidt Process

The basis $(e_1, e_2, \dots, e_n)$ for $\mathbb{R}^n$ (or $\mathbb{C}^n$) is considered the standard basis for the space because of its geometric properties under the standard inner product:

1. $\|e_i\| = 1$ for all $i$, and
2. $e_i$, $e_j$ are orthogonal whenever $i \neq j$.

With this idea in mind, we record the following definitions:

Definitions 6.23/6.25. Let $V$ be an inner product space.
• A list of vectors in $V$ is called orthonormal if each vector in the list has norm 1, and if each pair of distinct vectors is orthogonal.
• A basis for $V$ is called an orthonormal basis if the basis is an orthonormal list.

Remark. If a list $(v_1, \dots, v_n)$ is orthonormal, then

$$\langle v_i, v_j \rangle = \begin{cases} 0 & \text{if } i \neq j \\ 1 & \text{if } i = j. \end{cases}$$

Example. The list $(e_1, e_2, \dots, e_n)$ forms an orthonormal basis for $\mathbb{R}^n$/$\mathbb{C}^n$ under the standard inner products on those spaces.

Example. The standard basis for $M_n(\mathbb{C})$ consists of $n^2$ matrices $e_{ij}$, $1 \leq i, j \leq n$, where $e_{ij}$ is the $n \times n$ matrix with a 1 in the $ij$ entry and 0s elsewhere. Under the standard inner product on $M_n(\mathbb{C})$ this is an orthonormal basis for $M_n(\mathbb{C})$:

1. $\langle e_{ij}, e_{ij} \rangle$:

$$\langle e_{ij}, e_{ij} \rangle = \mathrm{tr}(e_{ij}^* e_{ij}) = \mathrm{tr}(e_{ji} e_{ij}) = \mathrm{tr}(e_{jj}) = 1.$$

2. $\langle e_{ij}, e_{kl} \rangle$, $k \neq i$ or $j \neq l$:

$$\langle e_{ij}, e_{kl} \rangle = \mathrm{tr}(e_{kl}^* e_{ij}) = \mathrm{tr}(e_{lk} e_{ij}) = \begin{cases} \mathrm{tr}(0) & \text{if } k \neq i \\ \mathrm{tr}(e_{lj}) & \text{if } k = i \text{ but } l \neq j \end{cases} = 0.$$

So every vector in the list has norm 1, and every distinct pair of vectors is orthogonal. -
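The trace computation in the last example can be verified by brute force for a small $n$. This sketch assumes the inner product $\langle A, B \rangle = \mathrm{tr}(B^* A)$, which is the form used in the excerpt's calculation; the helper names are illustrative, and indices run from 0 rather than 1.

```python
def unit_matrix(n, i, j):
    """e_ij: the n x n matrix with a 1 in entry (i, j) and 0 elsewhere (0-based)."""
    return [[1 if (r, c) == (i, j) else 0 for c in range(n)] for r in range(n)]


def conj_transpose(a):
    """Conjugate transpose A* of a square or rectangular matrix."""
    return [[a[r][c].conjugate() for r in range(len(a))] for c in range(len(a[0]))]


def matmul(a, b):
    """Standard matrix product."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def trace_inner(a, b):
    """<A, B> = tr(B* A), the inner product assumed for M_n(C)."""
    prod = matmul(conj_transpose(b), a)
    return sum(prod[i][i] for i in range(len(prod)))


n = 3
indices = [(i, j) for i in range(n) for j in range(n)]
gram = {
    (p, q): trace_inner(unit_matrix(n, *p), unit_matrix(n, *q))
    for p in indices
    for q in indices
}
```

The dictionary `gram` holds 1 for every pair with `p == q` and 0 for every other pair, which is the orthonormality condition from the Remark.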

Determinants Math 122 Calculus III D Joyce, Fall 2012

Determinants. Math 122 Calculus III. D Joyce, Fall 2012.

What they are. A determinant is a value associated to a square array of numbers, that square array being called a square matrix. For example, here are determinants of a general $2 \times 2$ matrix and a general $3 \times 3$ matrix.

$$\begin{vmatrix} a & b \\ c & d \end{vmatrix} = ad - bc$$

$$\begin{vmatrix} a & b & c \\ d & e & f \\ g & h & i \end{vmatrix} = aei + bfg + cdh - ceg - afh - bdi$$

The determinant of a matrix $A$ is usually denoted $|A|$ or $\det(A)$. You can think of the rows of the determinant as being vectors. For the $3 \times 3$ matrix above, the vectors are $u = (a, b, c)$, $v = (d, e, f)$, and $w = (g, h, i)$. Then the determinant is a value associated to $n$ vectors in $\mathbb{R}^n$.

There's a general definition for $n \times n$ determinants. It's a particular signed sum of products of $n$ entries in the matrix where each product is of one entry in each row and column. The two ways you can choose one entry in each row and column of the $2 \times 2$ matrix give you the two products $ad$ and $bc$. There are six ways of choosing one entry in each row and column in a $3 \times 3$ matrix, and generally, there are $n!$ ways in an $n \times n$ matrix. Thus, the determinant of a $4 \times 4$ matrix is the signed sum of 24, which is $4!$, terms. In this general definition, half the terms are taken positively and half negatively. In class, we briefly saw how the signs are determined by permutations. -
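The signed sum described above can be written out directly. The sketch below assumes the usual rule that a term's sign is $+1$ for an even permutation and $-1$ for an odd one, computed here by counting inversions; the excerpt only says that the signs are determined by permutations, so the inversion count is one standard way to do it, and the function names are illustrative.

```python
from itertools import permutations


def sign(perm):
    """+1 for an even permutation, -1 for an odd one, via the inversion count."""
    inversions = sum(
        1
        for i in range(len(perm))
        for j in range(i + 1, len(perm))
        if perm[i] > perm[j]
    )
    return -1 if inversions % 2 else 1


def det(m):
    """Determinant as a signed sum over all n! ways to pick one entry per row and column."""
    n = len(m)
    total = 0
    for perm in permutations(range(n)):
        product = 1
        for row, col in enumerate(perm):
            product *= m[row][col]
        total += sign(perm) * product
    return total


d2 = det([[1, 2], [3, 4]])                      # ad - bc
d3 = det([[1, 2, 3], [4, 5, 6], [7, 8, 10]])    # six signed terms
terms_4x4 = len(list(permutations(range(4))))   # number of terms for a 4 x 4 matrix
```

This form costs on the order of $n \cdot n!$ multiplications, so it is only practical for small matrices; it is shown because it matches the definition in the excerpt term by term.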

Glossary of Linear Algebra Terms

INNER PRODUCT SPACES AND THE GRAM-SCHMIDT PROCESS. A. HAVENS

1. The Dot Product and Orthogonality

1.1. Review of the Dot Product. We first recall the notion of the dot product, which gives us a familiar example of an inner product structure on the real vector spaces $\mathbb{R}^n$. This product is connected to the Euclidean geometry of $\mathbb{R}^n$, via lengths and angles measured in $\mathbb{R}^n$. Later, we will introduce inner product spaces in general, and use their structure to define general notions of length and angle on other vector spaces.

Definition 1.1. The dot product of real $n$-vectors in the Euclidean vector space $\mathbb{R}^n$ is the scalar product $\cdot : \mathbb{R}^n \times \mathbb{R}^n \to \mathbb{R}$ given by the rule

$$(u, v) = \left( \sum_{i=1}^{n} u_i e_i,\ \sum_{i=1}^{n} v_i e_i \right) \mapsto \sum_{i=1}^{n} u_i v_i.$$

Here $B_S := (e_1, \dots, e_n)$ is the standard basis of $\mathbb{R}^n$. With respect to our conventions on basis and matrix multiplication, we may also express the dot product as the matrix-vector product

$$u^t v = \begin{bmatrix} u_1 & \dots & u_n \end{bmatrix} \begin{bmatrix} v_1 \\ \vdots \\ v_n \end{bmatrix}.$$

It is a good exercise to verify the following proposition.

Proposition 1.1. Let $u, v, w \in \mathbb{R}^n$ be any real $n$-vectors, and $s, t \in \mathbb{R}$ be any scalars. The Euclidean dot product $(u, v) \mapsto u \cdot v$ satisfies the following properties.
(i) The dot product is symmetric: $u \cdot v = v \cdot u$.
(ii) The dot product is bilinear:
• $(su) \cdot v = s(u \cdot v) = u \cdot (sv)$,
• $(u + v) \cdot w = u \cdot w + v \cdot w$.