Voice API for Windows Operating Systems Programming Guide

Total Pages: 16

File Type: PDF, Size: 1020 KB

Recommended publications

Alexander Graham Bell

WEEK 2 LEVEL 7: Alexander Graham Bell. Alexander Graham Bell is the famous inventor of the telephone. Born in Scotland on March 3, 1847, he was the second son of Alexander and Eliza Bell. His father taught students the art of speaking clearly, or elocution, and his mother played the piano. Bell’s mother was almost deaf. His father’s career and his mother’s hearing impairment influenced the course of his career. He became a teacher of deaf people. As a child, Bell didn’t care for school, and he eventually dropped out. He did like to solve problems though. For example, when he was only 12, he invented a new farm implement. The tool removed the tiny husks from wheat grains. After the deaths of his two brothers from tuberculosis, Bell and his parents moved from Europe to Canada in 1870. They thought the climate there was healthier than in Scotland. A year later, Bell moved to the United States. He got a job teaching at the Boston School for Deaf Mutes. One of his students was a 15-year-old named Mabel Hubbard. He was 10 years older than she was, but they fell in love and married in 1877. The Bells raised two daughters but lost two sons who both died as babies. Bell’s father-in-law, Gardiner Hubbard, knew Bell was interested in inventing things, so he asked him to improve the telegraph. Telegraph messages were tapped out with a machine using dots and dashes known as Morse code. © 2019 Scholar Within, Inc.

Cisco Unified Communications Manager System Guide, Release 9.1(1) First Published: December 20, 2012 Last Modified: September 08, 2015

Cisco Unified Communications Manager System Guide, Release 9.1(1) First Published: December 20, 2012 Last Modified: September 08, 2015 Americas Headquarters Cisco Systems, Inc. 170 West Tasman Drive San Jose, CA 95134-1706 USA http://www.cisco.com Tel: 408 526-4000 800 553-NETS (6387) Fax: 408 527-0883 Text Part Number: OL-27946-01 THE SPECIFICATIONS AND INFORMATION REGARDING THE PRODUCTS IN THIS MANUAL ARE SUBJECT TO CHANGE WITHOUT NOTICE. ALL STATEMENTS, INFORMATION, AND RECOMMENDATIONS IN THIS MANUAL ARE BELIEVED TO BE ACCURATE BUT ARE PRESENTED WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED. USERS MUST TAKE FULL RESPONSIBILITY FOR THEIR APPLICATION OF ANY PRODUCTS. THE SOFTWARE LICENSE AND LIMITED WARRANTY FOR THE ACCOMPANYING PRODUCT ARE SET FORTH IN THE INFORMATION PACKET THAT SHIPPED WITH THE PRODUCT AND ARE INCORPORATED HEREIN BY THIS REFERENCE. IF YOU ARE UNABLE TO LOCATE THE SOFTWARE LICENSE OR LIMITED WARRANTY, CONTACT YOUR CISCO REPRESENTATIVE FOR A COPY. The Cisco implementation of TCP header compression is an adaptation of a program developed by the University of California, Berkeley (UCB) as part of UCB's public domain version of the UNIX operating system. All rights reserved. Copyright © 1981, Regents of the University of California. NOTWITHSTANDING ANY OTHER WARRANTY HEREIN, ALL DOCUMENT FILES AND SOFTWARE OF THESE SUPPLIERS ARE PROVIDED “AS IS” WITH ALL FAULTS. CISCO AND THE ABOVE-NAMED SUPPLIERS DISCLAIM ALL WARRANTIES, EXPRESSED OR IMPLIED, INCLUDING, WITHOUT LIMITATION, THOSE OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT OR ARISING FROM A COURSE OF DEALING, USAGE, OR TRADE PRACTICE. IN NO EVENT SHALL CISCO OR ITS SUPPLIERS BE LIABLE FOR ANY INDIRECT, SPECIAL, CONSEQUENTIAL, OR INCIDENTAL DAMAGES, INCLUDING, WITHOUT LIMITATION, LOST PROFITS OR LOSS OR DAMAGE TO DATA ARISING OUT OF THE USE OR INABILITY TO USE THIS MANUAL, EVEN IF CISCO OR ITS SUPPLIERS HAVE BEEN ADVISED OF THE POSSIBILITY OF SUCH DAMAGES.

Driving to One Percent: Call Analysis/Answering Machine Detection

Driving to One Percent: Call Analysis/Answering Machine Detection
Table of Contents: Introduction; Call Analysis: More Than Just Answering Machine Detection; Hardware Versus Software-based Dialers; In Summary.
Copyright © 2013-2014 Interactive Intelligence, Inc. All rights reserved. Brand, product, and service names referred to in this document are the trademarks or registered trademarks of their respective companies. Interactive Intelligence, Inc. 7601 Interactive Way Indianapolis, Indiana 46278 Telephone 800.267.1364 www.ININ.com Rev. 06/13, version 2
Introduction: Small changes, big results. Everyone from the Red Cross Foundation, to Oprah, to self-improvement guides touts it. And this approach/philosophy pops up in numerous aspects of our lives, from slight changes we can make in our lifestyle and diet to improve our overall health, to small donations we make that support larger causes. In this series, we view this phenomenon from a business perspective and look at how seemingly minor additions, deletions, or shifts can reap substantive results. The first topic in our “Driving

Long Distance Voice Services Introduction

LONG DISTANCE VOICE SERVICES 1. GENERAL 1.1 Service Definition 1.2 Platforms 1.3 Standard Service Features 1.4 Descriptions of Features and Feature Packages 2. AVAILABLE VERSIONS 2.1 Interstate Services 2.2 International Services 2.3 LD Virtual VoIP Service 2.4 Switched Digital Services 2.5 Intrastate Inbound and Outbound Service 3. SUPPLEMENTAL TERMS 4. FINANCIAL TERMS 5. DEFINITIONS 1. GENERAL 1.1 Service Definition. Long Distance Voice Services enable Customer to make telephone calls beyond their local calling area. Verizon offers several types of Long Distance Voice Services, including Interstate, International, LD Virtual VoIP, Switched Digital, and Intrastate. Customers choose one of four Feature Options, which defines the Base Features included with that option and optional features. Feature Option A (formerly Feature Option 1). Feature Option A (Option A) offers inbound and outbound service. Feature Option B (formerly Feature Option 2). Feature Option B (Option B) offers inbound and outbound service. Feature Option C-1 (formerly Feature Option 3A). Feature Option C-1 (Option C-1) offers outbound service only and is characterized by a private dialing plan. Feature Option C-2 (formerly Feature Option 3B). Feature Option C-2 (Option C-2) offers inbound toll free service only. 1.2 Platforms. These terms apply to non-Optimized Long Distance Voice Services only. 1.3 Standard Service Features. Customers receive the Base Features associated with the Feature Options Customer selects, as reflected in the Feature Availability Table below. Feature Options may be available either on a stand-alone basis or as part of a combined feature package.

Policy Issue Notation Vote

POLICY ISSUE NOTATION VOTE August 2, 2006 SECY-06-0173 FOR: The Commissioners FROM: Luis A. Reyes Executive Director for Operations SUBJECT: HISTORY OF THE EMERGENCY NOTIFICATION SYSTEM AND OPTIONS TO PROVIDE CONFIRMATION OF AUTHORITY/IDENTITY OF A CALLER PURPOSE: In response to the Staff Requirements Memoranda (SRM), M060117, dated February 3, 2006, this paper provides the Commission with the history of the Emergency Notification System (ENS) lines and requests Commission approval of a method to quickly confirm the authority or identity of a caller with respect to the imminent threat and physical attack procedures. SUMMARY: The Commission has identified the need to establish a method to quickly confirm the authority/identity of a caller with respect to an imminent threat. The Emergency Notification System (ENS) provides a reliable voice communication system that allows NRC to communicate with power reactor licensees during an emergency, including an imminent threat. However, the current configuration of ENS will not support caller identification (caller ID). The staff evaluated several alternatives including the restoration of dedicated direct lines (ring downs). Additionally, the staff has provided an update of the communications evaluation (study) that is being undertaken to assess the overall status and health of emergency communications. The staff recommends the Commission approve the use of authentication codes as the method to confirm the authority/identity of a caller in an imminent threat situation. This process is described in Enclosure 2. CONTACT: Jason Kozal, NSIR/DPR (301) 415-5776 BACKGROUND: In the aftermath of the Three Mile Island accident, the U.S. Nuclear Regulatory Commission (NRC) established the Emergency Telecommunications System (ETS) to provide reliable communications between NRC and its power reactor licensees.

User Guide TABLE of CONTENTS the Basics Phone Overview

User guide TABLE OF CONTENTS
THE BASICS: Phone overview; Navigating your phone; Installing the battery; Removing the battery and SIM card; Turning your phone on and off; Home screen; Phone status icons; Notifications.
CONVENIENT FEATURES: Vibrate mode

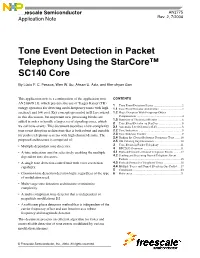

AN2775, Tone Event Detection in Packet Telephony Using The

Freescale Semiconductor AN2775 Application Note Rev. 2, 7/2004 Tone Event Detection in Packet Telephony Using the StarCore™ SC140 Core By Lúcio F. C. Pessoa, Wen W. Su, Ahsan U. Aziz, and Kim-chyan Gan
CONTENTS: 1 Tone Event Detection Basics; 1.1 Tone Event Detector Architecture; 1.2 Phase Detection With Frequency Offset Compensation; 1.3 Summary of Theoretical Results; 2 Tone Event Detector on StarCore; 2.1 Automatic Level Control (ALC); 2.2 Tone Indication; 2.3 Tone Indicator Counter; 2.4 Finding the Closest Reference Frequency Tone; 2.5 FIR Filtering Implementation
This application note is a continuation of the application note AN2384/D [1], which presents the use of Teager-Kaiser (TK) energy operators for detecting multi-frequency tones with high accuracy and low cost. Key concepts presented in [1] are reused in this discussion, but important new processing blocks are added in order to handle a larger set of signaling tones, which we call tone events. This document describes a low-complexity tone event detection architecture that is both robust and suitable for packet telephony systems with high channel density. The proposed architecture is composed of:

Integral Enterprise Feature Description

Integral Enterprise Feature Description Issue 2 February 2008 © 2008 Avaya Inc. All Rights Reserved. Notice While reasonable efforts were made to ensure that the information in this document was complete and accurate at the time of printing, Avaya Inc. can assume no liability for any errors. Changes and corrections to the information in this document may be incorporated in future releases. For full support information, please see the complete document, Avaya Support Notices for Software Documentation, document number 03-600758. To locate this document on our Web site, simply go to http://www.avaya.com/support and search for the document number in the search box. Documentation disclaimer Avaya Inc. is not responsible for any modifications, additions, or deletions to the original published version of this documentation unless such modifications, additions, or deletions were performed by Avaya. Customer and/or End User agree to indemnify and hold harmless Avaya, Avaya's agents, servants and employees against all claims, lawsuits, demands and judgments arising out of, or in connection with, subsequent modifications, additions or deletions to this documentation to the extent made by the Customer or End User. Link disclaimer Avaya Inc. is not responsible for the contents or reliability of any linked Web sites referenced elsewhere within this documentation, and Avaya does not necessarily endorse the products, services, or information described or offered within them. We cannot guarantee that these links will work all of the time and we have no control over the availability of the linked pages. Warranty Avaya Inc. provides a limited warranty on this product. Refer to your sales agreement to establish the terms of the limited warranty.

Bell Telephone Magazine

Bound Periodical. Public Library. This Volume is for REFERENCE USE ONLY. From the collection of the Prelinger Library, San Francisco, California, 2008.
Index to the Bell Telephone Magazine, Volume XXVI, 1947. Information Department, AMERICAN TELEPHONE AND TELEGRAPH COMPANY, New York 7, N. Y. PRINTED IN U. S. A.
BELL TELEPHONE MAGAZINE VOLUME XXVI, 1947 TABLE OF CONTENTS
SPRING, 1947: The Teacher, by A. M. Sullivan; A Tribute to Alexander Graham Bell, by Walter S. Gifford; Mr. Bell and Bell Laboratories, by Oliver E. Buckley; Two Men and a Piece of Wire and Faith; The Pioneers and the First Pioneer; The Bell Centennial in the Press; Helen Keller and Dr. Bell; The First Twenty-Five Years, by The Editors; America Is Calling, by William G. Thompson; Preparing Histories of the Telephone Business, by Samuel T. Gushing; Preparing a History of the Telephone in Connecticut, by Edward M. Foley, Jr.; Who's Who & What's What.
SUMMER, 1947: The Responsibility of Management in the Bell System, by Walter S. Gifford; Helping Customers Improve Telephone Usage Habits, by Justin E. Hoy; Employees Enjoy More than 70 Out-of-hour Activities, by John G. Simmons; Keeping Our Automotive Equipment Modern, by Temple G. Smith; Mark Twain and the Telephone; Crossed Wireless; Twenty-five Years Ago in the Bell Telephone Quarterly; Who's Who & What's What.
SEP 15 1949 BELL TELEPHONE MAGAZINE INDEX.

Telephone Line Interface FT 635 UELE

Telephone Line Interface FT 635 UELE. Competent in electronic systems.
Table of Contents: Connection possibilities; Connection examples; Carrier detection; Transmitter control; Transmitter follow-up time; Transmission time limit; Transmitter lead-up time; In- and outputs; Inputs; Outputs; Digital output control; AF-signals (telephone to radio); AF-signals (radio to telephone); AF-signaling pathways; DTMF; Tone Sequence Encoder and Decoder; Dial-up - Telephone -> Radio; Direct Dialing by DTMF - Telephone -> Radio; Automatic Connection - Telephone -> Radio; Automatic Call Forwarding with Direct Call - Tel -> Radio; Night Mode - Telephone -> Radio; Direct Dialing with DTMF - Radio -> Telephone; Radio -> Telephone; Direct Dialing with tone sequence - Radio -> Telephone; Speed Dial - Radio -> Telephone; Speed Dial Memory; Call Monitoring; Operating Mode; Voice Announcement (Option); Example for the configuration; Call progress tone detection; T11-55; EEPROM register layout; Register in TIM (Telephone Interface Module); DTMF encoder, decoder; Installation TIM (Telephone Interface Module); Connector pinout; RS232-Connection cable; Service program and setting; Ordering information; Technical data; General Safety Instructions; Revision remarks.
ft635ule_eng (21.06.2012)
FT635 Telephone Line Interface. The FT635 telephone line interface (UELE) consists of a Eurocard-format CPU card with an attached TIM (telephone interface module). There are 3 different housings available. The standard version is a flanged aluminum housing. There is also a 19" plug-in unit and a special version in the FT635 system housing. The version in the system housing has a connector to the two-way radio that is pin-compatible with the FT633UELE. In the standard version only the most important connectors are available as sockets on the front.

Authenticall: Efficient Identity and Content Authentication for Phone

AuthentiCall: Efficient Identity and Content Authentication for Phone Calls
Bradley Reaves, North Carolina State University; Logan Blue, Hadi Abdullah, Luis Vargas, Patrick Traynor, and Thomas Shrimpton, University of Florida
https://www.usenix.org/conference/usenixsecurity17/technical-sessions/presentation/reaves
This paper is included in the Proceedings of the 26th USENIX Security Symposium, August 16–18, 2017, Vancouver, BC, Canada. ISBN 978-1-931971-40-9. Open access to the Proceedings of the 26th USENIX Security Symposium is sponsored by USENIX.
Author contacts: reaves@ufl.edu (Bradley Reaves), bluel@ufl.edu (Logan Blue), hadi10102@ufl.edu (Hadi Abdullah), lfvargas14@ufl.edu (Luis Vargas).
Abstract: Phones are used to confirm some of our most sensitive transactions. From coordination between energy providers in the power grid to corroboration of high-value transfers with a financial institution, we rely on telephony to serve as a trustworthy communications path. However, such trust is not well placed given the widespread understanding of telephony’s inability to provide end-to-end authentication between callers.
interact call account owners. Power grid operators who detect phase synchronization problems requiring careful remediation speak on the phone with engineers in adjacent networks. Even the Federal Emergency Management Agency (FEMA) recommends that citizens in disaster areas rely on phones to communicate sensitive identity information (e.g., social security numbers) to assist in recovery [29]. In all of these cases, participants depend on telephony networks to help them validate

AT&T Phone User Guide

AT&T Phone user guide
Top Features. With AT&T U-verse Voice digital home phone service, you have more calling freedom and control than ever. Make calls by phone or Click to Call.
• How to make calls by phone or Click to Call
• Top Features
• How to manage Phone Features
• How to manage or change Voicemail Settings
• Need more help?
Dial from your phone. Dial from the Web.
Voicemail Viewer and Voicemail-to-Text (VMTT): Get VMTT on your qualifying iOS or Android™ device via the Voicemail Viewer App or choose to automatically forward your voicemail messages with VMTT to a designated email address accessible from your smartphone, tablet, or computer. Learn more: att.com/uversevoicemail
Call Blocking: Block unwanted calls from numbers you specify, avoid those with anonymous information, and screen the callers you want to hear from with this feature.
Caller ID on TV: See who is calling on your TV screen, and decide whether you want to answer (Phone and U-verse TV service required).
Questions? Visit att.com/digitalvoicemail for more information on setting up and customizing your voicemail.
Need more help? AT&T U-verse support: att.com/uversesupport, 1.800.288.2020, att.com/myatt. ¿Habla español?