Primer: Elements of Processor Architecture. the Hardware/Software Interplay

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Lecture 7 CUDA

Lecture 7 CUDA Dr. Wilson Rivera ICOM 6025: High Performance Computing Electrical and Computer Engineering Department University of Puerto Rico Outline • GPU vs CPU • CUDA execution Model • CUDA Types • CUDA programming • CUDA Timer ICOM 6025: High Performance Computing 2 CUDA • Compute Unified Device Architecture – Designed and developed by NVIDIA – Data parallel programming interface to GPUs • Requires an NVIDIA GPU (GeForce, Tesla, Quadro) ICOM 4036: Programming Languages 3 CUDA SDK GPU and CPU: The Differences ALU ALU Control ALU ALU Cache DRAM DRAM CPU GPU • GPU – More transistors devoted to computation, instead of caching or flow control – Threads are extremely lightweight • Very little creation overhead – Suitable for data-intensive computation • High arithmetic/memory operation ratio Grids and Blocks Host • Kernel executed as a grid of thread Device blocks Grid 1 – All threads share data memory Kernel Block Block Block space 1 (0, 0) (1, 0) (2, 0) • Thread block is a batch of threads, Block Block Block can cooperate with each other by: (0, 1) (1, 1) (2, 1) – Synchronizing their execution: For hazard-free shared Grid 2 memory accesses Kernel 2 – Efficiently sharing data through a low latency shared memory Block (1, 1) • Two threads from two different blocks cannot cooperate Thread Thread Thread Thread Thread (0, 0) (1, 0) (2, 0) (3, 0) (4, 0) – (Unless thru slow global Thread Thread Thread Thread Thread memory) (0, 1) (1, 1) (2, 1) (3, 1) (4, 1) • Threads and blocks have IDs Thread Thread Thread Thread Thread (0, 2) (1, 2) (2, -

Gs-35F-4677G

March 2013 NCS Technologies, Inc. Information Technology (IT) Schedule Contract Number: GS-35F-4677G FEDERAL ACQUISTIION SERVICE INFORMATION TECHNOLOGY SCHEDULE PRICELIST GENERAL PURPOSE COMMERCIAL INFORMATION TECHNOLOGY EQUIPMENT Special Item No. 132-8 Purchase of Hardware 132-8 PURCHASE OF EQUIPMENT FSC CLASS 7010 – SYSTEM CONFIGURATION 1. End User Computer / Desktop 2. Professional Workstation 3. Server 4. Laptop / Portable / Notebook FSC CLASS 7-25 – INPUT/OUTPUT AND STORAGE DEVICES 1. Display 2. Network Equipment 3. Storage Devices including Magnetic Storage, Magnetic Tape and Optical Disk NCS TECHNOLOGIES, INC. 7669 Limestone Drive Gainesville, VA 20155-4038 Tel: (703) 621-1700 Fax: (703) 621-1701 Website: www.ncst.com Contract Number: GS-35F-4677G – Option Year 3 Period Covered by Contract: May 15, 1997 through May 14, 2017 GENERAL SERVICE ADMINISTRATION FEDERAL ACQUISTIION SERVICE Products and ordering information in this Authorized FAS IT Schedule Price List is also available on the GSA Advantage! System. Agencies can browse GSA Advantage! By accessing GSA’s Home Page via Internet at www.gsa.gov. TABLE OF CONTENTS INFORMATION FOR ORDERING OFFICES ............................................................................................................................................................................................................................... TC-1 SPECIAL NOTICE TO AGENCIES – SMALL BUSINESS PARTICIPATION 1. Geographical Scope of Contract ............................................................................................................................................................................................................................. -

ATI Radeon™ HD 4870 Computation Highlights

AMD Entering the Golden Age of Heterogeneous Computing Michael Mantor Senior GPU Compute Architect / Fellow AMD Graphics Product Group [email protected] 1 The 4 Pillars of massively parallel compute offload •Performance M’Moore’s Law Î 2x < 18 Month s Frequency\Power\Complexity Wall •Power Parallel Î Opportunity for growth •Price • Programming Models GPU is the first successful massively parallel COMMODITY architecture with a programming model that managgped to tame 1000’s of parallel threads in hardware to perform useful work efficiently 2 Quick recap of where we are – Perf, Power, Price ATI Radeon™ HD 4850 4x Performance/w and Performance/mm² in a year ATI Radeon™ X1800 XT ATI Radeon™ HD 3850 ATI Radeon™ HD 2900 XT ATI Radeon™ X1900 XTX ATI Radeon™ X1950 PRO 3 Source of GigaFLOPS per watt: maximum theoretical performance divided by maximum board power. Source of GigaFLOPS per $: maximum theoretical performance divided by price as reported on www.buy.com as of 9/24/08 ATI Radeon™HD 4850 Designed to Perform in Single Slot SP Compute Power 1.0 T-FLOPS DP Compute Power 200 G-FLOPS Core Clock Speed 625 Mhz Stream Processors 800 Memory Type GDDR3 Memory Capacity 512 MB Max Board Power 110W Memory Bandwidth 64 GB/Sec 4 ATI Radeon™HD 4870 First Graphics with GDDR5 SP Compute Power 1.2 T-FLOPS DP Compute Power 240 G-FLOPS Core Clock Speed 750 Mhz Stream Processors 800 Memory Type GDDR5 3.6Gbps Memory Capacity 512 MB Max Board Power 160 W Memory Bandwidth 115.2 GB/Sec 5 ATI Radeon™HD 4870 X2 Incredible Balance of Performance,,, Power, Price -

AMD Accelerated Parallel Processing Opencl Programming Guide

AMD Accelerated Parallel Processing OpenCL Programming Guide November 2013 rev2.7 © 2013 Advanced Micro Devices, Inc. All rights reserved. AMD, the AMD Arrow logo, AMD Accelerated Parallel Processing, the AMD Accelerated Parallel Processing logo, ATI, the ATI logo, Radeon, FireStream, FirePro, Catalyst, and combinations thereof are trade- marks of Advanced Micro Devices, Inc. Microsoft, Visual Studio, Windows, and Windows Vista are registered trademarks of Microsoft Corporation in the U.S. and/or other jurisdic- tions. Other names are for informational purposes only and may be trademarks of their respective owners. OpenCL and the OpenCL logo are trademarks of Apple Inc. used by permission by Khronos. The contents of this document are provided in connection with Advanced Micro Devices, Inc. (“AMD”) products. AMD makes no representations or warranties with respect to the accuracy or completeness of the contents of this publication and reserves the right to make changes to specifications and product descriptions at any time without notice. The information contained herein may be of a preliminary or advance nature and is subject to change without notice. No license, whether express, implied, arising by estoppel or other- wise, to any intellectual property rights is granted by this publication. Except as set forth in AMD’s Standard Terms and Conditions of Sale, AMD assumes no liability whatsoever, and disclaims any express or implied warranty, relating to its products including, but not limited to, the implied warranty of merchantability, fitness for a particular purpose, or infringement of any intellectual property right. AMD’s products are not designed, intended, authorized or warranted for use as compo- nents in systems intended for surgical implant into the body, or in other applications intended to support or sustain life, or in any other application in which the failure of AMD’s product could create a situation where personal injury, death, or severe property or envi- ronmental damage may occur. -

Computer Organization and Architecture Designing for Performance Ninth Edition

COMPUTER ORGANIZATION AND ARCHITECTURE DESIGNING FOR PERFORMANCE NINTH EDITION William Stallings Boston Columbus Indianapolis New York San Francisco Upper Saddle River Amsterdam Cape Town Dubai London Madrid Milan Munich Paris Montréal Toronto Delhi Mexico City São Paulo Sydney Hong Kong Seoul Singapore Taipei Tokyo Editorial Director: Marcia Horton Designer: Bruce Kenselaar Executive Editor: Tracy Dunkelberger Manager, Visual Research: Karen Sanatar Associate Editor: Carole Snyder Manager, Rights and Permissions: Mike Joyce Director of Marketing: Patrice Jones Text Permission Coordinator: Jen Roach Marketing Manager: Yez Alayan Cover Art: Charles Bowman/Robert Harding Marketing Coordinator: Kathryn Ferranti Lead Media Project Manager: Daniel Sandin Marketing Assistant: Emma Snider Full-Service Project Management: Shiny Rajesh/ Director of Production: Vince O’Brien Integra Software Services Pvt. Ltd. Managing Editor: Jeff Holcomb Composition: Integra Software Services Pvt. Ltd. Production Project Manager: Kayla Smith-Tarbox Printer/Binder: Edward Brothers Production Editor: Pat Brown Cover Printer: Lehigh-Phoenix Color/Hagerstown Manufacturing Buyer: Pat Brown Text Font: Times Ten-Roman Creative Director: Jayne Conte Credits: Figure 2.14: reprinted with permission from The Computer Language Company, Inc. Figure 17.10: Buyya, Rajkumar, High-Performance Cluster Computing: Architectures and Systems, Vol I, 1st edition, ©1999. Reprinted and Electronically reproduced by permission of Pearson Education, Inc. Upper Saddle River, New Jersey, Figure 17.11: Reprinted with permission from Ethernet Alliance. Credits and acknowledgments borrowed from other sources and reproduced, with permission, in this textbook appear on the appropriate page within text. Copyright © 2013, 2010, 2006 by Pearson Education, Inc., publishing as Prentice Hall. All rights reserved. Manufactured in the United States of America. -

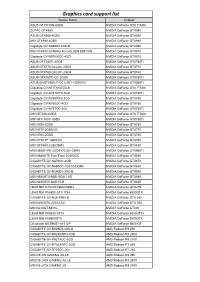

Graphics Card Support List

Graphics card support list Device Name Chipset ASUS GTXTITAN-6GD5 NVIDIA GeForce GTX TITAN ZOTAC GTX980 NVIDIA GeForce GTX980 ASUS GTX980-4GD5 NVIDIA GeForce GTX980 MSI GTX980-4GD5 NVIDIA GeForce GTX980 Gigabyte GV-N980D5-4GD-B NVIDIA GeForce GTX980 MSI GTX970 GAMING 4G GOLDEN EDITION NVIDIA GeForce GTX970 Gigabyte GV-N970IXOC-4GD NVIDIA GeForce GTX970 ASUS GTX780TI-3GD5 NVIDIA GeForce GTX780Ti ASUS GTX770-DC2OC-2GD5 NVIDIA GeForce GTX770 ASUS GTX760-DC2OC-2GD5 NVIDIA GeForce GTX760 ASUS GTX750TI-OC-2GD5 NVIDIA GeForce GTX750Ti ASUS ENGTX560-Ti-DCII/2D1-1GD5/1G NVIDIA GeForce GTX560Ti Gigabyte GV-NTITAN-6GD-B NVIDIA GeForce GTX TITAN Gigabyte GV-N78TWF3-3GD NVIDIA GeForce GTX780Ti Gigabyte GV-N780WF3-3GD NVIDIA GeForce GTX780 Gigabyte GV-N760OC-4GD NVIDIA GeForce GTX760 Gigabyte GV-N75TOC-2GI NVIDIA GeForce GTX750Ti MSI NTITAN-6GD5 NVIDIA GeForce GTX TITAN MSI GTX 780Ti 3GD5 NVIDIA GeForce GTX780Ti MSI N780-3GD5 NVIDIA GeForce GTX780 MSI N770-2GD5/OC NVIDIA GeForce GTX770 MSI N760-2GD5 NVIDIA GeForce GTX760 MSI N750 TF 1GD5/OC NVIDIA GeForce GTX750 MSI GTX680-2GB/DDR5 NVIDIA GeForce GTX680 MSI N660Ti-PE-2GD5-OC/2G-DDR5 NVIDIA GeForce GTX660Ti MSI N680GTX Twin Frozr 2GD5/OC NVIDIA GeForce GTX680 GIGABYTE GV-N670OC-2GD NVIDIA GeForce GTX670 GIGABYTE GV-N650OC-1GI/1G-DDR5 NVIDIA GeForce GTX650 GIGABYTE GV-N590D5-3GD-B NVIDIA GeForce GTX590 MSI N580GTX-M2D15D5/1.5G NVIDIA GeForce GTX580 MSI N465GTX-M2D1G-B NVIDIA GeForce GTX465 LEADTEK GTX275/896M-DDR3 NVIDIA GeForce GTX275 LEADTEK PX8800 GTX TDH NVIDIA GeForce 8800GTX GIGABYTE GV-N26-896H-B -

NVIDIA Quadro RTX for V-Ray Next

NVIDIA QUADRO RTX V-RAY NEXT GPU Image courtesy of © Dabarti Studio, rendered with V-Ray GPU Quadro RTX Accelerates V-Ray Next GPU Rendering Solutions for V-Ray Next GPU V-Ray Next GPU taps into the power of NVIDIA® Quadro® NVIDIA Quadro® provides a wide range of RTX-enabled RTX™ to speed up production rendering with dedicated RT solutions for desktop, mobile, server-based rendering, and Cores for ray tracing and Tensor Cores for AI-accelerated virtual workstations with NVIDIA Quadro Virtual Data denoising.¹ With up to 18X faster rendering than CPU-based Center Workstation (Quadro vDWS) software.2 With up to 96 solutions and enhanced performance with NVIDIA NVLink™, gigabytes (GB) of GPU memory available,3 Quadro RTX V-Ray Next GPU with RTX support provides incredible provides the power you need for the largest professional performance improvements for your rendering workloads. graphics and rendering workloads. “ Accelerating artist productivity is always our top Benchmark: V-Ray Next GPU Rendering Performance Increase on Quadro RTX GPUs priority, so we’re quick to take advantage of the latest ray-tracing hardware breakthroughs. By Quadro RTX 6000 x2 1885 ™ Quadro RTX 6000 104 supporting NVIDIA RTX in V-Ray GPU, we’re Quadro RTX 4000 783 bringing our customers an exciting new boost in PU 1 0 2 4 6 8 10 12 14 16 18 20 their GPU production rendering speeds.” Relatve Performance – Phillip Miller, Vice President, Product Management, Chaos Group Desktop performance Tests run on 1x Xeon old 6154 3 Hz (37 Hz Turbo), 64 B DDR4 RAM Wn10x64 Drver verson 44128 Performance results may vary dependng on the scene NVIDIA Quadro professional graphics solutions are verified and recommended for the most demanding projects by Chaos Group. -

NVIDIA Launches Tegra X1 Mobile Super Chip

NVIDIA Launches Tegra X1 Mobile Super Chip Maxwell GPU Architecture Delivers First Teraflops Mobile Processor, Powering Deep Learning and Computer Vision Applications NVIDIA today unveiled Tegra® X1, its next-generation mobile super chip with over one teraflops of processing power – delivering capabilities that open the door to unprecedented graphics and sophisticated deep learning and computer vision applications. Tegra X1 is built on the same NVIDIA Maxwell™ GPU architecture rolled out only months ago for the world's top-performing gaming graphics card, the GeForce® GTX 980. The 256-core Tegra X1 provides twice the performance of its predecessor, the Tegra K1, which is based on the previous-generation Kepler™ architecture and debuted at last year's Consumer Electronics Show. Tegra processors are built for embedded products, mobile devices, autonomous machines and automotive applications. Tegra X1 will begin appearing in the first half of the year. It will be featured in the newly announced NVIDIA DRIVE™ car computers. DRIVE PX is an auto-pilot computing platform that can process video from up to 12 onboard cameras to run capabilities providing Surround-Vision, for a seamless 360-degree view around the car, and Auto-Valet, for true self-parking. DRIVE CX is a complete cockpit platform designed to power the advanced graphics required across the increasing number of screens used for digital clusters, infotainment, head-up displays, virtual mirrors and rear-seat entertainment. "We see a future of autonomous cars, robots and drones that see and learn, with seeming intelligence that is hard to imagine," said Jen-Hsun Huang, CEO and co-founder, NVIDIA. -

Msi Geforce Gtx 560 Ti (Fermi) Driver Download Available 4 Files for MSI N560GTX-Ti Twin Frozr II-SOC

msi geforce gtx 560 ti (fermi) driver download Available 4 files for MSI N560GTX-Ti Twin Frozr II-SOC. � Online update BIOS/Driver/Firmware/Utility. � Live Monitor auto-detects and suggests the latest BIOS/Driver/Utilities information. Utility Update only for MSI Mainboard Utility Only. Utility - MSI Afterburner. Windows 7 32/64 bits-Vista 32/64 bits-XP 32/64 bits. MSI's own "Afterburner" software is currently the most powerful graphics card overclocking software available. Aside from the basic frequency adjustment and system monitor, this software allows the user to adjust the voltage for the GPU. This important feature saves all the hassle of having to adjust the circuit board in order to provide a higher voltage to the GPU. Additionally, the software's automatic fan speed control has received a lot of praise from enthusiasts all over the world. With MSI's "Afterburner", the decision to run the fan quietly or for high-performance is in your hands! DRIVER GEFORCE GTX 560 TI FOR WINDOWS 7 DOWNLOAD. Geforce gtx 590, geforce gtx 580, geforce gtx 570, geforce gtx 560 ti, geforce gtx 560 se, geforce gtx 560, geforce gtx 555, geforce gtx 550 ti, geforce gt 545, geforce gt 530, geforce gt 520, geforce 510. Hey folks, which belongs to push twice more than reference. Built from the ground up for directx 11 tessellation, the geforce gtx 550 ti delivers revolutionary geometry performance for the ultimate next generation dx11 gaming experience. I also was having serious problems with the new graphics driver in windows 10 on a gtx 560m, i ended up going to device manager, deleting the driver from there, then restarting and windows restored my previous working driver. -

GPU-Based Deep Learning Inference

Whitepaper GPU-Based Deep Learning Inference: A Performance and Power Analysis November 2015 1 Contents Abstract ......................................................................................................................................................... 3 Introduction .................................................................................................................................................. 3 Inference versus Training .............................................................................................................................. 4 GPUs Excel at Neural Network Inference ..................................................................................................... 5 Inference Optimizations in Caffe and cuDNN 4 ........................................................................................ 5 Experimental Setup and Testing Methodology ........................................................................................ 7 Inference on Small and Large GPUs .......................................................................................................... 8 Conclusion ................................................................................................................................................... 10 References .................................................................................................................................................. 10 2 Abstract Deep learning methods are revolutionizing various areas of machine perception. On a -

Fast Calculation of the Lomb-Scargle Periodogram Using Graphics Processing Units 3

Draft version August 7, 2018 A Preprint typeset using LTEX style emulateapj v. 11/10/09 FAST CALCULATION OF THE LOMB-SCARGLE PERIODOGRAM USING GRAPHICS PROCESSING UNITS R. H. D. Townsend Department of Astronomy, University of Wisconsin-Madison, Sterling Hall, 475 N. Charter Street, Madison, WI 53706, USA; [email protected] Draft version August 7, 2018 ABSTRACT I introduce a new code for fast calculation of the Lomb-Scargle periodogram, that leverages the computing power of graphics processing units (GPUs). After establishing a background to the newly emergent field of GPU computing, I discuss the code design and narrate key parts of its source. Benchmarking calculations indicate no significant differences in accuracy compared to an equivalent CPU-based code. However, the differences in performance are pronounced; running on a low-end GPU, the code can match 8 CPU cores, and on a high-end GPU it is faster by a factor approaching thirty. Applications of the code include analysis of long photometric time series obtained by ongoing satellite missions and upcoming ground-based monitoring facilities; and Monte-Carlo simulation of periodogram statistical properties. Subject headings: methods: data analysis — methods: numerical — techniques: photometric — stars: oscillations 1. INTRODUCTION of the newly emergent field of GPU computing; then, Astronomical time-series observations are often charac- Section 3 reviews the formalism defining the L-S peri- terized by uneven temporal sampling (e.g., due to trans- odogram, and Section 4 presents a GPU-based code im- formation to the heliocentric frame) and/or non-uniform plementing this formalism. Benchmarking calculations coverage (e.g., from day/night cycles, or radiation belt to evaluate the accuracy and performance of the code passages). -

How to Download 382.33 Nvidia Driver Geforce Game Ready Driver

how to download 382.33 nvidia driver GeForce Game Ready Driver. As part of the NVIDIA Notebook Driver Program, this is a reference driver that can be installed on supported NVIDIA notebook GPUs. However, please note that your notebook original equipment manufacturer (OEM) provides certified drivers for your specific notebook on their website. NVIDIA recommends that you check with your notebook OEM about recommended software updates for your notebook. OEMs may not provide technical support for issues that arise from the use of this driver. Before downloading this driver: It is recommended that you backup your current system configuration. Click here for instructions. Game Ready Drivers provide the best possible gaming experience for all major new releases, including Virtual Reality games. Prior to a new title launching, our driver team is working up until the last minute to ensure every performance tweak and bug fix is included for the best gameplay on day-1. Game Ready Provides the optimal gaming experience for Tekken 7 and Star Trek Bridge Crew. Notebooks supporting Hybrid Power technology are not supported (NVIDIA Optimus technology is supported). The following Sony VAIO notebooks are included in the Verde notebook program: Sony VAIO F Series with NVIDIA GeForce 310M, GeForce GT 330M, GeForce GT 425M, GeForce GT 520M or GeForce GT 540M. Other Sony VAIO notebooks are not included (please contact Sony for driver support). Fujitsu notebooks are not included (Fujitsu Siemens notebooks are included). GeForce GTX 1080, GeForce GTX 1070, GeForce GTX 1060, GeForce GTX 1050 Ti, GeForce GTX 1050. GeForce 900M Series (Notebooks): GeForce GTX 980, GeForce GTX 980M, GeForce GTX 970M, GeForce GTX 965M, GeForce GTX 960M, GeForce GTX 950M, GeForce 945M, GeForce 940MX, GeForce 930MX, GeForce 920MX, GeForce 940M, GeForce 930M, GeForce 920M, GeForce 910M.