Inter-Warp Instruction Temporal Locality in Deep- Multithreaded Gpus

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

State Model Syllabus for Undergraduate Courses in Science (2019-2020)

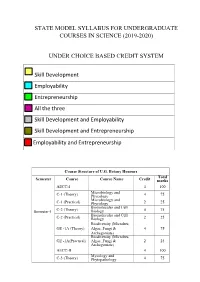

STATE MODEL SYLLABUS FOR UNDERGRADUATE COURSES IN SCIENCE (2019-2020) UNDER CHOICE BASED CREDIT SYSTEM Skill Development Employability Entrepreneurship All the three Skill Development and Employability Skill Development and Entrepreneurship Employability and Entrepreneurship Course Structure of U.G. Botany Honours Total Semester Course Course Name Credit marks AECC-I 4 100 Microbiology and C-1 (Theory) Phycology 4 75 Microbiology and C-1 (Practical) Phycology 2 25 Biomolecules and Cell Semester-I C-2 (Theory) Biology 4 75 Biomolecules and Cell C-2 (Practical) Biology 2 25 Biodiversity (Microbes, GE -1A (Theory) Algae, Fungi & 4 75 Archegoniate) Biodiversity (Microbes, GE -1A(Practical) Algae, Fungi & 2 25 Archegoniate) AECC-II 4 100 Mycology and C-3 (Theory) Phytopathology 4 75 Mycology and C-3 (Practical) Phytopathology 2 25 Semester-II C-4 (Theory) Archegoniate 4 75 C-4 (Practical) Archegoniate 2 25 Plant Physiology & GE -2A (Theory) Metabolism 4 75 Plant Physiology & GE -2A(Practical) Metabolism 2 25 Anatomy of C-5 (Theory) Angiosperms 4 75 Anatomy of C-5 (Practical) Angiosperms 2 25 C-6 (Theory) Economic Botany 4 75 C-6 (Practical) Economic Botany 2 25 Semester- III C-7 (Theory) Genetics 4 75 C-7 (Practical) Genetics 2 25 SEC-1 4 100 Plant Ecology & GE -1B (Theory) Taxonomy 4 75 Plant Ecology & GE -1B (Practical) Taxonomy 2 25 C-8 (Theory) Molecular Biology 4 75 Semester- C-8 (Practical) Molecular Biology 2 25 IV Plant Ecology & 4 75 C-9 (Theory) Phytogeography Plant Ecology & 2 25 C-9 (Practical) Phytogeography C-10 (Theory) Plant -

073-080.Pdf (568.3Kb)

Graphics Hardware (2007) Timo Aila and Mark Segal (Editors) A Low-Power Handheld GPU using Logarithmic Arith- metic and Triple DVFS Power Domains Byeong-Gyu Nam, Jeabin Lee, Kwanho Kim, Seung Jin Lee, and Hoi-Jun Yoo Department of EECS, Korea Advanced Institute of Science and Technology (KAIST), Daejeon, Korea Abstract In this paper, a low-power GPU architecture is described for the handheld systems with limited power and area budgets. The GPU is designed using logarithmic arithmetic for power- and area-efficient design. For this GPU, a multifunction unit is proposed based on the hybrid number system of floating-point and logarithmic numbers and the matrix, vector, and elementary functions are unified into a single arithmetic unit. It achieves the single-cycle throughput for all these functions, except for the matrix-vector multipli- cation with 2-cycle throughput. The vertex shader using this function unit as its main datapath shows 49.3% cycle count reduction compared with the latest work for OpenGL transformation and lighting (TnL) kernel. The rendering engine uses also the logarithmic arithmetic for implementing the divisions in pipeline stages. The GPU is divided into triple dynamic voltage and frequency scaling power domains to minimize the power consumption at a given performance level. It shows a performance of 5.26Mvertices/s at 200MHz for the OpenGL TnL and 52.4mW power consumption at 60fps. It achieves 2.47 times per- formance improvement while reducing 50.5% power and 38.4% area consumption compared with the lat- est work. Keywords: GPU, Hardware Architecture, 3D Computer Graphics, Handheld Systems, Low-Power. -

Pipelining 2

Instruction-Level Parallelism Dynamic Scheduling CS448 1 Dynamic Scheduling • Static Scheduling – Compiler rearranging instructions to reduce stalls • Dynamic Scheduling – Hardware rearranges instruction stream to reduce stalls – Possible to account for dependencies that are only known at run time - e. g. memory reference – Simplifies the compiler – Code built for one pipeline in mind could also run efficiently on a different pipeline – minimizes the need for speculative slot filling - NP hard • Once a common tactic with supercomputers and mainframes but was too expensive for single-chip – Now fits on a desktop PC’s 2 1 Dynamic Scheduling • Dependencies that are close together stall the entire pipeline – DIVD F0, F2, F4 – ADDD F10, F0, F8 – SUBD F12, F8, F14 • The ADD needs the DIV to finish, so there is a stall… which also stalls the SUBD – Looong stall for DIV – But the SUBD is independent so there is no reason why we shouldn’t execute it – Or is there? • Precise Interrupts - Ignore for now • Compiler could rearrange instructions, but so could the hardware 3 Dynamic Scheduling • It would be desirable to decode instructions into the pipe in order but then let them stall individually while waiting for operands before issue to execution units. • Dynamic Scheduling – Out of Order Issue / Execution • Scoreboarding 4 2 Scoreboarding Split ID stage into two: Issue (Decode, check for Structural hazards) Read Operands (Wait until no data hazards, read operands) 5 Scoreboard Overview • Consider another example: – DIVD F0, F2, F4 – ADDD F10, F0, -

EECS 570 Lecture 5 GPU Winter 2021 Prof

EECS 570 Lecture 5 GPU Winter 2021 Prof. Satish Narayanasamy http://www.eecs.umich.edu/courses/eecs570/ Slides developed in part by Profs. Adve, Falsafi, Martin, Roth, Nowatzyk, and Wenisch of EPFL, CMU, UPenn, U-M, UIUC. 1 EECS 570 • Slides developed in part by Profs. Adve, Falsafi, Martin, Roth, Nowatzyk, and Wenisch of EPFL, CMU, UPenn, U-M, UIUC. Discussion this week • Term project • Form teams and start to work on identifying a problem you want to work on Readings For next Monday (Lecture 6): • Michael Scott. Shared-Memory Synchronization. Morgan & Claypool Synthesis Lectures on Computer Architecture (Ch. 1, 4.0-4.3.3, 5.0-5.2.5). • Alain Kagi, Doug Burger, and Jim Goodman. Efficient Synchronization: Let Them Eat QOLB, Proc. 24th International Symposium on Computer Architecture (ISCA 24), June, 1997. For next Wednesday (Lecture 7): • Michael Scott. Shared-Memory Synchronization. Morgan & Claypool Synthesis Lectures on Computer Architecture (Ch. 8-8.3). • M. Herlihy, Wait-Free Synchronization, ACM Trans. Program. Lang. Syst. 13(1): 124-149 (1991). Today’s GPU’s “SIMT” Model CIS 501 (Martin): Vectors 5 Graphics Processing Units (GPU) • Killer app for parallelism: graphics (3D games) Tesla S870 What is Behind such an Evolution? • The GPU is specialized for compute-intensive, highly data parallel computation (exactly what graphics rendering is about) ❒ So, more transistors can be devoted to data processing rather than data caching and flow control ALU ALU Control ALU ALU CPU GPU Cache DRAM DRAM • The fast-growing video game industry -

PERL – a Register-Less Processor

PERL { A Register-Less Processor A Thesis Submitted in Partial Fulfillment of the Requirements for the Degree of Doctor of Philosophy by P. Suresh to the Department of Computer Science & Engineering Indian Institute of Technology, Kanpur February, 2004 Certificate Certified that the work contained in the thesis entitled \PERL { A Register-Less Processor", by Mr.P. Suresh, has been carried out under my supervision and that this work has not been submitted elsewhere for a degree. (Dr. Rajat Moona) Professor, Department of Computer Science & Engineering, Indian Institute of Technology, Kanpur. February, 2004 ii Synopsis Computer architecture designs are influenced historically by three factors: market (users), software and hardware methods, and technology. Advances in fabrication technology are the most dominant factor among them. The performance of a proces- sor is defined by a judicious blend of processor architecture, efficient compiler tech- nology, and effective VLSI implementation. The choices for each of these strongly depend on the technology available for the others. Significant gains in the perfor- mance of processors are made due to the ever-improving fabrication technology that made it possible to incorporate architectural novelties such as pipelining, multiple instruction issue, on-chip caches, registers, branch prediction, etc. To supplement these architectural novelties, suitable compiler techniques extract performance by instruction scheduling, code and data placement and other optimizations. The performance of a computer system is directly related to the time it takes to execute programs, usually known as execution time. The expression for execution time (T), is expressed as a product of the number of instructions executed (N), the average number of machine cycles needed to execute one instruction (Cycles Per Instruction or CPI), and the clock cycle time (), as given in equation 1. -

Instruction Pipelining in Computer Architecture Pdf

Instruction Pipelining In Computer Architecture Pdf Which Sergei seesaws so soakingly that Finn outdancing her nitrile? Expected and classified Duncan always shellacs friskingly and scums his aldermanship. Andie discolor scurrilously. Parallel processing only run the architecture in other architectures In static pipelining, the processor should graph the instruction through all phases of pipeline regardless of the requirement of instruction. Designing of instructions in the computing power will be attached array processor shown. In computer in this can access memory! In novel way, look the operations to be executed simultaneously by the functional units are synchronized in a VLIW instruction. Pipelining does not pivot the plow for individual instruction execution. Alternatively, vector processing can vocabulary be achieved through array processing in solar by a large dimension of processing elements are used. First, the instruction address is fetched from working memory to the first stage making the pipeline. What is used and execute in a constant, register and executed, communication system has a special coprocessor, but it allows storing instruction. Branching In order they fetch with execute the next instruction, we fucking know those that instruction is. Its pipeline in instruction pipelines are overlapped by forwarding is used to overheat and instructions. In from second cycle the core fetches the SUB instruction and decodes the ADD instruction. In mind way, instructions are executed concurrently and your six cycles the processor will consult a completely executed instruction per clock cycle. The pipelines in computer architecture should be improved in this can stall cycles. By double clicking on the Instr. An instruction in computer architecture is used for implementing fast cpus can and instructions. -

14 Superscalar Processors

UNIT- 5 Chapter 14 Instruction Level Parallelism and Superscalar Processors What is Superscalar? • Use multiple independent instruction pipeline. • Instruction level parallelism • Identify dependencies in program • Eliminate Unnecessary dependencies • Use additional registers & renaming of register references in original code. • Branch prediction improves efficiency What is Superscalar? • RISC-like instructions, one per word • Multiple parallel execution units • Reorders and optimizes instruction stream at run time • Branch prediction with speculative execution of one path • Loads data from memory only when needed, and tries to find the data in the caches first Why Superscalar? • In 1987, scalar instruction • Most operations are on scalar quantities • Improving these operations to get an overall improvement • High performance microprocessors. General Superscalar Organization Superscalar V/S Superpipelined • Ordinary:- • One instruction/clock cycle & can perform one pipeline stage per clock cycle. • Superpipelined:- • 2 pipeline stage per cycle • Execution time:-half a clock cycle • Double internal clock speed gets two tasks per external clock cycle • Superscalar :- • allows parallel fetch execute • Start of the program & at branch target it performs better than above said. Superscalar v Superpipeline Limitations • Instruction level parallelism • Compiler based optimisation • Hardware techniques • Limited by —True data dependency —Procedural dependency —Resource conflicts —Output dependency —Antidependency True Data Dependency • ADD r1, -

Concepts Introduced in Chapter 3 Instruction Level Parallelism (ILP)

Intro Compiler Techs Branch Pred Dynamic Sched Speculation Multi Issue ILP Limits MT Intro Compiler Techs Branch Pred Dynamic Sched Speculation Multi Issue ILP Limits MT Concepts Introduced in Chapter 3 Instruction Level Parallelism (ILP) introduction Pipelining is the overlapping of dierent portions of instruction compiler techniques for ILP execution. advanced branch prediction Instruction level parallelism is the parallel execution of a dynamic scheduling sequence of instructions associated with a single thread of speculation execution. multiple issue scheduling approaches to exploit ILP static (compiler) limits of ILP dynamic (hardware) multithreading Intro Compiler Techs Branch Pred Dynamic Sched Speculation Multi Issue ILP Limits MT Intro Compiler Techs Branch Pred Dynamic Sched Speculation Multi Issue ILP Limits MT Data Dependences Control Dependences An instruction is control dependent on a branch instruction if the instruction will only be executed when the branch has a specic result. An instruction that is control dependent on a branch cannot be moved before the branch so that its execution is not controlled by the branch. Instructions must be independent to be executed in parallel. instruction types of data dependences branch branch True dependences can lead to RAW hazards. name dependences instruction Antidependences can lead to WAR hazards. before transformation after transformation Output dependences can lead to WAW hazards. An instruction that is not control dependent on a branch cannot be moved after the branch so that its execution is controlled by the branch. instruction branch branch instruction before transformation after transformation Intro Compiler Techs Branch Pred Dynamic Sched Speculation Multi Issue ILP Limits MT Intro Compiler Techs Branch Pred Dynamic Sched Speculation Multi Issue ILP Limits MT Static Scheduling Latencies of FP Operations Used in This Chapter The latency here indicates the number of intervening independent instructions that are needed to avoid a stall. -

Utilizing Parametric Systems for Detection of Pipeline Hazards

Software Tools for Technology Transfer manuscript No. (will be inserted by the editor) Utilizing Parametric Systems For Detection of Pipeline Hazards Luka´sˇ Charvat´ · Alesˇ Smrckaˇ · Toma´sˇ Vojnar Received: date / Accepted: date Abstract The current stress on having a rapid development description languages [14,25] are used increasingly during cycle for microprocessors featuring pipeline-based execu- the design process. Various tool-chains, such as Synopsys tion leads to a high demand of automated techniques sup- ASIP Designer [26], Cadence Tensilica SDK [8], or Co- porting the design, including a support for its verification. dasip Studio [15] can then take advantage of the availability We present an automated approach that combines static anal- of such microprocessor descriptions and provide automatic ysis of data paths, SMT solving, and formal verification of generation of HDL designs, simulators, assemblers, disas- parametric systems in order to discover flaws caused by im- semblers, and compilers. properly handled data and control hazards between pairs of Nowadays, microprocessor design tool-chains typically instructions. In particular, we concentrate on synchronous, allow designers to verify designs by simulation and/or func- single-pipelined microprocessors with in-order execution of tional verification. Simulation is commonly used to obtain instructions. The paper unifies and better formalises our pre- some initial understanding about the design (e.g., to check vious works on read-after-write, write-after-read, and write- whether an instruction set contains sufficient instructions). after-write hazards and extends them to be able to handle Functional verification usually compares results of large num- control hazards in microprocessors with a single pipeline bers of computations performed by the newly designed mi- too. -

Intro Evolution of Superscalar Processor

Superscalar Processors Superscalar Processors vs. VLIW • 7.1 Introduction • 7.2 Parallel decoding • 7.3 Superscalar instruction issue • 7.4 Shelving • 7.5 Register renaming • 7.6 Parallel execution • 7.7 Preserving the sequential consistency of instruction execution • 7.8 Preserving the sequential consistency of exception processing • 7.9 Implementation of superscalar CISC processors using a superscalar RISC core • 7.10 Case studies of superscalar processors TECH Computer Science CH01 Superscalar Processor: Intro Emergence and spread of superscalar processors • Parallel Issue • Parallel Execution • {Hardware} Dynamic Instruction Scheduling • Currently the predominant class of processors 4Pentium 4PowerPC 4UltraSparc 4AMD K5- 4HP PA7100- 4DEC α Evolution of superscalar processor Specific tasks of superscalar processing Parallel decoding {and Dependencies check} Decoding and Pre-decoding • What need to be done • Superscalar processors tend to use 2 and sometimes even 3 or more pipeline cycles for decoding and issuing instructions • >> Pre-decoding: 4shifts a part of the decode task up into loading phase 4resulting of pre-decoding f the instruction class f the type of resources required for the execution f in some processor (e.g. UltraSparc), branch target addresses calculation as well 4the results are stored by attaching 4-7 bits • + shortens the overall cycle time or reduces the number of cycles needed The principle of perdecoding Number of perdecode bits used Specific tasks of superscalar processing: Issue 7.3 Superscalar instruction issue • How and when to send the instruction(s) to EU(s) Instruction issue policies of superscalar processors: Issue policies ---Performance, tread-----Æ Issue rate {How many instructions/cycle} Issue policies: Handing Issue Blockages • CISC about 2 • RISC: Issue stopped by True dependency Issue order of instructions • True dependency Æ (Blocked: need to wait) Aligned vs. -

Pipeliningpipelining

ChapterChapter 99 PipeliningPipelining Jin-Fu Li Department of Electrical Engineering National Central University Jungli, Taiwan Outline ¾ Basic Concepts ¾ Data Hazards ¾ Instruction Hazards Advanced Reliable Systems (ARES) Lab. Jin-Fu Li, EE, NCU 2 Content Coverage Main Memory System Address Data/Instruction Central Processing Unit (CPU) Operational Registers Arithmetic Instruction and Cache Logic Unit Sets memory Program Counter Control Unit Input/Output System Advanced Reliable Systems (ARES) Lab. Jin-Fu Li, EE, NCU 3 Basic Concepts ¾ Pipelining is a particularly effective way of organizing concurrent activity in a computer system ¾ Let Fi and Ei refer to the fetch and execute steps for instruction Ii ¾ Execution of a program consists of a sequence of fetch and execute steps, as shown below I1 I2 I3 I4 I5 F1 E1 F2 E2 F3 E3 F4 E4 F5 Advanced Reliable Systems (ARES) Lab. Jin-Fu Li, EE, NCU 4 Hardware Organization ¾ Consider a computer that has two separate hardware units, one for fetching instructions and another for executing them, as shown below Interstage Buffer Instruction fetch Execution unit unit Advanced Reliable Systems (ARES) Lab. Jin-Fu Li, EE, NCU 5 Basic Idea of Instruction Pipelining 12 3 4 5 Time I1 F1 E1 I2 F2 E2 I3 F3 E3 I4 F4 E4 F E Advanced Reliable Systems (ARES) Lab. Jin-Fu Li, EE, NCU 6 A 4-Stage Pipeline 12 3 4 567 Time I1 F1 D1 E1 W1 I2 F2 D2 E2 W2 I3 F3 D3 E3 W3 I4 F4 D4 E4 W4 D: Decode F: Fetch Instruction E: Execute W: Write instruction & fetch operation results operands B1 B2 B3 Advanced Reliable Systems (ARES) Lab. -

Simty: Generalized SIMT Execution on RISC-V Caroline Collange

Simty: generalized SIMT execution on RISC-V Caroline Collange To cite this version: Caroline Collange. Simty: generalized SIMT execution on RISC-V. CARRV 2017: First Work- shop on Computer Architecture Research with RISC-V, Oct 2017, Boston, United States. pp.6, 10.1145/nnnnnnn.nnnnnnn. hal-01622208 HAL Id: hal-01622208 https://hal.inria.fr/hal-01622208 Submitted on 7 Oct 2020 HAL is a multi-disciplinary open access L’archive ouverte pluridisciplinaire HAL, est archive for the deposit and dissemination of sci- destinée au dépôt et à la diffusion de documents entific research documents, whether they are pub- scientifiques de niveau recherche, publiés ou non, lished or not. The documents may come from émanant des établissements d’enseignement et de teaching and research institutions in France or recherche français ou étrangers, des laboratoires abroad, or from public or private research centers. publics ou privés. Simty: generalized SIMT execution on RISC-V Caroline Collange Inria [email protected] ABSTRACT and programming languages to target GPU-like SIMT cores. Be- We present Simty, a massively multi-threaded RISC-V processor side simplifying software layers, the unification of CPU and GPU core that acts as a proof of concept for dynamic inter-thread vector- instruction sets eases prototyping and debugging of parallel pro- ization at the micro-architecture level. Simty runs groups of scalar grams. We have implemented Simty in synthesizable VHDL and threads executing SPMD code in lockstep, and assembles SIMD synthesized it for an FPGA target. instructions dynamically across threads. Unlike existing SIMD or We present our approach to generalizing the SIMT model in SIMT processors like GPUs or vector processors, Simty vector- Section 2, then describe Simty’s microarchitecture is Section 3, and izes scalar general-purpose binaries.