Efficient Algorithms for Clique Problems

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Time Complexity

Chapter 3 Time complexity

Use of time complexity makes it easy to estimate the running time of a program. Performing an accurate calculation of a program’s operation time is a very labour-intensive process (it depends on the compiler and the type of computer or speed of the processor). Therefore, we will not make an accurate measurement; just a measurement of a certain order of magnitude. Complexity can be viewed as the maximum number of primitive operations that a program may execute. Regular operations are single additions, multiplications, assignments etc. We may leave some operations uncounted and concentrate on those that are performed the largest number of times. Such operations are referred to as dominant. The number of dominant operations depends on the specific input data. We usually want to know how the performance time depends on a particular aspect of the data. This is most frequently the data size, but it can also be the size of a square matrix or the value of some input variable.

3.1: Which is the dominant operation?

1 def dominant(n):
2     result = 0
3     for i in xrange(n):
4         result += 1
5     return result

The operation in line 4 is dominant and will be executed n times. The complexity is described in Big-O notation: in this case $O(n)$, linear complexity. The complexity specifies the order of magnitude within which the program will perform its operations. More precisely, in the case of $O(n)$, the program may perform $c \cdot n$ operations, where $c$ is a constant; however, it may not perform $n^2$ operations, since this involves a different order of magnitude of data.
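To make the idea of counting dominant operations concrete, here is a minimal sketch in Python 3 (the excerpt itself uses Python 2's `xrange`). The function names `count_linear` and `count_quadratic` are hypothetical and chosen only for this illustration.

```python
def count_linear(n):
    """Count executions of the dominant operation in a single loop: O(n)."""
    ops = 0
    for _ in range(n):
        ops += 1  # dominant operation, executed n times
    return ops


def count_quadratic(n):
    """Count executions of the dominant operation in a nested loop: O(n^2)."""
    ops = 0
    for _ in range(n):
        for _ in range(n):
            ops += 1  # dominant operation, executed n * n times
    return ops


if __name__ == "__main__":
    for n in (10, 100, 1000):
        print(n, count_linear(n), count_quadratic(n))
```

Multiplying n by 10 multiplies the first count by 10 and the second by 100, which is the difference in order of magnitude that the excerpt describes.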

Quick Sort Algorithm Song Qin Dept

Quick Sort Algorithm. Song Qin, Dept. of Computer Sciences, Florida Institute of Technology, Melbourne, FL 32901

ABSTRACT Given an array with n elements, we want to rearrange them in ascending order. In this paper, we introduce Quick Sort, a divide-and-conquer algorithm to sort an N element array. We evaluate the $O(N \log N)$ time complexity in the best case and $O(N^2)$ in the worst case theoretically. We also introduce a way to approach the best case.

1. INTRODUCTION Search engines rely heavily on sorting algorithms. When you search for a keyword online, the feedback information is brought to you sorted by the importance of the web page. Bubble, Selection and Insertion Sort all have an $O(N^2)$ time complexity that limits their usefulness to a small number of elements, no more than a few thousand data points.

... each iteration. Repeat this on the rest of the unsorted region without the first element. Bubble sort works as follows: keep passing through the list, exchanging adjacent elements if the list is out of order; when no exchanges are required on some pass, the list is sorted. Merge sort [4] has an $O(N \log N)$ time complexity. It divides the array into two subarrays, each with N/2 items. Conquer each subarray by sorting it. Unless the array is sufficiently small (one element left), use recursion to do this. Combine the solutions to the subarrays by merging them into a single sorted array. In Bubble sort, Selection sort and Insertion sort, the $O(N^2)$ time complexity limits the performance when N gets very big.
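The merge sort description in this excerpt (split into two halves, sort each recursively, merge the results) can be sketched as follows. This is an illustrative Python version, not the paper's code; the name `merge_sort` is an assumption.

```python
def merge_sort(items):
    """Return a new sorted list using top-down merge sort."""
    # Base case: zero or one element is already sorted.
    if len(items) <= 1:
        return list(items)

    # Divide: split into two subarrays of about N/2 items each.
    mid = len(items) // 2
    left = merge_sort(items[:mid])
    right = merge_sort(items[mid:])

    # Combine: merge the two sorted halves into one sorted list.
    merged = []
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged
```

Each level of recursion does a linear amount of merging work and there are about log N levels, which is where the O(N log N) bound comes from.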

Extended Branch Decomposition Graphs: Structural Comparison of Scalar Data

Eurographics Conference on Visualization (EuroVis) 2014, Volume 33 (2014), Number 3. H. Carr, P. Rheingans, and H. Schumann (Guest Editors)

Extended Branch Decomposition Graphs: Structural Comparison of Scalar Data. Himangshu Saikia, Hans-Peter Seidel, Tino Weinkauf. Max Planck Institute for Informatics, Saarbrücken, Germany

Abstract We present a method to find repeating topological structures in scalar data sets. More precisely, we compare all subtrees of two merge trees against each other – in an efficient manner exploiting redundancy. This provides pair-wise distances between the topological structures defined by sub/superlevel sets, which can be exploited in several applications such as finding similar structures in the same data set, assessing periodic behavior in time-dependent data, and comparing the topology of two different data sets. To do so, we introduce a novel data structure called the extended branch decomposition graph, which is composed of the branch decompositions of all subtrees of the merge tree. Based on dynamic programming, we provide two highly efficient algorithms for computing and comparing extended branch decomposition graphs. Several applications attest to the utility of our method and its robustness against noise.

1. Introduction Structures repeat in both nature and engineering. An example is the symmetric arrangement of the atoms in molecules such as Benzene. A prime example for a periodic process is the combustion in a car engine where the gas concentration in a

• We introduce the extended branch decomposition graph: a novel data structure that describes the hierarchical decomposition of all subtrees of a join/split tree. We abbreviate it with ‘eBDG’.
• We provide a fast algorithm for computing an eBDG. Typical runtimes are in the order of milliseconds.

Exploiting C-Closure in Kernelization Algorithms for Graph Problems

Exploiting c-Closure in Kernelization Algorithms for Graph Problems

Tomohiro Koana, Technische Universität Berlin, Algorithmics and Computational Complexity, Germany, [email protected]
Christian Komusiewicz, Philipps-Universität Marburg, Fachbereich Mathematik und Informatik, Marburg, Germany, [email protected]
Frank Sommer, Philipps-Universität Marburg, Fachbereich Mathematik und Informatik, Marburg, Germany, [email protected]

Abstract A graph is c-closed if every pair of vertices with at least c common neighbors is adjacent. The c-closure of a graph G is the smallest number such that G is c-closed. Fox et al. [ICALP ’18] defined c-closure and investigated it in the context of clique enumeration. We show that c-closure can be applied in kernelization algorithms for several classic graph problems. We show that Dominating Set admits a kernel of size $k^{O(c)}$, that Induced Matching admits a kernel with $O(c^7 k^8)$ vertices, and that Irredundant Set admits a kernel with $O(c^{5/2} k^3)$ vertices. Our kernelization exploits the fact that c-closed graphs have polynomially-bounded Ramsey numbers, as we show.

2012 ACM Subject Classification Theory of computation → Parameterized complexity and exact algorithms; Theory of computation → Graph algorithms analysis

Keywords and phrases Fixed-parameter tractability, kernelization, c-closure, Dominating Set, Induced Matching, Irredundant Set, Ramsey numbers

Funding Frank Sommer: Supported by the Deutsche Forschungsgemeinschaft (DFG), project MAGZ, KO 3669/4-1.

1 Introduction Parameterized complexity [9, 14] aims at understanding which properties of input data can be used in the design of efficient algorithms for problems that are hard in general. The properties of input data are encapsulated in the notion of a parameter, a numerical value that can be attributed to each input instance I.
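The definition of c-closed graphs in this abstract translates directly into a check over all non-adjacent vertex pairs. The sketch below is only an illustration of the definition, not code from the paper. It assumes a graph stored as a dictionary mapping each vertex to the set of its neighbors; the names `is_c_closed` and `c_closure` are hypothetical, and returning 1 for a graph with no non-adjacent pair is an assumption of this sketch.

```python
from itertools import combinations


def is_c_closed(adj, c):
    """True if every pair with at least c common neighbors is adjacent."""
    return all(
        v in adj[u] or len(adj[u] & adj[v]) < c
        for u, v in combinations(adj, 2)
    )


def c_closure(adj):
    """Smallest c such that the graph is c-closed.

    This is one more than the largest number of common neighbors over
    all non-adjacent pairs (assumed to be 1 if no such pair exists).
    """
    worst = max(
        (len(adj[u] & adj[v])
         for u, v in combinations(adj, 2)
         if v not in adj[u]),
        default=0,
    )
    return worst + 1


# Path a - b - c: the non-adjacent pair (a, c) shares one neighbor, b.
path = {"a": {"b"}, "b": {"a", "c"}, "c": {"b"}}
# 4-cycle: each pair of opposite vertices shares two neighbors.
cycle = {"a": {"b", "d"}, "b": {"a", "c"}, "c": {"b", "d"}, "d": {"a", "c"}}
```

Under these assumptions the path has c-closure 2 and the 4-cycle has c-closure 3. The check is quadratic in the number of vertices, which is enough for small examples.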

3.1 Matchings and Factors: Matchings and Covers

3.1 Matchings and Factors: Matchings and Covers

This copyrighted material is taken from Introduction to Graph Theory, 2nd Ed., by Doug West; and is not for further distribution beyond this course. These slides will be stored in a limited-access location on an IIT server and are not for distribution or use beyond Math 454/553.

Matchings

3.1.1 Definition A matching in a graph G is a set of non-loop edges with no shared endpoints. The vertices incident to the edges of a matching M are saturated by M (M-saturated); the others are unsaturated (M-unsaturated). A perfect matching in a graph is a matching that saturates every vertex.

[Figure: a matching M with M-saturated and M-unsaturated vertices, and a perfect matching]

Contains copyrighted material from Introduction to Graph Theory by Doug West, 2nd Ed. Not for distribution beyond IIT’s Math 454/553.

Perfect Matchings in Complete Bipartite Graphs

The perfect matchings in a complete X,Y-bigraph with |X| = |Y| exactly correspond to the bijections f: X -> Y. Therefore $K_{n,n}$ has n! perfect matchings.

[Figure: $K_{n,n}$ with vertices labeled a to g and 1 to 7]

The complete graph $K_n$ has a perfect matching iff…

Perfect Matchings in Complete Graphs

The complete graph $K_n$ has a perfect matching iff n is even. So instead of $K_n$ consider $K_{2n}$. We count the perfect matchings in $K_{2n}$ by:
(1) Selecting a vertex v (e.g., with the highest label): one choice
(2) Selecting a vertex u to match to v: 2n-1 choices
(3) Selecting a perfect matching on the rest of the vertices.

[Figure: vertices u and v matched, with $K_{2n-2}$ on the remaining vertices]
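The three counting steps above can be turned into a short recursion. The sketch below is an illustration rather than material from the slides: it fixes the last vertex, tries every partner for it, and recurses on the rest. Applying the steps repeatedly gives the product (2n-1)(2n-3)···3·1, which the second function computes directly. The function names are hypothetical.

```python
def count_perfect_matchings_complete(vertices):
    """Count perfect matchings of the complete graph on `vertices`."""
    if not vertices:
        return 1  # the empty matching
    # Step (1): fix one vertex v (here: the last one), a single choice.
    rest = vertices[:-1]
    total = 0
    # Step (2): choose the partner u of v among the remaining vertices.
    for i in range(len(rest)):
        remaining = rest[:i] + rest[i + 1:]
        # Step (3): count perfect matchings on the rest of the vertices.
        total += count_perfect_matchings_complete(remaining)
    return total


def odd_double_factorial(n):
    """Return (2n-1) * (2n-3) * ... * 3 * 1."""
    result = 1
    for k in range(1, n + 1):
        result *= 2 * k - 1
    return result
```

For example, the recursion returns 3 for the complete graph on 4 vertices and 15 on 6 vertices, matching `odd_double_factorial(2)` and `odd_double_factorial(3)`. With an odd number of vertices it returns 0, in line with the statement that a perfect matching exists iff n is even.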

Approximation Algorithms

Lecture 21 Approximation Algorithms

21.1 Overview Suppose we are given an NP-complete problem to solve. Even though (assuming P ≠ NP) we can’t hope for a polynomial-time algorithm that always gets the best solution, can we develop polynomial-time algorithms that always produce a “pretty good” solution? In this lecture we consider such approximation algorithms, for several important problems. Specific topics in this lecture include:
• 2-approximation for vertex cover via greedy matchings.
• 2-approximation for vertex cover via LP rounding.
• Greedy O(log n) approximation for set-cover.
• Approximation algorithms for MAX-SAT.

21.2 Introduction Suppose we are given a problem for which (perhaps because it is NP-complete) we can’t hope for a fast algorithm that always gets the best solution. Can we hope for a fast algorithm that guarantees to get at least a “pretty good” solution? E.g., can we guarantee to find a solution that’s within 10% of optimal? If not that, then how about within a factor of 2 of optimal? Or, anything non-trivial? As seen in the last two lectures, the class of NP-complete problems are all equivalent in the sense that a polynomial-time algorithm to solve any one of them would imply a polynomial-time algorithm to solve all of them (and, moreover, to solve any problem in NP). However, the difficulty of getting a good approximation to these problems varies quite a bit. In this lecture we will examine several important NP-complete problems and look at to what extent we can guarantee to get approximately optimal solutions, and by what algorithms.
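The first topic listed, a 2-approximation for vertex cover via greedy matchings, has a very short standard form: scan the edges, and whenever an edge has neither endpoint in the cover, add both endpoints. The sketch below is one common way to write it and is not taken from the lecture notes; the name `vertex_cover_2approx` and the edge-list input format are assumptions.

```python
def vertex_cover_2approx(edges):
    """Return a vertex cover of size at most twice the optimum.

    The edges whose endpoints get added form a maximal matching. Any
    vertex cover must contain at least one endpoint of each matched
    edge, so taking both endpoints costs at most a factor of 2.
    """
    cover = set()
    for u, v in edges:
        if u not in cover and v not in cover:
            cover.update((u, v))  # edge joins the greedy matching
    return cover


# Path 1 - 2 - 3 - 4: an optimal cover is {2, 3}.
path_edges = [(1, 2), (2, 3), (3, 4)]
cover = vertex_cover_2approx(path_edges)
```

On this path the greedy matching picks the edges (1, 2) and (3, 4), so the returned cover has 4 vertices while the optimum has 2. The factor of 2 is therefore tight for this input order.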

A New Spectral Bound on the Clique Number of Graphs

A New Spectral Bound on the Clique Number of Graphs. Samuel Rota Bulò and Marcello Pelillo, Dipartimento di Informatica - University of Venice - Italy, {srotabul,pelillo}@dsi.unive.it

Abstract. Many computer vision and pattern recognition problems are intimately related to the maximum clique problem. Due to the intractability of this problem, besides the development of heuristics, a research direction consists in trying to find good bounds on the clique number of graphs. This paper introduces a new spectral upper bound on the clique number of graphs, which is obtained by exploiting an invariance of a continuous characterization of the clique number of graphs introduced by Motzkin and Straus. Experimental results on random graphs show the superiority of our bounds over the standard literature.

1 Introduction Many problems in computer vision and pattern recognition can be formulated in terms of finding a completely connected subgraph (i.e. a clique) of a given graph, having largest cardinality. This is called the maximum clique problem (MCP). One popular approach to object recognition, for example, involves matching an input scene against a stored model, each being abstracted in terms of a relational structure [1,2,3,4], and this problem, in turn, can be conveniently transformed into the equivalent problem of finding a maximum clique of the corresponding association graph. This idea was pioneered by Ambler et al. [5] and was later developed by Bolles and Cain [6] as part of their local-feature-focus method. Now, it has become a standard technique in computer vision, and has been employed in such diverse applications as stereo correspondence [7], point pattern matching [8], image sequence analysis [9].
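The excerpt names the Motzkin-Straus characterization without stating it. In its standard form (added here as background, not quoted from the paper) it says that the maximum of $x^T A x$ over the probability simplex equals $1 - 1/\omega(G)$, where $A$ is the adjacency matrix and $\omega(G)$ the clique number. The sketch below only checks the easy direction on a small graph: it finds a maximum clique by brute force and evaluates the quadratic form at the uniform distribution on that clique. All function names are hypothetical.

```python
from fractions import Fraction
from itertools import combinations


def max_clique(adj):
    """Brute-force maximum clique (exponential time; small graphs only)."""
    verts = sorted(adj)
    for k in range(len(verts), 0, -1):
        for cand in combinations(verts, k):
            if all(v in adj[u] for u, v in combinations(cand, 2)):
                return set(cand)
    return set()


def quadratic_form(adj, x):
    """Evaluate x^T A x, summing over ordered pairs of adjacent vertices."""
    return sum(x.get(u, 0) * x.get(v, 0) for u in adj for v in adj[u])


# Triangle a, b, c with a pendant vertex d attached to c.
graph = {"a": {"b", "c"}, "b": {"a", "c"}, "c": {"a", "b", "d"}, "d": {"c"}}
clique = max_clique(graph)
omega = len(clique)
uniform = {v: Fraction(1, omega) for v in clique}
value = quadratic_form(graph, uniform)
```

Here `omega` is 3 and `value` is exactly 2/3, which equals 1 - 1/3. The sketch does not verify that no other point of the simplex does better; that is the content of the theorem.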

Multi-Budgeted Matchings and Matroid Intersection Via Dependent Rounding

Multi-budgeted Matchings and Matroid Intersection via Dependent Rounding. Chandra Chekuri*, Jan Vondrák†, Rico Zenklusen‡

Abstract Motivated by multi-budgeted optimization and other applications, we consider the problem of randomly rounding a fractional solution $x$ in the (non-bipartite graph) matching and matroid intersection polytopes. We show that for any fixed $\delta > 0$, a given point $x$ can be rounded to a random solution $R$ such that $E[\mathbf{1}_R] = (1 - \delta)x$ and any linear function of $x$ satisfies dimension-free Chernoff-Hoeffding concentration bounds (the bounds depend on $\delta$ and the expectation $\mu$). We build on and adapt the swap rounding scheme in our recent work [9] to achieve this result. Our main contribution is a non-trivial martingale based analysis framework to prove the desired concentration bounds. In this paper we describe two applications.

...ing: given a fractional point $x$ in a polytope $P \subset \mathbb{R}^n$, randomly round $x$ to a solution $R$ corresponding to a vertex of $P$. Here $P$ captures the deterministic constraints that we wish the rounding to satisfy, and it is natural to assume that $P$ is an integer polytope (typically a $\{0,1\}$ polytope). Of course the important issue is what properties we need $R$ to satisfy, and this is dictated by the application at hand. A property that is useful in several applications is that $R$ satisfies concentration properties for linear functions of $x$: that is, for any vector $a \in [0,1]^n$, we want the linear function $a(R) = \sum_{i \in R} a_i$ to be concentrated around its expectation. Ideally, we would like to have $E[\mathbf{1}_R] = x$, which would

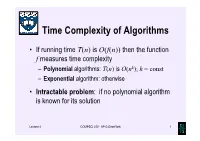

Time Complexity of Algorithms

Time Complexity of Algorithms
• If running time T(n) is O(f(n)) then the function f measures time complexity
  – Polynomial algorithms: T(n) is $O(n^k)$; k = const
  – Exponential algorithm: otherwise
• Intractable problem: if no polynomial algorithm is known for its solution

Lecture 4 COMPSCI 220 - AP G Gimel'farb

Time complexity growth

Number of data items processed per:

| f(n) | 1 minute | 1 day | 1 year | 1 century |
|---|---|---|---|---|
| $n$ | 10 | 14,400 | $5.26 \cdot 10^6$ | $5.26 \cdot 10^8$ |
| $n \log_{10} n$ | 10 | 3,997 | 883,895 | $6.72 \cdot 10^7$ |
| $n^{1.5}$ | 10 | 1,275 | 65,128 | $1.40 \cdot 10^6$ |
| $n^2$ | 10 | 379 | 7,252 | 72,522 |
| $n^3$ | 10 | 112 | 807 | 3,746 |
| $2^n$ | 10 | 20 | 29 | 35 |

Beware exponential complexity
☺ If a linear O(n) algorithm processes 10 items per minute, then it can process 14,400 items per day, 5,260,000 items per year, and 526,000,000 items per century
☻ If an exponential $O(2^n)$ algorithm processes 10 items per minute, then it can process only 20 items per day and 35 items per century...

Big-Oh vs. Actual Running Time
• Example 1: Let algorithms A and B have running times $T_A(n) = 20n$ ms and $T_B(n) = 0.1 n \log_2 n$ ms
• In the “Big-Oh” sense, A is better than B…
• But: on which data volume can A outperform B? $T_A(n) < T_B(n)$ if $20n < 0.1 n \log_2 n$, or $\log_2 n > 200$, that is, when $n > 2^{200} \approx 10^{60}$!
• Thus, in all practical cases B is better than A…

Big-Oh vs.
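The "1 day" column of the growth table can be reproduced with a short calculation. If an algorithm with cost f(n) handles 10 items in one minute, then in m minutes it handles the largest n with f(n) ≤ m · f(10). The sketch below assumes f is increasing and finds that n by doubling followed by binary search; the name `max_items` is hypothetical and the code is not from the slides.

```python
def max_items(f, minutes, base_items=10):
    """Largest n with f(n) <= f(base_items) * minutes, for increasing f."""
    budget = f(base_items) * minutes
    lo = hi = base_items
    # Double hi until it exceeds the budget; lo always stays feasible.
    while f(hi) <= budget:
        lo, hi = hi, hi * 2
    # Binary search with invariant f(lo) <= budget < f(hi).
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if f(mid) <= budget:
            lo = mid
        else:
            hi = mid
    return lo


MINUTES_PER_DAY = 24 * 60  # 1,440

per_day = {
    "n": max_items(lambda n: n, MINUTES_PER_DAY),
    "n^2": max_items(lambda n: n ** 2, MINUTES_PER_DAY),
    "n^3": max_items(lambda n: n ** 3, MINUTES_PER_DAY),
    "2^n": max_items(lambda n: 2 ** n, MINUTES_PER_DAY),
}
```

With 1,440 minutes per day this gives 14,400 for n, 379 for n², 112 for n³ and 20 for 2ⁿ, matching the table. Going from a minute to a day multiplies the workload of the linear algorithm by 1,440 but only doubles that of the exponential one.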

A Short History of Computational Complexity

The Computational Complexity Column by Lance FORTNOW, NEC Laboratories America, 4 Independence Way, Princeton, NJ 08540, USA, [email protected], http://www.neci.nj.nec.com/homepages/fortnow/beatcs

Every third year the Conference on Computational Complexity is held in Europe and this summer the University of Aarhus (Denmark) will host the meeting July 7-10. More details at the conference web page http://www.computationalcomplexity.org

This month we present a historical view of computational complexity written by Steve Homer and myself. This is a preliminary version of a chapter to be included in an upcoming North-Holland Handbook of the History of Mathematical Logic edited by Dirk van Dalen, John Dawson and Aki Kanamori.

A Short History of Computational Complexity

Lance Fortnow, NEC Research Institute, 4 Independence Way, Princeton, NJ 08540
Steve Homer, Computer Science Department, Boston University, 111 Cummington Street, Boston, MA 02215

1 Introduction It all started with a machine. In 1936, Turing developed his theoretical computational model. He based his model on how he perceived mathematicians think. As digital computers were developed in the 40's and 50's, the Turing machine proved itself as the right theoretical model for computation. Quickly though we discovered that the basic Turing machine model fails to account for the amount of time or memory needed by a computer, a critical issue today but even more so in those early days of computing. The key idea to measure time and space as a function of the length of the input came in the early 1960's by Hartmanis and Stearns.

The Complexity of Multivariate Matching Polynomials

The Complexity of Multivariate Matching Polynomials. Ilia Averbouch* and J.A. Makowsky†, Faculty of Computer Science, Israel Institute of Technology, Haifa, Israel, failia,[email protected], February 28, 2007

Abstract We study various versions of the univariate and multivariate matching and rook polynomials. We show that there is a most general multivariate matching polynomial, which is, up to some simple substitutions and multiplication with a prefactor, the original multivariate matching polynomial introduced by C. Heilmann and E. Lieb. We follow here a line of investigation which was very successfully pursued over the years by, among others, W. Tutte, B. Bollobás and O. Riordan, and A. Sokal in studying the chromatic and the Tutte polynomial. We show here that evaluating these polynomials over the reals is #P-hard for all points in $\mathbb{R}^k$ but possibly for an exception set which is semi-algebraic and of dimension strictly less than k. This result is analogous to the characterization due to F. Jaeger, D. Vertigan and D. Welsh (1990) of the points where the Tutte polynomial is hard to evaluate. Our proof, however, builds mainly on the work by M. Dyer and C. Greenhill (2000).

1 Introduction In this paper we study generalizations of the matching and rook polynomials and their complexity. The matching polynomial was originally introduced in [5] as a multivariate polynomial. Some of its general properties, in particular the so called half-plane property, were studied recently in [2]. We follow here a line of investigation which was very successfully pursued over the years by, among others, W. Tutte [15], B.

Sorting Algorithm

Sorting algorithm

In computer science, a sorting algorithm is an algorithm that puts elements of a list in a certain order. The most-used orders are numerical order and lexicographical order. Efficient sorting is important for optimizing the use of other algorithms (such as search and merge algorithms) that require sorted lists to work correctly; it is also often useful for canonicalizing data and for producing human-readable output. More formally, the output must satisfy two conditions:
1. The output is in nondecreasing order (each element is no smaller than the previous element according to the desired total order);
2. The output is a permutation, or reordering, of the input.

Since the dawn of computing, the sorting problem has attracted a great deal of research, perhaps due to the complexity of solving it efficiently despite its simple, familiar statement. For example, bubble sort was analyzed as early as 1956.[1] Although many consider it a solved problem, useful new sorting algorithms are still being invented (for example, library sort was first published in 2004). Sorting algorithms are prevalent in introductory computer science classes, where the abundance of algorithms for the problem provides a gentle introduction to a variety of core algorithm concepts, such as big O notation, divide and conquer algorithms, data structures, randomized algorithms, best, worst and average case analysis, time-space tradeoffs, and lower bounds.

Classification

Sorting algorithms used in computer science are often classified by:
• Computational complexity (worst, average and best behaviour) of element comparisons in terms of the size of the list (n). For typical sorting algorithms good behavior is $O(n \log n)$ and bad behavior is $O(n^2)$.
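The two output conditions stated in this excerpt (nondecreasing order, and being a permutation of the input) can be checked mechanically. The sketch below is a small illustration, not part of the excerpt; the name `is_valid_sort` is hypothetical and the elements are assumed to be hashable and mutually comparable.

```python
from collections import Counter


def is_valid_sort(original, output):
    """Check both conditions a sorting algorithm's output must satisfy."""
    # Condition 1: each element is no smaller than the previous one.
    nondecreasing = all(a <= b for a, b in zip(output, output[1:]))
    # Condition 2: the output is a reordering of the input, i.e. it
    # contains the same elements with the same multiplicities.
    is_permutation = Counter(original) == Counter(output)
    return nondecreasing and is_permutation
```

For instance, `[1, 2, 3]` is a valid sort of `[3, 1, 2]`, while `[1, 2, 2]` fails the permutation condition and `[2, 1, 3]` fails the ordering condition. A checker like this is useful for testing any sorting implementation regardless of which algorithm it uses.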