Introduction to Data Compression the Morgan Kaufmann Series in Multimedia Information and Systems Series Editor, Edward A

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


A Multidimensional Companding Scheme for Source Coding With a Perceptually Relevant Distortion Measure

A MULTIDIMENSIONAL COMPANDING SCHEME FOR SOURCE CODING WITH A PERCEPTUALLY RELEVANT DISTORTION MEASURE

J. Crespo, P. Aguiar, Department of Electrical and Computer Engineering, Instituto Superior Técnico, Lisboa, Portugal. R. Heusdens, Department of Mediamatics, Technische Universiteit Delft, Delft, The Netherlands.

ABSTRACT

In this paper, we develop a multidimensional companding scheme for the perceptual distortion measure by S. van de Par [1]. The scheme is asymptotically optimal in the sense that it has a vanishing rate-loss with increasing vector dimension. The compressor operates in the frequency domain: in its simplest form, it pointwise multiplies the Discrete Fourier Transform (DFT) of the windowed input signal by the square-root of the inverse of the masking threshold, and then goes back into the time domain with the inverse DFT. The expander is based on numerical methods: we do one iteration in a fixed-point equation, and then we fine-tune the result using Broyden's method.

… coding mechanisms based on quantization, where less perceptually important information is quantized more roughly and vice-versa, and lossless coding of the symbols emitted by the quantizer to reduce statistical redundancy. In the quantization process of the (en)coder, it is desirable to quantize the information extracted from the signal in a rate-distortion optimal sense, i.e., in a way that minimizes the perceptual distortion experienced by the user subject to the constraint of a certain available bitrate.

Due to its mathematical tractability, the Mean Square Error (MSE) is the elected choice for the distortion measure in many coding applications. In audio coding, however, the
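The compressor step described in the abstract above (DFT of the windowed frame, pointwise scaling by the square root of the inverse masking threshold, inverse DFT) might be sketched as follows. This is only an illustration under stated assumptions: the function names `dft`, `idft`, and `compress_frame`, the Hann window, and the flat placeholder masking threshold are all hypothetical and not taken from the paper. The expander (fixed-point iteration followed by Broyden's method) is not shown.

```python
import cmath
import math


def dft(x):
    """Naive O(N^2) discrete Fourier transform."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n)]


def idft(spectrum):
    """Inverse of dft()."""
    n = len(spectrum)
    return [sum(spectrum[k] * cmath.exp(2j * math.pi * k * t / n)
                for k in range(n)) / n
            for t in range(n)]


def compress_frame(frame, window, mask):
    """Scale each DFT bin of the windowed frame by 1 / sqrt(mask[k]).

    `mask` is an assumed per-bin masking threshold (positive values). For a
    real-valued result it should be symmetric: mask[k] == mask[N - k].
    """
    windowed = [s * w for s, w in zip(frame, window)]
    spectrum = dft(windowed)
    scaled = [value / math.sqrt(m) for value, m in zip(spectrum, mask)]
    return [v.real for v in idft(scaled)]


n = 8
frame = [math.sin(2 * math.pi * t / n) for t in range(n)]
window = [0.5 - 0.5 * math.cos(2 * math.pi * t / n) for t in range(n)]  # Hann
flat_mask = [4.0] * n  # placeholder threshold, not a psychoacoustic model
compressed = compress_frame(frame, window, flat_mask)
```

With a flat threshold the compressor reduces to a constant gain of `1 / sqrt(4.0)`, which makes the sketch easy to sanity-check; a frequency-dependent threshold would attenuate strongly masked bins more than weakly masked ones.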

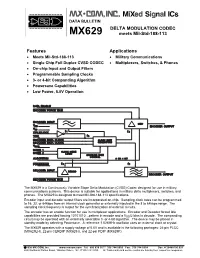

DELTA MODULATION CODEC Meets Mil-Std-188-113 Features

DATA BULLETIN

DELTA MODULATION CODEC MX629 meets Mil-Std-188-113

Features: Meets Mil-Std-188-113; Single Chip Full Duplex CVSD CODEC; On-chip Input and Output Filters; Programmable Sampling Clocks; 3- or 4-bit Companding Algorithm; Powersave Capabilities; Low Power, 5.0V Operation.

Applications: Military Communications; Multiplexers, Switches, & Phones.

The MX629 is a Continuously Variable Slope Delta Modulation (CVSD) Codec designed for use in military communications systems. This device is suitable for applications in military delta multiplexers, switches, and phones. The MX629 is designed to meet Mil-Std-188-113 specifications. Encoder input and decoder output filters are incorporated on-chip. Sampling clock rates can be programmed to 16, 32, or 64kbps from an internal clock generator or externally injected in the 8 to 64kbps range. The sampling clock frequency is output for the synchronization of external circuits. The encoder has an enable function for use in multiplexer applications. Encoder and Decoder forced idle capabilities are provided, forcing a 10101010… pattern in encode and a VDD/2 bias in decode. The companding circuit may be operated with an externally selectable 3- or 4-bit algorithm. The device may be placed in standby mode by selecting Powersave. A reference 1.024MHz oscillator uses an external clock or crystal. The MX629 operates with a supply voltage of 5.0V and is available in the following packages: 24-pin PLCC (MX629LH), 22-pin CERDIP (MX629J), and 22-pin PDIP (MX629P).

1998 MX-COM, Inc. www.mxcom.com Tel: 800 638 5577, 336 744 5050 Fax: 336 744 5054 Doc. # 20480190.001 4800 Bethania Station Road, Winston-Salem, NC 27105-1201 USA. All Trademarks and service marks are held by their respective companies.
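To illustrate the general idea behind CVSD with a 3- or 4-bit companding rule, here is a minimal software sketch. It is a textbook-style model and not the MX629's actual circuit: the class `CvsdState`, the functions `cvsd_encode` and `cvsd_decode`, and every numeric parameter (step limits, decay, boost, leak) are hypothetical values chosen for illustration. The step size grows when the last `run_length` bits are identical (slope overload) and decays otherwise.

```python
class CvsdState:
    """Shared integrator and step-size logic for encoder and decoder."""

    def __init__(self, run_length=3, min_step=0.01, max_step=0.5,
                 step_decay=0.98, step_boost=0.02, leak=0.99):
        self.run_length = run_length      # 3- or 4-bit companding rule
        self.min_step = min_step
        self.max_step = max_step
        self.step_decay = step_decay
        self.step_boost = step_boost
        self.leak = leak                  # leaky integrator coefficient
        self.step = min_step
        self.estimate = 0.0
        self.recent = []

    def update(self, bit):
        """Consume one bit and return the new reconstructed sample."""
        self.recent = (self.recent + [bit])[-self.run_length:]
        run_detected = (len(self.recent) == self.run_length
                        and len(set(self.recent)) == 1)
        if run_detected:
            self.step = min(self.step + self.step_boost, self.max_step)
        else:
            self.step = max(self.step * self.step_decay, self.min_step)
        delta = self.step if bit else -self.step
        self.estimate = self.leak * self.estimate + delta
        return self.estimate


def cvsd_encode(samples, **params):
    """Emit 1 when the input is at or above the running estimate, else 0."""
    state = CvsdState(**params)
    bits = []
    for sample in samples:
        bit = 1 if sample >= state.estimate else 0
        bits.append(bit)
        state.update(bit)
    return bits


def cvsd_decode(bits, **params):
    """Rebuild the waveform by replaying the same state updates."""
    state = CvsdState(**params)
    return [state.update(bit) for bit in bits]
```

Because encoder and decoder run the same state machine on the same bit stream, no step-size information needs to be transmitted. In this model a silent input produces an alternating bit pattern, similar in spirit to the idle pattern the bulletin mentions.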

End-to-End Enterprise Encryption: A Look at SecureZIP® Technology

End-to-End Enterprise Encryption: A Look at SecureZIP® Technology

TECHNICAL WHITE PAPER WP 700.xxxx

Table of Contents: SecureZIP Executive Summary; SecureZIP: The Next Generation of ZIP; PKZIP: The Foundation for SecureZIP; Implementation of ZIP Encryption; Hybrid Cryptosystem; Cryptographic Calculation Sources; Digital Signing; In Step with the Data Protection Market’s Needs; Conclusion.

Every day sensitive data is exchanged within your organization, both internally and with external partners. Personal health & insurance data of your employees is shared between your HR department and outside insurance carriers. Customer PII (Personally Identifiable Information) is transferred from your corporate headquarters to various offices around the world. Payment transaction data flows between your store locations and your payments processor. All of these instances involve sensitive data and regulated information that must be exchanged between systems, locations, and partners; a breach of any of them could lead to irreparable damage to your reputation and revenue.

Organizations today must adopt a means for mitigating the internal and external risks of data breach and compromise. The required solution must support the exchange of data across operating systems to account for both the diversity of your own infrastructure and the unknown infrastructures of your customers, partners, and vendors. Moreover, that solution must integrate naturally into your existing workflows to keep operational cost and impact to a minimum while still protecting data end-to-end.

The Basic Principles of Data Compression

The Basic Principles of Data Compression

Author: Conrad Chung, 2BrightSparks

Introduction

Internet users who download or upload files from/to the web, or use email to send or receive attachments, will most likely have encountered files in compressed format. In this topic we will cover how compression works, the advantages and disadvantages of compression, as well as types of compression.

What is Compression?

Compression is the process of encoding data more efficiently to achieve a reduction in file size. One type of compression available is referred to as lossless compression. This means the compressed file will be restored exactly to its original state with no loss of data during the decompression process. This is essential to data compression as the file would be corrupted and unusable should data be lost. Another compression category, which will not be covered in this article, is “lossy” compression, often used in multimedia files for music and images, where data is discarded.

Lossless compression algorithms use statistical modeling techniques to reduce repetitive information in a file. Some of the methods may include removal of spacing characters, representing a string of repeated characters with a single character, or replacing recurring characters with smaller bit sequences.

Advantages/Disadvantages of Compression

Compression of files offers many advantages. When compressed, the quantity of bits used to store the information is reduced. Files that are smaller in size will result in shorter transmission times when they are transferred on the Internet. Compressed files also take up less storage space. File compression can zip up several small files into a single file for more convenient email transmission.
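One of the lossless methods mentioned above, representing a string of repeated characters with a single character, is commonly known as run-length encoding. A minimal sketch follows; the function names `rle_encode` and `rle_decode` and the choice of (character, count) pairs as the output format are assumptions made for illustration, not something the article specifies.

```python
from itertools import groupby


def rle_encode(text):
    """Collapse each run of identical characters into (character, count)."""
    return [(ch, len(list(run))) for ch, run in groupby(text)]


def rle_decode(pairs):
    """Expand (character, count) pairs back into the original string."""
    return "".join(ch * count for ch, count in pairs)


encoded = rle_encode("aaabccdddd")
restored = rle_decode(encoded)
```

Decoding reproduces the input exactly, which is what makes the scheme lossless. It only saves space when the data actually contains long runs; text with few repeats can grow instead of shrink.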

A Practical Approach to Spatiotemporal Data Compression

A Practical Approach to Spatiotemporal Data Compression

Niall H. Robinson, Rachel Prudden & Alberto Arribas, Informatics Lab, Met Office, Exeter, UK. arXiv:1604.03688v2 [cs.MM] 27 Apr 2016

Datasets representing the world around us are becoming ever more unwieldy as data volumes grow. This is largely due to increased measurement and modelling resolution, but the problem is often exacerbated when data are stored at spuriously high precisions. In an effort to facilitate analysis of these datasets, computationally intensive calculations are increasingly being performed on specialised remote servers before the reduced data are transferred to the consumer. Due to bandwidth limitations, this often means data are displayed as simple 2D data visualisations, such as scatter plots or images. We present here a novel way to efficiently encode and transmit 4D data fields on-demand so that they can be locally visualised and interrogated. This nascent “4D video” format allows us to more flexibly move the boundary between data server and consumer client. However, it has applications beyond purely scientific visualisation, in the transmission of data to virtual and augmented reality.

With the rise of high resolution environmental measurements and simulation, extremely large scientific datasets are becoming increasingly ubiquitous. The scientific community is in the process of learning how to efficiently make use of these unwieldy datasets. Increasingly, people are interacting with this data via relatively thin clients, with data analysis and storage being managed by a remote server. The web browser is emerging as a useful interface which allows intensive operations to be performed on a remote bespoke analysis server, but with the resultant information visualised and interrogated locally on the client [1, 2].

(A/V Codecs) REDCODE RAW (.R3D) ARRIRAW

What is a Codec? Codec is a portmanteau of either "Compressor-Decompressor" or "Coder-Decoder," which describes a device or program capable of performing transformations on a data stream or signal. Codecs encode a stream or signal for transmission, storage or encryption and decode it for viewing or editing. Codecs are often used in videoconferencing and streaming media solutions. A video codec converts analog video signals from a video camera into digital signals for transmission. It then converts the digital signals back to analog for display. An audio codec converts analog audio signals from a microphone into digital signals for transmission. It then converts the digital signals back to analog for playing. The raw encoded form of audio and video data is often called essence, to distinguish it from the metadata information that together make up the information content of the stream and any "wrapper" data that is then added to aid access to or improve the robustness of the stream. Most codecs are lossy, in order to get a reasonably small file size. There are lossless codecs as well, but for most purposes the almost imperceptible increase in quality is not worth the considerable increase in data size. The main exception is if the data will undergo more processing in the future, in which case the repeated lossy encoding would damage the eventual quality too much. Many multimedia data streams need to contain both audio and video data, and often some form of metadata that permits synchronization of the audio and video. Each of these three streams may be handled by different programs, processes, or hardware; but for the multimedia data stream to be useful in stored or transmitted form, they must be encapsulated together in a container format. -

Digital Communication Systems 2.2 Optimal Source Coding

Digital Communication Systems EES 452. Asst. Prof. Dr. Prapun Suksompong.

2. Source Coding. 2.2 Optimal Source Coding: Huffman Coding: Origin, Recipe, MATLAB Implementation.

Examples of Prefix Codes: Nonsingular Fixed-Length Code; Shannon–Fano code; Huffman Code.

Prof. Robert Fano (1917-2016), Shannon Award (1976).

Shannon–Fano Code: Proposed in Shannon’s “A Mathematical Theory of Communication” in 1948. The method was attributed to Fano, who later published it as a technical report: Fano, R.M. (1949). “The transmission of information”. Technical Report No. 65. Cambridge (Mass.), USA: Research Laboratory of Electronics at MIT. Should not be confused with Shannon coding, the coding method used to prove Shannon's noiseless coding theorem, or with Shannon–Fano–Elias coding (also known as Elias coding), the precursor to arithmetic coding.

Claude E. Shannon Award: Claude E. Shannon (1972); Elwyn R. Berlekamp (1993); Sergio Verdu (2007); David S. Slepian (1974); Aaron D. Wyner (1994); Robert M. Gray (2008); Robert M. Fano (1976); G. David Forney, Jr. (1995); Jorma Rissanen (2009); Peter Elias (1977); Imre Csiszár (1996); Te Sun Han (2010); Mark S. Pinsker (1978); Jacob Ziv (1997); Shlomo Shamai (Shitz) (2011); Jacob Wolfowitz (1979); Neil J. A. Sloane (1998); Abbas El Gamal (2012); W. Wesley Peterson (1981); Tadao Kasami (1999); Katalin Marton (2013); Irving S. Reed (1982); Thomas Kailath (2000); János Körner (2014); Robert G. Gallager (1983); Jack Keil Wolf (2001); Arthur Robert Calderbank (2015); Solomon W. Golomb (1985); Toby Berger (2002); Alexander S. Holevo (2016); William L. Root (1986); Lloyd R. Welch (2003); David Tse (2017); James L.
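The Huffman recipe named in the lecture outline above (repeatedly merge the two least probable symbols) can be sketched in a few lines. The lecture itself refers to a MATLAB implementation; the Python version below is an independent illustration, and the function name `huffman_code` and the sample weights are assumptions, not material from the slides.

```python
import heapq


def huffman_code(weights):
    """Return {symbol: bitstring} for a dict mapping symbol -> weight."""
    if len(weights) == 1:
        # A single symbol still needs one bit to be representable.
        return {next(iter(weights)): "0"}
    # Heap entries are (weight, tie_breaker, {symbol: partial_code}).
    # The unique tie_breaker keeps the dicts from ever being compared.
    heap = [(w, i, {sym: ""}) for i, (sym, w) in enumerate(weights.items())]
    heapq.heapify(heap)
    next_id = len(heap)
    while len(heap) > 1:
        w0, _, codes0 = heapq.heappop(heap)   # least probable subtree
        w1, _, codes1 = heapq.heappop(heap)   # second least probable
        merged = {s: "0" + c for s, c in codes0.items()}
        merged.update({s: "1" + c for s, c in codes1.items()})
        heapq.heappush(heap, (w0 + w1, next_id, merged))
        next_id += 1
    return heap[0][2]


code = huffman_code({"a": 5, "b": 2, "c": 1, "d": 1})
```

More frequent symbols end up with shorter codewords, and no codeword is a prefix of another, so a concatenated bit stream can be decoded without separators. The exact bit patterns depend on how ties are broken; only the codeword lengths are determined by the weights in this example.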

Multimedia Compression Techniques for Streaming

International Journal of Innovative Technology and Exploring Engineering (IJITEE) ISSN: 2278-3075, Volume-8 Issue-12, October 2019

Multimedia Compression Techniques for Streaming

Preethal Rao, Krishna Prakasha K, Vasundhara Acharya

Abstract: With the growing popularity of streaming content, streaming platforms have emerged that offer content in resolutions of 4k, 2k, HD etc. Some regions of the world face terrible network reception. Delivering content and a pleasant viewing experience to the users of such locations becomes a challenge. Audio/video streaming at available network speeds is just not feasible for people at those locations. The only way is to reduce the data footprint of the concerned audio/video without compromising the quality. For this purpose, there exist algorithms and techniques that attempt to realize the same. Fortunately, the field of compression is an active one when it comes to content delivery. With a lot of algorithms in play, which one actually delivers content while putting less strain on the users' network bandwidth? This paper carries out an extensive analysis of present popular algorithms to come to the conclusion of the best algorithm for streaming data.

… most of the audio codecs like MP3, AAC etc., are lossy as audio files are originally small in size and thus need not have more compression. In the lossy technique, the file size will be reduced to the maximum possible extent and thus quality might be compromised more when compared to the lossless technique. The popular codecs like MPEG-2, H.264, H.265 etc., make use of this. FLAC and ALAC are some audio codecs which use the lossless technique for compression of large audio files. The goal of this paper is to identify existing techniques in audio-video compression for transmission and carry out a comparative analysis of the techniques based on certain parameters. The side outcome would be a program that would stream the audio/video file of our choice while the main outcome is finding out the compression technique that performs the best

Steganography and Vulnerabilities in Popular Archives Formats.| Nyxengine Nyx.Reversinglabs.Com

Hiding in the Familiar: Steganography and Vulnerabilities in Popular Archives Formats. | NyxEngine nyx.reversinglabs.com

Contents: Introduction to NyxEngine; Introduction to ZIP file format; Introduction to steganography in ZIP archives; Steganography and file malformation security impacts; References and tools.

Introduction to NyxEngine

Steganography is the art and science of writing hidden messages in such a way that no one, apart from the sender and intended recipient, suspects the existence of the message, a form of security through obscurity. When it comes to digital steganography no stone should be left unturned in the search for viable hidden data. Although digital steganography is commonly used to hide data inside multimedia files, a similar approach can be used to hide data in archives as well. Steganography imposes the following data hiding rule: Data must be hidden in such a fashion that the user has no clue about the hidden message or file's existence. This can be achieved by

MC14SM5567 PCM Codec-Filter

Product Preview. Freescale Semiconductor, Inc. MC14SM5567/D Rev. 0, 4/2002

MC14SM5567 PCM Codec-Filter (DW SUFFIX, SOG PACKAGE, CASE 751D)

The MC14SM5567 is a per channel PCM Codec-Filter, designed to operate in both synchronous and asynchronous applications. This device performs the voice digitization and reconstruction as well as the band limiting and smoothing required for (A-Law) PCM systems.

This device has an input operational amplifier whose output is the input to the encoder section. The encoder section immediately low-pass filters the analog signal with an RC filter to eliminate very-high-frequency noise from being modulated down to the pass band by the switched capacitor filter. From the active R-C filter, the analog signal is converted to a differential signal. From this point, all analog signal processing is done differentially. This allows processing of an analog signal that is twice the amplitude allowed by a single-ended design, which reduces the significance of noise to both the inverted and non-inverted signal paths. Another advantage of this differential design is that noise injected via the power supplies is a common mode signal that is cancelled when the inverted and non-inverted signals are recombined. This dramatically improves the power supply rejection ratio.

After the differential converter, a differential switched capacitor filter band passes the analog signal from 200 Hz to 3400 Hz before the signal is digitized by the differential compressing A/D converter. The decoder accepts PCM data and expands it using a differential D/A converter. The output of the D/A is low-pass filtered at 3400 Hz and sinX/X compensated by a differential switched capacitor filter.
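The compressing A/D and expanding D/A stages described above follow the A-law companding characteristic. The sketch below shows the continuous A-law curve and its inverse on samples normalized to [-1, 1]. It is an illustration only: a real codec such as this one uses a segmented 8-bit approximation of the curve rather than floating-point math, and the function names `alaw_compress` and `alaw_expand` are hypothetical. The value A = 87.6 is the one commonly quoted for A-law.

```python
import math

A_LAW = 87.6  # commonly quoted A-law parameter (assumed here)


def alaw_compress(x, a=A_LAW):
    """Map a normalized sample x in [-1, 1] through the A-law curve."""
    ax = abs(x)
    if ax < 1.0 / a:
        y = a * ax / (1.0 + math.log(a))                     # linear segment
    else:
        y = (1.0 + math.log(a * ax)) / (1.0 + math.log(a))   # log segment
    return math.copysign(y, x)


def alaw_expand(y, a=A_LAW):
    """Inverse of alaw_compress for y in [-1, 1]."""
    ay = abs(y)
    if ay < 1.0 / (1.0 + math.log(a)):
        x = ay * (1.0 + math.log(a)) / a
    else:
        x = math.exp(ay * (1.0 + math.log(a)) - 1.0) / a
    return math.copysign(x, y)


quiet = alaw_compress(0.01)   # small inputs are boosted before quantization
loud = alaw_compress(0.9)     # large inputs are compressed toward 1.0
```

Boosting quiet samples before uniform quantization gives them proportionally finer resolution, which is why companded 8-bit PCM can cover a dynamic range that would otherwise need more bits.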

Principles of Communications ECS 332

Principles of Communications ECS 332. Asst. Prof. Dr. Prapun Suksompong (ผศ.ดร.ประพันธ์ สุขสมปอง).

1. Intro to Communication Systems. Office Hours: Check Google Calendar on the course website. Dr.Prapun’s Office: 6th floor of Sirindhralai building, BKD.

Remark 1: If the downloaded file crashed your device/browser, try another one posted on the course website:

Remark 2: There are also three more sections from the Appendices of the lecture notes:

Shannon's insight: “The fundamental problem of communication is that of reproducing at one point either exactly or approximately a message selected at another point.” Shannon, Claude. A Mathematical Theory Of Communication. (1948)

Shannon: Father of the Info. Age. Documentary co-produced by the Jacobs School, UCSD-TV, and the California Institute for Telecommunications and Information Technology. [http://www.uctv.tv/shows/Claude-Shannon-Father-of-the-Information-Age-6090] [http://www.youtube.com/watch?v=z2Whj_nL-x8]

C. E. Shannon (1916-2001): “Hello. I'm Claude Shannon, a mathematician here at the Bell Telephone Laboratories.” He didn't create the compact disc, the fax machine, digital wireless telephones or mp3 files, but in 1948 Claude Shannon paved the way for all of them with the basic theory underlying digital communications and storage. He called it information theory.

C. E. Shannon (1916-2001): https://www.youtube.com/watch?v=47ag2sXRDeU

C. E. Shannon (1916-2001): One of the most influential minds of the 20th century, yet when he died on February 24, 2001, Shannon was virtually unknown to the public at large.

C.

The Mizoram Gazette EXTRA ORDINARY Published by Authority RNI No

Ex-59/2012. The Mizoram Gazette EXTRA ORDINARY Published by Authority. RNI No. 27009/1973. Postal Regn. No. NE-313(MZ) 2006-2008. Re. 1/- per page. VOL - XLI Aizawl, Thursday 9.2.2012 Magha 20, S.E. 1933, Issue No. 59

NOTIFICATION No.A.45011/1/2010-P&AR(GSW), the 3rd February, 2012. In exercise of the powers conferred by the proviso to Article 309 of the Constitution of India, the Governor of Mizoram is pleased to make the following Regulations relating to the Mizoram Civil Services (Combined Competitive) Examinations, namely:-

1. SHORT TITLE AND COMMENCEMENT: (i) These Regulations may be called the Mizoram Civil Services (Combined Competitive Examination) Regulations, 2011. (ii) They shall come into force from the date of their publication in the Mizoram Gazette. (iii) These Regulations shall cover recruitment examination to the Junior Grade of the Mizoram Civil Service (MCS), the Mizoram Police Service (MPS), the Mizoram Finance & Accounts Service (MF&AS) and the Mizoram Information Service (MIS).

2. DEFINITIONS: In these regulations, unless the context otherwise requires:- (i) ‘Constitution’ means the Constitution of India; (ii) ‘Commission’ means the Mizoram Public Service Commission; (iii) ‘Examination’ means a Combined Competitive Examination for recruitment to the Junior Grade of MCS, MPS, MFAS and MIS; (iv) ‘Government’ means the State Government of Mizoram; (v) ‘Governor’ means the Governor of Mizoram; (vi) ‘List’ means the list of successful candidates in the written examination and selected candidates prepared by the Commission