Uncooperative Spacecraft Pose Estimation Using Monocular Monochromatic Images

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Relative Navigation Light Detection and Ranging (LIDAR) Sensor Development Test Objective (DTO) Performance Verification

NASA/TM-2013-217992 NESC-RP-11-00753 Relative Navigation Light Detection and Ranging (LIDAR) Sensor Development Test Objective (DTO) Performance Verification Cornelius J. Dennehy/NESC Langley Research Center, Hampton, Virginia May 2013 NASA STI Program ... in Profile Since its founding, NASA has been dedicated to the advancement of aeronautics and space science. The NASA scientific and technical information (STI) program plays a key part in helping NASA maintain this important role. The NASA STI program operates under the auspices of the Agency Chief Information Officer. It collects, organizes, provides for archiving, and disseminates NASA’s STI. The NASA STI program provides access to the NASA Aeronautics and Space Database and its public interface, the NASA Technical Report Server, thus providing one of the largest collections of aeronautical and space science STI in the world. Results are published in both non-NASA channels and by NASA in the NASA STI Report Series, which includes the following report types: TECHNICAL PUBLICATION. Reports of completed research or a major significant phase of research that present the results of NASA Programs and include extensive data or theoretical analysis. CONFERENCE PUBLICATION. Collected papers from scientific and technical conferences, symposia, seminars, or other meetings sponsored or co-sponsored by NASA. SPECIAL PUBLICATION. Scientific, technical, or historical information from NASA programs, projects, and missions, often concerned with subjects having substantial public interest. TECHNICAL TRANSLATION. English-language translations of foreign scientific and technical material pertinent to NASA’s mission. Specialized services also include organizing and publishing research results, distributing specialized research announcements and feeds, providing information desk and personal search support, and enabling data exchange services. For more information about the NASA STI -

STS-135: the Final Mission Dedicated to the Courageous Men and Women Who Have Devoted Their Lives to the Space Shuttle Program and the Pursuit of Space Exploration

National Aeronautics and Space Administration STS-135: The Final Mission Dedicated to the courageous men and women who have devoted their lives to the Space Shuttle Program and the pursuit of space exploration PRESS KIT/JULY 2011 www.nasa.gov CONTENTS: SPACE SHUTTLE HISTORY; INTRODUCTION; SPACE SHUTTLE CONCEPT AND DEVELOPMENT; THE SPACE SHUTTLE ERA BEGINS; NASA REBOUNDS INTO SPACE; FROM MIR TO THE INTERNATIONAL SPACE STATION; STATION ASSEMBLY COMPLETED AFTER COLUMBIA; MISSION CONTROL ROSES EXPRESS THANKS, SUPPORT; SPACE SHUTTLE PROGRAM’S KEY STATISTICS (THRU STS-134); THE ORBITER FLEET -

Analysis and Definition of the BAT-ME (BATonomous Moon Cave Explorer) Mission

DEGREE PROJECT IN VEHICLE ENGINEERING, SECOND CYCLE, 30 CREDITS STOCKHOLM, SWEDEN 2019 Analysis and Definition of the BAT-ME (BATonomous Moon cave Explorer) Mission Analys och bestämning av BAT-ME (BATonomous Moon cave Explorer) missionen ALEXANDRU CAMIL MURESAN KTH ROYAL INSTITUTE OF TECHNOLOGY SCHOOL OF ENGINEERING SCIENCES Analysis and Definition of the BAT-ME (BATonomous Moon cave Explorer) Mission In Collaboration with Alexandru Camil Muresan KTH Supervisor: Christer Fuglesang Industrial Supervisor: Pau Mallol Master of Science Thesis, Aerospace Engineering, Department of Vehicle Engineering, KTH KTH ROYAL INSTITUTE OF TECHNOLOGY Abstract Humanity has always wanted to explore the world we live in and answer different questions about our universe. After the International Space Station ends its service, one possible next step could be a Moon Outpost: a convenient location for research, astronaut training and technological development that would enable long-duration space. This location can be inside one of the presumed lava tubes that should be present under the surface but would first need to be inspected, possibly by a machine capable of capturing and relaying a map to a team on Earth. In this report the past and future Moon base missions will be summarized, considering feasible outpost scenarios from space companies or agencies and their prospected manned budget. Potential mission profiles, objectives, requirements and constraints of the BATonomous Moon cave Explorer (BAT-ME) mission will be discussed and defined. Vehicle and mission concept will be addressed, comparing and presenting possible propulsion or locomotion approaches inside the lava tube. The Inkonova “Batonomous™” system can provide Simultaneous Localization And Mapping (SLAM) and relay the created maps, and it can be easily integrated on any kind of vehicle that would function in a real-life scenario. -

International Space Station Benefits for Humanity, 3rd Edition

International Space Station Benefits for Humanity 3rd Edition This book was developed collaboratively by the members of the International Space Station (ISS) Program Science Forum (PSF), which includes the National Aeronautics and Space Administration (NASA), Canadian Space Agency (CSA), European Space Agency (ESA), Japan Aerospace Exploration Agency (JAXA), State Space Corporation ROSCOSMOS (ROSCOSMOS), and the Italian Space Agency (ASI). NP-2018-06-013-JSC Acknowledgments A Product of the International Space Station Program Science Forum Executive Editors: Julie Robinson, Kirt Costello Managing Editor: David Brady Section Editors: Tara Ruttley, Bryan Dansberry, William Stefanov, Tracy Parr-Thumm, Michael Read Cover Designer: Erik Lopez Technical Editor: Susan Breeden Graphic Designer: Cynthia Bush National Aeronautics and Space Administration: Julie Robinson, Kirt Costello, Pete Hasbrook, David Brady, Tara Ruttley, Bryan Dansberry, William Stefanov, Shoyeb ‘Sunny’ Panjwani, Alex Macdonald, Michael Read, Ousmane Diallo, Tracy Thumm, Jenny Howard, Melissa Gaskill, Judy Tate-Brown Canadian Space Agency: Luchino Cohen, Isabelle Marcil, Sara Millington-Veloza, David Haight, Louise Beauchamp European Space Agency: Andreas Schoen, Jennifer Ngo-Anh, Jon Weems, Eric Istasse, Jason Hatton, Stefaan De Mey Japan Aerospace Exploration Agency: Masaki Shirakawa, Kazuo Umezawa, Sakiko Kamesaki, Sayaka Umemura, Yoko Kitami State Space Corporation ROSCOSMOS: Georgy Karabadzhak, Vasily Savinkov, Elena Lavrenko, Igor Sorokin, Natalya Zhukova, Natalia Biryukova, -

A Survey of LIDAR Technology and Its Use in Spacecraft Relative Navigation

https://ntrs.nasa.gov/search.jsp?R=20140000616 2019-08-29T15:23:06+00:00Z Provided by NASA Technical Reports Server A Survey of LIDAR Technology and its Use in Spacecraft Relative Navigation John A. Christian, West Virginia University, Morgantown, WV 26506 Scott Cryan, NASA Johnson Space Center, Houston, TX 77058 This paper provides a survey of modern LIght Detection And Ranging (LIDAR) sensors from a perspective of how they can be used for spacecraft relative navigation. In addition to LIDAR technology commonly used in space applications today (e.g. scanning, flash), this paper reviews emerging LIDAR technologies gaining traction in other non-aerospace fields. The discussion will include an overview of sensor operating principles and specific pros/cons for each type of LIDAR. This paper provides a comprehensive review of LIDAR technology as applied specifically to spacecraft relative navigation. I. Introduction The problem of orbital rendezvous and docking has been a consistent challenge for complex space missions since before the Gemini 8 spacecraft performed the first successful on-orbit docking of two spacecraft in 1966. Over the years, a great deal of effort has been devoted to advancing technology associated with all aspects of the rendezvous, proximity operations, and docking (RPOD) flight phase. After years of perfecting the art of crewed rendezvous with the Gemini, Apollo, and Space Shuttle programs, NASA began investigating the problem of autonomous rendezvous and docking (AR&D) to support a host of different mission applications. Some of these applications include autonomous resupply of the International Space Station (ISS), robotic servicing/refueling of existing orbital assets, and on-orbit assembly [1]. The push towards a robust AR&D capability has led to an intensified interest in a number of different sensors capable of providing insight into the relative state of two spacecraft. -

COTS 3D Camera for On-Orbit Proximity Operations Demonstration

33rd Space Symposium, Technical Track, Colorado Springs, Colorado, United States of America Presented on April 3, 2017 COTS 3D CAMERA FOR ON-ORBIT PROXIMITY OPERATIONS DEMONSTRATION Edward Hanlon, United States Naval Academy George Piper, United States Naval Academy ABSTRACT Space exploration and utilization trends demand efficient, reliable and autonomous on-orbit rendezvous and docking processes. Current rendezvous systems use heavy, power-intensive scanning radar, or rely on transmitters/beacons from the host spacecraft. A possible alternative to these bulky legacy systems presents itself with the advent of highly efficient 3D cameras. These cameras, used as an input device for consumer electronics, provide an instantaneous 3D picture of a target without a complex mechanical scanning system. They are exceptionally compact and require little power, making them ideal for space operations. Researchers at the United States Naval Academy are utilizing a 3D camera as an active ranging solution for on-orbit rendezvous and docking with targets that have not been embedded with retro-reflectors or similar visual aid devices. As the accuracy requirements of relative attitude knowledge do not change based on the size of the platform, tests can be completed using low-cost cube satellites. The research has two stages: 1) terrestrial testing, involving systematic parameterization of camera performance; and 2) on-orbit demonstration, expected in Spring 2018. A representative commercial off-the-shelf camera, the Intel R200, was selected to serve as a demonstration unit. In the first phase, the camera’s detection capability was tested on a variety of representative objects in a space-like environment including eclipse, direct simulated sunlight, and “off-axis” sunlight. -

A Ladar-Based Pose Estimation Algorithm for Determining Relative Motion of a Spacecraft for Autonomous Rendezvous and Dock

Utah State University DigitalCommons@USU All Graduate Theses and Dissertations Graduate Studies 5-2008 A Ladar-Based Pose Estimation Algorithm for Determining Relative Motion of a Spacecraft for Autonomous Rendezvous and Dock Ronald Christopher Fenton Utah State University Recommended Citation Fenton, Ronald Christopher, "A Ladar-Based Pose Estimation Algorithm for Determining Relative Motion of a Spacecraft for Autonomous Rendezvous and Dock" (2008). All Graduate Theses and Dissertations. 69. https://digitalcommons.usu.edu/etd/69 A LADAR-BASED POSE ESTIMATION ALGORITHM FOR DETERMINING RELATIVE MOTION OF A SPACECRAFT FOR AUTONOMOUS RENDEZVOUS AND DOCK by Ronald C. Fenton A thesis submitted in partial fulfillment of the requirements for the degree of MASTER OF SCIENCE in Electrical Engineering Approved: Dr. Rees Fullmer, Major Professor; Dr. Scott Budge, Committee Member; Dr. Charles Swenson, Committee Member; Dr. Byron R. Burnham, Dean of Graduate Studies UTAH STATE UNIVERSITY Logan, Utah 2008 Copyright © Ronald C. Fenton 2008 All Rights Reserved Abstract A Ladar-Based Pose Estimation Algorithm for Determining Relative Motion of a Spacecraft for Autonomous Rendezvous and Dock by Ronald C. Fenton, Master of Science Utah State University, 2008 Major Professor: Dr. Rees Fullmer Department: Electrical and Computer Engineering Future autonomous space missions will require autonomous rendezvous and docking operations. -

Flight Dy~Amics and GN&C 'For Spacecraft Servicing Missions

Flight Dynamics and GN&C for Spacecraft Servicing Missions Bo Naasz [1], NASA Goddard Space Center, Greenbelt, MD; Doug Zimpfer and Ray Barrington [2], Draper Laboratory, Houston, TX, 77058; and Tom Mulder [3], Boeing Space Operations and Ground Systems, Houston, TX, 77062 Future human exploration missions and commercial opportunities will be enabled through in-space assembly and satellite servicing. Several recent efforts have developed technologies and capabilities to support these exciting future missions, including advances in flight dynamics and Guidance, Navigation and Control. The Space Shuttle has demonstrated significant capabilities for crewed servicing of the Hubble Space Telescope (HST) and assembly of the International Space Station (ISS). Following the Columbia disaster, NASA made significant progress in developing a robotic mission to service the HST. The DARPA Orbital Express mission demonstrated automated rendezvous and capture, in-space propellant transfer, and commodity replacement. This paper will provide a summary of the recent technology developments and lessons learned, and provide a focus for potential future missions. [1] NASA Aerospace Engineer, Goddard Space Flight Center, 800 Greenbelt Rd, Greenbelt, MD 20771 [2] Space Systems Associate Director, PFA, 17629 El Camino Real, Suite 470, Houston, TX 77058 [2] Space Systems Program Manager, PFA, 17629 El Camino Real, Suite 470, Houston, TX 77058 [3] Boeing Associate Technical Fellow; 13100 Space Center Blvd, HM6-10, Houston, TX 77059; AIAA Associate Fellow American Institute of Aeronautics and Astronautics I. Introduction A potential game-changing element of future exploration may be the ability to perform in-space assembly and servicing of spacecraft. Future exploration missions are likely to require some form of in-space assembly similar to the International Space Station (ISS) to assemble components for missions beyond Low Earth Orbit (LEO). -

Space Shuttle Missions Summary - Book 2 STS-97 Through STS-131 Revision T PCN-5 June 2010

SPACE SHUTTLE MISSIONS SUMMARY - BOOK 2 STS-97 THROUGH STS-131 REVISION T PCN-5 JUNE 2010 Authors: DA8/Robert D. Legler & DA8/Floyd V. Bennett Book Manager: DA8/Mary C. Thomas 281-483-9018 Typist: DA8/Karen J. Chisholm 281-483-1091 281-483-5988 IN MEMORIAM Bob Legler April 4, 1927 - March 16, 2007 Bob Legler, the originator of this Space Shuttle Missions Summary Book, was born a natural Corn Husker and lived a full life. His true love was serving his country in the US Coast Guard, Merchant Marines, United Nations, US Army, and the NASA Space Programs as an aerospace engineer. As one of a handful of people to ever support the Mercury, Gemini, Apollo, Skylab, Space Shuttle, and International Space Station missions, Bob was an icon to his peers. He spent 44 years in this noble endeavor called manned space flight. In the memorial service for Bob, Milt Heflin provided the following insight: “Bob was about making things happen, no matter what his position or rank, in whatever the enterprise was at that time…it might have been dodging bullets and bombs while establishing communication systems for United Nations outposts in crazy places…it might have been while riding the Coastal Sentry Quebec Tracking ship in the Indian Ocean…watching over the Lunar Module electrical power system or the operation of the Apollo Telescope Mount…serving as a SPAN Manager in the MCC (where a lot of really good stories were told during crew sleep)…or even while serving as the Chairman of the Annual FOD Chili Cook-off or his beloved Chairmanship of the Apollo Flight -

STS-131 Experiment Express PRESS KIT/April 2010

National Aeronautics and Space Administration SPACE SHUTTLE MISSION STS-131 Experiment Express PRESS KIT/April 2010 www.nasa.gov CONTENTS: STS-131/19A MISSION OVERVIEW; STS-131 TIMELINE OVERVIEW; MISSION PROFILE; MISSION OBJECTIVES; MISSION PERSONNEL; STS-131 CREW; PAYLOAD OVERVIEW; LEONARDO MULTI-PURPOSE LOGISTICS MODULE (MPLM) FLIGHT MODULE 1 (FM1); THE LIGHTWEIGHT MULTI-PURPOSE EXPERIMENT SUPPORT STRUCTURE CARRIER (LMC); RENDEZVOUS & DOCKING; UNDOCKING, SEPARATION, AND DEPARTURE; SPACEWALKS -

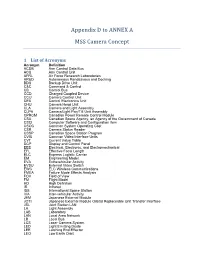

Appendix D to ANNEX A

Appendix D to ANNEX A MSS Camera Concept 1 List of Acronyms (Acronym: Definition) ACDB: Arm Control Data Bus; ACU: Arm Control Unit; AFRL: Air Force Research Laboratories; AR&D: Autonomous Rendezvous and Docking; BDU: Backup Drive Unit; C&C: Command & Control; CB: Control Bus; CCD: Charged Coupled Device; CCU: Camera Control Unit; CEU: Control Electronics Unit; CHU: Camera Head Unit; CLA: Camera and Light Assembly; CLPA: Camera/Light Pan/Tilt Unit Assembly; CPRCM: Canadian Power Remote Control Module; CSA: Canadian Space Agency, an Agency of the Government of Canada; CSCI: Computer Software and Configuration Item; CSOC: Common System Operating Cost; CSR: Camera Status Reader; CSSP: Canadian Space Station Program; CVIU: Common Video Interface Units; CVT: Current Value Table; DCP: Display and Control Panel; EEE: Electrical, Electronic, and Electromechanical; EFL: Effective Focal Length; ELC: Express Logistic Carrier; EM: Engineering Model; EVA: Extravehicular Activity; EVSU: External Video Switch; EWC: ELC Wireless Communications; FMEA: Failure Mode Effects Analysis; FOV: Field of View; FM: Flight Model; HD: High Definition; IR: Infrared; ISS: International Space Station; IVA: Inter-vehicular Activity; JEM: Japanese External Module; JOTI: Japanese External Module Orbital Replaceable Unit Transfer Interface; JSL: Joint Station LAN; LA: Light Assembly; LAB: Laboratory; LAN: Local Area Network; LB: Local Bus; LCS: Laser Camera System; LED: Light Emitting Diode; LEE: Latching End Effector; LEO: Low Earth Orbit; LiDAR: Light Detection and Ranging; M: Meter; MT: Mobile Transporter; MBS: Mobile Base System; MCC-H: Mission Control Center, Houston; MCDB -

STS-135 (ULF7) Mission Overview

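STS-135 (ULF7) Mission Overview. Japan Aerospace Exploration Agency. First edition, 2011/7/4.
Contents: 1. Mission objectives and features 2. Flight plan 3. Payload 4. Mission overview 5. Flight schedule 6. Major events during the Expedition 28/29 long-duration stay 7. JAXA-related items (launch/return) Backup Charts
1. Mission objectives and features
• The Multi-Purpose Logistics Module (MPLM) will carry supplies up and bring cargo back, delivering enough to sustain the six-person ISS resident crew for one year even if the U.S. commercial resupply vehicles enter service late (note: because this figure includes resupply by HTV and ATV, the actual amount corresponds to about half a year). This is the last opportunity to return a large amount of cargo from the ISS.
• This is the first launch with a four-person shuttle crew since STS-6 in April 1983. Although it is a shuttle mission, the ISS resident crew will play an active part to make up for the shortage of hands (only four crew members are aboard in consideration of the return method in an emergency). (See p. 43.)
• This is the final flight of Atlantis and the final flight of the Space Shuttle. (See p. 44.)
2. Flight plan (Item: Plan)
• STS mission number: STS-135 (135th Space Shuttle flight overall; the final shuttle flight)
• ISS assembly flight number: ULF7 (37th Space Shuttle flight to the ISS)
• Orbiter: Atlantis (OV-104) (33rd flight of Atlantis)
• Launch date and time: July 9, 2011, 0:26:46 a.m. (Japan time); July 8, 2011, 11:26:46 a.m. (U.S. Eastern Daylight Time). The launch window is 5 minutes (launch opportunities: 7/8 to 7/10 and 7/16 to 7/31)
• Launch site: Launch Pad 39A, NASA Kennedy Space Center (KSC), Florida
• Flight duration: about 12 days (7 days docked)
• Crew: Commander: Christopher Ferguson; Pilot: Douglas Hurley; MS1: Sandra Magnus; MS2: Rex Walheim
• Orbit: insertion altitude about 226 km; rendezvous altitude about 390 km; orbital inclination 51.6 degrees
• Planned return date: July 20, 2011, around 8:06 p.m. (Japan time); July 20, 2011, around 7:06 a.m. (U.S. Eastern Daylight Time)
• Planned landing site: primary: NASA Kennedy Space Center (KSC), Florida; alternates: NASA Dryden Flight Research Center (DFRC) at Edwards Air Force Base, California, and White Sands Space Harbor, New Mexico
• Main payload: [Payload bay] Multi-Purpose Logistics Module (MPLM) and LMC (carries the RRM refueling experiment equipment up and returns a pump module). [Middeck] Supplies, experiment equipment, and similar items; on return, failed ISS equipment and unneeded items are brought back.
2. Flight plan (continued): STS-135 mission crew
Commander: Christopher J. Ferguson. Born in Pennsylvania in 1961. Captain, U.S. Navy. Selected as a NASA pilot astronaut in 1998. First flew as pilot on STS-115. As commander
MS2: Rex J. Walheim. Born in California in 1962. Master's degree in industrial engineering; Colonel, U.S. Air Force. Selected as a NASA astronaut in 1996.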