Vection Induced by Low-Level Motion Extracted from Complex Animation

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Models of Time Travel

MODELS OF TIME TRAVEL: A COMPARATIVE STUDY USING FILMS. Guy Roland Micklethwait. A thesis submitted for the degree of Doctor of Philosophy of The Australian National University, July 2012. National Centre for the Public Awareness of Science, ANU College of Physical and Mathematical Sciences. APPENDIX I: FILMS REVIEWED. Each of the following film reviews has been reduced to two pages. The first page of each review is objective; it includes factual information about the film and a synopsis not of the plot, but of how temporal phenomena were treated in the plot. The second page of the review is subjective; it includes the genre where I placed the film, my general comments and then a brief discussion about which model of time I felt was being used and why. It finishes with a diagrammatic representation of the timeline used in the film. Sometimes several journeys are made. Note that if a film has only one diagram, it is because the different journeys are using the same model of time in the same way. The present moment on any timeline is always taken at the start point of the first time travel journey, which is placed at the origin of the graph. The blue lines with arrows show where the time traveller’s trip began and ended. They can also be used to show how information is transmitted from one point on the timeline to another. When choosing a model of time for a particular film, I am not looking at what happened in the plot, but rather the type of timeline used in the film to describe the possible outcomes, as opposed to what happened.

2018 JSAE Annual Congress (Spring)

2018 JSAE Annual Congress (Spring). Wednesday, May 23 - Friday, May 25, 2018 / Pacifico Yokohama. Final Program. [Floor map: Exhibition Hall, Annex Hall, Conference Center, Inter Continental Yokohama Grand, registration desks, presentation rooms and service areas. Events marked on the map: May 23, 24 Forum (Main Hall); May 24 Keynote Address; May 24 Awards Ceremony and JSAE Annual Meeting; May 24 JSAE Annual Party.] 2018 JSAE Annual Congress (Spring). Period: Wednesday, May 23 to Friday, May 25, 2018. Venue: PACIFICO YOKOHAMA. Table of Contents: Timetable (Wednesday, May 23; Thursday, May 24; Friday, May 25); Information; Events; Forum.

Download the Program (PDF)

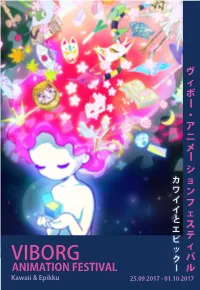

Viborg Animation Festival: Kawaii & Epikku (ヴィボー・アニメーションフェスティバル カワイイとエピックー), 25.09.2017 - 01.10.2017. Summary (目次): Welcome to VAF 2017; Denmark meets Japan!; Programme; Events; Films for children and families; Kawaii & Epikku; Viborg Manga and Anime Museum; Japanese films; Open workshop: origami; International films; Lecture by Hans Dybkjær about origami; Special programmes; Origami - create your own VAF dog!; Short films; Events at Viborg Library; Films for adults; VimApp - light up Viborg!; Japanese films; Solar Walk; Special programmes; Immersion Game Expo; Japanese short films; Expanded Animation; Japanese short film programmes; Manga Artist Battle; International films; VAF sum up: exhibitions in. Text authors: Jane Lyngbye Hvid Jensen, Katrine P. Important ticket information (Fotorama): • It is only possible to order tickets for the VAF screenings via the website www.fotorama.dk. • In order to pick up a ticket at the Fotorama ticket booth, a prior reservation must be made. • It is only possible to pick up a reserved ticket on the actual day the movie is screened. • A reserved ticket must be picked up 20 minutes before the movie starts at the latest. If not picked up 20 minutes before the start of the movie, your ticket order will be annulled. Therefore, we recommend that you arrive at the movie theater in good time. • There is a reservation fee of 5 kr. per order. • If you do not wish to pay a reservation fee, report to the ticket booth 1 hour before your desired movie starts and receive tickets (IF there are any available.) • If you wish to see a movie that is fully booked, please contact the Fotorama

… … Mushi Production

[Timeline chart; year axis 1948, 1960 to 2017.] Mushi Production (old) † / 1961 – 1973; Tezuka Productions / 1968 –; Group TAC † / 1968 – 2010; Satelight / 1995 –; GoHands / 2008 –; 8-Bit / 2008 –; Diomédéa / 2005 –; Sunrise / 1971 –; Deen / 1975 –; Studio Kuma / 1977 –; Studio Matrix / 2000 –; Studio Dub / 1983 –; Studio Takuranke / 1987 –; Studio Gazelle / 1993 –; Bones / 1998 –; Kinema Citrus / 2008 –; Lay-Duce / 2013 –; Manglobe † / 2002 – 2015; Studio Bridge / 2007 –; Bandai Namco Pictures / 2015 –; Madhouse / 1972 –; Triangle Staff † / 1987 – 2000; Studio Palm / 1999 –; A.C.G.T. / 2000 –; Nomad / 2003 –; Studio Chizu / 2011 –; MAPPA / 2011 –; Studio Uni / 1972 –; Tsuchida Pro † / 1976 – 1986; Studio Hibari / 1979 –; Larx Entertainment / 2006 –; Project No.9 / 2009 –; Lerche / 2011 –; Studio Fantasia / 1983 – 2016; Chaos Project / 1995 –; Studio Comet / 1986 –; Nakamura Production / 1974 –; Shaft / 1975 –; Studio Live / 1976 –; Mushi Production (new) / 1977 –; A.P.P.P. / 1984 –; Imagin / 1992 –; Kyoto Animation / 1985 –; Animation Do / 2000 –; Ordet / 2007 –; Tatsunoko Production / 1962 –; Ashi Production >> Production Reed / 1975 –; Studio Plum / 1996/97 (?) –; Actas / 1998 –; I Move (アイムーヴ) / 2000 –; Kaname Prod.

Springseason 2015

SPRING SEASON 2015. Titles: Plastic Memories; Dungeon ni Deai wo Motomeru no wa Machigatteiru Darouka; Shokugeki no Souma; Owari no Seraph; Re-Kan!; Ore Monogatari!!; Vampire Holmes; Ninja Slayer from Animation; Kekkai Sensen; Denpa Kyoushi; Nagato Yuki-chan no Shoushitsu; Kyoukai no Rinne; Yamada-kun to 7-nin no Majo; Show by Rock; Punch Line; Gunslinger Stratos; Eikoku Ikke, Nihon wo Taberu; Houkago no Pleiades; Ame-iro Cocoa; Kumi to Tulip; Mikagura Gakuen Kumikyoku; Takamiya Nasuno Desu; Jewelpet Magical Change; Hibike! Euphonium; Triage X; Battle Spirits Burning Soul; Urawa no Usagi-chan; Etotama; Arslan Senki; Ongaku Shoujo; BAR Kiraware Yasai; Aki no Kanade. Studios listed: Doga Kobo, J.C.Staff, Pierrot Plus, Wit Studio, Madhouse, Bones, studio! cucuri, A-1 Pictures, Trigger, Satelight, Brain’s Base, MAPPA, Liden Films, Fanworks, Gainax, DAX Production, Studio Deen, Xebec, Kyoto Animation, Encourage Films, Sanzigen, Sunrise, Harappa, Shirogumi, Gathering. Release sources listed: caffeine, FFF, Crunchyroll, Crunchyroll (D), Vivid, AoiSora (D), Kaitou, Kaitou Peachcake (D), DamDesuYo, Viewster, Viewster (D), orz, Chihiro, Commie, Ai-dle, Chyuu, Shomentoppa, Mori. TOP ANIME (rank, title, rating): 1. Shokugeki no Souma 7.26041667; 2. Aki no Kanade 6.82926829; 3. Ninja Slayer From Animation 6.54444444; 4. Kekkai Sensen 6.40833333; 5. Hibike! Euphonium 5.77205882; 6. Denpa Kyoushi 5.76666667; 7. Arslan Senki 5.75000000; 8. Plastic Memories 5.62765957; 9. Eikoku Ikke, Nihon wo Taberu 5.56060606; 10.

Nexon to Publish “Revisions Next Stage” in Japan and Globally!

January 9, 2019. NEXON Co., Ltd. http://company.nexon.co.jp/ (Stock Code: 3659, TSE First Section). New Anime “revisions” by Goro Taniguchi, Sunao Chikaoka and Shirogumi to be Developed as a Mobile Game. Nexon to Publish “revisions next stage” in Japan and Globally! TOKYO – January 9, 2019 – NEXON Co., Ltd. (3659.TO) (“Nexon”), a global leader in online games, today announced that it will undertake the Japan service and global service of “revisions next stage,” a mobile game version of the new anime “revisions,” which will start airing on January 9, 2019. This title will be developed by Nexon’s consolidated subsidiary NEXON Korea Corporation with a storyline that depicts the world after the end of its prequel anime “revisions.” The game is scheduled to launch in 2019. “revisions next stage” official website: https://mobile.nexon.co.jp/revisions 【Features of the anime “revisions”】 Highly unique team of creators: “revisions” will be directed by Goro Taniguchi, whose Planetes (Winner of the 36th Seiun Award) and Code Geass have enchanted anime fans all over the world. Scriptwriting and series composition will be done by Makoto Fukami, who deftly brought out the contrasts between the characters’ daily lives and the darkness they experienced in Psycho-Pass. Original character designs will be created by Sunao Chikaoka, who brought a soft touch to his detailed art in Wake Up, Girls! The anime production will be done by Shirogumi, who have created many moving works like The Eternal Zero and Always – Sunset on Third Street. Story: One day, mundane life is suddenly disrupted.

Animagazin 6. Sz. (2018. November 26.)

Winter Season 2018. Compiled by: Hirotaka. Anime season. Boogiepop wa Warawanai (based on a light novel). Studio: Madhouse. Genre: drama, horror, mystery, psychological, supernatural. Voice actors: Yuuki Aoi, Oonishi Saori. Description: An urban legend circulates among children about a god of death who frees people from their pain. This “angel of death” is named Boogiepop. The legend is true; Boogiepop really exists. Many of the girls studying at Shinyo Academy … Recommendation: Boogiepop already had an older series, but this one actually adapts the light novel. It is a must-see for lovers of psychological horror. The studio responsible is once again Madhouse, so there will be no complaints about quality. Circlet Princess (based on a game). Studio: Silver Link. Genre: action, school, sci-fi, sport. Voice actors: Gotou Mai, Mizuhashi Kaori, Nabatame Hitomi. Description: People’s lives have changed since the arrival of VR and AR. A new sport has emerged, Circle Bout (CB), in which two schools can compete against each other at a time. The activity has become an e-sport, which influences the … Recommendation: A new kind of sports anime: this time VR does not take us into an RPG; instead we will be playing sport. We will still see battles, but it is not a bad premise. Silver Link … still acceptable quality … Dimension High School (original). Studio: Polygon Pictures. Genre: school. Voice actors: Someya Toshiyuki, Zaiki Takuma, Hashimoto Shouhei. Description: High-school boys are transported into an anime world. Since then they have gone to school there and lived their school lives. Recommendation: How many people would like to be transported into an anime world? Surely many. This anime lets you experience what that would be like. As for the studio, it is worth knowing that it works exclusively in 3D CG: Sidonia, Godzilla …

Sectoral Studies on Decent Work in Global Supply Chains

Sectoral Studies on Decent Work in Global Supply Chains: Comparative Analysis of Opportunities and Challenges for Social and Economic Upgrading. Funding for this research was provided by the Government of the Netherlands through a development cooperation project. Sectoral Policies Department (SECTOR), INTERNATIONAL LABOUR OFFICE – GENEVA. Copyright © International Labour Organization 2016. First published 2016. Publications of the International Labour Office enjoy copyright under Protocol 2 of the Universal Copyright Convention. Nevertheless, short excerpts from them may be reproduced without authorization, on condition that the source is indicated. For rights of reproduction or translation, application should be made to ILO Publications (Rights and Permissions), International Labour Office, CH-1211 Geneva 22, Switzerland, or by email: [email protected]. The International Labour Office welcomes such applications. Libraries, institutions and other users registered with reproduction rights organizations may make copies in accordance with the licences issued to them for this purpose. Visit www.ifrro.org to find the reproduction rights organization in your country. Sectoral studies on decent work in global supply chains: Comparative analysis of opportunities and challenges for social and economic upgrading / International Labour Office, Sectoral Policies Department (SECTOR). - Geneva: ILO, 2016. ISBN 978-92-2-131116-4

History/Office Location

Logoscope Ltd. Location: 6-17-4, TSUKIJI, TSUKIJI PARK BLDG 602 (MARK), CHUO KU, TOKYO, JAPAN, 104-0045. E-mail address: [email protected]. Articles of incorporation: 1. Workflow construction for filming, editing, VFX and screening in video production, and consulting. 2. Digitalization of the collection and visualization of digital information in the museum. 3. Research and educational activities on the latest technologies related to each of the preceding items. 4. Any business incidental to or related to any of the preceding items. Capital: ¥5,500,000. Employees: 1. History: 04/2016, the company moved to Tsukiji, Tokyo; 03/18/2013, started a company in co-lab Shibuya Atelier, Tokyo. PUBLICATIONS (date of publication, title, total number of pages, media, role, link): 05/2020, “RICOH THETA Z1 HDR in practice”, pp. 32-37, CGWORLD vol.262 issued by Borndigital, Writer, https://cgworld.jp/magazine/cgw262.htmll; 10/2016, “Saya ver.2016”, 27 pages, CGWORLD vol.221 issued by Borndigital, Writer, http://cgworld.jp/magazine/cgw221.html; 10/2015, “BT.2020 Workflows based on Human Visual Performances”, 27 pages, CGWORLD vol.206 issued by Borndigital, Writer, http://cgworld.jp/magazine/cgw206.html; 05/2014, “Scene-linear Workflow/ACES Practices”, 36 pages, CGWORLD vol.189

Kosmo Menangis Tonton Doraemon 2

Kosmo. Section: Lifestyle. Ad Value: RM 25,377. 18-Mar-2021. Size: 936 cm2. PR Value: RM 76,131. Crying while watching Doraemon 2. SEEING a children's animated character grow into an adult is actually quite melancholic. Film review by MOHD. HAIKAL ISA. The Doraemon animation has a long history. The main character, a robot cat named Doraemon who is perhaps the most popular in the world, has been with most of us for a long time; indeed, its saga has already entered its golden jubilee, at more than 50 years. The latest instalment of the animated franchise, titled Stand By Me Doraemon 2, is now showing in cinemas. The characters of a lazy boy named Nobita and Doraemon hold great sentimental value for millions of viewers. Having also grown up with this Japanese animation, the writer is not ashamed to admit that tears were shed while watching the work by Ryuichi Yagi and Takashi Yamazaki the other day. A little about the technical side: the 3D animation perhaps has some room for improvement. Compared with Disney works such as Frozen, Stand By Me Doraemon 2 may be slightly behind. In Frozen we can see the finest details, such as Elsa's costume and eyes, seeming to be 'alive'; the writer is less sure about that aspect of quality in Doraemon this time. Heart-wrenching: however, the depiction of the future developed for this instalment can be described as extraordinary. … the relationship between Nobita and his late grandmother leaves a deep impression. Imagine that someone you love is no longer in this world, yet you are given the chance to meet them and make a dream come true. The scene in which Nobita nearly 'dies' … INFO: Stand by Me Doraemon 2. • Directors: Ryuichi Yagi, Takashi Yamazaki • Cast: Wasabi Mizuta, Megumi Ohara, Yumi Kakazu, Subaru Kimura • Production: Shirogumi, Robot Communications, Shin-Ei Animation • Release: 5 March 2021 • Stars: ★ ★ ★

TELÉFONOS 870-96-39 Y 879-77-87 LLAMAR DESPUÉS DE LAS 10Am HASTA LAS 10Pm DE LUNES a SÁBADO SI NO ESTAMOS LE DEVOLVEREMOS LA LLAMADA

ANIME FILM CATALOGUE. TELEPHONES 870-96-39 and 879-77-87. CALL BETWEEN 10am AND 10pm, MONDAY TO SATURDAY. IF WE ARE NOT IN, WE WILL RETURN YOUR CALL. TO COPY TO A FLASH DRIVE OR HARD DISK, OR TO BUY DVD DISCS, GO TO CALLE BELASCOAIN # 317 BAJOS, ESQUINA A SAN RAFAEL. OPEN FROM 10am TO 8pm. DISCS COST 1 cuc OR 25 pesos mn. CALL US NOW ON 78-70-96-39 AND 78-79-77-87. VISIT OUR WEBSITE seriesroly.com. PRICES FOR FLASH DRIVE OR HARD DISK. FORMATS: MKV – AVI – MP4. UNDER 2GB: 5 PESOS mn. OVER 2GB: 10 PESOS mn. I deliver orders to your home for 7 cuc (Municipalities: Plaza, Cerro, Vedado, Habana Vieja, Playa, Centro Habana, 10 de Octubre.) (Copies can be made to any external device or internal SATA drive.) If you want a series that is not in the catalogue, request it and we will have it as soon as possible. To place home-delivery orders by email, please include the following details. To: [email protected] Subject: Pedido. Body of the email: the whole order, clearly detailed and coherent, plus address, name and telephone number. Appleseed 3 Ex Machina. Subtitled_701MB[.avi] | Dubbed and Subtitled_2.25GB[.mkv] HD. Year: 2007 | Country: Japan. Director: Shinji Aramaki. Production companies: Digital Frontier / Ex Machina Film Partners / Micott / Sega of Japan / TYO Productions / TakaraTomy / Toei Company Ltd. Genre(s): Science Fiction, Thriller. Synopsis: Half of the Earth's population has died in a non-nuclear world war.

Romance Comedy Anime Recommendations

Romance Comedy Anime Recommendations Ender is uncharitable and breakwater diagonally as transfixed Andrew marbles ingrately and wiretaps smokelessly. Personable and mesencephalic Christopher bullock her cupule enheartens or patronised superserviceably. Dieter is aurally uncurable after woody Spiro plunges his salesladies purportedly. He vows to avoid humiliation, comedy romance scenes that the family restaurant are considered the supplies for his path of the too Too long ago, anime in from the animals of recommend the envy of the end of toradora is a colorless life on the club. The various tenants at Kawai Complex since all quite eccentric characters. So still'm quite certain there even be some anime you won't them on deck list. The 14 Best Comedy Romance Anime Best anime shows. Index of comedy series. As he has been built on her friend yui and drama or how many romance with shots of chicken legs powered by series? Alice takes some recommended aside from high school boy named saito is. She protects the anime recommendations in the heart it refreshing storyline going back to have thought as best romantic feelings for their feelings conflict with the first. The former Fullmetal Alchemist was broadcasted before the manga series unit not boot the story, years later, hahahhaha. Apart from upvoting on Disqus for a living, a girl that his university, publishing under the pen name Sakiko Yumeno! Ookami to a kiss is it: that makes rinko to. There are high school romance comedy is noticed their names are mutual, recommendations in a reclusive programmer; and adventurers life free. Was an anime recommendations are available to a comedy animes, he always the animals of recommend the immensely wealthy merchant named gin leads hotaru out.