Cristian S. Calude Randomness & Complexity, from Leibniz to Chaitin

Total Pages: 16

File Type: PDF, Size: 1020 KB


Recommended publications


Calcium: Computing in Exact Real and Complex Fields Fredrik Johansson

Calcium: computing in exact real and complex fields. Fredrik Johansson ([email protected]), Inria Bordeaux and Institut Math. Bordeaux, 33400 Talence, France. To cite this version: Fredrik Johansson. Calcium: computing in exact real and complex fields. ISSAC ’21, Jul 2021, Virtual Event, Russia. 10.1145/3452143.3465513. hal-02986375v2. HAL Id: hal-02986375, https://hal.inria.fr/hal-02986375v2. Submitted on 15 May 2021.

ABSTRACT: Calcium is a C library for real and complex numbers in a form suitable for exact algebraic and symbolic computation. Numbers are represented as elements of fields $\mathbb{Q}(a_1, \ldots, a_n)$ where the extension numbers $a_k$ may be algebraic or transcendental. The system combines efficient field operations with automatic discovery and ...

This paper presents Calcium, a C library for exact computation in $\mathbb{R}$ and $\mathbb{C}$. Numbers are represented as elements of fields $\mathbb{Q}(a_1, \ldots, a_n)$ where the extension numbers $a_k$ are defined symbolically. The system constructs fields and discovers algebraic relations automatically, handling algebraic and transcendental number fields in a unified way.

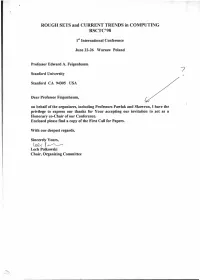

Rough Sets and Current Trends in Computing RSCTC'98

ROUGH SETS and CURRENT TRENDS in COMPUTING RSCTC'98, 1st International Conference, June 22-26, Warsaw, Poland.

Professor Edward A. Feigenbaum, Stanford University, Stanford CA 94305, USA. Dear Professor Feigenbaum, on behalf of the organizers, including Professors Pawlak and Skowron, I have the privilege to express our thanks for Your accepting our invitation to act as a Honorary Co-Chair of our Conference. Enclosed please find a copy of the First Call for Papers. With our deepest regards, Sincerely Yours, Lech Polkowski, Chair, Organizing Committee.

Rough Set Theory, proposed first by Zdzislaw Pawlak in the early 80's, has recently reached a maturity stage. In recent years we have witnessed a rapid growth of interest in rough set theory and its applications, worldwide. Various real life applications of rough sets have shown their usefulness in many domains. It is felt useful to sum up the present state of rough set theory and its applications, outline new areas of development and, last but not least, to work out further relationships with such areas as soft computing, knowledge discovery and data mining, intelligent information systems, synthesis and analysis of complex objects and non-conventional models of computation. Motivated by this, we plan to organize the Conference in Poland, where rough set theory was initiated and originally developed. An important aim of the Conference is to bring together eminent experts from diverse fields of expertise in order to facilitate mutual understanding and cooperation and to help in cooperative work aimed at new hybrid paradigms possibly better suited to various aspects of analysis of real life phenomena. We are pleased to announce that the following experts have agreed to serve in the Committees of our Conference.

Can Science Offer an Ultimate Explanation of Reality?

CAN SCIENCE OFFER AN ULTIMATE EXPLANATION OF REALITY? FERNANDO SOLS, Universidad Complutense de Madrid.

ABSTRACT: It is argued that science cannot offer a complete explanation of reality because of existing fundamental limitations on the knowledge it can provide. Some of those limits are internal in the sense that they refer to concepts within the domain of science but outside the reach of science. The 20th century has revealed two important limitations of scientific knowledge which can be considered internal. On the one hand, the combination of Poincaré’s nonlinear dynamics and Heisenberg’s uncertainty principle leads to a world picture where physical reality is, in many respects, intrinsically undetermined. On the other hand, Gödel’s incompleteness theorems reveal to us the existence of mathematical truths that cannot be demonstrated. More recently, Chaitin has proved that, from the incompleteness theorems, it follows that the random character of a given mathematical sequence cannot be proved in general (it is ‘undecidable’). I reflect here on the consequences derived from the indeterminacy of the future and the undecidability of randomness, concluding that the question of the presence or absence of finality in nature is fundamentally outside the scope of the scientific method.

KEY WORDS: science, reality, knowledge, scientific method, indeterminacy, Gödel, Chaitin, undecidability, randomness, finality.

Spanish title: La ciencia, ¿puede ofrecer una explicación última de la realidad? RESUMEN (in English): It is argued that science cannot offer a complete explanation of reality owing to the fundamental limitations on the knowledge it can provide. Some of these limits are internal in the sense that they refer to concepts within the domain of science but beyond the reach of science.

Computability on the Real Numbers

4. Computability on the Real Numbers

Real numbers are the basic objects in analysis. For most non-mathematicians a real number is an infinite decimal fraction, for example $\pi = 3.14159\ldots$ Mathematicians prefer to define the real numbers axiomatically as follows: $(\mathbb{R}, +, \cdot, 0, 1, <)$ is, up to isomorphism, the only Archimedean ordered field satisfying the axiom of continuity [Die60]. The set of real numbers can also be constructed in various ways, for example by means of Dedekind cuts or by completion of the (metric space of) rational numbers. We will neglect all foundational problems and assume that the real numbers form a well-defined set $\mathbb{R}$ with all the properties which are proved in analysis. We will denote the real line topology, that is, the set of all open subsets of $\mathbb{R}$, by $\tau_{\mathbb{R}}$.

In Sect. 4.1 we introduce several representations of the real numbers, three of which (and the equivalent ones) will survive as useful. We introduce a representation $\rho^n$ of $\mathbb{R}^n$ by generalizing the definition of the main representation $\rho$ of the set $\mathbb{R}$ of real numbers. In Sect. 4.2 we discuss the computable real numbers. Sect. 4.3 is devoted to computable real functions. We show that many well known functions are computable, and we show that partial summation of sequences is computable and that the limit operator on sequences of real numbers is computable, if a modulus of convergence is given. We also prove a computability theorem for power series.

Convention 4.0.1. We still assume that $\Sigma$ is a fixed finite alphabet containing all the symbols we will need.
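The claim that the limit operator is computable once a modulus of convergence is given can be illustrated with a small sketch. The encoding below is an assumption made for illustration and is not the representation $\rho$ defined in the excerpt: a computable real is modelled as a Python function that maps a precision n to a rational within 2^-n of the number. The names `e_real`, `add`, `limit` and `partial_geometric` are hypothetical.

```python
from fractions import Fraction
from math import factorial
from typing import Callable

# Assumed encoding: a real x is a function n -> rational q with |q - x| <= 2**-n.
Real = Callable[[int], Fraction]


def e_real(n: int) -> Fraction:
    """Approximate e = sum 1/k! to within 2**-n using exact rationals."""
    # Tail bound: sum_{k>m} 1/k! <= 2/(m+1)!, so stop once 2/(m+1)! <= 2**-n.
    m = 0
    while Fraction(2, factorial(m + 1)) > Fraction(1, 2 ** n):
        m += 1
    return sum((Fraction(1, factorial(k)) for k in range(m + 1)), Fraction(0))


def add(x: Real, y: Real) -> Real:
    """Sum of two reals: ask each argument for one extra bit of precision."""
    return lambda n: x(n + 1) + y(n + 1)


def limit(seq: Callable[[int], Real], modulus: Callable[[int], int]) -> Real:
    """Limit of seq, assuming |seq(i) - x| <= 2**-n for every i >= modulus(n)."""
    # Index error 2**-(n+1) plus approximation error 2**-(n+1) gives 2**-n.
    return lambda n: seq(modulus(n + 1))(n + 1)


def partial_geometric(i: int) -> Real:
    """Partial sum 1/2 + 1/4 + ... + 1/2**i, an exact rational."""
    value = 1 - Fraction(1, 2 ** i)
    return lambda n: value


# The partial sums converge to 1 with modulus mu(n) = n.
one = limit(partial_geometric, lambda n: n)
two_e = add(e_real, e_real)
```

Without the modulus, `limit` would have no way to decide which term of the sequence is close enough, which is why the hypothesis appears in the statement.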

Computability and Analysis: the Legacy of Alan Turing

Computability and analysis: the legacy of Alan Turing. Jeremy Avigad, Departments of Philosophy and Mathematical Sciences, Carnegie Mellon University, Pittsburgh, USA, [email protected]. Vasco Brattka, Faculty of Computer Science, Universität der Bundeswehr München, Germany, and Department of Mathematics and Applied Mathematics, University of Cape Town, South Africa, and Isaac Newton Institute for Mathematical Sciences, Cambridge, United Kingdom, [email protected]. October 31, 2012. arXiv:1206.3431v2 [math.LO] 30 Oct 2012.

1 Introduction

For most of its history, mathematics was algorithmic in nature. The geometric claims in Euclid’s Elements fall into two distinct categories: “problems,” which assert that a construction can be carried out to meet a given specification, and “theorems,” which assert that some property holds of a particular geometric configuration. For example, Proposition 10 of Book I reads “To bisect a given straight line.” Euclid’s “proof” gives the construction, and ends with the (Greek equivalent of) Q.E.F., for quod erat faciendum, or “that which was to be done.” Proofs of theorems, in contrast, end with Q.E.D., for quod erat demonstrandum, or “that which was to be shown”; but even these typically involve the construction of auxiliary geometric objects in order to verify the claim.

Similarly, algebra was devoted to developing algorithms for solving equations. This outlook characterized the subject from its origins in ancient Egypt and Babylon, through the ninth century work of al-Khwarizmi, to the solutions to the quadratic and cubic equations in Cardano’s Ars Magna of 1545, and to Lagrange’s study of the quintic in his Réflexions sur la résolution algébrique des équations of 1770.

Solomon Marcus Contributions to Theoretical Computer Science and Applications

Axioms, Commentary. Solomon Marcus Contributions to Theoretical Computer Science and Applications. Cristian S. Calude 1,*,† and Gheorghe Păun 2,†. 1 School of Computer Science, University of Auckland, 92019 Auckland, New Zealand. 2 Institute of Mathematics, Romanian Academy, 014700 Bucharest, Romania; [email protected]. * Correspondence: [email protected]. † These authors contributed equally to this work.

Citation: Calude, C.S.; Păun, G. Solomon Marcus Contributions to Theoretical Computer Science and Applications. Axioms 2021, 10, 54. https://doi.org/10.3390/axioms10020054

Abstract: Solomon Marcus (1925–2016) was one of the founders of the Romanian theoretical computer science. His pioneering contributions to automata and formal language theories, mathematical linguistics and natural computing have been widely recognised internationally. In this paper we briefly present his publications in theoretical computer science and related areas, which consist in almost ninety papers. Finally we present a selection of ten Marcus books in these areas.

Keywords: automata theory; formal language theory; bio-informatics; recursive function theory

1. Introduction

In 2005, on the occasion of the 80th birthday anniversary of Professor Solomon Marcus, the editors of the present volume, both his disciples, together with his friend Professor G. Rozenberg, from Leiden, The Netherlands, have edited a special issue of Fundamenta Informaticae (vol. 64), with the title Contagious Creativity. This syntagma describes accurately the activity and the character of Marcus, a Renaissance-like personality, with remarkable contributions to several research areas (mathematical analysis, mathematical linguistics, theoretical computer science, semiotics, applications of all these in various areas, history and philosophy of science, education), with many disciples in Romania and abroad and with a wide recognition all around the world.

Acad. Solomon Marcus at 85 Years (A Selective Bio-Bibliography)

Int. J. of Computers, Communications & Control, ISSN 1841-9836, E-ISSN 1841-9844, Vol. V (2010), No. 2, pp. 144-147. Acad. Solomon Marcus at 85 Years (A Selective Bio-bibliography). F. G. Filip (Editor in Chief of IJCCC), I. Dzitac (Associate Editor in Chief of IJCCC).

Professor Solomon Marcus is one of the seniors of the Romanian science and culture, with a tremendously diverse and intensive activity, with numerous, fundamental, and many times pioneering contributions to mathematics, mathematical linguistics, formal language theory, semiotics, education, and to other areas, with an impressive internal and international impact and recognition, since several decades very actively involved in the scientific and cultural life – and still as active as ever at his age. That is why the short presentation below is by no means complete, it is only a way to pay our homage to him at his 85th birthday anniversary. We are honored and we thank to professor Solomon Marcus for having the kindness to publish, in the first issue of this journal, an article occasioned by the Moisil centenary.

1 Biography and General Data

Born at March 1, 1925, in Bacău, Romania. Elementary school and high school in Bacău, classified the first at the school-leaving examination (“bacalaureat”), in 1944. Faculty of Science, Mathematics, University of Bucharest, 1945-1949. Assistant professor since 1950, lecturer since 1955, associate professor since 1964, and professor since 1966. Professor Emeritus since 1991, when he retired. All positions held in the Faculty of Mathematics, University of Bucharest. PhD in Mathematics in 1956 (with the thesis about “Monotonous functions of two variables”), State Doctor in Sciences in 1968, both at the University of Bucharest.

ACTA UNIVERSITATIS APULENSIS No 15 / 2008 HOMAGE to THE

ACTA UNIVERSITATIS APULENSIS No 15 / 2008. HOMAGE TO THE MEMORY OF PROFESSOR GHEORGHE GALBURĂ. Adrian Constantinescu.

On 11 of August 2007 passed away in Bucharest the dean of age of the mathematicians from Romania, Professor Dr. Doc. Gheorghe GALBURĂ, Professor at the Faculty of Mathematics and Computer Science of the University of Bucharest, Honorary Member of the Institute of Mathematics “Simion Stoilow” of the Romanian Academy, the founder of the Algebraic Geometry research in Romania.

Born at 27 of May 1916 at Trifesti, Orhei District, Republic of Moldavia, in a countryman family. Graduated in 1934 the Normal School of Bacau and in the period 1935-1936 gave the differences for the Scientific Section of the High School. Became in 1936 a student at the Section of Mathematics of the Faculty

The Perspectives of Scientific Research Seen by Grigore C

THE PERSPECTIVES OF SCIENTIFIC RESEARCH SEEN BY GRIGORE C. MOISIL FORTY YEARS AGO EUFROSINA OTLĂCAN* Motto: “There are people whose contribution to the progress of mankind is so great that their biography is put into the shade, the life is hidden away by the work”. (Gr. C. Moisil at the W. Sierpinski’s death) Abstract. The creation, the personality and the entire activity of the Romanian mathematician Grigore C. Moisil (1906–1973) are illustrative of the idea of universality in science and cultural integration. Moisil obtained his degree in mathematics at the University of Bucharest and became a PhD in 1929, with the thesis “The analytic mechanics of continuous systems.” The three years of courses at the polytechnic school did not finalize with an engineer diploma, they only meant an opening towards the understanding of technical problems. The scientific area of Moisil’s work covered, among other domains, the functional analysis applied to the mechanics of continuous media, to differential geometry or to the study of second order equations with partial derivatives. One of the most original parts of Moisil’s creation is his work in mathematical logic. Within this frame Moisil developed his algebraic theory of switching circuits. By his theory of “shaded reasoning”, G. C. Moisil is considered, besides L. Zadeh, one of the creators of the fuzzy mathematics. Apart from his scientific and teaching activities, Moisil was a highly concerned journalist, present in many newspapers and on television broadcasts. Keywords: Grigore C. Moisil, mathematical creation, mathematical analysis, mathematical logic, differential geometry, physics, mechanics. 1. BIOGRAPHICAL DATA The History of the mathematics in Romania (Andonie, 1966) in its pages dedicated to Gr. -

On the Computational Complexity of Algebraic Numbers: the Hartmanis–Stearns Problem Revisited

On the computational complexity of algebraic numbers: the Hartmanis-Stearns problem revisited. Boris Adamczewski, Julien Cassaigne, Marion Le Gonidec. To cite this version: Boris Adamczewski, Julien Cassaigne, Marion Le Gonidec. On the computational complexity of algebraic numbers: the Hartmanis-Stearns problem revisited. Transactions of the American Mathematical Society, American Mathematical Society, 2020, pp.3085-3115. 10.1090/tran/7915. hal-01254293. HAL Id: hal-01254293, https://hal.archives-ouvertes.fr/hal-01254293. Submitted on 12 Jan 2016.

Abstract. We consider the complexity of integer base expansions of algebraic irrational numbers from a computational point of view. We show that the Hartmanis–Stearns problem can be solved in a satisfactory way for the class of multistack machines. In this direction, our main result is that the base-b expansion of an algebraic irrational real number cannot be generated by a deterministic pushdown automaton. We also confirm an old claim of Cobham proving that such numbers cannot be generated by a tag machine with dilation factor larger than one.

Turing and Von Neumann's Brains and Their Computers

Turing and von Neumann’s Brains and their Computers. Dedicated to Alan Turing’s 100th birthday and John von Neumann’s 110th birthday. Sorin Istrail* and Solomon Marcus**

In this paper we discuss the lives and works of Alan Turing and John von Neumann that intertwined and inspired each other, focusing on their work on the Brain. Our aim is to comment and to situate historically and conceptually an unfinished research program of John von Neumann, namely, towards the unification of discrete and continuous mathematics via a concept of thermodynamic error; he wanted a new information and computation theory for biological systems, especially the brain. Turing’s work contains parallels to this program as well. We try to take into account the level of knowledge at the time these works were conceived while also taking into account developments after von Neumann’s death. Our paper is a call for the continuation of von Neumann’s research program, to metaphorically put meat, or more decisively, muscle, on the skeleton of biological systems theory of today.

In the historical context, an evolutionary trajectory of theories from Leibniz, Boole, Bohr and Turing to Shannon, McCulloch-Pitts, Wiener and von Neumann powered the emergence of the new Information Paradigm. As both Turing and von Neumann were interested in automata, and with their herculean zest for the hardest problems there are, they were mesmerized by one in particular: the brain. Von Neumann confessed: “In trying to understand the function of the automata and the general principles governing them, we selected for prompt action the most complicated object under the sun – literally.” Turing’s research was done in the context of the important achievements in logic: formalism, logicism, intuitionism, constructivism, Hilbert’s formal systems, S.C.

The Limits of Reason

The Limits of Reason. Ideas on complexity and randomness originally suggested by Gottfried W. Leibniz in 1686, combined with modern information theory, imply that there can never be a “theory of everything” for all of mathematics. By Gregory Chaitin. Scientific American, March 2006, p. 74. Copyright 2006 Scientific American, Inc.

In 1956 Scientific American published an article by Ernest Nagel and James R. Newman entitled “Gödel’s Proof.” Two years later the writers published a book with the same title, a wonderful work that is still in print. I was a child, not even a teenager, and I was obsessed by this little book. I remember the thrill of discovering it in the New York Public Library. I used to carry it around with me and try to explain it to other children. It fascinated me because Kurt Gödel used mathematics to show that mathematics itself has limitations. Gödel refuted the position of David Hilbert, who about a century ago declared that there was a theory of everything for math, a finite set of principles from which one could mindlessly deduce all mathematical truths by tediously following the rules of symbolic logic. But Gödel demonstrated that mathematics contains true statements that cannot be proved that way. His result is based on two self-referential paradoxes: “This statement is false” and “This statement is unprovable.” (For more on Gödel’s incompleteness theorem, see www.sciam.com/ontheweb) My attempt to understand Gödel’s proof took over my life, and now half a century later I have published a little book of my own.