Vitis AI User Guide

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

-

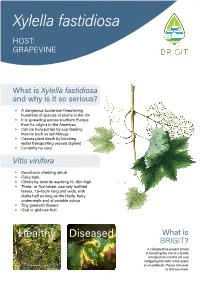

Xylella Fastidiosa HOST: GRAPEVINE

Xylella fastidiosa HOST: GRAPEVINE What is Xylella fastidiosa and why is it so serious? ◆ A dangerous bacterium threatening hundreds of species of plants in the UK ◆ It is spreading across southern Europe from its origins in the Americas ◆ Can be transported by sap-feeding insects such as spittlebugs ◆ Causes plant death by blocking water transporting vessels (xylem) ◆ Currently no cure Vitis vinifera ◆ Deciduous climbing shrub ◆ Flaky bark ◆ Climbs by tendrils reaching 15–18m high ◆ Three- or five-lobed, coarsely toothed leaves, 7.5–15cm long and wide, with stalks half as long as the blade, hairy underneath and of variable colour ◆ Tiny greenish flowers ◆ Oval or globose fruit Healthy Diseased What is BRIGIT? A collaborative project aimed at reducing the risk of a Xylella introduction into the UK and mitigating the risks in the event of an outbreak. Please turn over to find out more. What to look out for ◆ Marginal leaf scorch 1 ◆ Leaf chlorosis 2 ◆ Premature loss of leaves 3 ◆ Matchstick petioles 3 ◆ Irregular cane maturation (green islands in stems) 4 ◆ Fruit drying and wilting 5 ◆ Stunting of new shoots 5 ◆ Death of plant in 1–5 years Where is the plant from? ◆ Plants sourced from infected countries are at a much higher risk of carrying the disease-causing bacterium Do not panic! How long There are other reasons for disease symptoms to appear. Consider California. of University Montpellier; watercolour, RHS Lindley Collections; “healthy”, RHS / Tim Sandall; “diseased”, J. Clark, California 3 J. Clark & A.H. Purcell, University of California 4 J. Clark, University of California 5 ENSA, Images © 1 M. -

DEEP-HybridDataCloud: Assessment of Available Technologies for Supporting Accelerators and HPC, Initial Design and Implementation Plan

DEEP-HybridDataCloud ASSESSMENT OF AVAILABLE TECHNOLOGIES FOR SUPPORTING ACCELERATORS AND HPC, INITIAL DESIGN AND IMPLEMENTATION PLAN DELIVERABLE: D4.1 Document identifier: DEEP-JRA1-D4.1 Date: 29/04/2018 Activity: WP4 Lead partner: IISAS Status: FINAL Dissemination level: PUBLIC Permalink: http://hdl.handle.net/10261/164313 Abstract This document describes the state of the art of technologies for supporting bare-metal, accelerators and HPC in cloud and proposes an initial implementation plan. Available technologies will be analyzed from different points of views: stand-alone use, integration with cloud middleware, support for accelerators and HPC platforms. Based on results of these analyses, an initial implementation plan will be proposed containing information on what features should be developed and what components should be improved in the next period of the project. DEEP-HybridDataCloud – 777435 1 Copyright Notice Copyright © Members of the DEEP-HybridDataCloud Collaboration, 2017-2020. 
Delivery Slip

| | Name | Partner/Activity | Date |
|---|---|---|---|
| From | Viet Tran | IISAS / JRA1 | 25/04/2018 |
| Reviewed by | Marcin Plociennik | PSNC | 20/04/2018 |
| Reviewed by | Cristina Duma Aiftimiei | INFN | 25/04/2018 |
| Reviewed by | Zdeněk Šustr | CESNET | 25/04/2018 |
| Approved by | Steering Committee | | 30/04/2018 |

Document Log

| Issue | Date | Comment | Author/Partner |
|---|---|---|---|
| TOC | 17/01/2018 | Table of Contents | Viet Tran / IISAS |
| 0.01 | 06/02/2018 | Writing assignment | Viet Tran / IISAS |
| 0.99 | 10/04/2018 | Partner contributions | WP members |
| 1.0 | 19/04/2018 | Version for first review | Viet Tran / IISAS |
| 1.1 | 22/04/2018 | Updated version according to recommendations from first review | Viet Tran / IISAS |
| 2.0 | 24/04/2018 | Version for second review | Viet Tran / IISAS |
| 2.1 | 27/04/2018 | Updated version according to recommendations from second review | Viet Tran / IISAS |
| 3.0 | 29/04/2018 | Final version | Viet Tran / IISAS |

Table of Contents Executive Summary 1. -

Wire-Aware Architecture and Dataflow for CNN Accelerators

Wire-Aware Architecture and Dataflow for CNN Accelerators Sumanth Gudaparthi, Surya Narayanan, Rajeev Balasubramonian, Edouard Giacomin, Hari Kambalasubramanyam, Pierre-Emmanuel Gaillardon (all University of Utah, Salt Lake City, Utah). The 52nd Annual IEEE/ACM International Symposium on Microarchitecture (MICRO-52), October 12–16, 2019, Columbus, OH, USA. ACM, New York, NY, USA, 13 pages. https://doi.org/10.1145/3352460.3358316 ABSTRACT In spite of several recent advancements, data movement in modern CNN accelerators remains a significant bottleneck. Architectures like Eyeriss implement large scratchpads within individual processing elements, while architectures like TPU v1 implement large systolic arrays and large monolithic caches. Several data movements in these prior works are therefore across long wires, and account for much of the energy consumption. In this work, we design a new wire-aware CNN accelerator, WAX, that employs a deep and distributed memory hierarchy, thus enabling data movement over short wires in the common case. An array of computational units, each with a small set of registers, is placed adjacent to a subarray 1 INTRODUCTION Several neural network accelerators have emerged in recent years, e.g., [9, 11, 12, 28, 38, 39]. Many of these accelerators expend significant energy fetching operands from various levels of the memory hierarchy. For example, the Eyeriss architecture and its row-stationary dataflow require non-trivial storage for scratchpads and registers per processing element (PE) to maximize reuse [11]. -

Efficient Management of Scratch-Pad Memories in Deep Learning Accelerators

Efficient Management of Scratch-Pad Memories in Deep Learning Accelerators Subhankar Pal∗, Swagath Venkataramani†, Viji Srinivasan†, Kailash Gopalakrishnan† ∗University of Michigan, Ann Arbor, MI †IBM TJ Watson Research Center, Yorktown Heights, NY Abstract: A prevalent challenge for Deep Learning (DL) accelerators is how they are programmed to sustain utilization without impacting end-user productivity. Little prior effort has been devoted to the effective management of their on-chip Scratch-Pad Memory (SPM) across the DL operations of a Deep Neural Network (DNN). This is especially critical due to trends in complex network topologies and the emergence of eager execution. This work demonstrates that there exists up to a 5.2× performance gap in DL inference to be bridged using SPM management, on a set of image, object and language networks. We propose OnSRAM, a novel SPM management framework

TABLE I. PERFORMANCE IMPROVEMENT USING INTER-NODE SPM MANAGEMENT.

| | AlexNet | VGG16 | GoogLeNet | SSD300 | ResNeXt | MobileNetV1 | SqueezeNet | PTB | Inception-v3 | Inception-v4 | ResNet-50 | Multi-Head Attn | GeoMean |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 SPM | 1.04 | 1.19 | 1.94 | 1.64 | 1.58 | 1.75 | 1.31 | 3.86 | 5.17 | 2.84 | 1.02 | 1.06 | 1.76 |
| 1-Step | 1.04 | 1.03 | 1.01 | 1.10 | 1.11 | 1.33 | 1.18 | 1.40 | 2.84 | 1.57 | 1.01 | 1.02 | 1.24 |

speedups of 12 ConvNets, LSTM and Transformer DNNs [18], [19], [21], [26]–[33] compared to the case when there is no SPM management, i.e. -

The Muscadine Grape (Vitis Rotundifolia Michx)

HS763 The Muscadine Grape (Vitis rotundifolia Michx)1 Peter C. Andersen, Ali Sarkhosh, Dustin Huff, and Jacque Breman2 Introduction The muscadine grape is native to the southeastern United States and was the first native grape species to be cultivated in North America (Figure 1). The natural range of muscadine grapes extends from Delaware to central Florida and occurs in all states along the Gulf Coast to east Texas. It also extends northward along the Mississippi River to Missouri. Muscadine grapes will perform well throughout Florida, although performance is poor in calcareous soils or in soils with very poor drainage. Most scientists divide the Vitis genus into two subgenera: Euvitis (the European, Vitis vinifera L. grapes and the American bunch grapes, Vitis labrusca L.) and the Muscadania grapes (muscadine grapes). There are three species within the Muscadania subgenera (Vitis munsoniana, Vitis popenoei and Vitis rotundifolia). Euvitis and Muscadania have somatic chromosome numbers of 38 and 40, respectively. Vines do best in deep, fertile soils, and they can often be found in adjacent riverbeds. Wild muscadine grapes are functionally dioecious due to incomplete stamen formation in female vines and incomplete pistil formation in male vines. from improved selections, and in fact, one that has been found in the Scuppernong River of North Carolina has been named ‘Scuppernong’. There are over 100 improved cultivars of muscadine grapes that vary in size from 1/4 to 1 ½ inches in diameter and 4 to 15 grams in weight. Skin color ranges from light bronze to pink to purple to black. Flesh is clear and translucent for all muscadine grape berries. -

Efficient Processing of Deep Neural Networks

Efficient Processing of Deep Neural Networks Vivienne Sze, Yu-Hsin Chen, Tien-Ju Yang, Joel Emer Massachusetts Institute of Technology Reference: V. Sze, Y.-H. Chen, T.-J. Yang, J. S. Emer, "Efficient Processing of Deep Neural Networks," Synthesis Lectures on Computer Architecture, Morgan & Claypool Publishers, 2020 For book updates, sign up for mailing list at http://mailman.mit.edu/mailman/listinfo/eems-news June 15, 2020 Abstract This book provides a structured treatment of the key principles and techniques for enabling efficient processing of deep neural networks (DNNs). DNNs are currently widely used for many artificial intelligence (AI) applications, including computer vision, speech recognition, and robotics. While DNNs deliver state-of-the-art accuracy on many AI tasks, it comes at the cost of high computational complexity. Therefore, techniques that enable efficient processing of deep neural networks to improve key metrics, such as energy-efficiency, throughput, and latency, without sacrificing accuracy or increasing hardware costs are critical to enabling the wide deployment of DNNs in AI systems. The book includes background on DNN processing; a description and taxonomy of hardware architectural approaches for designing DNN accelerators; key metrics for evaluating and comparing different designs; features of DNN processing that are amenable to hardware/algorithm co-design to improve energy efficiency and throughput; and opportunities for applying new technologies. Readers will find a structured introduction to the field as well as formalization and organization of key concepts from contemporary work that provide insights that may spark new ideas. Contents: Preface; I Understanding Deep Neural Networks; 1 Introduction; 1.1 Background on Deep Neural Networks -

Accelerate Scientific Deep Learning Models on Heterogeneous Computing Platform with FPGA

Accelerate Scientific Deep Learning Models on Heterogeneous Computing Platform with FPGA Chao Jiang (1,∗), David Ojika (1,5,∗∗), Sofia Vallecorsa (2,∗∗∗), Thorsten Kurth (3), Prabhat (4,∗∗∗∗), Bhavesh Patel (5,†), and Herman Lam (1,‡) (1) SHREC: NSF Center for Space, High-Performance, and Resilient Computing, University of Florida (2) CERN openlab (3) NVIDIA (4) National Energy Research Scientific Computing Center (5) Dell EMC Abstract. AI and deep learning are experiencing explosive growth in almost every domain involving analysis of big data. Deep learning using Deep Neural Networks (DNNs) has shown great promise for such scientific data analysis applications. However, traditional CPU-based sequential computing without special instructions can no longer meet the requirements of mission-critical applications, which are compute-intensive and require low latency and high throughput. Heterogeneous computing (HGC), with CPUs integrated with GPUs, FPGAs, and other science-targeted accelerators, offers unique capabilities to accelerate DNNs. Collaborating researchers at SHREC at the University of Florida, CERN Openlab, NERSC at Lawrence Berkeley National Lab, Dell EMC, and Intel are studying the application of heterogeneous computing (HGC) to scientific problems using DNN models. This paper focuses on the use of FPGAs to accelerate the inferencing stage of the HGC workflow. We present case studies and results in inferencing state-of-the-art DNN models for scientific data analysis, using Intel distribution of OpenVINO, running on an Intel Programmable Acceleration Card (PAC) equipped with an Arria 10 GX FPGA. Using the Intel Deep Learning Acceleration (DLA) development suite to optimize existing FPGA primitives and develop new ones, we were able to accelerate the scientific DNN models under study with a speedup from 2.46x to 9.59x for a single Arria 10 FPGA against a single core (single thread) of a server-class Skylake CPU. -

Sustainable Viticulture: Effects of Soil Management in Vitis Vinifera

agronomy Article Sustainable Viticulture: Effects of Soil Management in Vitis vinifera Eleonora Cataldo 1, Linda Salvi 1, Sofia Sbraci 1, Paolo Storchi 2 and Giovan Battista Mattii 1,* 1 Department of Agriculture, Food, Environment and Forestry (DAGRI), University of Florence, 50019 Sesto Fiorentino (FI), Italy; eleonora.cataldo@unifi.it (E.C.); linda.salvi@unifi.it (L.S.); sofia.sbraci@unifi.it (S.S.) 2 Council for Agricultural Research and Economics, Research Centre for Viticulture and Enology (CREA-VE), 52100 Arezzo, Italy * Correspondence: giovanbattista.mattii@unifi.it; Tel.: 390-554-574-043 Received: 19 October 2020; Accepted: 8 December 2020; Published: 11 December 2020 Abstract: Soil management in vineyards is of fundamental importance not only for the productivity and quality of grapes, both in biological and conventional management, but also for greater sustainability of the production. Conservative soil management techniques play an important role, compared to conventional tillage, in order to preserve biodiversity, to save soil fertility, and to keep vegetative-productive balance. Thus, it is necessary to evaluate long-term adaptation strategies to create a balance between the vine and the surrounding environment. This work sought to assess the effects of following different management practices on Vitis vinifera L. cv. Cabernet Sauvignon during 2017 and 2018 seasons: soil tillage (T), temporary cover cropping over all inter-rows (C), and mulching with plant residues every other row (M). The main physiological parameters of vines (leaf gas exchange, stem water potential, chlorophyll fluorescence, and indirect chlorophyll content) as well as qualitative and quantitative grape parameters (technological and phenolic analyses) were measured. -

Chemical Composition of Red Wines Made from Hybrid Grape and Common Grape (Vitis Vinifera L.) Cultivars

Proceedings of the Estonian Academy of Sciences, 2014, 63, 4, 444–453 doi: 10.3176/proc.2014.4.10 Available online at www.eap.ee/proceedings Chemical composition of red wines made from hybrid grape and common grape (Vitis vinifera L.) cultivars Priit Pedastsaar (a,*), Merike Vaher (b), Kati Helmja (b), Maria Kulp (b), Mihkel Kaljurand (b), Kadri Karp (c), Ain Raal (d), Vaios Karathanos (e), and Tõnu Püssa (a) (a) Department of Food Hygiene, Estonian University of Life Sciences, Kreutzwaldi 58A, 51014 Tartu, Estonia (b) Department of Chemistry, Tallinn University of Technology, Akadeemia tee 15, 12618 Tallinn, Estonia (c) Department of Horticulture, Estonian University of Life Sciences, Kreutzwaldi 1, 51014 Tartu, Estonia (d) Department of Pharmacy, University of Tartu, Nooruse 1, 50411 Tartu, Estonia (e) Department of Dietetics and Nutrition, Harokopio University, 70 El. Venizelou Ave., Athens, Greece Received 21 June 2013, revised 8 May 2014, accepted 23 May 2014, available online 20 November 2014 Abstract. Since the formulation of the “French paradox”, red grape wines are generally considered to be health-promoting products rather than culpable alcoholic beverages. The total wine production, totalling an equivalent of 30 billion 750 mL bottles in 2009, only verifies the fact that global demand is increasing and that the polyphenols present in wines are accounting for a significant proportion of the daily antioxidant intake of the general population. Both statements justify the interest of new regions to be self-sufficient in the wine production. Novel cold tolerant hybrid grape varieties also make it possible to produce wines in regions where winter temperatures fall below –30 °C and the yearly sum of active temperatures does not exceed 1750 °C. -

In Vitro Clonal Propagation of Grapevine (Vitis Spp.)

In vitro clonal Propagation of grapevine (Vitis spp.) By Magdoleen Gamar Eldeen Osman B.Sc. Agriculture Science (Honours) Sudan University of Science and Technology Thesis submitted in partial fulfillment of the requirements for the degree of master of science in Agric Supervisor: Prof. Abd El Gaffar Al Hag Said Co-supervisor: Dr. Mustafa M. El Balla Department of Horticulture Faculty of Agriculture University of Khartoum October 2004 TABLE OF CONTENTS: Dedication; Acknowledgment; Contents; Abstract; Arabic Abstract; 1. Introduction; 2. Literature review; 2.1 Origin and distribution of grape (Vitis sp.); 2.2 Botany; 2.3 Soil and Location; 2.4 Yield and storage; 2.5 Pests and diseases; 2.6 World production; 2.7 Propagation methods; 2.7.1 Traditional propagation; 2.7.2 Propagation by tissue culture; 2.7.2.1 The ex-plant; 2.7.2.2 Media composition; 2.7.2.2.1 Inorganic nutrients; 2.7.2.2.2 Organic nutrients; 2.7.2.2.2.1 Carbohydrates; 2.7.2.2.2.2 Growth regulators; 3. Materials and methods; 3.1 Plant material; 3.2 Sterilization; 3.3 Preparation of ex-plants; 3.4 Basal nutrient medium; 3.5 Culture incubation; 3.6 Experimentation; 3.6.1 Effect of MS salts mix; 3.6.2 Effect of supplementary phosphate; 3.6.3 Effect of ex-plant; 3.6.4 Growth regulators; 3.6.4.1 Combined effect of kinetin and naphthaleneacetic acid (NAA) on different growth parameters; 3.6.4.2 Induction of roots -

Exploring the Programmability for Deep Learning Processors: from Architecture to Tensorization

Exploring the Programmability for Deep Learning Processors: from Architecture to Tensorization Chixiao Chen, Huwan Peng, Xindi Liu, Hongwei Ding and C.-J. Richard Shi Department of Electrical Engineering, University of Washington, Seattle, WA, 98195 {cxchen2,hwpeng,xindil,cjshi}@uw.edu ABSTRACT This paper presents an instruction and Fabric Programmable Neuron Array (iFPNA) architecture, its 28nm CMOS chip prototype, and a compiler for the acceleration of a variety of deep learning neural networks (DNNs) including convolutional neural networks (CNNs), recurrent neural networks (RNNs), and fully connected (FC) networks on chip. The iFPNA architecture combines instruction-level programmability as in an Instruction Set Architecture (ISA) with logic-level reconfigurability as in a Field-Programmable Gate Array (FPGA) in a sliced structure for scalability. Four data flow models, namely weight stationary, input stationary, row stationary and tunnel stationary, are described as the abstraction of various DNN data and computational dependence. The iFPNA compiler parti Custom tensorization explores problem-specific computing data flows for maximizing energy efficiency and/or throughput. For example, Eyeriss proposed a row-stationary (RS) data flow to reduce memory access by exploiting feature map reuse [1]. However, row stationary is only effective for convolutional layers with small strides, and shows poor performance on the Alexnet CNN CONV1 layer and RNNs. Systolic arrays, on the other hand, feature both efficiency and high throughput for matrix-matrix computation [4]. But they take less advantage of convolutional reuse, thus require more data transfer bandwidth. Fixed data flow schemes in deep learning processors limit their coverage of advanced algorithms. A flexible data flow engine is desired. -

Deep Learning Inference in Facebook Data Centers: Characterization, Performance Optimizations and Hardware Implications

Deep Learning Inference in Facebook Data Centers: Characterization, Performance Optimizations and Hardware Implications Jongsoo Park∗, Maxim Naumov†, Protonu Basu, Summer Deng, Aravind Kalaiah, Daya Khudia, James Law, Parth Malani, Andrey Malevich, Satish Nadathur, Juan Pino, Martin Schatz, Alexander Sidorov, Viswanath Sivakumar, Andrew Tulloch, Xiaodong Wang, Yiming Wu, Hector Yuen, Utku Diril, Dmytro Dzhulgakov, Kim Hazelwood, Bill Jia, Yangqing Jia, Lin Qiao, Vijay Rao, Nadav Rotem, Sungjoo Yoo and Mikhail Smelyanskiy Facebook, 1 Hacker Way, Menlo Park, CA Abstract The application of deep learning techniques resulted in remarkable improvement of machine learning models. In this paper we provide detailed characterizations of deep learning models used in many Facebook social network services. We present computational characteristics of our models, describe high-performance optimizations targeting existing systems, point out their limitations and make suggestions for the future general-purpose/accelerated inference hardware. Also, we highlight the need for better co-design of algorithms, numerics and computing platforms to address the challenges of workloads often run in data centers. 1. Introduction Machine learning (ML), deep learning (DL) in particular, is used across many social network services. The high quality visual, speech, and language DL models must scale to billions of users of Facebook’s social network services [25]. Figure 1: Server demand for DL inference across data centers. In order to perform a characterization of the DL models and address aforementioned concerns, we had direct access to the current systems as well as applications projected to drive them in the future. Many inference workloads need flexibility, availability and low latency provided by CPUs. Therefore, our optimizations were mostly targeted for these general purpose processors. However, our characterization suggests the following general requirements for new DL hardware designs: