Key Components Documented for Output of D3.5, e.g. BHL-Europe Portal, OCR Demonstrators, Distributed Storage Model, etc.

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

-

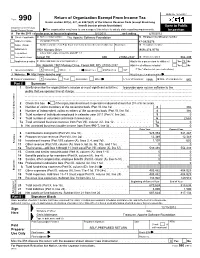

Return of Organization Exempt from Income

OMB No. 1545-0047. Form 990: Return of Organization Exempt From Income Tax, under section 501(c), 527, or 4947(a)(1) of the Internal Revenue Code (except black lung benefit trust or private foundation). Open to Public Inspection. Department of the Treasury, Internal Revenue Service. The organization may have to use a copy of this return to satisfy state reporting requirements. A For the 2011 calendar year, or tax year beginning 5/1/2011 and ending 4/30/2012. B Check if applicable: Address change, Name change, Initial return, Terminated, Amended return, Application pending. C Name of organization: The Apache Software Foundation. Doing Business As and Room/suite: no entry shown. Number and street (or P.O. box if mail is not delivered to street address): 1901 Munsey Drive. City or town, state or country, and ZIP + 4: Forest Hill MD 21050-2747. D Employer identification number: 47-0825376. E Telephone number: (909) 374-9776. F Name and address of principal officer: Jim Jagielski, 1901 Munsey Drive, Forest Hill, MD 21050-2747. G Gross receipts: $554,439. H(a) Is this a group return for affiliates? Yes / X No. H(b) Are all affiliates included? Yes / No. If "No," attach a list (see instructions). H(c) Group exemption number. I Tax-exempt status: X 501(c)(3); 501(c) ( ) (insert no.); 4947(a)(1) or 527. J Website: http://www.apache.org/ K Form of organization: X Corporation; Trust; Association; Other. L Year of formation: 1999. M State of legal domicile: MD. Part I Summary. 1 Briefly describe the organization's mission or most significant activities: to provide open source software to the public that we sponsor free of charge. 2 Check this box if the organization discontinued its operations or disposed of more than 25% of its net assets.

An Unobtrusive, Scalable Approach to Large Scale Software License Analysis

DRAT: An Unobtrusive, Scalable Approach to Large Scale Software License Analysis. Chris A. Mattmann1,2, Ji-Hyun Oh1,2, Tyler Palsulich1*, Lewis John McGibbney1, Yolanda Gil2,3, Varun Ratnakar3. 1Jet Propulsion Laboratory, California Institute of Technology, Pasadena, CA 91109 USA; 2Computer Science Department, University of Southern California, Los Angeles, CA 90089 USA; 3USC Information Sciences Institute, University of Southern California, Marina Del Rey, CA. [email protected] {mattmann,jihyuno}@usc.edu {gil,varunr}@isi.edu Abstract: The Apache Release Audit Tool (RAT) performs open source license auditing and checking; however, RAT fails to successfully audit today's large code bases. Being a natural language processing (NLP) tool and a crawler, RAT marches through a code base, but uses rudimentary black lists and white lists to navigate source code repositories, and often does a poor job of identifying source code versus binary files. In addition, RAT produces no incremental output, and thus on code bases that themselves are "Big Data", RAT could run for, e.g., a month and still not provide any status report. We introduce Distributed "RAT" or the Distributed Release Audit Tool (DRAT). ... (OSI) for complying with open source software definition; however, there exist slight differences among these licenses [2]. For instance, GPL is a "copyleft" license that only allows derivative works under the original license, whereas the MIT license is a "permissive" license that grants the right to sublicense the code under any kind of license [2]. This difference could affect the architectural design of the software. Furthermore, circumstances are more complicated when people publish software under multiple licenses. Therefore, an automated tool for verifying software licenses in ...

Avaliando a Dívida Técnica Em Produtos De Código Aberto Por Meio De Estudos Experimentais

Universidade Federal de Goiás, Instituto de Informática. Igor Rodrigues Vieira. Avaliando a dívida técnica em produtos de código aberto por meio de estudos experimentais (Evaluating technical debt in open source products through experimental studies). Goiânia, 2014. Dissertation presented to the Graduate Program of the Instituto de Informática of the Universidade Federal de Goiás, in partial fulfillment of the requirements for the degree of Master in Computer Science. Area of concentration: Computer Science. Advisor: Prof. Dr. Auri Marcelo Rizzo Vincenzi. Catalog record generated automatically from data provided by the author, under the guidance of Sibi/UFG. Vieira, Igor Rodrigues. Avaliando a dívida técnica em produtos de código aberto por meio de estudos experimentais [manuscript] / Igor Rodrigues Vieira. - 2014. 100 leaves: ill. Advisor: Prof. Dr. Auri Marcelo Rizzo Vincenzi. Dissertation (Master's) - Universidade Federal de Goiás, Instituto de Informática (INF), Graduate Program in Computer Science, Goiânia, 2014. Bibliography. Appendix. Includes algorithms, list of figures, list of tables. 1. Technical debt. 2. Software quality. 3. Static analysis. 4. Open source product. 5. Experimental study. I. Vincenzi, Auri Marcelo Rizzo, advisor. II. Title. All rights reserved. Total or partial reproduction of this work without authorization from the university, the author and the advisor is prohibited. Igor Rodrigues Vieira holds a degree in Information Systems from the Universidade Estadual de Goiás (UEG), with a lato sensu postgraduate degree in Web Application Development with Rich Interfaces from the Universidade Federal de Goiás (UFG). He was Coordinator of the Ombudsman's Office (Ouvidoria) of UFG and is currently an Information Technology Analyst at the Centro de Recursos Computacionais (CERCOMP/UFG).

Mahasen: Distributed Storage Resource Broker

Mahasen: Distributed Storage Resource Broker. K. Perera, T. Kishanthan, H. Perera, D. Madola, Malaka Walpola, Srinath Perera. To cite this version: K. Perera, T. Kishanthan, H. Perera, D. Madola, Malaka Walpola, et al. Mahasen: Distributed Storage Resource Broker. 10th International Conference on Network and Parallel Computing (NPC), Sep 2013, Guiyang, China. pp.380-392, 10.1007/978-3-642-40820-5_32. hal-01513774. HAL Id: hal-01513774, https://hal.inria.fr/hal-01513774. Submitted on 25 Apr 2017. HAL is a multi-disciplinary open access archive for the deposit and dissemination of scientific research documents, whether they are published or not. The documents may come from teaching and research institutions in France or abroad, or from public or private research centers. Distributed under a Creative Commons Attribution 4.0 International License. K.D.A.K.S.Perera1, T Kishanthan1, H.A.S.Perera1, D.T.H.V.Madola1, Malaka Walpola1, Srinath Perera2. 1 Computer Science and Engineering Department, University Of Moratuwa, Sri Lanka. {shelanrc, kshanth2101, ashansa.perera, hirunimadola, malaka.uom}@gmail.com 2 WSO2 Lanka, No 59, Flower Road, Colombo 07, Sri Lanka [email protected] Abstract. Modern day systems are facing an avalanche of data, and they are being forced to handle more and more data intensive use cases. These data come in many forms and shapes: Sensors (RFID, Near Field Communication, Weather Sensors), transaction logs, Web, social networks etc.

LNCS 8147, Pp

Mahasen: Distributed Storage Resource Broker. K.D.A.K.S. Perera1, T. Kishanthan1, H.A.S. Perera1, D.T.H.V. Madola1, Malaka Walpola1, and Srinath Perera2. 1 Computer Science and Engineering Department, University Of Moratuwa, Sri Lanka {shelanrc,kshanth2101,ashansa.perera,hirunimadola,malaka.uom}@gmail.com 2 WSO2 Lanka, No. 59, Flower Road, Colombo 07, Sri Lanka [email protected] Abstract. Modern day systems are facing an avalanche of data, and they are being forced to handle more and more data intensive use cases. These data come in many forms and shapes: Sensors (RFID, Near Field Communication, Weather Sensors), transaction logs, Web, social networks etc. As an example, weather sensors across the world generate a large amount of data throughout the year. Handling these and similar data requires scalable, efficient, reliable and very large storage with support for efficient metadata based searching. This paper presents Mahasen, a highly scalable storage for high volume data intensive applications built on top of a peer-to-peer layer. In addition to scalable storage, Mahasen also supports efficient searching, built on top of the Distributed Hash Table (DHT). 1 Introduction. Currently the United States collects weather data from many sources like Doppler readers deployed across the country, aircraft, mobile towers and balloons etc. These sensors keep generating a sizable amount of data. Processing them efficiently as needed is pushing our understanding about large-scale data processing to its limits. Among the many challenges data poses, a prominent one is storing the data and indexing them so that scientists and researchers can come and ask for a specific type of data collected at a given time and in a given region.

Full-Graph-Limited-Mvn-Deps.Pdf

org.jboss.cl.jboss-cl-2.0.9.GA org.jboss.cl.jboss-cl-parent-2.2.1.GA org.jboss.cl.jboss-classloader-N/A org.jboss.cl.jboss-classloading-vfs-N/A org.jboss.cl.jboss-classloading-N/A org.primefaces.extensions.master-pom-1.0.0 org.sonatype.mercury.mercury-mp3-1.0-alpha-1 org.primefaces.themes.overcast-${primefaces.theme.version} org.primefaces.themes.dark-hive-${primefaces.theme.version} org.primefaces.themes.humanity-${primefaces.theme.version} org.primefaces.themes.le-frog-${primefaces.theme.version} org.primefaces.themes.south-street-${primefaces.theme.version} org.primefaces.themes.sunny-${primefaces.theme.version} org.primefaces.themes.hot-sneaks-${primefaces.theme.version} org.primefaces.themes.cupertino-${primefaces.theme.version} org.primefaces.themes.trontastic-${primefaces.theme.version} org.primefaces.themes.excite-bike-${primefaces.theme.version} org.apache.maven.mercury.mercury-external-N/A org.primefaces.themes.redmond-${primefaces.theme.version} org.primefaces.themes.afterwork-${primefaces.theme.version} org.primefaces.themes.glass-x-${primefaces.theme.version} org.primefaces.themes.home-${primefaces.theme.version} org.primefaces.themes.black-tie-${primefaces.theme.version} org.primefaces.themes.eggplant-${primefaces.theme.version} org.apache.maven.mercury.mercury-repo-remote-m2-N/A org.apache.maven.mercury.mercury-md-sat-N/A org.primefaces.themes.ui-lightness-${primefaces.theme.version} org.primefaces.themes.midnight-${primefaces.theme.version} org.primefaces.themes.mint-choc-${primefaces.theme.version} org.primefaces.themes.afternoon-${primefaces.theme.version} org.primefaces.themes.dot-luv-${primefaces.theme.version} org.primefaces.themes.smoothness-${primefaces.theme.version} org.primefaces.themes.swanky-purse-${primefaces.theme.version}

Chris Mattmann

Chris Mattmann, Associate Chief Technologist, NASA JPL. Chris Mattmann is the Associate Chief Technologist and Innovation Officer in the Office of the Chief Technology and Innovation. Mattmann manages the IT Advanced Research and Open Source Projects Office and the NSF and Open Source Applications Office. Mattmann was formerly a member of the Engineering Administrative Office and formerly Chief Architect of the Instrument and Science Data Systems Section at NASA JPL, with responsibility for influencing science data system designs and facilitating the infusion of new technologies to meet our future challenges. Dr. Mattmann is also JPL's first Principal Scientist in the area of Data Science. He has over 18 years of experience at JPL and has conceived, realized and delivered the architecture for the next generation of reusable science data processing systems for NASA's Orbiting Carbon Observatory, NPP Sounder PEATE, and the Soil Moisture Active Passive (SMAP) Earth science missions. Mattmann's work has been funded by NASA, DARPA, DHS, NSF, NIH, NLM and by private industry. Mattmann was the first Vice President (VP) of Apache OODT (Object Oriented Data Technology), the first NASA project to enter the Apache Software Foundation (ASF), and he led the project's transition from JPL to the ASF. He contributes to open source as a Director at the Apache Software Foundation, where he was one of the initial contributors to Apache Nutch, the predecessor to the Apache Hadoop project, as a member of its project management committee. Mattmann is the progenitor of the Apache Tika framework, the digital "babel fish" and de-facto content analysis and detection framework that exists.

Programming Hive

Programming Hive. Edward Capriolo, Dean Wampler, and Jason Rutherglen. Beijing • Cambridge • Farnham • Köln • Sebastopol • Tokyo. Programming Hive by Edward Capriolo, Dean Wampler, and Jason Rutherglen. Copyright © 2012 Edward Capriolo, Aspect Research Associates, and Jason Rutherglen. All rights reserved. Printed in the United States of America. Published by O’Reilly Media, Inc., 1005 Gravenstein Highway North, Sebastopol, CA 95472. O’Reilly books may be purchased for educational, business, or sales promotional use. Online editions are also available for most titles (http://my.safaribooksonline.com). For more information, contact our corporate/institutional sales department: 800-998-9938 or [email protected]. Editors: Mike Loukides and Courtney Nash. Production Editors: Iris Febres and Rachel Steely. Proofreaders: Stacie Arellano and Kiel Van Horn. Indexer: Bob Pfahler. Cover Designer: Karen Montgomery. Interior Designer: David Futato. Illustrator: Rebecca Demarest. October 2012: First Edition. Revision History for the First Edition: 2012-09-17 First release. See http://oreilly.com/catalog/errata.csp?isbn=9781449319335 for release details. Nutshell Handbook, the Nutshell Handbook logo, and the O’Reilly logo are registered trademarks of O’Reilly Media, Inc. Programming Hive, the image of a hornet’s hive, and related trade dress are trademarks of O’Reilly Media, Inc. Many of the designations used by manufacturers and sellers to distinguish their products are claimed as trademarks. Where those designations appear in this book, and O’Reilly Media, Inc., was aware of a trademark claim, the designations have been printed in caps or initial caps. While every precaution has been taken in the preparation of this book, the publisher and authors assume no responsibility for errors or omissions, or for damages resulting from the use of the information contained herein.

Big Data Processing on Arbitrarily Distributed Dataset

Big Data Processing on Arbitrarily Distributed Dataset by Dongyao Wu. A thesis submitted in partial fulfillment of the requirements for the degree of Doctor of Philosophy, School of Computer Science and Engineering, Faculty of Engineering. Thursday, 1st June, 2017. All rights reserved. This work may not be reproduced in whole or in part, by photocopy or other means, without the permission of the author. © 2017 by Dongyao Wu. The University of New South Wales Thesis/Dissertation Sheet. Surname or Family name: Wu. First name: Dongyao. Other name/s: Abbreviation for degree as given in the University calendar: PhD. School: School of Computer Science and Engineering. Faculty: Faculty of Engineering. Title: Big Data Processing on Arbitrarily Distributed Dataset. Abstract (350 words maximum): Over the past years, frameworks such as MapReduce and Spark have been introduced to ease the task of developing big data programs and applications. These frameworks significantly reduce the complexity of developing big data programs and applications. However, in reality, many real-world scenarios require pipelining and integration of multiple big data jobs. As the big data pipelines and applications become more and more complicated, it is almost impossible to manually optimize the performance for each component, not to mention the whole pipeline/application. At the same time, there are also increasing requirements to facilitate interaction, composition and integration for big data analytics applications in continuously evolving, integrating and delivering scenarios. In addition, with the emergence and development of cloud computing, mobile computing and the Internet of Things, data are increasingly collected and stored in highly distributed infrastructures (e.g.

Code Smell Prediction Employing Machine Learning Meets Emerging Java Language Constructs

Appendix to the paper "Code smell prediction employing machine learning meets emerging Java language constructs". Hanna Grodzicka, Michał Kawa, Zofia Łakomiak, Arkadiusz Ziobrowski, Lech Madeyski (B). The Appendix includes two tables containing the dataset used in the paper "Code smell prediction employing machine learning meets emerging Java language constructs". The first table contains information about 792 projects selected for R package reproducer [Madeyski and Kitchenham(2019)]. Projects were the base dataset for creating the dataset used in the study (Table I). The second table contains information about 281 projects filtered by Java version from build tool Maven (Table II) which were directly used in the paper.

TABLE I: Base projects used to create the new dataset

| # | Organisation | Project name | GitHub link | Commit hash | Build tool | Java version |
|---|---|---|---|---|---|---|
| 1 | adobe | aem-core-wcm-components | www.github.com/adobe/aem-core-wcm-components | 1d1f1d70844c9e07cd694f028e87f85d926aba94 | other or lack of | unknown |
| 2 | adobe | S3Mock | www.github.com/adobe/S3Mock | 5aa299c2b6d0f0fd00f8d03fda560502270afb82 | MAVEN | 8 |
| 3 | alexa | alexa-skills-kit-sdk-for-java | www.github.com/alexa/alexa-skills-kit-sdk-for-java | bf1e9ccc50d1f3f8408f887f70197ee288fd4bd9 | MAVEN | 8 |
| 4 | alibaba | ARouter | www.github.com/alibaba/ARouter | 93b328569bbdbf75e4aa87f0ecf48c69600591b2 | GRADLE | unknown |
| 5 | alibaba | atlas | www.github.com/alibaba/atlas | e8c7b3f1ff14b2a1df64321c6992b796cae7d732 | GRADLE | unknown |
| 6 | alibaba | canal | www.github.com/alibaba/canal | 08167c95c767fd3c9879584c0230820a8476a7a7 | MAVEN | 7 |
| 7 | alibaba | cobar | www.github.com/alibaba/ | | | |

TestSmellDescriber: Enabling Developers’ Awareness on Test Quality with Test Smell Summaries

Bachelor Thesis, January 31, 2018. TestSmellDescriber: Enabling Developers’ Awareness on Test Quality with Test Smell Summaries. Ivan Taraca of Pfullendorf, Germany (13-751-896), supervised by Prof. Dr. Harald C. Gall and Dr. Sebastiano Panichella, software evolution & architecture lab. Author: Ivan Taraca, [email protected] URL: http://bit.ly/2DUiZrC Project period: 20.10.2018 - 31.01.2018. Software Evolution & Architecture Lab, Department of Informatics, University of Zurich. Acknowledgements: First of all, I would like to thank Dr. Harald Gall for giving me the opportunity to write this thesis at the Software Evolution & Architecture Lab. Special thanks go out to Dr. Sebastiano Panichella for his instructions, guidance and help during the making of this thesis, without whom this would not have been possible. I would also like to express my gratitude to Dr. Fabio Polomba, Dr. Yann-Gaël Guéhéneuc and Dr. Nikolaos Tsantalis for providing me access to their research and always being available for questions. Last, but not least, I want to thank my parents, sisters and nephews for the support and love they’ve given all those years. Abstract: With the importance of software in today’s society, malfunctioning software can lead not only to disruption of our day-to-day lives, but also to large monetary damages. A lot of time and effort goes into the development of test suites to ensure the quality and accuracy of software. But how do we elevate the quality of test code? This thesis presents TestSmellDescriber, a tool with the ability to generate descriptions detailing potential problems in test cases, which are collected by conducting a Test Smell analysis.

CHAPTER ONE INTRODUCTION 1.1 Background of the Study Advances

CHAPTER ONE INTRODUCTION 1.1 Background of the Study. Advances in ICT have made data more voluminous and multifarious, and data is being transferred at high speed (Sergio, 2015). Cloud applications like Yahoo weather, Facebook photo gallery and Google search index are changing the IT landscape in a profound way (Stone et al., 2008; Barroso et al., 2003). Reasons for these trends include scientific organizations solving big problems related to high performance computing workloads, diverse public services being digitized and new resources used. Mobile devices, global positioning systems, sensors, social media, medical imaging, financial transaction logs and many others are all sources of massive data, generating large sets of complex data (Sergio, 2015). These applications are evolving to be data-intensive, processing very large volumes of data, and hence require dynamically scalable, virtualized resources to handle them. Large firms like Google, Amazon, IBM, Microsoft and Apple are processing vast amounts of data (Dominique, 2015). An International Data Corporation (IDC) survey in 2011 estimated the total worldwide data size, which it called the digital data universe, at 1.8 zettabytes (ZB) (Dominique, 2015). IBM observed that about 2.5 quintillion bytes of data are created each day and that about 90% of the data in the world was created in the last two years (IBM, 2012). This is obviously large. An analysis given by Douglas (2012) showed that data generated from the earliest starting point until 2003 represented close to 5 exabytes and rose to 2.7 zettabytes as at 2012 (Douglas, 2012). The type of data showing rapid increase is unstructured data (Nawsher et al., 2014).