Von Neumann Computers 1 Introduction

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

PIPELINING and ASSOCIATED TIMING ISSUES Introduction: While

PIPELINING AND ASSOCIATED TIMING ISSUES Introduction: While studying sequential circuits, we studied about Latches and Flip Flops. While Latches formed the heart of a Flip Flop, we have explored the use of Flip Flops in applications like counters, shift registers, sequence detectors, sequence generators and design of Finite State machines. Another important application of latches and flip flops is in pipelining combinational/algebraic operation. To understand what is pipelining consider the following example. Let us take a simple calculation which has three operations to be performed viz. 1. add a and b to get (a+b), 2. get magnitude of (a+b) and 3. evaluate log |(a + b)|. Each operation would consume a finite period of time. Let us assume that each operation consumes 40 nsec., 35 nsec. and 60 nsec. respectively. The process can be represented pictorially as in Fig. 1. Consider a situation when we need to carry this out for a set of 100 such pairs. In a normal course when we do it one by one it would take a total of 100 * 135 = 13,500 nsec. We can however reduce this time by the realization that the whole process is a sequential process. Let the values to be evaluated be a1 to a100 and the corresponding values to be added be b1 to b100. Since the operations are sequential, we can first evaluate (a1 + b1) while the value |(a1 + b1)| is being evaluated the unit evaluating the sum is dormant and we can use it to evaluate (a2 + b2) giving us both |(a1 + b1)| and (a2 + b2) at the end of another evaluation period. -

2.5 Classification of Parallel Computers

52 // Architectures 2.5 Classification of Parallel Computers 2.5 Classification of Parallel Computers 2.5.1 Granularity In parallel computing, granularity means the amount of computation in relation to communication or synchronisation Periods of computation are typically separated from periods of communication by synchronization events. • fine level (same operations with different data) ◦ vector processors ◦ instruction level parallelism ◦ fine-grain parallelism: – Relatively small amounts of computational work are done between communication events – Low computation to communication ratio – Facilitates load balancing 53 // Architectures 2.5 Classification of Parallel Computers – Implies high communication overhead and less opportunity for per- formance enhancement – If granularity is too fine it is possible that the overhead required for communications and synchronization between tasks takes longer than the computation. • operation level (different operations simultaneously) • problem level (independent subtasks) ◦ coarse-grain parallelism: – Relatively large amounts of computational work are done between communication/synchronization events – High computation to communication ratio – Implies more opportunity for performance increase – Harder to load balance efficiently 54 // Architectures 2.5 Classification of Parallel Computers 2.5.2 Hardware: Pipelining (was used in supercomputers, e.g. Cray-1) In N elements in pipeline and for 8 element L clock cycles =) for calculation it would take L + N cycles; without pipeline L ∗ N cycles Example of good code for pipelineing: §doi =1 ,k ¤ z ( i ) =x ( i ) +y ( i ) end do ¦ 55 // Architectures 2.5 Classification of Parallel Computers Vector processors, fast vector operations (operations on arrays). Previous example good also for vector processor (vector addition) , but, e.g. recursion – hard to optimise for vector processors Example: IntelMMX – simple vector processor. -

Computer Architectures

Computer Architectures Central Processing Unit (CPU) Pavel Píša, Michal Štepanovský, Miroslav Šnorek The lecture is based on A0B36APO lecture. Some parts are inspired by the book Paterson, D., Henessy, V.: Computer Organization and Design, The HW/SW Interface. Elsevier, ISBN: 978-0-12-370606-5 and it is used with authors' permission. Czech Technical University in Prague, Faculty of Electrical Engineering English version partially supported by: European Social Fund Prague & EU: We invests in your future. AE0B36APO Computer Architectures Ver.1.10 1 Computer based on von Neumann's concept ● Control unit Processor/microprocessor ● ALU von Neumann architecture uses common ● Memory memory, whereas Harvard architecture uses separate program and data memories ● Input ● Output Input/output subsystem The control unit is responsible for control of the operation processing and sequencing. It consists of: ● registers – they hold intermediate and programmer visible state ● control logic circuits which represents core of the control unit (CU) AE0B36APO Computer Architectures 2 The most important registers of the control unit ● PC (Program Counter) holds address of a recent or next instruction to be processed ● IR (Instruction Register) holds the machine instruction read from memory ● Another usually present registers ● General purpose registers (GPRs) may be divided to address and data or (partially) specialized registers ● SP (Stack Pointer) – points to the top of the stack; (The stack is usually used to store local variables and subroutine return addresses) ● PSW (Program Status Word) ● IM (Interrupt Mask) ● Optional Floating point (FPRs) and vector/multimedia regs. AE0B36APO Computer Architectures 3 The main instruction cycle of the CPU 1. Initial setup/reset – set initial PC value, PSW, etc. -

Early Stored Program Computers

Stored Program Computers Thomas J. Bergin Computing History Museum American University 7/9/2012 1 Early Thoughts about Stored Programming • January 1944 Moore School team thinks of better ways to do things; leverages delay line memories from War research • September 1944 John von Neumann visits project – Goldstine’s meeting at Aberdeen Train Station • October 1944 Army extends the ENIAC contract research on EDVAC stored-program concept • Spring 1945 ENIAC working well • June 1945 First Draft of a Report on the EDVAC 7/9/2012 2 First Draft Report (June 1945) • John von Neumann prepares (?) a report on the EDVAC which identifies how the machine could be programmed (unfinished very rough draft) – academic: publish for the good of science – engineers: patents, patents, patents • von Neumann never repudiates the myth that he wrote it; most members of the ENIAC team contribute ideas; Goldstine note about “bashing” summer7/9/2012 letters together 3 • 1.0 Definitions – The considerations which follow deal with the structure of a very high speed automatic digital computing system, and in particular with its logical control…. – The instructions which govern this operation must be given to the device in absolutely exhaustive detail. They include all numerical information which is required to solve the problem…. – Once these instructions are given to the device, it must be be able to carry them out completely and without any need for further intelligent human intervention…. • 2.0 Main Subdivision of the System – First: since the device is a computor, it will have to perform the elementary operations of arithmetics…. – Second: the logical control of the device is the proper sequencing of its operations (by…a control organ. -

Microprocessor Architecture

EECE416 Microcomputer Fundamentals Microprocessor Architecture Dr. Charles Kim Howard University 1 Computer Architecture Computer System CPU (with PC, Register, SR) + Memory 2 Computer Architecture •ALU (Arithmetic Logic Unit) •Binary Full Adder 3 Microprocessor Bus 4 Architecture by CPU+MEM organization Princeton (or von Neumann) Architecture MEM contains both Instruction and Data Harvard Architecture Data MEM and Instruction MEM Higher Performance Better for DSP Higher MEM Bandwidth 5 Princeton Architecture 1.Step (A): The address for the instruction to be next executed is applied (Step (B): The controller "decodes" the instruction 3.Step (C): Following completion of the instruction, the controller provides the address, to the memory unit, at which the data result generated by the operation will be stored. 6 Harvard Architecture 7 Internal Memory (“register”) External memory access is Very slow For quicker retrieval and storage Internal registers 8 Architecture by Instructions and their Executions CISC (Complex Instruction Set Computer) Variety of instructions for complex tasks Instructions of varying length RISC (Reduced Instruction Set Computer) Fewer and simpler instructions High performance microprocessors Pipelined instruction execution (several instructions are executed in parallel) 9 CISC Architecture of prior to mid-1980’s IBM390, Motorola 680x0, Intel80x86 Basic Fetch-Execute sequence to support a large number of complex instructions Complex decoding procedures Complex control unit One instruction achieves a complex task 10 -

What Do We Mean by Architecture?

Embedded programming: Comparing the performance and development workflows for architectures Embedded programming week FABLAB BRIGHTON 2018 What do we mean by architecture? The architecture of microprocessors and microcontrollers are classified based on the way memory is allocated (memory architecture). There are two main ways of doing this: Von Neumann architecture (also known as Princeton) Von Neumann uses a single unified cache (i.e. the same memory) for both the code (instructions) and the data itself, Under pure von Neumann architecture the CPU can be either reading an instruction or reading/writing data from/to the memory. Both cannot occur at the same time since the instructions and data use the same bus system. Harvard architecture Harvard architecture uses different memory allocations for the code (instructions) and the data, allowing it to be able to read instructions and perform data memory access simultaneously. The best performance is achieved when both instructions and data are supplied by their own caches, with no need to access external memory at all. How does this relate to microcontrollers/microprocessors? We found this page to be a good introduction to the topic of microcontrollers and microprocessors, the architectures they use and the difference between some of the common types. First though, it’s worth looking at the difference between a microprocessor and a microcontroller. Microprocessors (e.g. ARM) generally consist of just the Central Processing Unit (CPU), which performs all the instructions in a computer program, including arithmetic, logic, control and input/output operations. Microcontrollers (e.g. AVR, PIC or 8051) contain one or more CPUs with RAM, ROM and programmable input/output peripherals. -

Instruction Pipelining (1 of 7)

Chapter 5 A Closer Look at Instruction Set Architectures Objectives • Understand the factors involved in instruction set architecture design. • Gain familiarity with memory addressing modes. • Understand the concepts of instruction- level pipelining and its affect upon execution performance. 5.1 Introduction • This chapter builds upon the ideas in Chapter 4. • We present a detailed look at different instruction formats, operand types, and memory access methods. • We will see the interrelation between machine organization and instruction formats. • This leads to a deeper understanding of computer architecture in general. 5.2 Instruction Formats (1 of 31) • Instruction sets are differentiated by the following: – Number of bits per instruction. – Stack-based or register-based. – Number of explicit operands per instruction. – Operand location. – Types of operations. – Type and size of operands. 5.2 Instruction Formats (2 of 31) • Instruction set architectures are measured according to: – Main memory space occupied by a program. – Instruction complexity. – Instruction length (in bits). – Total number of instructions in the instruction set. 5.2 Instruction Formats (3 of 31) • In designing an instruction set, consideration is given to: – Instruction length. • Whether short, long, or variable. – Number of operands. – Number of addressable registers. – Memory organization. • Whether byte- or word addressable. – Addressing modes. • Choose any or all: direct, indirect or indexed. 5.2 Instruction Formats (4 of 31) • Byte ordering, or endianness, is another major architectural consideration. • If we have a two-byte integer, the integer may be stored so that the least significant byte is followed by the most significant byte or vice versa. – In little endian machines, the least significant byte is followed by the most significant byte. -

Review of Computer Architecture

Basic Computer Architecture CSCE 496/896: Embedded Systems Witawas Srisa-an Review of Computer Architecture Credit: Most of the slides are made by Prof. Wayne Wolf who is the author of the textbook. I made some modifications to the note for clarity. Assume some background information from CSCE 430 or equivalent von Neumann architecture Memory holds data and instructions. Central processing unit (CPU) fetches instructions from memory. Separate CPU and memory distinguishes programmable computer. CPU registers help out: program counter (PC), instruction register (IR), general- purpose registers, etc. von Neumann Architecture Memory Unit Input CPU Output Unit Control + ALU Unit CPU + memory address 200PC memory data CPU 200 ADD r5,r1,r3 ADD IRr5,r1,r3 Recalling Pipelining Recalling Pipelining What is a potential Problem with von Neumann Architecture? Harvard architecture address data memory data PC CPU address program memory data von Neumann vs. Harvard Harvard can’t use self-modifying code. Harvard allows two simultaneous memory fetches. Most DSPs (e.g Blackfin from ADI) use Harvard architecture for streaming data: greater memory bandwidth. different memory bit depths between instruction and data. more predictable bandwidth. Today’s Processors Harvard or von Neumann? RISC vs. CISC Complex instruction set computer (CISC): many addressing modes; many operations. Reduced instruction set computer (RISC): load/store; pipelinable instructions. Instruction set characteristics Fixed vs. variable length. Addressing modes. Number of operands. Types of operands. Tensilica Xtensa RISC based variable length But not CISC Programming model Programming model: registers visible to the programmer. Some registers are not visible (IR). Multiple implementations Successful architectures have several implementations: varying clock speeds; different bus widths; different cache sizes, associativities, configurations; local memory, etc. -

The Von Neumann Computer Model 5/30/17, 10:03 PM

The von Neumann Computer Model 5/30/17, 10:03 PM CIS-77 Home http://www.c-jump.com/CIS77/CIS77syllabus.htm The von Neumann Computer Model 1. The von Neumann Computer Model 2. Components of the Von Neumann Model 3. Communication Between Memory and Processing Unit 4. CPU data-path 5. Memory Operations 6. Understanding the MAR and the MDR 7. Understanding the MAR and the MDR, Cont. 8. ALU, the Processing Unit 9. ALU and the Word Length 10. Control Unit 11. Control Unit, Cont. 12. Input/Output 13. Input/Output Ports 14. Input/Output Address Space 15. Console Input/Output in Protected Memory Mode 16. Instruction Processing 17. Instruction Components 18. Why Learn Intel x86 ISA ? 19. Design of the x86 CPU Instruction Set 20. CPU Instruction Set 21. History of IBM PC 22. Early x86 Processor Family 23. 8086 and 8088 CPU 24. 80186 CPU 25. 80286 CPU 26. 80386 CPU 27. 80386 CPU, Cont. 28. 80486 CPU 29. Pentium (Intel 80586) 30. Pentium Pro 31. Pentium II 32. Itanium processor 1. The von Neumann Computer Model Von Neumann computer systems contain three main building blocks: The following block diagram shows major relationship between CPU components: the central processing unit (CPU), memory, and input/output devices (I/O). These three components are connected together using the system bus. The most prominent items within the CPU are the registers: they can be manipulated directly by a computer program. http://www.c-jump.com/CIS77/CPU/VonNeumann/lecture.html Page 1 of 15 IPR2017-01532 FanDuel, et al. -

Hardware Architecture

Hardware Architecture Components Computing Infrastructure Components Servers Clients LAN & WLAN Internet Connectivity Computation Software Storage Backup Integration is the Key ! Security Data Network Management Computer Today’s Computer Computer Model: Von Neumann Architecture Computer Model Input: keyboard, mouse, scanner, punch cards Processing: CPU executes the computer program Output: monitor, printer, fax machine Storage: hard drive, optical media, diskettes, magnetic tape Von Neumann architecture - Wiki Article (15 min YouTube Video) Components Computer Components Components Computer Components CPU Memory Hard Disk Mother Board CD/DVD Drives Adaptors Power Supply Display Keyboard Mouse Network Interface I/O ports CPU CPU CPU – Central Processing Unit (Microprocessor) consists of three parts: Control Unit • Execute programs/instructions: the machine language • Move data from one memory location to another • Communicate between other parts of a PC Arithmetic Logic Unit • Arithmetic operations: add, subtract, multiply, divide • Logic operations: and, or, xor • Floating point operations: real number manipulation Registers CPU Processor Architecture See How the CPU Works In One Lesson (20 min YouTube Video) CPU CPU CPU speed is influenced by several factors: Chip Manufacturing Technology: nm (2002: 130 nm, 2004: 90nm, 2006: 65 nm, 2008: 45nm, 2010:32nm, Latest is 22nm) Clock speed: Gigahertz (Typical : 2 – 3 GHz, Maximum 5.5 GHz) Front Side Bus: MHz (Typical: 1333MHz , 1666MHz) Word size : 32-bit or 64-bit word sizes Cache: Level 1 (64 KB per core), Level 2 (256 KB per core) caches on die. Now Level 3 (2 MB to 8 MB shared) cache also on die Instruction set size: X86 (CISC), RISC Microarchitecture: CPU Internal Architecture (Ivy Bridge, Haswell) Single Core/Multi Core Multi Threading Hyper Threading vs. -

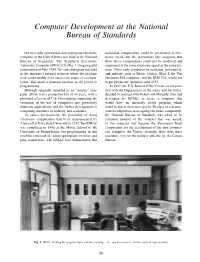

Computer Development at the National Bureau of Standards

Computer Development at the National Bureau of Standards The first fully operational stored-program electronic individual computations could be performed at elec- computer in the United States was built at the National tronic speed, but the instructions (the program) that Bureau of Standards. The Standards Electronic drove these computations could not be modified and Automatic Computer (SEAC) [1] (Fig. 1.) began useful sequenced at the same electronic speed as the computa- computation in May 1950. The stored program was held tions. Other early computers in academia, government, in the machine’s internal memory where the machine and industry, such as Edvac, Ordvac, Illiac I, the Von itself could modify it for successive stages of a compu- Neumann IAS computer, and the IBM 701, would not tation. This made a dramatic increase in the power of begin productive operation until 1952. programming. In 1947, the U.S. Bureau of the Census, in coopera- Although originally intended as an “interim” com- tion with the Departments of the Army and Air Force, puter, SEAC had a productive life of 14 years, with a decided to contract with Eckert and Mauchly, who had profound effect on all U.S. Government computing, the developed the ENIAC, to create a computer that extension of the use of computers into previously would have an internally stored program which unknown applications, and the further development of could be run at electronic speeds. Because of a demon- computing machines in industry and academia. strated competence in designing electronic components, In earlier developments, the possibility of doing the National Bureau of Standards was asked to be electronic computation had been demonstrated by technical monitor of the contract that was issued, Atanasoff at Iowa State University in 1937. -

The ABC of Computing

COMMENT BOOKS & ARTS teamed up with recent graduate Clifford DEPT Berry to develop the system that became S ON known as the Atanasoff–Berry Computer I (ABC). Built on a shoestring budget, the ECT LL O C simple ‘breadboard’ prototype that emerged L A contained significant innovations. These I included the use of vacuum tubes as the com- B./SPEC puting mechanism and operating memory; I V. L V. binary and logical calculation; serial com- I putation; and the use of capacitors as storage memory. By the summer of 1940, Smiley tells us, a second, more-developed prototype was UN STATE IOWA running and Atanasoff and Berry had writ- ten a 35-page manuscript describing it. Other people were working on similar devices. In the United Kingdom and at Princeton University in New Jersey, Turing was investigating practical outlets for the concepts in his 1936 paper ‘On Comput- able Numbers’. In London, British engineer Tommy Flowers was using vacuum tubes as electronic switches for telephone exchanges in the General Post Office. In Germany, Konrad Zuse was working on a floating-point calculator — albeit based on electromechani- cal technology — that would have a 64-word The 1940s Atanasoff–Berry Computer (ABC) was the first to use innovations such as vacuum tubes. storage capacity by 1941. Smiley weaves these stories into the narrative effectively, giving a BIOGRAPHY broad sense of the rich ecology of thought that burgeoned during this crucial period of technological and logical development. The Second World War changed every- The ABC of thing. Atanasoff left Iowa State to work in the Naval Ordnance Laboratory in Washing- ton DC.