Supplementary Material Transcriptome Variation in Human

Total pages: 16

File type: PDF, size: 1020 KB

Recommended publications

Whole Exome Sequencing in Families at High Risk for Hodgkin Lymphoma: Identification of a Predisposing Mutation in the KDR Gene

Hodgkin Lymphoma SUPPLEMENTARY APPENDIX Whole exome sequencing in families at high risk for Hodgkin lymphoma: identification of a predisposing mutation in the KDR gene Melissa Rotunno, 1 Mary L. McMaster, 1 Joseph Boland, 2 Sara Bass, 2 Xijun Zhang, 2 Laurie Burdett, 2 Belynda Hicks, 2 Sarangan Ravichandran, 3 Brian T. Luke, 3 Meredith Yeager, 2 Laura Fontaine, 4 Paula L. Hyland, 1 Alisa M. Goldstein, 1 NCI DCEG Cancer Sequencing Working Group, NCI DCEG Cancer Genomics Research Laboratory, Stephen J. Chanock, 5 Neil E. Caporaso, 1 Margaret A. Tucker, 6 and Lynn R. Goldin 1 1Genetic Epidemiology Branch, Division of Cancer Epidemiology and Genetics, National Cancer Institute, NIH, Bethesda, MD; 2Cancer Genomics Research Laboratory, Division of Cancer Epidemiology and Genetics, National Cancer Institute, NIH, Bethesda, MD; 3Advanced Biomedical Computing Center, Leidos Biomedical Research Inc.; Frederick National Laboratory for Cancer Research, Frederick, MD; 4Westat, Inc., Rockville MD; 5Division of Cancer Epidemiology and Genetics, National Cancer Institute, NIH, Bethesda, MD; and 6Human Genetics Program, Division of Cancer Epidemiology and Genetics, National Cancer Institute, NIH, Bethesda, MD, USA ©2016 Ferrata Storti Foundation. This is an open-access paper. doi:10.3324/haematol.2015.135475 Received: August 19, 2015. Accepted: January 7, 2016. Pre-published: June 13, 2016. Correspondence: [email protected] Supplemental Author Information: NCI DCEG Cancer Sequencing Working Group: Mark H. Greene, Allan Hildesheim, Nan Hu, Maria Theresa Landi, Jennifer Loud, Phuong Mai, Lisa Mirabello, Lindsay Morton, Dilys Parry, Anand Pathak, Douglas R. Stewart, Philip R. Taylor, Geoffrey S. Tobias, Xiaohong R. Yang, Guoqin Yu NCI DCEG Cancer Genomics Research Laboratory: Salma Chowdhury, Michael Cullen, Casey Dagnall, Herbert Higson, Amy A. -

Integrated Molecular Characterisation of the MAPK Pathways in Human

ARTICLE https://doi.org/10.1038/s42003-020-01552-6 OPEN Integrated molecular characterisation of the MAPK pathways in human cancers reveals pharmacologically vulnerable mutations and gene dependencies Musalula Sinkala 1 , Panji Nkhoma 2, Nicola Mulder 1 & Darren Patrick Martin1 The mitogen-activated protein kinase (MAPK) pathways are crucial regulators of the cellular processes that fuel the malignant transformation of normal cells. The molecular aberrations which lead to cancer involve mutations in, and transcription variations of, various MAPK pathway genes. Here, we examine the genome sequences of 40,848 patient-derived tumours representing 101 distinct human cancers to identify cancer-associated mutations in MAPK signalling pathway genes. We show that patients with tumours that have mutations within genes of the ERK-1/2 pathway, the p38 pathways, or multiple MAPK pathway modules, tend to have worse disease outcomes than patients with tumours that have no mutations within the MAPK pathways genes. Furthermore, by integrating information extracted from various large-scale molecular datasets, we expose the relationship between the fitness of cancer cells after CRISPR mediated gene knockout of MAPK pathway genes, and their dose-responses to MAPK pathway inhibitors. Besides providing new insights into MAPK pathways, we unearth vulnerabilities in specific pathway genes that are reflected in the responses of cancer cells to MAPK targeting drugs: a revelation with great potential for guiding the development of innovative therapies. -

Methylation of Leukocyte DNA and Ovarian Cancer

Fridley et al. BMC Medical Genomics 2014, 7:21 http://www.biomedcentral.com/1755-8794/7/21 RESEARCH ARTICLE Open Access Methylation of leukocyte DNA and ovarian cancer: relationships with disease status and outcome Brooke L Fridley1*, Sebastian M Armasu2, Mine S Cicek2, Melissa C Larson2, Chen Wang2, Stacey J Winham2, Kimberly R Kalli3, Devin C Koestler1,4, David N Rider2, Viji Shridhar5, Janet E Olson2, Julie M Cunningham5 and Ellen L Goode2 Abstract Background: Genome-wide interrogation of DNA methylation (DNAm) in blood-derived leukocytes has become feasible with the advent of CpG genotyping arrays. In epithelial ovarian cancer (EOC), one report found substantial DNAm differences between cases and controls; however, many of these disease-associated CpGs were attributed to differences in white blood cell type distributions. Methods: We examined blood-based DNAm in 336 EOC cases and 398 controls; we included only high-quality CpG loci that did not show evidence of association with white blood cell type distributions to evaluate association with case status and overall survival. Results: Of 13,816 CpGs, no significant associations were observed with survival, although eight CpGs associated with survival at p < 10−3, including methylation within a CpG island located in the promoter region of GABRE (p = 5.38 x 10−5, HR = 0.95). In contrast, 53 CpG methylation sites were significantly associated with EOC risk (p <5 x10−6). The top association was observed for the methylation probe cg04834572 located approximately 315 kb upstream of DUSP13 (p = 1.6 x10−14). Other disease-associated CpGs included those near or within HHIP (cg14580567; p =5.6x10−11), HDAC3 (cg10414058; p = 6.3x10−12), and SCR (cg05498681; p = 4.8x10−7). -

Tumour-Stroma Signalling in Cancer Cell Motility and Metastasis

Tumour-Stroma Signalling in Cancer Cell Motility and Metastasis by Valbona Luga A thesis submitted in conformity with the requirements for the degree of Doctor of Philosophy, Department of Molecular Genetics, University of Toronto © Copyright by Valbona Luga, 2013 Tumour-Stroma Signalling in Cancer Cell Motility and Metastasis Valbona Luga Doctor of Philosophy Department of Molecular Genetics University of Toronto 2013 Abstract The tumour-associated stroma, consisting of fibroblasts, inflammatory cells, vasculature and extracellular matrix proteins, plays a critical role in tumour growth, but how it regulates cancer cell migration and metastasis is poorly understood. The Wnt-planar cell polarity (PCP) pathway regulates convergent extension movements in vertebrate development. However, it is unclear whether this pathway also functions in cancer cell migration. In addition, the factors that mobilize long-range signalling of Wnt morphogens, which are tightly associated with the plasma membrane, have yet to be completely characterized. Here, I show that fibroblasts secrete membrane microvesicles of endocytic origin, termed exosomes, which promote tumour cell protrusive activity, motility and metastasis via the exosome component Cd81. In addition, I demonstrate that fibroblast exosomes activate autocrine Wnt-PCP signalling in breast cancer cells as detected by the association of Wnt with Fzd receptors and the asymmetric distribution of Fzd-Dvl and Vangl-Pk complexes in exosome-stimulated cancer cell protrusive structures. Moreover, I show that Pk expression in breast cancer cells is essential for fibroblast-stimulated cancer cell metastasis. Lastly, I reveal that trafficking in cancer cells promotes tethering of autocrine Wnt11 to fibroblast exosomes. These studies further our understanding of the role of the tumour-associated stroma in cancer metastasis and bring us closer to a more targeted approach for the treatment of cancer spread. -

Dual Specificity Phosphatases from Molecular Mechanisms to Biological Function

International Journal of Molecular Sciences Dual Specificity Phosphatases From Molecular Mechanisms to Biological Function Edited by Rafael Pulido and Roland Lang Printed Edition of the Special Issue Published in International Journal of Molecular Sciences www.mdpi.com/journal/ijms Dual Specificity Phosphatases From Molecular Mechanisms to Biological Function Special Issue Editors Rafael Pulido Roland Lang MDPI • Basel • Beijing • Wuhan • Barcelona • Belgrade Special Issue Editors Rafael Pulido Roland Lang Biocruces Health Research Institute University Hospital Erlangen Spain Germany Editorial Office MDPI St. Alban-Anlage 66 4052 Basel, Switzerland This is a reprint of articles from the Special Issue published online in the open access journal International Journal of Molecular Sciences (ISSN 1422-0067) from 2018 to 2019 (available at: https://www.mdpi.com/journal/ijms/special_issues/DUSPs). For citation purposes, cite each article independently as indicated on the article page online and as indicated below: LastName, A.A.; LastName, B.B.; LastName, C.C. Article Title. Journal Name Year, Article Number, Page Range. ISBN 978-3-03921-688-8 (Pbk) ISBN 978-3-03921-689-5 (PDF) © 2019 by the authors. Articles in this book are Open Access and distributed under the Creative Commons Attribution (CC BY) license, which allows users to download, copy and build upon published articles, as long as the author and publisher are properly credited, which ensures maximum dissemination and a wider impact of our publications. The book as a whole is distributed by MDPI under the terms and conditions of the Creative Commons license CC BY-NC-ND. Contents About the Special Issue Editors -

Dynamics of Dual Specificity Phosphatases and Their Interplay with Protein Kinases in Immune Signaling

bioRxiv preprint doi: https://doi.org/10.1101/568576; this version posted March 5, 2019. The copyright holder for this preprint (which was not certified by peer review) is the author/funder. All rights reserved. No reuse allowed without permission. Dynamics of dual specificity phosphatases and their interplay with protein kinases in immune signaling Yashwanth Subbannayya1,2, Sneha M. Pinto1,2, Korbinian Bösl1, T. S. Keshava Prasad2 and Richard K. Kandasamy1,3,* 1Centre of Molecular Inflammation Research (CEMIR), and Department of Clinical and Molecular Medicine (IKOM), Norwegian University of Science and Technology, N-7491 Trondheim, Norway 2Center for Systems Biology and Molecular Medicine, Yenepoya (Deemed to be University), Mangalore 575018, India 3Centre for Molecular Medicine Norway (NCMM), Nordic EMBL Partnership, University of Oslo and Oslo University Hospital, N-0349 Oslo, Norway *Correspondence: Richard K. Kandasamy ([email protected]) Abstract Dual specificity phosphatases (DUSPs) have a well-known role as regulators of the immune response through the modulation of mitogen activated protein kinases (MAPKs). Yet the precise interplay between the various members of the DUSP family with protein kinases is not well understood. Recent multi-omics studies characterizing the transcriptomes and proteomes of immune cells have provided snapshots of molecular mechanisms underlying innate immune response in unprecedented detail. In this study, we focused on deciphering the interplay between members of the DUSP family with protein kinases in immune cells using publicly available omics datasets. Our analysis resulted in the identification of potential DUSP-mediated hub proteins including MAPK7, MAPK8, AURKA, and IGF1R. Furthermore, we analyzed the association of DUSP expression with TLR4 signaling and identified VEGF, FGFR and SCF-KIT pathway modules to be regulated by the activation of TLR4 signaling. -

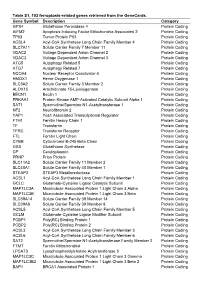

Table S1. 103 Ferroptosis-Related Genes Retrieved from GeneCards

Table S1. 103 ferroptosis-related genes retrieved from GeneCards.

| Gene Symbol | Description | Category |
| --- | --- | --- |
| GPX4 | Glutathione Peroxidase 4 | Protein Coding |
| AIFM2 | Apoptosis Inducing Factor Mitochondria Associated 2 | Protein Coding |
| TP53 | Tumor Protein P53 | Protein Coding |
| ACSL4 | Acyl-CoA Synthetase Long Chain Family Member 4 | Protein Coding |
| SLC7A11 | Solute Carrier Family 7 Member 11 | Protein Coding |
| VDAC2 | Voltage Dependent Anion Channel 2 | Protein Coding |
| VDAC3 | Voltage Dependent Anion Channel 3 | Protein Coding |
| ATG5 | Autophagy Related 5 | Protein Coding |
| ATG7 | Autophagy Related 7 | Protein Coding |
| NCOA4 | Nuclear Receptor Coactivator 4 | Protein Coding |
| HMOX1 | Heme Oxygenase 1 | Protein Coding |
| SLC3A2 | Solute Carrier Family 3 Member 2 | Protein Coding |
| ALOX15 | Arachidonate 15-Lipoxygenase | Protein Coding |
| BECN1 | Beclin 1 | Protein Coding |
| PRKAA1 | Protein Kinase AMP-Activated Catalytic Subunit Alpha 1 | Protein Coding |
| SAT1 | Spermidine/Spermine N1-Acetyltransferase 1 | Protein Coding |
| NF2 | Neurofibromin 2 | Protein Coding |
| YAP1 | Yes1 Associated Transcriptional Regulator | Protein Coding |
| FTH1 | Ferritin Heavy Chain 1 | Protein Coding |
| TF | Transferrin | Protein Coding |
| TFRC | Transferrin Receptor | Protein Coding |
| FTL | Ferritin Light Chain | Protein Coding |
| CYBB | Cytochrome B-245 Beta Chain | Protein Coding |
| GSS | Glutathione Synthetase | Protein Coding |
| CP | Ceruloplasmin | Protein Coding |
| PRNP | Prion Protein | Protein Coding |
| SLC11A2 | Solute Carrier Family 11 Member 2 | Protein Coding |
| SLC40A1 | Solute Carrier Family 40 Member 1 | Protein Coding |
| STEAP3 | STEAP3 Metalloreductase | Protein Coding |
| ACSL1 | Acyl-CoA Synthetase Long Chain Family Member 1 | Protein - |

Gene Correlation Network Analysis to Identify Regulatory Factors in Sepsis

Zhang et al. J Transl Med (2020) 18:381 https://doi.org/10.1186/s12967-020-02561-z Journal of Translational Medicine RESEARCH Open Access Gene correlation network analysis to identify regulatory factors in sepsis Zhongheng Zhang1*†, Lin Chen2†, Ping Xu3†, Lifeng Xing1, Yucai Hong1 and Pengpeng Chen1 Abstract Background and objectives: Sepsis is a leading cause of mortality and morbidity in the intensive care unit. Regulatory mechanisms underlying the disease progression and prognosis are largely unknown. The study aimed to identify master regulators of mortality-related modules, providing potential therapeutic targets for further translational experiments. Methods: The dataset GSE65682 from the Gene Expression Omnibus (GEO) database was utilized for bioinformatic analysis. Consensus weighted gene co-expression network analysis (WGCNA) was performed to identify modules of sepsis. The module most significantly associated with mortality was further analyzed for the identification of master regulators of transcription factors and miRNA. Results: A total of 682 subjects with various causes of sepsis were included for consensus WGCNA analysis, which identified 27 modules. The network was well preserved among different causes of sepsis. Two modules, designated as the black and light yellow modules, were found to be associated with mortality outcome. Key regulators of the black and light yellow modules were the transcription factors CEBPB (normalized enrichment score 5.53) and ETV6 (NES 6), respectively. The top 5 miRNAs regulating the largest number of genes were hsa-miR-335-5p (n = 59), hsa-miR-26b-5p (n = 57), hsa-miR-16-5p (n = 44), hsa-miR-17-5p (n = 42), and hsa-miR-124-3p (n = 38). -

4. WNK Kinases in Invasion and Metastasis

Emerging Signaling Pathways in Tumor Biology 2010 Editor Pedro A. Lazo IBMCC-Centro de Investigación del Cáncer, CSIC-Universidad de Salamanca, Campus Miguel de Unamuno, E-37007 Salamanca, Spain Transworld Research Network, T.C. 37/661 (2), Fort P.O. Trivandrum-695 023 Kerala, India Published by Transworld Research Network 2010; Rights Reserved Transworld Research Network T.C. 37/661(2), Fort P.O., Trivandrum-695 023, Kerala, India Editor Pedro A. Lazo Managing Editor S.G. Pandalai Publication Manager A. Gayathri Transworld Research Network and the Editor assume no responsibility for the opinions and statements advanced by contributors ISBN: 978-81-7895-477-6 Preface The specificity of biological effects in different cell types is determined by the composition of their proteome, the physical and functional interaction between different signaling pathways and their spatial and temporal organization in signaling complexes. Although the implication of some specific pathways with major phenotypes, such as proliferation, apoptosis or differentiation is well known, the final effect varies depending on cell type, and might even be the opposite. These phenotypes play an essential role, and are frequently deregulated in tumor cells, which makes some of them potential targets for development of new drugs. In this context, protein kinases represent the main regulatory family, which can be activated and deactivated by reversible phosphorylation, or by integration in spatially organized signaling complexes with scaffold proteins. The human kinome is composed of 518 kinases and for many of them their characterization from the point of view of regulation and substrate specificity, as well as their integration in novel signaling pathways, or their effect on other better-known pathways such as MAPK, are not yet understood. -

Dynamics of Dual Specificity Phosphatases

International Journal of Molecular Sciences Article Dynamics of Dual Specificity Phosphatases and Their Interplay with Protein Kinases in Immune Signaling Yashwanth Subbannayya 1,2 , Sneha M. Pinto 1,2, Korbinian Bösl 1 , T. S. Keshava Prasad 2 and Richard K. Kandasamy 1,3,* 1 Centre of Molecular Inflammation Research (CEMIR), Department of Clinical and Molecular Medicine (IKOM), Norwegian University of Science and Technology, N-7491 Trondheim, Norway; [email protected] (Y.S.); [email protected] (S.M.P.); [email protected] (K.B.) 2 Center for Systems Biology and Molecular Medicine, Yenepoya (Deemed to be University), Mangalore 575018, India; [email protected] 3 Centre for Molecular Medicine Norway (NCMM), Nordic EMBL Partnership, University of Oslo and Oslo University Hospital, N-0349 Oslo, Norway * Correspondence: [email protected]; Tel.: +47-7282-4511 Received: 5 March 2019; Accepted: 25 April 2019; Published: 27 April 2019 Abstract: Dual specificity phosphatases (DUSPs) have a well-known role as regulators of the immune response through the modulation of mitogen-activated protein kinases (MAPKs). Yet the precise interplay between the various members of the DUSP family with protein kinases is not well understood. Recent multi-omics studies characterizing the transcriptomes and proteomes of immune cells have provided snapshots of molecular mechanisms underlying innate immune response in unprecedented detail. In this study, we focus on deciphering the interplay between members of the DUSP family with protein kinases in immune cells using publicly available omics datasets. Our analysis resulted in the identification of potential DUSP-mediated hub proteins including MAPK7, MAPK8, AURKA, and IGF1R. -

Genetic Investigation in Non-Obstructive Azoospermia: from the X Chromosome to The

PhD Programme in Biomedical Sciences, Cycle XXIX. Coordinator: Prof. Persio dello Sbarba. Genetic investigation in non-obstructive azoospermia: from the X chromosome to the whole exome. Scientific Disciplinary Sector MED/13. PhD candidate: Dr. Antoni Riera-Escamilla. Supervisor: Prof. Csilla Gabriella Krausz. Coordinator: Prof. Carlo Maria Rotella. Years 2014/2016. To my family. Acknowledgements. The result of this thesis is the fruit of continuous effort and work, but it would not have been the same without the collaboration and help of many people. I will surely leave out many people who deserve thanks, but these are the ones who come to mind right now. First of all, I would like to thank Dr. Csilla Krausz for welcoming me with open arms from the first day I arrived in Florence, for teaching me, for her tireless help and for passing on to me her passion for research, which never fades. I would also like to thank Dr. Rafael Oliva for making REPROTRAIN possible, for teaching me so much during my master's degree and for agreeing to review this thesis. Likewise, I would like to thank Dr. Willy Baarends: thank you for your priceless help and for reviewing this thesis. I would also like to thank Dr. Elisabet Ars for welcoming me into her laboratory in Barcelona, for always helping me with everything I asked of her and for keeping my feet on the ground. I thank the whole Florence group, with whom I have felt at home from the first day. -

Differential Chromatin Accessibility Landscape of Gain-Of-Function Mutant P53 Tumours

bioRxiv preprint doi: https://doi.org/10.1101/2020.11.24.395913; this version posted November 24, 2020. The copyright holder for this preprint (which was not certified by peer review) is the author/funder, who has granted bioRxiv a license to display the preprint in perpetuity. It is made available under a CC-BY-NC-ND 4.0 International license. Differential chromatin accessibility landscape of gain-of-function mutant p53 tumours Bhavya Dhaka and Radhakrishnan Sabarinathan* National Centre for Biological Sciences, Tata Institute of Fundamental Research, Bengaluru 560065, India. * To whom correspondence should be addressed. Email: [email protected] Abstract Mutations in TP53 not only affect its tumour suppressor activity but also exert oncogenic gain-of-function activity. While the genome-wide mutant p53 binding sites have been identified in cancer cell lines, the chromatin accessibility landscape driven by mutant p53 in primary tumours is unknown. Here, we leveraged the chromatin accessibility data of primary tumours from TCGA to identify differentially accessible regions in mutant p53 tumours compared to wild p53 tumours, especially in breast and colon cancers. We found 1587 lost and 984 gained accessible regions in breast, and 1143 lost and 640 gained regions in colon. However, less than half of those regions in both cancer types contain sequence motifs for wild-type or mutant p53 binding, whereas the remaining regions showed enrichment for master transcriptional regulators, such as FOX-Family TFs and NF-kB in lost and SMAD and KLF TFs in gained regions of breast. In colon, ATF3 and FOS/JUN TFs were enriched in lost, and CDX family TFs and HNF4A in gained regions.