Networking and TCP/IP Stack for HelenOS System

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

The Transport Layer: Tutorial and Survey

The Transport Layer: Tutorial and Survey. SAMI IREN and PAUL D. AMER, University of Delaware, and PHILLIP T. CONRAD, Temple University.

Transport layer protocols provide for end-to-end communication between two or more hosts. This paper presents a tutorial on transport layer concepts and terminology, and a survey of transport layer services and protocols. The transport layer protocol TCP is used as a reference point, and compared and contrasted with nineteen other protocols designed over the past two decades. The service and protocol features of twelve of the most important protocols are summarized in both text and tables.

Categories and Subject Descriptors: C.2.0 [Computer-Communication Networks]: General - Data communications; Open System Interconnection Reference Model (OSI); C.2.1 [Computer-Communication Networks]: Network Architecture and Design - Network communications; Packet-switching networks; Store and forward networks; C.2.2 [Computer-Communication Networks]: Network Protocols; Protocol architecture (OSI model); C.2.5 [Computer-Communication Networks]: Local and Wide-Area Networks

General Terms: Networks

Additional Key Words and Phrases: Congestion control, flow control, transport protocol, transport service, TCP/IP

1. INTRODUCTION

In the OSI 7-layer Reference Model, the transport layer is the lowest layer that operates on an end-to-end basis between two or more communicating hosts. This layer lies at the boundary between these hosts and an internetwork of routers, bridges, and communication links that moves information between hosts. A good transport layer service (or simply, transport service) allows applications to use a standard set of primitives and run on a variety of networks without worrying about different network interfaces and reliabilities.

Moving to IPv6 (PDF)

Moving to IPv6. Mark Kosters, Chief Technology Officer, with some help from Geoff Huston. Chicago, IL, 9/1/15.

The Amazing Success of the Internet
• 2.92 billion users!
• 4.5 online hours per day per user!
• 5.5% of GDP for G-20 countries
[Figure: "Just about anything about the Internet" plotted against time]

Success-Disaster

The Original IPv6 Plan - 1995
[Figure: Size of the Internet, IPv6 Deployment, IPv6 Transition – Dual Stack, and IPv4 Pool Size plotted against time]

The Revised IPv6 Plan - 2005
[Figure: IPv4 Pool Size, Size of the Internet, IPv6 Transition – Dual Stack, and IPv6 Deployment plotted against date, 2004 to 2012]

Oops!
We were meant to have completed the transition to IPv6 BEFORE we completely exhausted the supply channels of IPv4 addresses!

Today's Plan
[Figure: IPv4 Pool Size, Size of the Internet, IPv6 Transition, and IPv6 Deployment (0.8% today) plotted against time]

Transition...
The downside of an end-to-end architecture:
– There is no backwards compatibility across protocol families
– A V6-only host cannot communicate with a V4-only host
We have been forced to undertake a Dual Stack transition:
– Provision the entire network with both IPv4 AND IPv6
– In Dual Stack, hosts configure the hosts' applications to prefer IPv6 to IPv4
– When the traffic volumes of IPv4 dwindle to insignificant levels, then it's possible to shut down support for IPv4

Dual Stack Transition ...
We did not appreciate the operational problems with this dual stack plan while it was just a paper exercise:
• The combination of an end host preference for IPv6 and a disconnected set of IPv6 "islands" created operational problems
– Protocol ...
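The dual-stack rule quoted above, that hosts prefer IPv6 to IPv4 when both are available, can be illustrated with a minimal Python sketch. The helper names `order_dual_stack` and `resolve_preferring_ipv6` are hypothetical and do not come from the slides; real network stacks apply more elaborate address-selection rules than this plain family-based sort.

```python
import socket

def order_dual_stack(candidates):
    """Sort (family, address) pairs so that IPv6 entries come first.

    sorted() is stable, so the resolver's order is kept within each family.
    """
    return sorted(candidates, key=lambda c: 0 if c[0] == socket.AF_INET6 else 1)

def resolve_preferring_ipv6(host, port):
    """Resolve host and return its addresses with IPv6 candidates first.

    Hypothetical helper: it only reorders what getaddrinfo() returns.
    """
    infos = socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)
    pairs = [(family, sockaddr[0]) for family, _, _, _, sockaddr in infos]
    return order_dual_stack(pairs)

if __name__ == "__main__":
    # Documentation-range addresses, used purely as sample data.
    sample = [
        (socket.AF_INET, "192.0.2.1"),
        (socket.AF_INET6, "2001:db8::1"),
        (socket.AF_INET, "192.0.2.2"),
    ]
    for family, address in order_dual_stack(sample):
        print(family.name, address)
```

A client would then try the returned addresses in order, falling back to IPv4 only if no IPv6 candidate is reachable.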

Routing Loop Attacks Using IPv6 Tunnels

Routing Loop Attacks using IPv6 Tunnels. Gabi Nakibly, Michael Arov. National EW Research & Simulation Center, Rafael – Advanced Defense Systems, Haifa, Israel. {gabin,marov}@rafael.co.il

Abstract: IPv6 is the future network layer protocol for the Internet. Since it is not compatible with its predecessor, some interoperability mechanisms were designed. An important category of these mechanisms is automatic tunnels, which enable IPv6 communication over an IPv4 network without prior configuration. This category includes ISATAP, 6to4 and Teredo. We present a novel class of attacks that exploit vulnerabilities in these tunnels. These attacks take advantage of inconsistencies between a tunnel's overlay IPv6 routing state and the native IPv6 routing state. The attacks form routing loops which can be abused as a vehicle for traffic amplification to facilitate DoS attacks. We exhibit five attacks of this class. One of the presented attacks can DoS a Teredo server using a single packet. The exploited vulnerabilities are embedded in the design of the tunnels; hence any implementation of these tunnels may be vulnerable.

A tunnel in which the end points' routing tables need to be explicitly configured is called a configured tunnel. Tunnels of this type do not scale well, since every end point must be reconfigured as peers join or leave the tunnel. To alleviate this scalability problem, another type of tunnels was introduced – automatic tunnels. In automatic tunnels the egress entity's IPv4 address is computationally derived from the destination IPv6 address. This feature eliminates the need to keep an explicit routing table at the tunnel's end points. In particular, the end points do not have to be updated as peers join and leave the tunnel. In fact, the end points of an automatic tunnel do not know which other end points are currently part of the ...
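To make the phrase "computationally derived from the destination IPv6 address" concrete, here is a minimal Python sketch for 6to4, one of the automatic tunnels named in the excerpt. It relies on the standard 6to4 address layout, in which the 32 bits following the 2002::/16 prefix carry the IPv4 address. The function name `egress_ipv4_for_6to4` is hypothetical, and the sketch is an illustration rather than code from the paper; ISATAP and Teredo embed the IPv4 address differently and are not covered.

```python
import ipaddress

# 6to4 addresses live under 2002::/16; the next 32 bits are the IPv4 address.
SIXTOFOUR_PREFIX = ipaddress.IPv6Network("2002::/16")

def egress_ipv4_for_6to4(destination):
    """Return the IPv4 tunnel end point embedded in a 6to4 destination.

    Returns None when the destination is not a 6to4 address.
    """
    addr = ipaddress.IPv6Address(destination)
    if addr not in SIXTOFOUR_PREFIX:
        return None
    # Drop the low 80 bits, then keep the 32 bits after the 16-bit prefix.
    embedded = (int(addr) >> 80) & 0xFFFFFFFF
    return ipaddress.IPv4Address(embedded)

if __name__ == "__main__":
    print(egress_ipv4_for_6to4("2002:c000:204::1"))  # 6to4 destination
    print(egress_ipv4_for_6to4("2001:db8::1"))       # not 6to4
```

Because the mapping is a pure function of the destination address, the end point keeps no per-peer state, which is the property the excerpt describes. Python's standard library exposes the same derivation as `ipaddress.IPv6Address.sixtofour`.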

Lecture 11: PKI, SSL and VPN

Lecture 11: PKI, SSL and VPN. Information Security – Theory vs. Reality, 0368-4474-01, Winter 2011. Yoav Nir. ©2011 Check Point Software Technologies Ltd. | [Restricted] ONLY for designated groups and individuals

What is a VPN
• VPN stands for Virtual Private Network
• A private network is pretty obvious
– An Ethernet LAN running in my building
– Wifi with access control
– A locked door and thick walls can be access control
– An optical fiber running between two buildings

What is a VPN
• What is a non-private network?
– Obviously, someone else's network.
• Obvious example: the Internet.
• The Internet is pretty fast, and really cheap.
• What do I do if I have offices in several cities
– And people working from home
– And sales people working from who knows where
• We could use the Internet, but…
– People could see my data
– People could modify my messages
– People could pretend to be other people

What is a VPN
• I could run my own cables around the world.
• I could buy an MPLS connection from a local telco
– Bezeq would love to sell me one. They call it IPVPN, and it's pretty much a switched link just for my company
– But I can't get that to the home or mobile users
– And it's mediocre (but consistent) speed
– And Bezeq can still do whatever it wants
– And it's really expensive
– The incremental cost of using the Internet is zero
• A virtual private network combines the relatively low cost of using the Internet with the privacy of a leased line.

IPsec and IKE Administration Guide

IPsec and IKE Administration Guide Sun Microsystems, Inc. 4150 Network Circle Santa Clara, CA 95054 U.S.A. Part No: 816–7264–10 April 2003 Copyright 2003 Sun Microsystems, Inc. 4150 Network Circle, Santa Clara, CA 95054 U.S.A. All rights reserved. This product or document is protected by copyright and distributed under licenses restricting its use, copying, distribution, and decompilation. No part of this product or document may be reproduced in any form by any means without prior written authorization of Sun and its licensors, if any. Third-party software, including font technology, is copyrighted and licensed from Sun suppliers. Parts of the product may be derived from Berkeley BSD systems, licensed from the University of California. UNIX is a registered trademark in the U.S. and other countries, exclusively licensed through X/Open Company, Ltd. Sun, Sun Microsystems, the Sun logo, docs.sun.com, AnswerBook, AnswerBook2, SunOS, Sun ONE Certificate Server, and Solaris are trademarks, registered trademarks, or service marks of Sun Microsystems, Inc. in the U.S. and other countries. All SPARC trademarks are used under license and are trademarks or registered trademarks of SPARC International, Inc. in the U.S. and other countries. Products bearing SPARC trademarks are based upon an architecture developed by Sun Microsystems, Inc. The OPEN LOOK and Sun™ Graphical User Interface was developed by Sun Microsystems, Inc. for its users and licensees. Sun acknowledges the pioneering efforts of Xerox in researching and developing the concept of visual or graphical user interfaces for the computer industry. Sun holds a non-exclusive license from Xerox to the Xerox Graphical User Interface, which license also covers Sun’s licensees who implement OPEN LOOK GUIs and otherwise comply with Sun’s written license agreements. -

An Introduction to the Identifier-Locator Network Protocol (ILNP)

An Introduction to the Identifier-Locator Network Protocol (ILNP). Presented by Joel Halpern. Material prepared by Saleem N. Bhatti & Ran Atkinson. https://ilnp.cs.st-andrews.ac.uk/ IETF102, Montreal, CA. (C) Saleem Bhatti, 21 June 2018.

Thanks!
• Joel Halpern: presenting today!
• Ran Atkinson: co-conspirator.
• Students at the University of St Andrews:
• Dr Ditchaphong Phoomikiatissak (Linux)
• Dr Bruce Simpson (FreeBSD, Cisco)
• Khawar Shezhad (DNS/Linux, Verisign)
• Ryo Yanagida (Linux, Time Warner)
• … many other students on sub-projects …
• IRTF (Routing RG, now concluded)
• Discussions on various email lists.

Background to Identifier-Locator

"IP addresses considered harmful"
• "IP addresses considered harmful", Brian E. Carpenter, ACM SIGCOMM CCR, vol. 44, issue 2, Apr 2014. http://dl.acm.org/citation.cfm?id=2602215 http://dx.doi.org/10.1145/2602204.2602215
• Abstract: This note describes how the Internet has got itself into deep trouble by over-reliance on IP addresses and discusses some possible ways forward.

RFC2101 (I), IAB, Feb 1997
• RFC2101, "IPv4 Address Behaviour Today".
• Ideal behaviour of Identifiers and Locators: Identifiers should be assigned at birth, never change, and never be re-used. Locators should describe the host's position in the network's topology, and should change whenever the topology changes. Unfortunately neither of these ideals is met by IPv4 addresses.

RFC6115 (I), Feb 2011
• RFC6115, "Recommendation for a Routing Architecture".

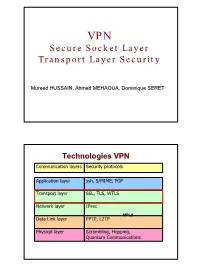

Secure Socket Layer Transport Layer Security

VPN: Secure Socket Layer, Transport Layer Security. Mureed HUSSAIN, Ahmed MEHAOUA, Dominique SERET

VPN technologies (communication layer: security protocols)
• Application layer: ssh, S/MIME, PGP
• Transport layer: SSL, TLS, WTLS
• Network layer: IPsec, MPLS
• Data Link layer: PPTP, L2TP
• Physical layer: Scrambling, Hopping, Quantum Communications

Agenda
• Introduction
– Motivation, evolution, standardization
– Applications
• SSL Protocol
– SSL phases and services
– Sessions and connections
– SSL protocols and layers
• SSL Handshake protocol
• SSL Record protocol / layer
• SSL solutions and products
• Conclusion

Securing exchanges
• There are several approaches to securing exchanges that take place over the Internet:
– application level (PGP)
– network level (the IPsec protocol)
– physical level (encrypting devices).
• TLS/SSL aims to secure exchanges at the Transport layer.
• Typical application: securing the Web

Transport Layer Security
• Advantages
– Does not require enhancement to each application
– NAT friendly
– Firewall friendly
• Disadvantages
– Embedded in the application stack (some mis-implementation)
– Protocol specific, so it needs to be duplicated for each transport protocol
– Needs to maintain context for the connection (not currently implemented for UDP)
– Doesn't protect IP addresses & headers

Security-Sensitive Web Applications
• Online banking
• Online purchases, auctions, payments
• Restricted website access
• Software download
• Web-based Email
• Requirements
– Authentication: Of server, of client, or (usually)

OpenFlow, in Proceedings of the International Conference on Pervasive Computing and Communication Workshops (PerCom Workshops)

Traffic Control for Multi-homed End-hosts via Software Defined Networking. Anees Mohsin Hadi Al-Najjar, B.Sc. (Computer Science), M.Sc. (Computer Science). A thesis submitted for the degree of Doctor of Philosophy at The University of Queensland in 2019. School of Information Technology & Electrical Engineering.

Abstract

Software Defined Networking (SDN) is an emerging technology that allows computer networks to be more efficiently managed and controlled by providing a high level of abstraction and network programmability. Having powerful abstractions and programmability via a centralised network controller provides new potential improvements to computer networks, such as easier network management, faster innovation and reduced cost. SDN has been successfully applied in wide area and data centre networks, and has achieved a significant improvement in network performance and efficiency. However, using SDN to control network traffic in end-host devices has not been investigated thoroughly.

The research presented in this thesis aims to address this gap and investigates the potential benefits of SDN for end-hosts. This thesis explores the feasibility of applying the SDN methodology to control network traffic on multi-homed end devices. The objective was to create a control mechanism by changing the network stack on the client in a way that is transparent to the application layer, the network infrastructure, and other hosts on the network. In contrast to other solutions such as MPTCP, which require a protocol stack upgrade on all the participating nodes, the approach presented in this thesis allows quick and easy client-side-only deployment. This thesis presents an architecture for embedding SDN components, i.e. ...

Misuse Patterns for the SSL/TLS Protocol

Misuse Patterns for the SSL/TLS Protocol, by Ali Alkazimi. A Dissertation Submitted to the Faculty of College of Engineering & Computer Science In Partial Fulfillment of the Requirements for the Degree of Doctor of Philosophy. Florida Atlantic University, Boca Raton, FL, May 2017. Copyright by Ali Alkazimi 2017.

ACKNOWLEDGEMENTS

I would like to thank my advisor, Dr. Eduardo B. Fernandez, for his guidance during these years. He has been a true mentor by supporting me not only during my research but also in my personal life. I would also like to express my gratitude to my committee members, Dr. Mohammad Ilyas, Dr. Maria Petrie, and the members of the Secure Systems Research Group for all their advice and constructive comments on this dissertation. I would like to thank my beloved wife, Abrar Almoosa, and my kids, Fadak, Mohammed, Jude and Lia for giving me support during my studies. I want to thank my father and my mother because without them I would not have accomplished anything. I also want to thank my brothers (Mohammad and Zaid) and my sister (Noor) for their continuous support. I want to thank the Central Bank of Kuwait for their generous support and for giving me the opportunity to achieve my goals.

ABSTRACT

Author: Ali Alkazimi
Title: Misuse Patterns for the SSL/TLS Protocol
Institution: Florida Atlantic University
Dissertation Advisor: Dr. Eduardo B. Fernandez
Degree: Doctor of Philosophy
Year: 2017

SSL/TLS is the main protocol used to provide a secure data connection between a client and a server. The main concern when using this protocol is to prevent the secure connection from being breached.

IPv6 for HelenOS

Charles University in Prague, Faculty of Mathematics and Physics. MASTER THESIS. Mgr. Bc. Antonín Steinhauser: IPv6 for HelenOS. Department of Distributed and Dependable Systems. Supervisor of the master thesis: Mgr. Martin Děcký. Study programme: Informatics. Specialization: Software Systems. Prague 2013.

I am much obliged to my thesis supervisor, Mgr. Martin Děcký, for his advice and hints in this research.

I declare that I carried out this master thesis independently, and only with the cited sources, literature and other professional sources. I understand that my work relates to the rights and obligations under the Act No. 121/2000 Coll., the Copyright Act, as amended, in particular the fact that the Charles University in Prague has the right to conclude a license agreement on the use of this work as a school work pursuant to Section 60 paragraph 1 of the Copyright Act. In Prague date 18. 7. 2013 Antonín Steinhauser

Title: IPv6 for HelenOS
Author: Antonín Steinhauser
Department: Department of Distributed and Dependable Systems
Supervisor of the master thesis: Mgr. Martin Děcký, Department of Distributed and Dependable Systems

Abstract: This thesis extends the HelenOS operating system with support for the new IPv6 protocol. The IPv6 implementation is at the same level as the earlier IPv4 implementation. The HelenOS network stack now offers three networking modes: IPv4 only, IPv6 only, and a dual mode that allows both protocols to be used at once. The thesis describes the previous state of the HelenOS network stack, analyzes the differences between the IPv4 and IPv6 protocols, and justifies the individual strategic decisions.

Pigeon Point Shelf Manager User Guide (May 15, 2018)

User Guide. Pigeon Point Shelf Manager, Release 3.7.1, May 15, 2018. nVent Schroff GmbH. www.pigeonpoint.com schroff.nVent.com

This document is furnished under license and may be used or copied only in accordance with the terms of such license. The content of this manual is furnished for informational use only, is subject to change without notice, and should not be construed as a commitment by nVent. nVent assumes no responsibility or liability for any errors or inaccuracies that may appear in this book. Except as permitted by such license, no part of this publication may be reproduced, stored in a retrieval system, or transmitted, in any form or by any means, electronic, manual, recording, or otherwise, without the prior written permission of nVent. The Pigeon Point Shelf Manager uses an implementation of the MD5 Message-Digest algorithm that is derived from the RSA Data Security, Inc. MD5 Message-Digest algorithm. All nVent marks and logos are owned or licensed by nVent Services GmbH or its affiliates. All other trademarks are the property of their respective owners. nVent reserves the right to change specifications without notice.

Table of contents
1 About this document
1.1 Shelf Manager documentation
1.1.1 Conventions used in this document
1.2 Additional resources
2 Introduction
2.1 In this section
2.2 Intelligent Platform Management: an ATCA overview
2.3 Pigeon Point Board Management Reference: hardware and firmware
2.4 ...

IPsec and IKE Administration Guide

IPsec and IKE Administration Guide Sun Microsystems, Inc. 4150 Network Circle Santa Clara, CA 95054 U.S.A. Part No: 817–2694–10 December 2003 Copyright 2003 Sun Microsystems, Inc. 4150 Network Circle, Santa Clara, CA 95054 U.S.A. All rights reserved. This product or document is protected by copyright and distributed under licenses restricting its use, copying, distribution, and decompilation. No part of this product or document may be reproduced in any form by any means without prior written authorization of Sun and its licensors, if any. Third-party software, including font technology, is copyrighted and licensed from Sun suppliers. Parts of the product may be derived from Berkeley BSD systems, licensed from the University of California. UNIX is a registered trademark in the U.S. and other countries, exclusively licensed through X/Open Company, Ltd. Sun, Sun Microsystems, the Sun logo, docs.sun.com, AnswerBook, AnswerBook2, Sun Crypto Accelerator 1000, Sun Crypto Accelerator 4000 and Solaris are trademarks, registered trademarks, or service marks of Sun Microsystems, Inc. in the U.S. and other countries. All SPARC trademarks are used under license and are trademarks or registered trademarks of SPARC International, Inc. in the U.S. and other countries. Products bearing SPARC trademarks are based upon an architecture developed by Sun Microsystems, Inc. The OPEN LOOK and Sun™ Graphical User Interface was developed by Sun Microsystems, Inc. for its users and licensees. Sun acknowledges the pioneering efforts of Xerox in researching and developing the concept of visual or graphical user interfaces for the computer industry. Sun holds a non-exclusive license from Xerox to the Xerox Graphical User Interface, which license also covers Sun’s licensees who implement OPEN LOOK GUIs and otherwise comply with Sun’s written license agreements.