A Guide to Parsing

Recommended publications

Journal of Computer Science and Engineering Parsing

IJRDO - Journal of Computer Science and Engineering, ISSN: 2456-1843. Volume-1 | Issue-12 | December, 2015 | Paper-3.

Parsing Techniques. Ojesvi Bhardwaj (Student, CSE, Dronacharya College of Engineering, Gurgaon).

Abstract: ‘Parsing’ is the term used to describe the process of automatically building syntactic analyses of a sentence in terms of a given grammar and lexicon. The resulting syntactic analyses may be used as input to a process of semantic interpretation (or perhaps phonological interpretation, where aspects of this, like prosody, are sensitive to syntactic structure). Occasionally, ‘parsing’ is also used to include both syntactic and semantic analysis. We use it in the more conservative sense here, however. In most contemporary grammatical formalisms, the output of parsing is something logically equivalent to a tree, displaying dominance and precedence relations between constituents of a sentence, perhaps with further annotations in the form of attribute-value equations (‘features’) capturing other aspects of linguistic description. However, there are many different possible linguistic formalisms, and many ways of representing each of them, and hence many different ways of representing the results of parsing. We shall assume here a simple tree representation, and an underlying context-free grammatical (CFG) formalism.

1. INTRODUCTION Parsing or syntactic analysis is the process of analyzing a string of symbols, either in natural language or in computer languages, according to the rules of a formal grammar. The term parsing comes from Latin pars (ōrātiōnis), meaning part (of speech). The term has slightly different meanings in different branches of linguistics and computer science.

An Efficient Implementation of the Head-Corner Parser

An Efficient Implementation of the Head-Corner Parser. Gertjan van Noord, Rijksuniversiteit Groningen.

This paper describes an efficient and robust implementation of a bidirectional, head-driven parser for constraint-based grammars. This parser is developed for the OVIS system: a Dutch spoken dialogue system in which information about public transport can be obtained by telephone. After a review of the motivation for head-driven parsing strategies, and head-corner parsing in particular, a nondeterministic version of the head-corner parser is presented. A memoization technique is applied to obtain a fast parser. A goal-weakening technique is introduced, which greatly improves average case efficiency, both in terms of speed and space requirements. I argue in favor of such a memoization strategy with goal-weakening in comparison with ordinary chart parsers because such a strategy can be applied selectively and therefore enormously reduces the space requirements of the parser, while no practical loss in time-efficiency is observed. On the contrary, experiments are described in which head-corner and left-corner parsers implemented with selective memoization and goal weakening outperform "standard" chart parsers. The experiments include the grammar of the OVIS system and the Alvey NL Tools grammar. Head-corner parsing is a mix of bottom-up and top-down processing. Certain approaches to robust parsing require purely bottom-up processing. Therefore, it seems that head-corner parsing is unsuitable for such robust parsing techniques. However, it is shown how underspecification (which arises very naturally in a logic programming environment) can be used in the head-corner parser to allow such robust parsing techniques.

Derivatives of Parsing Expression Grammars

Derivatives of Parsing Expression Grammars. Aaron Moss, Cheriton School of Computer Science, University of Waterloo, Waterloo, Ontario, Canada. [email protected]

This paper introduces a new derivative parsing algorithm for recognition of parsing expression grammars. Derivative parsing is shown to have a polynomial worst-case time bound, an improvement on the exponential bound of the recursive descent algorithm. This work also introduces asymptotic analysis based on inputs with a constant bound on both grammar nesting depth and number of backtracking choices; derivative and recursive descent parsing are shown to run in linear time and constant space on this useful class of inputs, with both the theoretical bounds and the reasonability of the input class validated empirically. This common-case constant memory usage of derivative parsing is an improvement on the linear space required by the packrat algorithm.

1 Introduction. Parsing expression grammars (PEGs) are a parsing formalism introduced by Ford [6]. Any LR(k) language can be represented as a PEG [7], but there are some non-context-free languages that may also be represented as PEGs (e.g. aⁿbⁿcⁿ [7]). Unlike context-free grammars (CFGs), PEGs are unambiguous, admitting no more than one parse tree for any grammar and input. PEGs are a formalization of recursive descent parsers allowing limited backtracking and infinite lookahead; a string in the language of a PEG can be recognized in exponential time and linear space using a recursive descent algorithm, or linear time and space using the memoized packrat algorithm [6]. PEGs are formally defined and these algorithms outlined in Section 3.
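The excerpt above mentions that aⁿbⁿcⁿ can be recognized by a PEG and that memoizing recursive descent gives the packrat algorithm. The following is a minimal sketch of that idea, not the derivative algorithm the paper introduces. It assumes the commonly cited PEG `S <- &(A 'c') 'a'+ B !.`, `A <- 'a' A? 'b'`, `B <- 'b' B? 'c'`; the function names `recognize` and `nested` are hypothetical.

```python
def recognize(text: str) -> bool:
    """Recursive-descent PEG recognizer for a^n b^n c^n (n >= 1) with a memo table."""
    memo = {}  # (rule's opening character, position) -> end position or None

    def nested(open_ch: str, close_ch: str, pos: int):
        """PEG rule  X <- open X? close.  Returns the end position, or None on failure."""
        key = (open_ch, pos)
        if key in memo:  # packrat-style reuse of an earlier result
            return memo[key]
        result = None
        if pos < len(text) and text[pos] == open_ch:
            inner = nested(open_ch, close_ch, pos + 1)
            # X? is greedy: use the inner match if there is one, else match nothing.
            after = inner if inner is not None else pos + 1
            if after < len(text) and text[after] == close_ch:
                result = after + 1
        memo[key] = result
        return result

    # &(A 'c'): lookahead only, consumes no input.
    end_a = nested("a", "b", 0)
    if end_a is None or end_a >= len(text) or text[end_a] != "c":
        return False
    # 'a'+ : skip the leading a's (at least one exists because A matched).
    pos = 0
    while pos < len(text) and text[pos] == "a":
        pos += 1
    # B !. : B must consume everything that is left.
    return nested("b", "c", pos) == len(text)


if __name__ == "__main__":
    for sample in ["abc", "aabbcc", "aabbc", "abcc"]:
        print(sample, recognize(sample))
```

The memo table is what distinguishes packrat parsing from plain recursive descent; on this small grammar it changes little, but it bounds the work per (rule, position) pair to a single evaluation.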

Generalized Probabilistic LR Parsing of Natural Language (Corpora) with Unification-Based Grammars

Generalized Probabilistic LR Parsing of Natural Language (Corpora) with Unification-Based Grammars. Ted Briscoe and John Carroll, University of Cambridge.

We describe work toward the construction of a very wide-coverage probabilistic parsing system for natural language (NL), based on LR parsing techniques. The system is intended to rank the large number of syntactic analyses produced by NL grammars according to the frequency of occurrence of the individual rules deployed in each analysis. We discuss a fully automatic procedure for constructing an LR parse table from a unification-based grammar formalism, and consider the suitability of alternative LALR(1) parse table construction methods for large grammars. The parse table is used as the basis for two parsers: a user-driven interactive system that provides a computationally tractable and labor-efficient method of supervised training of the statistical information required to drive the probabilistic parser. The latter is constructed by associating probabilities with the LR parse table directly. This technique is superior to parsers based on probabilistic lexical tagging or probabilistic context-free grammar because it allows for a more context-dependent probabilistic language model, as well as use of a more linguistically adequate grammar formalism. We compare the performance of an optimized variant of Tomita's (1987) generalized LR parsing algorithm to an (efficiently indexed and optimized) chart parser. We report promising results of a pilot study training on 150 noun definitions from the Longman Dictionary of Contemporary English (LDOCE) and retesting on these plus a further 55 definitions. Finally, we discuss limitations of the current system and possible extensions to deal with lexical (syntactic and semantic) frequency of occurrence.

CS 375, Compilers: Class Notes Gordon S. Novak Jr. Department Of

CS 375, Compilers: Class Notes. Gordon S. Novak Jr., Department of Computer Sciences, University of Texas at Austin. [email protected] http://www.cs.utexas.edu/users/novak Copyright © Gordon S. Novak Jr. (A few slides reproduce figures from Aho, Lam, Sethi, and Ullman, Compilers: Principles, Techniques, and Tools, Addison-Wesley; these have footnote credits.)

I wish to preach not the doctrine of ignoble ease, but the doctrine of the strenuous life. – Theodore Roosevelt

Innovation requires Austin, Texas. We need faster chips and great compilers. Both those things are from Austin. – Guy Kawasaki

Course Topics
• Introduction
• Lexical Analysis: characters → words (lexer)
  – Regular grammars
  – Hand-written lexical analyzer
  – Number conversion
  – Regular expressions
  – LEX
• Syntax Analysis: words → sentences (parser)
  – Context-free grammars
  – Operator precedence
  – Recursive descent parsing
  – Shift-reduce parsing, YACC
  – Intermediate code
  – Symbol tables
• Code Generation
  – Code generation from trees
  – Register assignment
  – Array references
  – Subroutine calls
• Optimization
  – Constant folding, partial evaluation, data flow analysis
• Object-oriented programming

Pascal Test Program

    program graph1(output);        { Jensen & Wirth 4.9 }
    const d = 0.0625;              {1/16, 16 lines for [x,x+1]}
          s = 32;                  {32 character widths for [y,y+1]}
          h = 34;                  {character position of x-axis}
          c = 6.28318;             {2*pi}
          lim = 32;
    var x,y : real;
        i,n : integer;
    begin
      for i := 0 to lim do
        begin
          x := d*i;
          y := exp(-x)*sin(c*x);
          n := round(s*y) + h;
          repeat
            write(' ');
            n := n-1
          until n=0;
          writeln('*')
        end
    end.

(Program output: one '*' per line, plotting y = exp(-x)*sin(2*pi*x).)

Introduction
• What a compiler does; why we need compilers
• Parts of a compiler and what they do
• Data flow between the parts

Machine Language
A computer is basically a very fast pocket calculator attached to a large memory.

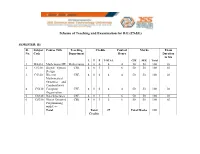

Scheme of Teaching and Examination for BE

Scheme of Teaching and Examination for B.E (CS&E), SEMESTER: III

| Sl. No. | Subject Code | Course Title | Teaching Department | Credits L | Credits T | Credits P | Credits Total | Contact Hours | Marks CIE | Marks SEE | Marks Total | Exam Duration (hrs) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | MA310 | Mathematics III | Mathematics | 4 | 0 | 0 | 4 | 4 | 50 | 50 | 100 | 03 |
| 2 | CS310 | Digital System Design | CSE | 4 | 0 | 1 | 5 | 6 | 50 | 50 | 100 | 03 |
| 3 | CS320 | Discrete Mathematical Structures and Combinatorics | CSE | 4 | 0 | 0 | 4 | 4 | 50 | 50 | 100 | 03 |
| 4 | CS330 | Computer Organization | CSE | 4 | 0 | 0 | 4 | 4 | 50 | 50 | 100 | 03 |
| 5 | CS340 | Data Structures | CSE | 4 | 0 | 1 | 5 | 6 | 50 | 50 | 100 | 03 |
| 6 | CS350 | Object Oriented Programming with C++ | CSE | 4 | 0 | 1 | 5 | 6 | 50 | 50 | 100 | 03 |

Total Credits: 27. Total Marks: 600.

Scheme of Teaching and Examination for B.E (CS&E), SEMESTER: IV

| Sl. No. | Subject Code | Course Title | Teaching Department | Credits L | Credits T | Credits P | Credits Total | Contact Hours | Marks CIE | Marks SEE | Marks Total | Exam Duration (hrs) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | MA410 | Probability, Statistics and Queuing | Mathematics | 4 | 0 | 0 | 4 | 4 | 50 | 50 | 100 | 03 |
| 2 | CS410 | Operating Systems | CSE | 4 | 0 | 1 | 5 | 6 | 50 | 50 | 100 | 03 |
| 3 | CS420 | Design and Analysis of Algorithms | CSE | 4 | 0 | 1 | 5 | 6 | 50 | 50 | 100 | 03 |
| 4 | CS430 | Theory of Computation | CSE | 4 | 0 | 0 | 4 | 4 | 50 | 50 | 100 | 03 |
| 5 | CS440 | Microprocessors | CSE | 4 | 0 | 1 | 5 | 6 | 50 | 50 | 100 | 03 |
| 6 | CS450 | Data Communication | CSE | 4 | 0 | 0 | 4 | 4 | 50 | 50 | 100 | 03 |

Total Credits: 27. Total Marks: 600.

Scheme of Teaching and Examination for B.E (CS&E), SEMESTER: V

LATE Ain't Earley: a Faster Parallel Earley Parser

LATE Ain't Earley: A Faster Parallel Earley Parser. Peter Ahrens, John Feser, Joseph Hui. [email protected] [email protected] [email protected] July 18, 2018.

Abstract: We present the LATE algorithm, an asynchronous variant of the Earley algorithm for parsing context-free grammars. The Earley algorithm is naturally task-based, but is difficult to parallelize because of dependencies between the tasks. We present the LATE algorithm, which uses additional data structures to maintain information about the state of the parse so that work items may be processed in any order. This property allows the LATE algorithm to be sped up using task parallelism. We show that the LATE algorithm can achieve a 120x speedup over the Earley algorithm on a natural language task.

1 Introduction. Improvements in the efficiency of parsers for context-free grammars (CFGs) have the potential to speed up applications in software development, computational linguistics, and human-computer interaction. The Earley parser has an asymptotic complexity that scales with the complexity of the CFG, a unique, desirable trait among parsers for arbitrary CFGs. However, while the more commonly used Cocke-Younger-Kasami (CYK) [2, 5, 12] parser has been successfully parallelized [1, 7], the Earley algorithm has seen relatively few attempts at parallelization. Our research objectives were to understand when there exists parallelism in the Earley algorithm, and to explore methods for exploiting this parallelism. We first tried to naively parallelize the Earley algorithm by processing the Earley items in each Earley set in parallel. We found that this approach does not produce any speedup, because the dependencies between Earley items force much of the work to be performed sequentially.
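To make the "Earley items" and "Earley sets" in the excerpt above concrete, here is a minimal sketch of the classic sequential Earley recognizer. It is not the LATE algorithm, and it assumes a grammar without empty productions. The names `earley_recognize`, `grammar` and `add` are hypothetical; an item is the tuple (lhs, rhs, dot, origin).

```python
def earley_recognize(tokens, grammar, start):
    """Sequential Earley recognizer (assumes no empty right-hand sides).

    grammar maps each nonterminal to a list of right-hand sides (tuples of symbols);
    any symbol that is not a key of `grammar` is treated as a terminal.
    """
    n = len(tokens)
    sets = [[] for _ in range(n + 1)]     # sets[k]: items ending at position k, in order
    seen = [set() for _ in range(n + 1)]  # same items, for duplicate detection

    def add(k, item):
        if item not in seen[k]:
            seen[k].add(item)
            sets[k].append(item)

    for rhs in grammar[start]:
        add(0, (start, rhs, 0, 0))

    for k in range(n + 1):
        i = 0
        while i < len(sets[k]):           # sets[k] grows while it is being processed
            lhs, rhs, dot, origin = sets[k][i]
            i += 1
            if dot < len(rhs):
                symbol = rhs[dot]
                if symbol in grammar:     # predict: expand the expected nonterminal
                    for alternative in grammar[symbol]:
                        add(k, (symbol, alternative, 0, k))
                elif k < n and tokens[k] == symbol:  # scan: consume one token
                    add(k + 1, (lhs, rhs, dot + 1, origin))
            else:                         # complete: advance items waiting on lhs
                for p_lhs, p_rhs, p_dot, p_origin in sets[origin]:
                    if p_dot < len(p_rhs) and p_rhs[p_dot] == lhs:
                        add(k, (p_lhs, p_rhs, p_dot + 1, p_origin))

    return any(lhs == start and dot == len(rhs) and origin == 0
               for lhs, rhs, dot, origin in sets[n])


if __name__ == "__main__":
    # Ambiguous, left-recursive toy grammar: E -> E + E | n
    toy = {"E": [("E", "+", "E"), ("n",)]}
    print(earley_recognize(["n", "+", "n", "+", "n"], toy, "E"))
    print(earley_recognize(["n", "+"], toy, "E"))
```

The completion step reads from an earlier set while the current set is still growing, which is one way to see the dependencies between items that the excerpt describes.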

CWI Scanprofile/PDF/300

Centrum voor Wiskunde en Informatica, Centre for Mathematics and Computer Science. J. Heering, P. Klint, J.G. Rekers: Incremental generation of parsers. Computer Science/Department of Software Technology, Report CS-R8822, May.

The Centre for Mathematics and Computer Science is a research institute of the Stichting Mathematisch Centrum, which was founded on February 11, 1946, as a nonprofit institution aiming at the promotion of mathematics, computer science, and their applications. It is sponsored by the Dutch Government through the Netherlands Organization for the Advancement of Pure Research (Z.W.O.). Copyright © Stichting Mathematisch Centrum, Amsterdam.

Incremental Generation of Parsers. J. Heering, Department of Software Technology, Centre for Mathematics and Computer Science, P.O. Box 4079, 1009 AB Amsterdam, The Netherlands. P. Klint, Department of Software Technology, Centre for Mathematics and Computer Science, P.O. Box 4079, 1009 AB Amsterdam, The Netherlands, and Programming Research Group, University of Amsterdam, P.O. Box 41882, 1009 DB Amsterdam, The Netherlands. J. Rekers, Department of Software Technology, Centre for Mathematics and Computer Science, P.O. Box 4079, 1009 AB Amsterdam, The Netherlands.

A parser checks whether a text is a sentence in a language. Therefore, the parser is provided with the grammar of the language, and it usually generates a structure (parse tree) that represents the text according to that grammar. Most present-day parsers are not directly driven by the grammar but by a 'parse table', which is generated by a parse table generator. A table-based parser works more efficiently than a grammar-based parser does, and provided that the parser is used often enough, the cost of generating the parse table is outweighed by the gain in parsing efficiency.

Lecture 10: CYK and Earley Parsers Alvin Cheung Building Parse Trees Maaz Ahmad CYK and Earley Algorithms Talia Ringer More Disambiguation

Hack Your Language! CSE401 Winter 2016, Introduction to Compiler Construction. Ras Bodik, Alvin Cheung, Maaz Ahmad, Talia Ringer, Ben Tebbs.

Lecture 10: CYK and Earley Parsers
Building Parse Trees; CYK and Earley algorithms; More Disambiguation

Announcements
• HW3 due Sunday
• Project proposals due tonight
  – No late days
• Review session this Sunday 6-7pm EEB 115

Outline
• Last time we saw how to construct AST from parse tree
• We will now discuss algorithms for generating parse trees from input strings

Today
• CYK parser: builds the parse tree bottom up
• More Disambiguation: forcing the parser to select the desired parse tree
• Earley parser: solves CYK’s inefficiency

CYK parser

Parser Motivation
• Given a grammar G and an input string s, we need an algorithm to:
  – Decide whether s is in L(G)
  – If so, generate a parse tree for s
• We will see two algorithms for doing this today
  – Many others are available
  – Each with different tradeoffs in time and space

CYK Algorithm
• Parsing algorithm for context-free grammars
• Invented by John Cocke, Daniel Younger, and Tadao Kasami
• Basic idea, given string s with n tokens:
  1. Find production rules that cover 1 token in s
  2. Use 1. to find rules that cover 2 tokens in s
  3. Use 2. to find rules that cover 3 tokens in s
  4. …
  N. Use N-1. to find rules that cover n tokens in s. If this succeeds then s is in L(G), else it is not

A graphical way to visualize CYK
Initial graph: the input (terminals). Repeat: add non-terminal edges until no more can be added.
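The "cover 1 token, then 2 tokens, ... then n tokens" idea from the CYK slide above can be sketched as a table-filling recognizer. This is a minimal illustration that assumes the grammar is already in Chomsky normal form; the function name `cyk`, the rule encoding, and the toy grammar for aⁿbⁿ are hypothetical and not taken from the lecture.

```python
def cyk(tokens, unary, binary, start):
    """CYK recognizer for a grammar in Chomsky normal form.

    unary:  list of (lhs, terminal) rules, e.g. ("A", "a")
    binary: list of (lhs, (left, right)) rules, e.g. ("S", ("A", "B"))
    """
    n = len(tokens)
    if n == 0:
        return False
    # table[i][length] = set of nonterminals deriving tokens[i : i + length]
    table = [[set() for _ in range(n + 1)] for _ in range(n)]

    # Step 1: rules that cover a single token.
    for i, token in enumerate(tokens):
        table[i][1] = {lhs for lhs, terminal in unary if terminal == token}

    # Steps 2..n: combine two shorter spans into a longer one.
    for length in range(2, n + 1):
        for i in range(n - length + 1):
            for split in range(1, length):
                left_set = table[i][split]
                right_set = table[i + split][length - split]
                for lhs, (left, right) in binary:
                    if left in left_set and right in right_set:
                        table[i][length].add(lhs)

    return start in table[0][n]


if __name__ == "__main__":
    # Toy CNF grammar for a^n b^n:  S -> A B | A C,  C -> S B,  A -> a,  B -> b
    unary_rules = [("A", "a"), ("B", "b")]
    binary_rules = [("S", ("A", "B")), ("S", ("A", "C")), ("C", ("S", "B"))]
    print(cyk(list("aabb"), unary_rules, binary_rules, "S"))
    print(cyk(list("abab"), unary_rules, binary_rules, "S"))
```

The three nested loops over span length, start position and split point give the cubic running time in the number of tokens for a fixed grammar.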

Parsing Based on N-Path Tree-Controlled Grammars

Theoretical and Applied Informatics, ISSN 1896–5334, Vol. 23 (2011), no. 3-4, pp. 213–228. DOI: 10.2478/v10179-011-0015-7

Parsing Based on n-Path Tree-Controlled Grammars. Martin Čermák, Jiří Koutný, Alexander Meduna. Formal Model Research Group, Department of Information Systems, Faculty of Information Technology, Brno University of Technology, Božetěchova 2, 612 66 Brno, Czech Republic. {icermak, ikoutny, meduna}@fit.vutbr.cz. Received 15 November 2011, Revised 1 December 2011, Accepted 4 December 2011.

Abstract: This paper discusses a recently introduced kind of linguistically motivated restriction placed on tree-controlled grammars: context-free grammars with some root-to-leaf paths in their derivation trees restricted by a control language. We deal with restrictions placed on n ≥ 1 paths controlled by a deterministic context-free language, and we recall several basic properties of such a rewriting system. Then, we study the possibilities of corresponding parsing methods working in polynomial time and demonstrate that some non-context-free languages can be generated by this regulated rewriting model. Furthermore, we illustrate the syntax analysis of LL grammars with controlled paths. Finally, we briefly discuss how to base parsing methods on bottom-up syntax analysis.

Keywords: regulated rewriting, derivation tree, tree-controlled grammars, path-controlled grammars, parsing, n-path tree-controlled grammars

1. Introduction. The investigation of context-free grammars with restricted derivation trees represents an important trend in today’s formal language theory (see [3], [6], [10], [11], [12], [13] and [15]). In essence, these grammars generate their languages just like ordinary context-free grammars do, but their derivation trees have to satisfy some simple prescribed conditions.

Abstract Syntax Trees & Top-Down Parsing

Abstract Syntax Trees & Top-Down Parsing. Compiler Design 1 (2011).

Review of Parsing
• Given a language L(G), a parser consumes a sequence of tokens s and produces a parse tree
• Issues:
  – How do we recognize that s ∈ L(G)?
  – A parse tree of s describes how s ∈ L(G)
  – Ambiguity: more than one parse tree (possible interpretation) for some string s
  – Error: no parse tree for some string s
  – How do we construct the parse tree?

Abstract Syntax Trees
• So far, a parser traces the derivation of a sequence of tokens
• The rest of the compiler needs a structural representation of the program
• Abstract syntax trees
  – Like parse trees but ignore some details
  – Abbreviated as AST

Abstract Syntax Trees (Cont.)
• Consider the grammar E → int | ( E ) | E + E
• And the string 5 + (2 + 3)
• After lexical analysis (a list of tokens): int5 ‘+’ ‘(‘ int2 ‘+’ int3 ‘)’
• During parsing we build a parse tree …

Example of Parse Tree
• Traces the operation of the parser
• Captures the nesting structure
• But too much info
  – Parentheses
  – Single-successor nodes
[Figure: parse tree for 5 + (2 + 3). The root E has children E, +, E; the left E derives int5; the right E derives ( E ), whose inner E has children E, +, E deriving int2 and int3.]

Example of Abstract Syntax Tree
• Also captures the nesting structure
• But abstracts from the concrete syntax, so it is more compact and easier to use
• An important data structure in a compiler
[Figure: AST for 5 + (2 + 3): PLUS(5, PLUS(2, 3)).]

Semantic Actions
• This is what we’ll use to construct ASTs
• Each grammar symbol may have attributes
  – An attribute is a property of a programming language construct
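The difference between the parse tree and the AST for 5 + (2 + 3) in the slides above can be sketched with a small top-down parser that builds AST nodes directly. This is an illustration under stated assumptions, not the course's implementation: the left-recursive rule E → E + E is replaced by the equivalent iterative form E → T ('+' T)*, T → int | ( E ), and the names `Int`, `Plus`, `tokenize` and `parse` are hypothetical.

```python
import re
from dataclasses import dataclass
from typing import List, Union


@dataclass(frozen=True)
class Int:
    value: int


@dataclass(frozen=True)
class Plus:
    left: "Node"
    right: "Node"


Node = Union[Int, Plus]


def tokenize(source: str) -> List[str]:
    """Split into integer, '+', '(' and ')' tokens; other characters are skipped."""
    return re.findall(r"\d+|[+()]", source)


def parse(tokens: List[str]) -> Node:
    """Recursive descent for  E -> T ('+' T)*,  T -> int | '(' E ')'."""
    pos = 0

    def term() -> Node:
        nonlocal pos
        if pos >= len(tokens):
            raise SyntaxError("unexpected end of input")
        token = tokens[pos]
        pos += 1
        if token == "(":
            node = expr()
            if pos >= len(tokens) or tokens[pos] != ")":
                raise SyntaxError("expected ')'")
            pos += 1
            return node  # parentheses leave no trace in the AST
        if token.isdigit():
            return Int(int(token))
        raise SyntaxError(f"unexpected token {token!r}")

    def expr() -> Node:
        nonlocal pos
        node = term()
        while pos < len(tokens) and tokens[pos] == "+":
            pos += 1
            node = Plus(node, term())  # left-associative chain of PLUS nodes
        return node

    tree = expr()
    if pos != len(tokens):
        raise SyntaxError("trailing input")
    return tree


if __name__ == "__main__":
    print(parse(tokenize("5 + (2 + 3)")))
```

The parenthesized subexpression becomes an ordinary `Plus` node, which is the sense in which the AST abstracts from the concrete syntax.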

Simple, Efficient, Sound-And-Complete Combinator Parsing for All Context

Simple, efficient, sound-and-complete combinator parsing for all context-free grammars, using an oracle. OCaml 2014 workshop, talk proposal. Tom Ridge, University of Leicester, UK. [email protected]

This proposal describes a parsing library that is based on current work due to be published as [3]. The talk will focus on the OCaml library and its usage.

1. Combinator parsers. Parsers for context-free grammars can be implemented directly and naturally in a functional style known as “combinator parsing”, using recursion following the structure of the grammar rules. Traditionally parser combinators have struggled to handle all features of context-free grammars, such as left recursion.

2. Sound and complete combinator parsers. Previous work [2] introduced novel parser combinators that could be used to parse all context-free grammars. A parser generator built using these combinators was proved both sound and complete in the HOL4 theorem prover. This previous approach has all the traditional benefits of combi- […]

[…] grammar, the start symbol and the input to the back-end Earley parser, which parses the input and returns a parsing oracle. The oracle is then used to guide the action phase of the parser.

4. Real-world performance. In addition to O(n³) theoretical performance, we also optimize our back-end Earley parser. Extensive performance measurements on small grammars (described in [3]) indicate that the performance is arguably better than the Happy parser generator [1].

5. Online distribution. This work has led to the P3 parsing library for OCaml, freely available online. The distribution includes extensive examples. Some of the examples are:
• Parsing and disambiguation of arithmetic expressions using precedence annotations.
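The "combinator parsing" style mentioned in the proposal above can be illustrated with a few higher-order functions. This is a minimal sketch of traditional list-of-results combinators, written in Python rather than OCaml, and it is not the P3 library or its API. The names `lit`, `seq`, `alt`, `lazy`, `expr` and `parses` are hypothetical.

```python
def lit(s):
    """Parser that matches the literal string s at position i."""
    def parser(text, i):
        return [(s, i + len(s))] if text.startswith(s, i) else []
    return parser


def seq(p, q):
    """Run p, then q on the rest; pair up the results."""
    def parser(text, i):
        return [((a, b), k) for a, j in p(text, i) for b, k in q(text, j)]
    return parser


def alt(p, q):
    """Try both alternatives and keep every result."""
    def parser(text, i):
        return p(text, i) + q(text, i)
    return parser


def lazy(thunk):
    """Delay construction of a parser so that grammar rules can be recursive."""
    def parser(text, i):
        return thunk()(text, i)
    return parser


def expr():
    # Right-recursive toy grammar:  E -> "1" "+" E | "1"
    return alt(seq(lit("1"), seq(lit("+"), lazy(expr))), lit("1"))


def parses(text):
    """All parse values that consume the whole input."""
    return [value for value, end in expr()(text, 0) if end == len(text)]


if __name__ == "__main__":
    print(parses("1+1"))
    print(parses("1+"))
```

Writing the same toy grammar left-recursively, as E -> E "+" "1" | "1", would make `expr` call itself at the same input position without consuming anything and never terminate. That is the traditional limitation the proposal refers to.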