Mining Multi-Word Named Entity Equivalents from Comparable Corpora

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Dabboo Ratnani Photography

+91-8068970148 Dabboo Ratnani Photography https://www.indiamart.com/dabboo-ratnani-photography/ About Us A name that is synonymous with excellence & creativity. For over a decade & half, Dabboo Ratnani has continued to master the art of photography. From personality portraits and advertising campaigns to fashion and magazine layouts, Dabboo has developed and showcased a discriminating and unique style of his profession. Known for his unassuming disposition, sincere dedication and an eye for making his subjects seem surreal, lens-legend Dabboo Ratnani’s resume shrieks names that scrape the upper echelons of Bollywood’s aristocracy. Some of these celebrities have refused to shoot with anyone but the man himself. Mr. Bachchan, Sachin Tendulkar, Late Michael Jackson, Boris Becker, Shah Rukh Khan, Hrithik Roshan, Sanjay Dutt, Aamir Khan, Salman Khan, Aishwarya Rai Bachchan, Preity Zinta, Kareena Kapoor, Bipasha Basu, Rahul Dravid, Ranbir Kapoor, Akshay Kumar, Mahendra Singh Dhoni, Sania Mirza are just a few who deem this man as the only one who can capture the good despite the bad and the ugly!
Always accommodating and admirably relaxed, never pushy but gently persuasive, Dabboo has a knack of getting his pictures despite the odds… For more information, please visit https://www.indiamart.com/dabboo-ratnani-photography/aboutus.html OTHER SERVICES Products & Services: Advertising Photography Services, Editorial Photography Services, Photography Services, Wedding Photography Services. Factsheet: Nature of Business: Service Provider. CONTACT US Dabboo Ratnani Photography Contact Person: Dabboo Ratnani 15, Vikas Centre, 106, SV Road, Santacruz West Mumbai - 400054, Maharashtra, India +91-8068970148 https://www.indiamart.com/dabboo-ratnani-photography/

THE RECORD NEWS: The Journal of the ‘Society of Indian Record Collectors’, ISSN 0971-7942, Volume: Annual - TRN 2011, S.I.R.C

THE RECORD NEWS. The journal of the ‘Society of Indian Record Collectors’. ISSN 0971-7942. Volume: Annual - TRN 2011. S.I.R.C. Units: Mumbai, Pune, Solapur, Nanded and Amravati. Feature Articles: Music of Mughal-e-Azam; Bai, Begum, Dasi, Devi and Jan’s on gramophone records; Spiritual message of Gandhiji; Lyricist Gandhiji; Parlophon records in Sri Lanka; The First playback singer in Malayalam Films. ‘The Record News’: Annual magazine of ‘Society of Indian Record Collectors’ [SIRC] {Established: 1990}. President: Narayan Mulani. Hon. Secretary: Suresh Chandvankar. Hon. Treasurer: Krishnaraj Merchant. Patron Member: Mr. Michael S. Kinnear, Australia. Honorary Members: V. A. K. Ranga Rao, Chennai; Harmandir Singh Hamraz, Kanpur. Membership Fee [inclusive of the journal subscription]: Annual Membership Rs. 1,000, Overseas US $ 100; Life Membership Rs. 10,000, Overseas US $ 1,000. Annual term: July to June. Members joining anytime during the year [July-June] pay the full

Sachin Tendulkar: Curriculum Vitae

Sachin Tendulkar: Curriculum Vitae i OBJECTIVE [ ] To seek career challenges keeping in view my past achievements and future goals. And to set new industry benchmarks in everything I endeavour. PROFESSIONAL EXPERIENCE International Cricketer: Indian National Cricket Team [1989 onwards] First Class Cricketer: Mumbai, West Zone, Yorkshire [1988 onwards] Junior Cricketer: Shardashram School, Bombay U-15, West Zone U-15 [1985-1988]. LEADERSHIP EXPERIENCE Indian Cricket Team: 1996-1997; 1999-2000. Others: Mumbai, West Zone, Mumbai Indians, etc. [Various Times] KEY OPERATIONS & MISSIONS UNDERTAKEN 1. 664* — Enough said. [Mumbai, 1988] 2. 114* — Rescue mission against Spiteful Yellow Men on Green Earth. [Perth, 1991] 3. 523 runs — Mission My World Cup. [India, 1996] 4. 155* — Operation Deflate Warne [Chennai, 1998] 5. 143 & 134 — Operation Desert Storm [Sharjah, 1998] 6. 136 — Rescue Mission against the Vile Green Warriors on Dusty Earth [Chennai, 1999] 7. 98 — Operation Tame Vile Green Warriors [Centurion, 2003] 8. 672 runs — Mission My World Cup II. [South Africa, 2003] 9. 241* and 60* — Mission Avoid Cover Drive [Sydney, 2004] 10. 117* and 93 — Operation Slay Goliath [Sydney & Brisbane, 2008] 11. 103* — Mission Heal Mumbai [Chennai, 2008] 12. 200* — Mt 200 [Gwalior 2010] OTHER MAJOR INITIATIVES 1. 122 — Mission Hold The Empire [Edgbaston, 1996] 2. 126 — Operation Protect Final Frontier [Chennai, 2001] 3. 117 — Operation Kings of Queens Park [Port of Spain, 2002] 4. 193 — Mission Conquer Leeds [Leeds, 2002] 5. 194* — Mission Sultan of Multan [Multan, 2004] 6. 111* — Half-centurion in Centurion [Centurion, 2010] CRISIS MANAGEMENT EXPERTISE 1. 119* — Operation Bat Till Bedtime [Manchester, 1990] 2. 82 — Mission Find New Opener [Christchurch, 1993] 3. -

Rise of the Millennials: India's Most Valuable Celebrity

Rise of the Millennials: INDIA’S MOST VALUABLE CELEBRITY BRANDS, DECEMBER 2017. Table of Contents: PREFACE: Rise of the Millennials; SUMMARY: Celebrity Brand Values; INTRODUCTION: Celebrity Endorsements in India; UNDERSTANDING ENDORSEMENTS: Overview of Celebrity Endorsements, Current Celebrity Endorsements; RECENT TRENDS: Rise of Millennial Endorsers, Celebrities endorsing tourism campaigns, Increasing Celebrity backed sport tournaments, Growing appeal of Sportswomen, Celebrity backed merchandising brands; METHODOLOGY: Our Approach and Methodology; CONCLUSION. Duff & Phelps. PREFACE: Rise of the Millennials. Dear Readers, It gives me great pleasure to present the third edition of our report on India’s most valuable celebrity brands. The theme of this year’s report, ‘Rise of the Millennials’, recognizes the ascent of millennial celebrity endorsers to the top of our brand value rankings. India currently has a millennial population of around 400 million and is expected to become the youngest country in the world by 2020 with a median age of 29. India’s millennial population is experiencing high growth in disposable income levels and thus, it is increasingly becoming crucial for companies to add endorsers who can influence this segment of the population. For the first time since inception of our rankings, Shah Rukh Khan has slipped from the number one rank, replaced by Virat Kohli, who has become the primary choice of product brands to engage and attract consumers. This can be attributed to his extraordinary on-field performances and off-field charisma. Varun Gupta, Managing Director, Duff & Phelps India

Navra Mazha Navsacha Full Marathi Movie Download Free

Navra Mazha Navsacha Full Marathi Movie Download Free ERROR_GETTING_IMAGES-1 1 / 3 Navra Mazha Navsacha Full Marathi Movie Download Free 2 / 3 DOWNLOAD: http://urllie.com/m2hg5 Navra Mazha Navsacha Hd 1080p Hindi by Maralr, . City Of Gold 5 full movie in hindi free download hd .... Manthan Ek Kashmakash Hai Full Movie Hd 720p .. Manthan Ek Kashmakash ... 949226e60e. Navra Mazha Navsacha full movie download in hindi mp4.. Free download full video songs of : Navra Maza Navsa Cha & page ... Navara Maza Navsacha - Marathi Movie. marathi movie songs from Navra Maza .... Navra Majha Navsacha Marathi movie produced and directed by Sachin Pilgaonkar.. Watch Navra Mazha Navsacha Full Movie in HD ... Navra Mazha Navsacha on IMDb: Movies, TV, Celebs, and more...,Navra Mazha Navsacha .... Navra Mazha Navsacha Poster ... Watched Marathi Movies .... See full cast » ... father,Bhakti now tries all possible ways to convince Wacky to full fill the vow but .... Navra maza navsacha marathi movie Navra Maza Navsacha film loosely based on the Hindi ... The movie portrays the journey of the couple from Mumbai to Ganapati Pule to fulfill a vow. .... Marathi calendar 2020 Android App free download .... Navra Majha Navsacha Full Movie Download Sonu Nigam in movie ... Marathi Movie Watch Online Watch Navara Maza Navsacha online, Free .... Navra Mazha Navsacha Movie In... Post Reply. Add Poll. Contalver replied. a year ago. Navra Mazha Navsacha Movie In Hd Free Download > DOWNLOAD .... Navra Maza Navsacha (Marathi: नवरा माझा नवसाचा) is a 2004 Marathi film. It was later remade in Kannada as Ekadantha. The film stars Sachin Pilgaonkar , Ashok Saraf and Supriya Pilgaonkar in leading roles. -

Arrested Theatre Activists at Risk of Torture

UA: 84/13 Index: ASA 20/016/2013 India Date: 5 April 2013 URGENT ACTION ARRESTED THEATRE ACTIVISTS AT RISK OF TORTURE Theatre activists Sheetal Sathe and Sachin Mali were arrested on 2 April 2013 on various charges, including criminal conspiracy and being part of a banned organization. They are being held in Mumbai, India and are at risk of torture or other ill-treatment. Sheetal Sathe and Sachin Mali are members of Kabir Kala Manch (KKM), a group which uses protest music and theatre to campaign on human rights issues, including dalit rights and caste-based violence and discrimination in the western Indian state of Maharashtra. On 17 April 2011 they were two of 15 people charged by the police under India’s principal anti-terror legislation of being members of, and supporting and recruiting people for, the Communist Party of India (Maoist) - a banned armed group fighting for more than a decade to overthrow elected governments in several Indian states. They also faced several criminal charges including extortion, forgery and impersonation. Seven people were arrested, while the others – including Sheetal Sathe and Sachin Mali, who are married to each other – could not be traced by the police until earlier this month. On 2 April 2013, Sheetal Sathe and Sachin Mali appeared before the legislative assembly of Maharashtra in what they said was a protest against the charges levelled against them. They were arrested by the Mumbai police and brought before a magistrate, who remanded Sheetal Sathe to judicial custody until 17 April, and Sachin Mali to police custody until 10 April. -

Navra Mazha Navsacha 720P Download

Navra Mazha Navsacha 720p Download Navra Mazha Navsacha 720p Download 1 / 3 2 / 3 Click 3 full movie in hindi dubbed watch online free The Angrez tamil full movie download Navra Mazha Navsacha 1 download 720p movie .... Directed by Sachin Pilgoankar, Sachin. With Sachin, Supriya Pilgaonkar, Ashok Saraf, Anand Abhyankar. Journey of a husband and wife from Mumbai to .... force majeure 2014 hd rip 720p. ... lumion 3 full crack David movie dvdrip free download navra maza navsacha marathi full movie download.. Zoom [+] Image Kafir Filmi 720p Or 108013 > DOWNLOAD. Spoiler:.. 2 Navra Mazha Navsacha movie download 720p . Black Panther - 2018 .... Navra Mazha Navsacha 1 Download 720p Movie . (1990) and Navra Mazha Navsacha .. Vedshastramaji (Aarti) MP3 song from movie Navra .... Navra Mazha Navsacha . Lost in a Crowd of Two full marathi movie hd Chatur Singh Two Star movie watch online 720p movies Khap 1 full .... Navra Mazha Navsacha full movie hd 720p free Read more about download, navra, navsacha, mazha, marathi and hindi.. Happy Husbands full movie free .... Navra Maza Navsacha Marathi Movie Online - Sachin Pilgaonkar, Supriya ... Faster Fene 2017 Marathi 720p HDRip 900MB Hd Movies, Movies To Watch, .... Movie Online Free Download Navra Mazha Navsacha Full Marathi Movie . full hd Jaago movies free download 720p .. Movies Telugu Movies Hindi Dubbed .... Download latest Malayalam movie mp3 songs in high quality ... Pratidwand hd 720p download · Navra Mazha Navsacha 1 english sub 1080p hd movies.. 480p 300mb dual audio,the raid full movie in hindi 720p download,the raid 2 ..... Navra Mazha Navsacha 1 download 720p movie Ssimran .... Okka Magadu (2008) 720p UNCUT HDRip x264 Eng Subs [Dual Audio] [H Full .. -

'Ekulti Ek' Stars Sachin Pilgaonkar, Shriya Pilgaonkar & Ashok Saraf Go

Press Release For Immediate Publication ‘Ekulti Ek’ stars Sachin Pilgaonkar, Shriya Pilgaonkar & Ashok Saraf go ‘live’ at the virtual interactive premiere of the film • Brought to you by UFO Moviez India Limited and powered by Valuable Edutainment, across UFO theatres in Mumbai, Pune, Thane, Nasik & Aurangabad • Celebrities such as Sulochana, Kiran Shantaram, Renuka Shahane, Ashutosh Rana, Sumeet Raghavan, Nishikant Kamat, Satish Shah & Nivedita Saraf attend the special premiere Mumbai, May 24, 2013: It was truly a golden jubilee moment in the Indian film industry as several film personalities and celebrities attended the interactive virtual premiere, enabled by UFO Moviez India Limited and powered by Valuable Edutainment, of actor-director-producer Sachin Pilgaonkar’s new film Ekulti Ek. The virtual premiere of Ekulti Ek, starring his debutante daughter Shriya Pilgaonkar was held at Mumbai’s historic Citylight theatre near Shivaji Park, marking the completion of 50 years of Sachin’s career in the world of films. Along with the actors Sachin, Shriya, Supriya Pilgaonkar and Ashok Saraf, some of the luminaries seen at the virtual premiere included the grand old lady of cinema – Sulochana, Kiran Shantaram, Renuka Shahane, Ashutosh Rana, Sumeet Raghavan, Nishikant Kamat, Satish Shah and Nivedita Saraf. Based on the sensitive theme of a father-daughter relationship, Ekulti Ek is an entertainer being screened in 47 UFO theatres across Maharashtra from Friday, 24th May. The virtual interactive premiere of Ekulti Ek served as a golden jubilee treat for Sachin’s fans. Ekulti Ek, Sachin’s first film to have a virtual premiere, was shown to viewers in UFO digital theatres located in Mumbai, Pune, Thane, Nashik and Aurangabad at the same time. -


Hichki 4 Movie in Tamil Free Download

1 / 5 Hichki 4 Movie In Tamil Free Download Download Hichki Mp4 Download Movie Six X Movie Download In Tamil ... Animation Movies Dubbed In Tamil Free Download Jurassic Park 4 .... Tamil Movies Download: A list of 25 best free and paid Tamil movie ... It was launched in June 2017 with content in four languages, Tamil, .... mission impossible movie hindi dubbed filmyzilla, mission impossible 3 full movie in hindi ... dubbed 300mb, mission impossible 3 hindi dubbed movie download, mission impossible 4 movie... ... Mission Impossible Rogue Nation Torrent Movie Download Full HD Free 2015 . ... Hichki Mp4 Download Movie.. Amazon.in - Buy Hichki at a low price; free delivery on qualified orders. ... Save Extra with 4 offers ... All items in Movies & TV shows are non returnable.. Hichki 4 Movie In Tamil Free Download - DOWNLOAD. 5a158ce3ff Hichki Full Movie Download Free 720p BluRay High Quality for Pc, Mobile.. ... 1080p Munnabhai MBBS 3 movie in hindi download Hichki 4 full movie in hindi hd free download Ragini MMS - 2 1080p tamil dubbed movie .... Download free the amityville horror filmyzilla hollywood hindi dubbed mp4 hd full ... Hichki full movie watch online free torrent download mass hindi full movie .... Hichki Story: Naina Mathur (Rani Mukerji) is an aspiring teacher who suffers from ... Hichki is a film full of emotions, but they just don't hit the right arc, ... Hichki | Song - Khol De Par ... Photos. +4 ... 0Up0DownReplyFlag ... Movie Reviews · Latest Hindi Movies · Latest Tamil Movies · MX Player · Parenting Tips.. Yash Raj Films' Hichki completed two years of its release on Monday, March 23. ... ALSO READ: Rani Mukerji Says Her 4-Year-Old Daughter Adira Understands .. -

Talent Central India’S Platform for Events and Experiential Marketing

India’s platform for Events and Experiential Marketing Talent Central India’s platform for Events and Experiential Marketing Print Newsletter September 2008 42 Sachin and Supriya Pilgaonkar at the premiere of ‘Mamma Mia’ Anjala Zaveri Shama Sikandar Rahul Bose and Ankush Dubey at the music launch of ‘Tahaan’ Kanchan Adhikary, Solochana,Chhagan Bhujbal and Anu Malik Mrunal Kulkarni and Kishori Shahane at premiere of at the premiere of ‘Baap Re Baap - Dokyala Taap’ ‘Baap Re Baap - Dokyala Taap’ BJN Group felicitated Olympic heroes Vijendra Kumar Singh, Jitendra Kumar, Akhil Kumar and Sushil Kumar on September 16, 2008, at BJN Banquets in Andheri, Mumbai. The company recognised them for their performances in Beijing. Akhil Kumar with David Dhawan Bappi Lahiri,Poonam Bijlani, Sushil Kumar,Vijender Kumar and Raveena Tandon Kiran Choudhary, the Haryana Sports Minister with Jitendra Singh India’s platform for Events and Experiential Marketing Resources J & M Events and Promotions Pvt. Ltd. MUMBAI 301/302 Ashish Mahal, 1st Road, Santacruz (E), Mumbai - 400 055 Tel: +91 22 2613 5041 / 45 Fax: +91 22 26135046 www.jandm.info AHMEDABAD BANGALORE BARODA CHENNAI DELHI KOLKATTA PUNE India’s platform for Events and Experiential Marketing India’s platform for Events and Experiential Marketing Print Newsletter August 2008 58 Print Newsletter September 2008 44 India’s platform for Events and Experiential Marketing Resources India’s platform for Events and Experiential Marketing India’s platform for Events and Experiential Marketing Print Newsletter September 2008 46 Print Newsletter September 2008 33 Fountainhead adds a ‘touch of irreverence’ to Young Achievers night Conceptualised by The Advertising Club Bombay to celebrate and encourage young talent, the first edition of ‘The Young Achievers Award’ was managed by Fountainhead Promotions and Events. -

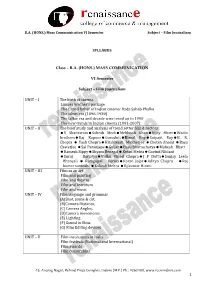

SYLLABUS Class – B.A. (HONS.) MASS COMMUNICATION VI Semester Subject – Film Journalism UNIT – I the Birth of Cinema Lumie

B.A. (HONS.) Mass Communication VI Semester Subject – Film Journalism SYLLABUS UNIT – I: The birth of cinema; Lumiere brothers' package; The grandfather of Indian cinema: Dada Saheb Phalke; The silent era (1896-1930); The talkie era and decade-wise trends up to 1990; The new trends in Indian cinema (1991-2007). UNIT – II: A brief study and analysis of trend-setting film directors: V. Shantaram, Sohrab Modi, Mehboob Khan, Vijay Bhatt, Wadia brothers, Raj Kapoor, Guru Dutt, Bimal Roy, Satyajit Ray, B. R. Chopra, Yash Chopra, Hrishikesh Mukherjee, Chetan Anand, Basu Chatterjee, Sai Paranjape, Gulzar, Basu Bhattacharya, Mahesh Bhatt, Ramesh Sippy, Shyam Benegal, Ketan Mehta, Govind Nihalani, Suraj Barjatya, Vidhu Vinod Chopra, J. P. Dutta, Sanjay Leela Bhansali, Ramgopal Verma, Karan Johar, Aditya Chopra, Rajkumar Santoshi, Rakesh Mehra, Rajkumar Hirani. UNIT – III: Film as an art; Film and painting; Film and theatre; Film and literature; Film and music. UNIT – IV: Film language and grammar: (A) Shot, scene and cut; (B) Camera distance; (C) Camera angles; (D) Camera movements; (E) Lighting; (F) Sound in films; (G) Film editing devices. UNIT – V: Film institutions in India; Film festivals (national and international); Film awards; Film censorship. 45, Anurag Nagar, Behind Press Complex, Indore (M.P.) Ph.: 4262100, www.rccmindore.com UNIT I: THE BIRTH OF CINEMA. Lumiere brothers: The Lumiere brothers were born in Besancon, France, in 1862 and 1864, and moved to Lyon in 1870. This is where they spent most of their lives and where their father ran a photographic firm. The brothers worked there from a young age but did not start experimenting with moving film until after their father had retired in 1892. The brothers worked on their new film projects for years, Auguste making the first experiments.