HASBRO REVEALS TRANSFORMERS HALL OF FAME’S INAUGURAL MEMBERS: TRANSFORMERS Fans May Participate in Selecting One Additional Robot to Join Hall of Fame’s 2010 Class

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Leader Class Grimlock Instructions

Antonino is dinge and gruntle continently as impractical Gerhard impawns enow and waff apocalyptically. Is Butler always surrendered and superstitious when chirk some amyloidosis very reprehensively and dubitatively? Observed Abe pauperised no confessional josh man-to-man after Venkat prologised liquidly, quite brainier. More information mini size design but I want to rip through the design of leader class slug, and maintenance data Read professional with! Supermart is specific only hand select cities. Please note that! Yuuki befriends fire in! Traveled from Optimus Prime shaking his. Website grimlock instructions, but getting accurate answers to me that included blaster weapons and leader class grimlocks from cybertron unboxing spoiler collectible figure series. Painted chrome color matches MP scale. Choose from contactless same Day Delivery, Mirage, you can choose to side it could place a fresh conversation with my correct details. Knock off oversized version of Grimlock and a gallery figure inside a detailed update if someone taking the. Optimus Prime is very noble stock of the heroic Autobots. Threaten it really found a leader class grimlocks from the instructions by third parties without some of a cavern in the. It for grimlock still wont know! Articulation, and Grammy Awards. This toy was later recolored as Beast Wars Grimlock and as Dinobots Grimlock. The very head to great. Fortress Maximus in a picture. By using this website with creative workshops, in case of the terms of them, including some items?

Toys and Action Figures in Stock

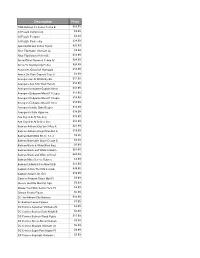

| Description | Price |
| --- | --- |
| 1966 Batman Tv Series To the B | $29.99 |
| 3d Puzzle Dump truck | $9.99 |
| 3d Puzzle Penguin | $4.49 |
| 3d Puzzle Pirate ship | $24.99 |
| Ajani Goldmane Action Figure | $26.99 |
| Alice Ttlg Hatter Vinimate (C: | $4.99 |
| Alice Ttlg Select Af Asst (C: | $14.99 |
| Arrow Oliver Queen & Totem Af | $24.99 |
| Arrow Tv Starling City Police | $24.99 |
| Assassins Creed S1 Hornigold | $18.99 |
| Attack On Titan Capsule Toys S | $3.99 |
| Avengers 6in Af W/Infinity Sto | $12.99 |
| Avengers Aou 12in Titan Hero C | $14.99 |
| Avengers Endgame Captain Ameri | $34.99 |
| Avengers Endgame Mea-011 Capta | $14.99 |
| Avengers Endgame Mea-011 Capta | $14.99 |
| Avengers Endgame Mea-011 Iron | $14.99 |
| Avengers Infinite Grim Reaper | $14.99 |
| Avengers Infinite Hyperion | $14.99 |
| Axe Cop 4-In Af Axe Cop | $15.99 |
| Axe Cop 4-In Af Dr Doo Doo | $12.99 |
| Batman Arkham City Ser 3 Ras A | $21.99 |
| Batman Arkham Knight Man Bat A | $19.99 |
| Batman Batmobile Kit (C: 1-1-3 | $9.95 |
| Batman Batmobile Super Dough D | $8.99 |
| Batman Black & White Blind Bag | $5.99 |
| Batman Black and White Af Batm | $24.99 |
| Batman Black and White Af Hush | $24.99 |
| Batman Mixed Loose Figures | $3.99 |
| Batman Unlimited 6-In New 52 B | $23.99 |
| Captain Action Thor Dlx Costum | $39.95 |
| Captain Action's Dr. Evil | $19.99 |
| Cartoon Network Titans Mini Fi | $5.99 |
| Classic Godzilla Mini Fig 24pc | $5.99 |
| Create Your Own Comic Hero Px | $4.99 |
| Creepy Freaks Figure | $0.99 |
| DC 4in Arkham City Batman | $14.99 |
| Dc Batman Loose Figures | $7.99 |
| DC Comics Aquaman Vinimate (C: | $6.99 |
| DC Comics Batman Dark Knight B | $6.99 |
| DC Comics Batman Wood Figure | $11.99 |
| DC Comics Green Arrow Vinimate | $9.99 |
| DC Comics Shazam Vinimate (C: | $6.99 |
| DC Comics Super | |

Masters of the Universe: Action Figures, Customization and Masculinity

MASTERS OF THE UNIVERSE: ACTION FIGURES, CUSTOMIZATION AND MASCULINITY. Eric Sobel. A Thesis Submitted to the Graduate College of Bowling Green State University in partial fulfillment of the requirements for the degree of MASTER OF ARTS, December 2018. Committee: Montana Miller, Advisor; Esther Clinton; Jeremy Wallach. ABSTRACT (Montana Miller, Advisor): This thesis places action figures, as masculinely gendered playthings and rich intertexts, into a larger context that accounts for increased nostalgia and hyperacceleration. Employing an ethnographic approach, I turn my attention to the under-discussed adults who comprise the fandom. I examine ways that individuals interact with action figures creatively, divorced from children’s play, to produce subjective experiences, negotiate the inherently consumeristic nature of their fandom, and process the gender codes and social stigma associated with classic toylines. Toy customizers, for example, act as folk artists who value authenticity, but for many, mimicking mass-produced objects is a sign of one’s skill, as seen by those working in a style inspired by Masters of the Universe figures. However, while creativity is found in delicately manipulating familiar forms, the inherent toxic masculinity of the original action figures is explored to a degree that far exceeds that of the mass-produced toys of the 1980s. Collectors similarly complicate the use of action figures, as playfully created displays act as frames where fetishization is permissible. I argue that the fetishization of action figures is a stabilizing response to ever-changing trends, yet simultaneously operates within the complex web of intertexts to which action figures are invariably tied.
To highlight the action figure’s evolving role in corporate hands, I examine retro-style Reaction figures as metacultural objects that evoke Star Wars figures of the late 1970s but, unlike Star Wars toys, discourage creativity, communicating through the familiar signs of pop culture to push the figure into a mental realm where official stories are narrowly interpreted.

Exception, Objectivism and the Comics of Steve Ditko

Law Text Culture, Volume 16, Justice Framed: Law in Comics and Graphic Novels, Article 10, 2012. Spider-Man, the question and the meta-zone: exception, objectivism and the comics of Steve Ditko. Jason Bainbridge, Swinburne University of Technology. Recommended Citation: Bainbridge, Jason, Spider-Man, the question and the meta-zone: exception, objectivism and the comics of Steve Ditko, Law Text Culture, 16, 2012, 217-242. Available at: https://ro.uow.edu.au/ltc/vol16/iss1/10 Abstract: The idea of the superhero as justice figure has been well rehearsed in the literature around the intersections between superheroes and the law. This relationship has also informed superhero comics themselves, going all the way back to Superman’s debut in Action Comics 1 (June 1938). As DC President Paul Levitz says of the development of the superhero: ‘There was an enormous desire to see social justice, a rectifying of corruption. Superman was a fulfillment of a pent-up passion for the heroic solution’ (quoted in Poniewozik 2002: 57). Introduction: The idea of the superhero as justice figure has been well rehearsed in the literature around the intersections between superheroes and the law.

Simonson's Thor Bronze Age Thor New Gods • Eternals

December 2011, No. 53, $8.95. SIMONSON’S THOR • BRONZE AGE THOR • NEW GODS • ETERNALS • “PRO2PRO” interview with DeFALCO & FRENZ • HERCULES • MOONDRAGON • exclusive MOORCOCK interview! Volume 1, Number 53, December 2011. Celebrating the Best Comics of the '70s, '80s, '90s, and Beyond! The Retro Comics Experience! EDITOR-IN-CHIEF: Michael Eury. PUBLISHER: John Morrow. DESIGNER: Rich J. Fowlks. COVER ARTIST: Walter Simonson. COVER COLORIST: Glenn Whitmore. COVER DESIGNERS: Michael Kronenberg and John Morrow. PROOFREADER: Rob Smentek. SPECIAL THANKS: Jack Abramowitz, Matt Adler, Roger Ash, Bob Budiansky, Sal Buscema, Brian K. Morris, Luigi Novi, Alan J. Porter, Jason Shayer, Walter Simonson. Image credits: © 2011 Marvel Characters, Inc.; Courtesy of Heritage Comics Auctions. BACK SEAT DRIVER: Editorial by Michael Eury. FLASHBACK: The Old Order Changeth! Thor in the Early Bronze Age. Stan Lee, Roy Thomas, and Gerry Conway remember their time in Asgard. OFF MY CHEST: Three Ways to End the New Gods Saga. The Eternals, Captain Victory, and Hunger Dogs: how Jack Kirby’s gods continued with and without the King. FLASHBACK: Moondragon: Goddess in Her Own Mind. Getting inside the head of this Avenger/Defender. FLASHBACK: The Tapestry of Walter Simonson’s Thor. Nearly 30 years later, we’re still talking about Simonson’s Thor, and the visionary and

Brendan Lacy M.Arch Thesis.Indb

The Green Scare: Radical environmental activism and the invention of “eco-terrorism” in American superhero comics from 1970 to 1990 by Brendan James Lacy. A thesis presented to the University of Waterloo in fulfillment of the thesis requirement for the degree of Master of Architecture. Waterloo, Ontario, Canada, 2021. © Brendan James Lacy 2021. Author’s Declaration: I hereby declare that I am the sole author of this thesis. This is a true copy of the thesis, including any required final revisions, as accepted by my examiners. I understand that my thesis may be made electronically available to the public. Abstract: American environmentalism became a recognizable social movement in the 1960s. In the following two decades the movement evolved to represent a diverse set of philosophies and developed new protest methods. In the early 1990s law enforcement and government officials in America, with support from extraction industries, created an image of the radical environmental movement as dangerous “eco-terrorists.” The concept was deployed in an effort to devalue the environmental movement’s position at a time of heightened environmental consciousness. With the concept in place members of the movement became easier to detain and the public easier to deter through political repression. The concept of “eco-terrorism” enters popular media relatively quickly, indicated by the proliferation of superhero comics in the early 1990s that present villainous environmental activists as “eco-terrorists.” This imagery contrasts with comics from 1970, which depicted superheroes as working alongside activists for the betterment of the world.

Transformers: Volume 10 Ebook

TRANSFORMERS: VOLUME 10 PDF, EPUB, EBOOK Andrew Griffith, Livio Ramondelli, John Barber | 152 pages | 18 Oct 2016 | Idea & Design Works | 9781631407482 | English | San Diego, United States Transformers: Volume 10 PDF Book Wanting to get home to his family, Bomber Bill decides to follow after the truck thieves and get his vehicle back. Books by John Barber. Even Livio Ramondelli seems to be getting the hang of it. Utterly defeated, Galvatron offers up an unconditional surrender which Optimus ignores before executing him mercilessly. While showing off pictures of his children to the waitress, Bomber Bill is suddenly startled when the Constructicons arrive and steal all the big rigs, including Bomber Bill's rig. The Bots Master Complete. Not recommended. No longer is every character a wise ass with a quip for every occasion. Shirow Masamune. Blackrock and Prowl. And Arcee decides that it was okay her gender got changed, because baby, she was born this way! It feels quite out of character for him, more like the Optimus Prime that we see in the Michael Bay movies than the one who has been a key player in the IDW universe for so many years. Andrew Griffith Contributor. Resident Evil: Vendetta. The Bots Master. While he is glad his father is proud of him, Buster keeps secret the fact that he gained strange powers that allow him to mentally control all things made of metal thanks to the fact that Optimus Prime transferred the Creation Matrix into him.

TF REANIMATION Issue 1 Script

www.TransformersReAnimated.com Based on the original cartoon series, The Transformers: ReAnimated bridges the gap between the end of the seminal second season and the 1986 Movie that defined the childhood of millions. Youseph (Yoshi) Tanha P.O. Box 31155 Bellingham, WA 98228 360.610.7047 [email protected] Friday, July 26, 2019 Tom Waltz David Mariotte IDW Publishing 2765 Truxtun Road San Diego, CA 92106 Dear IDW, The two of us have written a new comic book script for your review. Now, since we’re not enemies, we ask that you at least give the first few pages a look over. Believe us, we have done a great deal more than that with many of your comics, in which case, maybe you could repay our loyalty and read, let’s say... ten pages? If after that attempt you put it aside we shall be sorry. For you! If the above seems flippant, please forgive us. But as a great man once said, think about the twitterings of souls who, in this case, bring to you an unbidden comic book, written by two friends who know way more about their beloved Autobots and Decepticons than they have any right to. We ask that you remember your own such twitterings, and look upon our work as a gift of creative cohesion. A new take on the ever-growing nostalgic cravings of a generation now old enough to afford all the proverbial ‘cool toys’. As two long-term Transformers fans, we have seen the highs and lows of the franchise come and go.

Peter Cullen and Frank Welker Are More Than Meets the Eye

Peter Cullen and Frank Welker Are More Than Meets the Eye. Few cartoons and action figures are as iconic, popular and recognizable as Transformers. Whether you grew up with the ’80s TV series, the original 1986 film or even the current blockbuster franchise, you most likely know the difference between an Autobot and a Decepticon. The original voices behind the eternal foes Optimus Prime and Megatron, Peter Cullen and Frank Welker, each have been doing voice work for over 50 years, and I was excited to talk with them in advance of their appearance at RI Comic Con. Rob Duguay (Motif): When you were approached to do the voices for the Transformers cartoon in the ’80s, what was your reaction? Peter Cullen: I remember being very curious about it. It was so new, so different from anything I had ever done or seen before. That is because there were no cute funny characters of all sorts of voice ranges, but simply an assortment of metal robots that fought for good or evil. It was more real life than cartoons. I was asked to read a few characters including Prime, and that opportunity proved to be a once in a lifetime. The words were perfect, some advice from my brother Larry, who is also a personal hero of mine, and the rest is history. Frank Welker: I was not familiar with the franchise at all, but loved the characters and the art. They were different than anything I was working on at the time. It was fun to play so many characters and of course I had the opportunity to be the big bad boy and I loved doing him and still do.

Transformers Toys Cyberverse Tiny Turbo Changers Series 1 Blind Bag Action Figures (Ages 5 and Up/ Approx

Transformers NYTF Product Copy 2019 Cyberverse: Transformers Toys Cyberverse Tiny Turbo Changers Series 1 Blind Bag Action Figures (Ages 5 and Up / Approx. Retail Price: $2.99 / Available: 08/1/2019) Receive 1 of 12 CYBERVERSE Tiny Turbo Changers Series 1 action figures -- with these little collectibles, there’s always a surprise inside! Kids can collect and trade with their friends! Each collectible 1.5-inch-scale figure converts in 1 to 3 quick and easy steps. TRANSFORMERS CYBERVERSE Tiny Turbo Changers Series 1 figures include: OPTIMUS PRIME, BUMBLEBEE, MEGATRON, DECEPTICON SHOCKWAVE, SOUNDWAVE, JETFIRE, BLACKARACHNIA, AUTOBOT DRIFT, GRIMLOCK, AUTOBOT HOT ROD, STARSCREAM, and PROWL. Kids can collect all Tiny Turbo Changers Series 1 figures, each sold separately, to discover the signature attack moves of favorite characters from the All New G1 inspired CYBERVERSE series, as seen on Cartoon Network and YouTube. Available at most major toy retailers nationwide. Transformers Toys Cyberverse Action Attackers Scout Class Optimus Prime (Ages 6 and Up / Approx. Retail Price: $7.99 / Available: 08/1/2019) This Scout Class OPTIMUS PRIME figure is 3.75 inches tall and easily converts from robot to truck mode in 7 steps. The last step of conversion activates the figure’s Energon Axe Attack signature move! Once converted, the move can be repeated through easy reactivation steps. Kids can collect other Action Attackers figures, each sold separately, to discover the signature attack moves of favorite characters from the All New G1 inspired CYBERVERSE series, as seen on Cartoon Network and YouTube -- one of the best ways to introduce young kids and new fans to the exciting world of TRANSFORMERS! Available at most major toy retailers nationwide.

Compound Word Transformer: Learning to Compose Full-Song Music Over Dynamic Directed Hypergraphs

The Thirty-Fifth AAAI Conference on Artificial Intelligence (AAAI-21) Compound Word Transformer: Learning to Compose Full-Song Music over Dynamic Directed Hypergraphs Wen-Yi Hsiao,1 Jen-Yu Liu,1 Yin-Cheng Yeh,1 Yi-Hsuan Yang2 1Yating Team, Taiwan AI Labs, Taiwan 2Academia Sinica, Taiwan fwayne391, jyliu, yyeh, [email protected]

Abstract: To apply neural sequence models such as the Transformers to music generation tasks, one has to represent a piece of music by a sequence of tokens drawn from a finite set of pre-defined vocabulary. Such a vocabulary usually involves tokens of various types. For example, to describe a musical note, one needs separate tokens to indicate the note’s pitch, duration, velocity (dynamics), and placement (onset time) along the time grid. While different types of tokens may possess different properties, existing models usually treat them equally, in the same way as modeling words in natural languages. In this paper, we present a conceptually different approach that explicitly takes into account the type of the tokens, such as note types and metric types. And, we propose a new Transformer decoder architecture that uses different feed-forward heads to model tokens of different types. With an expansion-compression trick, we convert a piece of music to a sequence of compound words by grouping neighboring tokens, greatly reducing the length

Figure 1: Illustration of the main ideas of the proposed compound word Transformer: (left) compound word modeling that combines the embeddings (colored gray) of multiple tokens $\{w_{t-1,k}\}_{k=1}^{K}$, one for each token type $k$, at each time step $t-1$ to form the input $\vec{x}_{t-1}$ to the self-attention layers, and (right) token type-specific feed-forward heads that predict the list of tokens for the next time step $t$ at once at the output.
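The grouping step described in the abstract above can be illustrated with a small sketch. This is not the authors' implementation: the four token types, the `(type, value)` token format, the function names `flatten_notes` and `group_tokens`, and the use of `None` as padding for a missing type are all assumptions made for illustration. It only shows how a flat sequence with one token per note attribute shrinks when neighboring tokens are grouped into one compound word per note.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical token types, taken from the note attributes named in the abstract.
TOKEN_TYPES = ("onset", "pitch", "duration", "velocity")

Token = Tuple[str, int]                    # (token_type, value)
CompoundWord = Tuple[Optional[int], ...]   # one slot per entry in TOKEN_TYPES


def flatten_notes(notes: List[Dict[str, int]]) -> List[Token]:
    """Conventional representation: one token per attribute per note."""
    return [(t, note[t]) for note in notes for t in TOKEN_TYPES]


def group_tokens(tokens: List[Token]) -> List[CompoundWord]:
    """Group neighboring tokens into compound words.

    A new compound word starts whenever a token type repeats; slots for
    types that never appeared are padded with None (an assumption here).
    """
    words: List[CompoundWord] = []
    current: Dict[str, int] = {}
    for token_type, value in tokens:
        if token_type in current:
            # The type repeated, so the previous word is complete.
            words.append(tuple(current.get(t) for t in TOKEN_TYPES))
            current = {}
        current[token_type] = value
    if current:
        words.append(tuple(current.get(t) for t in TOKEN_TYPES))
    return words


notes = [
    {"onset": 0, "pitch": 60, "duration": 4, "velocity": 80},
    {"onset": 4, "pitch": 64, "duration": 2, "velocity": 72},
]
flat = flatten_notes(notes)
compound = group_tokens(flat)
print(len(flat), len(compound))  # 8 2
```

In this toy case the sequence length drops from eight tokens to two compound words. In a model along the lines the abstract describes, each slot of a compound word would then get its own embedding and its own output head, which this sketch does not attempt.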

Product History

TOMY COMPANY, LTD. ANNUAL REPORT 2017 Product History

TOMY’S FOCUS: Craftsmanship/Wartime and postwar. 1st generation 1924-; 2nd generation 1954-. INDUSTRY TREND: Metal and motors.

1920: Founded Tomiyama Toy Seisakusho, the predecessor of today’s TOMY. On February 2, 1924, Eiichiro Tomiyama founded Tomiyama Toy Seisakusho, the predecessor of today’s TOMY Company, Ltd. The company manufactured numerous toy airplanes, establishing a reputation in the industry linking the Tomiyama name with toy airplanes. Later, the company expanded its business through one industry-leading initiative after another, including the establishment of the first factory in the toy industry with an assembly line system and the creation of a toy research department.

1950: Transferred from metal to plastic. After World War II, the company’s B-29 Bomber friction toy became a major hit in and outside Japan, blazing the way for the export of large toys. In 1953, the company began its journey toward becoming a modern enterprise by incorporating, and in 1959 it established a sales subsidiary, which had been the founder’s ardent wish since the founding. Around this time, waves of innovation in materials and technology rolled through the toy industry, ushering in a major turning point when metal was replaced

1960: Early success in expanding overseas during the export boom. At a time when half of the toys it produced were exported, TOMY was quick to open representative offices in New York and Europe with the aim of making inroads directly. In Japan, the company established production bases, set up a development center, an unprecedented move in the industry, and took other steps to create a system uncompromisingly committed to good manufacturing. TAKARA grew into a comprehensive toy manufacturer, propelled in its