Chapter 5: CPU Scheduling

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

A Comprehensive Review for Central Processing Unit Scheduling Algorithm

IJCSI International Journal of Computer Science Issues, Vol. 10, Issue 1, No 2, January 2013 ISSN (Print): 1694-0784 | ISSN (Online): 1694-0814 www.IJCSI.org 353 A Comprehensive Review for Central Processing Unit Scheduling Algorithm Ryan Richard Guadaña1, Maria Rona Perez2 and Larry Rutaquio Jr.3 1 Computer Studies and System Department, University of the East Caloocan City, 1400, Philippines 2 Computer Studies and System Department, University of the East Caloocan City, 1400, Philippines 3 Computer Studies and System Department, University of the East Caloocan City, 1400, Philippines Abstract when an attempt is made to execute a program, its This paper describe how does CPU facilitates tasks given by a admission to the set of currently executing processes is user through a Scheduling Algorithm. CPU carries out each either authorized or delayed by the long-term scheduler. instruction of the program in sequence then performs the basic Second is the Mid-term Scheduler that temporarily arithmetical, logical, and input/output operations of the system removes processes from main memory and places them on while a scheduling algorithm is used by the CPU to handle every process. The authors also tackled different scheduling disciplines secondary memory (such as a disk drive) or vice versa. and examples were provided in each algorithm in order to know Last is the Short Term Scheduler that decides which of the which algorithm is appropriate for various CPU goals. ready, in-memory processes are to be executed. Keywords: Kernel, Process State, Schedulers, Scheduling Algorithm, Utilization. 2. CPU Utilization 1. Introduction In order for a computer to be able to handle multiple applications simultaneously there must be an effective way The central processing unit (CPU) is a component of a of using the CPU. -

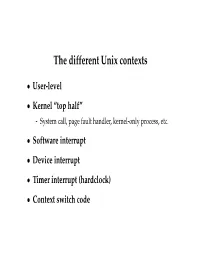

The Different Unix Contexts

The different Unix contexts • User-level • Kernel “top half” - System call, page fault handler, kernel-only process, etc. • Software interrupt • Device interrupt • Timer interrupt (hardclock) • Context switch code Transitions between contexts • User ! top half: syscall, page fault • User/top half ! device/timer interrupt: hardware • Top half ! user/context switch: return • Top half ! context switch: sleep • Context switch ! user/top half Top/bottom half synchronization • Top half kernel procedures can mask interrupts int x = splhigh (); /* ... */ splx (x); • splhigh disables all interrupts, but also splnet, splbio, splsoftnet, . • Masking interrupts in hardware can be expensive - Optimistic implementation – set mask flag on splhigh, check interrupted flag on splx Kernel Synchronization • Need to relinquish CPU when waiting for events - Disk read, network packet arrival, pipe write, signal, etc. • int tsleep(void *ident, int priority, ...); - Switches to another process - ident is arbitrary pointer—e.g., buffer address - priority is priority at which to run when woken up - PCATCH, if ORed into priority, means wake up on signal - Returns 0 if awakened, or ERESTART/EINTR on signal • int wakeup(void *ident); - Awakens all processes sleeping on ident - Restores SPL a time they went to sleep (so fine to sleep at splhigh) Process scheduling • Goal: High throughput - Minimize context switches to avoid wasting CPU, TLB misses, cache misses, even page faults. • Goal: Low latency - People typing at editors want fast response - Network services can be latency-bound, not CPU-bound • BSD time quantum: 1=10 sec (since ∼1980) - Empirically longest tolerable latency - Computers now faster, but job queues also shorter Scheduling algorithms • Round-robin • Priority scheduling • Shortest process next (if you can estimate it) • Fair-Share Schedule (try to be fair at level of users, not processes) Multilevel feeedback queues (BSD) • Every runnable proc. -

Kernel-Scheduled Entities for Freebsd

Kernel-Scheduled Entities for FreeBSD Jason Evans [email protected] November 7, 2000 Abstract FreeBSD has historically had less than ideal support for multi-threaded application programming. At present, there are two threading libraries available. libc r is entirely invisible to the kernel, and multiplexes threads within a single process. The linuxthreads port, which creates a separate process for each thread via rfork(), plus one thread to handle thread synchronization, relies on the kernel scheduler to multiplex ”threads” onto the available processors. Both approaches have scaling problems. libc r does not take advantage of multiple processors and cannot avoid blocking when doing I/O on ”fast” devices. The linuxthreads port requires one process per thread, and thus puts strain on the kernel scheduler, as well as requiring significant kernel resources. In addition, thread switching can only be as fast as the kernel's process switching. This paper summarizes various methods of implementing threads for application programming, then goes on to describe a new threading architecture for FreeBSD, based on what are called kernel- scheduled entities, or scheduler activations. 1 Background FreeBSD has been slow to implement application threading facilities, perhaps because threading is difficult to implement correctly, and the FreeBSD's general reluctance to build substandard solutions to problems. Instead, two technologies originally developed by others have been integrated. libc r is based on Chris Provenzano's userland pthreads implementation, and was significantly re- worked by John Birrell and integrated into the FreeBSD source tree. Since then, numerous other people have improved and expanded libc r's capabilities. At this time, libc r is a high-quality userland implementation of POSIX threads. -

Integrating Preemption Threshold Scheduling and Dynamic Voltage Scaling for Energy Efficient Real-Time Systems

Integrating Preemption Threshold Scheduling and Dynamic Voltage Scaling for Energy Efficient Real-Time Systems Ravindra Jejurikar1 and Rajesh Gupta2 1 Centre for Embedded Computer Systems, University of California Irvine, Irvine CA 92697, USA jeÞÞ@cec׺ÙciºedÙ 2 Department of Computer Science and Engineering, University of California San Diego, La Jolla, CA 92093, USA gÙÔØa@c׺Ùc×dºedÙ Abstract. Preemption threshold scheduling (PTS) enables designing scalable real-time systems. PTS not only decreases the run-time overhead of the system, but can also be used to decrease the number of threads and the memory require- ments of the system. In this paper, we combine preemption threshold scheduling with dynamic voltage scaling to enable energy efficient scheduling in real-time systems. We consider scheduling with task priorities defined by the Earliest Dead- line First (EDF) policy. We present an algorithm to compute threshold preemption levels for tasks with given static slowdown factors. The proposed algorithm im- proves upon known algorithms in terms of time complexity. Experimental results show that preemption threshold scheduling reduces on an average 90% context switches, even in the presence of task slowdown. Further, we describe a dynamic slack reclamation technique that working in conjunction with PTS that yields on an average 10% additional energy savings. 1 Introduction With increasing mobility and proliferation of embedded systems, low power consump- tion is an important aspect of embedded systems design. Generally speaking, the pro- cessor consumes a significant portion of the total energy, primarily due to increased computational demands. Scaling the processor frequency and voltage based on the per- formance requirements can lead to considerable energy savings. -

What Is an Operating System III 2.1 Compnents II an Operating System

Page 1 of 6 What is an Operating System III 2.1 Compnents II An operating system (OS) is software that manages computer hardware and software resources and provides common services for computer programs. The operating system is an essential component of the system software in a computer system. Application programs usually require an operating system to function. Memory management Among other things, a multiprogramming operating system kernel must be responsible for managing all system memory which is currently in use by programs. This ensures that a program does not interfere with memory already in use by another program. Since programs time share, each program must have independent access to memory. Cooperative memory management, used by many early operating systems, assumes that all programs make voluntary use of the kernel's memory manager, and do not exceed their allocated memory. This system of memory management is almost never seen any more, since programs often contain bugs which can cause them to exceed their allocated memory. If a program fails, it may cause memory used by one or more other programs to be affected or overwritten. Malicious programs or viruses may purposefully alter another program's memory, or may affect the operation of the operating system itself. With cooperative memory management, it takes only one misbehaved program to crash the system. Memory protection enables the kernel to limit a process' access to the computer's memory. Various methods of memory protection exist, including memory segmentation and paging. All methods require some level of hardware support (such as the 80286 MMU), which doesn't exist in all computers. -

Chapter 1. Origins of Mac OS X

1 Chapter 1. Origins of Mac OS X "Most ideas come from previous ideas." Alan Curtis Kay The Mac OS X operating system represents a rather successful coming together of paradigms, ideologies, and technologies that have often resisted each other in the past. A good example is the cordial relationship that exists between the command-line and graphical interfaces in Mac OS X. The system is a result of the trials and tribulations of Apple and NeXT, as well as their user and developer communities. Mac OS X exemplifies how a capable system can result from the direct or indirect efforts of corporations, academic and research communities, the Open Source and Free Software movements, and, of course, individuals. Apple has been around since 1976, and many accounts of its history have been told. If the story of Apple as a company is fascinating, so is the technical history of Apple's operating systems. In this chapter,[1] we will trace the history of Mac OS X, discussing several technologies whose confluence eventually led to the modern-day Apple operating system. [1] This book's accompanying web site (www.osxbook.com) provides a more detailed technical history of all of Apple's operating systems. 1 2 2 1 1.1. Apple's Quest for the[2] Operating System [2] Whereas the word "the" is used here to designate prominence and desirability, it is an interesting coincidence that "THE" was the name of a multiprogramming system described by Edsger W. Dijkstra in a 1968 paper. It was March 1988. The Macintosh had been around for four years. -

Comparing Systems Using Sample Data

Operating System and Process Monitoring Tools Arik Brooks, [email protected] Abstract: Monitoring the performance of operating systems and processes is essential to debug processes and systems, effectively manage system resources, making system decisions, and evaluating and examining systems. These tools are primarily divided into two main categories: real time and log-based. Real time monitoring tools are concerned with measuring the current system state and provide up to date information about the system performance. Log-based monitoring tools record system performance information for post-processing and analysis and to find trends in the system performance. This paper presents a survey of the most commonly used tools for monitoring operating system and process performance in Windows- and Unix-based systems and describes the unique challenges of real time and log-based performance monitoring. See Also: Table of Contents: 1. Introduction 2. Real Time Performance Monitoring Tools 2.1 Windows-Based Tools 2.1.1 Task Manager (taskmgr) 2.1.2 Performance Monitor (perfmon) 2.1.3 Process Monitor (pmon) 2.1.4 Process Explode (pview) 2.1.5 Process Viewer (pviewer) 2.2 Unix-Based Tools 2.2.1 Process Status (ps) 2.2.2 Top 2.2.3 Xosview 2.2.4 Treeps 2.3 Summary of Real Time Monitoring Tools 3. Log-Based Performance Monitoring Tools 3.1 Windows-Based Tools 3.1.1 Event Log Service and Event Viewer 3.1.2 Performance Logs and Alerts 3.1.3 Performance Data Log Service 3.2 Unix-Based Tools 3.2.1 System Activity Reporter (sar) 3.2.2 Cpustat 3.3 Summary of Log-Based Monitoring Tools 4. -

A Simple Chargeback System for SAS® Applications Running on UNIX and Linux Servers

SAS Global Forum 2007 Systems Architecture Paper: 194-2007 A Simple Chargeback System For SAS® Applications Running on UNIX and Linux Servers Michael A. Raithel, Westat, Rockville, MD Abstract Organizations that run SAS on UNIX and Linux servers often have a need to measure overall SAS usage and to charge the projects and users who utilize the servers. This allows an organization to pay for the hardware, software, and labor necessary to maintain and run these types of shared servers. However, performance management and accounting software is normally expensive. Purchasing such software, configuring it, and managing it may be prohibitive in terms of cost and in terms of having staff with the right skill sets to do so. Consequently, many organizations with UNIX or Linux servers do not have a reliable way to measure the overall use of SAS software and to charge individuals and projects for its use. This paper presents a simple methodology for creating a chargeback system on UNIX and Linux servers. It uses basic accounting programs found in the UNIX and Linux operating systems, and exploits them using SAS software. The paper presents an overview of the UNIX/Linux “sa” command and the basic accounting system. Then, it provides a SAS program that utilizes this command to capture and store monthly SAS usage statistics. The paper presents a second SAS program that reads the monthly SAS usage SAS data set and creates both a chargeback report and a general usage report. After reading this paper, you should be able to easily adapt the sample SAS programs to run on servers in your own environment. -

CS414 SP 2007 Assignment 1

CS414 SP 2007 Assignment 1 Due Feb. 07 at 11:59pm Submit your assignment using CMS 1. Which of the following should NOT be allowed in user mode? Briefly explain. a) Disable all interrupts. b) Read the time-of-day clock c) Set the time-of-day clock d) Perform a trap e) TSL (test-and-set instruction used for synchronization) Answer: (a), (c) should not be allowed. Which of the following components of a program state are shared across threads in a multi-threaded process ? Briefly explain. a) Register Values b) Heap memory c) Global variables d) Stack memory Answer: (b) and (c) are shared 2. After an interrupt occurs, hardware needs to save its current state (content of registers etc.) before starting the interrupt service routine. One issue is where to save this information. Here are two options: a) Put them in some special purpose internal registers which are exclusively used by interrupt service routine. b) Put them on the stack of the interrupted process. Briefly discuss the problems with above two options. Answer: (a) The problem is that second interrupt might occur while OS is in the middle of handling the first one. OS must prevent the second interrupt from overwriting the internal registers. This strategy leads to long dead times when interrupts are disabled and possibly lost interrupts and lost data. (b) The stack of the interrupted process might be a user stack. The stack pointer may not be legal which would cause a fatal error when the hardware tried to write some word at it. -

A Hardware Preemptive Multitasking Mechanism Based on Scan-Path Register Structure for FPGA-Based Reconfigurable Systems

A Hardware Preemptive Multitasking Mechanism Based on Scan-path Register Structure for FPGA-based Reconfigurable Systems S. Jovanovic, C. Tanougast and S. Weber Université Henri Poincaré, LIEN, 54506 Vandoeuvre lès Nancy, France [email protected] Abstract all the registers as well as all memories used in the circuit In this paper, we propose a hardware preemptive and to determine the starting point of the restoration multitasking mechanism which uses scan-path register process. On the other hand, the goal of the restoration is to structure and allows identifying the total task’s register restore the task’s context of processing as it was before its size for the FPGA-based reconfigurable systems. The interruption, in order to continue the execution previously main objective of this preemptive mechanism is to stopped. Several techniques allowing the hardware suspend hardware task having low priority, replace it by preemption are proposed in the literature. The pros and high-priority task and restart them at another time cons of mostly used approaches we will disscus in the (and/or from another area of the FPGA in FPGA-based following. designs). The main advantages of the proposed method The readback approach for context saving and storing are that it provides an attractive way for context saving of an outgoing task is one of the approaches which was and restoring of a hardware task without freezing other mostly used for the FPGA-based designs. No additional tasks during pre-emption phases and a small area hardware structures, simple implementation, no extra overhead. We show its feasibility by allowing us to design design efforts and no extra hardware consumption are the a simple computing example as well as the one of the most important advantages of this approach. -

Thread Scheduling in Multi-Core Operating Systems Redha Gouicem

Thread Scheduling in Multi-core Operating Systems Redha Gouicem To cite this version: Redha Gouicem. Thread Scheduling in Multi-core Operating Systems. Computer Science [cs]. Sor- bonne Université, 2020. English. tel-02977242 HAL Id: tel-02977242 https://hal.archives-ouvertes.fr/tel-02977242 Submitted on 24 Oct 2020 HAL is a multi-disciplinary open access L’archive ouverte pluridisciplinaire HAL, est archive for the deposit and dissemination of sci- destinée au dépôt et à la diffusion de documents entific research documents, whether they are pub- scientifiques de niveau recherche, publiés ou non, lished or not. The documents may come from émanant des établissements d’enseignement et de teaching and research institutions in France or recherche français ou étrangers, des laboratoires abroad, or from public or private research centers. publics ou privés. Ph.D thesis in Computer Science Thread Scheduling in Multi-core Operating Systems How to Understand, Improve and Fix your Scheduler Redha GOUICEM Sorbonne Université Laboratoire d’Informatique de Paris 6 Inria Whisper Team PH.D.DEFENSE: 23 October 2020, Paris, France JURYMEMBERS: Mr. Pascal Felber, Full Professor, Université de Neuchâtel Reviewer Mr. Vivien Quéma, Full Professor, Grenoble INP (ENSIMAG) Reviewer Mr. Rachid Guerraoui, Full Professor, École Polytechnique Fédérale de Lausanne Examiner Ms. Karine Heydemann, Associate Professor, Sorbonne Université Examiner Mr. Etienne Rivière, Full Professor, University of Louvain Examiner Mr. Gilles Muller, Senior Research Scientist, Inria Advisor Mr. Julien Sopena, Associate Professor, Sorbonne Université Advisor ABSTRACT In this thesis, we address the problem of schedulers for multi-core architectures from several perspectives: design (simplicity and correct- ness), performance improvement and the development of application- specific schedulers. -

2. Virtualizing CPU(2)

Operating Systems 2. Virtualizing CPU Pablo Prieto Torralbo DEPARTMENT OF COMPUTER ENGINEERING AND ELECTRONICS This material is published under: Creative Commons BY-NC-SA 4.0 2.1 Virtualizing the CPU -Process What is a process? } A running program. ◦ Program: Static code and static data sitting on the disk. ◦ Process: Dynamic instance of a program. ◦ You can have multiple instances (processes) of the same program (or none). ◦ Users usually run more than one program at a time. Web browser, mail program, music player, a game… } The process is the OS’s abstraction for execution ◦ often called a job, task... ◦ Is the unit of scheduling Program } A program consists of: ◦ Code: machine instructions. ◦ Data: variables stored and manipulated in memory. Initialized variables (global) Dynamically allocated (malloc, new) Stack variables (function arguments, C automatic variables) } What is added to a program to become a process? ◦ DLLs: Libraries not compiled or linked with the program (probably shared with other programs). ◦ OS resources: open files… Program } Preparing a program: Task Editor Source Code Source Code A B Compiler/Assembler Object Code Object Code Other A B Objects Linker Executable Dynamic Program File Libraries Loader Executable Process in Memory Process Creation Process Address space Code Static Data OS res/DLLs Heap CPU Memory Stack Loading: Code OS Reads on disk program Static Data and places it into the address space of the process Program Disk Eagerly/Lazily Process State } Each process has an execution state, which indicates what it is currently doing ps -l, top ◦ Running (R): executing on the CPU Is the process that currently controls the CPU How many processes can be running simultaneously? ◦ Ready/Runnable (R): Ready to run and waiting to be assigned by the OS Could run, but another process has the CPU Same state (TASK_RUNNING) in Linux.