2. Virtualizing CPU(2)

Recommended publications

Kernel-Scheduled Entities for FreeBSD

Kernel-Scheduled Entities for FreeBSD Jason Evans November 7, 2000 Abstract FreeBSD has historically had less than ideal support for multi-threaded application programming. At present, there are two threading libraries available. libc_r is entirely invisible to the kernel, and multiplexes threads within a single process. The linuxthreads port, which creates a separate process for each thread via rfork(), plus one thread to handle thread synchronization, relies on the kernel scheduler to multiplex "threads" onto the available processors. Both approaches have scaling problems. libc_r does not take advantage of multiple processors and cannot avoid blocking when doing I/O on "fast" devices. The linuxthreads port requires one process per thread, and thus puts strain on the kernel scheduler, as well as requiring significant kernel resources. In addition, thread switching can only be as fast as the kernel's process switching. This paper summarizes various methods of implementing threads for application programming, then goes on to describe a new threading architecture for FreeBSD, based on what are called kernel-scheduled entities, or scheduler activations. 1 Background FreeBSD has been slow to implement application threading facilities, perhaps because threading is difficult to implement correctly, and because of FreeBSD's general reluctance to build substandard solutions to problems. Instead, two technologies originally developed by others have been integrated. libc_r is based on Chris Provenzano's userland pthreads implementation, and was significantly reworked by John Birrell and integrated into the FreeBSD source tree. Since then, numerous other people have improved and expanded libc_r's capabilities. At this time, libc_r is a high-quality userland implementation of POSIX threads.

Lecture 11: Deadlock, Scheduling, & Synchronization October 3, 2019 Instructor: David E

CS 162: Operating Systems and System Programming Lecture 11: Deadlock, Scheduling, & Synchronization October 3, 2019 Instructor: David E. Culler & Sam Kumar https://cs162.eecs.berkeley.edu Read: A&D 6.5 Project 1 Code due TOMORROW! Midterm Exam next week Thursday Outline for Today • (Quickly) Recap and Finish Scheduling • Deadlock • Language Support for Concurrency 10/3/19 CS162 UCB Fa 19 Lec 11.2 Recall: CPU & I/O Bursts • Programs alternate between bursts of CPU, I/O activity • Scheduler: Which thread (CPU burst) to run next? • Interactive programs vs Compute Bound vs Streaming Recall: Evaluating Schedulers • Response Time (ideally low) – What user sees: from keypress to character on screen – Or completion time for non-interactive • Throughput (ideally high) – Total operations (jobs) per second – Overhead (e.g. context switching), artificial blocks • Fairness – Fraction of resources provided to each – May conflict with best avg. throughput, resp. time Recall: What if we knew the future? • Key Idea: remove convoy effect – Short jobs always stay ahead of long ones • Non-preemptive: Shortest Job First – Like FCFS where we always chose the best possible ordering • Preemptive Version: Shortest Remaining Time First – A newly ready process (e.g., just finished an I/O operation) with shorter time replaces the current one Recall: Multi-Level Feedback Scheduling Long-Running Compute Tasks Demoted to Low Priority • Intuition: Priority Level proportional …

Process Scheduling Ii

PROCESS SCHEDULING II CS124 – Operating Systems Spring 2021, Lecture 12 Real-Time Systems • Increasingly common to have systems with real-time scheduling requirements • Real-time systems are driven by specific events • Often a periodic hardware timer interrupt • Can also be other events, e.g. detecting a wheel slipping, or an optical sensor triggering, or a proximity sensor reaching a threshold • Event latency is the amount of time between an event occurring, and when it is actually serviced • Usually, real-time systems must keep event latency below a minimum required threshold • e.g. antilock braking system has 3-5 ms to respond to wheel-slide • The real-time system must try to meet its deadlines, regardless of system load • Of course, may not always be possible… Real-Time Systems (2) • Hard real-time systems require tasks to be serviced before their deadlines, otherwise the system has failed • e.g. robotic assembly lines, antilock braking systems • Soft real-time systems do not guarantee tasks will be serviced before their deadlines • Typically only guarantee that real-time tasks will be higher priority than other non-real-time tasks • e.g. media players • Within the operating system, two latencies affect the event latency of the system’s response: • Interrupt latency is the time between an interrupt occurring, and the interrupt service routine beginning to execute • Dispatch latency is the time the scheduler dispatcher takes to switch from one process to another Interrupt Latency • Interrupt latency in context: …

Embedded Systems

Sri Chandrasekharendra Saraswathi Viswa Maha Vidyalaya Department of Electronics and Communication Engineering STUDY MATERIAL for IIIrd Year, VIth Semester Subject Name: EMBEDDED SYSTEMS Prepared by Dr. P. Venkatesan, Associate Professor, ECE, SCSVMV University PRE-REQUISITE: Basic knowledge of Microprocessors, Microcontrollers & Digital System Design OBJECTIVES: The student should be made to: learn the architecture and programming of the ARM processor; be familiar with embedded computing platform design and analysis; be exposed to the basic concepts and overview of real-time operating systems; learn the system design techniques and networks for embedded systems for industrial applications. SYLLABUS UNIT – I INTRODUCTION TO EMBEDDED COMPUTING AND ARM PROCESSORS Complex systems and microprocessors – Embedded system design process – Design example: Model train controller – Instruction sets preliminaries – ARM Processor – CPU: programming input and output – supervisor mode, exceptions and traps – Co-processors – Memory system mechanisms – CPU performance – CPU power consumption. UNIT – II EMBEDDED COMPUTING PLATFORM DESIGN The CPU Bus – Memory devices and systems – Designing with computing platforms – consumer electronics architecture – platform-level performance analysis – Components for embedded programs – Models of programs – …

Advanced Scheduling

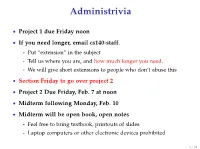

Administrivia • Project 1 due Friday noon • If you need longer, email cs140-staff. - Put “extension” in the subject - Tell us where you are, and how much longer you need. - We will give short extensions to people who don’t abuse this • Section Friday to go over project 2 • Project 2 Due Friday, Feb. 7 at noon • Midterm following Monday, Feb. 10 • Midterm will be open book, open notes - Feel free to bring textbook, printouts of slides - Laptop computers or other electronic devices prohibited Fair Queuing (FQ) [Demers] • Digression: packet scheduling problem - Which network packet should router send next over a link? - Problem inspired some algorithms we will see today - Plus good to reinforce concepts in a different domain. • For ideal fairness, would use bit-by-bit round-robin (BR) - Or send more bits from more important flows (flow importance can be expressed by assigning numeric weights) [Figure: round-robin service across Flow 1, Flow 2, Flow 3, Flow 4] SJF • Recall limitations of SJF from last lecture: - Can’t see the future … solved by packet length - Optimizes response time, not turnaround time … but these are the same when sending whole packets - Not fair Packet scheduling • Differences from CPU scheduling - No preemption or yielding: must send whole packets; thus, can’t send one bit at a time - But know how many bits are in each packet; can see the future and know how long packet needs link • What scheduling algorithm does this suggest?

(FCFS) Scheduling

Lecture 4: Uniprocessor Scheduling Prof. Seyed Majid Zahedi https://ece.uwaterloo.ca/~smzahedi Outline • History • Definitions • Response time, throughput, scheduling policy, … • Uniprocessor scheduling policies • FCFS, SJF/SRTF, RR, … A Bit of History on Scheduling • By year 2000, scheduling was considered a solved problem “And you have to realize that there are not very many things that have aged as well as the scheduler. Which is just another proof that scheduling is easy.” Linus Torvalds, 2001 [1] • The end of Dennard scaling in 2004 led to the multiprocessor era • Designing new (multiprocessor) schedulers gained traction • Energy efficiency became a top concern • In 2016, it was shown that bugs in the Linux kernel scheduler could cause up to 138x slowdown in some workloads, with proportional energy waste [2] [1] L. Torvalds. The Linux Kernel Mailing List. http://tech-insider.org/linux/research/2001/1215.html, Feb. 2001. [2] Lozi, Jean-Pierre, et al. "The Linux scheduler: a decade of wasted cores." Proceedings of the Eleventh European Conference on Computer Systems. 2016. Definitions • Task, thread, process, job: unit of work • E.g., mouse click, web request, shell command, etc. • Workload: set of tasks • Scheduling algorithm: takes workload as input, decides which tasks to do first • Overhead: amount of extra work that is done by scheduler • Preemptive scheduler: CPU can be taken away from a running task • Work-conserving scheduler: CPUs won’t be left idle if there are ready tasks to run • For non-preemptive schedulers, work-conserving is not always …

Lottery Scheduler for the Linux Kernel

Lottery scheduler for the Linux kernel María Mejía a, Adriana Morales-Betancourt b & Tapasya Patki c a Universidad de Caldas and Universidad Nacional de Colombia, Manizales, Colombia b Departamento de Sistemas e Informática at Universidad de Caldas, Manizales, Colombia c Department of Computer Science, University of Arizona, Tucson, USA Received: April 17th, 2014. Received in revised form: September 30th, 2014. Accepted: October 20th, 2014 Abstract This paper describes the design and implementation of Lottery Scheduling, a proportional-share resource management algorithm, in the Linux kernel. A new lottery scheduling class was added to the kernel and was placed between the real-time and the fair scheduling class in the hierarchy of scheduler modules. This work evaluates the proposed scheduler on compute-intensive, I/O-intensive and mixed workloads. The results indicate that the process scheduler is probabilistically fair and prevents starvation. Another conclusion is that the overhead of the implementation is roughly linear in the number of runnable processes. Keywords: Lottery scheduling, Schedulers, Linux kernel, operating system. Lottery scheduler for the Linux kernel (Spanish-language abstract, translated) This article describes the design and implementation of the Lottery scheduler in the Linux kernel; this scheduler is an equal-proportion resource management algorithm. A new class, the Lottery scheduler, was added to the kernel and placed between the real-time class and the Completely Fair Scheduler (CFS) class in the hierarchy of scheduler modules. This work evaluates the proposed scheduler on compute-intensive, I/O-intensive and mixed workloads.

Processor Affinity Or Bound Multiprocessing?

Processor Affinity or Bound Multiprocessing? Easing the Migration to Embedded Multicore Processing Shiv Nagarajan, Ph.D. QNX Software Systems Abstract Thanks to higher computing power and system density at lower clock speeds, multicore processing has gained widespread acceptance in embedded systems. Designing systems that make full use of multicore processors remains a challenge, however, as does migrating systems designed for single-core processors. Bound multiprocessing (BMP) can help with these designs and these migrations. It adds a subtle but critical improvement to symmetric multiprocessing (SMP) processor affinity: it implements runmask inheritance, a feature that allows developers to bind all threads in a process or even a subsystem to a specific processor without code changes. QNX Neutrino RTOS The QNX® Neutrino® RTOS has been multiprocessor capable since 1997, and is deployed in hundreds of systems and thousands of multicore processors in embedded environments. It supports AMP, SMP, and BMP. Introduction Effective use of multicore processors can profoundly improve the performance of embedded applications ranging from industrial control systems to multimedia platforms. Designing systems that make effective use of multiprocessors remains a challenge, however. The problem is not just one of making full use of the new multicore environments to optimize performance. Developers must not only guarantee that the systems they themselves write will run correctly on multicore processors, but they must also ensure that applications written for single-core processors will not fail or cause other applications to fail when they are migrated to a multicore environment. To complicate matters further, these new and legacy applications can contain third-party code, which developers may not be able to change.

CPU Scheduling

CS 372: Operating Systems Mike Dahlin Lecture #3: CPU Scheduling Review -- 1 min Deadlock ♦ definition ♦ conditions for its occurrence ♦ solutions: breaking deadlocks, avoiding deadlocks ♦ efficiency v. complexity Other hard (liveness) problems: priority inversion, starvation, denial of service Outline -- 1 min CPU Scheduling • goals • algorithms and evaluation Goal of lecture: We will discuss a range of options. There are many more out there. The important thing is not to memorize the scheduling algorithms I describe. The important thing is to develop a strategy for analyzing scheduling algorithms in general. Preview -- 1 min File systems 02/24/11 CS 372: Operating Systems Mike Dahlin Lecture -- 20 min 1. Scheduling problem definition Threads = concurrency abstraction Last several weeks: what threads are, how to build them, how to use them 3 main states: ready, running, waiting Running: TCB on CPU Waiting: TCB on a lock, semaphore, or condition variable queue Ready: TCB on ready queue Operating system can choose when to stop running one and when to start the next ready one. OS can choose which ready thread to start (ready “queue” doesn’t have to be FIFO) Key principle of OS design: separate mechanism from policy Mechanism – how to do something Policy – what to do, when to do it In this case, we design our context switch mechanism and synchronization methodology to allow the OS to switch from any thread to any other one at any time (the system will behave correctly) Thread/process scheduling policy decides when to switch in order to meet performance goals 1.1 Pre-emptive v. …

CPU Scheduling

CPU Scheduling CS 416: Operating Systems Design Department of Computer Science Rutgers University http://www.cs.rutgers.edu/~vinodg/teaching/416 What and Why? • What is processor scheduling? • Why? • At first to share an expensive resource – multiprogramming • Now to perform concurrent tasks because processor is so powerful • Future looks like past + now • Computing utility – large data/processing centers use multiprogramming to maximize resource utilization • Systems still powerful enough for each user to run multiple concurrent tasks Assumptions • Pool of jobs contending for the CPU • Jobs are independent and compete for resources (this assumption is not true for all systems/scenarios) • Scheduler mediates between jobs to optimize some performance criterion • In this lecture, we will talk about processes and threads interchangeably. We will assume a single-threaded CPU. Multiprogramming Example [Figure: timelines for Process A and Process B, each labeled start, idle; input, idle; input, stop; scale mark 1 sec] Time = 10 seconds Multiprogramming Example (cont) [Figure: timelines for Process A (start, idle; input, idle; input, stop A) and Process B (start B, idle; input, idle; input, stop B)] Total Time = 20 seconds Throughput = 2 jobs in 20 seconds = 0.1 jobs/second Avg. Waiting Time = (0+10)/2 = 5 seconds Multiprogramming Example (cont) [Figure: timelines for Process A and Process B with a context switch to B and a context switch to A] Throughput = 2 jobs in 11 seconds = 0.18 jobs/second Avg. …

CPU Scheduling

Chapter 5: CPU Scheduling Operating System Concepts – 10th Edition Silberschatz, Galvin and Gagne ©2018 Chapter 5: CPU Scheduling • Basic Concepts • Scheduling Criteria • Scheduling Algorithms • Thread Scheduling • Multi-Processor Scheduling • Real-Time CPU Scheduling • Operating Systems Examples • Algorithm Evaluation Objectives • Describe various CPU scheduling algorithms • Assess CPU scheduling algorithms based on scheduling criteria • Explain the issues related to multiprocessor and multicore scheduling • Describe various real-time scheduling algorithms • Describe the scheduling algorithms used in the Windows, Linux, and Solaris operating systems • Apply modeling and simulations to evaluate CPU scheduling algorithms Basic Concepts • Maximum CPU utilization obtained with multiprogramming • CPU–I/O Burst Cycle – Process execution consists of a cycle of CPU execution and I/O wait • CPU burst followed by I/O burst • CPU burst distribution is of main concern Histogram of CPU-burst Times • Large number of short bursts • Small number of longer bursts CPU Scheduler • The CPU scheduler selects from among the processes in the ready queue, and allocates a CPU core to one of them • Queue may be ordered in various ways • CPU scheduling decisions may take place when a …

Cross-Layer Resource Control and Scheduling for Improving Interactivity in Android

SOFTWARE: PRACTICE AND EXPERIENCE Softw. Pract. Exper. 2014; 00:1–22 Published online in Wiley InterScience (www.interscience.wiley.com). DOI: 10.1002/spe Cross-Layer Resource Control and Scheduling for Improving Interactivity in Android Sungju Huh (1), Jonghun Yoo (2) and Seongsoo Hong (1,2,3) (1) Department of Transdisciplinary Studies, Graduate School of Convergence Science and Technology, Seoul National University, Suwon-si, Gyeonggi-do, Republic of Korea (2) Department of Electrical and Computer Engineering, Seoul National University, Seoul, Republic of Korea (3) Advanced Institutes of Convergence Technology, Suwon-si, Gyeonggi-do, Republic of Korea SUMMARY Android smartphones are often reported to suffer from sluggish user interactions due to poor interactivity. This is partly because Android and its task scheduler, the completely fair scheduler (CFS), may incur perceptibly long response time to user-interactive tasks. Particularly, the Android framework cannot systemically favor user-interactive tasks over other background tasks since it does not distinguish between them. Furthermore, user-interactive tasks can suffer from high dispatch latency due to the non-preemptive nature of CFS. To address these problems, this paper presents framework-assisted task characterization and virtual time-based CFS. The former is a cross-layer resource control mechanism between the Android framework and the underlying Linux kernel. It identifies user-interactive tasks at the framework-level, by using the notion of a user-interactive task chain. It then enables the kernel scheduler to selectively promote the priorities of worker tasks appearing in the task chain to reduce the preemption latency. The latter is a cross-layer refinement of CFS in terms of interactivity. It allows a task to be preempted at every predefined period.