Simple, Fast, and Practical Non-Blocking and Concurrent Queue Algorithms

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

The Challenges of Hardware Synthesis from C-Like Languages

The Challenges of Hardware Synthesis from C-like Languages

Stephen A. Edwards∗, Department of Computer Science, Columbia University, New York

Abstract

The relentless increase in the complexity of integrated circuits we can fabricate imposes a continuing need for ways to describe complex hardware succinctly. Because of their ubiquity and flexibility, many have proposed to use the C and C++ languages as specification languages for digital hardware. Yet, tools based on this idea have seen little commercial interest. In this paper, I argue that C/C++ is a poor choice for specifying hardware for synthesis and suggest a set of criteria that the next successful hardware description language should have.

1 Introduction

Familiarity is the main reason C-like languages have been proposed for hardware synthesis. Synthesize hardware from C, proponents claim, and we will effectively turn every C pro- [...] most successful C-like languages, in fact, bear little syntactic or semantic resemblance to C, effectively forcing users to learn a new language anyway. As a result, techniques for synthesizing hardware from C either generate inefficient hardware or propose a language that merely adopts part of C syntax.

For space reasons, this paper is focused purely on the use of C-like languages for synthesis. I deliberately omit discussion of other important uses of a design language, such as validation and algorithm exploration. C-like languages are much more compelling for these tasks, and one in particular (SystemC) is now widely used, as are many ad hoc variants.

2 A Short History of C

Dennis Ritchie developed C in the early 1970s [18] based on experience with Ken Thompson’s B language, which had itself evolved from Martin Richards’ BCPL [17].

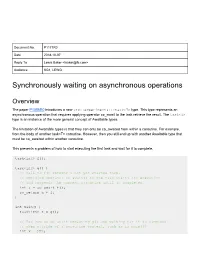

Synchronously Waiting on Asynchronous Operations

Document No.: P1171R0
Date: 2018-10-07
Reply To: Lewis Baker <[email protected]>
Audience: SG1, LEWG

Synchronously waiting on asynchronous operations

Overview

The paper P1056R0 introduces a new std::experimental::task<T> type. This type represents an asynchronous operation that requires applying operator co_await to the task to retrieve the result. The task<T> type is an instance of the more general concept of Awaitable types.

The limitation of Awaitable types is that they can only be co_awaited from within a coroutine, for example from the body of another task<T> coroutine. However, you then still end up with another Awaitable type that must be co_awaited within another coroutine. This presents a problem of how to start executing the first task and wait for it to complete.

    task<int> f();

    task<int> g() {
      // Call to f() returns a not-yet-started task.
      // Applying operator co_await() to the task starts its execution
      // and suspends the current coroutine until it completes.
      int a = co_await f();
      co_return a + 1;
    }

    int main() {
      task<int> t = g();
      // But how do we start executing g() and waiting for it to complete
      // when outside of a coroutine context, such as in main()?
      int x = ???;
      return x;
    }

This paper proposes a new function, sync_wait(), that will allow a caller to pass an arbitrary Awaitable type into the function. The function will co_await the passed Awaitable object on the current thread and then block waiting for that co_await operation to complete, either synchronously on the current thread or asynchronously on another thread. When the operation completes, the result is captured on whichever thread the operation completed on.
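The problem described above (starting an asynchronous operation from ordinary synchronous code and blocking until it finishes) can be illustrated with a rough Python analogue. This is only a sketch of the idea, not the proposed C++ facility: the helper name `sync_wait` is borrowed from the paper for illustration, it is built here on the standard `asyncio.run`, and unlike the proposal it accepts only coroutine objects rather than arbitrary awaitables. The value 41 returned by `f` is an arbitrary placeholder.

```python
import asyncio


async def f() -> int:
    # Stand-in for an asynchronous operation; yields to the event loop once.
    await asyncio.sleep(0)
    return 41


async def g() -> int:
    # Awaiting f() suspends g() until f() completes, as in the C++ excerpt.
    a = await f()
    return a + 1


def sync_wait(coro):
    # Hypothetical helper named after the paper's proposal.
    # asyncio.run starts the coroutine and blocks the calling thread
    # until the coroutine has produced its result.
    return asyncio.run(coro)


def main() -> int:
    # Ordinary synchronous code: no 'await' is available here.
    return sync_wait(g())


if __name__ == "__main__":
    print(main())  # prints 42
```

The design point mirrored here is that the blocking wait lives in one small bridging function, so the asynchronous code itself never has to block.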

Lock-Free Programming

Lock-Free Programming
Geoff Langdale
L31_Lockfree

Desynchronization
● This is an interesting topic
● This will (may?) become even more relevant with near ubiquitous multi-processing
● Still: please don’t rewrite any Project 3s!

Synchronization
● We received notification via the web form that one group has passed the P3/P4 test suite. Congratulations!
● We will be releasing a version of the fork-wait bomb which doesn't make as many assumptions about task id's.
  – Please look for it today and let us know right away if it causes any trouble for you.
● Personal and group disk quotas have been grown in order to reduce the number of people running out over the weekend
  – if you try hard enough you'll still be able to do it.

Outline
● Problems with locking
● Definition of Lock-free programming
● Examples of Lock-free programming
● Linux OS uses of Lock-free data structures
● Miscellanea (higher-level constructs, ‘wait-freedom’)
● Conclusion

Problems with Locking
● This list is more or less contentious, not equally relevant to all locking situations:
  – Deadlock
  – Priority Inversion
  – Convoying
  – “Async-signal-safety”
  – Kill-tolerant availability
  – Pre-emption tolerance
  – Overall performance

Problems with Locking 2
● Deadlock
  – Processes that cannot proceed because they are waiting for resources that are held by processes that are waiting for…
● Priority inversion
  – Low-priority processes hold a lock required by a higher-priority process
  – Priority inheritance a possible solution

CMS Application Multitasking

z/VM Version 7 Release 1
CMS Application Multitasking
IBM SC24-6258-00

Note: Before you use this information and the product it supports, read the information in “Notices” on page 337.

This edition applies to version 7, release 1, modification 0 of IBM® z/VM® (product number 5741-A09) and to all subsequent releases and modifications until otherwise indicated in new editions.

Last updated: 2018-09-12

© Copyright International Business Machines Corporation 1992, 2018. US Government Users Restricted Rights – Use, duplication or disclosure restricted by GSA ADP Schedule Contract with IBM Corp.

Contents
List of Figures
List of Tables
About This Document
  Intended Audience
  Where to Find More Information
    Links to Other Documents and Websites
How to Send Your Comments to IBM
Summary of Changes for z/VM CMS Application Multitasking

Automating Non-Blocking Synchronization in Concurrent Data Abstractions

Automating Non-Blocking Synchronization In Concurrent Data Abstractions

Jiange Zhang, University of Colorado at Colorado Springs, CO, USA, [email protected]
Qing Yi, University of Colorado at Colorado Springs, CO, USA, [email protected]
Damian Dechev, University of Central Florida, Orlando, FL, USA, [email protected]

Abstract: This paper investigates using compiler technology to automatically convert sequential C++ data abstractions, e.g., queues, stacks, maps, and trees, to concurrent lock-free implementations. By automatically tailoring a number of state-of-the-practice synchronization methods to the underlying sequential implementations of different data structures, our automatically synchronized code can attain performance competitive to that of concurrent data structures manually-written by experts and much better performance than heavier-weight support by software transactional memory (STM).

I. Introduction

The advent of the multi-core era has brought multi-threaded programming to the mainstream and with it the challenges of managing shared data among the threads. [...] tion needs. We show that by automatically weaving synchronization schemes with sequential implementations of data structures, many efficient lock-free data structures can be made readily available, with performance competitive to that of the manually crafted ones by experts.

The key technical difference between our work and existing STM research is that we support multiple synchronization strategies and automatically select the best strategy for each piece of code at compile time. No existing compiler for STM supports automatic tailoring of synchronizations to what is needed by different pieces of code. The compiler algorithms we present are the first to do this. While we mostly rely on standard compiler techniques (e.g., pointer and data-flow analysis), the problem formulations and

Mutual Exclusion: Classical Algorithms for Locks

Mutual Exclusion: Classical Algorithms for Locks
Bill Scherer, Department of Computer Science, Rice University
[email protected]
COMP 422 Lecture 20, March 2008

Motivation
Ensure that a block of code manipulating a data structure is executed by only one thread at a time
• Why? avoid conflicting accesses to shared data (data races)
  - read/write conflicts
  - write/write conflicts
• Approach: critical section
• Mechanism: lock
  - methods
      - acquire
      - release
• Usage
  - acquire lock to enter the critical section
  - release lock to leave the critical section

Problems with Locks
• Conceptual
  - coarse-grained: poor scalability
  - fine-grained: hard to write
• Semantic
  - deadlock
  - priority inversion
• Performance
  - convoying
  - intolerance of page faults and preemption

Lock Alternatives
• Transactional memory (TM)
  + Easy to use, well-understood metaphor
  – High overhead (so far)
  ± Subject of much active research
• Ad hoc nonblocking synchronization (NBS)
  + Thread failure/delay cannot prevent progress
  + Can be faster than locks (stacks, queues)
  – Notoriously difficult to write: every new algorithm is a publishable result
  + Can be “canned” in libraries (e.g. java.util)

Synchronization Landscape
[Figure: axes are Programmer Effort and System Performance; labeled points are Ad Hoc NBS, Fine Locks, Software TM (STM), HW TM, Coarse Locks, Canned NBS]

Properties of Good Lock Algorithms
• Mutual exclusion (safety property)
  - critical sections of different threads do not overlap
      - cannot guarantee integrity of computation without this property
• No deadlock
  - if some thread attempts to acquire the lock, then some

Synchronization Key Concepts: Critical Sections, Mutual Exclusion, Test-And-Set, Spinlocks, Blocking and Blocking Locks, Semaphores, Condition Variables, Deadlocks

Synchronization

key concepts: critical sections, mutual exclusion, test-and-set, spinlocks, blocking and blocking locks, semaphores, condition variables, deadlocks

Ali Mashtizadeh and Lesley Istead, David R. Cheriton School of Computer Science, University of Waterloo, Fall 2019

Thread Synchronization

All threads in a concurrent program share access to the program's global variables and the heap. The part of a concurrent program in which a shared object is accessed is called a critical section. What happens if several threads try to access the same global variable or heap object at the same time?

Critical Section Example

    /* Note the use of volatile; revisit later */
    int volatile total = 0;

    void add() {
      int i;
      for (i=0; i<N; i++) {
        total++;
      }
    }

    void sub() {
      int i;
      for (i=0; i<N; i++) {
        total--;
      }
    }

If one thread executes add and another executes sub what is the value of total when they have finished?

Critical Section Example (assembly detail)

    /* Note the use of volatile */
    int volatile total = 0;

    void add() {
      loadaddr R8 total
      for (i=0; i<N; i++) {
        lw R9 0(R8)
        add R9 1
        sw R9 0(R8)
      }
    }

    void sub() {
      loadaddr R10 total
      for (i=0; i<N; i++) {
        lw R11 0(R10)
        sub R11 1
        sw R11 0(R10)
      }
    }

Critical Section Example (Trace 1)

    Thread 1                        Thread 2
    loadaddr R8 total
    lw R9 0(R8)       R9=0
    add R9 1          R9=1
    sw R9 0(R8)       total=1
    <INTERRUPT>
                                    loadaddr R10 total
                                    lw R11 0(R10)     R11=1
                                    sub R11 1         R11=0
                                    sw R11 0(R10)     total=0

One possible order of execution.
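The add/sub example races because each update of total is a separate load, modify, and store. One common remedy is to make every update a critical section guarded by a lock. The following is a minimal Python sketch of that idea rather than the slides' own C code; the names `total_lock` and `run`, and the iteration count `N`, are assumptions introduced for illustration.

```python
import threading

N = 100_000                    # iterations per thread (arbitrary choice)
total = 0                      # shared variable, as in the slides
total_lock = threading.Lock()  # hypothetical lock guarding 'total'


def add():
    global total
    for _ in range(N):
        with total_lock:       # enter critical section
            total += 1         # load, add, store happen under the lock


def sub():
    global total
    for _ in range(N):
        with total_lock:       # same lock, so updates cannot interleave
            total -= 1


def run() -> int:
    """Run add and sub concurrently and return the final total."""
    global total
    total = 0
    t1 = threading.Thread(target=add)
    t2 = threading.Thread(target=sub)
    t1.start()
    t2.start()
    t1.join()
    t2.join()
    return total


if __name__ == "__main__":
    print(run())  # prints 0
```

Because both threads take the same lock around each update, no load/store pair from one thread can be split by the other, so the increments and decrements cancel exactly.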

Concurrent Programming Without Locks

Concurrent Programming Without Locks

KEIR FRASER, University of Cambridge Computer Laboratory
and
TIM HARRIS, Microsoft Research Cambridge

Mutual exclusion locks remain the de facto mechanism for concurrency control on shared-memory data structures. However, their apparent simplicity is deceptive: it is hard to design scalable locking strategies because locks can harbour problems such as priority inversion, deadlock and convoying. Furthermore, scalable lock-based systems are not readily composable when building compound operations. In looking for solutions to these problems, interest has developed in non-blocking systems which have promised scalability and robustness by eschewing mutual exclusion while still ensuring safety. However, existing techniques for building non-blocking systems are rarely suitable for practical use, imposing substantial storage overheads, serialising non-conflicting operations, or requiring instructions not readily available on today’s CPUs.

In this paper we present three APIs which make it easier to develop non-blocking implementations of arbitrary data structures. The first API is a multi-word compare-and-swap operation (MCAS) which atomically updates a set of memory locations. This can be used to advance a data structure from one consistent state to another. The second API is a word-based software transactional memory (WSTM) which can allow sequential code to be re-used more directly than with MCAS and which provides better scalability when locations are being read rather than being updated. The third API is an object-based software transactional memory (OSTM). OSTM allows a more streamlined implementation than WSTM, but at the cost of re-engineering the code to use OSTM objects. We present practical implementations of all three of these APIs, built from operations available across all of today’s major CPU families.

Enabling Task Level Parallelism in HandelC

Enabling Task Level Parallelism in HandelC

Thamer S. AbuYasin

Submitted to the Department of Electrical Engineering & Computer Science and the Faculty of the Graduate School of the University of Kansas in partial fulfillment of the requirements for the degree of Master’s of Science

Thesis Committee: Dr. David Andrews (Chairperson), Dr. Arvin Agah, Dr. Perry Alexander

Date Defended: 2007/12/14

The Thesis Committee for Thamer S. AbuYasin certifies that this is the approved version of the following thesis: Enabling Task Level Parallelism in HandelC

Chapter 1: Abstract

HandelC is a programming language used to target hardware and is similar in syntax to ANSI-C. HandelC offers constructs that allow programmers to express instruction level parallelism. Also, HandelC offers primitives that allow task level parallelism. However, HandelC does not offer any runtime support that enables programmers to express task level parallelism efficiently. This thesis discusses this issue and suggests a support library called HCthreads as a solution. HCthreads offers a subset of Pthreads functionality and interface relevant to the HandelC environment. This study offers means to identify the best configuration of HCthreads to achieve the highest speedups in real systems.

This thesis investigates the issue of integrating HandelC within platforms not supported by Celoxica. A support library is implemented to solve this issue by utilizing the high level abstractions offered by Hthreads. This support library abstracts away any HWTI specific synchronization making the coding experience quite close to software. HCthreads is proven effective and generic for various algorithms with different threading behaviors. HCthreads is an adequate method to implement recursive algorithms even if no task level parallelism is warranted.

Concurrent Data Structures

1 Concurrent Data Structures

Mark Moir and Nir Shavit, Sun Microsystems Laboratories

1.1 Designing Concurrent Data Structures
    Performance • Blocking Techniques • Nonblocking Techniques • Complexity Measures • Correctness • Verification Techniques • Tools of the Trade
1.2 Shared Counters and Fetch-and-φ Structures
1.3 Stacks and Queues
1.4 Pools
1.5 Linked Lists
1.6 Hash Tables
1.7 Search Trees
1.8 Priority Queues
1.9 Summary

The proliferation of commercial shared-memory multiprocessor machines has brought about significant changes in the art of concurrent programming. Given current trends towards low-cost chip multithreading (CMT), such machines are bound to become ever more widespread. Shared-memory multiprocessors are systems that concurrently execute multiple threads of computation which communicate and synchronize through data structures in shared memory. The efficiency of these data structures is crucial to performance, yet designing effective data structures for multiprocessor machines is an art currently mastered by few. By most accounts, concurrent data structures are far more difficult to design than sequential ones because threads executing concurrently may interleave

UNIT 10C Multitasking & Operating Systems

UNIT 10C
Concurrency: Multitasking & Deadlock
15110 Principles of Computing, Carnegie Mellon University - CORTINA
11/5/2011

Multitasking & Operating Systems
• Multitasking - The coordination of several computational processes on one processor.
• An operating system (OS) is the system software responsible for the direct control and management of hardware and basic system operations.
• An OS provides a foundation upon which to run application software such as word processing programs, web browsers, etc.
from Wikipedia

Operating System “Flavors”
• UNIX
  – System V, BSD, Linux
  – Proprietary: Solaris, Mac OS X
• Windows
  – Windows XP
  – Windows Vista
  – Windows 7

Multitasking
• Each (un-blocked) application runs for a very short time on the computer's processor and then another process runs, then another...
• This is done at such a fast rate that it appears to the computer user that all applications are running at the same time.
  – How do actors appear to move in a motion picture?

Critical Section
• A critical section is a section of computer code that must only be executed by one process or thread at a time.
• Examples:
  – A printer queue receiving a file to be printed
  – Code to set a seat as reserved
  – Web server that generates and returns a web page showing current registration in course

A Critical Section
Cars may not make turns through this intersection. They may only proceed straight ahead.

Deadlocks (Chapter 3, Tanenbaum)

Deadlocks (Chapter 3, Tanenbaum)

Copyright © 1996–2002 Eskicioglu and Marsland (and Prentice-Hall and July 99 Paul Lu)

What is a deadlock?

Deadlock is defined as the permanent blocking of a set of processes that compete for system resources, including database records or communication lines. Unlike other problems in multiprogramming systems, there is no efficient solution to the deadlock problem in the general case. Deadlock prevention, by design, is the “best” solution. Deadlock occurs when a set of processes are in a wait state, because each process is waiting for a resource that is held by some other waiting process. Therefore, all deadlocks involve conflicting resource needs by two or more processes.

Classification of resources (I)

Two general categories of resources can be distinguished:
• Reusable: something that can be safely used by one process at a time and is not depleted by that use. Processes obtain resources that they later release for reuse by others. E.g., CPU, memory, specific I/O devices, or files.
• Consumable: these can be created and destroyed. When a resource is acquired by a process, the resource ceases to exist. E.g., interrupts, signals, or messages.

Classification of resources (II)

One other taxonomy again identifies two types of resources:
• Preemptable: these can be taken away from the process owning it with no ill effects (needs save/restore). E.g., memory or CPU.
• Non-preemptable: cannot be taken away from its current owner without causing the computation to fail.
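The remark that deadlock prevention "by design" is the preferred approach can be made concrete with one well-known design rule: always acquire locks in a single global order, so that a cycle of waiting processes cannot form. The sketch below is an illustration under stated assumptions, not material from the slides; the `Resource` class, its `rank` field, and the `move` and `demo` functions are hypothetical names, and threads stand in for processes.

```python
import threading


class Resource:
    """A reusable resource guarded by its own lock (hypothetical type)."""

    def __init__(self, rank: int, units: int):
        self.rank = rank            # position in the global lock order
        self.units = units
        self.lock = threading.Lock()


def move(src: Resource, dst: Resource, amount: int) -> None:
    """Move units between resources, locking in rank order."""
    if src is dst:
        return                      # nothing to do; avoids locking twice
    # Always lock the lower-ranked resource first, whatever the direction.
    first, second = sorted((src, dst), key=lambda r: r.rank)
    with first.lock, second.lock:
        src.units -= amount
        dst.units += amount


def demo(rounds: int = 10_000):
    a = Resource(rank=1, units=100)
    b = Resource(rank=2, units=100)

    def a_to_b():
        for _ in range(rounds):
            move(a, b, 1)

    def b_to_a():
        for _ in range(rounds):
            move(b, a, 1)

    threads = [threading.Thread(target=a_to_b),
               threading.Thread(target=b_to_a)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return a.units, b.units


if __name__ == "__main__":
    print(demo())  # prints (100, 100)
```

If each thread instead locked its own source first, one thread could hold a's lock while waiting for b's and the other the reverse, which is exactly the circular wait described in the text. Ordering by rank removes that possibility.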