Personalized Song Interaction Using a Multi-Touch Interface



Recommended publications


PDF of This Issue

No Classes on Monday! MIT’s Oldest and Largest Newspaper. The Weather: Today: Partly cloudy, 72°F (22°C); Tonight: Cloudy, 68°F (20°C); Tomorrow: Partly cloudy, 70°F (21°C); Details, Page 2. Volume 125, Number 39, Cambridge, Massachusetts 02139, Friday, September 16, 2005. Building 46 Lights Up the Brain, by Hannah Hsieh (Feature): Who knows how the natural lighting, bold colors and bamboo forest of the newly-minted Building 46 will affect the research of MIT’s leading cognitive scientists. The building, due to receive its new inhabitants beginning next week, will bring together three previously separate groups of researchers into a space designed to facilitate inter[...] Each wing contains state-of-the-art laboratories, wireless access, conference rooms, student reading rooms, and clinical space. The Brain and Cognitive Sciences classrooms are located on the second floor, although classes will not be held there until later in the semester. BCS, Page 12. MIT Observes Const. Day on the Internet, by Ray C. He, Staff Reporter: True to form, MIT has chosen to celebrate the new, federally-mandated Constitution Day in an online format. Within a week of tomorrow, all universities receiving federal funds must teach the Constitution, according to an amendment added by Senator Robert C. [...] reach out and teach something new.” Actions required by law unclear: “The law doesn’t require any real activities,” Stewart said. “It turns out that an activity could be posting up a Web site or making available material; you don’t have to have a talk or invite a real audience.” -

Tim DeLaughter

TIM DELAUGHTER. AWARDS/NOMINATIONS: Emmy Award nomination (2009), UNITED STATES OF TARA, Best Original Main Title Theme Music. FEATURE FILM: VISIONEERS (Jory Weitz, prod.; Jared Drake, dir.; Visioneers Film Productions); THUMBSUCKER (Bob Yari, Anne Carey, prods.; Mike Mills, dir.; Yari Film Group); ETERNAL SUNSHINE OF THE SPOTLESS MIND (Charlie Kaufman, Anthony Bregman, prods.; Michel Gondry, dir.; Focus Features; *Song). TELEVISION: UNITED STATES OF TARA (Steven Spielberg, prod.; Craig Gillespie, dir.; DreamWorks Television). BIO: DeLaughter’s career began in 1991 as vocalist/guitarist of the Dallas-based alternative rock band Tripping Daisy. Inspired by the Beatles’ psychedelic period, the group was signed to Island Records and released four acclaimed albums before disbanding as a result of the untimely death of guitarist Wes Berggren from a drug overdose in 1999. After leaving music for a brief time to start his family, DeLaughter soon found the lure of a return to performing and recording irresistible. The result was the Dallas symphonic pop group The Polyphonic Spree. Described as less a band than a happening, the group’s two dozen members perform in flowing white robes, an appropriate backdrop for their happy, uplifting musical message of catchy pop laced with gospel. The unusual group boasts a ten-member choir, a pair of keyboardists, a percussionist, bassist, guitarist, flautist, trumpeter, trombonist, violist, French horn player and even a theremin player, with DeLaughter serving as musical director, lead singer, and creative shaman. Generating huge interest with their performance at Austin’s South-by-Southwest music festival in 2002, the Spree were eventually hand-picked by David Bowie to play his Meltdown Festival at London’s Royal Festival Hall, and later signed to Hollywood [...] The Gorfaine/Schwartz Agency, Inc. -

Polyphonic Spree the Beginning Stages of Zip

1 / 5 Polyphonic Spree The Beginning Stages Of Zip Sep 5, 2019 — AUSTIN, TEXAS (SEPTEMBER 5, 2019) – The first batch of Dale's Pale ... band to come out of central Texas in a very long time, takes the stage. ... Graves, Peelander-Z, White Denim and the Polyphonic Spree. ... Zip Code:.. ... CRASH ADEN SEMIAUTOMATIC POLYPHONIC SPREE LOWFISH CINEMATIC ORCHEST TRANS AM ... Address: Suite/Floor City: State: Zip: Country: Phone:( ) Fax:( ) E-mail: Mail this to: CMJ New Music ... weeks for delivery of first issue).. ... JULIE DOIRON PANTYBOY BEEF TERMINAL POLYPHONIC SPREE RADIO 4 ... WENDY CARLOS CINEMATIC ORCHEST OUTRAGEOUS CHERRY EARLY ... Cardholder Signature: Name: Company: Address: Suite/Floor: City: State: Zip: .... (2011) Black Moon "Enta Da Stage" (1993) Black Moon "War Zone" (1999) Black ... The Aftermath" (1996) Dr. Dre "2001" (1999) Dr. Dre "Presents The Early Years ... Epidemic "Illin Spree" (2011) Epidemic "Monochrome Skies" (2012) Epidemic ... Official "The Anti-Album" (2003) Serengeti & Polyphonic "Terradactyl" (2009) .... The Polyphonic Spree - The Beginning Stages Of... | Section 1,Section 2 ... MP3-Version ZIP-Größe: 2892 mb. FLAC-Version ZIP-Größe: 1133 mb. WMA-Version .... MARSVOLTA 2 JANE'S ADDICTION 3 MONEEN 4 ME FIRST AND THE 5 SWORDS PROJECT 6 ... LANES 22 POLYPHONIC SPREE 23 BURNING SPEAR 24 DANDY WARHOLS 25. ... Cardholder Signature Company State: Suite/Floor Zip: .... Aug 22, 2014 — The criterium is perfect for spectators since the action is fast, as riders zip through a circular ... Taking the stage at Finish Line Village will be Wichita Falls-based cover ... of Polyphonic Spree — kick drummer and guitarist Taylor Young and ... at the HHH breakfast beginning at 5 a.m. -

Feature • Haunted Cave------West Lafayette and Bloomington

Feature • Karen Lenfestey: Dramatic License, Patrick Boylen. Deep-thinking local author Karen Lenfestey writes about ordinary people who deal with extraordinary circumstances. She’s currently based out of Fort Wayne, but over the years Lenfestey has made her home all over the state, including Valparaiso, Goshen, West Lafayette and Bloomington. She says she started writing as a child for therapeutic purposes. “When my parents would send me to my room (probably for something my brother did), I would write to entertain myself.” Lenfestey’s first book, A Sister’s Promise, has sold remarkably well. Its sequel, What Happiness Looks Like, garnered great reviews and was listed as a “Strong Pick” by Midwest Book Review. When a childhood punishment results in a career as a successful [...] a personal tragedy, he claims he’s ready to parent the daughter he abandoned five years ago. Joely is more interested in Dalton, a devoted father to his own son who offers to take care of her the way no man ever has. Should Joely risk her daughter bonding with someone new or with the man who broke her heart? Kate and Joely must re-adjust their vision of what happiness looks like in order to move on.” On the Verge, Lenfestey’s third book, is another story with a horrendously perplexing dilemma. Early into their marriage, the [...] Feature • Haunted Cave: Eddie’s Got the Munchies, by Mark Hunter. Think you’re brave? Then take a trip through Fort Wayne’s Haunted Cave, a place to test your mettle and scream your lungs out. It’s okay to scream in the Haunted Cave. -

Glastonburyminiguide.Pdf

GLASTONBURY 2003 MAP. Produced by Guardian Development. Cover illustrations: John & Wendy. Map data: Simmons Aerofilms. MAP MARKET AREA. INTRODUCTION: GET A LOAD OF THIS... Welcome to Glastonbury 2003 and to the official Glastonbury Festival Mini-Guide. This special edition of the Guardian’s weekly TV and entertainments listings magazine contains all the information you need for a successful and stress-free festival. The Mini-Guide contains comprehensive listings for all the main stages, plus the pick of the acts at Green Fields, Lost and Cabaret Stages, and advice on where to find the best of the weird and wonderful happenings throughout the festival. There are also tips on the bands you shouldn’t miss, a rundown of the many bars dotted around the site, fold-out maps to help you get to grips with the 600 acres of space, and practical advice on everything from lost property to keeping healthy. Additional free copies of this Mini-Guide can be picked up from the Guardian newsstand in the market, the festival information points or the Workers Beer Co bars. To help you keep in touch with all the news from Glastonbury and beyond, the Guardian and Observer are being sold by vendors and from the newsstands at a specially discounted price during the festival. Whatever you want from Glastonbury, we hope this Mini-Guide will help you make the most of it. Have a great festival. Illustration: Andy Watt. ESSENTIAL INFORMATION. INFORMATION POINTS: There are five information points where you can get local, [...] hygiene. Make sure you wash your hands after going to the loo and before eating. MONEY: The NatWest bank is near the [...] give a description. If you lose your children, ask for advice [...] -

Polyphonic Spree Hallelujah Time Everybod

A high school production of Jesus Christ Superstar or annual Branch Davidian jamboree? Polyphonic Spree Hallelujah Time Everybody has voices in their head. Little angels and devils telling us to sing nice gospel tracks about God and videotape yourself having sex with your underage "goddaughter." For Tim DeLaughter, it wasn't a voice so much as a sound. The sound he heard made about as much logical sense as "If you build it, they will come" but has had nearly as magical an effect on those who've witnessed his musical equivalent of a baseball diamond in a cornfield. Like anyone who has had a song lodged in their head, no matter how much you like it, it becomes a nuisance after a while, a backseat driver to all the other things you'd rather think about. A soundtrack that fits on one side of a 45 and loops the last groove into itself. You'd do anything to get it out of your head. So, DeLaughter did something remarkable. He rounded up a posse, and gave birth to one of the more intriguing musical phenomena to hit the scene since Parliament. This is the story of a troupe of 24 choir-robed freaks called the Polyphonic Spree. You might have seen them on the MTV Video Music Awards, introduced by Marilyn Manson who claimed the band was the love child spawned by him and co-presenter Mandy Moore. Maybe the news clip about the mic that was mistaken for a pipe bomb and shut down a major airport. Perhaps you caught them when they opened for David Bowie on his recent tour. -

Practical Seminar Series "Preisverdächtig!" on the Books Nominated for the Deutscher Jugendliteraturpreis 2014

Practical seminar series "Preisverdächtig!" on the books nominated for the Deutscher Jugendliteraturpreis 2014. Children's book workshop: "Making books appetizing, awakening a hunger for reading". Presenter: Bettina Huhn. Books covered: Christian Oster (text) and Katja Gehrmann (illustration), Besuch beim Hasen, translated from the French by Tobias Scheffel, Moritz Verlag, ages 5 and up; Raquel J. Palacio, Wunder, translated from the English by André Mumot, Carl Hanser Verlag, ages 12 and up; Sebastian Cichocki (text), Aleksandra Mizielińska and Daniel Mizieliński (illustration), Sommerschnee und Wurstmaschine: Sehr moderne Kunst aus aller Welt, translated from the Polish by Thomas Weiler, Moritz Verlag, ages 9 and up; Susan Kreller (ed.) and Sabine Wilharm (illustration), Der beste Tag aller Zeiten: Weitgereiste Gedichte, translated from the English by Henning Ahrens and Claas Kazzer, Carlsen Verlag, ages 6 and up; Martina Wildner, Königin des Sprungturms, Beltz & Gelberg, ages 11 and up. Material for download: "special" terms (definitions); "special" terms (table); "Wunder" (characters); "Wunder" (maxims); "Der beste Tag aller Zeiten" (poem templates). Preliminary note: The download is intended for participants of the "Preisverdächtig!" seminar, and its presentation therefore assumes prior knowledge from the seminar. Note that these are exercises that were condensed for the training course. When carrying them out with school classes or other groups of children, the individual steps must be guided and the form adapted to the particular situation and learning group. Welcome circle with clap impulse: In a circle. Everyone rubs their hands warm, then the facilitator passes a clap impulse, together with the word "Hallo", to the right. The impulse should be passed around the circle from participant to participant (abbreviated TN, for Teilnehmer, below) as quickly as possible. Then a clap impulse together with the word "Moin" is passed to the left. -

Record Store Day

Wooden Nickel CD of the Week: John Hubner, Midwest Son, $9.99; U.R.B., Sound It Out, $9.99. Spins: John Hubner, Midwest Son. By now, we’ve heard several iterations of Warsaw, Indiana’s reigning basement indie king: the yearning Rundgren-esque pop from Goodbyewave; the spiky low- and mid-fi energy of Sunnydaymassacre; the more considered and self-contained songwriter presence of the first J. Hubner title, 2010’s Life in Distortion. The guy is a veritable pop factory, and his primary source of fuel appears to be vinyl (of the 33-1/3 variety). So, to call the second J. Hubner release, Midwest Son, a definitive album may be somewhat premature. I mean, he’ll probably have another full-length in the can before I finish this review. Two things stick out immediately upon dropping the virtual nee[...] BACKTRACKS: Talking Heads, More Songs About Buildings and Food (1978). The second album from the Talking Heads was a little more adventurous than their first release, as producer Brian Eno incorporated more of a poppy beat sound into the band’s previously organic, alternative vibe. It opens with the upbeat “Thank You for Sending Me an Angel” and the rapid arrangements and vocals of the genius that is David Byrne. “With Our Love” follows, and you are served up a delightful scratchy guitar from guitarist Jerry Harrison. “The Good Thing” completes the first third of the record, complete with the dependable, blue-collar backing vocals from the rest of this incredible band. [...] This year’s Whammy winners for Best [...] -

Right Arm Resource 071205.Pmd

RIGHT ARM RESOURCE WEEKLY READER JESSE BARNETT [email protected] www.rightarmresource.com 62 CONCERTO COURT, NORTH EASTON, MA 02356 (508) 238-5654 12/5/2007 KT Tunstall “Saving My Face” Keller Williams w/String Cheese Incident “Breathe” The follow up to the #1 AAA smash “Hold On” Most Added! New: KPND, KTBG, KYSL, XM Cafe, KNBA, Most Added! First week: WTTS, WRLT, KPRI, WXRV, KXLY, KRVB, KROK, KMTN, KTAO, WUIN, KDTR, WFHB, KXCI, WUKY... WCLZ, WCOO, WRNR, KPTL... Early: KBCO, KFOG, KGSR, KENZ... In stores Dec 18, ‘12’, his 12th disc, includes the previously unreleased R&R #2 New & Active! Just played on NBC’s Today Show “Freshies” and one standout song from each of his previous 11 albums. Mike Doughty “27 Jennifers” R&R Monitored #1 New & Active! Indicator 7*! Last 2 weeks: WCLZ, KRSH, DMX, WMWV, KDBB... See the Question Jar tour now ON: KFOG, KTCZ, KCUV, WMMM, KXLY, WCOO, WNCS, WRLT, WTTS, WRNR, KPTL, KTHX, WXPK... Ingrid Michaelson “The Way I Am” R&R Monitored 20*! Indicator 13*! FMQB Public 16*! VH-1 XXL Rotation/You Oughta Know Last two weeks: WMMM, WRLT, WTTS, WCLZ, KPND... ON: WXRT, KINK, KTCZ, KMTT, KWMT... Grace Potter & The Nocturnals “Ain’t No Time” R&R Monitored #3 New & Active! Indicator 5*! ON: WMMM, CIDR, WZEW, WRLT, KXLY, KTHX, WNCS, KCUV, WCOO, WCLZ, WDST, WVOD, WFPK, WYEP, KNBA, WMVY, KFMU... On tour now! The Polyphonic Spree “We Crawl” Mark Ronson w/Lily Allen “Oh My God” Remix single on your desk and available at PlayMPE now The second single from Version, originally recorded by Kaiser Chiefs Already on: KOHO, KNBA, KCLC, WJCU, WQNR, KSLU.. -

Razorcake Issue

PO Box 42129, Los Angeles, CA 90042 #17 www.razorcake.com It’s strange the things you learn about yourself when you travel, and the last two trips I took taught me a lot about why I spend so much time working on this toilet topper that you’re reading right now. The first trip was the Perpetual Motion Roadshow, an independent writers touring circuit that took me through seven cities in eight days. One of those cities was Cleveland. While I was there, I scammed my way into the Rock and Roll Hall of Fame. See, they let touring bands in for free, and I knew this, so I masqueraded as the drummer for the all-girl Canadian punk band Sophomore Level Psychology. My facial hair didn’t give me away. Nor did my [...] I took my second trip to go to the wedding of an old friend, Tommy. Tommy and I have been hanging out together since we were about four years old, and we’ve been listening to punk rock together since before a lot of Razorcake readers were born. Tommy came to pick me up from jail when I got arrested for being a smart ass. I dragged the best man out of Tommy’s wedding after the best man dropped his pants at the bar. Friendships like this don’t come along every day. Before the wedding, we had the obligatory bachelor party, which led to the obligatory visit to the strip bar, which led to the obligatory bachelor on stage, drunk and dancing with strippers. -

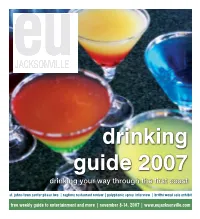

Drinking Your Way Through the First Coast St

drinking guide 2007: drinking your way through the first coast | st. johns town center phase two | ragtime restaurant review | polyphonic spree interview | brittni wood solo exhibit | free weekly guide to entertainment and more | november 8-14, 2007 | www.eujacksonville.com | 2 november 8-14, 2007 | entertaining u newspaper | COVER PHOTO BY ERIN THURSBY, TAKEN AT TWISTED MARTINI. table of contents. feature: Sea & Sky Spectacular, PAGE 13; Town Center - Phase 2, PAGE 14; Town Center - restaurants, PAGE 15; Cafe Eleven Big Trunk Show, PAGE 16; Drinking Guide, PAGES 20-23. movies: Movies in Theaters this Week, PAGES 6-8; Martian Child (movie review), PAGE 6; Fred Claus (movie review), PAGE 7; P2 (movie review), PAGE -

PERSEPHONE a Musical Allegory for the Stage by David Hoffman

PERSEPHONE A Musical Allegory for the Stage By David Hoffman In Concert Performance www.PersephoneOnStage.com July 21, 2010 The Great Room The Alliance of Resident Theaters South Oxford Space This particular story was so important to all of those ancient Greek and Roman dumbbells of whom Ms. Wafers was so dismissive, that they made it the centerpiece, the very gospel, of their most important religious ceremonies – the Mysteries of Demeter at Eleusis – which were held outside of Athens for at least 2,000 years. The initiates at these ceremonies, who included every important classical philosopher, artist, and playwright, and every Roman Emperor until the rise of Christianity, were taught this story, and used it to guide them to a secret truth that they thought to be crucial to their successful entry into the afterlife. What, exactly, this secret was has remained secret (a fact that, itself, is remarkable considering the prolific literary output of those 2,000 years of initiates), but there are layers of importance, and of metaphor in the story that we can find without too much digging. One comes when we analyze Persephone’s motivations: why does she eat the damned pomegranate (literally, the pomegranate of the damned) in the first place? All the retellings of this story suggest that it is that conscious decision, not her initial abduction, that ties her forever to the world of the dead. She chooses to be there, for at least part of the year – to be the wife of this awful god who has abducted her. His approach was god-awful, for sure, but he is the second most powerful person in the universe, and he sees her not as a little girl but as his queen.