Nonlinear Dispersive Equations: Local and Global Analysis Terence

Recommended publications

Dynamics, Equations and Applications Book of Abstracts Session

DYNAMICS, EQUATIONS AND APPLICATIONS: BOOK OF ABSTRACTS, SESSION D21
AGH University of Science and Technology, Kraków, Poland, 16–20 September 2019

CONTENTS

Plenary lectures
Artur Avila, GENERIC CONSERVATIVE DYNAMICS
Alessio Figalli, ON THE REGULARITY OF STABLE SOLUTIONS TO SEMILINEAR ELLIPTIC PDES
Martin Hairer, RANDOM LOOPS
Stanislav Smirnov, 2D PERCOLATION REVISITED
Shing-Tung Yau, STABILITY AND NONLINEAR PDES IN MIRROR SYMMETRY
Maciej Zworski, FROM CLASSICAL TO QUANTUM AND BACK

Public lecture
Alessio Figalli, FROM OPTIMAL TRANSPORT TO SOAP BUBBLES AND CLOUDS: A PERSONAL JOURNEY

Invited talks of part D2
Stefano Bianchini, DIFFERENTIABILITY OF THE FLOW FOR BV VECTOR FIELDS
Yoshikazu Giga, ON THE LARGE TIME BEHAVIOR OF SOLUTIONS TO BIRTH AND SPREAD TYPE EQUATIONS
David Jerison, THE TWO HYPERPLANE CONJECTURE
Sergiu Klainerman, ON THE NONLINEAR STABILITY OF BLACK HOLES
Aleksandr Logunov, ZERO SETS OF LAPLACE EIGENFUNCTIONS
Felix Otto, EFFECTIVE BEHAVIOR OF RANDOM MEDIA
Endre Süli, IMPLICITLY CONSTITUTED FLUID FLOW MODELS: ANALYSIS AND APPROXIMATION
András Vasy, GLOBAL ANALYSIS VIA MICROLOCAL TOOLS: FREDHOLM THEORY IN NON-ELLIPTIC SETTINGS
Luis Vega, THE VORTEX FILAMENT EQUATION, THE TALBOT EFFECT, AND NON-CIRCULAR JETS
Enrique Zuazua, POPULATION DYNAMICS AND CONTROL

Talks of session D21
Giovanni Bellettini, ON THE RELAXED AREA OF THE GRAPH OF NONSMOOTH MAPS FROM THE PLANE TO THE PLANE
Sun-Sig Byun, GLOBAL GRADIENT ESTIMATES FOR NONLINEAR ELLIPTIC PROBLEMS WITH NONSTANDARD GROWTH
Juan Calvo, A BRIEF PERSPECTIVE ON TEMPERED DIFFUSION EQUATIONS
Giacomo Canevari, THE SET OF TOPOLOGICAL SINGULARITIES OF VECTOR-VALUED MAPS

Research Notices

AMERICAN MATHEMATICAL SOCIETY

Research in Collegiate Mathematics Education. V
Annie Selden, Tennessee Technological University, Cookeville, Ed Dubinsky, Kent State University, OH, Guershon Harel, University of California San Diego, La Jolla, and Fernando Hitt, CINVESTAV, Mexico, Editors

This volume presents state-of-the-art research on understanding, teaching, and learning mathematics at the post-secondary level. The articles are peer-reviewed for two major features: (1) advancing our understanding of collegiate mathematics education, and (2) readability by a wide audience of practicing mathematicians interested in issues affecting their students. This is not a collection of scholarly arcana, but a compilation of useful and informative research regarding how students think about and learn mathematics. This series is published in cooperation with the Mathematical Association of America.

CBMS Issues in Mathematics Education, Volume 12; 2003; 206 pages; Softcover; ISBN 0-8218-3302-2; List $49; All individuals $39; Order code CBMATH/12N044

Also of interest

Mathematics Education Research: A Guide for the Research Mathematician
Curtis McKnight, Andy Magid, and Teri J. Murphy, University of Oklahoma, Norman, and Michelynn McKnight, Norman, OK
2000; 106 pages; Softcover; ISBN 0-8218-2016-8; List $20; All AMS members $16; Order code MERN044

Teaching Mathematics in Colleges and Universities: Case Studies for Today's Classroom
Graduate Student Edition Faculty …

Black Hole Formation and Stability: a Mathematical Investigation

BULLETIN (New Series) OF THE AMERICAN MATHEMATICAL SOCIETY, Volume 55, Number 1, January 2018, Pages 1–30
http://dx.doi.org/10.1090/bull/1592
Article electronically published on September 25, 2017

BLACK HOLE FORMATION AND STABILITY: A MATHEMATICAL INVESTIGATION
LYDIA BIERI

Abstract. The dynamics of the Einstein equations feature the formation of black holes. These are related to the presence of trapped surfaces in the spacetime manifold. The mathematical study of these phenomena has gained momentum since D. Christodoulou’s breakthrough result proving that, in the regime of pure general relativity, trapped surfaces form through the focusing of gravitational waves. (The latter were observed for the first time in 2015 by Advanced LIGO.) The proof combines new ideas from geometric analysis and nonlinear partial differential equations, and it introduces new methods to solve large data problems. These methods have many applications beyond general relativity. D. Christodoulou’s result was generalized by S. Klainerman and I. Rodnianski, and more recently by these authors and J. Luk. Here, we investigate the dynamics of the Einstein equations, focusing on these works. Finally, we address the question of stability of black holes and what has been known so far, involving recent works of many contributors.

Contents
1. Introduction
2. Mathematical general relativity
3. Gravitational radiation
4. The formation of closed trapped surfaces
5. Stability of black holes
6. Cosmic censorship
Acknowledgments
About the author
References

1. Introduction
The Einstein equations exhibit singularities that are hidden behind event horizons of black holes. A black hole is a region of spacetime that cannot be observed from infinity.

Meetings & Conferences of the AMS

Meetings & Conferences of the AMS

IMPORTANT INFORMATION REGARDING MEETINGS PROGRAMS: AMS Sectional Meeting programs do not appear in the print version of the Notices. However, comprehensive and continually updated meeting and program information with links to the abstract for each talk can be found on the AMS website. See http://www.ams.org/meetings/. Final programs for Sectional Meetings will be archived on the AMS website accessible from the stated URL and in an electronic issue of the Notices as noted below for each meeting.

Alba Iulia, Romania
University of Alba Iulia
June 27–30, 2013
Thursday – Sunday
Meeting #1091
First Joint International Meeting of the AMS and the Romanian Mathematical Society, in partnership with the “Simion Stoilow” Institute of Mathematics of the Romanian Academy.
Associate secretary: Steven H. Weintraub
Announcement issue of Notices: January 2013
Program first available on AMS website: Not applicable
Program issue of electronic Notices: Not applicable
Issue of Abstracts: Not applicable

Mihnea Popa, University of Illinois at Chicago, Vanishing theorems and holomorphic one-forms.
Dan Timotin, Institute of Mathematics of the Romanian Academy, Horn inequalities: Finite and infinite dimensions.

Special Sessions
Algebraic Geometry, Marian Aprodu, Institute of Mathematics of the Romanian Academy, Mircea Mustata, University of Michigan, Ann Arbor, and Mihnea Popa, University of Illinois, Chicago.
Articulated Systems: Combinatorics, Geometry and Kinematics, Ciprian S. Borcea, Rider University, and Ileana Streinu, Smith College.
Calculus of Variations and Partial Differential Equations, Marian Bocea, Loyola University, Chicago, Liviu Ignat, Institute of Mathematics of the Romanian Academy, Mihai Mihailescu, University of Craiova, and Daniel Onofrei, University of Houston.

The Global Existence Problem in General Relativity

THE GLOBAL EXISTENCE PROBLEM IN GENERAL RELATIVITY
LARS ANDERSSON¹
arXiv:gr-qc/9911032v4 28 Apr 2006
Date: June 27, 2004.

Abstract. We survey some known facts and open questions concerning the global properties of 3+1 dimensional spacetimes containing a compact Cauchy surface. We consider spacetimes with an ℓ-dimensional Lie algebra of space-like Killing fields. For each ℓ ≤ 3, we give some basic results and conjectures on global existence and cosmic censorship.

Contents
1. Introduction
2. The Einstein equations
3. Bianchi
4. G2
5. U(1)
6. 3+1
7. Concluding remarks
Appendix A. Basic Causality concepts
References

1. Introduction
In this review, we will describe some results and conjectures about the global structure of solutions to the Einstein equations in 3+1 dimensions. We consider spacetimes (M̄, ḡ) with an ℓ-dimensional Lie algebra of space-like Killing fields. We may say that such spacetimes have a (local) isometry group G of dimension ℓ with the action of G generated by space-like Killing fields. For each value ℓ ≤ 3 of the dimension of the isometry group, we state the reduced field equations as well as attempt to give an overview of the most important results and conjectures. We will concentrate on the vacuum case. In section 2, we present the Einstein equations and give the 3+1 decomposition into constraint and evolution equations, cf. subsection 2.2. Due to …

¹Supported in part by the Swedish Natural Sciences Research Council, contract no. F-FU 4873-307, and the NSF under contract no. …

Letter from the Chair Celebrating the Lives of John and Alicia Nash

Spring 2016, Issue 5
Department of Mathematics, Princeton University

Letter From the Chair
We cannot look back on the past year without first commenting on the tragic loss of John and Alicia Nash, who died in a car accident on their way home from the airport last May. They were returning from Norway, where John Nash was awarded the 2015 Abel Prize from the Norwegian Academy of Science and Letters. As a 1994 Nobel Prize winner and a senior research mathematician in our department for many years, Nash maintained a steady presence in Fine Hall, and he and Alicia are greatly missed. Their life and work was celebrated during a special event in October.
This has been a very busy and productive year for our department, and we have happily hosted conferences and workshops that have attracted …

Celebrating the Lives of John and Alicia Nash
Returning from one of the crowning achievements of a long and storied career, John Forbes Nash, Jr. and his wife Alicia were killed in a car accident on May 23, 2015, shocking the department, the University, and making headlines around the world.
Nash came to Princeton as a graduate student in 1948. His Ph.D. thesis, “Non-cooperative games” (Annals of Mathematics, Vol 54, No. 2, 286-95) became a seminal work in the then-fledgling field of game theory, and laid the path for his 1994 Nobel Memorial Prize in Economics. After finishing his Ph.D. in 1950, Nash held positions at the Massachusetts Institute of Technology and the Institute for Advanced Study, where the breadth of his work increased.
… 1950s Nash began to suffer from …

January 2002 Prizes and Awards

January 2002 Prizes and Awards
4:25 p.m., Monday, January 7, 2002

PROGRAM
OPENING REMARKS: Ann E. Watkins, President, Mathematical Association of America
BECKENBACH BOOK PRIZE: Mathematical Association of America
BÔCHER MEMORIAL PRIZE: American Mathematical Society
LEVI L. CONANT PRIZE: American Mathematical Society
LOUISE HAY AWARD FOR CONTRIBUTIONS TO MATHEMATICS EDUCATION: Association for Women in Mathematics
ALICE T. SCHAFER PRIZE FOR EXCELLENCE IN MATHEMATICS BY AN UNDERGRADUATE WOMAN: Association for Women in Mathematics
CHAUVENET PRIZE: Mathematical Association of America
FRANK NELSON COLE PRIZE IN NUMBER THEORY: American Mathematical Society
AWARD FOR DISTINGUISHED PUBLIC SERVICE: American Mathematical Society
CERTIFICATES OF MERITORIOUS SERVICE: Mathematical Association of America
LEROY P. STEELE PRIZE FOR MATHEMATICAL EXPOSITION: American Mathematical Society
LEROY P. STEELE PRIZE FOR SEMINAL CONTRIBUTION TO RESEARCH: American Mathematical Society
LEROY P. STEELE PRIZE FOR LIFETIME ACHIEVEMENT: American Mathematical Society
DEBORAH AND FRANKLIN TEPPER HAIMO AWARDS FOR DISTINGUISHED COLLEGE OR UNIVERSITY TEACHING OF MATHEMATICS: Mathematical Association of America
CLOSING REMARKS: Hyman Bass, President, American Mathematical Society

MATHEMATICAL ASSOCIATION OF AMERICA
BECKENBACH BOOK PRIZE
The Beckenbach Book Prize, established in 1986, is the successor to the MAA Book Prize. It is named for the late Edwin Beckenbach, a long-time leader in the publications program of the Association and a well-known professor of mathematics at the University of California at Los Angeles. The prize is awarded for distinguished, innovative books published by the Association.

Citation
Joseph Kirtland
Identification Numbers and Check Digit Schemes
MAA Classroom Resource Materials Series
This book exploits a ubiquitous feature of daily life, identification numbers, to develop a variety of mathematical ideas, such as modular arithmetic, functions, permutations, groups, and symmetries.

Nodal and Multiple Constant Sign Solution for Equations with the P-Laplacian

Progress in Nonlinear Differential Equations and Their Applications, Volume 75

Editor
Haim Brezis, Université Pierre et Marie Curie, Paris, and Rutgers University, New Brunswick, N.J.

Editorial Board
Antonio Ambrosetti, Scuola Internazionale Superiore di Studi Avanzati, Trieste
A. Bahri, Rutgers University, New Brunswick
Felix Browder, Rutgers University, New Brunswick
Luis Caffarelli, Institute for Advanced Study, Princeton
Lawrence C. Evans, University of California, Berkeley
Mariano Giaquinta, University of Pisa
David Kinderlehrer, Carnegie-Mellon University, Pittsburgh
Sergiu Klainerman, Princeton University
Robert Kohn, New York University
P.L. Lions, University of Paris IX
Jean Mawhin, Université Catholique de Louvain
Louis Nirenberg, New York University
Lambertus Peletier, University of Leiden
Paul Rabinowitz, University of Wisconsin, Madison
John Toland, University of Bath

Differential Equations, Chaos and Variational Problems
Vasile Staicu, Editor
Birkhäuser, Basel · Boston · Berlin

Editor: Vasile Staicu, Department of Mathematics, University of Aveiro, 3810-193 Aveiro, Portugal, e-mail: [email protected]

2000 Mathematics Subject Classification: 34, 35, 37, 39, 45, 49, 58, 70, 76, 80, 91, 93
Library of Congress Control Number: 2007935492
Bibliographic information published by Die Deutsche Bibliothek: Die Deutsche Bibliothek lists this publication in the Deutsche Nationalbibliografie; detailed bibliographic data is available in the Internet at <http://dnb.ddb.de>.
ISBN 978-3-7643-8481-4, Birkhäuser Verlag AG, Basel · Boston · Berlin

This work is subject to copyright. All rights are reserved, whether the whole or part of the material is concerned, specifically the rights of translation, reprinting, re-use of illustrations, broadcasting, reproduction on microfilms or in other ways, and storage in data banks. For any kind of use whatsoever, permission from the copyright owner must be obtained.

2002 Bôcher Prize

2002 Bôcher Prize

The 2002 Maxime Bôcher Memorial Prize was awarded at the 108th Annual Meeting of the AMS in San Diego in January 2002.
The Bôcher Prize is awarded every three years for a notable research memoir in analysis that has appeared during the previous five years in a recognized North American journal (until 2001, the prize was usually awarded every five years). Established in 1923, the prize honors the memory of Maxime Bôcher (1867–1918), who was the Society’s second Colloquium Lecturer in 1896 and who served as AMS president during 1909–10. Bôcher was also one of the founding editors of Transactions of the AMS. The prize carries a cash award of $5,000.
The Bôcher Prize is awarded by the AMS Council acting on the recommendation of a selection committee.

… functional framework which has played an important role in the recent breakthrough of T. Tao on the critical regularity for wave maps in two and three dimensions. The work of Tataru and Tao opens up exciting new possibilities in the study of nonlinear wave equations.
The prize also recognizes Tataru’s important work on Strichartz estimates for wave equations with rough coefficients and applications to quasilinear wave equations, as well as his many deep contributions to unique continuation problems.

Biographical Sketch
Daniel Tataru was born on May 6, 1967, in a small city in the northeast of Romania. He received his undergraduate degree in 1990 from the University of Iasi, Romania, and his Ph.D. in 1992 from the Uni… 

Demetrios Christodoulou Autobiography I Was Born in Athens in October 1951

Demetrios Christodoulou: Autobiography

I was born in Athens in October 1951. My father Lambros was born in Alexandria to Greek parents from Cyprus who had immigrated to Egypt. My paternal grandfather, Miltiades, was from Agios Theodoros and my paternal grandmother Eleni was from Choirokoitia. My mother Maria was born in Athens to a family of Greek refugees from Asia Minor. When I was a child, I used to take long walks with my father in the environs of the ancient monuments and he used to inspire me with stories from the distant past when ancient Greece had made outstanding contributions to human civilization. My paternal grandparents left Egypt and settled in Greece in the 1950s, so I also had the opportunity to listen to many wonderful stories from my grandfather about his adventures as a youth in Cyprus during the last part of the 19th century. My father used to take me to a movie theater for children which showed documentaries. I still remember how impressed I was on seeing a documentary about Einstein. A problem in Euclidean geometry was the spark which initiated in me, at the age of 14, a burning interest in mathematics and theoretical physics. Within a couple of years I became fascinated with the concepts of space and time, with Riemann's geometry and Einstein's relativity. My case was brought to the attention of Achilles Papapetrou, a Greek physicist at the Institut Henri Poincaré, who in turn contacted Princeton physics professor John Wheeler, on leave in Paris at that time. So in the beginning of 1968 I came to Paris and was examined by them.

On the Future Stability of Cosmological Solutions to Einstein's Equations with Accelerated Expansion

On the future stability of cosmological solutions to Einstein's equations with accelerated expansion
Hans Ringström

Abstract. The solutions of Einstein's equations used by physicists to model the universe have a high degree of symmetry. In order to verify that they are reasonable models, it is therefore necessary to demonstrate that they are future stable under small perturbations of the corresponding initial data. The purpose of this contribution is to describe mathematical results that have been obtained on this topic. A question which turns out to be related concerns the topology of the universe: what limitations do the observations impose? Using methods similar to ones arising in the proof of future stability, it is possible to construct solutions with arbitrary closed spatial topology. The existence of these solutions indicates that the observations might not impose any limitations at all.

Mathematics Subject Classification (2010). Primary 83C05; Secondary 35Q76.
Keywords. General relativity, stability of solutions, Einstein-Vlasov system.

1. Introduction
In 1915, the interpretation of gravitational forces was fundamentally altered by the introduction of Einstein's general theory of relativity. The underlying mathematical structures were not well understood at the time, and as a consequence, some of the fundamental questions have only recently been phrased in the form of mathematical problems. Since Einstein's equations are not as commonly studied in mathematics as many other equations that appear in physics, we here wish to give a brief description of their origin and of how different perspectives on them have developed since the inception of general relativity.

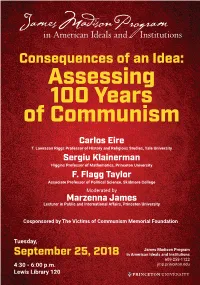

Consequences of an Idea: Assessing 100 Years of Communism

Consequences of an Idea: Assessing 100 Years of Communism

Carlos Eire, T. Lawrason Riggs Professor of History and Religious Studies, Yale University
Sergiu Klainerman, Higgins Professor of Mathematics, Princeton University
F. Flagg Taylor, Associate Professor of Political Science, Skidmore College
Moderated by Marzenna James, Lecturer in Public and International Affairs, Princeton University
Cosponsored by The Victims of Communism Memorial Foundation

Tuesday, September 25, 2018, 4:30 - 6:00 p.m., Lewis Library 120
James Madison Program in American Ideals and Institutions, 609-258-1122, jmp.princeton.edu

CARLOS EIRE was born in Havana in 1950 and fled to the United States without his parents at the age of eleven. After living in a series of foster homes for three years, he was reunited with his mother in Chicago, but his father was never allowed to leave Cuba. He is now the T. L. Riggs Professor of History and Religious Studies at Yale University, where he has served as chair of the Department of Religious Studies and the Renaissance Studies Program. He has taught at St. John’s University in Minnesota and the University of Virginia, has been a Fulbright scholar in Spain, a Fellow of the Institute for Advanced Study in Princeton, and a member of the Lilly Foundation’s Seminar in Lived Theology. He is the author of several scholarly books, including War Against the Idols (1986), From Madrid to Purgatory (1995), A Very Brief History of Eternity (2009), and Reformations: The Early Modern World (2016), which won the Hawkins Award from the Association of American Publishers. A past president of the American Society for Reformation Research, he is best known outside scholarly circles as the author of the memoir Waiting for Snow in Havana (2003), which won the nonfiction National Book Award, and his second memoir, Learning to Die in Miami (2010).