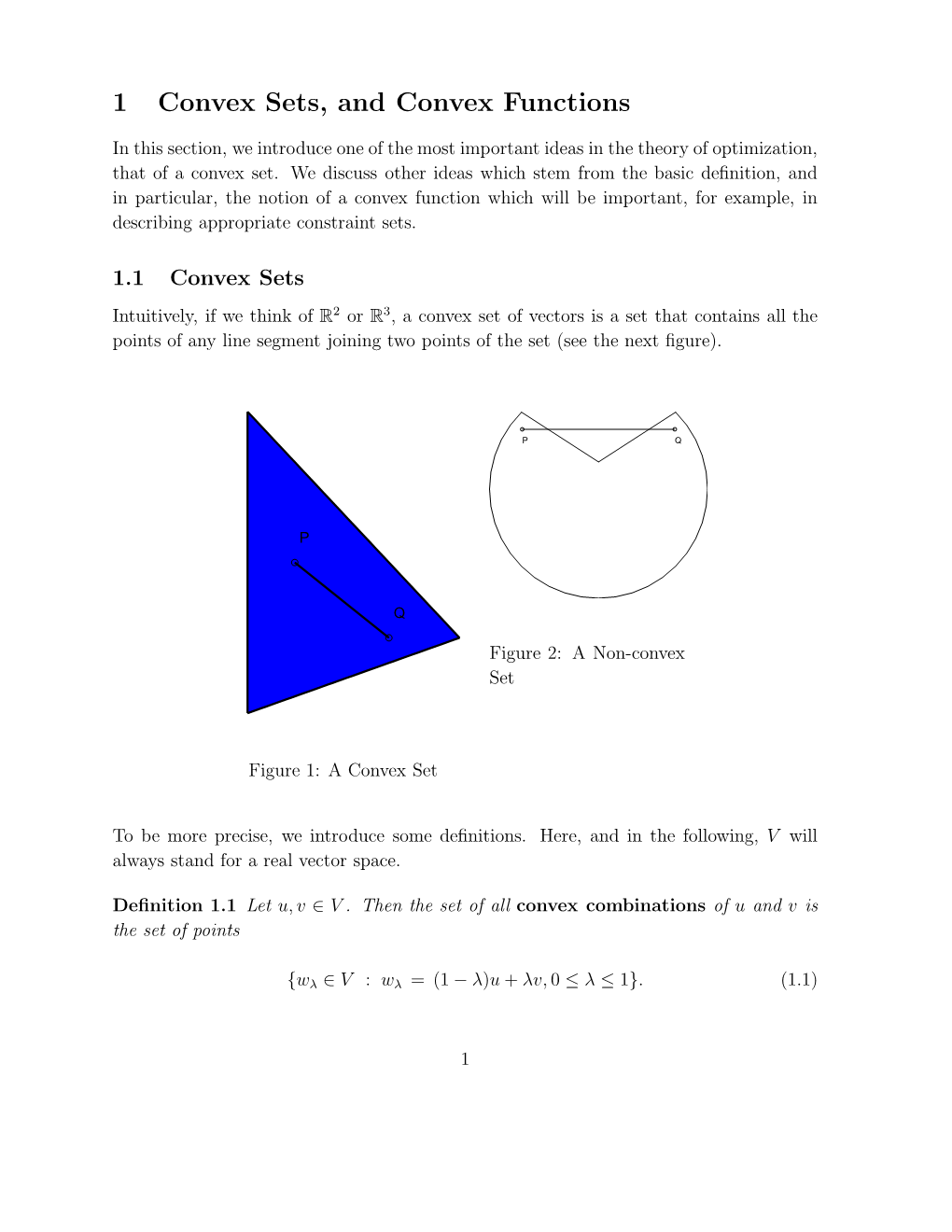

1 Convex Sets, and Convex Functions

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


Computational Geometry – Problem Session Convex Hull & Line Segment Intersection

Computational Geometry – Problem Session: Convex Hull & Line Segment Intersection. Lehrstuhl für Algorithmik · Institut für Theoretische Informatik · Fakultät für Informatik. Guido Brückner, 04.05.2018. Modus Operandi: To register for the oral exam we expect you to present an original solution for at least one problem in the exercise session. • This is about working together. • Don't worry if your idea doesn't work! Outline: Convex Hull, Line Segment Intersection. Definition of Convex Hull. Def: A region $S \subseteq \mathbb{R}^2$ is called convex if, for any two points $p, q \in S$, the line segment $\overline{pq} \subseteq S$. The convex hull $CH(S)$ of $S$ is the smallest convex region containing $S$. In physics:
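The definition of the convex hull above can be made concrete for a finite point set. The following is a minimal sketch, not taken from the slides: it uses Andrew's monotone chain algorithm, and the names `cross` and `convex_hull` are illustrative choices.

```python
def cross(o, a, b):
    """Twice the signed area of triangle o-a-b; positive for a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull(points):
    """Vertices of the convex hull of 2D points, in counter-clockwise order.

    Andrew's monotone chain: sort the points, then build the lower and
    upper chains, discarding any point that does not make a strict left turn.
    """
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts
    lower = []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    upper = []
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    # The last point of each chain is the first point of the other one.
    return lower[:-1] + upper[:-1]


square_with_interior_point = [(0, 0), (1, 0), (1, 1), (0, 1), (0.5, 0.5)]
hull = convex_hull(square_with_interior_point)
```

Sorting dominates the running time, so this sketch runs in O(n log n) for n input points. Collinear boundary points are dropped because the turn test uses `<= 0`.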

On Quasi Norm Attaining Operators Between Banach Spaces

ON QUASI NORM ATTAINING OPERATORS BETWEEN BANACH SPACES. GEUNSU CHOI, YUN SUNG CHOI, MINGU JUNG, AND MIGUEL MARTÍN. Abstract. We provide a characterization of the Radon-Nikodým property in terms of the denseness of bounded linear operators which attain their norm in a weak sense, which complement the one given by Bourgain and Huff in the 1970's. To this end, we introduce the following notion: an operator $T : X \to Y$ between the Banach spaces $X$ and $Y$ is quasi norm attaining if there is a sequence $(x_n)$ of norm one elements in $X$ such that $(T x_n)$ converges to some $u \in Y$ with $\|u\| = \|T\|$. Norm attaining operators in the usual (or strong) sense (i.e. operators for which there is a point in the unit ball where the norm of its image equals the norm of the operator) and also compact operators satisfy this definition. We prove that strong Radon-Nikodým operators can be approximated by quasi norm attaining operators, a result which does not hold for norm attaining operators in the strong sense. This shows that this new notion of quasi norm attainment allows to characterize the Radon-Nikodým property in terms of denseness of quasi norm attaining operators for both domain and range spaces, completing thus a characterization by Bourgain and Huff in terms of norm attaining operators which is only valid for domain spaces and it is actually false for range spaces (due to a celebrated example by Gowers of 1990). A number of other related results are also included in the paper: we give some positive results on the denseness of norm attaining Lipschitz maps, norm attaining multilinear maps and norm attaining polynomials, characterize both finite dimensionality and reflexivity in terms of quasi norm attaining operators, discuss conditions to obtain that quasi norm attaining operators are actually norm attaining, study the relationship with the norm attainment of the adjoint operator and, finally, present some stability results.

First Order Characterizations of Pseudoconvex Functions

Serdica Math. J. 27 (2001), 203-218. FIRST ORDER CHARACTERIZATIONS OF PSEUDOCONVEX FUNCTIONS. Vsevolod Ivanov Ivanov. Communicated by A. L. Dontchev. Abstract. First order characterizations of pseudoconvex functions are investigated in terms of generalized directional derivatives. A connection with the invexity is analysed. Well-known first order characterizations of the solution sets of pseudolinear programs are generalized to the case of pseudoconvex programs. The concepts of pseudoconvexity and invexity do not depend on a single definition of the generalized directional derivative. 2000 Mathematics Subject Classification: 26B25, 90C26, 26E15. Key words: Generalized convexity, nonsmooth function, generalized directional derivative, pseudoconvex function, quasiconvex function, invex function, nonsmooth optimization, solution sets, pseudomonotone generalized directional derivative. 1. Introduction. Three characterizations of pseudoconvex functions are considered in this paper. The first is new. It is well-known that each pseudoconvex function is invex. Then the following question arises: what is the type of the function $\eta$ from the definition of invexity, when the invex function is pseudoconvex. This question is considered in Section 3, and a first order necessary and sufficient condition for pseudoconvexity of a function is given there. It is shown that the class of strongly pseudoconvex functions, considered by Weir [25], coincides with pseudoconvex ones. The main result of Section 3 is applied to characterize the solution set of a nonlinear programming problem in Section 4. The base results of Jeyakumar and Yang in the paper [13] are generalized there to the case, when the function is pseudoconvex.

Convexity I: Sets and Functions

Convexity I: Sets and Functions. Ryan Tibshirani, Convex Optimization 10-725/36-725. See supplements for reviews of • basic real analysis • basic multivariate calculus • basic linear algebra. Last time: why convexity? Simply put: because we can broadly understand and solve convex optimization problems. Nonconvex problems are mostly treated on a case by case basis. Reminder: a convex optimization problem is of the form $\min_{x \in D} f(x)$ subject to $g_i(x) \le 0$, $i = 1, \ldots, m$, and $h_j(x) = 0$, $j = 1, \ldots, r$, where $f$ and $g_i$, $i = 1, \ldots, m$ are all convex, and $h_j$, $j = 1, \ldots, r$ are affine. Special property: any local minimizer is a global minimizer. Outline. Today: • Convex sets • Examples • Key properties • Operations preserving convexity • Same for convex functions. Convex sets. Convex set: $C \subseteq \mathbb{R}^n$ such that $x, y \in C \implies tx + (1 - t)y \in C$ for all $0 \le t \le 1$. In words, line segment joining any two elements lies entirely in set. [Figure 2.2: Some simple convex and nonconvex sets. Left. The hexagon, which includes its boundary (shown darker), is convex. Middle. The kidney shaped set is not convex, since the line segment between the two points in the set shown as dots is not contained in the set. Right. The square contains some boundary points but not others, and is not convex.] Convex combination of $x_1, \ldots, x_k \in \mathbb{R}^n$: any linear combination $\theta_1 x_1 + \cdots + \theta_k x_k$ with $\theta_i \ge 0$, $i = 1, \ldots, k$, and $\sum_{i=1}^{k} \theta_i = 1$. Convex hull of a set $C$, $\operatorname{conv}(C)$, is all convex combinations of elements. Always convex. [Figure 2.3: The convex hulls of two sets in $\mathbb{R}^2$.]
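The convex-combination definition above translates directly into a few lines of code. This is a small sketch rather than anything from the lecture: `convex_combination` and its tolerance parameter `tol` are hypothetical names, and points are assumed to be tuples of floats of equal length.

```python
def convex_combination(points, weights, tol=1e-9):
    """Return sum_i weights[i] * points[i], checking weights >= 0 and sum(weights) == 1."""
    if not points or len(points) != len(weights):
        raise ValueError("need one weight per point and at least one point")
    if any(w < -tol for w in weights):
        raise ValueError("weights must be nonnegative")
    if abs(sum(weights) - 1.0) > tol:
        raise ValueError("weights must sum to 1")
    dim = len(points[0])
    return tuple(sum(w * p[i] for w, p in zip(weights, points)) for i in range(dim))


# A point of the triangle with vertices (0, 0), (2, 0), (0, 2).
triangle = [(0.0, 0.0), (2.0, 0.0), (0.0, 2.0)]
inside = convex_combination(triangle, [0.5, 0.25, 0.25])
```

With $k = 2$ points and weights $(t, 1 - t)$ this reduces to the line-segment condition in the definition of a convex set.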

POLARS and DUAL CONES 1. Convex Sets

POLARS AND DUAL CONES. VERA ROSHCHINA. Abstract. The goal of this note is to recall the basic definitions of convex sets and their polars. For more details see the classic references [1, 2] and [3] for polytopes. 1. Convex sets and convex hulls. A convex set has no indentations, i.e. it contains all line segments connecting points of this set. Definition 1 (Convex set). A set $C \subseteq \mathbb{R}^n$ is called convex if for any $x, y \in C$ the point $\lambda x + (1 - \lambda) y$ also belongs to $C$ for any $\lambda \in [0, 1]$. The sets shown in Fig. 1 are convex: the first set has a smooth boundary, the second set is a convex polygon. [Figure 1. Convex sets.] Singletons and lines are also convex sets, and linear subspaces are convex. Some nonconvex sets are shown in Fig. 2. [Figure 2. Nonconvex sets.] A line segment connecting two points $x, y \in \mathbb{R}^n$ is denoted by $[x, y]$, so we have $[x, y] = \{(1 - \alpha)x + \alpha y,\ \alpha \in [0, 1]\}$. Likewise, we can consider open line segments $(x, y) = \{(1 - \alpha)x + \alpha y,\ \alpha \in (0, 1)\}$ and half open segments such as $[x, y)$ and $(x, y]$. Clearly any line segment is a convex set. The notion of a line segment connecting two points can be generalised to an arbitrary finite set by means of a convex combination. Given a finite set $\{x_1, x_2, \ldots, x_p\} \subset \mathbb{R}^n$, a point $x \in \mathbb{R}^n$ is the convex combination of $x_1, x_2, \ldots, x_p$ if it can be represented as $x = \sum_{i=1}^{p} \alpha_i x_i$, with $\alpha_i \ge 0$ for all $i = 1, \ldots, p$ and $\sum_{i=1}^{p} \alpha_i = 1$. Given an arbitrary set $S \subseteq \mathbb{R}^n$, we can consider all possible finite convex combinations of points from this set.

Locally Solid Riesz Spaces with Applications to Economics / Charalambos D

http://dx.doi.org/10.1090/surv/105 Charalambos D. Aliprantis, Owen Burkinshaw. EDITORIAL COMMITTEE: Jerry L. Bona, Michael P. Loss, Peter S. Landweber (Chair), Tudor Stefan Ratiu, J. T. Stafford. 2000 Mathematics Subject Classification. Primary 46A40, 46B40, 47B60, 47B65, 91B50; Secondary 28A33. Selected excerpts in this Second Edition are reprinted with the permissions of Cambridge University Press, the Canadian Mathematical Bulletin, Elsevier Science/Academic Press, and the Illinois Journal of Mathematics. For additional information and updates on this book, visit www.ams.org/bookpages/surv-105 Library of Congress Cataloging-in-Publication Data: Aliprantis, Charalambos D. Locally solid Riesz spaces with applications to economics / Charalambos D. Aliprantis, Owen Burkinshaw. -- 2nd ed. p. cm. -- (Mathematical surveys and monographs, ISSN 0076-5376 ; v. 105) Rev. ed. of: Locally solid Riesz spaces. 1978. Includes bibliographical references and index. ISBN 0-8218-3408-8 (alk. paper) 1. Riesz spaces. 2. Economics, Mathematical. I. Burkinshaw, Owen. II. Aliprantis, Charalambos D. III. Locally solid Riesz spaces. IV. Title. V. Mathematical surveys and monographs ; no. 105. QA322 .A39 2003 bib'.73 -- dc22 2003057948 Copying and reprinting. Individual readers of this publication, and nonprofit libraries acting for them, are permitted to make fair use of the material, such as to copy a chapter for use in teaching or research. Permission is granted to quote brief passages from this publication in reviews, provided the customary acknowledgment of the source is given. Republication, systematic copying, or multiple reproduction of any material in this publication is permitted only under license from the American Mathematical Society.

On the Ekeland Variational Principle with Applications and Detours

Lectures on The Ekeland Variational Principle with Applications and Detours. By D. G. De Figueiredo. Tata Institute of Fundamental Research, Bombay, 1989. Author: D. G. De Figueiredo, Departmento de Mathematica, Universidade de Brasilia, 70.910 – Brasilia-DF, BRAZIL. © Tata Institute of Fundamental Research, 1989. ISBN 3-540-51179-2 Springer-Verlag, Berlin. Heidelberg. New York. Tokyo. ISBN 0-387-51179-2 Springer-Verlag, New York. Heidelberg. Berlin. Tokyo. No part of this book may be reproduced in any form by print, microfilm or any other means without written permission from the Tata Institute of Fundamental Research, Colaba, Bombay 400 005. Printed by INSDOC Regional Centre, Indian Institute of Science Campus, Bangalore 560012 and published by H. Goetze, Springer-Verlag, Heidelberg, West Germany. PRINTED IN INDIA. Preface. Since its appearance in 1972 the variational principle of Ekeland has found many applications in different fields in Analysis. The best references for those are by Ekeland himself: his survey article [23] and his book with J.-P. Aubin [2]. Not all material presented here appears in those places. Some are scattered around and there lies my motivation in writing these notes. Since they are intended for students I included a lot of related material. Those are the detours. A chapter on Nemytskii mappings may sound strange. However I believe it is useful, since their properties so often used are seldom proved. We always say to the students: go and look in Krasnoselskii or Vainberg! I think some of the proofs presented here are more straightforward. There are two chapters on applications to PDE.

Convex Functions

Convex Functions. Prof. Daniel P. Palomar, ELEC5470/IEDA6100A - Convex Optimization, The Hong Kong University of Science and Technology (HKUST), Fall 2020-21. Outline of Lecture: • Definition of convex function • Examples on $\mathbb{R}$, $\mathbb{R}^n$, and $\mathbb{R}^{n \times n}$ • Restriction of a convex function to a line • First- and second-order conditions • Operations that preserve convexity • Quasi-convexity, log-convexity, and convexity w.r.t. generalized inequalities. (Acknowledgement to Stephen Boyd for material for this lecture.) Definition of Convex Function: • A function $f : \mathbb{R}^n \to \mathbb{R}$ is said to be convex if the domain, $\operatorname{dom} f$, is convex and for any $x, y \in \operatorname{dom} f$ and $0 \le \theta \le 1$, $f(\theta x + (1 - \theta) y) \le \theta f(x) + (1 - \theta) f(y)$. • $f$ is strictly convex if the inequality is strict for $x \ne y$ and $0 < \theta < 1$. • $f$ is concave if $-f$ is convex. Examples on $\mathbb{R}$. Convex functions: • affine: $ax + b$ on $\mathbb{R}$ • powers of absolute value: $|x|^p$ on $\mathbb{R}$, for $p \ge 1$ (e.g., $|x|$) • powers: $x^p$ on $\mathbb{R}_{++}$, for $p \ge 1$ or $p \le 0$ (e.g., $x^2$) • exponential: $e^{ax}$ on $\mathbb{R}$ • negative entropy: $x \log x$ on $\mathbb{R}_{++}$. Concave functions: • affine: $ax + b$ on $\mathbb{R}$ • powers: $x^p$ on $\mathbb{R}_{++}$, for $0 \le p \le 1$ • logarithm: $\log x$ on $\mathbb{R}_{++}$. Examples on $\mathbb{R}^n$: • Affine functions $f(x) = a^T x + b$ are convex and concave on $\mathbb{R}^n$. • Norms $\|x\|$ are convex on $\mathbb{R}^n$ (e.g., $\|x\|_\infty$, $\|x\|_1$, $\|x\|_2$). • Quadratic functions $f(x) = x^T P x + 2 q^T x + r$ are convex on $\mathbb{R}^n$ if and only if $P \succeq 0$.
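The defining inequality can be probed numerically on the one-dimensional examples listed above. The sketch below is an illustration under stated assumptions, not part of the lecture: `violates_convexity` is a hypothetical helper that samples random $x$, $y$, $\theta$ and looks for a violation of $f(\theta x + (1 - \theta) y) \le \theta f(x) + (1 - \theta) f(y)$. Finding a violation shows that the function is not convex on the sampled domain; finding none proves nothing.

```python
import math
import random


def violates_convexity(f, sample, trials=10_000, tol=1e-9, seed=0):
    """Search for (x, y, theta) with f(theta*x + (1-theta)*y) > theta*f(x) + (1-theta)*f(y).

    `sample(rng)` must return a random point of an interval in dom f.
    Returns a counterexample triple, or None when no violation was found.
    """
    rng = random.Random(seed)
    for _ in range(trials):
        x, y, theta = sample(rng), sample(rng), rng.random()
        z = theta * x + (1 - theta) * y
        if f(z) > theta * f(x) + (1 - theta) * f(y) + tol:
            return (x, y, theta)
    return None


def positive(rng):
    """Sample from a bounded piece of the positive reals."""
    return rng.uniform(0.1, 10.0)


# Negative entropy x log x is on the convex list, log x on the concave list.
entropy_result = violates_convexity(lambda x: x * math.log(x), positive)
log_result = violates_convexity(math.log, positive)
```

The tolerance `tol` only absorbs floating-point rounding. A check of this kind is a sanity test; the first- and second-order conditions mentioned in the outline are the reliable tools.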

Sum of Squares and Polynomial Convexity

Sum of Squares and Polynomial Convexity. Amir Ali Ahmadi and Pablo A. Parrilo. Abstract. The notion of sos-convexity has recently been proposed as a tractable sufficient condition for convexity of polynomials based on sum of squares decomposition. A multivariate polynomial $p(x) = p(x_1, \ldots, x_n)$ is said to be sos-convex if its Hessian $H(x)$ can be factored as $H(x) = M^T(x) M(x)$ with a possibly nonsquare polynomial matrix $M(x)$. It turns out that one can reduce the problem of deciding sos-convexity of a polynomial to the feasibility of a semidefinite program, which can be checked efficiently. Motivated by this computational tractability, it has been speculated whether every convex polynomial must necessarily be sos-convex. In this paper, we answer this question in the negative by presenting an explicit example of a trivariate homogeneous polynomial of degree eight that is convex but not sos-convex. [...] semidefiniteness of the Hessian matrix is replaced with the existence of an appropriately defined sum of squares decomposition. As we will briefly review in this paper, by drawing some appealing connections between real algebra and numerical optimization, the latter problem can be reduced to the feasibility of a semidefinite program. Despite the relative recency of the concept of sos-convexity, it has already appeared in a number of theoretical and practical settings. In [6], Helton and Nie use sos-convexity to give sufficient conditions for semidefinite representability of semialgebraic sets. In [7], Lasserre uses sos-convexity to extend Jensen's inequality in convex analysis to linear functionals that are not necessarily probability
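A small worked instance may help fix the definition of sos-convexity. The polynomial below is an illustrative choice, not an example from the paper: its Hessian factors as $M^T(x) M(x)$ with a diagonal polynomial matrix $M(x)$, so it is sos-convex and hence convex.

```latex
\[
p(x_1, x_2) = x_1^4 + x_2^4,
\qquad
H(x) = \begin{pmatrix} 12 x_1^2 & 0 \\ 0 & 12 x_2^2 \end{pmatrix}
     = M^T(x)\, M(x),
\qquad
M(x) = \begin{pmatrix} 2\sqrt{3}\, x_1 & 0 \\ 0 & 2\sqrt{3}\, x_2 \end{pmatrix}.
\]
```

Here the factorization can be read off by inspection. In general the existence of $M(x)$ is decided through a semidefinite feasibility problem, as the abstract states.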

Subgradients

Subgradients. Ryan Tibshirani, Convex Optimization 10-725/36-725. Last time: gradient descent. Consider the problem $\min_x f(x)$ for $f$ convex and differentiable, $\operatorname{dom}(f) = \mathbb{R}^n$. Gradient descent: choose initial $x^{(0)} \in \mathbb{R}^n$, repeat $x^{(k)} = x^{(k-1)} - t_k \cdot \nabla f(x^{(k-1)})$, $k = 1, 2, 3, \ldots$ Step sizes $t_k$ chosen to be fixed and small, or by backtracking line search. If $\nabla f$ Lipschitz, gradient descent has convergence rate $O(1/\epsilon)$. Downsides: • Requires $f$ differentiable (next lecture) • Can be slow to converge (two lectures from now). Outline. Today: crucial mathematical underpinnings! • Subgradients • Examples • Subgradient rules • Optimality characterizations. Subgradients. Remember that for convex and differentiable $f$, $f(y) \ge f(x) + \nabla f(x)^T (y - x)$ for all $x, y$. I.e., linear approximation always underestimates $f$. A subgradient of a convex function $f$ at $x$ is any $g \in \mathbb{R}^n$ such that $f(y) \ge f(x) + g^T (y - x)$ for all $y$. • Always exists • If $f$ differentiable at $x$, then $g = \nabla f(x)$ uniquely • Actually, same definition works for nonconvex $f$ (however, subgradients need not exist). Examples of subgradients. Consider $f : \mathbb{R} \to \mathbb{R}$, $f(x) = |x|$. [Figure: plot of $f(x)$ against $x$.] • For $x \ne 0$, unique subgradient $g = \operatorname{sign}(x)$ • For $x = 0$, subgradient $g$ is any element of $[-1, 1]$. Consider $f : \mathbb{R}^n \to \mathbb{R}$, $f(x) = \|x\|_2$. [Figure: plot of $f(x)$ over $(x_1, x_2)$.] • For $x \ne 0$, unique subgradient $g = x / \|x\|_2$ • For $x = 0$, subgradient $g$ is any element of $\{z : \|z\|_2 \le 1\}$. Consider $f : \mathbb{R}^n \to \mathbb{R}$, $f(x) = \|x\|_1$. [Figure: plot of $f(x)$ over $(x_1, x_2)$.] • For $x_i \ne 0$, unique $i$th component $g_i = \operatorname{sign}(x_i)$ • For $x_i = 0$, $i$th component $g_i$ is any element of $[-1, 1]$.
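The $\ell_1$ example lends itself to a quick numerical check of the subgradient inequality $f(y) \ge f(x) + g^T (y - x)$. The following sketch is not from the slides: the helper names are hypothetical, and at coordinates where $x_i = 0$ it picks $g_i = 0$, which is one admissible choice from $[-1, 1]$.

```python
import random


def l1_norm(x):
    """f(x) = ||x||_1."""
    return sum(abs(xi) for xi in x)


def l1_subgradient(x):
    """One subgradient of the l1 norm at x.

    sign(x_i) where x_i != 0; where x_i == 0 any value in [-1, 1] is valid
    and this sketch picks 0.
    """
    return [(xi > 0) - (xi < 0) for xi in x]


def subgradient_gap(f, g, x, y):
    """f(y) - f(x) - g^T (y - x); nonnegative for all y when g is a subgradient at x."""
    return f(y) - f(x) - sum(gi * (yi - xi) for gi, xi, yi in zip(g, x, y))


x = [2.0, 0.0, -3.0]
g = l1_subgradient(x)

# Evaluate the gap at random test points y; a valid subgradient never gives a negative gap.
rng = random.Random(0)
gaps = [subgradient_gap(l1_norm, g, x, [rng.uniform(-5.0, 5.0) for _ in x])
        for _ in range(1000)]
```

For contrast, a vector such as `[1, 2, -1]` is not a subgradient at this `x`, because its middle component lies outside $[-1, 1]$; the point `y = [2.0, 1.0, -3.0]` then gives a negative gap.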

NP-Hardness of Deciding Convexity of Quartic Polynomials and Related Problems

NP-hardness of Deciding Convexity of Quartic Polynomials and Related Problems. Amir Ali Ahmadi, Alex Olshevsky, Pablo A. Parrilo, and John N. Tsitsiklis. Abstract. We show that unless P=NP, there exists no polynomial time (or even pseudo-polynomial time) algorithm that can decide whether a multivariate polynomial of degree four (or higher even degree) is globally convex. This solves a problem that has been open since 1992 when N. Z. Shor asked for the complexity of deciding convexity for quartic polynomials. We also prove that deciding strict convexity, strong convexity, quasiconvexity, and pseudoconvexity of polynomials of even degree four or higher is strongly NP-hard. By contrast, we show that quasiconvexity and pseudoconvexity of odd degree polynomials can be decided in polynomial time. 1 Introduction. The role of convexity in modern day mathematical programming has proven to be remarkably fundamental, to the point that tractability of an optimization problem is nowadays assessed, more often than not, by whether or not the problem benefits from some sort of underlying convexity. In the famous words of Rockafellar [39]: "In fact the great watershed in optimization isn't between linearity and nonlinearity, but convexity and nonconvexity." But how easy is it to distinguish between convexity and nonconvexity? Can we decide in an efficient manner if a given optimization problem is convex? A class of optimization problems that allow for a rigorous study of this question from a computational complexity viewpoint is the class of polynomial optimization problems. These are optimization problems where the objective is given by a polynomial function and the feasible set is described by polynomial inequalities.

The Ekeland Variational Principle, the Bishop-Phelps Theorem, and The

The Ekeland Variational Principle, the Bishop-Phelps Theorem, and the Brøndsted-Rockafellar Theorem. Our aim is to prove the Ekeland Variational Principle, an abstract result that has found numerous applications in various fields of Mathematics. As an application to Convex Analysis, we provide a proof of the famous Bishop-Phelps Theorem and some related results. Let us recall that the epigraph and the hypograph of a function $f : X \to [-\infty, +\infty]$ (where $X$ is a set) are the following subsets of $X \times \mathbb{R}$: $\operatorname{epi} f = \{(x, t) \in X \times \mathbb{R} : t \ge f(x)\}$, $\operatorname{hyp} f = \{(x, t) \in X \times \mathbb{R} : t \le f(x)\}$. Notice that, if $f > -\infty$ on $X$, then $\operatorname{epi} f \ne \emptyset$ if and only if $f$ is proper, that is, $f$ is finite at at least one point. 0.1. The Ekeland Variational Principle. In what follows, $(X, d)$ is a metric space. Given $\lambda > 0$ and $(x, t) \in X \times \mathbb{R}$, we define the set $K_\lambda(x, t) = \{(y, s) : s \le t - \lambda d(x, y)\} = \{(y, s) : \lambda d(x, y) \le t - s\} \subset X \times \mathbb{R}$. Notice that $K_\lambda(x, t) = \operatorname{hyp}[t - \lambda d(x, \cdot)]$. Let us state some useful properties of these sets. Lemma 0.1. (a) $K_\lambda(x, t)$ is closed and contains $(x, t)$. (b) If $(\bar{x}, \bar{t}) \in K_\lambda(x, t)$ then $K_\lambda(\bar{x}, \bar{t}) \subset K_\lambda(x, t)$. (c) If $(x_n, t_n) \to (x, t)$ and $(x_{n+1}, t_{n+1}) \in K_\lambda(x_n, t_n)$ ($n \in \mathbb{N}$), then $K_\lambda(x, t) = \bigcap_{n \in \mathbb{N}} K_\lambda(x_n, t_n)$. Proof. (a) is quite easy.
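The sets $K_\lambda(x, t)$ are easy to experiment with when $X = \mathbb{R}$ and $d(x, y) = |x - y|$. The sketch below is an illustration under those assumptions, with a hypothetical helper `in_K`. It checks the second half of part (a) and the nesting property (b) of Lemma 0.1 on a small integer grid, where all arithmetic is exact.

```python
from itertools import product


def in_K(lam, x, t, y, s):
    """True when (y, s) lies in K_lam(x, t) for X = R with d(x, y) = |x - y|."""
    return s <= t - lam * abs(x - y)


lam, x, t = 2, 0, 0
grid = range(-3, 4)

# Lemma 0.1 (a), second half: K_lam(x, t) contains its own vertex (x, t).
contains_vertex = in_K(lam, x, t, x, t)

# Lemma 0.1 (b): if (xb, tb) is in K_lam(x, t), then every point of
# K_lam(xb, tb) is also in K_lam(x, t). Collect any counterexamples.
violations = [
    (xb, tb, y, s)
    for xb, tb, y, s in product(grid, repeat=4)
    if in_K(lam, x, t, xb, tb) and in_K(lam, xb, tb, y, s) and not in_K(lam, x, t, y, s)
]
```

The list `violations` stays empty because of the triangle inequality: $s \le \bar{t} - \lambda d(\bar{x}, y)$ and $\bar{t} \le t - \lambda d(x, \bar{x})$ together give $s \le t - \lambda d(x, y)$, which is the argument behind (b).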