The Volumepro Real-Time Ray-Casting System

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Rendering of Feature-Rich Dynamically Changing Volumetric Datasets on GPU

Procedia Computer Science Volume 29, 2014, Pages 648–658 ICCS 2014. 14th International Conference on Computational Science Rendering of Feature-Rich Dynamically Changing Volumetric Datasets on GPU Martin Schreiber, Atanas Atanasov, Philipp Neumann, and Hans-Joachim Bungartz Technische Universit¨at M¨unchen, Munich, Germany [email protected],[email protected],[email protected],[email protected] Abstract Interactive photo-realistic representation of dynamic liquid volumes is a challenging task for today’s GPUs and state-of-the-art visualization algorithms. Methods of the last two decades consider either static volumetric datasets applying several optimizations for volume casting, or dynamic volumetric datasets with rough approximations to realistic rendering. Nevertheless, accurate real-time visualization of dynamic datasets is crucial in areas of scientific visualization as well as areas demanding for accurate rendering of feature-rich datasets. An accurate and thus realistic visualization of such datasets leads to new challenges: due to restrictions given by computational performance, the datasets may be relatively small compared to the screen resolution, and thus each voxel has to be rendered highly oversampled. With our volumetric datasets based on a real-time lattice Boltzmann fluid simulation creating dynamic cavities and small droplets, existing real-time implementations are not applicable for a realistic surface extraction. This work presents a volume tracing algorithm capable of producing multiple refractions which is also robust to small droplets and cavities. Furthermore we show advantages of our volume tracing algorithm compared to other implementations. Keywords: 1 Introduction The photo-realistic real-time rendering of dynamic liquids, represented by free surface flows and volumetric datasets, has been studied in numerous works [13, 9, 10, 14, 18]. -

Ray Casting Architectures for Volume Visualization

Ray Casting Architectures for Volume Visualization The Harvard community has made this article openly available. Please share how this access benefits you. Your story matters Citation Ray, Harvey, Hanspeter Pfister, Deborah Silver, and Todd A. Cook. 1999. Ray casting architectures for volume visualization. IEEE Transactions on Visualization and Computer Graphics 5(3): 210-223. Published Version doi:10.1109/2945.795213 Citable link http://nrs.harvard.edu/urn-3:HUL.InstRepos:4138553 Terms of Use This article was downloaded from Harvard University’s DASH repository, and is made available under the terms and conditions applicable to Other Posted Material, as set forth at http:// nrs.harvard.edu/urn-3:HUL.InstRepos:dash.current.terms-of- use#LAA Ray Casting Architectures for Volume Visualization Harvey Ray, Hansp eter P ster , Deb orah Silver , Todd A. Co ok Abstract | Real-time visualization of large volume datasets demands high p erformance computation, pushing the stor- age, pro cessing, and data communication requirements to the limits of current technology. General purp ose paral- lel pro cessors have b een used to visualize mo derate size datasets at interactive frame rates; however, the cost and size of these sup ercomputers inhibits the widespread use for real-time visualization. This pap er surveys several sp e- cial purp ose architectures that seek to render volumes at interactive rates. These sp ecialized visualization accelera- tors have cost, p erformance, and size advantages over par- allel pro cessors. All architectures implement ray casting using parallel and pip elined hardware. Weintro duce a new metric that normalizes p erformance to compare these ar- Fig. -

Ray Tracing Height Fields

Ray Tracing Height Fields £ £ £ Huamin Qu£ Feng Qiu Nan Zhang Arie Kaufman Ming Wan † £ Center for Visual Computing (CVC) and Department of Computer Science State University of New York at Stony Brook, Stony Brook, NY 11794-4400 †The Boeing Company, P.O. Box 3707, M/C 7L-40, Seattle, WA 98124-2207 Abstract bility, make the ray tracing approach a promising alterna- tive for rasterization approach when the size of the terrain We present a novel surface reconstruction algorithm is very large [13]. More importantly, ray tracing provides which can directly reconstruct surfaces with different levels more flexibility than hardware rendering. For example, ray of smoothness in one framework from height fields using 3D tracing allows us to operate directly on the image/Z-buffer discrete grid ray tracing. Our algorithm exploits the 2.5D to render special effects such as terrain with underground nature of the elevation data and the regularity of the rect- bunkers, terrain with shadows, and flythrough with a fish- angular grid from which the height field surface is sampled. eye view. In addition, it is easy to incorporate clouds, haze, Based on this reconstruction method, we also develop a hy- flames, and other amorphous phenomena into the scene by brid rendering method which has the features of both ras- ray tracing. Ray tracing can also be used to fill in holes in terization and ray tracing. This hybrid method is designed image-based rendering. to take advantage of GPUs newly available flexibility and processing power. Most ray tracing height field papers [2, 4, 8, 10] focused on fast ray traversal algorithms and antialiasing methods. -

Ray Casting and Rendering

MIT EECS 6.837 Computer Graphics Part 2 – Rendering Today: Intro to Rendering, Ray Casting © NVIDIA Inc. All rights reserved. This content is excluded from our Creative Commons license. For more information, see http://ocw.mit.edu/help/faq-fair-use/. NVIDIA MIT EECS 6.837 – Matusik 1 Cool Artifacts from Assignment 1 © source unknown. All rights reserved. This content is excluded from our Creative Commons license. For more information, see http://ocw.mit.edu/help/faq-fair-use/. 2 Cool Artifacts from Assignment 1 © source unknown. All rights reserved. This content is excluded from our Creative Commons license. For more information, see http://ocw.mit.edu/help/faq-fair-use/. 3 The Story So Far • Modeling – splines, hierarchies, transformations, meshes, etc. • Animation – skinning, ODEs, masses and springs • Now we’ll to see how to generate an image given a scene description! 4 The Remainder of the Term • Ray Casting and Ray Tracing • Intro to Global Illumination – Monte Carlo techniques, photon mapping, etc. • Shading, texture mapping – What makes materials look like they do? • Image-based Rendering • Sampling and antialiasing • Rasterization, z-buffering • Shadow techniques • Graphics Hardware © ACM. All rights reserved. This content is excluded from our Creative Commons [Lehtinen et al. 2008] license. For more information, see http://ocw.mit.edu/help/faq-fair-use/. 5 Today • What does rendering mean? • Basics of ray casting 6 © source unknown. All rights reserved. This content is excluded from our Creative Commons license. For more information, see http://ocw.mit.edu/help/faq-fair-use/. © Oscar Meruvia-Pastor, Daniel Rypl. All rights reserved. -

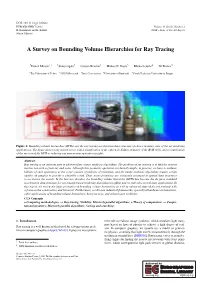

A Survey on Bounding Volume Hierarchies for Ray Tracing

DOI: 10.1111/cgf.142662 EUROGRAPHICS 2021 Volume 40 (2021), Number 2 H. Rushmeier and K. Bühler STAR – State of The Art Report (Guest Editors) A Survey on Bounding Volume Hierarchies for Ray Tracing yDaniel Meister1z yShinji Ogaki2 Carsten Benthin3 Michael J. Doyle3 Michael Guthe4 Jiríˇ Bittner5 1The University of Tokyo 2ZOZO Research 3Intel Corporation 4University of Bayreuth 5Czech Technical University in Prague Figure 1: Bounding volume hierarchies (BVHs) are the ray tracing acceleration data structure of choice in many state of the art rendering applications. The figure shows a ray-traced scene, with a visualization of the otherwise hidden structure of the BVH (left), and a visualization of the success of the BVH in reducing ray intersection operations (right). Abstract Ray tracing is an inherent part of photorealistic image synthesis algorithms. The problem of ray tracing is to find the nearest intersection with a given ray and scene. Although this geometric operation is relatively simple, in practice, we have to evaluate billions of such operations as the scene consists of millions of primitives, and the image synthesis algorithms require a high number of samples to provide a plausible result. Thus, scene primitives are commonly arranged in spatial data structures to accelerate the search. In the last two decades, the bounding volume hierarchy (BVH) has become the de facto standard acceleration data structure for ray tracing-based rendering algorithms in offline and recently also in real-time applications. In this report, we review the basic principles of bounding volume hierarchies as well as advanced state of the art methods with a focus on the construction and traversal. -

Ray Casting Architectures for Volume Visualization

MITSUBISHI ELECTRIC RESEARCH LABORATORIES http://www.merl.com Ray Casting Architectures for Volume Visualization Harvey Ray, Hanspeter Pfister, Deborah Silver, Todd A. Cook TR99-17 April 1999 Abstract Real-time visualization of large volume datasets demands high performance computation, push- ing the storage, processing, and data communication requirements to the limits of current tech- nology. General purpose parallel processors have been used to visualize moderate size datasets at interactive frame rates; however, the cost and size of these supercomputers inhibits the widespread use for real-time visualization. This paper surveys several special purpose architectures that seek to render volumes at interactive rates. These specialized visualization accelerators have cost, per- formance, and size advantages over parallel processors. All architectures implement ray casting using parallel and pipelined hardware. We introduce a new metric that normalizes performance to compare these architectures. The architectures included in this survey are VOGUE, VIRIM, Array Based Ray Casting, EM-Cube, and VIZARD II. We also discuss future applications of special purpose accelerators. IEEE Transactions on Visualization and Computer Graphics This work may not be copied or reproduced in whole or in part for any commercial purpose. Permission to copy in whole or in part without payment of fee is granted for nonprofit educational and research purposes provided that all such whole or partial copies include the following: a notice that such copying is by permission of Mitsubishi Electric Research Laboratories, Inc.; an acknowledgment of the authors and individual contributions to the work; and all applicable portions of the copyright notice. Copying, reproduction, or republishing for any other purpose shall require a license with payment of fee to Mitsubishi Electric Research Laboratories, Inc. -

Ray Casting Architectures for Volume Visualization

Ray Casting Architectures for Volume Visualization Harvey Ray, Hansp eter P ster , Deb orah Silver , Todd A. Co ok Abstract | Real-time visualization of large volume datasets demands high p erformance computation, pushing the stor- age, pro cessing, and data communication requirements to the limits of current technology. General purp ose paral- lel pro cessors have b een used to visualize mo derate size datasets at interactive frame rates; however, the cost and size of these sup ercomputers inhibits the widespread use for real-time visualization. This pap er surveys several sp e- cial purp ose architectures that seek to render volumes at interactive rates. These sp ecialized visualization accelera- tors have cost, p erformance, and size advantages over par- allel pro cessors. All architectures implement ray casting using parallel and pip elined hardware. Weintro duce a new metric that normalizes p erformance to compare these ar- Fig. 1. Volume dataset. chitectures. The architectures included in this survey are VOGUE, VIRIM, Array Based Ray Casting, EM-Cub e, and VIZARD II. We also discuss future applications of sp ecial ume rendering architectures that seek to achieveinteractive purp ose accelerators. volume rendering for rectilinear datasets. A survey of other metho ds used to achieve real time volume rendering is pre- I. Introduction sented in [47]. The motivation for custom volume renderers OLUME visualization is an imp ortant to ol to view is discussed in the next section. Several other custom ar- and analyze large amounts of data from various sci- V chitectures exist [1], [8], [10], [16], [17], [19], [36], [38] but enti c disciplines. -

Proposed Mobile System for Bone Visualization Using Gpu Raycasting

12 International Journal "Information Technologies & Knowledge" Volume 10, Number 1, © 2016 PROPOSED MOBILE SYSTEM FOR BONE VISUALIZATION USING GPU RAYCASTING VOLUME RENDERING TECHNIQUE Yassmin Abdallah, Abdel-Badeeh M.Salem Abstract: Despite the rapid development of mobile phones, the existence of medical visualization applications on stores is rare. This paper represents iOS mobile application that helps medical students and doctors to show bones processed over in an orthopedic surgery. The mobile application implements GPU ray casting algorithm. Experimental results obtained visually by comparing the result to the result obtained from ImageVis3D which use slice based volume rendering technique. ImageVis3D's development was initiated in 2007 by the NIH/NCRR Center for Integrative Biomedical Computing and additionally supported by the DOE Visualization and Analytics Center for Enabling Technologies at the SCI Institute. The result shows that proposed application based on GPU ray- casting technique present better visualized result than ImageVis3D. The dataset used in the experiment is CT images obtained from Osirix datasets for surgical repair of facial deformity. Keywords: mHealth, volume visualization, mobile-based biomedical computing , CT images , GPU ray casting ACM Classification Keywords: D.2: SOFTWARE ENGINEERING , I.3: COMPUTER GRAPHICS , J.3: LIFE AND MEDICAL SCIENCES Introduction During common orthopedic surgery training, students must learn how to perform numerous surgical procedures like fixing fractures which requires training on artificial bones with the usage of surgical tools and implants. These artificial bones have a high cost that depends on the bone's type and quality. Thus the idea of using a computer based simulators for orthopedic surgery training appeared. Simulators will decrease the cost and help students to practice various procedures on a large number of available simulated surgeries in a safe and controlled environment. -

Efficient Ray Casting of Volumetric Images Using Distance Maps For

Computational Visual Media https://doi.org/10.1007/s41095-019-0155-y Vol. 6, No. 1, March 2020, 53–63 Research Article Efficient ray casting of volumetric images using distance maps for empty space skipping Lachlan J. Deakin1 ( ), Mark A. Knackstedt1 c The Author(s) 2019. Abstract Volume and isosurface rendering are methods 1 Introduction of projecting volumetric images to two dimensions for Volumetric images (volumes) are three-dimensional visualisation. These methods are common in medical scalar fields of voxels (volume + pixels). Volumes imaging and scientific visualisation. Head-mounted optical see-through displays have can be acquired with imaging technologies such recently become an affordable technology and are a as Computed Tomography (CT) and Magnetic promising platform for volumetric image visualisation. Resonance Imaging (MRI) which make it possible Images displayed on a head-mounted display must be to see inside opaque physical objects or people. presented at a high frame rate and with low latency Computer graphics hardware and pipelines have to compensate for head motion. High latency can be generally been optimised for rendering surface jarring and may cause cybersickness which has similar representations of scenes. Volumes are not surfaces and symptoms to motion sickness. must be rendered with specialised volume rendering Volumetric images can be very computationally techniques. Volume ray casting is a common volume expensive to render as they often have hundreds of rendering method well suited to acceleration on a millions of scalar values. Fortunately, certain materials Graphical Processing Unit (GPU) [1–3]. Rays are in images such as air surrounding an object boundary projected from each pixel of a display which pass are often made transparent and need not be sampled, through volumes and composite the colours and which improves rendering efficiency. -

Ray Casting of Multiple Volumetric Datasets with Polyhedral Boundaries on Manycore Gpus

Ray Casting of Multiple Volumetric Datasets with Polyhedral Boundaries on Manycore GPUs Bernhard Kainz ∗ Markus Grabner ∗ Alexander Bornik y Stefan Hauswiesner ∗ Judith Muehl ∗ Dieter Schmalstieg ∗ z Figure 1: Examples of our new approach to efficiently combine direct volume rendering with polyhedral geometry. Our renderer is able to render many volumes together with complex geometry. Images from left to right: Rendering a 20k polygons dragon with a 2563 brain volume and nine 643 smoke clouds; three 10k segmented vessels, tumor and liver surface in a ribcage 5123 volume; a segmented brain in an open skull, each a 2563 volume; 35 translucent rods each with 76 polygons placed in an apple with 5123 voxels. Abstract 1 Introduction Ray casting has prevailed as the most versatile approach for direct We present a new GPU-based rendering system for ray casting of volume rendering because of its flexibility in producing high qual- multiple volumes. Our approach supports a large number of vol- ity images of medical datasets, industrial scans and environmental umes, complex translucent and concave polyhedral objects as well effects [Engel et al. 2006]. The large amount of homogeneous data as CSG intersections of volumes and geometry in any combination. contained in a volumetric model lends itself very well to paralleliza- The system (including the rasterization stage) is implemented en- tion. Today even a commodity graphics processing unit (GPU) is tirely in CUDA, which allows full control of the memory hierarchy, capable of ray casting a single high resolution volumetric dataset at in particular access to high bandwidth and low latency shared mem- a high frame rate using hardware-accelerated shaders. -

Fast Walkthroughs with Image Caches and Ray Casting

Fast Walkthroughs with Image Caches and Ray Casting Michael Wimmer1 Markus Giegl2 Dieter Schmalstieg3 Abstract a large part of the screen is covered by a small set of We present an output-sensitive rendering algorithm for polygons that are very near to the observer (in the accelerating walkthroughs of large, densely occluded ‘Near Field’) virtual environments using a multi-stage Image Based another large part of the screen is covered by sky Rendering Pipeline. In the first stage, objects within a pixels that don’t fall into one of these two categories certain distance are rendered using the traditional are usually covered by very small polygons, or even graphics pipeline, whereas the remaining scene is by more than one polygon. rendered by a pixel based approach using an Image Cache, horizon estimation to avoid calculating sky The number of polygons that falls outside a certain pixels, and finally, ray casting. The time complexity of ‘Area of Interest’ is usually much larger than a this approach does not depend on the total number of polygonal renderer can handle - but they still primitives in the scene. We have measured speedups of contribute to the final image. up to one order of magnitude. The main contribution of this paper is a new algorithm CR Categories and Subject Descriptors: I.3.3 for accelerated rendering of such environments that [Computer Graphics]: Picture/Image Generation - exploits the observations listed above: the scene is Display Algorithms, Viewing algorithms; I.3.7 partitioned into a ‘Near Field’ and a ‘Far Field’. [Computer Graphics]: Three-Dimensional Graphics and Following the ideas of Occlusion Culling, the Near Field Realism - Virtual Reality. -

Volume Ray Casting Techniques and Applications Using General Purpose Computations on Graphics Processing Units Michael Romero

Rochester Institute of Technology RIT Scholar Works Theses Thesis/Dissertation Collections 6-1-2009 Volume ray casting techniques and applications using general purpose computations on graphics processing units Michael Romero Follow this and additional works at: http://scholarworks.rit.edu/theses Recommended Citation Romero, Michael, "Volume ray casting techniques and applications using general purpose computations on graphics processing units" (2009). Thesis. Rochester Institute of Technology. Accessed from This Thesis is brought to you for free and open access by the Thesis/Dissertation Collections at RIT Scholar Works. It has been accepted for inclusion in Theses by an authorized administrator of RIT Scholar Works. For more information, please contact [email protected]. Volume Ray Casting Techniques and Applications using General Purpose Computations on Graphics Processing Units by Michael Romero A Thesis Submitted in Partial Fulfillment of the Requirements for the Degree of Master of Science in Computer Engineering Supervised by Professor Dr. Muhammad Shaaban Department of Computer Engineering Kate Gleason College of Engineering Rochester Institute of Technology Rochester, New York June 2009 Approved by: Dr. Muhammad Shaaban Thesis Advisor, Department of Computer Engineering Dr. Roy Melton Committee Member, Department of Computer Engineering Dr. Joe Geigel Committee Member, Department of Computer Science Dr. Reynold Bailey Committee Member, Department of Computer Science Thesis Release Permission Form Rochester Institute of Technology Kate Gleason College of Engineering Title: Volume Ray Casting Techniques and Applications using General Purpose Computations on Graphics Processing Units I, Michael Romero, hereby grant permission to the Wallace Memorial Library to reproduce my thesis in whole or part. Michael Romero Date iii Dedication For my family, for their love and support, and for sharing with me the best times of my life.