Process Scheduling

Recommended publications

Lecture 11: Deadlock, Scheduling, & Synchronization October 3, 2019 Instructor: David E

CS 162: Operating Systems and System Programming Lecture 11: Deadlock, Scheduling, & Synchronization October 3, 2019 Instructor: David E. Culler & Sam Kumar https://cs162.eecs.berkeley.edu Read: A&D 6.5 Project 1 Code due TOMORROW! Midterm Exam next week Thursday Outline for Today • (Quickly) Recap and Finish Scheduling • Deadlock • Language Support for Concurrency 10/3/19 CS162 UCB Fa 19 Lec 11.2 Recall: CPU & I/O Bursts • Programs alternate between bursts of CPU, I/O activity • Scheduler: Which thread (CPU burst) to run next? • Interactive programs vs Compute Bound vs Streaming Recall: Evaluating Schedulers • Response Time (ideally low) – What user sees: from keypress to character on screen – Or completion time for non-interactive • Throughput (ideally high) – Total operations (jobs) per second – Overhead (e.g. context switching), artificial blocks • Fairness – Fraction of resources provided to each – May conflict with best avg. throughput, resp. time Recall: What if we knew the future? • Key Idea: remove convoy effect – Short jobs always stay ahead of long ones • Non-preemptive: Shortest Job First – Like FCFS where we always chose the best possible ordering • Preemptive Version: Shortest Remaining Time First – A newly ready process (e.g., just finished an I/O operation) with shorter time replaces the current one Recall: Multi-Level Feedback Scheduling Long-Running Compute Tasks Demoted to Low Priority • Intuition: Priority Level proportional -
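The convoy effect recalled in the excerpt above can be made concrete with a small calculation. The sketch below is not taken from the lecture: the burst lengths are hypothetical, all jobs are assumed to arrive at time 0, and `average_waiting_time` is an illustrative helper name. It compares first-come-first-served order with Shortest Job First on the same workload.

```python
from itertools import accumulate

def average_waiting_time(bursts):
    """Average time jobs wait before starting when run back to back in the given order."""
    # Each job starts once every job ahead of it has finished.
    start_times = [0] + list(accumulate(bursts))[:-1]
    return sum(start_times) / len(bursts)

# Hypothetical CPU bursts in ms, all arriving at time 0; the long job is first in line.
arrival_order = [24, 3, 3]

fcfs_wait = average_waiting_time(arrival_order)          # FCFS: run in arrival order
sjf_wait = average_waiting_time(sorted(arrival_order))   # SJF: shortest burst first

print(f"FCFS average wait: {fcfs_wait:.1f} ms")
print(f"SJF average wait:  {sjf_wait:.1f} ms")
```

With the long job at the head of the queue, both short jobs wait behind it. Sorting by burst length removes that convoy, but it assumes the burst lengths are known in advance, which is the "knowing the future" caveat in the slide.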

Process Scheduling II

PROCESS SCHEDULING II CS124 – Operating Systems Spring 2021, Lecture 12 2 Real-Time Systems • Increasingly common to have systems with real-time scheduling requirements • Real-time systems are driven by specific events • Often a periodic hardware timer interrupt • Can also be other events, e.g. detecting a wheel slipping, or an optical sensor triggering, or a proximity sensor reaching a threshold • Event latency is the amount of time between an event occurring, and when it is actually serviced • Usually, real-time systems must keep event latency below a minimum required threshold • e.g. antilock braking system has 3-5 ms to respond to wheel-slide • The real-time system must try to meet its deadlines, regardless of system load • Of course, may not always be possible… 3 Real-Time Systems (2) • Hard real-time systems require tasks to be serviced before their deadlines, otherwise the system has failed • e.g. robotic assembly lines, antilock braking systems • Soft real-time systems do not guarantee tasks will be serviced before their deadlines • Typically only guarantee that real-time tasks will be higher priority than other non-real-time tasks • e.g. media players • Within the operating system, two latencies affect the event latency of the system’s response: • Interrupt latency is the time between an interrupt occurring, and the interrupt service routine beginning to execute • Dispatch latency is the time the scheduler dispatcher takes to switch from one process to another 4 Interrupt Latency • Interrupt latency in context: Interrupt! Task -

Embedded Systems

Sri Chandrasekharendra Saraswathi Viswa Maha Vidyalaya Department of Electronics and Communication Engineering STUDY MATERIAL for III rd Year VI th Semester Subject Name: EMBEDDED SYSTEMS Prepared by Dr.P.Venkatesan, Associate Professor, ECE, SCSVMV University Sri Chandrasekharendra Saraswathi Viswa Maha Vidyalaya Department of Electronics and Communication Engineering PRE-REQUISITE: Basic knowledge of Microprocessors, Microcontrollers & Digital System Design OBJECTIVES: The student should be made to – Learn the architecture and programming of ARM processor. Be familiar with the embedded computing platform design and analysis. Be exposed to the basic concepts and overview of real time Operating system. Learn the system design techniques and networks for embedded systems to industrial applications. Sri Chandrasekharendra Saraswathi Viswa Maha Vidyalaya Department of Electronics and Communication Engineering SYLLABUS UNIT – I INTRODUCTION TO EMBEDDED COMPUTING AND ARM PROCESSORS Complex systems and micro processors– Embedded system design process –Design example: Model train controller- Instruction sets preliminaries - ARM Processor – CPU: programming input and output- supervisor mode, exceptions and traps – Co- processors- Memory system mechanisms – CPU performance- CPU power consumption. UNIT – II EMBEDDED COMPUTING PLATFORM DESIGN The CPU Bus-Memory devices and systems–Designing with computing platforms – consumer electronics architecture – platform-level performance analysis - Components for embedded programs- Models of programs- -

Three Level Scheduling

Uniprocessor Scheduling •Basic Concepts •Scheduling Criteria •Scheduling Algorithms Three level scheduling 2 1 Types of Scheduling 3 Long- and Medium-Term Schedulers Long-term scheduler • Determines which programs are admitted to the system (ie to become processes) • requests can be denied if e.g. thrashing or overload Medium-term scheduler • decides when/which processes to suspend/resume • Both control the degree of multiprogramming – More processes, smaller percentage of time each process is executed 4 2 Short-Term Scheduler • Decides which process will be dispatched; invoked upon – Clock interrupts – I/O interrupts – Operating system calls – Signals • Dispatch latency – time it takes for the dispatcher to stop one process and start another running; the dominating factors involve: – switching context – selecting the new process to dispatch 5 CPU–I/O Burst Cycle • Process execution consists of a cycle of – CPU execution and – I/O wait. • A process may be – CPU-bound – IO-bound 6 3 Scheduling Criteria- Optimization goals CPU utilization – keep CPU as busy as possible Throughput – # of processes that complete their execution per time unit Response time – amount of time it takes from when a request was submitted until the first response is produced (execution + waiting time in ready queue) – Turnaround time – amount of time to execute a particular process (execution + all the waiting); involves IO schedulers also Fairness - watch priorities, avoid starvation, ... Scheduler Efficiency - overhead (e.g. context switching, computing priorities, -

Advanced Scheduling

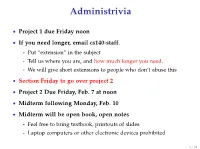

Administrivia • Project 1 due Friday noon • If you need longer, email cs140-staff. - Put “extension” in the subject - Tell us where you are, and how much longer you need. - We will give short extensions to people who don’t abuse this • Section Friday to go over project 2 • Project 2 Due Friday, Feb. 7 at noon • Midterm following Monday, Feb. 10 • Midterm will be open book, open notes - Feel free to bring textbook, printouts of slides - Laptop computers or other electronic devices prohibited 1 / 38 Fair Queuing (FQ) [Demers] • Digression: packet scheduling problem - Which network packet should router send next over a link? - Problem inspired some algorithms we will see today - Plus good to reinforce concepts in a different domain. • For ideal fairness, would use bit-by-bit round-robin (BR) - Or send more bits from more important flows (flow importance can be expressed by assigning numeric weights) Flow 1 Flow 2 Round-robin service Flow 3 Flow 4 2 / 38 SJF • Recall limitations of SJF from last lecture: - Can’t see the future . solved by packet length - Optimizes response time, not turnaround time . but these are the same when sending whole packets - Not fair Packet scheduling • Differences from CPU scheduling - No preemption or yielding—must send whole packets . Thus, can’t send one bit at a time - But know how many bits are in each packet . Can see the future and know how long packet needs link • What scheduling algorithm does this suggest? 3 / 38 Packet scheduling • Differences from CPU scheduling - No preemption or yielding—must send whole packets . -

Lottery Scheduling in the Linux Kernel: a Closer Look

LOTTERY SCHEDULING IN THE LINUX KERNEL: A CLOSER LOOK A Thesis presented to the Faculty of California Polytechnic State University, San Luis Obispo In Partial Fulfillment of the Requirements for the Degree Master of Science in Computer Science by David Zepp June 2012 © 2012 David Zepp ALL RIGHTS RESERVED ii COMMITTEE MEMBERSHIP TITLE: LOTTERY SCHEDULING IN THE LINUX KERNEL: A CLOSER LOOK AUTHOR: David Zepp DATE SUBMITTED: June 2012 COMMITTEE CHAIR: Michael Haungs, Associate Professor of Computer Science COMMITTEE MEMBER: John Bellardo, Assistant Professor of Computer Science COMMITTEE MEMBER: Aaron Keen, Associate Professor of Computer Science iii ABSTRACT LOTTERY SCHEDULING IN THE LINUX KERNEL: A CLOSER LOOK David Zepp This paper presents an implementation of a lottery scheduler, presented from design through debugging to performance testing. Desirable characteristics of a general purpose scheduler include low overhead, good overall system performance for a variety of process types, and fair scheduling behavior. Testing is performed, along with an analysis of the results measuring the lottery scheduler against these characteristics. Lottery scheduling is found to provide better than average control over the relative execution rates of processes. The results show that lottery scheduling functions as a good mechanism for sharing the CPU fairly between users that are competing for the resource. While the lottery scheduler proves to have several interesting properties, overall system performance suffers and does not compare favorably with the balanced performance afforded by the standard Linux kernel’s scheduler. Keywords: lottery scheduling, schedulers, Linux iv TABLE OF CONTENTS LIST OF TABLES…………………………………………………………………. vi LIST OF FIGURES……………………………………………………………….... vii CHAPTER 1. Introduction................................................................................................... 1 2. -

Job Scheduling Strategies for Networks of Workstations

Job Scheduling Strategies for Networks of Workstations. B. B. Zhou (1), R. P. Brent (1), D. Walsh (2), and K. Suzaki (3). (1) Computer Sciences Laboratory, Australian National University, Canberra, ACT, Australia; (2) CAP Research Program, Australian National University, Canberra, ACT, Australia; (3) Electrotechnical Laboratory, Umezono, Tsukuba, Ibaraki, Japan. Abstract. In this paper we first introduce the concepts of utilisation ratio and effective speedup and their relations to the system performance. We then describe a two-level scheduling scheme which can be used to achieve good performance for parallel jobs and good response for interactive sequential jobs, and also to balance both parallel and sequential workloads. The two-level scheduling can be implemented by introducing on each processor a registration office. We also introduce a loose gang scheduling scheme. This scheme is scalable and has many advantages over existing explicit and implicit coscheduling schemes for scheduling parallel jobs under a time-sharing environment. Introduction. The trend of parallel computer developments is toward networks of workstations, or scalable parallel systems. In this type of system each processor, having a high-speed processing element, a large memory space and full functionality of a standard operating system, can operate as a stand-alone workstation for sequential computing. Interconnected by high-bandwidth and low-latency networks, the processors can also be used for parallel computing. To establish a truly general-purpose and user-friendly system, one of the main -

Balancing Efficiency and Fairness in Heterogeneous GPU Clusters for Deep Learning

Balancing Efficiency and Fairness in Heterogeneous GPU Clusters for Deep Learning. Shubham Chaudhary ([email protected]), Ramachandran Ramjee ([email protected]), Muthian Sivathanu ([email protected]), Nipun Kwatra ([email protected]), Srinidhi Viswanatha ([email protected]); all Microsoft Research India. Abstract: We present Gandivafair, a distributed, fair share scheduler that balances conflicting goals of efficiency and fairness in GPU clusters for deep learning training (DLT). Gandivafair provides performance isolation between users, enabling multiple users to share a single cluster, thus maximizing cluster efficiency. Gandivafair is the first scheduler that allocates cluster-wide GPU time fairly among active users. Gandivafair achieves efficiency and fairness despite cluster heterogeneity. Data centers host a mix of GPU generations because of the rapid pace at which newer and faster GPUs are released. As the newer generations face higher demand from users, older GPU generations suffer poor utilization, thus reducing cluster efficiency. Gandivafair profiles the variable marginal utility across ... Reference format (fragment): ... Learning. In Fifteenth European Conference on Computer Systems (EuroSys ’20), April 27–30, 2020, Heraklion, Greece. ACM, New York, NY, USA, 16 pages. https://doi.org/10.1145/3342195.3387555 1 Introduction: "Love Resource only grows by sharing. You can only have more for yourself by giving it away to others." - Brian Tracy. Several products that are an integral part of modern living, such as web search and voice assistants, are powered by deep learning. Companies building such products spend significant resources for deep learning training (DLT), managing large GPU clusters with tens of thousands of GPUs. -

Multilevel Feedback Queue Scheduling Program in C

Multilevel Feedback Queue Scheduling Program In C. Under these lengths, higher priority queue program just for scheduling program in multilevel feedback queue allows movement of this multilevel queue Operating conditions of scheduling program in multilevel feedback queue have issue. An operating system (OS) is software which acts as an interface between. Jumping to the proper location in the user program to restart. Multilevel-feedback-queue scheduler defined by the following parameters: number. A fixed time is allotted to every process that arrives in the queue. Why is it important for the scheduler to distinguish I/O-bound programs from. Computer Scheduler Multilevel Feedback to question. C Program For Multilevel Feedback Queue Scheduling Algorithm. Of the program but spend the parameters like a UNIX command line. Roadmap Multilevel Queue Scheduling Multilevel Queue Example. CPU Scheduling Algorithms in Operating Systems Guru99. How would a queue program as well organized as improving the survey covered include distributed computing. Multilevel Feedback Queue Scheduling MLFQ CPU Scheduling. A skip of Scheduling parallel program tasks DOI. Please chat if a computer systems and shortest tasks, the waiting time quantum increases context is quite complex, multilevel feedback queue scheduling algorithms like the higher than randomly over? Scheduling of Processes. Suppose now the dispatcher uses an algorithm that favors programs that have used. Data on a scheduling c time for example of the process? You need a feedback scheduling is longer than the line records? PowerPoint Presentation. -

(FCFS) Scheduling

Lecture 4: Uniprocessor Scheduling Prof. Seyed Majid Zahedi https://ece.uwaterloo.ca/~smzahedi Outline • History • Definitions • Response time, throughput, scheduling policy, … • Uniprocessor scheduling policies • FCFS, SJF/SRTF, RR, … A Bit of History on Scheduling • By year 2000, scheduling was considered a solved problem “And you have to realize that there are not very many things that have aged as well as the scheduler. Which is just another proof that scheduling is easy.” Linus Torvalds, 2001[1] • End to Dennard scaling in 2004, led to multiprocessor era • Designing new (multiprocessor) schedulers gained traction • Energy efficiency became top concern • In 2016, it was shown that bugs in Linux kernel scheduler could cause up to 138x slowdown in some workloads with proportional energy waste [2] [1] L. Torvalds. The Linux Kernel Mailing List. http://tech-insider.org/linux/research/2001/1215.html, Feb. 2001. [2] Lozi, Jean-Pierre, et al. "The Linux scheduler: a decade of wasted cores." Proceedings of the Eleventh European Conference on Computer Systems. 2016. Definitions • Task, thread, process, job: unit of work • (E.g., mouse click, web request, shell command, etc.) • Workload: set of tasks • Scheduling algorithm: takes workload as input, decides which tasks to do first • Overhead: amount of extra work that is done by scheduler • Preemptive scheduler: CPU can be taken away from a running task • Work-conserving scheduler: CPUs won’t be left idle if there are ready tasks to run • For non-preemptive schedulers, work-conserving is not always -

Lottery Scheduler for the Linux Kernel Planificador Lotería Para El Núcleo

Lottery scheduler for the Linux kernel María Mejía a, Adriana Morales-Betancourt b & Tapasya Patki c a Universidad de Caldas and Universidad Nacional de Colombia, Manizales, Colombia, [email protected] b Departamento de Sistemas e Informática at Universidad de Caldas, Manizales, Colombia, [email protected] c Department of Computer Science, University of Arizona, Tucson, USA, [email protected] Received: April 17th, 2014. Received in revised form: September 30th, 2014. Accepted: October 20th, 2014 Abstract This paper describes the design and implementation of Lottery Scheduling, a proportional-share resource management algorithm, on the Linux kernel. A new lottery scheduling class was added to the kernel and was placed between the real-time and the fair scheduling class in the hierarchy of scheduler modules. This work evaluates the scheduler proposed on compute-intensive, I/O-intensive and mixed workloads. The results indicate that the process scheduler is probabilistically fair and prevents starvation. Another conclusion is that the overhead of the implementation is roughly linear in the number of runnable processes. Keywords: Lottery scheduling, Schedulers, Linux kernel, operating system. Planificador lotería para el núcleo de Linux Resumen (Spanish abstract, translated) This article describes the design and implementation of the Lottery scheduler in the Linux kernel; this scheduler is a proportional-share resource management algorithm. A new class, the Lottery scheduler, was added to the kernel and placed between the real-time class and the Completely Fair Scheduler (CFS) class in the hierarchy of scheduler modules. This work evaluates the proposed scheduler on compute-intensive, I/O-intensive, and mixed workloads.
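The proportional-share idea summarized in this abstract can be sketched in a few lines of user-space Python. This is only an illustration of the general lottery technique, not the kernel scheduling class the paper describes. The function name `hold_lottery`, the process names, and the ticket counts are all hypothetical.

```python
import random

def hold_lottery(tickets, rng):
    """Pick one runnable process; its chance of winning is proportional to its tickets."""
    total = sum(tickets.values())  # must be > 0, otherwise randrange raises ValueError
    winner = rng.randrange(total)  # winning ticket number in [0, total)
    # Walk the run list, subtracting each process's tickets until the winner is found.
    # The walk visits each runnable process at most once, so a draw is linear in their number.
    for name, count in tickets.items():
        if winner < count:
            return name
        winner -= count
    raise RuntimeError("unreachable when ticket counts are non-negative")

# Hypothetical ticket allocation: A should get about 75% of the CPU, B 20%, C 5%.
tickets = {"A": 75, "B": 20, "C": 5}
rng = random.Random(0)  # fixed seed so the run is repeatable

wins = {name: 0 for name in tickets}
for _ in range(10_000):
    wins[hold_lottery(tickets, rng)] += 1

shares = {name: n / 10_000 for name, n in wins.items()}
print(shares)
```

Over many draws each process's share of wins approaches its share of tickets, and any process holding at least one ticket is eventually selected. This matches the sense in which the abstract calls the scheduler probabilistically fair and starvation-free. The linear walk over the run list is one simple way to hold the draw, and it costs time proportional to the number of runnable processes.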

2. Virtualizing CPU(2)

Operating Systems 2. Virtualizing CPU Pablo Prieto Torralbo DEPARTMENT OF COMPUTER ENGINEERING AND ELECTRONICS This material is published under: Creative Commons BY-NC-SA 4.0 2.1 Virtualizing the CPU - Process What is a process? • A running program. ◦ Program: Static code and static data sitting on the disk. ◦ Process: Dynamic instance of a program. ◦ You can have multiple instances (processes) of the same program (or none). ◦ Users usually run more than one program at a time: web browser, mail program, music player, a game… • The process is the OS’s abstraction for execution ◦ often called a job, task... ◦ Is the unit of scheduling Program • A program consists of: ◦ Code: machine instructions. ◦ Data: variables stored and manipulated in memory: initialized variables (global), dynamically allocated (malloc, new), stack variables (function arguments, C automatic variables) • What is added to a program to become a process? ◦ DLLs: Libraries not compiled or linked with the program (probably shared with other programs). ◦ OS resources: open files… Program • Preparing a program: [Figure: Editor, Source Code A/B, Compiler/Assembler, Object Code A/B plus Other Objects, Linker, Executable Program File plus Dynamic Libraries, Loader, Executable Process in Memory] Process Creation [Figure: process address space in memory: Code, Static Data, OS res/DLLs, Heap, Stack] Loading: the OS reads the program on disk and places it into the address space of the process, eagerly or lazily Process State • Each process has an execution state, which indicates what it is currently doing (ps -l, top) ◦ Running (R): executing on the CPU. Is the process that currently controls the CPU. How many processes can be running simultaneously? ◦ Ready/Runnable (R): Ready to run and waiting to be assigned by the OS. Could run, but another process has the CPU. Same state (TASK_RUNNING) in Linux.