Orthogonality

Total Page:16

File Type:pdf, Size:1020Kb


Recommended publications


A New Description of Space and Time Using Clifford Multivectors

James M. Chappell†, Nicolangelo Iannella†, Azhar Iqbal†, Mark Chappell‡, Derek Abbott†. †School of Electrical and Electronic Engineering, University of Adelaide, South Australia 5005, Australia. ‡Griffith Institute, Griffith University, Queensland 4122, Australia. arXiv:1205.5195v2 [math-ph], 11 Oct 2012.

Abstract. Following the development of the special theory of relativity in 1905, Minkowski proposed a unified space and time structure consisting of three space dimensions and one time dimension, with relativistic effects then being natural consequences of this spacetime geometry. In this paper, we illustrate how Clifford’s geometric algebra that utilizes multivectors to represent spacetime, provides an elegant mathematical framework for the study of relativistic phenomena. We show, with several examples, how the application of geometric algebra leads to the correct relativistic description of the physical phenomena being considered. This approach not only provides a compact mathematical representation to tackle such phenomena, but also suggests some novel insights into the nature of time.

Keywords: Geometric algebra, Clifford space, Spacetime, Multivectors, Algebraic framework

1. Introduction. The physical world, based on early investigations, was deemed to possess three independent freedoms of translation, referred to as the three dimensions of space. This naive conclusion is also supported by more sophisticated analysis such as the existence of only five regular polyhedra and the inverse square force laws. If we lived in a world with four spatial dimensions, for example, we would be able to construct six regular solids, and in five dimensions and above we would find only three [1].

Orthogonal Reduction 1 the Row Echelon Form -.: Mathematical

MATH 5330: Computational Methods of Linear Algebra. Lecture 9: Orthogonal Reduction. Xianyi Zeng, Department of Mathematical Sciences, UTEP.

1 The Row Echelon Form

Our target is to solve the normal equation:

$$A^tAx = A^tb, \tag{1.1}$$

where $A \in \mathbb{R}^{m\times n}$ is arbitrary; we have shown previously that this is equivalent to the least squares problem:

$$\min_{x\in\mathbb{R}^n} \|Ax-b\|. \tag{1.2}$$

As $A^tA \in \mathbb{R}^{n\times n}$ is symmetric positive semi-definite, we can try to compute the Cholesky decomposition such that $A^tA = LL^t$ for some lower-triangular matrix $L \in \mathbb{R}^{n\times n}$. One problem with this approach is that we're not fully exploring our information, particularly in Cholesky decomposition we treat $A^tA$ as a single entity in ignorance of the information about $A$ itself.

In particular, the structure $A^tA$ motivates us to study a factorization $A = QE$, where $Q \in \mathbb{R}^{m\times m}$ is orthogonal and $E \in \mathbb{R}^{m\times n}$ is to be determined. Then we may transform the normal equation to:

$$E^tEx = E^tQ^tb, \tag{1.3}$$

where the identity $Q^tQ = I_m$ (the identity matrix in $\mathbb{R}^{m\times m}$) is used. This normal equation is equivalent to the least squares problem with $E$:

$$\min_{x\in\mathbb{R}^n} \|Ex-Q^tb\|. \tag{1.4}$$

Because orthogonal transformation preserves the $L^2$-norm, (1.2) and (1.4) are equivalent to each other. Indeed, for any $x \in \mathbb{R}^n$:

$$\|Ax-b\|^2 = (b-Ax)^t(b-Ax) = (b-QEx)^t(b-QEx) = [Q(Q^tb-Ex)]^t[Q(Q^tb-Ex)]$$
$$= (Q^tb-Ex)^tQ^tQ(Q^tb-Ex) = (Q^tb-Ex)^t(Q^tb-Ex) = \|Ex-Q^tb\|^2.$$

Hence the target is to find an $E$ such that (1.3) is easier to solve.
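The equivalence of (1.2) and (1.4) can be checked numerically. The sketch below is only an illustration under stated assumptions: it takes $E$ to be the upper-trapezoidal factor returned by NumPy's full QR factorization (the excerpt leaves $E$ "to be determined"), and the random test matrix and the names `x_ref`, `x_qr`, `res_A`, `res_E` are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n = 6, 3
A = rng.standard_normal((m, n))   # hypothetical test data
b = rng.standard_normal(m)

# Full factorization A = Q E: Q is m x m orthogonal, E is m x n.
# Assumption: E is the upper-trapezoidal factor from QR.
Q, E = np.linalg.qr(A, mode="complete")

# Reference solution of the least squares problem (1.2).
x_ref = np.linalg.lstsq(A, b, rcond=None)[0]

# Problem (1.4): only the top n rows of E are nonzero, so solve that
# square triangular block against the top n entries of Q^t b.
c = Q.T @ b
x_qr = np.linalg.solve(E[:n, :], c[:n])

# The two residual norms agree because Q preserves the 2-norm.
res_A = np.linalg.norm(A @ x_qr - b)
res_E = np.linalg.norm(E @ x_qr - c)
print(x_ref, x_qr, res_A, res_E)
```

Working with the triangular block of $E$ avoids forming $A^tA$ at all, which is the motivation the excerpt gives for preferring a factorization of $A$ itself.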

Basic Concepts of Linear Algebra by Jim Carrell Department of Mathematics University of British Columbia 2 Chapter 1

Chapter 1. Introductory Comments to the Student

This textbook is meant to be an introduction to abstract linear algebra for first, second or third year university students who are specializing in mathematics or a closely related discipline. We hope that parts of this text will be relevant to students of computer science and the physical sciences. While the text is written in an informal style with many elementary examples, the propositions and theorems are carefully proved, so that the student will get experience with the theorem-proof style. We have tried very hard to emphasize the interplay between geometry and algebra, and the exercises are intended to be more challenging than routine calculations. The hope is that the student will be forced to think about the material.

The text covers the geometry of Euclidean spaces, linear systems, matrices, fields ($\mathbb{Q}$, $\mathbb{R}$, $\mathbb{C}$ and the finite fields $\mathbb{F}_p$ of integers modulo a prime $p$), vector spaces over an arbitrary field, bases and dimension, linear transformations, linear coding theory, determinants, eigen-theory, projections and pseudo-inverses, the Principal Axis Theorem for unitary matrices and applications, and the diagonalization theorems for complex matrices such as the Jordan decomposition. The final chapter gives some applications of symmetric matrices and positive definiteness. We also introduce the notion of a graph and study its adjacency matrix. Finally, we prove the convergence of the QR algorithm. The proof is based on the fact that the unitary group is compact.

Mechanics 84 6.1 2D Particle in Cartesian Coordinates

A Hostetler Handbook v 0.82

Contents

Preface

1 Newton's Laws
  1.1 Many Particles
  1.2 Cartesian Coordinates
  1.3 Polar Coordinates
  1.4 Cylindrical Coordinates
  1.5 Air Resistance, Friction, and Buoyancy
  1.6 Charged Particle in a Magnetic Field
  1.7 Summary: Newton's Laws

2 Momentum and Center of Mass
  2.1 Rocket with no External Force
  2.2 Multistage Rockets
  2.3 Rocket with External Force
  2.4 Center of Mass
  2.5 Angular Momentum of a Single Particle
  2.6 Angular Momentum of a System of Particles
  2.7 Angular Momentum of a Continuous Mass Distribution
  2.8 Summary: Momentum and Center of Mass

3 Energy
  3.1 General One-dimensional Systems
  3.2 Atwood Machine
  3.3 Spherically Symmetric Central Forces
  3.4 The Energy of a System of Particles
  3.5 Summary: Energy

4 Oscillations
  4.1 Simple Harmonic Oscillators
  4.2 Damped Harmonic Oscillators
  4.3 Driven Oscillators
  4.4 Summary: Oscillations

On the Eigenvalues of Euclidean Distance Matrices

Volume 27, N. 3, pp. 237–250, 2008. Copyright © 2008 SBMAC. ISSN 0101-8205. www.scielo.br/cam

On the eigenvalues of Euclidean distance matrices. A.Y. ALFAKIH∗, Department of Mathematics and Statistics, University of Windsor, Windsor, Ontario N9B 3P4, Canada. E-mail: [email protected]

#760/08. Received: 07/IV/08. Accepted: 17/VI/08. ∗Research supported by the Natural Sciences and Engineering Research Council of Canada and MITACS.

Abstract. In this paper, the notion of equitable partitions (EP) is used to study the eigenvalues of Euclidean distance matrices (EDMs). In particular, EP is used to obtain the characteristic polynomials of regular EDMs and non-spherical centrally symmetric EDMs. The paper also presents methods for constructing cospectral EDMs and EDMs with exactly three distinct eigenvalues.

Mathematical subject classification: 51K05, 15A18, 05C50.

Key words: Euclidean distance matrices, eigenvalues, equitable partitions, characteristic polynomial.

1 Introduction

An $n \times n$ nonzero matrix $D = (d_{ij})$ is called a Euclidean distance matrix (EDM) if there exist points $p^1, p^2, \ldots, p^n$ in some Euclidean space $\mathbb{R}^r$ such that $d_{ij} = \|p^i - p^j\|^2$ for all $i, j = 1, \ldots, n$, where $\|\cdot\|$ denotes the Euclidean norm.

Let $p^i$, $i \in N = \{1, 2, \ldots, n\}$, be the set of points that generate an EDM $D$. An $m$-partition $\pi$ of $D$ is an ordered sequence $\pi = (N_1, N_2, \ldots, N_m)$ of nonempty disjoint subsets of $N$ whose union is $N$. The subsets $N_1, \ldots, N_m$ are called the cells of the partition. The $n$-partition of $D$ where each cell consists
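As a small illustration of the definition $d_{ij} = \|p^i - p^j\|^2$, the following sketch builds an EDM from a list of points. The helper name `edm` and the three sample points are hypothetical and do not come from the paper.

```python
import numpy as np

def edm(points):
    """Return the matrix of squared Euclidean distances between the rows of `points`."""
    p = np.asarray(points, dtype=float)
    diff = p[:, None, :] - p[None, :, :]   # all pairwise differences p^i - p^j
    return np.sum(diff ** 2, axis=-1)      # squared norms, so D[i, j] = ||p^i - p^j||^2

# Three hypothetical points in the plane (r = 2).
P = [[0.0, 0.0], [1.0, 0.0], [0.0, 2.0]]
D = edm(P)
print(D)
```

By construction the result is symmetric with a zero diagonal, and its entries are squared distances rather than distances.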

Lecture 4: April 8, 2021 1 Orthogonality and Orthonormality

Mathematical Toolkit, Spring 2021. Lecture 4: April 8, 2021. Lecturer: Avrim Blum (notes based on notes from Madhur Tulsiani).

1 Orthogonality and orthonormality

Definition 1.1. Two vectors $u, v$ in an inner product space are said to be orthogonal if $\langle u, v\rangle = 0$. A set of vectors $S \subseteq V$ is said to consist of mutually orthogonal vectors if $\langle u, v\rangle = 0$ for all $u \neq v$, $u, v \in S$. A set $S \subseteq V$ is said to be orthonormal if $\langle u, v\rangle = 0$ for all $u \neq v$, $u, v \in S$ and $\|u\| = 1$ for all $u \in S$.

Proposition 1.2. A set $S \subseteq V \setminus \{0_V\}$ consisting of mutually orthogonal vectors is linearly independent.

Proposition 1.3 (Gram-Schmidt orthogonalization). Given a finite set $\{v_1, \ldots, v_n\}$ of linearly independent vectors, there exists a set of orthonormal vectors $\{w_1, \ldots, w_n\}$ such that

$$\mathrm{Span}(\{w_1, \ldots, w_n\}) = \mathrm{Span}(\{v_1, \ldots, v_n\}).$$

Proof: By induction. The case with one vector is trivial. Given the statement for $k$ vectors and orthonormal $\{w_1, \ldots, w_k\}$ such that $\mathrm{Span}(\{w_1, \ldots, w_k\}) = \mathrm{Span}(\{v_1, \ldots, v_k\})$, define

$$u_{k+1} = v_{k+1} - \sum_{i=1}^{k} \langle w_i, v_{k+1}\rangle \cdot w_i \quad\text{and}\quad w_{k+1} = \frac{u_{k+1}}{\|u_{k+1}\|}.$$

We can now check that the set $\{w_1, \ldots, w_{k+1}\}$ satisfies the required conditions. Unit length is clear, so let’s check orthogonality:

$$\langle u_{k+1}, w_j\rangle = \langle v_{k+1}, w_j\rangle - \sum_{i=1}^{k} \langle w_i, v_{k+1}\rangle \cdot \langle w_i, w_j\rangle = \langle v_{k+1}, w_j\rangle - \langle w_j, v_{k+1}\rangle = 0.$$

Corollary 1.4. Every finite dimensional inner product space has an orthonormal basis.
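The inductive step in the proof translates directly into a loop. The sketch below is a minimal illustration for real vectors with the standard dot product (an assumption; the notes work in a general inner product space). The function name `gram_schmidt` and the sample vectors are hypothetical.

```python
import numpy as np

def gram_schmidt(vectors):
    """Orthonormalize linearly independent real vectors, in the given order."""
    ws = []
    for v in vectors:
        v = np.asarray(v, dtype=float)
        # u_{k+1} = v_{k+1} - sum_i <w_i, v_{k+1}> w_i
        u = v - sum(np.dot(w, v) * w for w in ws)
        # w_{k+1} = u_{k+1} / ||u_{k+1}||  (nonzero because the inputs are independent)
        ws.append(u / np.linalg.norm(u))
    return np.array(ws)

# Hypothetical linearly independent vectors in R^3.
V = [[1.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 1.0]]
W = gram_schmidt(V)
print(W @ W.T)  # Gram matrix of the output vectors
```

This is the classical form of the procedure; in floating point it can lose orthogonality for nearly dependent inputs, which is one reason library QR routines are usually preferred in practice.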

4.1 RANK of a MATRIX Rank List Given Matrix M, the Following Are Equal

CHAPTER 4 MATRICES CONTINUED

SECTION 4.1 RANK OF A MATRIX

rank list

Given matrix M, the following are equal:
(1) maximal number of ind cols (i.e., dim of the col space of M)
(2) maximal number of ind rows (i.e., dim of the row space of M)
(3) number of cols with pivots in the echelon form of M
(4) number of nonzero rows in the echelon form of M

You know that (1) = (3) and (2) = (4) from Section 3.1. To see that (3) = (4) just stare at some echelon forms. This one number (that all four things equal) is called the rank of M. As a special case, a zero matrix is said to have rank 0.

how row ops affect rank

Row ops don't change the rank because they don't change the max number of ind cols or rows.

example 1

$$\begin{bmatrix} 1 & 2 & -1 & 0 \\ 2 & 4 & -2 & 0 \\ 4 & 8 & -4 & 0 \end{bmatrix}$$ has rank 1 (maximally one ind col, by inspection).

$$\begin{bmatrix} 0 & 0 & 0 \\ 0 & 0 & 0 \end{bmatrix}$$ has rank 0.

example 2

Let
$$M = \begin{bmatrix} 2 & 5 & 4 & 0 \\ 0 & 0 & 0 & 1 \\ -2 & 1 & -1 & 0 \\ 2 & 11 & 7 & 0 \end{bmatrix}.$$

To find the rank of M, use row ops

R3 = R1 + R3
R4 = -R1 + R4
R2 ↔ R3
R4 = -R2 + R4

to get the unreduced echelon form

$$\begin{bmatrix} 2 & 5 & 4 & 0 \\ 0 & 6 & 3 & 0 \\ 0 & 0 & 0 & 1 \\ 0 & 0 & 0 & 0 \end{bmatrix}.$$

Cols 1, 2, 4 have pivots. So the rank of M is 3.

how the rank is limited by the size of the matrix

If A is 7 × 4 then its rank is either 0 (if it's the zero matrix), 1, 2, 3 or 4. The rank can't be 5 or larger because there can't be 5 ind cols when there are only 4 cols to begin with.
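The ranks in examples 1 and 2 can be cross-checked numerically. This sketch is an illustration only: it uses NumPy's `matrix_rank`, which computes the rank from singular values rather than by the row operations described above, and the variable names are hypothetical.

```python
import numpy as np

# Example 1: every row is a multiple of the first.
B = np.array([[1, 2, -1, 0],
              [2, 4, -2, 0],
              [4, 8, -4, 0]])
Z = np.zeros((2, 3))

# Example 2: the matrix M and the unreduced echelon form reached by the row ops.
M = np.array([[ 2,  5,  4, 0],
              [ 0,  0,  0, 1],
              [-2,  1, -1, 0],
              [ 2, 11,  7, 0]])
E = np.array([[2, 5, 4, 0],
              [0, 6, 3, 0],
              [0, 0, 0, 1],
              [0, 0, 0, 0]])

rank_B = np.linalg.matrix_rank(B)
rank_Z = np.linalg.matrix_rank(Z)
rank_M = np.linalg.matrix_rank(M)
rank_E = np.linalg.matrix_rank(E)
print(rank_B, rank_Z, rank_M, rank_E)
```

That M and its echelon form report the same rank is consistent with the statement that row ops don't change the rank.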

Chapter 8. Eigenvalues: Further Applications and Computations

Chapter 8. Eigenvalues: Further Applications and Computations

8.1. Diagonalization of Quadratic Forms

Note. In this section we define a quadratic form and relate it to a vector and matrix product. We define diagonalization of a quadratic form and give an algorithm to diagonalize a quadratic form. The fact that every quadratic form can be diagonalized (using an orthogonal matrix) is claimed by the “Principal Axis Theorem” (Theorem 8.1). It is applied in Section 8.2 to address quadratic surfaces.

Definition. A quadratic form $f(\vec{x}) = f(x_1, x_2, \ldots, x_n)$ in $n$ variables is a polynomial that can be written as

$$f(\vec{x}) = \sum_{i,j=1;\ i\le j}^{n} u_{ij}\, x_i x_j$$

where not all $u_{ij}$ are zero.

Note. For $n = 2$, a quadratic form is $f(x, y) = ax^2 + bxy + cy^2$. The graph of such an equation in the $xy$-plane is a conic section (ellipse, hyperbola, or parabola). For $n = 3$, we have

$$f(x_1, x_2, x_3) = u_{11}x_1^2 + u_{12}x_1x_2 + u_{13}x_1x_3 + u_{22}x_2^2 + u_{23}x_2x_3 + u_{33}x_3^2.$$

We’ll see in the next section that the graph of $f(x_1, x_2, x_3)$ is a quadric surface. We can easily verify that

$$[f(x_1, x_2, x_3)] = [x_1, x_2, x_3] \begin{bmatrix} u_{11} & u_{12} & u_{13} \\ 0 & u_{22} & u_{23} \\ 0 & 0 & u_{33} \end{bmatrix} \begin{bmatrix} x_1 \\ x_2 \\ x_3 \end{bmatrix}.$$

Definition. In the quadratic form $\vec{x}^T U \vec{x}$, the matrix $U$ is the upper-triangular coefficient matrix of the quadratic form.

Example. Page 417 Number 4(a).
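The matrix form of a quadratic form, and its diagonalization by an orthogonal matrix, can be sketched numerically. Everything specific here is an assumption for illustration: the coefficients in `U`, the test point `x`, and the route through the symmetric matrix $A = (U + U^T)/2$ with `numpy.linalg.eigh`, which is a standard way to diagonalize but is not quoted from the text.

```python
import numpy as np

# Hypothetical upper-triangular coefficient matrix U for n = 3.
U = np.array([[1.0, 2.0, 3.0],
              [0.0, 4.0, 5.0],
              [0.0, 0.0, 6.0]])

def f_poly(x):
    """The quadratic form written out term by term from the entries of U."""
    x1, x2, x3 = x
    return (1.0 * x1**2 + 2.0 * x1 * x2 + 3.0 * x1 * x3
            + 4.0 * x2**2 + 5.0 * x2 * x3 + 6.0 * x3**2)

def f_matrix(x, mat):
    """The same form evaluated as x^T mat x."""
    x = np.asarray(x, dtype=float)
    return x @ mat @ x

# The symmetric matrix A defines the same quadratic form as U.
A = (U + U.T) / 2.0

# Orthogonal diagonalization A = C diag(lam) C^T; with t = C^T x,
# the form becomes sum_i lam_i * t_i^2 (no cross terms).
lam, C = np.linalg.eigh(A)

x = np.array([1.0, -2.0, 3.0])
t = C.T @ x
f_diag = np.sum(lam * t**2)
print(f_poly(x), f_matrix(x, U), f_matrix(x, A), f_diag)
```

All four printed values should agree up to rounding, since each is the same form evaluated in a different representation.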

Orthogonality Handout

3.8 (SUPPLEMENT) | ORTHOGONALITY OF EIGENFUNCTIONS

We now develop some properties of eigenfunctions, to be used in Chapter 9 for Fourier Series and Partial Differential Equations.

1. Definition of Orthogonality

We say functions $f(x)$ and $g(x)$ are orthogonal on $a < x < b$ if $\int_a^b f(x)g(x)\,dx = 0$.

[Motivation: Let's approximate the integral with a Riemann sum, as follows. Take a large integer $N$, put $h = (b - a)/N$ and partition the interval $a < x < b$ by defining $x_1 = a + h$, $x_2 = a + 2h$, ..., $x_N = a + Nh = b$. Then

$$\int_a^b f(x)g(x)\,dx \approx f(x_1)g(x_1)h + \cdots + f(x_N)g(x_N)h = (u_N \cdot v_N)h$$

where $u_N = (f(x_1), \ldots, f(x_N))$ and $v_N = (g(x_1), \ldots, g(x_N))$ are vectors containing the values of $f$ and $g$. The vectors $u_N$ and $v_N$ are said to be orthogonal (or perpendicular) if their dot product equals zero ($u_N \cdot v_N = 0$), and so when we let $N \to \infty$ in the above formula it makes sense to say the functions $f$ and $g$ are orthogonal when the integral $\int_a^b f(x)g(x)\,dx$ equals zero.]

Example. $\sin x$ and $\cos x$ are orthogonal on $-\pi < x < \pi$, since $\int_{-\pi}^{\pi} \sin x \cos x\,dx = \tfrac{1}{2}\sin^2 x\,\big|_{-\pi}^{\pi} = 0$.

2. Integration Lemma

Suppose functions $X_n(x)$ and $X_m(x)$ satisfy the differential equations

$$X_n'' + \lambda_n X_n = 0, \quad a < x < b,$$
$$X_m'' + \lambda_m X_m = 0, \quad a < x < b,$$

for some numbers $\lambda_n, \lambda_m$. Then

$$(\lambda_n - \lambda_m)\int_a^b X_n(x)X_m(x)\,dx = \big[X_n(x)X_m'(x) - X_n'(x)X_m(x)\big]_a^b.$$

Proof.
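The Riemann-sum motivation above can be run directly. This is a minimal sketch using the same partition $x_k = a + kh$; the function name `riemann_inner` and the choice $N = 10000$ are hypothetical.

```python
import math

def riemann_inner(f, g, a, b, N=10000):
    """Approximate the integral of f*g over (a, b) by the sum (u_N . v_N) * h."""
    h = (b - a) / N
    # Sample points x_k = a + k*h for k = 1, ..., N, as in the motivation.
    return sum(f(a + k * h) * g(a + k * h) for k in range(1, N + 1)) * h

# sin and cos on (-pi, pi): the sum is numerically zero.
sin_cos = riemann_inner(math.sin, math.cos, -math.pi, math.pi)

# sin against itself is not orthogonal: the integral of sin^2 over (-pi, pi) is pi.
sin_sin = riemann_inner(math.sin, math.sin, -math.pi, math.pi)
print(sin_cos, sin_sin)
```

The second value is included for contrast, to show that a nonzero result is what a non-orthogonal pair looks like under the same sum.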

Inner Products and Orthogonality

Advanced Linear Algebra – Week 5: Inner Products and Orthogonality

This week we will learn about:
• Inner products (and the dot product again),
• The norm induced by the inner product,
• The Cauchy–Schwarz and triangle inequalities, and
• Orthogonality.

Extra reading and watching:
• Sections 1.3.4 and 1.4.1 in the textbook
• Lecture videos 17, 18, 19, 20, 21, and 22 on YouTube
• Inner product space at Wikipedia
• Cauchy–Schwarz inequality at Wikipedia
• Gram–Schmidt process at Wikipedia

Extra textbook problems:
? 1.3.3, 1.3.4, 1.4.1
?? 1.3.9, 1.3.10, 1.3.12, 1.3.13, 1.4.2, 1.4.5(a,d)
??? 1.3.11, 1.3.14, 1.3.15, 1.3.25, 1.4.16
A 1.3.18

There are many times when we would like to be able to talk about the angle between vectors in a vector space $V$, and in particular orthogonality of vectors, just like we did in $\mathbb{R}^n$ in the previous course. This requires us to have a generalization of the dot product to arbitrary vector spaces.

Definition 5.1 (Inner Product). Suppose that $\mathbb{F} = \mathbb{R}$ or $\mathbb{F} = \mathbb{C}$, and $V$ is a vector space over $\mathbb{F}$. Then an inner product on $V$ is a function $\langle\cdot,\cdot\rangle : V \times V \to \mathbb{F}$ such that the following three properties hold for all $c \in \mathbb{F}$ and all $v, w, x \in V$:
a) $\langle v, w\rangle = \overline{\langle w, v\rangle}$ (conjugate symmetry)
b) $\langle v, w + cx\rangle = \langle v, w\rangle + c\langle v, x\rangle$ (linearity in 2nd entry)
c) $\langle v, v\rangle \ge 0$, with equality if and only if $v = 0$.
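As a concrete instance of Definition 5.1, the standard inner product on $\mathbb{C}^n$ can be checked against properties (a) to (c) on sample data. This is a sketch under assumptions: it uses `numpy.vdot`, which conjugates its first argument and is therefore linear in the second entry as the definition requires; the vectors and the scalar `c` are hypothetical.

```python
import numpy as np

def inner(v, w):
    """Standard inner product on C^n: sum of conj(v_i) * w_i."""
    return np.vdot(v, w)

# Hypothetical sample vectors and scalar.
v = np.array([1 + 2j, 3 - 1j])
w = np.array([2 - 1j, 1j])
x = np.array([-1j, 4 + 0j])
c = 0.5 - 2j

# (a) conjugate symmetry
lhs_a, rhs_a = inner(v, w), np.conj(inner(w, v))
# (b) linearity in the 2nd entry
lhs_b, rhs_b = inner(v, w + c * x), inner(v, w) + c * inner(v, x)
# (c) <v, v> is real and nonnegative
vv = inner(v, v)
print(lhs_a, rhs_a, lhs_b, rhs_b, vv)
```

A check on a few vectors illustrates the axioms but does not prove them; the proof is the usual term-by-term computation.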

Rotation Matrix - Wikipedia, the Free Encyclopedia Page 1 of 22

Rotation matrix. From Wikipedia, the free encyclopedia.

In linear algebra, a rotation matrix is a matrix that is used to perform a rotation in Euclidean space. For example the matrix

$$R = \begin{bmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{bmatrix}$$

rotates points in the xy-Cartesian plane counterclockwise through an angle θ about the origin of the Cartesian coordinate system. To perform the rotation, the position of each point must be represented by a column vector v, containing the coordinates of the point. A rotated vector is obtained by using the matrix multiplication Rv (see below for details).

In two and three dimensions, rotation matrices are among the simplest algebraic descriptions of rotations, and are used extensively for computations in geometry, physics, and computer graphics. Though most applications involve rotations in two or three dimensions, rotation matrices can be defined for n-dimensional space.

Rotation matrices are always square, with real entries. Algebraically, a rotation matrix in n dimensions is an n × n special orthogonal matrix, i.e. an orthogonal matrix whose determinant is 1: $R^T = R^{-1}$, $\det R = 1$.

The set of all rotation matrices forms a group, known as the rotation group or the special orthogonal group. It is a subset of the orthogonal group, which includes reflections and consists of all orthogonal matrices with determinant 1 or -1, and of the special linear group, which includes all volume-preserving transformations and consists of matrices with determinant 1.

Contents: 1 Rotations in two dimensions; 1.1 Non-standard orientation
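A short numerical sketch of a two-dimensional rotation matrix, checking that it is special orthogonal. The function name `rotation` and the sample angle and vector are hypothetical choices made for illustration.

```python
import numpy as np

def rotation(theta):
    """2x2 matrix rotating column vectors counterclockwise by theta radians."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s],
                     [s,  c]])

R = rotation(np.pi / 2)          # quarter turn
v = np.array([1.0, 0.0])         # point on the positive x-axis
rotated = R @ v                  # lands (up to rounding) on the positive y-axis

orthogonality_error = np.linalg.norm(R.T @ R - np.eye(2))
det_R = np.linalg.det(R)
print(rotated, orthogonality_error, det_R)
```

Composing two such matrices gives the rotation by the sum of the angles, which is the group structure mentioned above in its simplest form.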

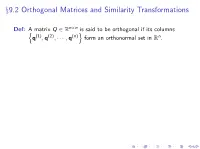

§9.2 Orthogonal Matrices and Similarity Transformations

Def: A matrix $Q \in \mathbb{R}^{n\times n}$ is said to be orthogonal if its columns $\{q^{(1)}, q^{(2)}, \cdots, q^{(n)}\}$ form an orthonormal set in $\mathbb{R}^n$.

Thm: Suppose matrix $Q \in \mathbb{R}^{n\times n}$ is orthogonal. Then
- $Q$ is invertible with $Q^{-1} = Q^T$.
- For any $x, y \in \mathbb{R}^n$, $(Qx)^T(Qy) = x^Ty$.
- For any $x \in \mathbb{R}^n$, $\|Qx\|_2 = \|x\|_2$.

Ex:

$$H = \begin{pmatrix} \tfrac12 & \tfrac12 & \tfrac12 & \tfrac12 \\ -\tfrac12 & \tfrac12 & -\tfrac12 & \tfrac12 \\ -\tfrac12 & -\tfrac12 & \tfrac12 & \tfrac12 \\ \tfrac12 & -\tfrac12 & -\tfrac12 & \tfrac12 \end{pmatrix}, \qquad H^TH = I.$$
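The three statements of the theorem can be checked numerically on the example matrix $H$. This is a sketch on sample data, not a proof; the vectors `x` and `y` are hypothetical.

```python
import numpy as np

# The example matrix H, with all entries equal to +1/2 or -1/2.
H = 0.5 * np.array([[ 1,  1,  1,  1],
                    [-1,  1, -1,  1],
                    [-1, -1,  1,  1],
                    [ 1, -1, -1,  1]], dtype=float)

# Hypothetical test vectors.
x = np.array([1.0, -2.0, 0.5, 3.0])
y = np.array([0.0, 4.0, -1.0, 2.0])

gram = H.T @ H                       # should be the identity
inverse_gap = np.linalg.norm(np.linalg.inv(H) - H.T)
dot_before, dot_after = x @ y, (H @ x) @ (H @ y)
norm_before, norm_after = np.linalg.norm(x), np.linalg.norm(H @ x)
print(gram, inverse_gap, dot_before, dot_after, norm_before, norm_after)
```

Because the inverse of an orthogonal matrix is just its transpose, solving $Hz = b$ needs only the product $H^Tb$, with no elimination.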