DR S: Deep Regression with Region Selection for Camera Quality

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Xerox Confidentcolor Technology Putting Exacting Control in Your Hands

Xerox FreeFlow® The future-thinking Print Server ConfidentColor Technology print server. Brochure It anticipates your needs. With the FreeFlow Print Server, you’re positioned to not only better meet your customers’ demands today, but to accommodate whatever applications you need to print tomorrow. Add promotional messages to transactional documents. Consolidate your data center and print shop. Expand your color-critical applications. Move files around the world. It’s an investment that allows you to evolve and grow. PDF/X support for graphic arts Color management for applications. transactional applications. With one button, the FreeFlow Print Server If you’re a transactional printer, this is the assures that a PDF/X file runs as intended. So print server for you. It supports color profiles when a customer embeds color-management in an IPDS data stream with AFP Color settings in a file using Adobe® publishing Management—so you can print color with applications, you can run that file with less time confidence. Images and other content can be in prepress and with consistent color. Files can incorporated from a variety of sources and reliably be sent to multiple locations and multiple appropriately rendered for accurate results. And printers with predictable results. when you’re ready to expand into TransPromo applications, it’s ready, too. Xerox ConfidentColor Technology Find out more Putting exacting control To learn more about the FreeFlow Print Server and ConfidentColor Technology, contact your Xerox sales representative or call 1-800-ASK-XEROX. Or visit us online at www.xerox.com/freeflow. in your hands. © 2009 Xerox Corporation. All rights reserved. -

1.1 Introduction to the Digital Systems

1.1 Introduction to the digital systems PHO 130 F Digital Photography Prof. Lorenzo Guasti How a DSLR work and why we call a camera “reflex” The heart of all digital cameras is of course the digital imaging sensor. It is the component that converts the light coming from the subject you are photographing into an electronic signal, and ultimately into the digital photograph that you can view or print PHO 130 F Digital Photography Prof. Lorenzo Guasti Although they all perform the same task and operate in broadly the same way, there are in fact th- ree different types of sensor in common use today. The first one is the CCD, or Charge Coupled Device. CCDs have been around since the 1960s, and have become very advanced, however they can be slower to operate than other types of sensor. The main alternative to CCD is the CMOS, or Complimentary Metal-Oxide Semiconductor sen- sor. The main proponent of this technology being Canon, which uses it in its EOS range of digital SLR cameras. CMOS sensors have some of the signal processing transistors mounted alongside the sensor cell, so they operate more quickly and can be cheaper to make. A third but less common type of sensor is the revolutionary Foveon X3, which offers a number of advantages over conventional sensors but is so far only found in Sigma’s range of digital SLRs and its forthcoming DP1 compact camera. I’ll explain the X3 sensor after I’ve explained how the other two types work. PHO 130 F Digital Photography Prof. -

Diy Photography & Jr: Photographic Stickers

1 DIY PHOTOGRAPHY & JR: PHOTOGRAPHIC STICKERS For grade levels 3-12 Developed by: PEITER GRIGA The world now contains more photographs than bricks, and they are, astonishingly, all different. - John Szarkowski Are images the spine and rib cage of our society or are we so saturated in photography that we forget the underlying messages we ‘read’ from images? Are we protected/ supported by images or flooded by them? JR’s work elaborates on the idea of how we value images by demonstrating our likenesses and differences. This dichotomy is so great in his work; JR can eliminate himself as the artist, becoming the egoless ‘guide.’ As part of his Inside Out Project he allows people from all around the world to send him pictures they take of themselves, which he then prints out a poster sized image and returns to the image maker, who then selects a location and hangs the work in a public space. In a traditional sense, he hands the artistic power over to the participant and only controls the printing and specs for the images. The CAC participated in the Inside Out Project as early as 2011, but most recently through installations in Fountain Square, Rabbit Hash, KY, Findlay Market, and inside the CAC Lobby. The power of the image and the location of the image are vital aspects of JR’s site-specific work. Building upon these two factors is the material used to place the image in the public space. His materials usually include wheat paste, paper, and a large scale digitally printed image. -

Digital Photography: the Influence of CCD Pixel-Size on Imaging

IS&T’s 1999 PICS Conference IS&T's 1999 PICS Conference Digital Photography: The Influence of CCD Pixel Size on Imaging Performance Rodney Shaw Hewlett-Packard Research Laboratories Palo Alto, California 94304 Abstract with the question of enlargement, since this is a well-known factor in both analog and digital photography, and can be dealt As digital photography becomes increasingly competitive with with in a separate, well-established manner. traditional analog systems, questions of both comparative and The question here is the applicability of a similar global ultimate performance become of great practical relevance. In set of photographic performance parameters for any given particular the questions of camera speed and of the image digital sensor array, taking into account the complicating ex- sharpness and noise properties are of interest, especially from istence of a grid with a fixed pixel size. However to address the possibility of an opening up of new desirable areas of this question we can take the silver-halide analogy further by photographic performance with new digital technologies. considering the case where a conventional negative image is Clearly the camera format (array size, number of pixels) plays scanned as input to a digital system, which is in fact an in- a prominent role in defining overall photographic perfor- creasingly commonplace activity. In doing so the scanning mance, but it is less clear how the absolute pixel dimensions system implies placing over the film a virtual grid much akin define individual photographic parameters. This present study to the physical grid of sensor arrays. The choice of the grid uses a previously published end-to-end signal-to-noise ratio size is not seen as interfering with the global photographic model to investigate the influence of pixel size on various exposure properties, though clearly it will impose its own reso- aspects of imaging performance. -

An Analytical Study on the Modern History of Digital Photography Keywords

203 Amr Galal An analytical study on the modern history of digital photography Dr. Amr Mohamed Galal Lecturer in Faculty of mass Communication, MISR International University (MIU) Abstract: Keywords: Since its emergence more than thirty years ago, digital photography has undergone Digital Photography rapid transformations and developments. Digital technology has produced Image Sensor (CCD-CMOS) generations of personal computers, which turned all forms of technology into a digital one. Photography received a large share in this development in the making Image Storage (Digital of cameras, sensitive surfaces, image storage, image transfer, and image quality and Memory) clarity. This technology also allowed the photographer to record all his visuals with Playback System a high efficiency that keeps abreast of the age’s requirements and methods of communication. The final form of digital technology was not reached all of a Bayer Color Filter sudden; this development – in spite of its fast pace – has been subject to many LCD display pillars, all of which have contributed to reaching the modern traditional digital Digital Viewfinder. shape of the camera and granted the photographer capabilities he can use to produce images that fulfill their task. Reaching this end before digital technology was quite difficult and required several procedures to process sensitive film and paper material and many chemical processes. Nowadays, this process is done by pushing a few buttons. This research sheds light on these main foundations for the stages of digital development according to their chronological order, along with presenting scientists or production companies that have their own research laboratories which develop and enhance their products. -

Making the Transition from Film to Digital

TECHNICAL PAPER Making the Transition from Film to Digital TABLE OF CONTENTS Photography became a reality in the 1840s. During this time, images were recorded on 2 Making the transition film that used particles of silver salts embedded in a physical substrate, such as acetate 2 The difference between grain or gelatin. The grains of silver turned dark when exposed to light, and then a chemical and pixels fixer made that change more or less permanent. Cameras remained pretty much the 3 Exposure considerations same over the years with features such as a lens, a light-tight chamber to hold the film, 3 This won’t hurt a bit and an aperture and shutter mechanism to control exposure. 3 High-bit images But the early 1990s brought a dramatic change with the advent of digital technology. 4 Why would you want to use a Instead of using grains of silver embedded in gelatin, digital photography uses silicon to high-bit image? record images as numbers. Computers process the images, rather than optical enlargers 5 About raw files and tanks of often toxic chemicals. Chemically-developed wet printing processes have 5 Saving a raw file given way to prints made with inkjet printers, which squirt microscopic droplets of ink onto paper to create photographs. 5 Saving a JPEG file 6 Pros and cons 6 Reasons to shoot JPEG 6 Reasons to shoot raw 8 Raw converters 9 Reading histograms 10 About color balance 11 Noise reduction 11 Sharpening 11 It’s in the cards 12 A matter of black and white 12 Conclusion Snafellnesjokull Glacier Remnant. -

Evolution of Photography: Film to Digital

University of North Georgia Nighthawks Open Institutional Repository Honors Theses Honors Program Fall 10-2-2018 Evolution of Photography: Film to Digital Charlotte McDonnold University of North Georgia, [email protected] Follow this and additional works at: https://digitalcommons.northgeorgia.edu/honors_theses Part of the Art and Design Commons, and the Fine Arts Commons Recommended Citation McDonnold, Charlotte, "Evolution of Photography: Film to Digital" (2018). Honors Theses. 63. https://digitalcommons.northgeorgia.edu/honors_theses/63 This Thesis is brought to you for free and open access by the Honors Program at Nighthawks Open Institutional Repository. It has been accepted for inclusion in Honors Theses by an authorized administrator of Nighthawks Open Institutional Repository. Evolution of Photography: Film to Digital A Thesis Submitted to the Faculty of the University of North Georgia In Partial Fulfillment Of the Requirements for the Degree Bachelor of Art in Studio Art, Photography and Graphic Design With Honors Charlotte McDonnold Fall 2018 EVOLUTION OF PHOTOGRAPHY 2 Acknowledgements I would like thank my thesis panel, Dr. Stephen Smith, Paul Dunlap, Christopher Dant, and Dr. Nancy Dalman. Without their support and guidance, this project would not have been possible. I would also like to thank my Honors Research Class from spring 2017. They provided great advice and were willing to listen to me talk about photography for an entire semester. A special thanks to my family and friends for reading over drafts, offering support, and advice throughout this project. EVOLUTION OF PHOTOGRAPHY 3 Abstract Due to the ever changing advancements in technology, photography is a constantly growing field. What was once an art form solely used by professionals is now accessible to every consumer in the world. -

Intro to Digital Photography.Pdf

ABSTRACT Learn and master the basic features of your camera to gain better control of your photos. Individualized chapters on each of the cameras basic functions as well as cheat sheets you can download and print for use while shooting. Neuberger, Lawrence INTRO TO DGMA 3303 Digital Photography DIGITAL PHOTOGRAPHY Mastering the Basics Table of Contents Camera Controls ............................................................................................................................. 7 Camera Controls ......................................................................................................................... 7 Image Sensor .............................................................................................................................. 8 Camera Lens .............................................................................................................................. 8 Camera Modes ............................................................................................................................ 9 Built-in Flash ............................................................................................................................. 11 Viewing System ........................................................................................................................ 11 Image File Formats ....................................................................................................................... 13 File Compression ...................................................................................................................... -

Art and Art History 1

Art and Art History 1 ART AND ART HISTORY art.as.miami.edu Dept. Codes: ART, ARH Educational Objectives The Department of Art and Art History provides facilities and instruction to serve the needs of the general student. The program fosters participation and appreciation in the visual arts for students with specialized interests and abilities preparing for careers in the production and interpretation of art and art history. Degree Programs The Department of Art and Art History offers two undergraduate degrees: • The Bachelor of Arts, with tracks in: • Art (General Study) • Art History • Studio Art • The Bachelor of Fine Arts in Studio Art, which allows for primary and secondary concentrations in: • Painting • Sculpture • Printmaking • Photography/Digital Imaging • Graphic Design/Multimedia • Ceramics The B.A. requires a minimum of 36 credit hours in the department with a grade of C or higher. The B. A. major is also required to have a minor outside the department. Minor requirements are specified by each department and are listed in the Bulletin. The B.F.A. requires a minimum of 72 credit hours in the department, a grade of C or higher in each course, a group exhibition and at least a 3.0 average in departmental courses. The B.F.A. major is not required to have a minor outside the department. Writing within the Discipline To satisfy the College of Arts and Sciences writing requirement in the discipline, students whose first major is art or art history must take at least one of the following courses for a writing credit: ARH 343: Modern Art, and/or ARH 344: Contemporary Art. -

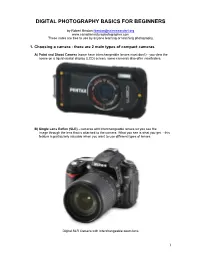

Digital Photography Basics for Beginners

DIGITAL PHOTOGRAPHY BASICS FOR BEGINNERS by Robert Berdan [email protected] www.canadiannaturephotographer.com These notes are free to use by anyone learning or teaching photography. 1. Choosing a camera - there are 2 main types of compact cameras A) Point and Shoot Camera (some have interchangeable lenses most don't) - you view the scene on a liquid crystal display (LCD) screen, some cameras also offer viewfinders. B) Single Lens Reflex (SLR) - cameras with interchangeable lenses let you see the image through the lens that is attached to the camera. What you see is what you get - this feature is particularly valuable when you want to use different types of lenses. Digital SLR Camera with Interchangeable zoom lens 1 Point and shoot cameras are small, light weight and can be carried in a pocket. These cameras tend to be cheaper then SLR cameras. Many of these cameras offer a built in macro mode allowing extreme close-up pictures. Generally the quality of the images on compact cameras is not as good as that from SLR cameras, but they are capable of taking professional quality images. SLR cameras are bigger and usually more expensive. SLRs can be used with a wide variety of interchangeable lenses such as telephoto lenses and macro lenses. SLR cameras offer excellent image quality, lots of features and accessories (some might argue too many features). SLR cameras also shoot a higher frame rates then compact cameras making them better for action photography. Their disadvantages include: higher cost, larger size and weight. They are called Single Lens Reflex, because you see through the lens attached to the camera, the light is reflected by a mirror through a prism and then the viewfinder. -

Sunburns and Shotguns

Southeastern College Art Conference October 20-23, 2010 (Richmond, Virginia) Studio Sessions Panel All That Was Old Is New Again: The Revival of Alternative Photographic Processes Digital photographic processes have all but eliminated the necessity of darkroom developing and printing. But in increasing numbers, artists including Sally Mann, Chuck Close, Lois Connor and Jerry Spagnoli, have turned to the techniques from the medium’s origin, including the daguerreotype, calotype, tintype, cyanotype, ambrotype, platinum prints and collodion glass-plate exposures. This trend of shunning the control offered by digitalization in favor of reclaiming the handcrafted, explorative nature of photography is both an aesthetic choice and a practical one. Many suppliers have stopped making various papers and film, prompting photographers to prepare their own surfaces by hand. Today, these various approaches – as well as a blending of digital and alternative processes – are being taught in our studio classrooms. As such, they assuredly will be a part of studio practice for a new generation of artists. However, they are largely absent from our contemporary art histories. This panel invited papers from a range of scholars and art practitioners who wish to address the revival of alternative and digital-hybrid photographic practices, and the meaning of such a thing for our histories. Chairs: Kris Belden-Adams, Kansas City Art Institute and Amelia Ishmael, School of the Art Institute of Chicago Panelists: Rich Gere, Savannah College of Art and Design Photographic -

2006 SPIE Invited Paper: a Brief History of 'Pixel'

REPRINT — author contact: <[email protected]> A Brief History of ‘Pixel’ Richard F. Lyon Foveon Inc., 2820 San Tomas Expressway, Santa Clara CA 95051 ABSTRACT The term pixel, for picture element, was first published in two different SPIE Proceedings in 1965, in articles by Fred C. Billingsley of Caltech’s Jet Propulsion Laboratory. The alternative pel was published by William F. Schreiber of MIT in the Proceedings of the IEEE in 1967. Both pixel and pel were propagated within the image processing and video coding field for more than a decade before they appeared in textbooks in the late 1970s. Subsequently, pixel has become ubiquitous in the fields of computer graphics, displays, printers, scanners, cameras, and related technologies, with a variety of sometimes conflicting meanings. Reprint — Paper EI 6069-1 Digital Photography II — Invited Paper IS&T/SPIE Symposium on Electronic Imaging 15–19 January 2006, San Jose, California, USA Copyright 2006 SPIE and IS&T. This paper has been published in Digital Photography II, IS&T/SPIE Symposium on Electronic Imaging, 15–19 January 2006, San Jose, California, USA, and is made available as an electronic reprint with permission of SPIE and IS&T. One print or electronic copy may be made for personal use only. Systematic or multiple reproduction, distribution to multiple locations via electronic or other means, duplication of any material in this paper for a fee or for commercial purposes, or modification of the content of the paper are prohibited. A Brief History of ‘Pixel’ Richard F. Lyon Foveon Inc., 2820 San Tomas Expressway, Santa Clara CA 95051 ABSTRACT The term pixel, for picture element, was first published in two different SPIE Proceedings in 1965, in articles by Fred C.