Statistics for Calculus Students


Recommended publications

A Computer Algebra System for R: Macaulay2 and the M2r Package

A computer algebra system for R: Macaulay2 and the m2r package. David Kahle (Department of Statistical Science, Baylor University), Christopher O'Neill (Department of Mathematics, University of California, Davis), and Jeff Sommars (Department of Mathematics, Statistics, and Computer Science, University of Illinois at Chicago).

Abstract: Algebraic methods have a long history in statistics. The most prominent manifestation of modern algebra in statistics can be seen in the field of algebraic statistics, which brings tools from commutative algebra and algebraic geometry to bear on statistical problems. Now over two decades old, algebraic statistics has applications in a wide range of theoretical and applied statistical domains. Nevertheless, algebraic statistical methods are still not mainstream, mostly due to a lack of easy off-the-shelf implementations. In this article we debut m2r, an R package that connects R to Macaulay2 through a persistent back-end socket connection running locally or on a cloud server. Topics range from basic use of m2r to applications and design philosophy.

1 Introduction: Algebra, a branch of mathematics concerned with abstraction, structure, and symmetry, has a long history of applications in statistics. For example, Pearson's early work on method of moments estimation in mixture models ultimately involved systems of polynomial equations that he painstakingly and remarkably solved by hand (Pearson, 1894; Améndola et al., 2016). Fisher's work in design was strongly algebraic and combinatorial, focusing on topics such as Latin squares (Fisher, 1934). Invariance and equivariance continue to form a major pillar of mathematical statistics through the lens of location-scale families (Pitman, 1939; Bondesson, 1983; Lehmann and Romano, 2005).

Algebraic Statistics Tutorial I

Algebraic Statistics Tutorial I. Seth Sullivant, North Carolina State University (NCSU), July 22, 2012.

Example: Hardy-Weinberg Equilibrium. Suppose a gene has two alleles, a and A. If allele a occurs in the population with frequency θ (and A with frequency 1 − θ) and these alleles are in Hardy-Weinberg equilibrium, the genotype frequencies are $P(X = aa) = \theta^2$, $P(X = aA) = 2\theta(1 - \theta)$, $P(X = AA) = (1 - \theta)^2$. The model of Hardy-Weinberg equilibrium is the set $M = \{(\theta^2,\ 2\theta(1 - \theta),\ (1 - \theta)^2) \mid \theta \in [0, 1]\} \subset \Delta_3$, with vanishing ideal $I(M) = \langle p_{aa} + p_{aA} + p_{AA} - 1,\ p_{aA}^2 - 4 p_{aa} p_{AA} \rangle$.

Main Point of This Tutorial. Many statistical models are described by (semi)-algebraic constraints on a natural parameter space. Generators of the vanishing ideal can be useful for constructing algorithms or analyzing properties of statistical models. Two Examples: Phylogenetic Algebraic Geometry; Sampling Contingency Tables.

Phylogenetics Problem. Given a collection of species, find the tree that explains their history. Data consists of aligned DNA sequences from homologous genes:
Human: ...ACCGTGCAACGTGAACGA...
Chimp: ...ACCTTGGAAGGTAAACGA...
Gorilla: ...ACCGTGCAACGTAAACTA...

Model-Based Phylogenetics. Use a probabilistic model of mutations. Parameters for the model are the combinatorial tree T, and rate parameters for mutations on each edge. Models give a probability for observing a particular aligned collection of DNA sequences:
Human: ACCGTGCAACGTGAACGA
Chimp: ACGTTGCAAGGTAAACGA
Gorilla: ACCGTGCAACGTAAACTA
Assuming site independence, data is summarized by empirical distribution of columns in the alignment.

Phylogenetic Models. Assuming site independence: Phylogenetic Model is a latent class graphical model. Vertex $v \in T$ gives a random variable $X_v \in \{A, C, G, T\}$. All random variables corresponding to internal nodes are latent. [Figure: tree with nodes labeled X1, X2, X3, Y1, Y2]
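The Hardy-Weinberg example in the tutorial excerpt above can be checked numerically. The following is a minimal sketch, not taken from the tutorial: the function names `hardy_weinberg` and `ideal_generators` are hypothetical, and the code only evaluates the two polynomials that generate the ideal of the model at points on and off the Hardy-Weinberg curve.

```python
def hardy_weinberg(theta):
    """Genotype frequencies (p_aa, p_aA, p_AA) when allele a has frequency theta."""
    return theta ** 2, 2 * theta * (1 - theta), (1 - theta) ** 2


def ideal_generators(p_aa, p_aA, p_AA):
    """Evaluate the two generators of I(M) at a point of the probability simplex.

    Both values are zero (up to rounding) exactly on the Hardy-Weinberg model.
    """
    sum_to_one = p_aa + p_aA + p_AA - 1
    hw_relation = p_aA ** 2 - 4 * p_aa * p_AA
    return sum_to_one, hw_relation


# Points on the model: both generators vanish up to floating-point error.
for theta in (0.0, 0.3, 0.5, 1.0):
    print(theta, ideal_generators(*hardy_weinberg(theta)))

# A point of the simplex that is NOT on the model: the second generator is nonzero.
print(ideal_generators(1 / 3, 1 / 3, 1 / 3))
```

The second generator is what separates the one-dimensional model from the rest of the simplex: every probability vector satisfies the first relation, but only Hardy-Weinberg frequencies satisfy both.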

Elect Your Council

IMS Bulletin, Volume 41, Issue 3, April/May 2012

Elect your Council

Each year IMS holds elections so that its members can choose the next President-Elect of the Institute and people to represent them on IMS Council. The candidate for President-Elect is Bin Yu, who is Chancellor’s Professor in the Department of Statistics and the Department of Electrical Engineering and Computer Science, at the University of California at Berkeley.

The 12 candidates standing for election to the IMS Council are (in alphabetical order) Rosemary Bailey, Erwin Bolthausen, Alison Etheridge, Pablo Ferrari, Nancy L. Garcia, Ed George, Haya Kaspi, Yves Le Jan, Xiao-Li Meng, Nancy Reid, Laurent Saloff-Coste, and Richard Samworth.

The elected Council members will join Arnoldo Frigessi, Steve Lalley, Ingrid Van Keilegom and Wing Wong, whose terms end in 2013; and Sandrine Dudoit, Steve Evans, Sonia Petrone, Christian Robert and Qiwei Yao, whose terms end in 2014.

Read all about the candidates on pages 12–17, and cast your vote at http://imstat.org/elections/. Voting is open until May 29.

Left: Bin Yu, candidate for IMS President-Elect. Below are the 12 Council candidates. Top row, l–r: R.A. Bailey, Erwin Bolthausen, Alison Etheridge, Pablo Ferrari. Middle, l–r: Nancy L. Garcia, Ed George, Haya Kaspi, Yves Le Jan. Bottom, l–r: Xiao-Li Meng, Nancy Reid, Laurent Saloff-Coste, Richard Samworth.

CONTENTS
1 IMS Elections
2 Members’ News: Huixia Wang, Ming Yuan, Allan Sly, Sebastien Roch, CR Rao
4 Author Identity and Open Bibliography
6 Obituary: Franklin Graybill
7 Statistical Issues in Assessing Hospital Performance
8 Anirban’s Angle: Learning from a Student
9 Parzen Prize; Recent papers: Probability Surveys
11 COPSS Fisher Lecturer
12 Council Candidates
18 Awards nominations
19 Terence’s Stuff: Oscars for Statistics?
20 IMS meetings
24 Other meetings
27 Employment Opportunities
28 International Calendar of Statistical Events
31 Information for Advertisers

Algebraic Statistics of Design of Experiments

Algebraic Statistics of Design of Experiments. Giovanni Pistone, Thammasat University, Bangkok, March 14 2012

The talk
1. A short history of AS from a personal perspective. See a longer account by E. Riccomagno in
• E. Riccomagno, Metrika 69(2-3), 397 (2009), ISSN 0026-1335, http://dx.doi.org/10.1007/s00184-008-0222-3.
2. The algebraic description of a designed experiment. I use the tutorial by M.P. Rogantin:
• http://www.dima.unige.it/~rogantin/AS-DOE.pdf
3. Topics from the theory, cf. the course taught by E. Riccomagno, H. Wynn and Hugo Maruri-Aguilar in 2009 at the Second de Brun Workshop in Galway:
• http://hamilton.nuigalway.ie/DeBrunCentre/SecondWorkshop/online.html.

CIMPA Ecole “Statistique”, 1980 à Nice (France): Sumate Sompakdee, GP, and Chantaluck Na Pombejra

First paper

People
• Roberto Fontana, DISMA Politecnico di Torino.
• Fabio Rapallo, Università del Piemonte Orientale.
• Eva Riccomagno, DIMA, Università di Genova.
• Maria Piera Rogantin, DIMA, Università di Genova.
• Henry P. Wynn, LSE London.

AS and DoE: old biblio and state of the art

Beginning of AS in DoE
• G. Pistone, H.P. Wynn, Biometrika 83(3), 653 (1996), ISSN 0006-3444
• L. Robbiano, Gröbner Bases and Statistics, in Gröbner Bases and Applications (Proc. of the Conf. 33 Years of Gröbner Bases), edited by B. Buchberger, F. Winkler (Cambridge University Press, 1998), Vol. 251 of London Mathematical Society Lecture Notes, pp. 179–204
• R. Fontana, G. Pistone, M. Rogantin, Journal of Statistical Planning and Inference 87(1), 149 (2000), ISSN 0378-3758
• G. Pistone, E. Riccomagno, H.P. Wynn, Algebraic statistics: Computational commutative algebra in statistics, Vol.

A Matrix-Valued Bernoulli Distribution

Journal of Multivariate Analysis 97 (2006) 1573–1585, www.elsevier.com/locate/jmva, doi:10.1016/j.jmva.2005.06.008

A matrix-valued Bernoulli distribution. Gianfranco Lovison, Dipartimento di Scienze Statistiche e Matematiche “S. Vianelli”, Università di Palermo, Viale delle Scienze, 90128 Palermo, Italy (URL: http://dssm.unipa.it/lovison). Work supported by MIUR 60% 2000, 2001 and MIUR P.R.I.N. 2002 grants. Received 17 September 2004. Available online 10 August 2005. © 2005 Elsevier Inc. All rights reserved.

Abstract: Matrix-valued distributions are used in continuous multivariate analysis to model sample data matrices of continuous measurements; their use seems to be neglected for binary, or more generally categorical, data. In this paper we propose a matrix-valued Bernoulli distribution, based on the log-linear representation introduced by Cox [The analysis of multivariate binary data, Appl. Statist. 21 (1972) 113–120] for the Multivariate Bernoulli distribution with correlated components.

AMS 1991 subject classification: 62E05; 62Hxx

Keywords: Correlated multivariate binary responses; Multivariate Bernoulli distribution; Matrix-valued distributions

1. Introduction: Matrix-valued distributions are used in continuous multivariate analysis (see, for example, [10]) to model sample data matrices of continuous measurements, allowing for both variable-dependence and unit-dependence. Their potentials seem to have been neglected for binary, and more generally categorical, data. This is somewhat surprising, since the natural, elementary representation of datasets with categorical variables is precisely in the form of sample binary data matrices, through the 0-1 coding of categories.

Discrete Probability Distributions

Discrete Probability Distributions

Uniform Distribution
Experiment obeys: all outcomes equally probable
Random variable: outcome
Probability distribution: if k is the number of possible outcomes, $p(x) = \begin{cases} \frac{1}{k} & \text{if } x \text{ is a possible outcome} \\ 0 & \text{otherwise} \end{cases}$
Example: tossing a fair die (k = 6)

Bernoulli Distribution
Experiment obeys: (1) a single trial with two possible outcomes (success and failure) (2) $P(\text{trial is successful}) = p$
Random variable: number of successful trials (zero or one)
Probability distribution: $p(x) = p^x (1 - p)^{1 - x}$ for $x \in \{0, 1\}$ (the binomial formula with n = 1)
Mean and variance: $\mu = p$, $\sigma^2 = p(1 - p)$
Example: tossing a fair coin once

Binomial Distribution
Experiment obeys: (1) n repeated trials (2) each trial has two possible outcomes (success and failure) (3) $P(i\text{th trial is successful}) = p$ for all i (4) the trials are independent
Random variable: number of successful trials
Probability distribution: $b(x; n, p) = \binom{n}{x} p^x (1 - p)^{n - x}$
Mean and variance: $\mu = np$, $\sigma^2 = np(1 - p)$
Example: tossing a fair coin n times
Approximations: (1) $b(x; n, p) \approx p(x; \lambda = pn)$ if $p \ll 1$, $x \ll n$ (Poisson approximation) (2) $b(x; n, p) \approx n(x; \mu = pn, \sigma = \sqrt{np(1 - p)})$ if $np \gg 1$, $n(1 - p) \gg 1$ (Normal approximation)

Geometric Distribution
Experiment obeys: (1) indeterminate number of repeated trials (2) each trial has two possible outcomes (success and failure) (3) $P(i\text{th trial is successful}) = p$ for all i (4) the trials are independent
Random variable: trial number of first successful trial
Probability distribution: $p(x) = p(1 - p)^{x - 1}$
Mean and variance: $\mu = \frac{1}{p}$, $\sigma^2 = \frac{1 - p}{p^2}$
Example: repeated attempts to start
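The two binomial approximations listed in this excerpt can be compared against the exact probabilities. The sketch below is illustrative only: the helper names are hypothetical, the parameter values are arbitrary choices, and only the Python standard library is used (`math.comb` needs Python 3.8 or later).

```python
import math


def binomial_pmf(x, n, p):
    """Exact binomial probability b(x; n, p)."""
    return math.comb(n, x) * p ** x * (1 - p) ** (n - x)


def poisson_pmf(x, lam):
    """Poisson probability p(x; lambda)."""
    return math.exp(-lam) * lam ** x / math.factorial(x)


def normal_pdf(x, mu, sigma):
    """Normal density n(x; mu, sigma)."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2 * math.pi))


# Poisson regime: p << 1 and x << n.
n, p, x = 1000, 0.002, 2
print(binomial_pmf(x, n, p), poisson_pmf(x, lam=n * p))

# Normal regime: np >> 1 and n(1 - p) >> 1.
n, p, x = 1000, 0.5, 500
sigma = math.sqrt(n * p * (1 - p))
print(binomial_pmf(x, n, p), normal_pdf(x, mu=n * p, sigma=sigma))
```

In each printed pair the first number is exact and the second is the approximation; moving the parameters away from the stated regimes (for example, p = 0.3 in the Poisson case) makes the gap visibly larger.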

STATS8: Introduction to Biostatistics: Random Variables and Probability Distributions

STATS8: Introduction to Biostatistics. Random Variables and Probability Distributions. Babak Shahbaba, Department of Statistics, UCI

Random variables
• In this lecture, we will discuss random variables and their probability distributions.
• Formally, a random variable X assigns a numerical value to each possible outcome (and event) of a random phenomenon.
• For instance, we can define X based on possible genotypes of a bi-allelic gene A as follows: $X = \begin{cases} 0 & \text{for genotype AA,} \\ 1 & \text{for genotype Aa,} \\ 2 & \text{for genotype aa.} \end{cases}$
• Alternatively, we can define a random variable, Y, this way: $Y = \begin{cases} 0 & \text{for genotypes AA and aa,} \\ 1 & \text{for genotype Aa.} \end{cases}$
• After we define a random variable, we can find the probabilities for its possible values based on the probabilities for its underlying random phenomenon.
• This way, instead of talking about the probabilities for different outcomes and events, we can talk about the probability of different values for a random variable.
• For example, suppose P(AA) = 0.49, P(Aa) = 0.42, and P(aa) = 0.09.
• Then, we can say that P(X = 0) = 0.49, i.e., X is equal to 0 with probability of 0.49.
• Note that the total probability for the random variable is still 1.
• The probability distribution of a random variable specifies its possible values (i.e., its range) and their corresponding probabilities.
• For the random variable X defined based on genotypes, the probability distribution can be simply specified as follows: $P(X = x) = \begin{cases} 0.49 & \text{for } x = 0, \\ 0.42 & \text{for } x = 1, \\ 0.09 & \text{for } x = 2. \end{cases}$ Here, x denotes a specific value (i.e., 0, 1, or 2) of the random variable.
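The step from outcome probabilities to the distribution of a random variable can be sketched in a few lines. This is an illustrative example, not part of the lecture: the dictionary and function names are hypothetical, and the genotype probabilities are the ones quoted in the excerpt.

```python
# Probabilities of the underlying outcomes (genotypes), as given in the excerpt.
genotype_prob = {"AA": 0.49, "Aa": 0.42, "aa": 0.09}

# Two random variables: each maps every outcome to a number.
X = {"AA": 0, "Aa": 1, "aa": 2}
Y = {"AA": 0, "Aa": 1, "aa": 0}


def distribution(random_variable, outcome_prob):
    """Add up the outcome probabilities that map to each value of the variable."""
    dist = {}
    for outcome, prob in outcome_prob.items():
        value = random_variable[outcome]
        dist[value] = dist.get(value, 0.0) + prob
    return dist


dist_X = distribution(X, genotype_prob)
dist_Y = distribution(Y, genotype_prob)
print(dist_X)
print(dist_Y)
```

Because Y sends both AA and aa to 0, their probabilities are pooled, while the total probability of each distribution stays at 1.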

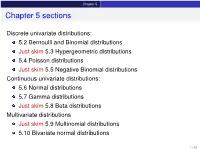

Chapter 5 Sections

Chapter 5 sections

Discrete univariate distributions:
5.2 Bernoulli and Binomial distributions
Just skim 5.3 Hypergeometric distributions
5.4 Poisson distributions
Just skim 5.5 Negative Binomial distributions

Continuous univariate distributions:
5.6 Normal distributions
5.7 Gamma distributions
Just skim 5.8 Beta distributions

Multivariate distributions:
Just skim 5.9 Multinomial distributions
5.10 Bivariate normal distributions

5.1 Introduction

Families of distributions
How:
Parameter and Parameter space
pf/pdf and cdf - new notation: $f(x \mid \text{parameters})$
Mean, variance and the m.g.f.
Features, connections to other distributions, approximation
Reasoning behind a distribution
Why:
Natural justification for certain experiments
A model for the uncertainty in an experiment
All models are wrong, but some are useful – George Box

5.2 Bernoulli and Binomial distributions

Bernoulli distributions
Def: Bernoulli distributions – Bernoulli(p)
A r.v. X has the Bernoulli distribution with parameter p if P(X = 1) = p and P(X = 0) = 1 − p. The pf of X is $f(x \mid p) = \begin{cases} p^x (1 - p)^{1 - x} & \text{for } x = 0, 1 \\ 0 & \text{otherwise} \end{cases}$
Parameter space: $p \in [0, 1]$
In an experiment with only two possible outcomes, “success” and “failure”, let X = number successes. Then X ∼ Bernoulli(p) where p is the probability of success.
$E(X) = p$, $\mathrm{Var}(X) = p(1 - p)$, and the m.g.f. is $E(e^{tX}) = pe^t + (1 - p)$
The cdf is $F(x \mid p) = \begin{cases} 0 & \text{for } x < 0 \\ 1 - p & \text{for } 0 \le x < 1 \\ 1 & \text{for } x \ge 1 \end{cases}$

Binomial distributions
Def: Binomial distributions – Binomial(n, p)
A r.v.

Lecture 6: Special Probability Distributions

Lecture 6: Special Probability Distributions. Assist. Prof. Dr. Emel YAVUZ DUMAN, MCB1007 Introduction to Probability and Statistics, İstanbul Kültür University

Outline
1 The Discrete Uniform Distribution
2 The Bernoulli Distribution
3 The Binomial Distribution
4 The Negative Binomial and Geometric Distribution

The Discrete Uniform Distribution

If a random variable can take on k different values with equal probability, we say that it has a discrete uniform distribution; symbolically,

Definition 1. A random variable X has a discrete uniform distribution and it is referred to as a discrete uniform random variable if and only if its probability distribution is given by
$$f(x) = \frac{1}{k} \quad \text{for } x = x_1, x_2, \dots, x_k,$$
where $x_i \neq x_j$ when $i \neq j$.

The mean and the variance of this distribution are

Mean: $$\mu = E[X] = \sum_{i=1}^{k} x_i f(x_i) = \sum_{i=1}^{k} x_i \cdot \frac{1}{k} = \frac{x_1 + x_2 + \cdots + x_k}{k},$$

Variance: $$\sigma^2 = E[(X - \mu)^2] = \sum_{i=1}^{k} (x_i - \mu)^2 \cdot \frac{1}{k} = \frac{(x_1 - \mu)^2 + (x_2 - \mu)^2 + \cdots + (x_k - \mu)^2}{k}.$$

In the special case where $x_i = i$, the discrete uniform distribution becomes $f(x) = \frac{1}{k}$ for $x = 1, 2, \dots, k$, and in this form it applies, for example, to the number of points we roll with a balanced die.
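As a worked instance of the mean and variance formulas for the discrete uniform distribution, the sketch below evaluates them for a balanced die. The function name `discrete_uniform_moments` is hypothetical, and exact fractions are used so that no rounding is involved.

```python
from fractions import Fraction


def discrete_uniform_moments(values):
    """Mean and variance when each of the k distinct values has probability 1/k."""
    values = list(values)
    k = len(values)
    if len(set(values)) != k:
        raise ValueError("values must be distinct")
    mu = sum(Fraction(v) for v in values) / k
    variance = sum((Fraction(v) - mu) ** 2 for v in values) / k
    return mu, variance


# Balanced die: x_i = i for i = 1, ..., 6.
mu, variance = discrete_uniform_moments(range(1, 7))
print(mu, variance)  # 7/2 35/12
```

The same function works for any finite set of distinct values, not only consecutive integers, which matches the generality of the definition.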

11. Parameter Estimation

11. Parameter Estimation. Chris Piech and Mehran Sahami, May 2017

We have learned many different distributions for random variables and all of those distributions had parameters: the numbers that you provide as input when you define a random variable. So far when we were working with random variables, we either were explicitly told the values of the parameters, or, we could divine the values by understanding the process that was generating the random variables.

What if we don’t know the values of the parameters and we can’t estimate them from our own expert knowledge? What if instead of knowing the random variables, we have a lot of examples of data generated with the same underlying distribution? In this chapter we are going to learn formal ways of estimating parameters from data.

These ideas are critical for artificial intelligence. Almost all modern machine learning algorithms work like this: (1) specify a probabilistic model that has parameters. (2) Learn the value of those parameters from data.

Parameters

Before we dive into parameter estimation, first let’s revisit the concept of parameters. Given a model, the parameters are the numbers that yield the actual distribution. In the case of a Bernoulli random variable, the single parameter was the value p. In the case of a Uniform random variable, the parameters are the a and b values that define the min and max value. Here is a list of random variables and the corresponding parameters. From now on, we are going to use the notation θ to be a vector of all the parameters:

| Distribution | Parameters |
| --- | --- |
| Bernoulli(p) | θ = p |
| Poisson(λ) | θ = λ |
| Uniform(a, b) | θ = (a, b) |
| Normal(μ, σ²) | θ = (μ, σ²) |
| Y = mX + b | θ = (m, b) |

In the real world often you don’t know the “true” parameters, but you get to observe data.
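The idea of learning a parameter from observed data can be illustrated with the Bernoulli case. The excerpt stops before it names an estimation method, so the following is only a sketch under an assumption: it uses the sample proportion as the estimate of p, and the function names, the "true" value 0.3, and the seed are all hypothetical choices for illustration.

```python
import random


def simulate_bernoulli(p, n, rng):
    """Draw n independent Bernoulli(p) observations as 0/1 values."""
    return [1 if rng.random() < p else 0 for _ in range(n)]


def estimate_p(data):
    """Estimate p by the fraction of successes (an assumed, illustrative estimator)."""
    return sum(data) / len(data)


rng = random.Random(0)          # fixed seed so the run is repeatable
true_p = 0.3                    # pretend this value is unknown to the analyst
data = simulate_bernoulli(true_p, 10_000, rng)
p_hat = estimate_p(data)
print(p_hat)
```

With more observations the estimate tends to settle closer to the value that generated the data, which is the situation the chapter describes: the parameter is hidden, and only the data are observed.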

(Introduction to Probability at an Advanced Level) - All Lecture Notes

Fall 2018 Statistics 201A (Introduction to Probability at an advanced level) - All Lecture Notes. Aditya Guntuboyina, August 15, 2020

Contents
0.1 Sample spaces, Events, Probability
0.2 Conditional Probability and Independence
0.3 Random Variables
1 Random Variables, Expectation and Variance
1.1 Expectations of Random Variables
1.2 Variance
2 Independence of Random Variables
3 Common Distributions
3.1 Ber(p) Distribution
3.2 Bin(n, p) Distribution
3.3 Poisson Distribution
4 Covariance, Correlation and Regression
5 Correlation and Regression
6 Back to Common Distributions
6.1 Geometric Distribution
6.2 Negative Binomial Distribution
7 Continuous Distributions
7.1 Normal or Gaussian Distribution
7.2 Uniform Distribution
7.3 The Exponential Density
7.4 The Gamma Density
8 Variable Transformations
9 Distribution Functions and the Quantile Transform
10 Joint Densities
11 Joint Densities under Transformations
11.1 Detour to Convolutions

Lecture Notes #17: Some Important Distributions and Coupon Collecting

EECS 70 Discrete Mathematics and Probability Theory, Spring 2014, Anant Sahai. Note 17

Some Important Distributions

In this note we will introduce three important probability distributions that are widely used to model real-world phenomena. The first of these which we already learned about in the last Lecture Note is the binomial distribution Bin(n, p). This is the distribution of the number of Heads, $S_n$, in n tosses of a biased coin with probability p to be Heads. As we saw, $P[S_n = k] = \binom{n}{k} p^k (1 - p)^{n - k}$, $E[S_n] = np$, $\mathrm{Var}[S_n] = np(1 - p)$ and $\sigma(S_n) = \sqrt{np(1 - p)}$.

Geometric Distribution

Question: A biased coin with Heads probability p is tossed repeatedly until the first Head appears. What is the distribution and the expected number of tosses?

As always, our first step in answering the question must be to define the sample space Ω. A moment’s thought tells us that $\Omega = \{H, TH, TTH, TTTH, \dots\}$, i.e., Ω consists of all sequences over the alphabet {H, T} that end with H and contain no other H’s. This is our first example of an infinite sample space (though it is still discrete).

What is the probability of a sample point, say ω = TTH? Since successive coin tosses are independent (this is implicit in the statement of the problem), we have $\Pr[TTH] = (1 - p) \times (1 - p) \times p = (1 - p)^2 p$. And generally, for any sequence $\omega \in \Omega$ of length i, we have $\Pr[\omega] = (1 - p)^{i - 1} p$.
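The sample-point probabilities derived in this note can be checked with a short sketch. The function names below are hypothetical and the parameter values are arbitrary; the code evaluates the probability of the length-i sequence and simulates tossing a biased coin until the first Head, using only the standard library.

```python
import random


def geometric_pmf(i, p):
    """Probability that the first Head appears on toss i: (1 - p)^(i - 1) * p."""
    return (1 - p) ** (i - 1) * p


def tosses_until_first_head(p, rng):
    """Simulate tossing a coin with Heads probability p until a Head appears."""
    tosses = 1
    while rng.random() >= p:    # this toss came up Tails, so toss again
        tosses += 1
    return tosses


p = 0.25
# The probabilities of the sample points TH...H of lengths 1, 2, 3, ... add up to 1;
# a long truncated sum is already very close.
print(sum(geometric_pmf(i, p) for i in range(1, 201)))

rng = random.Random(1)
samples = [tosses_until_first_head(p, rng) for _ in range(20_000)]
print(sum(samples) / len(samples))  # average number of tosses in the simulation
```

The simulated average gives an empirical handle on the "expected number of tosses" part of the question, and it can be compared with whatever closed form the note goes on to derive.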