Automated Debugging: Are We Close?

Total Page:16

File Type:pdf, Size:1020Kb

Load more

Recommended publications

-

Testing E-Commerce Systems: a Practical Guide - Wing Lam

Testing E-Commerce Systems: A Practical Guide - Wing Lam As e-customers (whether business or consumer), we are unlikely to have confidence in a Web site that suffers frequent downtime, hangs in the middle of a transaction, or has a poor sense of usability. Testing, therefore, has a crucial role in the overall development process. Given unlimited time and resources, you could test a system to exhaustion. However, most projects operate within fixed budgets and time scales, so project managers need a systematic and cost- effective approach to testing that maximizes test confidence. This article provides a quick and practical introduction to testing medium- to large-scale transactional e-commerce systems based on project experiences developing tailored solutions for B2C Web retailing and B2B procurement. Typical of most e-commerce systems, the application architecture includes front-end content delivery and management systems, and back-end transaction processing and legacy integration. Aimed primarily at project and test managers, this article explains how to · establish a systematic test process, and · test e-commerce systems. The Test Process You would normally expect to spend between 25 to 40 percent of total project effort on testing and validation activities. Most seasoned project managers would agree that test planning needs to be carried out early in the project lifecycle. This will ensure the time needed for test preparation (establishing a test environment, finding test personnel, writing test scripts, and so on) before any testing can actually start. The different kinds of testing (unit, system, functional, black-box, and others) are well documented in the literature. -

IBM Developer for Z/OS Enterprise Edition

Solution Brief IBM Developer for z/OS Enterprise Edition A comprehensive, robust toolset for developing z/OS applications using DevOps software delivery practices Companies must be agile to respond to market demands. The digital transformation is a continuous process, embracing hybrid cloud and the Application Program Interface (API) economy. To capitalize on opportunities, businesses must modernize existing applications and build new cloud native applications without disrupting services. This transformation is led by software delivery teams employing DevOps practices that include continuous integration and continuous delivery to a shared pipeline. For z/OS Developers, this transformation starts with modern tools that empower them to deliver more, faster, with better quality and agility. IBM Developer for z/OS Enterprise Edition is a modern, robust solution that offers the program analysis, edit, user build, debug, and test capabilities z/OS developers need, plus easy integration with the shared pipeline. The challenge IBM z/OS application development and software delivery teams have unique challenges with applications, tools, and skills. Adoption of agile practices Application modernization “DevOps and agile • Mainframe applications • Applications require development on the platform require frequent updates access to digital services have jumped from the early adopter stage in 2016 to • Development teams must with controlled APIs becoming common among adopt DevOps practices to • The journey to cloud mainframe businesses”1 improve their -

Interpreting Reliability and Availability Requirements for Network-Centric Systems What MCOTEA Does

Marine Corps Operational Test and Evaluation Activity Interpreting Reliability and Availability Requirements for Network-Centric Systems What MCOTEA Does Planning Testing Reporting Expeditionary, C4ISR & Plan Naval, and IT/Business Amphibious Systems Systems Test Evaluation Plans Evaluation Reports Assessment Plans Assessment Reports Test Plans Test Data Reports Observation Plans Observation Reports Combat Ground Service Combat Support Initial Operational Test Systems Systems Follow-on Operational Test Multi-service Test Quick Reaction Test Test Observations 2 Purpose • To engage test community in a discussion about methods in testing and evaluating RAM for software-intensive systems 3 Software-intensive systems – U.S. military one of the largest users of information technology and software in the world [1] – Dependence on these types of systems is increasing – Software failures have had disastrous consequences Therefore, software must be highly reliable and available to support mission success 4 Interpreting Requirements Excerpts from capabilities documents for software intensive systems: Availability Reliability “The system is capable of achieving “Average duration of 716 hours a threshold operational without experiencing an availability of 95% with an operational mission fault” objective of 98%” “Mission duration of 24 hours” “Operationally Available in its intended operating environment “Completion of its mission in its with at least a 0.90 probability” intended operating environment with at least a 0.90 probability” 5 Defining Reliability & Availability What do we mean reliability and availability for software intensive systems? – One consideration: unlike traditional hardware systems, a highly reliable and maintainable system will not necessarily be highly available - Highly recoverable systems can be less available - A system that restarts quickly after failures can be highly available, but not necessarily reliable - Risk in inflating availability and underestimating reliability if traditional equations are used. -

E2 Studio Integrated Development Environment User's Manual: Getting

User’s Manual e2 studio Integrated Development Environment User’s Manual: Getting Started Guide Target Device RX, RL78, RH850 and RZ Family Rev.4.00 May 2016 www.renesas.com Notice 1. Descriptions of circuits, software and other related information in this document are provided only to illustrate the operation of semiconductor products and application examples. You are fully responsible for the incorporation of these circuits, software, and information in the design of your equipment. Renesas Electronics assumes no responsibility for any losses incurred by you or third parties arising from the use of these circuits, software, or information. 2. Renesas Electronics has used reasonable care in preparing the information included in this document, but Renesas Electronics does not warrant that such information is error free. Renesas Electronics assumes no liability whatsoever for any damages incurred by you resulting from errors in or omissions from the information included herein. 3. Renesas Electronics does not assume any liability for infringement of patents, copyrights, or other intellectual property rights of third parties by or arising from the use of Renesas Electronics products or technical information described in this document. No license, express, implied or otherwise, is granted hereby under any patents, copyrights or other intellectual property rights of Renesas Electronics or others. 4. You should not alter, modify, copy, or otherwise misappropriate any Renesas Electronics product, whether in whole or in part. Renesas Electronics assumes no responsibility for any losses incurred by you or third parties arising from such alteration, modification, copy or otherwise misappropriation of Renesas Electronics product. 5. Renesas Electronics products are classified according to the following two quality grades: “Standard” and “High Quality”. -

QA and Testing Have Become More of a Concurrent Process

Special Feature: SW Testing “QA and testing have become more of a concurrent process rather than an end of lifecycle process” Manish Tandon, VP & Head– Independent Validation and Testing Solutions, Infosys Interview by: Anil Chopra, PCQuest n an exclusive interview with the PCQuest Editor, Manish talks become a software tester? about the global trends in software testing, skillsets required Software testing has evolved into a very special function and Ito enter the field, how technology has evolved in this area to anybody wanting to enter the domain requires two special provide higher ROI, and much more. An IIT and IIM graduate, skillsets. One is deep functional or domain skills to understand Manish is responsible for formulating and executing the business the functionality. Plus, you need specialized technical skills strategy for Independent Validation and Testing Solutions practice as well because you want to do automation, middleware, and at Infosys. He mentors the unit specifically in meeting business performance testing. It’s a unique combination, and we focus goals and targets. In addition, he manages critical relationships very strongly on both these dimensions. A good software tester with client executives, industry analysts, deal consultants, and should always have an eye for detail. So people who have a slightly anchors the training and development of key personnel. Provided critical eye and are willing to dig deeper always make much better here are his expert comments on the subject. testers than people who want to fly at 30k feet. Q> What are some of the global trends in the software Q> You mentioned that software testing is now required testing business? How do you see the market moving? for front-end applications. -

SAS® Debugging 101 Kirk Paul Lafler, Software Intelligence Corporation, Spring Valley, California

PharmaSUG 2017 - Paper TT03 SAS® Debugging 101 Kirk Paul Lafler, Software Intelligence Corporation, Spring Valley, California Abstract SAS® users are almost always surprised to discover their programs contain bugs. In fact, when asked users will emphatically stand by their programs and logic by saying they are bug free. But, the vast number of experiences along with the realities of writing code says otherwise. Bugs in software can appear anywhere; whether accidentally built into the software by developers, or introduced by programmers when writing code. No matter where the origins of bugs occur, the one thing that all SAS users know is that debugging SAS program errors and warnings can be a daunting, and humbling, task. This presentation explores the world of SAS bugs, providing essential information about the types of bugs, how bugs are created, the symptoms of bugs, and how to locate bugs. Attendees learn how to apply effective techniques to better understand, identify, and repair bugs and enable program code to work as intended. Introduction From the very beginning of computing history, program code, or the instructions used to tell a computer how and when to do something, has been plagued with errors, or bugs. Even if your own program code is determined to be error-free, there is a high probability that the operating system and/or software being used, such as the SAS software, have embedded program errors in them. As a result, program code should be written to assume the unexpected and, as much as possible, be able to handle unforeseen events using acceptable strategies and programming methodologies. -

Agile Playbook V2.1—What’S New?

AGILE P L AY B O OK TABLE OF CONTENTS INTRODUCTION ..........................................................................................................4 Who should use this playbook? ................................................................................6 How should you use this playbook? .........................................................................6 Agile Playbook v2.1—What’s new? ...........................................................................6 How and where can you contribute to this playbook?.............................................7 MEET YOUR GUIDES ...................................................................................................8 AN AGILE DELIVERY MODEL ....................................................................................10 GETTING STARTED.....................................................................................................12 THE PLAYS ...................................................................................................................14 Delivery ......................................................................................................................15 Play: Start with Scrum ...........................................................................................15 Play: Seeing success but need more fexibility? Move on to Scrumban ............17 Play: If you are ready to kick of the training wheels, try Kanban .......................18 Value ......................................................................................................................19 -

Opportunities and Open Problems for Static and Dynamic Program Analysis Mark Harman∗, Peter O’Hearn∗ ∗Facebook London and University College London, UK

1 From Start-ups to Scale-ups: Opportunities and Open Problems for Static and Dynamic Program Analysis Mark Harman∗, Peter O’Hearn∗ ∗Facebook London and University College London, UK Abstract—This paper1 describes some of the challenges and research questions that target the most productive intersection opportunities when deploying static and dynamic analysis at we have yet witnessed: that between exciting, intellectually scale, drawing on the authors’ experience with the Infer and challenging science, and real-world deployment impact. Sapienz Technologies at Facebook, each of which started life as a research-led start-up that was subsequently deployed at scale, Many industrialists have perhaps tended to regard it unlikely impacting billions of people worldwide. that much academic work will prove relevant to their most The paper identifies open problems that have yet to receive pressing industrial concerns. On the other hand, it is not significant attention from the scientific community, yet which uncommon for academic and scientific researchers to believe have potential for profound real world impact, formulating these that most of the problems faced by industrialists are either as research questions that, we believe, are ripe for exploration and that would make excellent topics for research projects. boring, tedious or scientifically uninteresting. This sociological phenomenon has led to a great deal of miscommunication between the academic and industrial sectors. I. INTRODUCTION We hope that we can make a small contribution by focusing on the intersection of challenging and interesting scientific How do we transition research on static and dynamic problems with pressing industrial deployment needs. Our aim analysis techniques from the testing and verification research is to move the debate beyond relatively unhelpful observations communities to industrial practice? Many have asked this we have typically encountered in, for example, conference question, and others related to it. -

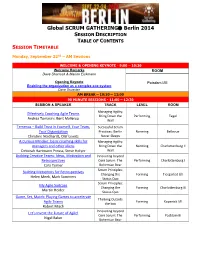

Global SCRUM GATHERING® Berlin 2014

Global SCRUM GATHERING Berlin 2014 SESSION DESCRIPTION TABLE OF CONTENTS SESSION TIMETABLE Monday, September 22nd – AM Sessions WELCOME & OPENING KEYNOTE - 9:00 – 10:30 Welcome Remarks ROOM Dave Sharrock & Marion Eickmann Opening Keynote Potsdam I/III Enabling the organization as a complex eco-system Dave Snowden AM BREAK – 10:30 – 11:00 90 MINUTE SESSIONS - 11:00 – 12:30 SESSION & SPEAKER TRACK LEVEL ROOM Managing Agility: Effectively Coaching Agile Teams Bring Down the Performing Tegel Andrea Tomasini, Bent Myllerup Wall Temenos – Build Trust in Yourself, Your Team, Successful Scrum Your Organization Practices: Berlin Norming Bellevue Christine Neidhardt, Olaf Lewitz Never Sleeps A Curious Mindset: basic coaching skills for Managing Agility: managers and other aliens Bring Down the Norming Charlottenburg II Deborah Hartmann Preuss, Steve Holyer Wall Building Creative Teams: Ideas, Motivation and Innovating beyond Retrospectives Core Scrum: The Performing Charlottenburg I Cara Turner Bohemian Bear Scrum Principles: Building Metaphors for Retrospectives Changing the Forming Tiergarted I/II Helen Meek, Mark Summers Status Quo Scrum Principles: My Agile Suitcase Changing the Forming Charlottenburg III Martin Heider Status Quo Game, Set, Match: Playing Games to accelerate Thinking Outside Agile Teams Forming Kopenick I/II the box Robert Misch Innovating beyond Let's Invent the Future of Agile! Core Scrum: The Performing Postdam III Nigel Baker Bohemian Bear Monday, September 22nd – PM Sessions LUNCH – 12:30 – 13:30 90 MINUTE SESSIONS - 13:30 -

Building Useful Program Analysis Tools Using an Extensible Java Compiler

Building Useful Program Analysis Tools Using an Extensible Java Compiler Edward Aftandilian, Raluca Sauciuc Siddharth Priya, Sundaresan Krishnan Google, Inc. Google, Inc. Mountain View, CA, USA Hyderabad, India feaftan, [email protected] fsiddharth, [email protected] Abstract—Large software companies need customized tools a specific task, but they fail for several reasons. First, ad- to manage their source code. These tools are often built in hoc program analysis tools are often brittle and break on an ad-hoc fashion, using brittle technologies such as regular uncommon-but-valid code patterns. Second, simple ad-hoc expressions and home-grown parsers. Changes in the language cause the tools to break. More importantly, these ad-hoc tools tools don’t provide sufficient information to perform many often do not support uncommon-but-valid code code patterns. non-trivial analyses, including refactorings. Type and symbol We report our experiences building source-code analysis information is especially useful, but amounts to writing a tools at Google on top of a third-party, open-source, extensible type-checker. Finally, more sophisticated program analysis compiler. We describe three tools in use on our Java codebase. tools are expensive to create and maintain, especially as the The first, Strict Java Dependencies, enforces our dependency target language evolves. policy in order to reduce JAR file sizes and testing load. The second, error-prone, adds new error checks to the compilation In this paper, we present our experience building special- process and automates repair of those errors at a whole- purpose tools on top of the the piece of software in our codebase scale. -

The Art, Science, and Engineering of Fuzzing: a Survey

1 The Art, Science, and Engineering of Fuzzing: A Survey Valentin J.M. Manes,` HyungSeok Han, Choongwoo Han, Sang Kil Cha, Manuel Egele, Edward J. Schwartz, and Maverick Woo Abstract—Among the many software vulnerability discovery techniques available today, fuzzing has remained highly popular due to its conceptual simplicity, its low barrier to deployment, and its vast amount of empirical evidence in discovering real-world software vulnerabilities. At a high level, fuzzing refers to a process of repeatedly running a program with generated inputs that may be syntactically or semantically malformed. While researchers and practitioners alike have invested a large and diverse effort towards improving fuzzing in recent years, this surge of work has also made it difficult to gain a comprehensive and coherent view of fuzzing. To help preserve and bring coherence to the vast literature of fuzzing, this paper presents a unified, general-purpose model of fuzzing together with a taxonomy of the current fuzzing literature. We methodically explore the design decisions at every stage of our model fuzzer by surveying the related literature and innovations in the art, science, and engineering that make modern-day fuzzers effective. Index Terms—software security, automated software testing, fuzzing. ✦ 1 INTRODUCTION Figure 1 on p. 5) and an increasing number of fuzzing Ever since its introduction in the early 1990s [152], fuzzing studies appear at major security conferences (e.g. [225], has remained one of the most widely-deployed techniques [52], [37], [176], [83], [239]). In addition, the blogosphere is to discover software security vulnerabilities. At a high level, filled with many success stories of fuzzing, some of which fuzzing refers to a process of repeatedly running a program also contain what we consider to be gems that warrant a with generated inputs that may be syntactically or seman- permanent place in the literature. -

Usage of Prototyping in Software Testing

Multi-Knowledge Electronic Comprehensive Journal For Education And Science Publications (MECSJ) ISSUE (14), Nov (2018) www.mescj.com USAGE OF PROTOTYPING IN SOFTWARE TESTING 1st Khansaa Azeez Obayes Al-Husseini Babylon Technical Insitute , Al-Furat Al-Awsat Technical University,51015 Babylon,Iraq. 1st [email protected] 2nd Ali Hamzah Obaid Babylon Technical Insitute , Al-Furat Al-Awsat Technical University,51015 Babylon,Iraq. 2nd [email protected] Abstract: Prototyping process is an important part of software development. This article describes usage of prototyping using Question – and – Answer memory and visual prototype diesign to realize Prototyping software development model. It also includes review of different models of software lifecycle with comparison them with Prototyping model. Key word: Question – and – Answer , Prototype, Software Development, RAD model. 1. Introduction One of the most important parts of software development is project design. Software project designing as a process of project creation can be divided in two large parts (very conditional): design of the functionality and design of user interface. To design the functionality, tools such as UML and IDEF0 are used, which have already become industry standards for software development. In the design of the graphical user interface there are no established standards, there are separate recommendations, techniques, design features, traditions, operating conditions for software, etc. At the same time, an important, but not always properly performed, part of this process is prototyping, i.e. the creation of a prototype or prototype of a future system. Prototypes can be different: paper, presentation, imitation, etc., up to exact correspondence to the future program. Most of the modern integrated development environments for software (IDE) allows to create something similar to prototypes, but it is connected with specific knowledge of IDE and programming language.