The Effect of Time of Shearing on Wool Production and Management of a Spring-Lambing Merino Flock

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

National Park Service Cultural Landscapes Inventory Cant Ranch Historic District John Day Fossil Beds National Monument 2009

National Park Service Cultural Landscapes Inventory 2009. Cant Ranch Historic District, John Day Fossil Beds National Monument. Table of Contents. Inventory Unit Summary and Site Plan: Inventory Unit Description; Site Plans; Park Information. Concurrence Status: Inventory Status. Geographic Information and Location Map: Inventory Unit Boundary Description; State and County; Size; Boundary UTMS; Location Map -

Worker Exposure to Dusts and Bioaerosols in the Sheep Shearing Industry in Eastern NSW

Worker Exposure to Dusts and Bioaerosols in the Sheep Shearing Industry in Eastern NSW. by Ryan Kift BAppSc (Hons) (Occupational Health and Environment) BAppSc (Environmental Health) A thesis presented in fulfillment of the requirement for the degree of Doctor of Philosophy March 2007 CERTIFICATE OF ORIGINALITY The text of this thesis contains no material which has been accepted as part of the requirements for another degree or diploma in any University, or material previously published or written by another author unless due reference to this material has been made. Ryan Kift 2 March 2007 ii ACKNOWLEDGEMENTS Thank you to my family, supervisors, friends and colleagues that helped and supported me throughout this study. Thank you to the University of Western Sydney for their financial, academic and resource support. Thank you to all of the people involved in the sheep shearing industry that participated in this study. Without the help and support of all of these people this study would not have been possible. iii ABSTRACT The air found in a sheep shearing environment is normally contaminated with many different airborne substances. These contaminants include dust (predominantly organic), bioaerosols (fungi and bacteria) and gases (ammonia and carbon monoxide). Respiratory disorders, such as Hypersensitivity Pneumonitis, chronic bronchitis and asthma, have been associated with exposure to the types of airborne contaminants found in a normal sheep shearing environment. The majority of Australian and international research in the livestock handling industries that has investigated dust exposure has focused on the poultry and pig industries. Some worldwide studies have been undertaken on feedlot cattle. Research in the sheep shearing industry in relation to worker exposure data for airborne contaminants has been identified as a major need as no documented studies have been undertaken anywhere in the world. -

2020 Supply Catalog

2020 Supply Catalog 1-800-841-9665 | (614) 834-2006 | www.midstateswoolgrowers.com The sheep industry has seen many changes in the past 100 years. Mid-States Wool Growers Cooperative has been here to witness all these changes and to continue to provide quality products at a fair price with excellent service. As we begin our next century, let’s look back at the events that have shaped Mid-States Wool Growers Cooperative into the company that it is today. 1918 Parent wool marketing organization, Tri-State Wool Growers was formed. 1921 First cooperative warehouse was purchased for $125,000 in Columbus, Ohio. 1931 Midwest Wool Marketing Cooperative—an organization that played a major role in the current cooperative—was organized in Kansas City, Missouri. 1945 Tri-State Wool Growers was reorganized and the name was changed to the Ohio Wool Growers Cooperative Association. 1957 Ohio Wool Growers added a livestock supply division, which provided the Midwestern sheep producer with supplies and equipment needed to make sheep operations more efficient and successful. 1958 A new 60,000 sq. ft. warehouse was built at Groves Road in Columbus. This building utilized some of the most efficient wool grading and marketing technology available at the time. 1969 Ohio Wool Growers added a new, industry related retail clothing store known as Woolen Square. 1974 Ohio Wool Growers Association and Midwest Wool Marketing Cooperative joined forces through a merger that resulted in the new and stronger organization being named Mid-States Wool Growers Cooperative. The merger added a warehouse in South Hutchinson, Kansas as well as a livestock supply division operated by Midwest. -

Research Theme: the Life of Shepherds and Sheep Farmers

CULTURE AND NATURE: THE EUROPEAN HERITAGE OF SHEEP FARMING AND PASTORAL LIFE RESEARCH THEME: THE LIFE OF SHEPHERDS AND SHEEP FARMERS RESEARCH REPORT FOR THE UK By Gemma Bell and Simon Bell Estonian University of Life Sciences November 2011 The CANEPAL project is co-funded by the European Commission, Directorate General Education and Culture, CULTURE 2007-2013. Project no: 508090-CU-1-2010-1-HU-CULTURE-VOL11. This report reflects the authors’ view and the Commission is not liable for any use that may be made of the information contained herein. 1. INTRODUCTION Since the agricultural revolution, the reorganisation of land use patterns and the Highland clearances (when most people in the Scottish Highlands were removed from the land and replaced by sheep flocks), sheep farming has become a branch of “normal” farming with resident farmers and employed shepherds. Since the land used for sheep farming is normally spatially connected to the farmstead, and since the sheep breeds are hardy and stay outside all year round, there is no need to move them long distances to summer pastures or for shepherds to tend them and stay away from home. Thus the kind of activities and the life of shepherds found in some European countries have no place in Britain. However, that is not to say that the life of a sheep farmer is easy or that it may not be a solitary and lonely occupation set in a remote hill region. Sheep farming in the UK can be divided generally between upland and lowland activities. Upland farmers normally breed sheep and sell the lambs to lowland farmers for “finishing”, that is, fattening up to the optimum weight for slaughter. -

Boolcoomatta Reserve CLICK WENT the SHEARS

Boolcoomatta Reserve CLICK WENT THE SHEARS A social history of Boolcoomatta Station, 1857 to 2020 Judy D. Johnson Editor: Eva Finzel Collated and written by Judy Johnson 2019 Edited version by Eva Finzel 2021 We acknowledge the Adnyamathanha People and Wilyakali People as the Traditional Owners of what we know as Boolcoomatta. We recognise and respect the enduring relationship they have with their lands and waters, and we pay our respects to Elders past, present and future. Front page map based on Pastoral Run Sheet 5, 1936-1964, (163-0031) Courtesy of the State Library of South Australia Bush Heritage Australia Level 1, 395 Collins Street | PO Box 329 Flinders Lane Melbourne, VIC 8009 T: (03) 8610 9100 T: 1300 628 873 (1300 NATURE) F: (03) 8610 9199 E: [email protected] W: www.bushheritage.org.au Content Author’s note and acknowledgements viii Editor’s note ix Timeline, 1830 to 2020 x Conversions xiv Abbreviations xiv An introduction to Boolcoomatta 1 The Adnyamathanha People and Wilyakali People 4 The European history of Boolcoomatta 6 European settlement 6 From sheep station to a place of conservation 8 Notes 9 Early explorers, surveyors and settlers, 1830 to 1859 10 Early European exploration and settlement 10 Goyder’s discoveries and Line 11 Settlement during the 1800s 13 The shepherd and the top hats The Tapley family, 1857 to 1858 14 The shepherds 15 The top hats 16 The Tapleys’ short lease of Boolcoomatta 16 Thomas and John E Tapley's life after the sale of Boolcoomatta 17 Boolcoomatta’s neighbours in 1857 18 The timber -

Sheep Shearing and Basic Care 101 Student Handbook

Sheep Shearing and Basic Care 101 Student Handbook Written by Alison Smith and Trevor Hollenback, Instructors Photo by Paige Green Fibershed University of California Dept. of Agriculture and Natural Resources Hopland Research and Extension Center Development of curriculum and production of this course handbook have been made possible by generous funding from Fibershed. A heartfelt ‘thank you’ goes out to them for this support and the work they do. Fibershed develops regional and regenerative fiber systems on behalf of independent working producers, by expanding opportunities to implement carbon farming, forming catalytic foundations to rebuild regional manufacturing, and through connecting end-users to farms and ranches through public education. For more information, visit fibershed.org Table of Contents Introduction – page 2 SECTION 1 – Sheep Care and Management, by Alison Smith – page 3 SECTION 2 – Shearing Sheep, by Trevor Hollenback – page 13 APPENDIX A – Glossary of Terms, by Trevor Hollenback – page 53 APPENDIX B – Sample Sheep Management Calendars, by Alison Smith – page 55 Introduction to this Handbook This handbook has been written as a supplemental document for students going through the Sheep Shearing and Basic Care 101 course at the UCANR Hopland Research and Extension Center. It is not a standalone how-to book, but rather a document intended to serve as your notes for this course. All of the primary principles covered in this course can be found in this handbook, saving you valuable time and energy that might have been spent diligently taking notes during class. -

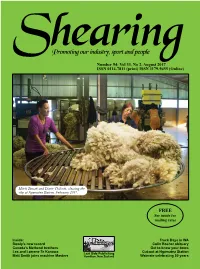

Shearing Magazine on Line At

Read Shearing magazine on line at www.lastsidepublishing.co.nz Shearing Promoting our industry, sport and people Number 94: Vol 33, No 2, August 2017 ISSN 0114-7811 (print) ISSN 1179-9455 (Online) Mitch Tamati and Diane Chilcott, classing the clip at Ngamatea Station, February 2017. FREE See inside for mailing rates Inside: Truck Days in WA; Rowly’s new record; Colin Bosher obituary; Canada’s Metheral brothers; Get to know your bows; Les and Lorrene Te Kanawa; Cut-out at Ngamatea Station; Matt Smith joins machine Masters; Waimate celebrating 50 years. Last Side Publishing, Hamilton, New Zealand. SAFETY. FIRST. Shearing shed safety starts with the No.1 & most trusted name in shearing Search Heiniger Shed Safety on YouTube EVO Shearing Plant - Winner of 2 Worksafe Industry Awards - Unique electronic safety switch - Designed to eliminate handpiece lockups - Proven choice for commercial shearing contractors in Australia and New Zealand TPW Xpress Woolpress - Safety screen guard with automatic return - Presses more weight into less packs - Fast pack locking system - Automatic bale pinning and bale ejection - Contamination-free short square bales Put Shed Safety First! Get in to your local stockists or call us today! Heiniger New Zealand | 1B Chinook Place, Hornby, Christchurch 8042 | +64 3 349 8282 | www.heiniger.com CONTENTS 4 NZ Wool Classers’ Association 6 Something to do with old combs UNDER COVER STORY Greetings readers and welcome to our August 2017 edition of the magazine. -

Sheep Farming and Shearing (1874) Sara K

Cedarville University DigitalCommons@Cedarville Research Papers Martha McMillan Journal Collection Spring 2015 Sheep Farming and Shearing (1874) Sara K. Bennett Cedarville University, [email protected] Follow this and additional works at: http://digitalcommons.cedarville.edu/ mcmillan_research_papers Part of the Agriculture Commons, Animal Sciences Commons, Christianity Commons, Civic and Community Engagement Commons, Education Commons, and the Family, Life Course, and Society Commons The McMillan Journal Collection is an archive of the journals of Martha McMillan of Cedarville, Ohio, who maintained a daily journal from 1867 until her death in 1913. Recommended Citation Bennett, Sara K., "Sheep Farming and Shearing (1874)" (2015). Research Papers. 4. http://digitalcommons.cedarville.edu/mcmillan_research_papers/4 This Research Paper is brought to you for free and open access by DigitalCommons@Cedarville, a service of the Centennial Library. It has been accepted for inclusion in Research Papers by an authorized administrator of DigitalCommons@Cedarville. For more information, please contact [email protected]. Sheep Farming and Shearing (1874 – May – August) Sara Bennett 2015 Sheep may seem insignificant and even silly to consider important but to Martha and James McMillan (also known as Mr. Mc.) sheep had great importance in their daily lives. Mr. Mc kept sheep as a large part of his farm. From 1867-1906 sheep lived on the McMillan farm. Martha writes on 16 April 1867 “Tuesday. Rain. Rain. James has been out all day making arrangements for his flock. There is no doubt of it but James is a shepherd in the highest sense of the term - a shepherd of sheep I mean (!) (!) (11)” (ed. Wood). -

Australian Merino Wool

Proceedings of the Symposium on Natural Fibres 83 Australian Merino wool Ben Lyons, Australian Wool Foundation A COMBINATION OF SCIENCE, ART AND EVOLUTION • Today • Tomorrow • Product developments and innovations • Priorities for the future In 2007 Australia’s wool industry celebrated 200 years of commercially exporting to England; it is the fibre of a nation. Overview Wool production continues to be one of Australia’s most important agricultural industries, representing 6.3% of the gross value of agricultural production and $2.3 billion in export income in 2003-2004. The current sheep flock of 107 million occupies some 25% of Australia’s land mass, with approximately 30% of the flock in the high rainfall zone, 55% in the sheep/cereal zone and 15% in the pastoral zone. The natural resource base of soil, water and vegetation provides the fundamentals on which the wool industry builds significant economic and social benefits for the nation. In addition, the effective control of pests and weeds increases productivity, reduces production costs and delivers benefits to the natural ecosystems. This all greatly helps wool growers demonstrate their sense of pride in their farms and their desire to see their assets passed on to future generations in better condition. Merino wool - the perfect choice for today’s fast paced environment Faced with an abundance of choice, consumers will always look for quality. Merino wool is the smart, timeless and versatile fibre choice, with the added advantage of being produced in a natural, sustainable manner. Time is the most precious commodity for today’s consumers, and thanks to evolution and innovation, Merino wool has easy care, quick dry qualities, making it practical and convenient for time-poor consumers. -

Old Deseret Live Stock Company

Utah State University DigitalCommons@USU All USU Press Publications USU Press 2008 Old Deseret Live Stock Company W. Dean Frischknecht Follow this and additional works at: https://digitalcommons.usu.edu/usupress_pubs Part of the United States History Commons Recommended Citation Frischknecht, W. D. (2008). Old Deseret Live Stock Company: A stockman's memoir. Logan, Utah: Utah State University Press. This Book is brought to you for free and open access by the USU Press at DigitalCommons@USU. It has been accepted for inclusion in All USU Press Publications by an authorized administrator of DigitalCommons@USU. For more information, please contact [email protected]. Old Deseret Live Stock Company Old Deseret Live Stock Company A Stockman’s Memoir W. Dean Frischknecht Utah State University Press Logan, Utah Copyright ©2008 Utah State University Press All rights reserved Utah State University Press Logan, Utah 84322-7200 www.usu.edu/usupress Manufactured in the United States of America Printed on recycled, acid-free paper ISBN: 978-0-87421-711-7 (cloth) ISBN: 978-0-87421-712-4 (e-book) Library of Congress Cataloging-in-Publication Data Frischknecht, W. Dean. Old Deseret Live Stock Company : a stockman’s memoir / W. Dean Frischknecht. p. cm. Includes index. ISBN 978-0-87421-711-7 (cloth : alk. paper) -- ISBN 978-0-87421-712-4 (e-book) 1. Frischknecht, W. Dean. 2. Sheep ranchers--Wasatch Range (Utah and Idaho)--Biography. 3. Ranch life--Wasatch Range (Utah and Idaho) 4. Deseret Live Stock Company--History. 5. Wasatch Range (Utah and Idaho)--Biography. -

On the Sheep's Back

On the Sheep’s back The rise and fall of English wool By Richard Martin FIVE HUNDRED YEARS AGO, ‘half the wealth of England rides on the back of the sheep’ was no idle boast. ‘Sheep turned grass into wool’1 to provide the raw material for the woollen cloth-weaving industry that was by far the most dominant component of the medieval economy. For this reason, the growing of sheep for wool was not just a matter for farmers: its management was an integral and important part of the governance of the realm. 1000 years earlier, the Roman Empire had brought sheep to many parts of Northern Europe, and English looms had clothed the Mediterranean legions fighting in these colder latitudes. Indeed there is evidence that there was an important and growing export trade in English woollen cloth. But with the break-up of the empire, the more disparate way of life of the successive waves of Scandinavian invaders reduced the sheep to a subservient part of subsistence agriculture. Even by the conquest, milk and manure were probably the most valuable products of the sheep2, with both having little importance beyond the boundaries of the village. However, during the following three centuries, the sheep population ballooned everywhere but in parts of Southwest England and Wales. The climate and geology, the system of manorial and monastic land tenure, and the voracious market for good wool, particularly in Flanders and Northern Italy3, all played a part. A spectacular part in turning England into a ‘wool factory’ was played by the large monastic estates. -

Download the Brochure

WEST AUSTRALIAN STATION STAYS WESTERN AUSTRALIA Forget the hustle and bustle of city life and stay on an Outback Station Stay www.wastationstays.com.au SHEEP AND CATTLE STATIONS HAVE BEEN THE BACKBONE OF WEST AUSTRALIA’S OUTBACK FOR OVER 130 YEARS - AND HOSPITALITY IS NOTHING NEW FOR THEM. WELCOMING THE SHEARERS, JACKAROOS, GOVERNESSES, HEAD STOCK MEN, NEIGHBOURS, MAIL CONTRACTORS AND DISTINGUISHED GUESTS IS A WAY OF LIFE FOR THE PEOPLE WHO LIVE IN THESE REMOTE AREAS. A diverse range of accommodation offers a multitude of experiences. Grand old homesteads present comfortable respite whilst you watch the day to day work of running a station. Self-contained cottages are relaxed and personable. Get comfy down at the shearers’ quarters and imagine life back in the days of booming wool prices. Most stations will also offer you a patch to roll out your swag or set up your camper, surrounded by stunning scenery and whistling birds in the trees above. Although you could sit back all day and soak up the atmosphere of the outback, the list of activities you can partake in is endless: 4WDing, hiking, mountain-bike riding, birdwatching, painting, horse treks, walk-trails, guided tours, wildflowers, stargazing, or perhaps even lending a hand and getting involved in station life. No matter your choice you can be assured of sharing hundreds of thousands of acres with only a few others. However you choose to spend your time at a Station Stay you can be guaranteed an experience like no other. Forget the hustle and bustle of city life and allow the wide sweeping plains and ruggedly beautiful landscapes of these Mid-West Station Stays to capture your imagination.