Hadoop Programming Options

Total Page:16

File Type:pdf, Size:1020Kb

Recommended publications

Scalable Cloud Computing

Keijo Heljanko, Department of Computer Science and Engineering, School of Science, Aalto University, keijo.heljanko@aalto.fi, 2.10-2013

Mobile Cloud Computing - Keijo Heljanko (keijo.heljanko@aalto.fi) 1/57

Guest Lecturer
• Guest Lecturer: Assoc. Prof. Keijo Heljanko, Department of Computer Science and Engineering, Aalto University
• Email: keijo.heljanko@aalto.fi
• Homepage: https://people.aalto.fi/keijo_heljanko
• For more information on today’s topic, attend the course “T-79.5308 Scalable Cloud Computing”

Business Drivers of Cloud Computing
• Large data centers allow for economies of scale
• Cheaper hardware purchases
• Cheaper cooling of hardware
• Example: Google paid 40 MEur for a Summa paper mill site in Hamina, Finland: data center cooled with sea water from the Baltic Sea
• Cheaper electricity
• Cheaper network capacity
• Smaller number of administrators per computer
• Unreliable commodity hardware is used
• Reliability is obtained by replication of hardware components, combined with a fault-tolerant software stack

Cloud Computing Technologies
A collection of technologies aimed to provide elastic “pay as you go” computing
• Virtualization of computing resources: Amazon EC2, Eucalyptus, OpenNebula, OpenStack Compute, ...
• Scalable file storage: Amazon S3, GFS, HDFS, ...
• Scalable batch processing: Google MapReduce, Apache Hadoop, PACT, Microsoft Dryad, Google Pregel, Spark, ...
• Scalable datastore: Amazon Dynamo, Apache Cassandra, Google Bigtable, HBase, ...
• Distributed coordination: Google Chubby, Apache ZooKeeper, ...
• Scalable Web applications hosting: Google App Engine, Microsoft Azure, Heroku, ...

An Enterprise Knowledge Network

Fogbeam Labs: Cut Through The Information Fog
http://www.fogbeam.com

Knowledge exists in many forms inside your organization – ranging from tacit knowledge which exists only in the minds of the users who possess it, to codified knowledge stored in databases and document repositories. Unfortunately while knowledge exists throughout the organization, it is often not easy (if even possible) to locate, use, share, and reuse existing knowledge. This results in a situation often described as “the left hand doesn't know what the right hand is doing” and damages morale as employees spend their days frustrated and complaining that “nobody knows what is going on around here”.

The obstacles that hinder access to existing knowledge can be cultural, geographical, social, and/or technological. And while no technological solution can guarantee perfect knowledge-sharing, tools drawn from big data, data mining / machine learning, deep learning, and artificial intelligence techniques can improve an organization's power to generate, capture, use, share and reuse knowledge.

Using technologies developed as part of the semantic web initiative, and applying the principles of linked data within the enterprise, the Fogbeam Labs Enterprise Knowledge Network approach can help your firm integrate and aggregate knowledge which is spread across your existing enterprise applications, content repositories and Intranet. An Enterprise Knowledge Network enables your firm's capabilities to:
• engage in high levels of knowledge transfer and

ZooKeeper Administrator's Guide

A Guide to Deployment and Administration

Table of contents
1 Deployment
1.1 System Requirements
1.2 Clustered (Multi-Server) Setup
1.3 Single Server and Developer Setup
2 Administration
2.1 Designing a ZooKeeper Deployment
2.2 Provisioning
2.3 Things to Consider: ZooKeeper Strengths and Limitations
2.4 Administering
2.5 Maintenance
2.6 Supervision
2.7 Monitoring

Unravel Data Systems Version 4.5

| Component name | Component version name | License names |
| --- | --- | --- |
| jQuery | 1.8.2 | MIT License |
| Apache Tomcat | 5.5.23 | Apache License 2.0 |
| Tachyon Project POM | 0.8.2 | Apache License 2.0 |
| Apache Directory LDAP API Model | 1.0.0-M20 | Apache License 2.0 |
| apache/incubator-heron | 0.16.5.1 | Apache License 2.0 |
| Maven Plugin API | 3.0.4 | Apache License 2.0 |
| ApacheDS Authentication Interceptor | 2.0.0-M15 | Apache License 2.0 |
| Apache Directory LDAP API Extras ACI | 1.0.0-M20 | Apache License 2.0 |
| Apache HttpComponents Core | 4.3.3 | Apache License 2.0 |
| Spark Project Tags | 2.0.0-preview | Apache License 2.0 |
| Curator Testing | 3.3.0 | Apache License 2.0 |
| Apache HttpComponents Core | 4.4.5 | Apache License 2.0 |
| Apache Commons Daemon | 1.0.15 | Apache License 2.0 |
| classworlds | 2.4 | Apache License 2.0 |
| abego TreeLayout Core | 1.0.1 | BSD 3-clause "New" or "Revised" License |
| jackson-core | 2.8.6 | Apache License 2.0 |
| Lucene Join | 6.6.1 | Apache License 2.0 |
| Apache Commons CLI | 1.3-cloudera-pre-r1439998 | Apache License 2.0 |
| hive-apache | 0.5 | Apache License 2.0 |
| scala-parser-combinators | 1.0.4 | BSD 3-clause "New" or "Revised" License |
| com.springsource.javax.xml.bind | 2.1.7 | Common Development and Distribution License 1.0 |
| SnakeYAML | 1.15 | Apache License 2.0 |
| JUnit | 4.12 | Common Public License 1.0 |
| ApacheDS Protocol Kerberos | 2.0.0-M12 | Apache License 2.0 |
| Apache Groovy | 2.4.6 | Apache License 2.0 |
| JGraphT - Core | 1.2.0 | (GNU Lesser General Public License v2.1 or later AND Eclipse Public License 1.0) |
| chill-java | 0.5.0 | Apache License 2.0 |
| Apache Commons Logging | 1.2 | Apache License 2.0 |
| OpenCensus | 0.12.3 | Apache License 2.0 |

ApacheDS Protocol

Talend Open Studio for Big Data Release Notes

Talend Open Studio for Big Data Release Notes 6.0.0

Adapted for v6.0.0. Supersedes previous releases. Publication date July 2, 2015

Copyleft
This documentation is provided under the terms of the Creative Commons Public License (CCPL). For more information about what you can and cannot do with this documentation in accordance with the CCPL, please read: http://creativecommons.org/licenses/by-nc-sa/2.0/

Notices
Talend is a trademark of Talend, Inc. All brands, product names, company names, trademarks and service marks are the properties of their respective owners.

License Agreement
The software described in this documentation is licensed under the Apache License, Version 2.0 (the "License"); you may not use this software except in compliance with the License. You may obtain a copy of the License at http://www.apache.org/licenses/LICENSE-2.0.html. Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the specific language governing permissions and limitations under the License.

This product includes software developed at AOP Alliance (Java/J2EE AOP standards), ASM, Amazon, AntlR, Apache ActiveMQ, Apache Ant, Apache Avro, Apache Axiom, Apache Axis, Apache Axis 2, Apache Batik, Apache CXF, Apache Cassandra, Apache Chemistry, Apache Common Http Client, Apache Common Http Core, Apache Commons, Apache Commons Bcel, Apache Commons JxPath, Apache

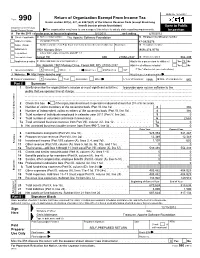

Return of Organization Exempt from Income

OMB No. 1545-0047
Form 990: Return of Organization Exempt From Income Tax
Under section 501(c), 527, or 4947(a)(1) of the Internal Revenue Code (except black lung benefit trust or private foundation)
Department of the Treasury, Internal Revenue Service
Open to Public Inspection
The organization may have to use a copy of this return to satisfy state reporting requirements.

A For the 2011 calendar year, or tax year beginning 5/1/2011, and ending 4/30/2012
B Check if applicable: Address change, Name change, Initial return, Terminated, Amended return, Application pending
C Name of organization: The Apache Software Foundation
Doing Business As:
Number and street (or P.O. box if mail is not delivered to street address): 1901 Munsey Drive
City or town, state or country, and ZIP + 4: Forest Hill MD 21050-2747
D Employer identification number: 47-0825376
E Telephone number: (909) 374-9776
F Name and address of principal officer: Jim Jagielski, 1901 Munsey Drive, Forest Hill, MD 21050-2747
G Gross receipts: $554,439
H(a) Is this a group return for affiliates? Yes / X No
H(b) Are all affiliates included? Yes / No. If "No," attach a list. (see instructions)
H(c) Group exemption number:
I Tax-exempt status: X 501(c)(3), 501(c) ( ) (insert no.), 4947(a)(1) or 527
J Website: http://www.apache.org/
K Form of organization: X Corporation, Trust, Association, Other
L Year of formation: 1999
M State of legal domicile: MD

Part I Summary
1 Briefly describe the organization's mission or most significant activities: to provide open source software to the public that we sponsor free of charge
2 Check this box if the organization discontinued its operations or disposed of more than 25% of its net assets.

Graft: a Debugging Tool for Apache Giraph

Graft: A Debugging Tool For Apache Giraph

Semih Salihoglu, Jaeho Shin, Vikesh Khanna, Ba Quan Truong, Jennifer Widom
Stanford University
{semih, jaeho.shin, vikesh, bqtruong, widom}@cs.stanford.edu

ABSTRACT
We address the problem of debugging programs written for Pregel-like systems. After interviewing Giraph and GPS users, we developed Graft. Graft supports the debugging cycle that users typically go through: (1) Users describe programmatically the set of vertices they are interested in inspecting. During execution, Graft captures the context information of these vertices across supersteps. (2) Using Graft’s GUI, users visualize how the values and messages of the captured vertices change from superstep to superstep, narrowing in suspicious vertices and supersteps. (3) Users replay the exact lines of the vertex.compute() function that executed for the suspicious vertices and supersteps, by copying code that Graft generates into their development environments’ line-by-line debuggers.

optional master.compute() function is executed by the Master task between supersteps.

We have tackled the challenge of debugging programs written for Pregel-like systems. Despite being a core component of programmers’ development cycles, very little work has been done on debugging in these systems. We interviewed several Giraph and GPS programmers (hereafter referred to as “users”) and studied how they currently debug their vertex.compute() functions. We found that the following three steps were common across users: (1) Users add print statements to their code to capture information about a select set of potentially “buggy” vertices, e.g., vertices that are assigned incorrect values, send incorrect messages, or throw

HDP 3.1.4 Release Notes Date of Publish: 2019-08-26

Release Notes 3
HDP 3.1.4 Release Notes
Date of Publish: 2019-08-26
https://docs.hortonworks.com

Contents
HDP 3.1.4 Release Notes
Component Versions
Descriptions of New Features
Deprecation Notices
  Terminology
  Removed Components and Product Capabilities
Testing Unsupported Features
  Descriptions of the Latest Technical Preview Features
Upgrading to HDP 3.1.4
Behavioral Changes
Apache Patch Information
  Accumulo

Analysis and Detection of Anomalies in Mobile Devices

Master’s Degree in Informatics Engineering
Dissertation Final Report

Analysis and detection of anomalies in mobile devices

António Carlos Lagarto Cabral Bastos de Lima [email protected]
Supervisor: Prof. Dr. Tiago Cruz
Co-Supervisor: Prof. Dr. Paulo Simões
Date: September 1, 2017

Acknowledgements

I strongly believe that both nature and nurture play an equal part in shaping an individual, and that in the end, it is what you do with the gift of life that determines who you are. However, in order to achieve great things motivation alone might just not cut it, and that’s where surrounding yourself with people that want to watch you succeed and better yourself comes in. It makes the trip easier and more enjoyable, and there is a plethora of people that I want to acknowledge for coming this far.

First of all, I’d like to thank professor Tiago Cruz for giving me the support, motivation and resources to work on this project. The idea itself started over one of our then semi-regular morning coffee conversations and from there it developed into a full-fledged concept quickly. But this acknowledgement doesn’t start there, it dates a few years back when I first had the pleasure of having him as my teacher in one of the introductory courses.

Open Source and Third Party Documentation

Verint.com, Twitter.com/verint, Facebook.com/verint, Blog.verint.com

Content
Introduction
Licenses

Open Source Attribution

Certain components of this Software or software contained in this Product (collectively, "Software") may be covered by so-called "free or open source" software licenses ("Open Source Components"), which includes any software licenses approved as open source licenses by the Open Source Initiative or any similar licenses, including without limitation any license that, as a condition of distribution of the Open Source Components licensed, requires that the distributor make the Open Source Components available in source code format. A license in each Open Source Component is provided to you in accordance with the specific license terms specified in their respective license terms.

EXCEPT WITH REGARD TO ANY WARRANTIES OR OTHER RIGHTS AND OBLIGATIONS EXPRESSLY PROVIDED DIRECTLY TO YOU FROM VERINT, ALL OPEN SOURCE COMPONENTS ARE PROVIDED "AS IS" AND ANY EXPRESSED OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE DISCLAIMED.

Any third party technology that may be appropriate or necessary for use with the Verint Product is licensed to you only for use with the Verint Product under the terms of the third party license agreement specified in the Documentation, the Software or as provided online at http://verint.com/thirdpartylicense. You may not take any action that would separate the third party technology from the Verint Product. Unless otherwise permitted under the terms of the third party license agreement, you agree to only use the third party technology in conjunction with the Verint Product.

60 Recipes for Apache CloudStack

60 Recipes for Apache CloudStack
by Sébastien Goasguen

Copyright © 2014 Sébastien Goasguen. All rights reserved. Printed in the United States of America.

Published by O’Reilly Media, Inc., 1005 Gravenstein Highway North, Sebastopol, CA 95472.

O’Reilly books may be purchased for educational, business, or sales promotional use. Online editions are also available for most titles (http://safaribooksonline.com). For more information, contact our corporate/institutional sales department: 800-998-9938 or [email protected].

Editor: Brian Anderson
Production Editor: Matthew Hacker
Copyeditor: Jasmine Kwityn
Proofreader: Linley Dolby
Indexer: Ellen Troutman Zaig
Cover Designer: Karen Montgomery
Interior Designer: David Futato
Illustrator: Rebecca Demarest

September 2014: First Edition

Revision History for the First Edition:
2014-08-22: First release

See http://oreilly.com/catalog/errata.csp?isbn=9781491910139 for release details.

Nutshell Handbook, the Nutshell Handbook logo, and the O’Reilly logo are registered trademarks of O’Reilly Media, Inc. 60 Recipes for Apache CloudStack, the image of a Virginia Northern flying squirrel, and related trade dress are trademarks of O’Reilly Media, Inc.

Many of the designations used by manufacturers and sellers to distinguish their products are claimed as trademarks. Where those designations appear in this book, and O’Reilly Media, Inc. was aware of a trademark claim, the designations have been printed in caps or initial caps.

While every precaution has been taken in the preparation of this book, the publisher and authors assume no responsibility for errors or omissions, or for damages resulting from the use of the information contained herein.

Apache Giraph 3

Other Distributed Frameworks
Shannon Quinn

Distinction
1. General Compute Engines
   - Hadoop
2. User-facing APIs
   - Cascading
   - Scalding

Alternative Frameworks
1. Apache Mahout
2. Apache Giraph
3. GraphLab
4. Apache Storm
5. Apache Tez
6. Apache Flink

Apache Mahout
• A Tale of Two Frameworks
  1. Distributed machine learning on Hadoop
     - 0.1 to 0.9
  2. “Samsara”
     - New in 0.10+

Machine learning on Hadoop
• Born out of the Apache Lucene project
• Built on Hadoop (all in Java)
• Pragmatic machine learning at scale

1: Recommendation
2: Classification
3: Clustering

Other MapReduce algorithms
• Dimensionality reduction
  - Lanczos
  - SSVD
  - LDA
• Regression
  - Logistic
  - Linear
  - Random Forest
• Evolutionary algorithms

Mahout-Samsara
• Programming “environment” for distributed machine learning
• R-like syntax
• Interactive shell (like Spark)
• Under-the-hood algebraic optimizer
• Engine-agnostic
  - Spark
  - H2O
  - Flink
  - ?

Mahout
• 3 main components
  - Engine-agnostic environment for building scalable ML algorithms (Samsara)
  - Engine-specific algorithms (Spark, H2O)
  - Legacy MapReduce algorithms

Mahout
• v0.10.0 released April 11 (as in, 5 days ago!)
• 0.10.1
  - More base linear algebra functionality
• 0.11.0
  - Compatible with Spark 1.3
• 1.0
  - ?

Mahout features by engine
• No engine-agnostic clustering algorithms yet
  - Still the domain of legacy MapReduce
• H2O and especially Flink